Shin-ichi Maeda

Segmentation with Noisy Labels via Spatially Correlated Distributions

Apr 21, 2025Abstract:In semantic segmentation, the accuracy of models heavily depends on the high-quality annotations. However, in many practical scenarios such as medical imaging and remote sensing, obtaining true annotations is not straightforward and usually requires significant human labor. Relying on human labor often introduces annotation errors, including mislabeling, omissions, and inconsistency between annotators. In the case of remote sensing, differences in procurement time can lead to misaligned ground truth annotations. These label errors are not independently distributed, and instead usually appear in spatially connected regions where adjacent pixels are more likely to share the same errors. To address these issues, we propose an approximate Bayesian estimation based on a probabilistic model that assumes training data includes label errors, incorporating the tendency for these errors to occur with spatial correlations between adjacent pixels. Bayesian inference requires computing the posterior distribution of label errors, which becomes intractable when spatial correlations are present. We represent the correlation of label errors between adjacent pixels through a Gaussian distribution whose covariance is structured by a Kac-Murdock-Szeg\"{o} (KMS) matrix, solving the computational challenges. Through experiments on multiple segmentation tasks, we confirm that leveraging the spatial correlation of label errors significantly improves performance. Notably, in specific tasks such as lung segmentation, the proposed method achieves performance comparable to training with clean labels under moderate noise levels. Code is available at https://github.com/pfnet-research/Bayesian_SpatialCorr.

Four-Axis Adaptive Fingers Hand for Object Insertion: FAAF Hand

Jul 30, 2024

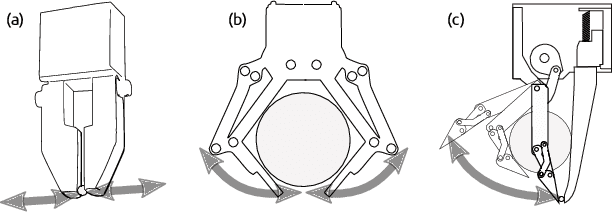

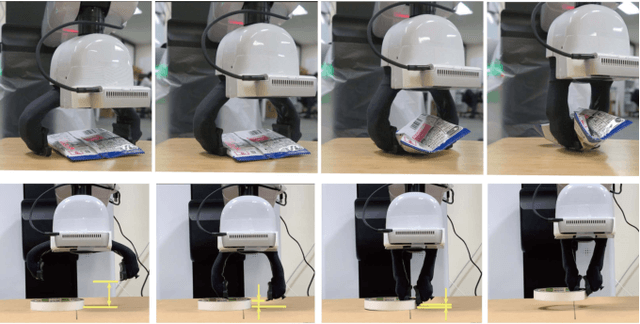

Abstract:Robots operating in the real world face significant but unavoidable issues in object localization that must be dealt with. A typical approach to address this is the addition of compliance mechanisms to hardware to absorb and compensate for some of these errors. However, for fine-grained manipulation tasks, the location and choice of appropriate compliance mechanisms are critical for success. For objects to be inserted in a target site on a flat surface, the object must first be successfully aligned with the opening of the slot, as well as correctly oriented along its central axis, before it can be inserted. We developed the Four-Axis Adaptive Finger Hand (FAAF hand) that is equipped with fingers that can passively adapt in four axes (x, y, z, yaw) enabling it to perform insertion tasks including lid fitting in the presence of significant localization errors. Furthermore, this adaptivity allows the use of simple control methods without requiring contact sensors or other devices. Our results confirm the ability of the FAAF hand on challenging insertion tasks of square and triangle-shaped pegs (or prisms) and placing of container lids in the presence of position errors in all directions and rotational error along the object's central axis, using a simple control scheme.

Deep Bayesian Filter for Bayes-faithful Data Assimilation

May 29, 2024

Abstract:State estimation for nonlinear state space models is a challenging task. Existing assimilation methodologies predominantly assume Gaussian posteriors on physical space, where true posteriors become inevitably non-Gaussian. We propose Deep Bayesian Filtering (DBF) for data assimilation on nonlinear state space models (SSMs). DBF constructs new latent variables $h_t$ on a new latent (``fancy'') space and assimilates observations $o_t$. By (i) constraining the state transition on fancy space to be linear and (ii) learning a Gaussian inverse observation operator $q(h_t|o_t)$, posteriors always remain Gaussian for DBF. Quite distinctively, the structured design of posteriors provides an analytic formula for the recursive computation of posteriors without accumulating Monte-Carlo sampling errors over time steps. DBF seeks the Gaussian inverse observation operators $q(h_t|o_t)$ and other latent SSM parameters (e.g., dynamics matrix) by maximizing the evidence lower bound. Experiments show that DBF outperforms model-based approaches and latent assimilation methods in various tasks and conditions.

JPEG Information Regularized Deep Image Prior for Denoising

Oct 02, 2023Abstract:Image denoising is a representative image restoration task in computer vision. Recent progress of image denoising from only noisy images has attracted much attention. Deep image prior (DIP) demonstrated successful image denoising from only a noisy image by inductive bias of convolutional neural network architectures without any pre-training. The major challenge of DIP based image denoising is that DIP would completely recover the original noisy image unless applying early stopping. For early stopping without a ground-truth clean image, we propose to monitor JPEG file size of the recovered image during optimization as a proxy metric of noise levels in the recovered image. Our experiments show that the compressed image file size works as an effective metric for early stopping.

Two-fingered Hand with Gear-type Synchronization Mechanism with Magnet for Improved Small and Offset Objects Grasping: F2 Hand

Sep 20, 2023

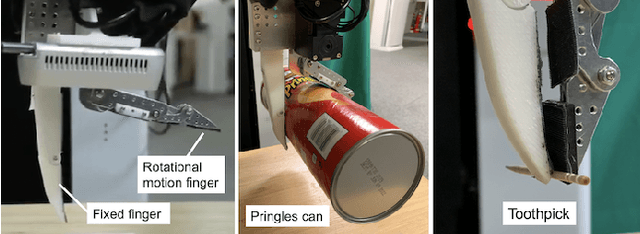

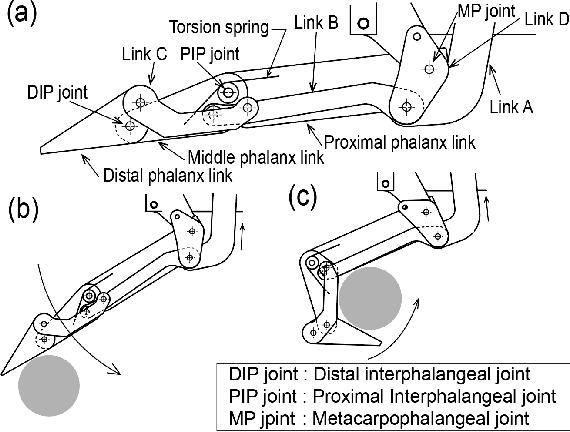

Abstract:A problem that plagues robotic grasping is the misalignment of the object and gripper due to difficulties in precise localization, actuation, etc. Under-actuated robotic hands with compliant mechanisms are used to adapt and compensate for these inaccuracies. However, these mechanisms come at the cost of controllability and coordination. For instance, adaptive functions that let the fingers of a two-fingered gripper adapt independently may affect the coordination necessary for grasping small objects. In this work, we develop a two-fingered robotic hand capable of grasping objects that are offset from the gripper's center, while still having the requisite coordination for grasping small objects via a novel gear-type synchronization mechanism with a magnet. This gear synchronization mechanism allows the adaptive finger's tips to be aligned enabling it to grasp objects as small as toothpicks and washers. The magnetic component allows this coordination to automatically turn off when needed, allowing for the grasping of objects that are offset/misaligned from the gripper. This equips the hand with the capability of grasping light, fragile objects (strawberries, creampuffs, etc) to heavy frying pan lids, all while maintaining their position and posture which is vital in numerous applications that require precise positioning or careful manipulation.

Virtual Human Generative Model: Masked Modeling Approach for Learning Human Characteristics

Jun 19, 2023

Abstract:Identifying the relationship between healthcare attributes, lifestyles, and personality is vital for understanding and improving physical and mental conditions. Machine learning approaches are promising for modeling their relationships and offering actionable suggestions. In this paper, we propose Virtual Human Generative Model (VHGM), a machine learning model for estimating attributes about healthcare, lifestyles, and personalities. VHGM is a deep generative model trained with masked modeling to learn the joint distribution of attributes conditioned on known ones. Using heterogeneous tabular datasets, VHGM learns more than 1,800 attributes efficiently. We numerically evaluate the performance of VHGM and its training techniques. As a proof-of-concept of VHGM, we present several applications demonstrating user scenarios, such as virtual measurements of healthcare attributes and hypothesis verifications of lifestyles.

Controlling Posterior Collapse by an Inverse Lipschitz Constraint on the Decoder Network

Apr 25, 2023

Abstract:Variational autoencoders (VAEs) are one of the deep generative models that have experienced enormous success over the past decades. However, in practice, they suffer from a problem called posterior collapse, which occurs when the encoder coincides, or collapses, with the prior taking no information from the latent structure of the input data into consideration. In this work, we introduce an inverse Lipschitz neural network into the decoder and, based on this architecture, provide a new method that can control in a simple and clear manner the degree of posterior collapse for a wide range of VAE models equipped with a concrete theoretical guarantee. We also illustrate the effectiveness of our method through several numerical experiments.

F3 Hand: A Versatile Robot Hand Inspired by Human Thumb and Index Fingers

Jun 16, 2022

Abstract:It is challenging to grasp numerous objects with varying sizes and shapes with a single robot hand. To address this, we propose a new robot hand called the 'F3 hand' inspired by the complex movements of human index finger and thumb. The F3 hand attempts to realize complex human-like grasping movements by combining a parallel motion finger and a rotational motion finger with an adaptive function. In order to confirm the performance of our hand, we attached it to a mobile manipulator - the Toyota Human Support Robot (HSR) and conducted grasping experiments. In our results, we show that it is able to grasp all YCB objects (82 in total), including washers with outer diameters as small as 6.4mm. We also built a system for intuitive operation with a 3D mouse and grasp an additional 24 objects, including small toothpicks and paper clips and large pitchers and cracker boxes. The F3 hand is able to achieve a 98% success rate in grasping even under imprecise control and positional offsets. Furthermore, owing to the finger's adaptive function, we demonstrate characteristics of the F3 hand that facilitate the grasping of soft objects such as strawberries in a desirable posture.

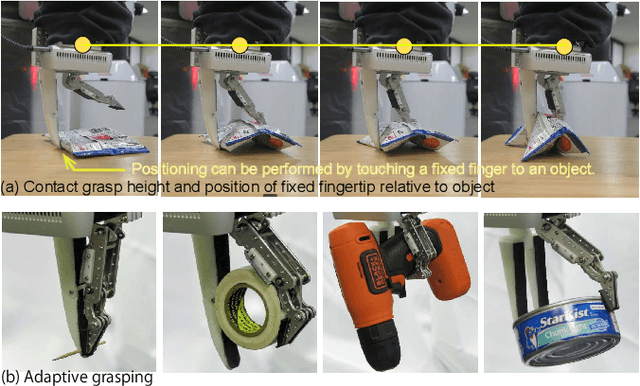

F1 Hand: A Versatile Fixed-Finger Gripper for Delicate Teleoperation and Autonomous Grasping

May 14, 2022

Abstract:Teleoperation is often limited by the ability of an operator to react and predict the behavior of the robot as it interacts with the environment. For example, to grasp small objects on a table, the teleoperator needs to predict the position of the fingertips before the fingers are closed to avoid them hitting the table. For that reason, we developed the F1 hand, a single-motor gripper that facilitates teleoperation with the use of a fixed finger. The hand is capable of grasping objects as thin as a paper clip, and as heavy and large as a cordless drill. The applicability of the hand can be expanded by replacing the fixed finger with different shapes. This flexibility makes the hand highly versatile while being easy and cheap to develop. However, due to the atypical asymmetric structure and actuation of the hand usual grasping strategies no longer apply. Thus, we propose a controller that approximates actuation symmetry by using the motion of the whole arm. The F1 hand and its controller are compared side-by-side with the original Toyota Human Support Robot (HSR) gripper in teleoperation using 22 objects from the YCB dataset in addition to small objects. The grasping time and peak contact forces could be decreased by 20% and 70%, respectively while increasing success rates by 5%. Using an off-the-shelf grasp pose estimator for autonomous grasping, the system achieved similar success rates to the original HSR gripper, at the order of 80%.

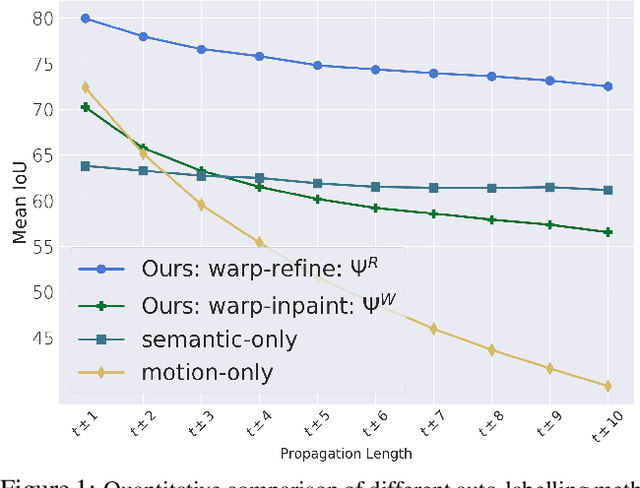

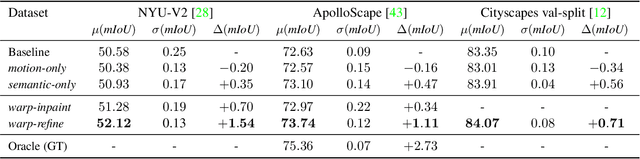

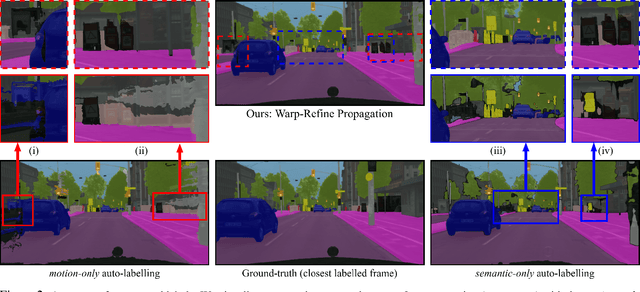

Warp-Refine Propagation: Semi-Supervised Auto-labeling via Cycle-consistency

Sep 28, 2021

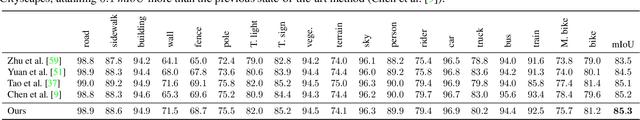

Abstract:Deep learning models for semantic segmentation rely on expensive, large-scale, manually annotated datasets. Labelling is a tedious process that can take hours per image. Automatically annotating video sequences by propagating sparsely labeled frames through time is a more scalable alternative. In this work, we propose a novel label propagation method, termed Warp-Refine Propagation, that combines semantic cues with geometric cues to efficiently auto-label videos. Our method learns to refine geometrically-warped labels and infuse them with learned semantic priors in a semi-supervised setting by leveraging cycle consistency across time. We quantitatively show that our method improves label-propagation by a noteworthy margin of 13.1 mIoU on the ApolloScape dataset. Furthermore, by training with the auto-labelled frames, we achieve competitive results on three semantic-segmentation benchmarks, improving the state-of-the-art by a large margin of 1.8 and 3.61 mIoU on NYU-V2 and KITTI, while matching the current best results on Cityscapes.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge