Mitchell Gordon

StyleRemix: Interpretable Authorship Obfuscation via Distillation and Perturbation of Style Elements

Aug 28, 2024

Abstract:Authorship obfuscation, rewriting a text to intentionally obscure the identity of the author, is an important but challenging task. Current methods using large language models (LLMs) lack interpretability and controllability, often ignoring author-specific stylistic features, resulting in less robust performance overall. To address this, we develop StyleRemix, an adaptive and interpretable obfuscation method that perturbs specific, fine-grained style elements of the original input text. StyleRemix uses pre-trained Low Rank Adaptation (LoRA) modules to rewrite an input specifically along various stylistic axes (e.g., formality and length) while maintaining low computational cost. StyleRemix outperforms state-of-the-art baselines and much larger LLMs in a variety of domains as assessed by both automatic and human evaluation. Additionally, we release AuthorMix, a large set of 30K high-quality, long-form texts from a diverse set of 14 authors and 4 domains, and DiSC, a parallel corpus of 1,500 texts spanning seven style axes in 16 unique directions

Localizing Paragraph Memorization in Language Models

Mar 28, 2024Abstract:Can we localize the weights and mechanisms used by a language model to memorize and recite entire paragraphs of its training data? In this paper, we show that while memorization is spread across multiple layers and model components, gradients of memorized paragraphs have a distinguishable spatial pattern, being larger in lower model layers than gradients of non-memorized examples. Moreover, the memorized examples can be unlearned by fine-tuning only the high-gradient weights. We localize a low-layer attention head that appears to be especially involved in paragraph memorization. This head is predominantly focusing its attention on distinctive, rare tokens that are least frequent in a corpus-level unigram distribution. Next, we study how localized memorization is across the tokens in the prefix by perturbing tokens and measuring the caused change in the decoding. A few distinctive tokens early in a prefix can often corrupt the entire continuation. Overall, memorized continuations are not only harder to unlearn, but also to corrupt than non-memorized ones.

A Roadmap to Pluralistic Alignment

Feb 07, 2024Abstract:With increased power and prevalence of AI systems, it is ever more critical that AI systems are designed to serve all, i.e., people with diverse values and perspectives. However, aligning models to serve pluralistic human values remains an open research question. In this piece, we propose a roadmap to pluralistic alignment, specifically using language models as a test bed. We identify and formalize three possible ways to define and operationalize pluralism in AI systems: 1) Overton pluralistic models that present a spectrum of reasonable responses; 2) Steerably pluralistic models that can steer to reflect certain perspectives; and 3) Distributionally pluralistic models that are well-calibrated to a given population in distribution. We also propose and formalize three possible classes of pluralistic benchmarks: 1) Multi-objective benchmarks, 2) Trade-off steerable benchmarks, which incentivize models to steer to arbitrary trade-offs, and 3) Jury-pluralistic benchmarks which explicitly model diverse human ratings. We use this framework to argue that current alignment techniques may be fundamentally limited for pluralistic AI; indeed, we highlight empirical evidence, both from our own experiments and from other work, that standard alignment procedures might reduce distributional pluralism in models, motivating the need for further research on pluralistic alignment.

Visual Intelligence through Human Interaction

Nov 12, 2021Abstract:Over the last decade, Computer Vision, the branch of Artificial Intelligence aimed at understanding the visual world, has evolved from simply recognizing objects in images to describing pictures, answering questions about images, aiding robots maneuver around physical spaces and even generating novel visual content. As these tasks and applications have modernized, so too has the reliance on more data, either for model training or for evaluation. In this chapter, we demonstrate that novel interaction strategies can enable new forms of data collection and evaluation for Computer Vision. First, we present a crowdsourcing interface for speeding up paid data collection by an order of magnitude, feeding the data-hungry nature of modern vision models. Second, we explore a method to increase volunteer contributions using automated social interventions. Third, we develop a system to ensure human evaluation of generative vision models are reliable, affordable and grounded in psychophysics theory. We conclude with future opportunities for Human-Computer Interaction to aid Computer Vision.

Approximating Human Judgment of Generated Image Quality

Nov 30, 2019

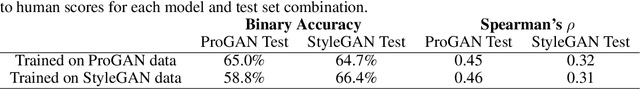

Abstract:Generative models have made immense progress in recent years, particularly in their ability to generate high quality images. However, that quality has been difficult to evaluate rigorously, with evaluation dominated by heuristic approaches that do not correlate well with human judgment, such as the Inception Score and Fr\'echet Inception Distance. Real human labels have also been used in evaluation, but are inefficient and expensive to collect for each image. Here, we present a novel method to automatically evaluate images based on their quality as perceived by humans. By not only generating image embeddings from Inception network activations and comparing them to the activations for real images, of which other methods perform a variant, but also regressing the activation statistics to match gold standard human labels, we demonstrate 66% accuracy in predicting human scores of image realism, matching the human inter-rater agreement rate. Our approach also generalizes across generative models, suggesting the potential for capturing a model-agnostic measure of image quality. We open source our dataset of human labels for the advancement of research and techniques in this area.

HYPE: Human eYe Perceptual Evaluation of Generative Models

Apr 24, 2019

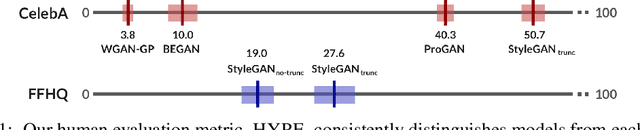

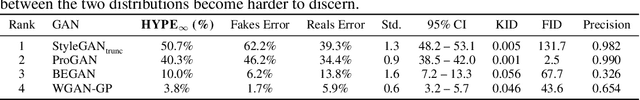

Abstract:Generative models often use human evaluations to determine and justify progress. Unfortunately, existing human evaluation methods are ad-hoc: there is currently no standardized, validated evaluation that: (1) measures perceptual fidelity, (2) is reliable, (3) separates models into clear rank order, and (4) ensures high-quality measurement without intractable cost. In response, we construct Human-eYe Perceptual Evaluation (HYPE), a human metric that is (1) grounded in psychophysics research in perception, (2) reliable across different sets of randomly sampled outputs from a model, (3) results in separable model performances, and (4) efficient in cost and time. We introduce two methods. The first, HYPE-Time, measures visual perception under adaptive time constraints to determine the minimum length of time (e.g., 250ms) that model output such as a generated face needs to be visible for people to distinguish it as real or fake. The second, HYPE-Infinity, measures human error rate on fake and real images with no time constraints, maintaining stability and drastically reducing time and cost. We test HYPE across four state-of-the-art generative adversarial networks (GANs) on unconditional image generation using two datasets, the popular CelebA and the newer higher-resolution FFHQ, and two sampling techniques of model outputs. By simulating HYPE's evaluation multiple times, we demonstrate consistent ranking of different models, identifying StyleGAN with truncation trick sampling (27.6% HYPE-Infinity deception rate, with roughly one quarter of images being misclassified by humans) as superior to StyleGAN without truncation (19.0%) on FFHQ. See https://hype.stanford.edu for details.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge