Y. Alex Kolchinski

Approximating Human Judgment of Generated Image Quality

Nov 30, 2019

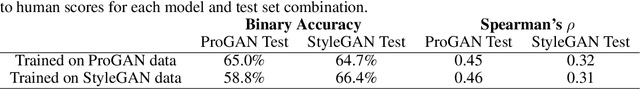

Abstract:Generative models have made immense progress in recent years, particularly in their ability to generate high quality images. However, that quality has been difficult to evaluate rigorously, with evaluation dominated by heuristic approaches that do not correlate well with human judgment, such as the Inception Score and Fr\'echet Inception Distance. Real human labels have also been used in evaluation, but are inefficient and expensive to collect for each image. Here, we present a novel method to automatically evaluate images based on their quality as perceived by humans. By not only generating image embeddings from Inception network activations and comparing them to the activations for real images, of which other methods perform a variant, but also regressing the activation statistics to match gold standard human labels, we demonstrate 66% accuracy in predicting human scores of image realism, matching the human inter-rater agreement rate. Our approach also generalizes across generative models, suggesting the potential for capturing a model-agnostic measure of image quality. We open source our dataset of human labels for the advancement of research and techniques in this area.

Representing Social Media Users for Sarcasm Detection

Aug 25, 2018

Abstract:We explore two methods for representing authors in the context of textual sarcasm detection: a Bayesian approach that directly represents authors' propensities to be sarcastic, and a dense embedding approach that can learn interactions between the author and the text. Using the SARC dataset of Reddit comments, we show that augmenting a bidirectional RNN with these representations improves performance; the Bayesian approach suffices in homogeneous contexts, whereas the added power of the dense embeddings proves valuable in more diverse ones.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge