Mingbin Xu

Segmental Attention Decoding With Long Form Acoustic Encodings

Dec 16, 2025

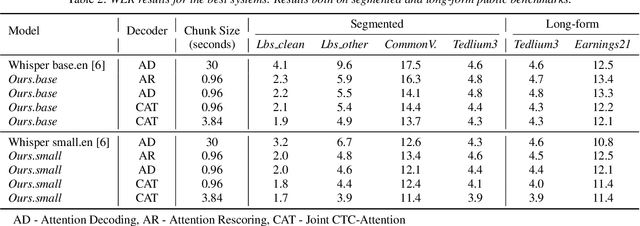

Abstract: We address the fundamental incompatibility of attention-based encoder-decoder (AED) models with long-form acoustic encodings. AED models trained on segmented utterances learn to encode absolute frame positions by exploiting limited acoustic context beyond segment boundaries, but fail to generalize when decoding long-form segments where these cues vanish. The model loses the ability to order acoustic encodings due to the permutation invariance of keys and values in cross-attention. We propose four modifications: (1) injecting explicit absolute positional encodings into cross-attention for each decoded segment, (2) long-form training with extended acoustic context to eliminate implicit absolute position encoding, (3) segment concatenation to cover diverse segmentations needed during training, and (4) semantic segmentation to align AED-decoded segments with training segments. We show these modifications close the accuracy gap between continuous and segmented acoustic encodings, enabling auto-regressive use of the attention decoder.

Enhancing CTC-based speech recognition with diverse modeling units

Jun 05, 2024

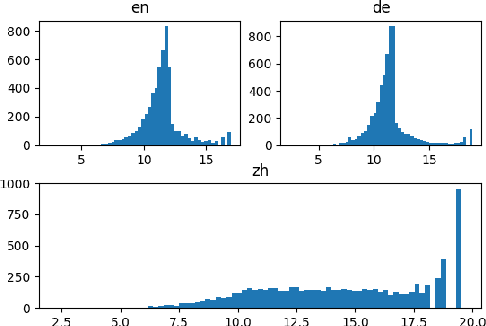

Abstract: In recent years, the evolution of end-to-end (E2E) automatic speech recognition (ASR) models has been remarkable, largely due to advances in deep learning architectures such as the Transformer. On top of E2E systems, researchers have achieved substantial accuracy improvements by rescoring an E2E model's N-best hypotheses with a phoneme-based model. This raises an interesting question about where the improvements come from other than the system combination effect. We examine the underlying mechanisms driving these gains and propose an efficient joint training approach, where E2E models are trained jointly with diverse modeling units. This methodology not only aligns the strengths of both phoneme- and grapheme-based models but also reveals that using these diverse modeling units in a synergistic way can significantly enhance model accuracy. Our findings offer new insights into the optimal integration of heterogeneous modeling units in the development of more robust and accurate ASR systems.

Conformer-Based Speech Recognition On Extreme Edge-Computing Devices

Dec 16, 2023

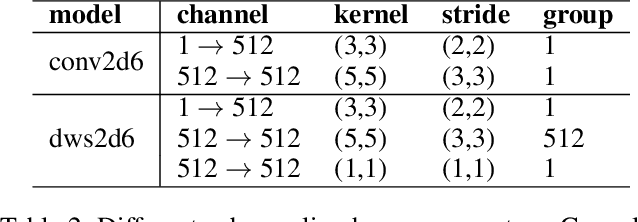

Abstract: With increasingly powerful compute capabilities and resources in today's devices, traditionally compute-intensive automatic speech recognition (ASR) has been moving from the cloud to devices to better protect user privacy. However, it is still challenging to implement on-device ASR on resource-constrained devices, such as smartphones, smart wearables, and other small home automation devices. In this paper, we propose a series of model architecture adaptations, neural network graph transformations, and numerical optimizations to fit an advanced Conformer-based end-to-end streaming ASR system on resource-constrained devices without accuracy degradation. We achieve speech recognition over 5.26 times faster than real time (0.19 RTF) on small wearables while minimizing energy consumption and achieving state-of-the-art accuracy. The proposed methods are widely applicable to other transformer-based server-free AI applications. In addition, we provide a complete theory on optimal pre-normalizers that numerically stabilize layer normalization in any Lp-norm using any floating point precision.

Personalization of CTC-based End-to-End Speech Recognition Using Pronunciation-Driven Subword Tokenization

Oct 16, 2023

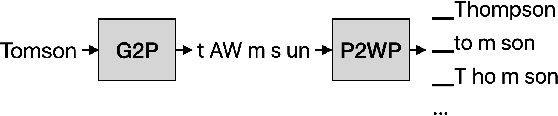

Abstract: Recent advances in deep learning and automatic speech recognition have improved the accuracy of end-to-end speech recognition systems, but recognition of personal content such as contact names remains a challenge. In this work, we describe our personalization solution for an end-to-end speech recognition system based on connectionist temporal classification. Building on previous work, we present a novel method for generating additional subword tokenizations for personal entities from their pronunciations. We show that using this technique in combination with two established techniques, contextual biasing and wordpiece prior normalization, we are able to achieve personal named entity accuracy on par with a competitive hybrid system.

Acoustic Model Fusion for End-to-end Speech Recognition

Oct 10, 2023

Abstract: Recent advances in deep learning and automatic speech recognition (ASR) have enabled end-to-end (E2E) ASR systems and boosted accuracy to a new level. E2E systems implicitly model all conventional ASR components, such as the acoustic model (AM) and the language model (LM), in a single network trained on audio-text pairs. Despite this simpler system architecture, fusing a separate LM, trained exclusively on text corpora, into the E2E system has proven to be beneficial. However, the application of LM fusion presents certain drawbacks, such as its inability to address the domain mismatch issue inherent to the internal AM. Drawing inspiration from the concept of LM fusion, we propose the integration of an external AM into the E2E system to better address the domain mismatch. By implementing this novel approach, we have achieved a significant reduction in the word error rate, with an impressive drop of up to 14.3% across varied test sets. We also discovered that this AM fusion approach is particularly beneficial in enhancing named entity recognition.

Training Large-Vocabulary Neural Language Models by Private Federated Learning for Resource-Constrained Devices

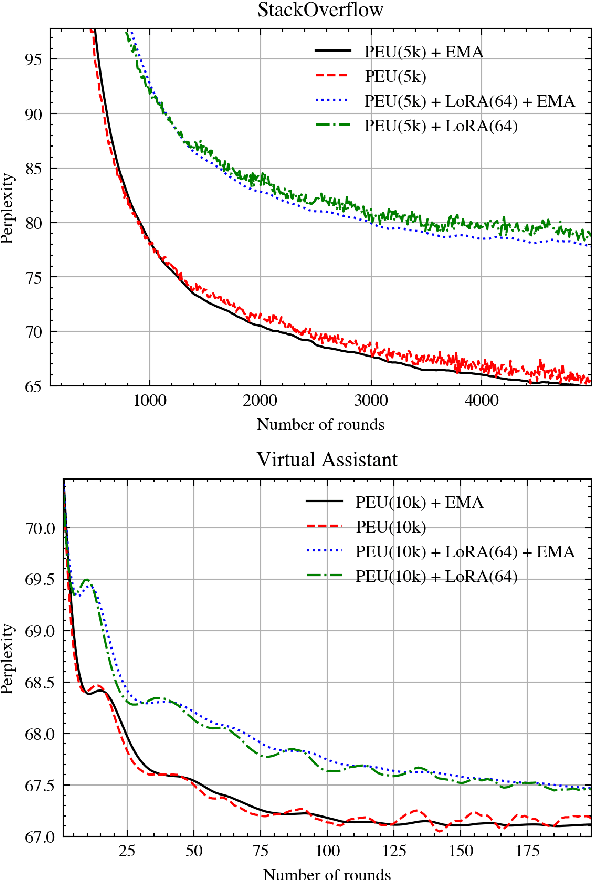

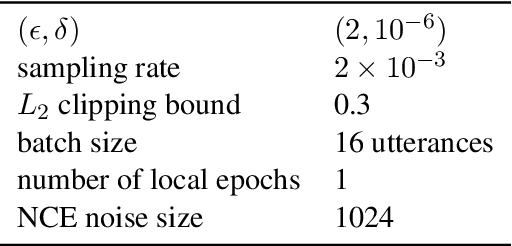

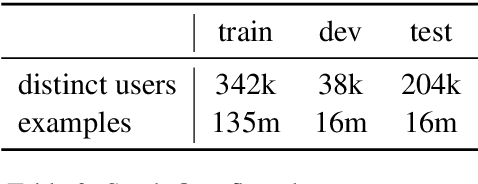

Jul 18, 2022

Abstract: Federated Learning (FL) is a technique to train models using data distributed across devices. Differential Privacy (DP) provides a formal privacy guarantee for sensitive data. Our goal is to train a large neural network language model (NNLM) on compute-constrained devices while preserving privacy using FL and DP. However, the DP-noise introduced to the model increases as the model size grows, which often prevents convergence. We propose Partial Embedding Updates (PEU), a novel technique to decrease noise by decreasing payload size. Furthermore, we adopt Low Rank Adaptation (LoRA) and Noise Contrastive Estimation (NCE) to reduce the memory demands of large models on compute-constrained devices. This combination of techniques makes it possible to train large-vocabulary language models while preserving accuracy and privacy.

A Multi-task Learning Approach for Named Entity Recognition using Local Detection

Apr 21, 2019

Abstract: Named entity recognition (NER) systems that perform well require task-related and manually annotated datasets. However, such datasets are expensive to develop, and are thus limited in size. As there already exist a large number of NER datasets that share a certain degree of relationship but differ in content, it is important to explore the question of whether such datasets can be combined as a simple method for improving NER performance. To investigate this, we developed a novel locally detecting multitask model using FFNNs. The model relies on encoding variable-length sequences of words into theoretically lossless and unique fixed-size representations. We applied this method to several well-known NER tasks and compared the results of our model to baseline models as well as other published results. As a result, we observed competitive performance in nearly all of the tasks.

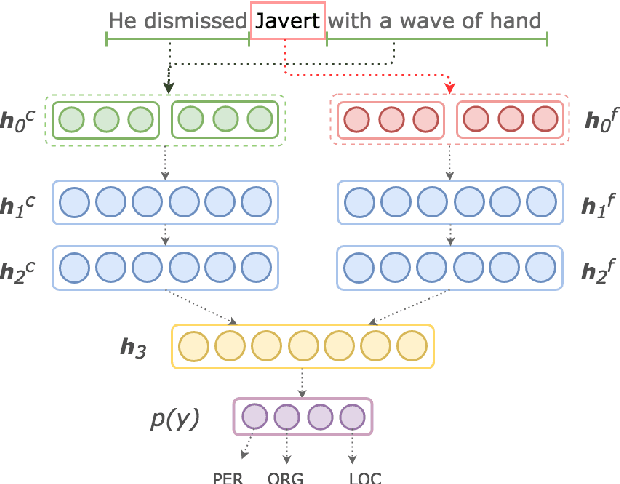

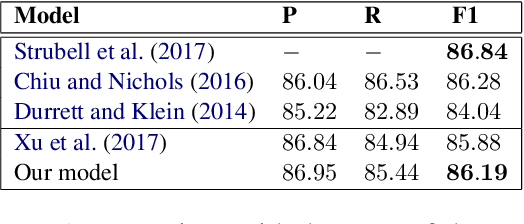

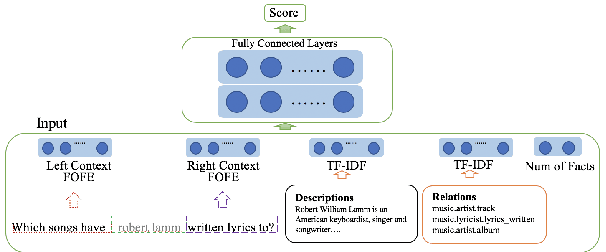

Effective Context and Fragment Feature Usage for Named Entity Recognition

Apr 21, 2019

Abstract: In this paper, we explore a new approach to named entity recognition (NER) with the goal of learning from context and fragment features more effectively, contributing to the improvement of overall recognition performance. We use the recent fixed-size ordinally forgetting encoding (FOFE) method to fully encode each sentence fragment and its left-right contexts into a fixed-size representation. Next, we organize the context and fragment features into groups, and feed each feature group to dedicated fully-connected layers. Finally, we merge each group's final dedicated layers and add a shared layer leading to a single output. The outcome of our experiments shows that, given only tokenized text and trained word embeddings, our system outperforms our baseline models, and is competitive with the state of the art on various well-known NER tasks.

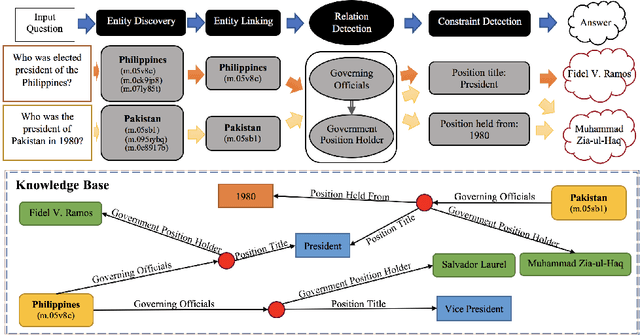

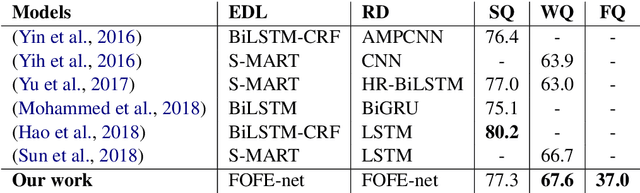

A General FOFE-net Framework for Simple and Effective Question Answering over Knowledge Bases

Mar 29, 2019

Abstract: Question answering over knowledge bases (KB-QA) has recently become a popular research topic in NLP. One popular way to solve the KB-QA problem is to make use of a pipeline of several NLP modules, including entity discovery and linking (EDL) and relation detection. Recent successes on the KB-QA task usually involve complex network structures with sophisticated heuristics. Inspired by previous work that builds a strong KB-QA baseline, we propose a simple but general neural model composed of fixed-size ordinally forgetting encoding (FOFE) and deep neural networks, called FOFE-net, to solve the KB-QA problem at different stages. For evaluation, we use two popular KB-QA datasets, SimpleQuestions and WebQSP, and a newly created dataset, FreebaseQA. The experimental results show that FOFE-net performs well on the KB-QA subtasks, entity discovery and linking (EDL) and relation detection, which in turn pushes the overall KB-QA system to achieve strong results on all datasets.

Fixed-Size Ordinally Forgetting Encoding Based Word Sense Disambiguation

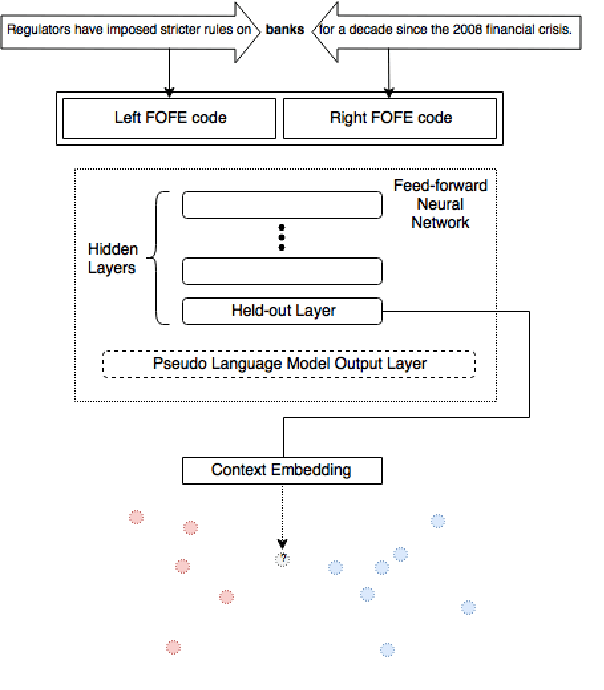

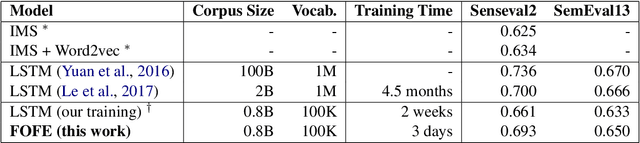

Feb 23, 2019

Abstract: In this paper, we present our method of using fixed-size ordinally forgetting encoding (FOFE) to solve the word sense disambiguation (WSD) problem. FOFE enables us to encode a variable-length sequence of words into a theoretically unique fixed-size representation that can be fed into a feed-forward neural network (FFNN), while keeping the positional information between words. In our method, a FOFE-based FFNN is used to train a pseudo language model over an unlabelled corpus; the pre-trained language model is then capable of abstracting the surrounding context of polyseme instances in a labelled corpus into context embeddings. Next, we take advantage of these context embeddings for WSD classification. We conducted experiments on several WSD data sets, which demonstrate that our proposed method can achieve performance comparable to that of the state-of-the-art approach at a much lower computational cost.
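
As a rough illustration of the FOFE idea mentioned in several abstracts above, the sketch below uses the commonly cited recursion z_t = alpha * z_(t-1) + e_t, where e_t is the one-hot vector of the t-th word. The function name `fofe_encode`, the toy vocabulary, and the choice alpha = 0.5 are assumptions made for this example only; they are not taken from the papers listed here, whose models may differ in detail.

```python
import numpy as np

def fofe_encode(token_ids, vocab_size, alpha=0.5):
    """Encode a variable-length sequence of token ids into one fixed-size vector.

    Illustrative sketch of the recursion z_t = alpha * z_{t-1} + e_t, with e_t
    the one-hot vector of token t. Names and defaults here are hypothetical.
    """
    z = np.zeros(vocab_size)
    for t in token_ids:
        z = alpha * z   # decay the contribution of earlier words
        z[t] += 1.0     # add the one-hot vector of the current word
    return z

# Toy vocabulary (assumed for this example): A=0, B=1, C=2.
abc = fofe_encode([0, 1, 2], vocab_size=3)   # [alpha^2, alpha, 1]
cba = fofe_encode([2, 1, 0], vocab_size=3)   # same words, reversed order
```

Both vectors have the vocabulary size regardless of sequence length, and reversing the word order produces a different code, which is the positional property the abstract refers to.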
