Mengzhou Li

Data-driven imaging geometric recovery of ultrahigh resolution robotic micro-CT for in-vivo and other applications

Jun 26, 2024

Abstract: We introduce an ultrahigh-resolution ($50\,\mu m$) robotic micro-CT design for localized imaging of carotid plaques using robotic arms, a cutting-edge detector, and machine learning technologies. To combat geometric error-induced artifacts in interior CT scans, we propose a data-driven geometry estimation method that maximizes the consistency between the projection data and the reprojections of a reconstructed volume. In particular, we use a normalized cross correlation metric to overcome the projection truncation effect. Our approach is validated on a robotic CT scan of a sacrificed mouse and a micro-CT phantom scan, both producing sharper images with finer details than before correction.
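
As an illustration of the normalized cross correlation metric mentioned in the abstract above, here is a minimal sketch of the common zero-mean form. The function name, the `eps` guard, and the NumPy implementation are assumptions made for this example and are not taken from the paper. One property that plausibly matters for truncated data is that the score is unchanged by a constant offset or a positive gain applied to either image.

```python
import numpy as np

def normalized_cross_correlation(measured, reprojected, eps=1e-12):
    """Zero-mean NCC between two same-shaped projection images (hypothetical helper)."""
    a = np.asarray(measured, dtype=np.float64).ravel()
    b = np.asarray(reprojected, dtype=np.float64).ravel()
    # Subtract the means so that a constant offset does not affect the score.
    a = a - a.mean()
    b = b - b.mean()
    # Divide by the norms so that a positive gain does not affect the score.
    denom = np.linalg.norm(a) * np.linalg.norm(b)
    return float(a @ b / (denom + eps))
```

In a geometry search, a score like this would be evaluated between each measured projection and its reprojection for every candidate set of geometric parameters, keeping the candidate with the highest total score.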

Deep Few-view High-resolution Photon-counting Extremity CT at Halved Dose for a Clinical Trial

Mar 19, 2024

Abstract: The latest X-ray photon-counting computed tomography (PCCT) for extremity allows multi-energy high-resolution (HR) imaging for tissue characterization and material decomposition. However, both radiation dose and imaging speed need improvement for contrast-enhanced and other studies. Despite the success of deep learning methods for 2D few-view reconstruction, applying them to HR volumetric reconstruction of extremity scans for clinical diagnosis has been limited due to GPU memory constraints, training data scarcity, and domain gap issues. In this paper, we propose a deep learning-based approach for PCCT image reconstruction at halved dose and doubled speed in a New Zealand clinical trial. In particular, we present a patch-based volumetric refinement network to alleviate the GPU memory limitation, train the network with synthetic data, and use model-based iterative refinement to bridge the gap between synthetic and real-world data. The simulation and phantom experiments demonstrate consistently improved results under different acquisition conditions on both in- and off-domain structures using a fixed network. The image quality for 8 patients from the clinical trial is evaluated by three radiologists in comparison with the standard image reconstruction from a full-view dataset. It is shown that our proposed approach is essentially identical to or better than the clinical benchmark in terms of diagnostic image quality scores. Our approach has great potential to improve the safety and efficiency of PCCT without compromising image quality.

Photon-counting CT using a Conditional Diffusion Model for Super-resolution and Texture-preservation

Feb 25, 2024

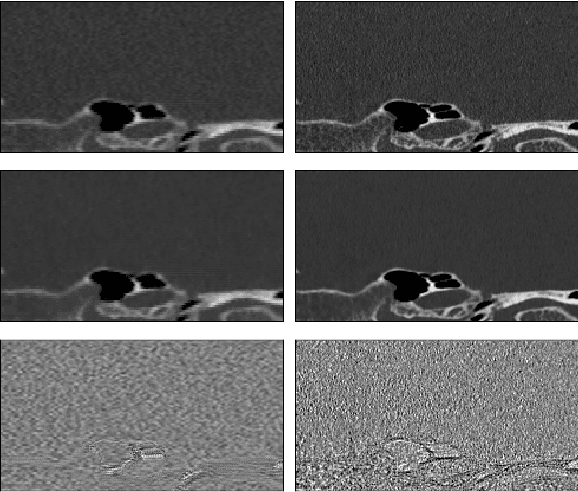

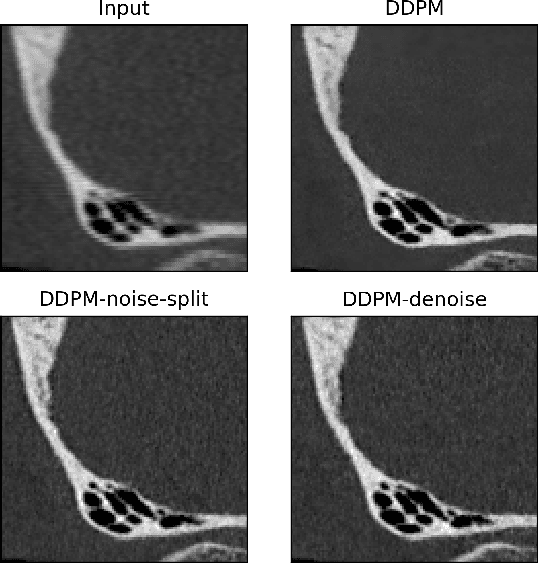

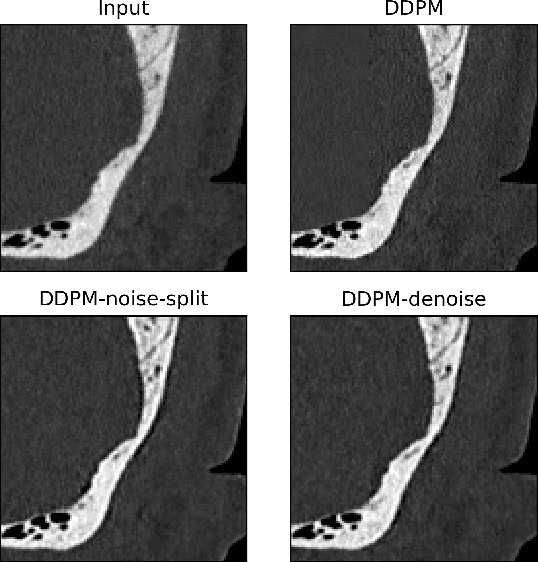

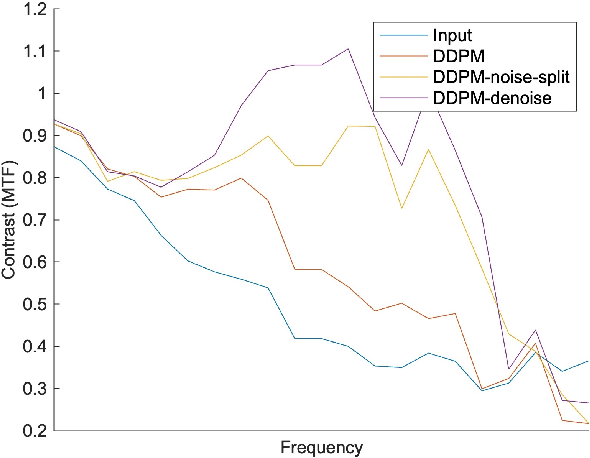

Abstract: Ultra-high resolution images are desirable in photon counting CT (PCCT), but resolution is physically limited by interactions such as charge sharing. Deep learning is a possible method for super-resolution (SR), but sourcing paired training data that adequately models the target task is difficult. Additionally, SR algorithms can distort noise texture, which is important in many clinical diagnostic scenarios. Here, we train conditional denoising diffusion probabilistic models (DDPMs) for PCCT super-resolution, with the objective of retaining the textural characteristics of local noise. PCCT simulation methods are used to synthesize realistic resolution degradation. To preserve noise texture, we explore decoupling the noise and signal image inputs and outputs via deep denoisers, explicitly mapping to each during the SR process. Our experimental results indicate that our DDPM trained on simulated data can improve sharpness in real PCCT images. Additionally, the disentanglement of noise from the original image allows our model to more faithfully preserve noise texture.

Coronary Atherosclerotic Plaque Characterization with Photon-counting CT: a Simulation-based Feasibility Study

Dec 04, 2023

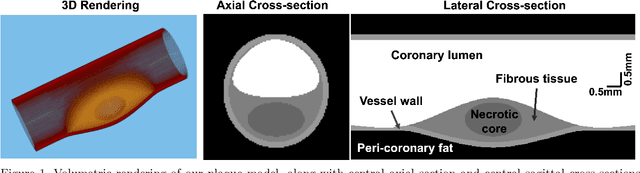

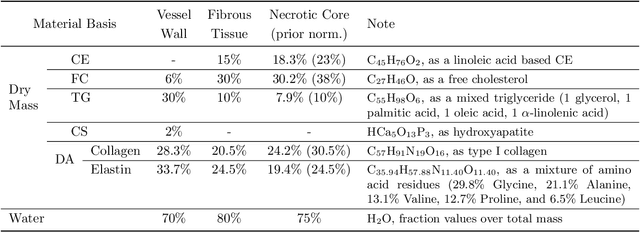

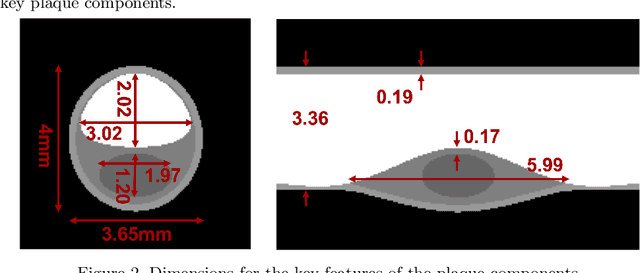

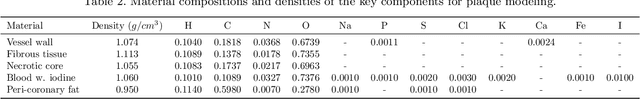

Abstract: Recent development of photon-counting CT (PCCT) brings great opportunities for plaque characterization with much-improved spatial resolution and spectral imaging capability. While existing coronary plaque PCCT imaging results are based on detectors made of CZT or CdTe materials, deep-silicon photon-counting detectors have unique performance characteristics and promise distinct imaging capabilities. In this work, we report a systematic simulation study of a deep-silicon PCCT scanner with a new clinically relevant digital plaque phantom with realistic geometrical parameters and chemical compositions. This work investigates the effects of spatial resolution, noise, motion artifacts, radiation dose, and spectral characterization. Our simulation results suggest that the deep-silicon PCCT design provides adequate spatial resolution for visualizing a necrotic core and quantifying key plaque features. Advanced denoising techniques and aggressive bowtie filter designs can keep image noise to acceptable levels at this resolution while keeping radiation dose comparable to that of a conventional CT scan. The ultrahigh resolution of PCCT also means an elevated sensitivity to motion artifacts. It is found that a tolerance of less than 0.4 mm in residual movement range requires the application of accurate motion correction methods for the best plaque imaging quality with PCCT.

Systematic Review on Learning-based Spectral CT

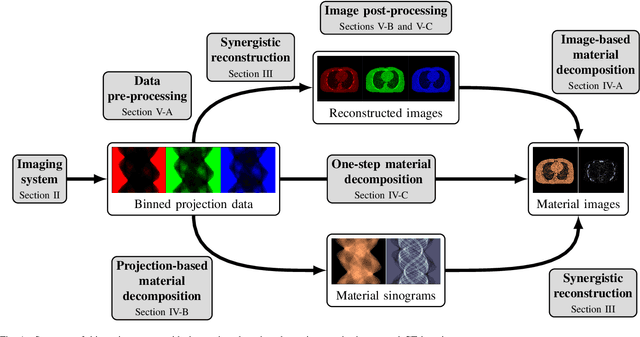

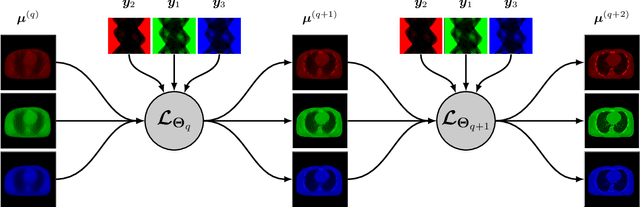

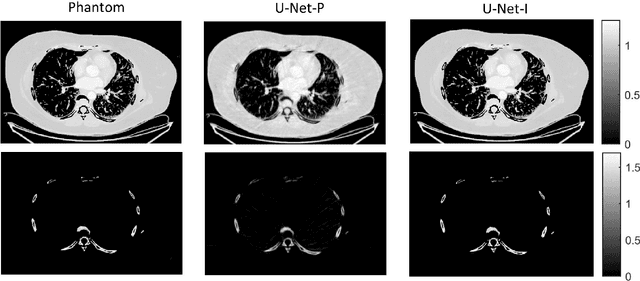

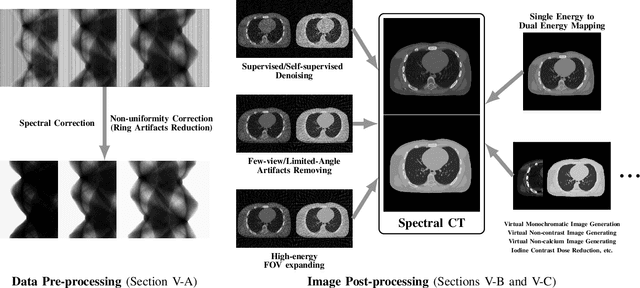

Apr 15, 2023

Abstract: Spectral computed tomography (CT) has recently emerged as an advanced version of medical CT and significantly improves on conventional (single-energy) CT. Spectral CT has two main forms: dual-energy computed tomography (DECT) and photon-counting computed tomography (PCCT), which offer image improvement, material decomposition, and feature quantification relative to conventional CT. However, the inherent challenges of spectral CT, evidenced by data and image artifacts, remain a bottleneck for clinical applications. To address these problems, machine learning techniques have been widely applied to spectral CT. In this review, we present the state-of-the-art data-driven techniques for spectral CT.

Motion Correction via Locally Linear Embedding for Helical Photon-counting CT

Apr 05, 2022

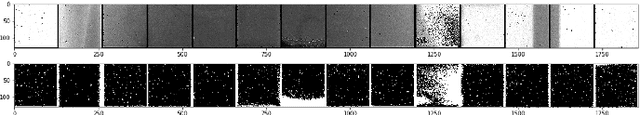

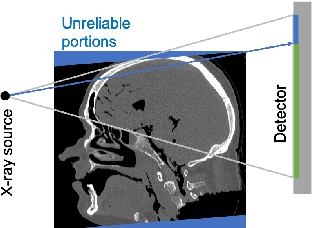

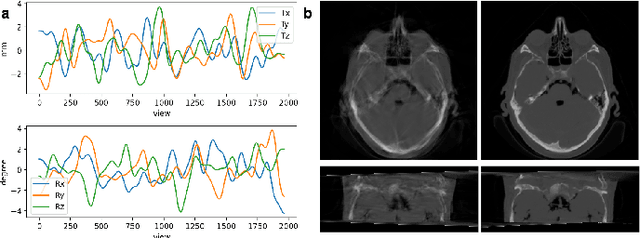

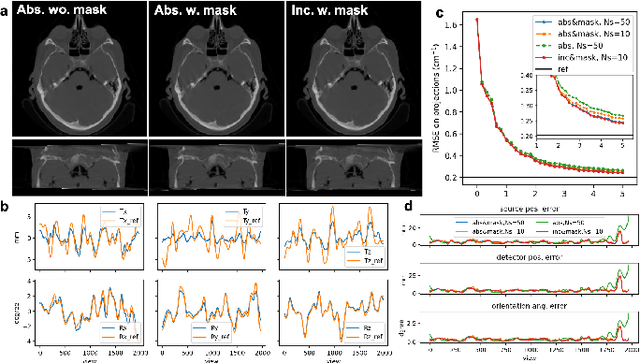

Abstract: The X-ray photon-counting detector (PCD) offers low noise, high resolution, and spectral characterization, representing a next generation of CT and enabling new biomedical applications. It is well known that involuntary patient motion may induce image artifacts with conventional CT scanning, and this problem becomes more serious with PCD due to its high detector pitch and extended scan time. Furthermore, PCD often comes with a substantial number of bad pixels, making analytic image reconstruction challenging and ruling out state-of-the-art motion correction methods that are based on analytical reconstruction. In this paper, we extend our previous locally linear embedding (LLE) cone-beam motion correction method to the helical scanning geometry, which is especially desirable given the high cost of large-area PCD. In addition to our adaptation of LLE-based parametric searching to helical cone-beam photon-counting CT geometry, we introduce an unreliable-volume mask to improve the motion estimation accuracy and perform incremental updating on gradually refined sampling grids to optimize both accuracy and efficiency. Our numerical results demonstrate that our method reduces the estimation errors near the two longitudinal ends of the reconstructed volume and improves overall image quality. The experimental results on clinical photon-counting scans of patient extremities show significant resolution improvement after motion correction using our method, which reveals subtle fine structures previously hidden under motion blurring and artifacts.

Expressivity and Trainability of Quadratic Networks

Oct 12, 2021

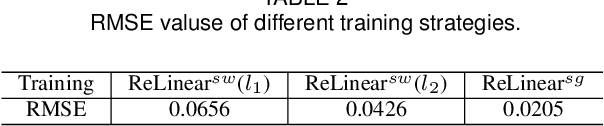

Abstract: Inspired by the diversity of biological neurons, quadratic artificial neurons can play an important role in deep learning models. The type of quadratic neuron of interest here replaces the inner-product operation in the conventional neuron with a quadratic function. Despite the promising results achieved so far by networks of quadratic neurons, there are important issues that have not been well addressed. Theoretically, the superior expressivity of a quadratic network over either a conventional network or a conventional network with quadratic activation is not fully elucidated, which leaves the use of quadratic networks not well grounded. Practically, although a quadratic network can be trained via generic backpropagation, it can be subject to a higher risk of collapse than its conventional counterpart. To address these issues, we first apply spline theory and a measure from algebraic geometry to give two theorems that demonstrate better model expressivity of a quadratic network than the conventional counterpart with or without quadratic activation. Then, we propose an effective and efficient training strategy referred to as ReLinear to stabilize the training process of a quadratic network, thereby unleashing its full potential in the associated machine learning tasks. Comprehensive experiments on popular datasets are performed to support our findings and evaluate the performance of quadratic deep learning.
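
To make the idea of replacing the inner product with a quadratic function concrete, here is a minimal sketch of one possible quadratic neuron pre-activation. The specific parameterization (a product of two affine terms plus a weighted sum of squared inputs) and all names (`w_r`, `b_r`, `w_g`, `b_g`, `w_b`, `c`) are assumptions for illustration; the abstract itself does not spell out the form.

```python
import numpy as np

def quadratic_neuron(x, w_r, b_r, w_g, b_g, w_b, c):
    """Pre-activation of an assumed quadratic neuron:
    (w_r.x + b_r) * (w_g.x + b_g) + w_b.(x*x) + c
    """
    x = np.asarray(x, dtype=np.float64)
    product_term = (w_r @ x + b_r) * (w_g @ x + b_g)  # product of two affine maps
    power_term = w_b @ (x * x)                        # weighted sum of squared inputs
    return product_term + power_term + c
```

Under this parameterization, setting `w_g = 0`, `b_g = 1`, `w_b = 0`, and `c = 0` reduces the expression to the conventional `w_r @ x + b_r`, so a conventional neuron is a special case of the quadratic one.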

Low-dimensional Manifold Constrained Disentanglement Network for Metal Artifact Reduction

Jul 08, 2020

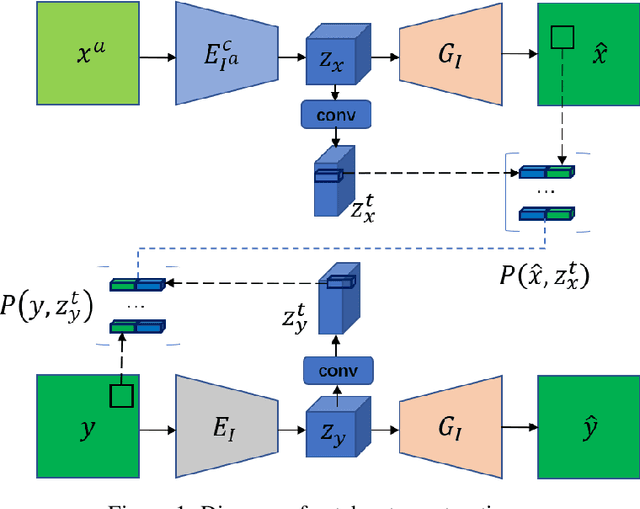

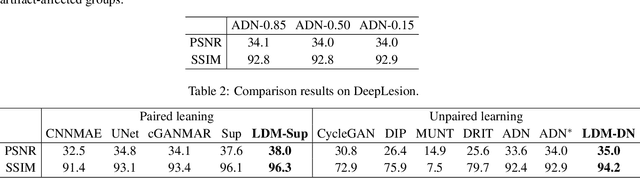

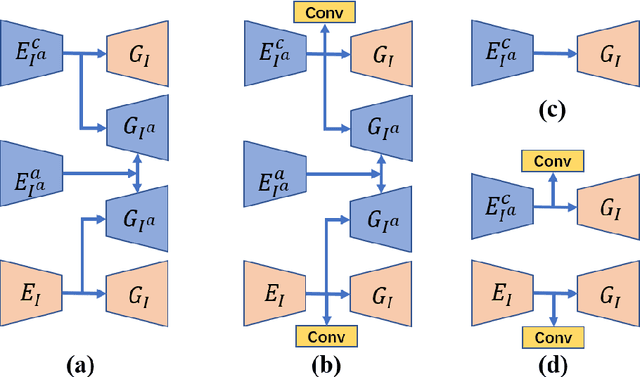

Abstract: Deep neural network based methods have achieved promising results for CT metal artifact reduction (MAR), most of which use many synthesized paired images for training. As synthesized metal artifacts in CT images may not accurately reflect the clinical counterparts, an artifact disentanglement network (ADN) was proposed that uses unpaired clinical images directly, producing promising results on clinical datasets. However, without sufficient supervision, it is difficult for ADN to recover structural details of artifact-affected CT images based on adversarial losses only. To overcome this problem, here we propose a low-dimensional manifold (LDM) constrained disentanglement network (DN), leveraging the image characteristic that the patch manifold is generally low-dimensional. Specifically, we design an LDM-DN learning algorithm to empower the disentanglement network by optimizing the synergistic network loss functions while constraining the recovered images to lie on a low-dimensional patch manifold. Moreover, learning from both paired and unpaired data, an efficient hybrid optimization scheme is proposed to further improve the MAR performance on clinical datasets. Extensive experiments demonstrate that the proposed LDM-DN approach can consistently improve the MAR performance in paired and/or unpaired learning settings, outperforming competing methods on synthesized and clinical datasets.

X-ray Photon-Counting Data Correction through Deep Learning

Jul 06, 2020

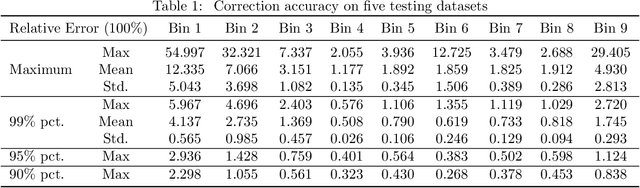

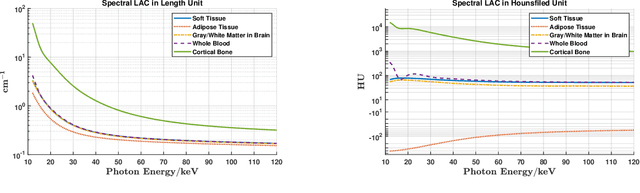

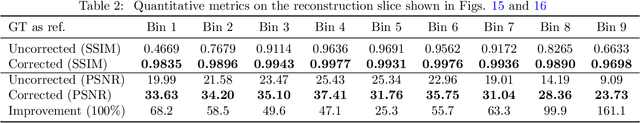

Abstract: X-ray photon-counting detectors (PCDs) have drawn increasing attention in recent years due to their low noise and energy discrimination capabilities. The energy/spectral dimension associated with PCDs potentially brings great benefits for material decomposition, beam hardening and metal artifact reduction, as well as low-dose CT imaging. However, X-ray PCDs are currently limited by several technical issues, particularly charge splitting (including charge sharing and K-shell fluorescence re-absorption or escape) and pulse pile-up effects, which distort the energy spectrum and compromise the data quality. Correction of raw PCD measurements with hardware improvement and analytic modeling is rather expensive and complicated. Hence, here we propose a deep neural network based PCD data correction approach that directly maps imperfect data to the ideal data in the supervised learning mode. In this work, we first establish a complete simulation model incorporating the charge splitting and pulse pile-up effects. The simulated PCD data and the ground truth counterparts are then fed to a specially designed deep adversarial network for PCD data correction. Next, the trained network is used to correct separately generated PCD data. The test results demonstrate that the trained network successfully recovers the ideal spectrum from the distorted measurement within $\pm6\%$ relative error. Significant data and image fidelity improvements are clearly observed in both projection and reconstruction domains.

Soft-Autoencoder and Its Wavelet Shrinkage Interpretation

Dec 31, 2018

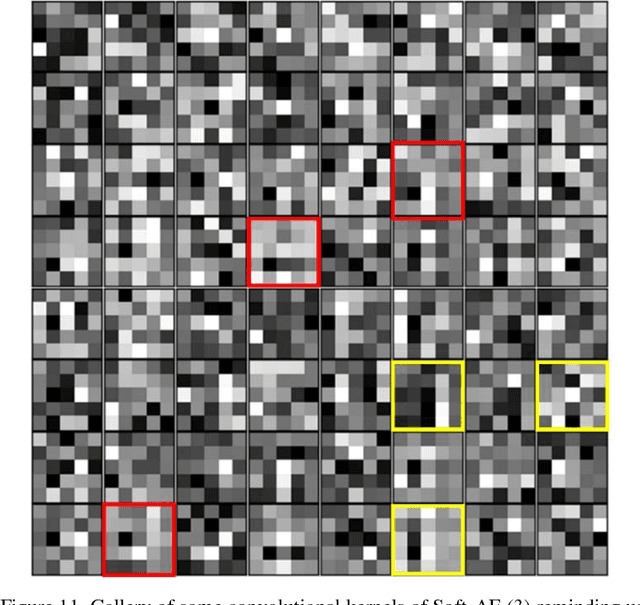

Abstract: Deep learning is a main focus of artificial intelligence and has greatly impacted other fields. However, deep learning is often criticized for its lack of interpretability. As successful unsupervised models in deep learning, autoencoders, especially convolutional autoencoders, are very popular and important. Since these autoencoders need improvements and insights, in this paper we shed light on the nonlinearity of a deep convolutional autoencoder from the perspective of perfect signal recovery. In particular, we propose a new type of convolutional autoencoder, termed Soft-Autoencoder (Soft-AE), in which the activations of encoding layers are implemented with adaptable soft-thresholding units while decoding layers are realized with linear units. Consequently, Soft-AE can be naturally interpreted as a learned cascaded wavelet shrinkage system. Our denoising numerical experiments on CIFAR-10, BSD-300 and the Mayo Clinical Challenge Dataset demonstrate that Soft-AE gives a competitive performance relative to its counterparts.
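
For readers unfamiliar with soft-thresholding, the standard operator used in wavelet shrinkage is `sign(x) * max(|x| - t, 0)`. The sketch below is a plain NumPy version with a fixed threshold `t`; the function name is an assumption for this example, and the adaptable (learned) thresholds described in the abstract are not modeled here.

```python
import numpy as np

def soft_threshold(x, t):
    """Standard soft-thresholding: shrink values toward zero by t, zeroing |x| <= t."""
    x = np.asarray(x, dtype=np.float64)
    return np.sign(x) * np.maximum(np.abs(x) - t, 0.0)
```

The same function can be written with two rectified linear units as `relu(x - t) - relu(-x - t)`, which is one way such a unit could be expressed with standard network building blocks.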
