Alexandre Bousse

Direct Dual-Energy CT Material Decomposition using Model-based Denoising Diffusion Model

Jul 24, 2025

Abstract:Dual-energy X-ray Computed Tomography (DECT) constitutes an advanced technology which enables automatic decomposition of materials in clinical images without manual segmentation using the dependency of the X-ray linear attenuation with energy. However, most methods perform material decomposition in the image domain as a post-processing step after reconstruction but this procedure does not account for the beam-hardening effect and it results in sub-optimal results. In this work, we propose a deep learning procedure called Dual-Energy Decomposition Model-based Diffusion (DEcomp-MoD) for quantitative material decomposition which directly converts the DECT projection data into material images. The algorithm is based on incorporating the knowledge of the spectral DECT model into the deep learning training loss and combining a score-based denoising diffusion learned prior in the material image domain. Importantly the inference optimization loss takes as inputs directly the sinogram and converts to material images through a model-based conditional diffusion model which guarantees consistency of the results. We evaluate the performance with both quantitative and qualitative estimation of the proposed DEcomp-MoD method on synthetic DECT sinograms from the low-dose AAPM dataset. Finally, we show that DEcomp-MoD outperform state-of-the-art unsupervised score-based model and supervised deep learning networks, with the potential to be deployed for clinical diagnosis.

Semi-Supervised Learning for Dose Prediction in Targeted Radionuclide: A Synthetic Data Study

Mar 07, 2025Abstract:Targeted Radionuclide Therapy (TRT) is a modern strategy in radiation oncology that aims to administer a potent radiation dose specifically to cancer cells using cancer-targeting radiopharmaceuticals. Accurate radiation dose estimation tailored to individual patients is crucial. Deep learning, particularly with pre-therapy imaging, holds promise for personalizing TRT doses. However, current methods require large time series of SPECT imaging, which is hardly achievable in routine clinical practice, and thus raises issues of data availability. Our objective is to develop a semi-supervised learning (SSL) solution to personalize dosimetry using pre-therapy images. The aim is to develop an approach that achieves accurate results when PET/CT images are available, but are associated with only a few post-therapy dosimetry data provided by SPECT images. In this work, we introduce an SSL method using a pseudo-label generation approach for regression tasks inspired by the FixMatch framework. The feasibility of the proposed solution was preliminarily evaluated through an in-silico study using synthetic data and Monte Carlo simulation. Experimental results for organ dose prediction yielded promising outcomes, showing that the use of pseudo-labeled data provides better accuracy compared to using only labeled data.

Multi-Branch Generative Models for Multichannel Imaging with an Application to PET/CT Joint Reconstruction

Apr 12, 2024Abstract:This paper presents a proof-of-concept approach for learned synergistic reconstruction of medical images using multi-branch generative models. Leveraging variational autoencoders (VAEs) and generative adversarial networks (GANs), our models learn from pairs of images simultaneously, enabling effective denoising and reconstruction. Synergistic image reconstruction is achieved by incorporating the trained models in a regularizer that evaluates the distance between the images and the model, in a similar fashion to multichannel dictionary learning (DiL). We demonstrate the efficacy of our approach on both Modified National Institute of Standards and Technology (MNIST) and positron emission tomography (PET)/computed tomography (CT) datasets, showcasing improved image quality and information sharing between modalities. Despite challenges such as patch decomposition and model limitations, our results underscore the potential of generative models for enhancing medical imaging reconstruction.

Systematic Review on Learning-based Spectral CT

Apr 15, 2023

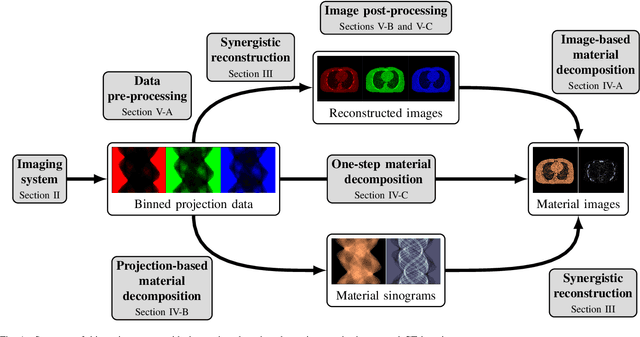

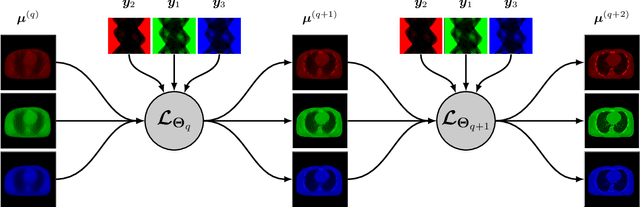

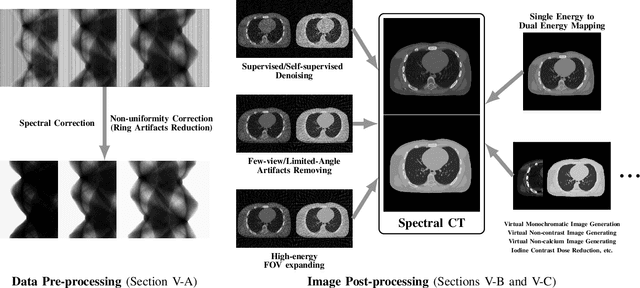

Abstract:Spectral computed tomography (CT) has recently emerged as an advanced version of medical CT and significantly improves conventional (single-energy) CT. Spectral CT has two main forms: dual-energy computed tomography (DECT) and photon-counting computed tomography (PCCT), which offer image improvement, material decomposition, and feature quantification relative to conventional CT. However, the inherent challenges of spectral CT, evidenced by data and image artifacts, remain a bottleneck for clinical applications. To address these problems, machine learning techniques have been widely applied to spectral CT. In this review, we present the state-of-the-art data-driven techniques for spectral CT.

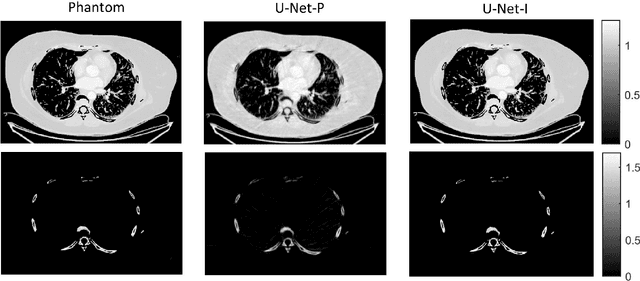

Multi-Channel Convolutional Analysis Operator Learning for Dual-Energy CT Reconstruction

Mar 10, 2022Abstract:Objective. Dual-energy computed tomography (DECT) has the potential to improve contrast, reduce artifacts and the ability to perform material decomposition in advanced imaging applications. The increased number or measurements results with a higher radiation dose and it is therefore essential to reduce either number of projections per energy or the source X-ray intensity, but this makes tomographic reconstruction more ill-posed. Approach. We developed the multi-channel convolutional analysis operator learning (MCAOL) method to exploit common spatial features within attenuation images at different energies and we propose an optimization method which jointly reconstructs the attenuation images at low and high energies with a mixed norm regularization on the sparse features obtained by pre-trained convolutional filters through the convolutional analysis operator learning (CAOL) algorithm. Main results. Extensive experiments with simulated and real computed tomography (CT) data were performed to validate the effectiveness of the proposed methods and we reported increased reconstruction accuracy compared to CAOL and iterative methods with single and joint total-variation (TV) regularization. Significance. Qualitative and quantitative results on sparse-views and low-dose DECT demonstrate that the proposed MCAOL method outperforms both CAOL applied on each energy independently and several existing state-of-the-art model-based iterative reconstruction (MBIR) techniques, thus paving the way for dose reduction.

* 23 pages, 11 figures, published in the Physics in Medicine & Biology journal

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge