Mengyu Yang

CoPHo: Classifier-guided Conditional Topology Generation with Persistent Homology

Dec 17, 2025Abstract:The structure of topology underpins much of the research on performance and robustness, yet available topology data are typically scarce, necessitating the generation of synthetic graphs with desired properties for testing or release. Prior diffusion-based approaches either embed conditions into the diffusion model, requiring retraining for each attribute and hindering real-time applicability, or use classifier-based guidance post-training, which does not account for topology scale and practical constraints. In this paper, we show from a discrete perspective that gradients from a pre-trained graph-level classifier can be incorporated into the discrete reverse diffusion posterior to steer generation toward specified structural properties. Based on this insight, we propose Classifier-guided Conditional Topology Generation with Persistent Homology (CoPHo), which builds a persistent homology filtration over intermediate graphs and interprets features as guidance signals that steer generation toward the desired properties at each denoising step. Experiments on four generic/network datasets demonstrate that CoPHo outperforms existing methods at matching target metrics, and we further validate its transferability on the QM9 molecular dataset.

GEN3D: Generating Domain-Free 3D Scenes from a Single Image

Nov 18, 2025Abstract:Despite recent advancements in neural 3D reconstruction, the dependence on dense multi-view captures restricts their broader applicability. Additionally, 3D scene generation is vital for advancing embodied AI and world models, which depend on diverse, high-quality scenes for learning and evaluation. In this work, we propose Gen3d, a novel method for generation of high-quality, wide-scope, and generic 3D scenes from a single image. After the initial point cloud is created by lifting the RGBD image, Gen3d maintains and expands its world model. The 3D scene is finalized through optimizing a Gaussian splatting representation. Extensive experiments on diverse datasets demonstrate the strong generalization capability and superior performance of our method in generating a world model and Synthesizing high-fidelity and consistent novel views.

Clink! Chop! Thud! -- Learning Object Sounds from Real-World Interactions

Oct 02, 2025

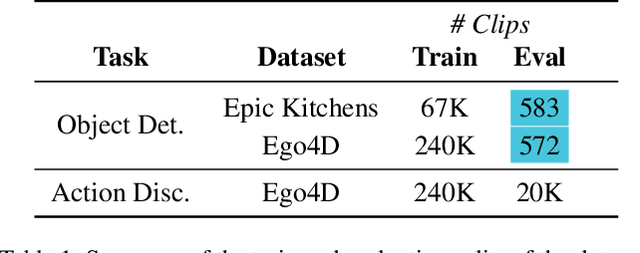

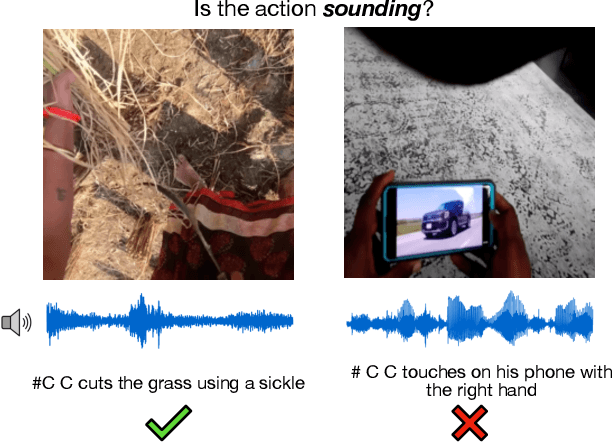

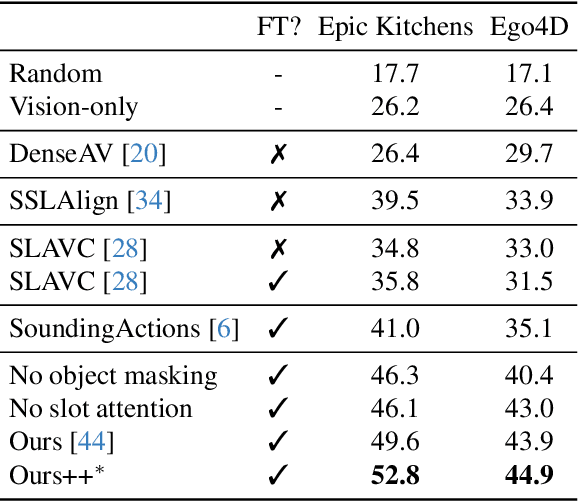

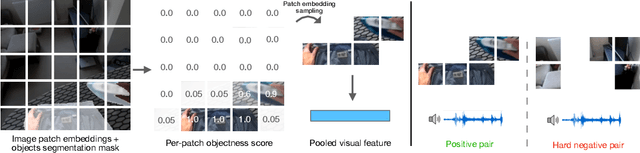

Abstract:Can a model distinguish between the sound of a spoon hitting a hardwood floor versus a carpeted one? Everyday object interactions produce sounds unique to the objects involved. We introduce the sounding object detection task to evaluate a model's ability to link these sounds to the objects directly involved. Inspired by human perception, our multimodal object-aware framework learns from in-the-wild egocentric videos. To encourage an object-centric approach, we first develop an automatic pipeline to compute segmentation masks of the objects involved to guide the model's focus during training towards the most informative regions of the interaction. A slot attention visual encoder is used to further enforce an object prior. We demonstrate state of the art performance on our new task along with existing multimodal action understanding tasks.

SDP: Spiking Diffusion Policy for Robotic Manipulation with Learnable Channel-Wise Membrane Thresholds

Sep 17, 2024

Abstract:This paper introduces a Spiking Diffusion Policy (SDP) learning method for robotic manipulation by integrating Spiking Neurons and Learnable Channel-wise Membrane Thresholds (LCMT) into the diffusion policy model, thereby enhancing computational efficiency and achieving high performance in evaluated tasks. Specifically, the proposed SDP model employs the U-Net architecture as the backbone for diffusion learning within the Spiking Neural Network (SNN). It strategically places residual connections between the spike convolution operations and the Leaky Integrate-and-Fire (LIF) nodes, thereby preventing disruptions to the spiking states. Additionally, we introduce a temporal encoding block and a temporal decoding block to transform static and dynamic data with timestep $T_S$ into each other, enabling the transmission of data within the SNN in spike format. Furthermore, we propose LCMT to enable the adaptive acquisition of membrane potential thresholds, thereby matching the conditions of varying membrane potentials and firing rates across channels and avoiding the cumbersome process of manually setting and tuning hyperparameters. Evaluating the SDP model on seven distinct tasks with SNN timestep $T_S=4$, we achieve results comparable to those of the ANN counterparts, along with faster convergence speeds than the baseline SNN method. This improvement is accompanied by a reduction of 94.3\% in dynamic energy consumption estimated on 45nm hardware.

AdaViPro: Region-based Adaptive Visual Prompt for Large-Scale Models Adapting

Mar 20, 2024

Abstract:Recently, prompt-based methods have emerged as a new alternative `parameter-efficient fine-tuning' paradigm, which only fine-tunes a small number of additional parameters while keeping the original model frozen. However, despite achieving notable results, existing prompt methods mainly focus on `what to add', while overlooking the equally important aspect of `where to add', typically relying on the manually crafted placement. To this end, we propose a region-based Adaptive Visual Prompt, named AdaViPro, which integrates the `where to add' optimization of the prompt into the learning process. Specifically, we reconceptualize the `where to add' optimization as a problem of regional decision-making. During inference, AdaViPro generates a regionalized mask map for the whole image, which is composed of 0 and 1, to designate whether to apply or discard the prompt in each specific area. Therefore, we employ Gumbel-Softmax sampling to enable AdaViPro's end-to-end learning through standard back-propagation. Extensive experiments demonstrate that our AdaViPro yields new efficiency and accuracy trade-offs for adapting pre-trained models.

The Un-Kidnappable Robot: Acoustic Localization of Sneaking People

Oct 05, 2023

Abstract:How easy is it to sneak up on a robot? We examine whether we can detect people using only the incidental sounds they produce as they move, even when they try to be quiet. We collect a robotic dataset of high-quality 4-channel audio paired with 360 degree RGB data of people moving in different indoor settings. We train models that predict if there is a moving person nearby and their location using only audio. We implement our method on a robot, allowing it to track a single person moving quietly with only passive audio sensing. For demonstration videos, see our project page: https://sites.google.com/view/unkidnappable-robot

View while Moving: Efficient Video Recognition in Long-untrimmed Videos

Aug 09, 2023

Abstract:Recent adaptive methods for efficient video recognition mostly follow the two-stage paradigm of "preview-then-recognition" and have achieved great success on multiple video benchmarks. However, this two-stage paradigm involves two visits of raw frames from coarse-grained to fine-grained during inference (cannot be parallelized), and the captured spatiotemporal features cannot be reused in the second stage (due to varying granularity), being not friendly to efficiency and computation optimization. To this end, inspired by human cognition, we propose a novel recognition paradigm of "View while Moving" for efficient long-untrimmed video recognition. In contrast to the two-stage paradigm, our paradigm only needs to access the raw frame once. The two phases of coarse-grained sampling and fine-grained recognition are combined into unified spatiotemporal modeling, showing great performance. Moreover, we investigate the properties of semantic units in video and propose a hierarchical mechanism to efficiently capture and reason about the unit-level and video-level temporal semantics in long-untrimmed videos respectively. Extensive experiments on both long-untrimmed and short-trimmed videos demonstrate that our approach outperforms state-of-the-art methods in terms of accuracy as well as efficiency, yielding new efficiency and accuracy trade-offs for video spatiotemporal modeling.

Improving Social Media Popularity Prediction with Multiple Post Dependencies

Jul 28, 2023

Abstract:Social Media Popularity Prediction has drawn a lot of attention because of its profound impact on many different applications, such as recommendation systems and multimedia advertising. Despite recent efforts to leverage the content of social media posts to improve prediction accuracy, many existing models fail to fully exploit the multiple dependencies between posts, which are important to comprehensively extract content information from posts. To tackle this problem, we propose a novel prediction framework named Dependency-aware Sequence Network (DSN) that exploits both intra- and inter-post dependencies. For intra-post dependency, DSN adopts a multimodal feature extractor with an efficient fine-tuning strategy to obtain task-specific representations from images and textual information of posts. For inter-post dependency, DSN uses a hierarchical information propagation method to learn category representations that could better describe the difference between posts. DSN also exploits recurrent networks with a series of gating layers for more flexible local temporal processing abilities and multi-head attention for long-term dependencies. The experimental results on the Social Media Popularity Dataset demonstrate the superiority of our method compared to existing state-of-the-art models.

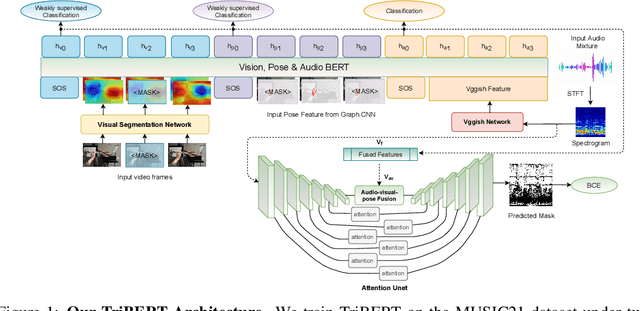

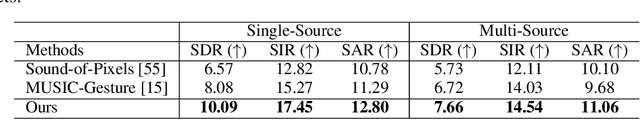

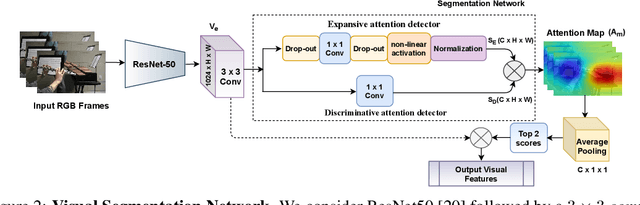

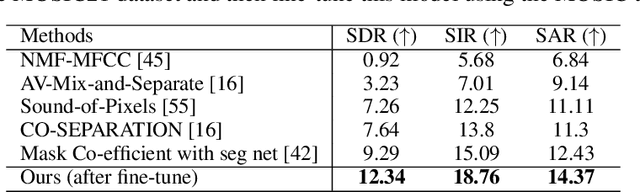

TriBERT: Full-body Human-centric Audio-visual Representation Learning for Visual Sound Separation

Oct 26, 2021

Abstract:The recent success of transformer models in language, such as BERT, has motivated the use of such architectures for multi-modal feature learning and tasks. However, most multi-modal variants (e.g., ViLBERT) have limited themselves to visual-linguistic data. Relatively few have explored its use in audio-visual modalities, and none, to our knowledge, illustrate them in the context of granular audio-visual detection or segmentation tasks such as sound source separation and localization. In this work, we introduce TriBERT -- a transformer-based architecture, inspired by ViLBERT, which enables contextual feature learning across three modalities: vision, pose, and audio, with the use of flexible co-attention. The use of pose keypoints is inspired by recent works that illustrate that such representations can significantly boost performance in many audio-visual scenarios where often one or more persons are responsible for the sound explicitly (e.g., talking) or implicitly (e.g., sound produced as a function of human manipulating an object). From a technical perspective, as part of the TriBERT architecture, we introduce a learned visual tokenization scheme based on spatial attention and leverage weak-supervision to allow granular cross-modal interactions for visual and pose modalities. Further, we supplement learning with sound-source separation loss formulated across all three streams. We pre-train our model on the large MUSIC21 dataset and demonstrate improved performance in audio-visual sound source separation on that dataset as well as other datasets through fine-tuning. In addition, we show that the learned TriBERT representations are generic and significantly improve performance on other audio-visual tasks such as cross-modal audio-visual-pose retrieval by as much as 66.7% in top-1 accuracy.

* 10 pages, 5 Figures, Neurips 2021

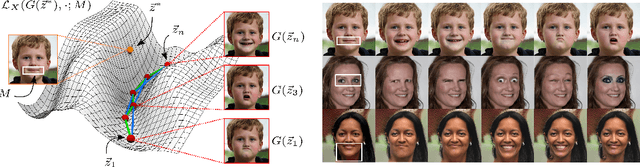

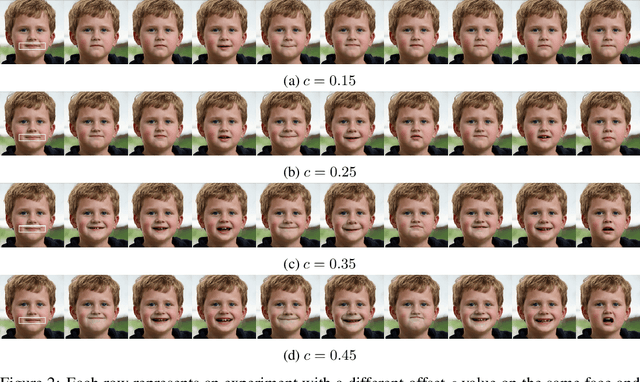

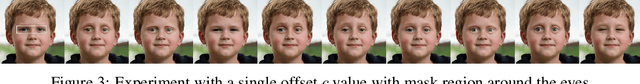

Mask-Guided Discovery of Semantic Manifolds in Generative Models

May 15, 2021

Abstract:Advances in the realm of Generative Adversarial Networks (GANs) have led to architectures capable of producing amazingly realistic images such as StyleGAN2, which, when trained on the FFHQ dataset, generates images of human faces from random vectors in a lower-dimensional latent space. Unfortunately, this space is entangled - translating a latent vector along its axes does not correspond to a meaningful transformation in the output space (e.g., smiling mouth, squinting eyes). The model behaves as a black box, providing neither control over its output nor insight into the structures it has learned from the data. We present a method to explore the manifolds of changes of spatially localized regions of the face. Our method discovers smoothly varying sequences of latent vectors along these manifolds suitable for creating animations. Unlike existing disentanglement methods that either require labelled data or explicitly alter internal model parameters, our method is an optimization-based approach guided by a custom loss function and manually defined region of change. Our code is open-sourced, which can be found, along with supplementary results, on our project page: https://github.com/bmolab/masked-gan-manifold

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge