Charles C. Kemp

Exercise Specialists Evaluation of Robot-led Physical Therapy for People with Parkinsons Disease

Feb 07, 2025

Abstract:Robot-led physical therapy (PT) offers a promising avenue to enhance the care provided by clinical exercise specialists (ES) and physical and occupational therapists to improve patients' adherence to prescribed exercises outside of a clinic, such as at home. Collaborative efforts among roboticists, ES, physical and occupational therapists, and patients are essential for developing interactive, personalized exercise systems that meet each stakeholder's needs. We conducted a user study in which 11 ES evaluated a novel robot-led PT system for people with Parkinson's disease (PD), introduced in [1], focusing on the system's perceived efficacy and acceptance. Utilizing a mixed-methods approach, including technology acceptance questionnaires, task load questionnaires, and semi-structured interviews, we gathered comprehensive insights into ES perspectives and experiences after interacting with the system. Findings reveal a broadly positive reception, which highlights the system's capacity to augment traditional PT for PD, enhance patient engagement, and ensure consistent exercise support. We also identified two key areas for improvement: incorporating more human-like feedback systems and increasing the robot's ease of use. This research emphasizes the value of incorporating robotic aids into PT for PD, offering insights that can guide the development of more effective and user-friendly rehabilitation technologies.

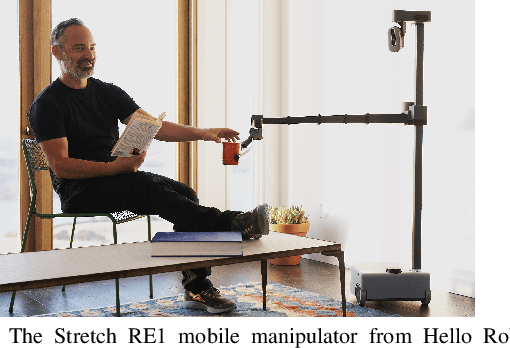

Stretch with Stretch: Physical Therapy Exercise Games Led by a Mobile Manipulator

Dec 21, 2023

Abstract:Physical therapy (PT) is a key component of many rehabilitation regimens, such as treatments for Parkinson's disease (PD). However, there are shortages of physical therapists and adherence to self-guided PT is low. Robots have the potential to support physical therapists and increase adherence to self-guided PT, but prior robotic systems have been large and immobile, which can be a barrier to use in homes and clinics. We present Stretch with Stretch (SWS), a novel robotic system for leading stretching exercise games for older adults with PD. SWS consists of a compact and lightweight mobile manipulator (Hello Robot Stretch RE1) that visually and verbally guides users through PT exercises. The robot's soft end effector serves as a target that users repetitively reach towards and press with a hand, foot, or knee. For each exercise, target locations are customized for the individual via a visually estimated kinematic model, a haptically estimated range of motion, and the person's exercise performance. The system includes sound effects and verbal feedback from the robot to keep users engaged throughout a session and augment physical exercise with cognitive exercise. We conducted a user study for which people with PD (n=10) performed 6 exercises with the system. Participants perceived the SWS to be useful and easy to use. They also reported mild to moderate perceived exertion (RPE).

The Un-Kidnappable Robot: Acoustic Localization of Sneaking People

Oct 05, 2023

Abstract:How easy is it to sneak up on a robot? We examine whether we can detect people using only the incidental sounds they produce as they move, even when they try to be quiet. We collect a robotic dataset of high-quality 4-channel audio paired with 360 degree RGB data of people moving in different indoor settings. We train models that predict if there is a moving person nearby and their location using only audio. We implement our method on a robot, allowing it to track a single person moving quietly with only passive audio sensing. For demonstration videos, see our project page: https://sites.google.com/view/unkidnappable-robot

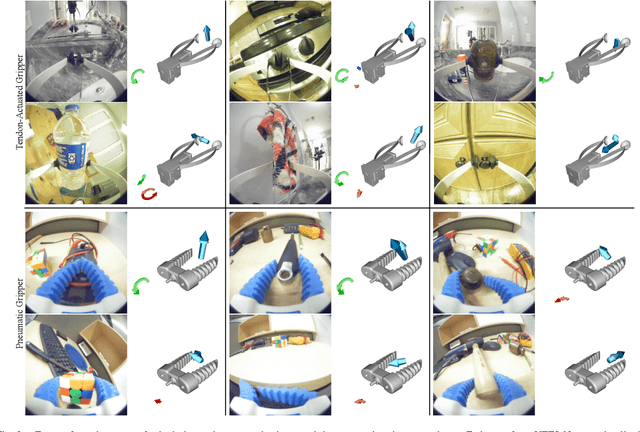

ForceSight: Text-Guided Mobile Manipulation with Visual-Force Goals

Sep 24, 2023

Abstract:We present ForceSight, a system for text-guided mobile manipulation that predicts visual-force goals using a deep neural network. Given a single RGBD image combined with a text prompt, ForceSight determines a target end-effector pose in the camera frame (kinematic goal) and the associated forces (force goal). Together, these two components form a visual-force goal. Prior work has demonstrated that deep models outputting human-interpretable kinematic goals can enable dexterous manipulation by real robots. Forces are critical to manipulation, yet have typically been relegated to lower-level execution in these systems. When deployed on a mobile manipulator equipped with an eye-in-hand RGBD camera, ForceSight performed tasks such as precision grasps, drawer opening, and object handovers with an 81% success rate in unseen environments with object instances that differed significantly from the training data. In a separate experiment, relying exclusively on visual servoing and ignoring force goals dropped the success rate from 90% to 45%, demonstrating that force goals can significantly enhance performance. The appendix, videos, code, and trained models are available at https://force-sight.github.io/.
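As a rough illustration of the visual-force goal described in the abstract above, the sketch below pairs a kinematic goal with a force goal in one record and checks both before declaring a goal reached. All class names, field names, units, and tolerances here are hypothetical and chosen for illustration; they are not taken from the ForceSight code release, and the network that predicts the goal is not modeled.

```python
import math
from dataclasses import dataclass
from typing import Tuple

Vec3 = Tuple[float, float, float]


@dataclass(frozen=True)
class KinematicGoal:
    """Hypothetical target end-effector pose, expressed in the camera frame."""
    position_m: Vec3
    orientation_rpy_rad: Vec3


@dataclass(frozen=True)
class ForceGoal:
    """Hypothetical target forces associated with the kinematic goal."""
    applied_force_n: Vec3
    grip_force_n: float


@dataclass(frozen=True)
class VisualForceGoal:
    """A kinematic goal and a force goal bundled together."""
    kinematic: KinematicGoal
    force: ForceGoal


def goal_reached(
    goal: VisualForceGoal,
    measured_position_m: Vec3,
    measured_force_n: Vec3,
    pos_tol_m: float = 0.01,    # assumed tolerance, not from the paper
    force_tol_n: float = 1.0,   # assumed tolerance, not from the paper
) -> bool:
    """Return True only if both the position and the applied force are within tolerance."""
    pos_err = math.dist(goal.kinematic.position_m, measured_position_m)
    force_err = math.dist(goal.force.applied_force_n, measured_force_n)
    return pos_err <= pos_tol_m and force_err <= force_tol_n


# Example: a made-up goal of pressing forward with 5 N at a point 40 cm ahead.
example_goal = VisualForceGoal(
    kinematic=KinematicGoal((0.0, 0.0, 0.40), (0.0, 0.0, 0.0)),
    force=ForceGoal((0.0, 0.0, 5.0), grip_force_n=2.0),
)
```

With this structure, a controller that checked only position would report success as soon as the gripper arrived at the target, even when no force had been applied; requiring the force term as well is one simple way to express why the force goal matters.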

Visual Contact Pressure Estimation for Grippers in the Wild

Mar 13, 2023

Abstract:Sensing contact pressure applied by a gripper is useful for autonomous and teleoperated robotic manipulation, but adding tactile sensing to a gripper's surface can be difficult or impractical. If a gripper visibly deforms when forces are applied, contact pressure can be visually estimated using images from an external camera that observes the gripper. While researchers have demonstrated this capability in controlled laboratory settings, prior work has not addressed challenges associated with visual pressure estimation in the wild, where lighting, surfaces, and other factors vary widely. We present a deep learning model and associated methods that enable visual pressure estimation under widely varying conditions. Our model, Visual Pressure Estimation for Robots (ViPER), takes an image from an eye-in-hand camera as input and outputs an image representing the pressure applied by a soft gripper. Our key insight is that force/torque sensing can be used as a weak label to efficiently collect training data in settings where pressure measurements would be difficult to obtain. When trained on this weakly labeled data combined with fully labeled data containing pressure measurements, ViPER outperforms prior methods, enables precision manipulation in cluttered settings, and provides accurate estimates for unseen conditions relevant to in-home use.

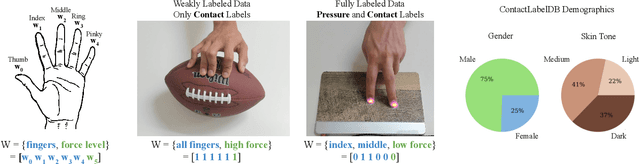

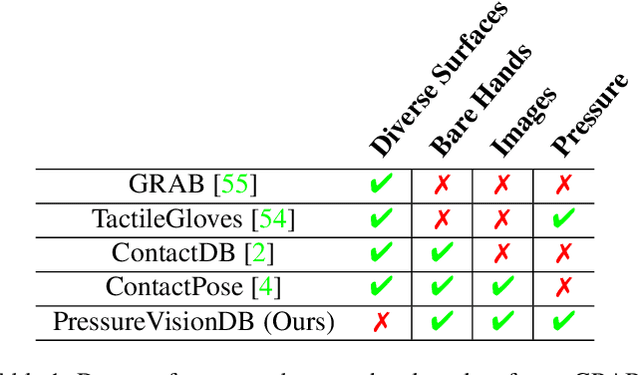

Visual Estimation of Fingertip Pressure on Diverse Surfaces using Easily Captured Data

Jan 05, 2023

Abstract:Prior research has shown that deep models can estimate the pressure applied by a hand to a surface based on a single RGB image. Training these models requires high-resolution pressure measurements that are difficult to obtain with physical sensors. Additionally, even experts cannot reliably annotate pressure from images. Thus, data collection is a critical barrier to generalization and improved performance. We present a novel approach that enables training data to be efficiently captured from unmodified surfaces with only an RGB camera and a cooperative participant. Our key insight is that people can be prompted to perform actions that correspond with categorical labels (contact labels) describing contact pressure, such as using a specific fingertip to make low-force contact. We present ContactLabelNet, which visually estimates pressure applied by fingertips. With the use of contact labels, ContactLabelNet achieves improved performance, generalizes to novel surfaces, and outperforms models from prior work.

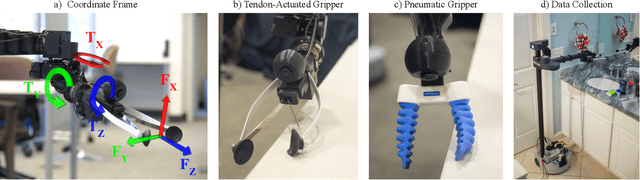

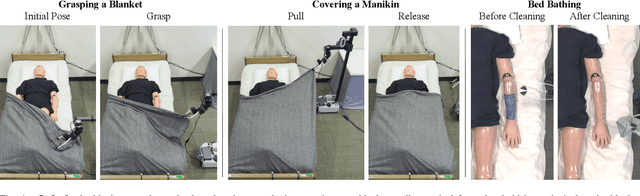

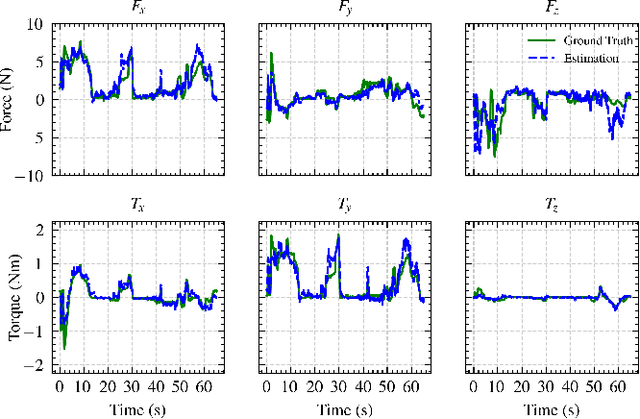

Force/Torque Sensing for Soft Grippers using an External Camera

Sep 30, 2022

Abstract:Robotic manipulation can benefit from wrist-mounted force/torque (F/T) sensors, but conventional F/T sensors can be expensive, difficult to install, and damaged by high loads. We present Visual Force/Torque Sensing (VFTS), a method that visually estimates the 6-axis F/T measurement that would be reported by a conventional F/T sensor. In contrast to approaches that sense loads using internal cameras placed behind soft exterior surfaces, our approach uses an external camera with a fisheye lens that observes a soft gripper. VFTS includes a deep learning model that takes a single RGB image as input and outputs a 6-axis F/T estimate. We trained the model with sensor data collected while teleoperating a robot (Stretch RE1 from Hello Robot Inc.) to perform manipulation tasks. VFTS outperformed F/T estimates based on motor currents, generalized to a novel home environment, and supported three autonomous tasks relevant to healthcare: grasping a blanket, pulling a blanket over a manikin, and cleaning a manikin's limbs. VFTS also performed well with a manually operated pneumatic gripper. Overall, our results suggest that an external camera observing a soft gripper can perform useful visual force/torque sensing for a variety of manipulation tasks.

Visual Pressure Estimation and Control for Soft Robotic Grippers

Apr 14, 2022

Abstract:Soft robotic grippers facilitate contact-rich manipulation, including robust grasping of varied objects. Yet the beneficial compliance of a soft gripper also results in significant deformation that can make precision manipulation challenging. We present visual pressure estimation & control (VPEC), a method that uses a single RGB image of an unmodified soft gripper from an external camera to directly infer pressure applied to the world by the gripper. We present inference results for a pneumatic gripper and a tendon-actuated gripper making contact with a flat surface. We also show that VPEC enables precision manipulation via closed-loop control of inferred pressure. We present results for a mobile manipulator (Stretch RE1 from Hello Robot) using visual servoing to do the following: achieve target pressures when making contact; follow a spatial pressure trajectory; and grasp small objects, including a microSD card, a washer, a penny, and a pill. Overall, our results show that VPEC enables grippers with high compliance to perform precision manipulation.
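The closed-loop control of inferred pressure mentioned in the abstract above can be sketched as a simple proportional loop. This is only a minimal illustration under stated assumptions: `estimate_pressure_kpa` is a hypothetical linear contact model standing in for the image-based estimator, and the gain, stiffness, and tolerance values are invented. It is not the controller used in the paper.

```python
from typing import Tuple


def estimate_pressure_kpa(depth_mm: float, stiffness_kpa_per_mm: float = 2.0) -> float:
    """Stand-in for a visual pressure estimator (assumption).

    Pressure is modeled as growing linearly with indentation depth.
    """
    return max(0.0, depth_mm) * stiffness_kpa_per_mm


def servo_to_pressure(
    target_kpa: float,
    gain_mm_per_kpa: float = 0.2,  # assumed proportional gain
    max_steps: int = 50,
    tol_kpa: float = 0.05,
) -> Tuple[float, float]:
    """Adjust indentation depth until the estimated pressure matches the target.

    Returns the final depth (mm) and the final estimated pressure (kPa).
    """
    depth_mm = 0.0
    for _ in range(max_steps):
        error_kpa = target_kpa - estimate_pressure_kpa(depth_mm)
        if abs(error_kpa) <= tol_kpa:
            break
        # Proportional update: press deeper when pressure is too low, back off when too high.
        depth_mm += gain_mm_per_kpa * error_kpa
    return depth_mm, estimate_pressure_kpa(depth_mm)


final_depth_mm, final_pressure_kpa = servo_to_pressure(10.0)
```

In this toy model the error shrinks by a constant factor at each step, so the loop settles near the depth at which the modeled pressure equals the target. A real system would replace the stand-in estimator with per-frame inference from the camera image.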

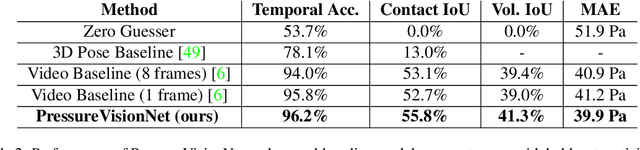

PressureVision: Estimating Hand Pressure from a Single RGB Image

Mar 19, 2022

Abstract:People often interact with their surroundings by applying pressure with their hands. Machine perception of hand pressure has been limited by the challenges of placing sensors between the hand and the contact surface. We explore the possibility of using a conventional RGB camera to infer hand pressure. The central insight is that the application of pressure by a hand results in informative appearance changes. Hands share biomechanical properties that result in similar observable phenomena, such as soft-tissue deformation, blood distribution, hand pose, and cast shadows. We collected videos of 36 participants with diverse skin tone applying pressure to an instrumented planar surface. We then trained a deep model (PressureVisionNet) to infer a pressure image from a single RGB image. Our model infers pressure for participants outside of the training data and outperforms baselines. We also show that the output of our model depends on the appearance of the hand and cast shadows near contact regions. Overall, our results suggest the appearance of a previously unobserved human hand can be used to accurately infer applied pressure.

The Design of Stretch: A Compact, Lightweight Mobile Manipulator for Indoor Human Environments

Sep 22, 2021

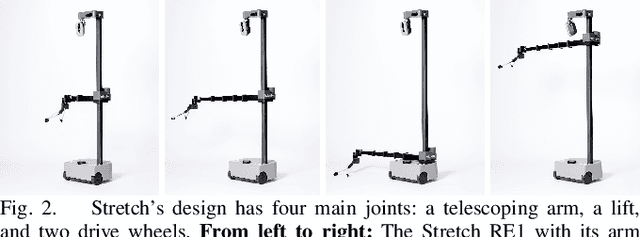

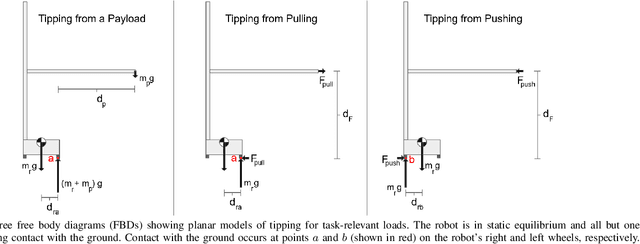

Abstract:Mobile manipulators for indoor human environments can serve as versatile devices that perform a variety of tasks, yet adoption of this technology has been limited. Reducing size, weight, and cost could facilitate adoption, but risks restricting capabilities. We present a novel design that reduces size, weight, and cost, while still performing a variety of tasks. The core design consists of a two-wheeled differential-drive mobile base, a lift, and a telescoping arm configured to achieve Cartesian motion at the end of the arm. Design extensions include a 1 degree-of-freedom (DOF) wrist to stow a tool, a 2-DOF dexterous wrist to pitch and roll a tool, and a compliant gripper. We justify our design with mathematical models of static stability that relate the robot's size and weight to its workspace, payload, and applied forces. We also provide empirical support by teleoperating and autonomously controlling a commercial robot based on our design (the Stretch RE1 from Hello Robot Inc.) to perform tasks in real homes.
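To give a sense of how a static stability model can relate size and weight to payload, the sketch below balances moments about a tipping axis at the edge of the base. It is a simplified textbook-style model under stated assumptions (a rigid robot on flat ground, the payload hanging at the end of a horizontal arm, the arm's own mass folded into the robot's center of mass), not the model from the paper, and the numbers in the example are invented rather than Stretch RE1 specifications.

```python
G_M_PER_S2 = 9.81  # standard gravity


def max_payload_kg(
    robot_mass_kg: float,
    com_to_tip_axis_m: float,
    reach_beyond_tip_axis_m: float,
) -> float:
    """Largest payload mass that does not tip the robot over the edge of its base.

    Moment balance about the tipping axis:
        payload * g * reach <= robot_mass * g * com_distance
    where com_distance is the horizontal distance from the robot's center of
    mass back to the tipping axis, and reach is the horizontal distance from
    the tipping axis out to the payload.
    """
    if reach_beyond_tip_axis_m <= 0.0:
        # Payload sits inside the support region, so it cannot cause tipping.
        return float("inf")
    return robot_mass_kg * com_to_tip_axis_m / reach_beyond_tip_axis_m


def max_downward_force_n(
    robot_mass_kg: float,
    com_to_tip_axis_m: float,
    reach_beyond_tip_axis_m: float,
) -> float:
    """Largest downward force at the arm's end before tipping, under the same model."""
    return G_M_PER_S2 * max_payload_kg(
        robot_mass_kg, com_to_tip_axis_m, reach_beyond_tip_axis_m
    )


# Hypothetical example: a 20 kg robot whose center of mass is 0.1 m inside the
# tipping axis, reaching 0.5 m beyond it.
example_payload_kg = max_payload_kg(20.0, 0.1, 0.5)
```

The model makes the trade-off in the abstract concrete: halving the robot's mass or the distance from its center of mass to the edge of the base halves the payload it can hold at a given reach, while extending the reach reduces the payload in inverse proportion.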
