Mathew Yarossi

User Training with Error Augmentation for Electromyogram-based Gesture Classification

Sep 13, 2023

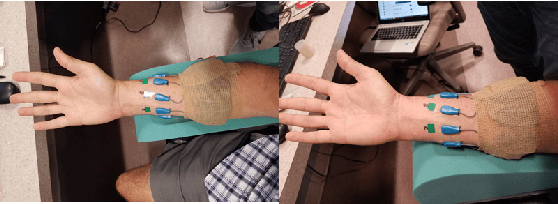

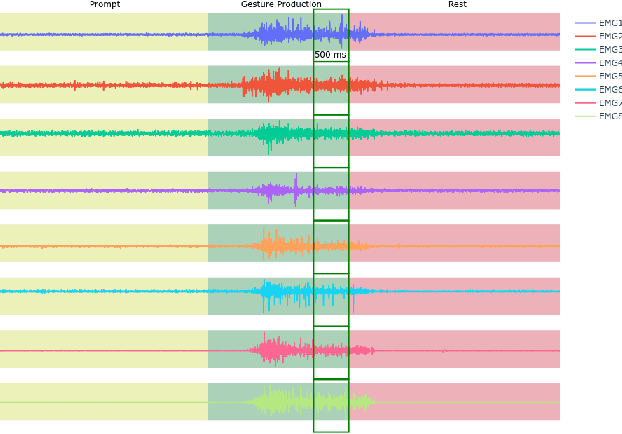

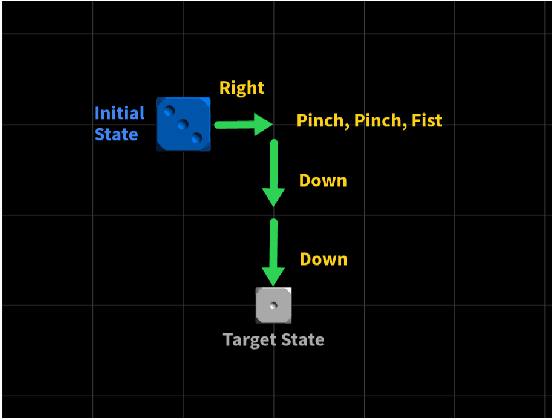

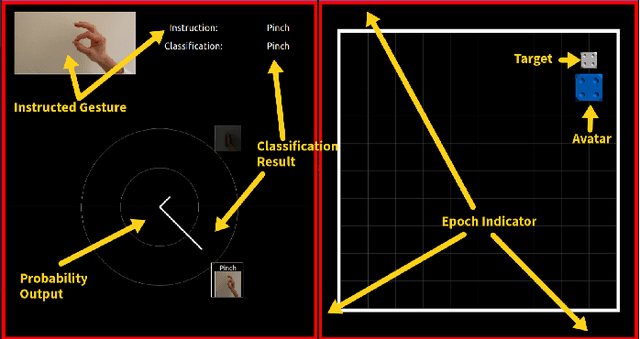

Abstract: We designed and tested a system for real-time control of a user interface using surface electromyographic (sEMG) activity recorded from eight electrodes in a wristband configuration. sEMG data were streamed into a machine-learning algorithm that classified hand gestures in real time. After an initial model calibration, participants were presented with one of three types of feedback during a human-learning stage: veridical feedback, in which predicted probabilities from the gesture classification algorithm were displayed without alteration; modified feedback, in which we applied a hidden augmentation of error to these probabilities; or no feedback. User performance was then evaluated in a series of minigames in which subjects were required to use eight gestures to manipulate their game avatar to complete a task. Experimental results indicated that, relative to baseline, the modified feedback condition led to significantly improved accuracy and improved gesture class separation. These findings suggest that real-time feedback in a gamified user interface, combined with manipulation of that feedback, may enable intuitive, rapid, and accurate task acquisition for sEMG-based gesture recognition applications.
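The abstract does not say how the error augmentation was computed. As a rough illustration only, the sketch below assumes one possible rule: the displayed shortfall of the target-class probability is multiplied by a gain, and the remaining probability mass is rescaled so the displayed values still sum to one. The function name `augment_error`, the `gain` parameter, and the example numbers are all hypothetical.

```python
def augment_error(probs, target, gain=1.5):
    """Return display probabilities with the target-class error exaggerated.

    Hypothetical rule for illustration; not the method used in the paper.
    probs  : classifier probabilities that sum to 1
    target : index of the gesture the user was asked to perform
    gain   : >= 1; gain == 1 reproduces veridical feedback
    """
    if gain < 1.0:
        raise ValueError("gain must be >= 1")
    error = 1.0 - probs[target]
    if error == 0.0:
        # Perfect prediction: nothing to exaggerate.
        return list(probs)
    # Shrink the displayed target probability, clipping at zero.
    shown_target = max(0.0, 1.0 - gain * error)
    # Rescale the other classes so the displayed values sum to 1.
    scale = (1.0 - shown_target) / error
    return [shown_target if i == target else p * scale
            for i, p in enumerate(probs)]


veridical = [0.7, 0.2, 0.1]
modified = augment_error(veridical, target=0, gain=1.5)
```

With a gain of 1 the function returns the classifier output unchanged, which corresponds to the veridical condition; larger gains make the same prediction look worse to the user.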

A Multi-label Classification Approach to Increase Expressivity of EMG-based Gesture Recognition

Sep 13, 2023

Abstract: Objective: The objective of the study is to efficiently increase the expressivity of surface electromyography (sEMG)-based gesture recognition systems. Approach: We use a problem transformation approach in which actions are divided into two biomechanically independent components: a set of wrist directions and a set of finger modifiers. To maintain fast calibration time, we train models for each component using only individual gestures, and extrapolate to the full product space of combination gestures by generating synthetic data. We collected a supervised dataset with high-confidence ground-truth labels in which subjects performed combination gestures while holding a joystick, and conducted experiments to analyze the impact of model architectures, classifier algorithms, and synthetic data generation strategies on the performance of the proposed approach. Main Results: We found that a problem transformation approach using a parallel model architecture in combination with a non-linear classifier, along with restricted synthetic data generation, shows promise in increasing the expressivity of sEMG-based gestures with a short calibration time. Significance: sEMG-based gesture recognition has applications in human-computer interaction, virtual reality, and the control of robotic and prosthetic devices. Existing approaches require exhaustive model calibration. The proposed approach increases expressivity without requiring users to demonstrate all combination gesture classes. Our results may be extended to larger gesture vocabularies and more complicated model architectures.
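A minimal sketch of the product-space idea follows. It assumes that a synthetic combination example can be formed by summing the feature vectors of one single-direction example and one single-modifier example; the abstract does not specify the generation strategy, so this rule, the gesture names, and the feature values are hypothetical.

```python
from itertools import product

# Hypothetical vocabularies; the abstract does not list the actual classes.
DIRECTIONS = ["up", "down", "left", "right"]
MODIFIERS = ["pinch", "thumb"]


def synthesize_combinations(direction_examples, modifier_examples):
    """Pair every direction example with every modifier example.

    Each example is (label, feature_vector). The synthetic feature vector
    is the element-wise sum of the two inputs (an assumed strategy).
    """
    synthetic = []
    for (d_label, d_feat), (m_label, m_feat) in product(
            direction_examples, modifier_examples):
        combined = [a + b for a, b in zip(d_feat, m_feat)]
        synthetic.append(((d_label, m_label), combined))
    return synthetic


direction_examples = [("up", [1.0, 0.0, 0.2]), ("down", [0.0, 1.0, 0.1])]
modifier_examples = [("pinch", [0.3, 0.3, 0.9])]
synthetic = synthesize_combinations(direction_examples, modifier_examples)

# Classes the user demonstrates during calibration vs. classes covered.
calibration_classes = len(DIRECTIONS) + len(MODIFIERS)
combination_classes = len(DIRECTIONS) * len(MODIFIERS)
```

In this toy vocabulary the user demonstrates 6 single gestures while the product space holds 8 combinations; the gap widens as either component set grows, which is the calibration saving the abstract describes.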

Segmentation and Classification of EMG Time-Series During Reach-to-Grasp Motion

Apr 19, 2021

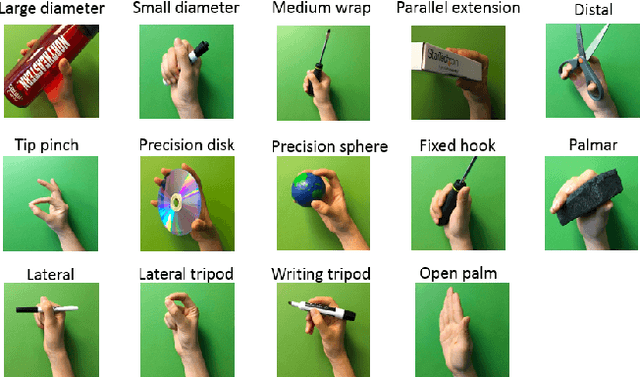

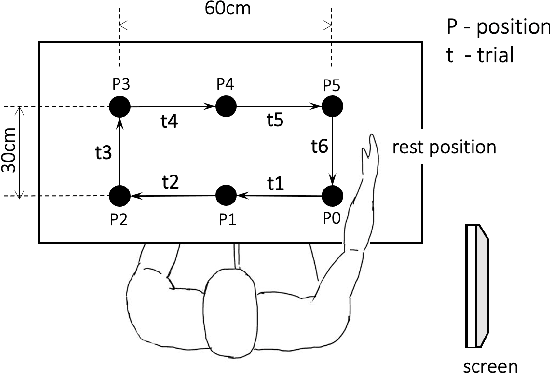

Abstract: Electromyography (EMG) signals have been widely used in human-robot interaction to extract user hand and arm motion instructions. A major challenge in online interaction with robots is reliable EMG recognition from real-time data. However, previous studies have mainly focused on steady-state EMG signals with a small number of grasp patterns when implementing classification algorithms, which is insufficient for robust control given the dynamic variation of muscular activity in practice. Introducing more EMG variability during training and validation could improve dynamic-motion detection, but only limited research has focused on such grasp-movement identification, and all existing assessments of non-static EMG classification require supervised ground-truth labels of the movement status. In this study, we propose a framework for classifying EMG signals generated from continuous grasp movements with variations in dynamic arm and hand postures, using an unsupervised motion status segmentation method. We collected data from large gesture vocabularies with multiple dynamic motion phases to encode the transitions from one intent to another based on common sequences of grasp movements. Two classifiers were constructed to identify the motion-phase label and the grasp-type label, where the dynamic motion phases were segmented and labeled in an unsupervised manner. The proposed framework was evaluated in real time, with the variation in accuracy over time presented, and was shown to be efficient owing to the high degree of freedom of the EMG data.

Multimodal Fusion of EMG and Vision for Human Grasp Intent Inference in Prosthetic Hand Control

Apr 08, 2021

Abstract: For lower arm amputees, robotic prosthetic hands offer the promise of regaining the capability to perform fine object manipulation in activities of daily living. Current control methods based on physiological signals such as EEG and EMG are prone to poor inference outcomes due to motion artifacts, variability of skin-electrode junction impedance over time, muscle fatigue, and other factors. Visual evidence is also susceptible to its own artifacts, most often due to object occlusion, lighting changes, and variable shapes of objects depending on view angle, among other factors. Multimodal evidence fusion using physiological and vision sensor measurements is a natural approach due to the complementary strengths of these modalities. In this paper, we present a Bayesian evidence fusion framework for grasp intent inference using eye-view video, gaze, and EMG from the forearm processed by neural network models. We analyze individual and fused performance as a function of time as the hand approaches the object to grasp it. For this purpose, we have also developed novel data processing and augmentation techniques to train neural network components. Our experimental data analyses demonstrate that EMG and visual evidence show complementary strengths, and as a consequence, fusion of multimodal evidence can outperform each individual evidence modality at any given time. Specifically, results indicate that, on average, fusion improves the instantaneous upcoming grasp type classification accuracy while in the reaching phase by 13.66% and 14.8%, relative to EMG and visual evidence individually. An overall fusion accuracy of 95.3% among 13 labels (compared to a chance level of 7.7%) is achieved, and more detailed analyses indicate that the correct grasp is inferred sufficiently early and with high confidence compared to the top contender to allow successful robot actuation to close the loop.
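The abstract does not give the fusion rule itself. As a hedged illustration, the sketch below assumes the EMG and vision models each output a posterior over grasp types and that the two modalities are conditionally independent given the grasp, so the fused posterior is proportional to the product of the two posteriors divided by the class prior. The function name `fuse_posteriors` and the three-class example values are hypothetical; only the 13-label chance level comes from the abstract.

```python
def fuse_posteriors(p_emg, p_vision, prior=None):
    """Fuse two per-class posteriors under a conditional-independence assumption.

    p(c | emg, vision) is proportional to p(c | emg) * p(c | vision) / p(c).
    A uniform prior is used when none is given.
    """
    n = len(p_emg)
    if len(p_vision) != n:
        raise ValueError("posteriors must cover the same classes")
    if prior is None:
        prior = [1.0 / n] * n
    unnormalized = [e * v / q for e, v, q in zip(p_emg, p_vision, prior)]
    total = sum(unnormalized)
    if total == 0.0:
        raise ValueError("the two posteriors share no support")
    return [u / total for u in unnormalized]


# Toy three-class example (values are made up for illustration).
p_emg = [0.5, 0.3, 0.2]
p_vision = [0.4, 0.5, 0.1]
fused = fuse_posteriors(p_emg, p_vision)

# Chance level for 13 equally likely grasp labels, as a percentage.
chance_percent = 100.0 / 13
```

In the toy example the modalities disagree on the most likely class, and the fused posterior favors the class that both rate reasonably high. The chance level evaluates to about 7.7%, matching the figure quoted in the abstract.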

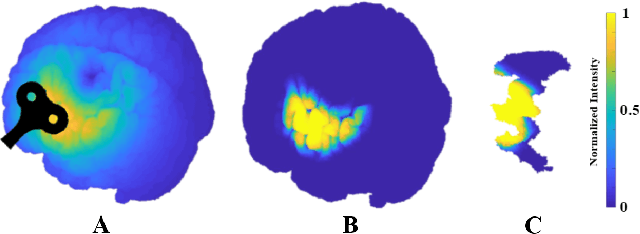

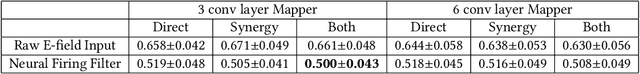

Mapping Motor Cortex Stimulation to Muscle Responses: A Deep Neural Network Modeling Approach

Feb 14, 2020

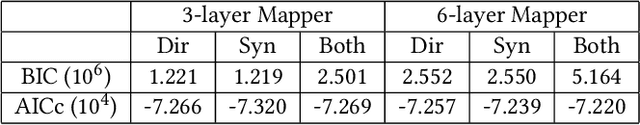

Abstract: A deep neural network (DNN) that can reliably model muscle responses from corresponding brain stimulation has the potential to increase knowledge of coordinated motor control for numerous basic science and applied use cases. Such cases include the understanding of abnormal movement patterns due to neurological injury from stroke, and stimulation-based interventions for neurological recovery such as paired associative stimulation. In this work, potential DNN models are explored, and the one with the minimum squared error is recommended for optimal performance of M2M-Net, a network that maps transcranial magnetic stimulation of the motor cortex to corresponding muscle responses using a finite element simulation, an empirical neural response profile, a convolutional autoencoder, a separate deep network mapper, and recordings of multi-muscle activation. We discuss the rationale behind the different modeling approaches and architectures, and contrast their results. Additionally, to gain comparative insight into the trade-off between complexity and performance, we explore different techniques, including the extension of two classical information criteria to M2M-Net. Finally, we find that the model analogous to mapping the motor cortex stimulation to a combination of direct and synergistic connections to the muscles performs best when the neural response profile is used at the input.
