Lars Rosenbaum

Unscented Autoencoder

Jun 08, 2023

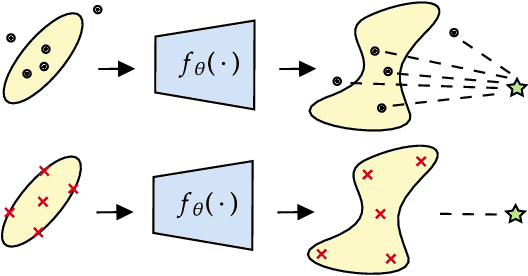

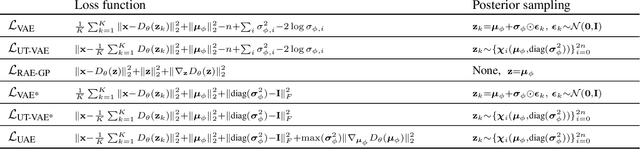

Abstract:The Variational Autoencoder (VAE) is a seminal approach in deep generative modeling with latent variables. Interpreting its reconstruction process as a nonlinear transformation of samples from the latent posterior distribution, we apply the Unscented Transform (UT) -- a well-known distribution approximation used in the Unscented Kalman Filter (UKF) from the field of filtering. A finite set of statistics called sigma points, sampled deterministically, provides a more informative and lower-variance posterior representation than the ubiquitous noise-scaling of the reparameterization trick, while ensuring higher-quality reconstruction. We further boost the performance by replacing the Kullback-Leibler (KL) divergence with the Wasserstein distribution metric that allows for a sharper posterior. Inspired by the two components, we derive a novel, deterministic-sampling flavor of the VAE, the Unscented Autoencoder (UAE), trained purely with regularization-like terms on the per-sample posterior. We empirically show competitive performance in Fr\'echet Inception Distance (FID) scores over closely-related models, in addition to a lower training variance than the VAE.

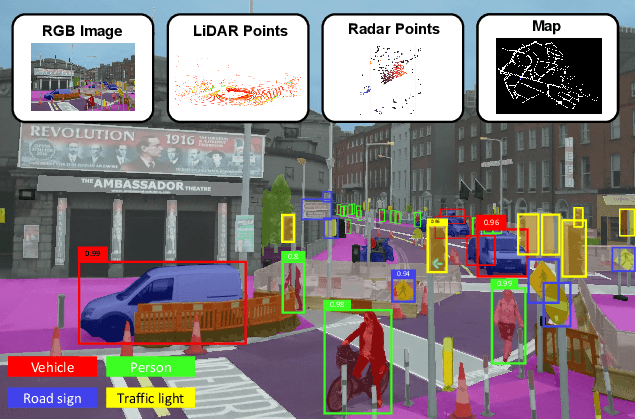

DeepFusion: A Robust and Modular 3D Object Detector for Lidars, Cameras and Radars

Sep 27, 2022

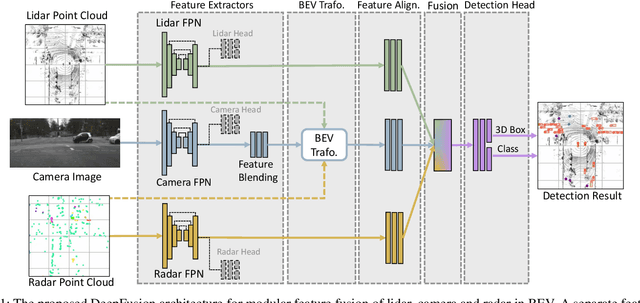

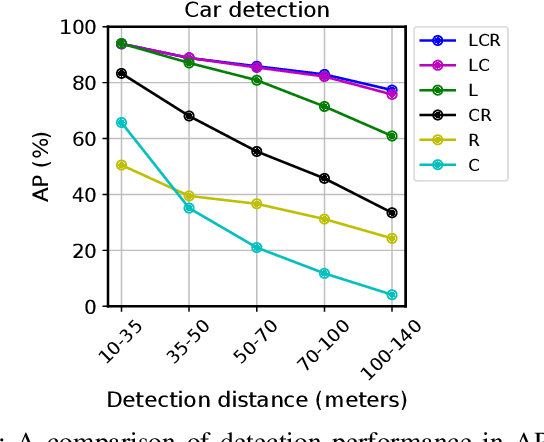

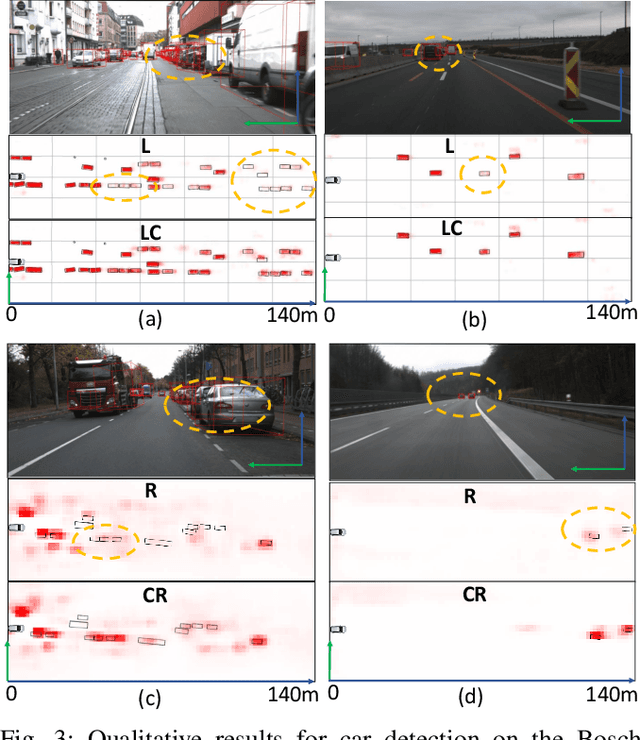

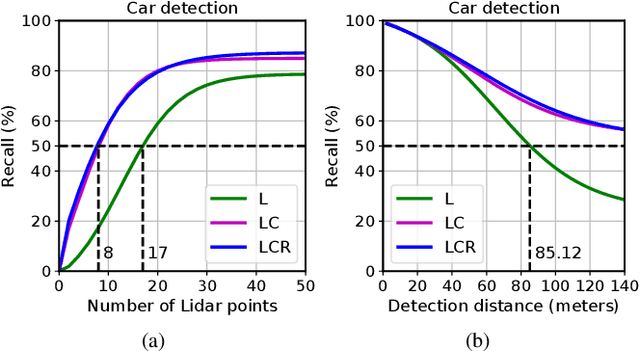

Abstract:We propose DeepFusion, a modular multi-modal architecture to fuse lidars, cameras and radars in different combinations for 3D object detection. Specialized feature extractors take advantage of each modality and can be exchanged easily, making the approach simple and flexible. Extracted features are transformed into bird's-eye-view as a common representation for fusion. Spatial and semantic alignment is performed prior to fusing modalities in the feature space. Finally, a detection head exploits rich multi-modal features for improved 3D detection performance. Experimental results for lidar-camera, lidar-camera-radar and camera-radar fusion show the flexibility and effectiveness of our fusion approach. In the process, we study the largely unexplored task of faraway car detection up to 225 meters, showing the benefits of our lidar-camera fusion. Furthermore, we investigate the required density of lidar points for 3D object detection and illustrate implications at the example of robustness against adverse weather conditions. Moreover, ablation studies on our camera-radar fusion highlight the importance of accurate depth estimation.

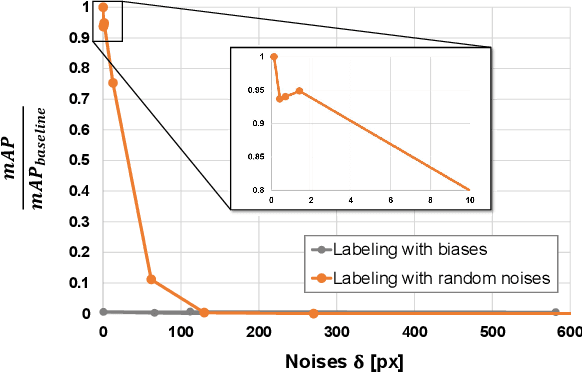

Labels Are Not Perfect: Inferring Spatial Uncertainty in Object Detection

Dec 18, 2020

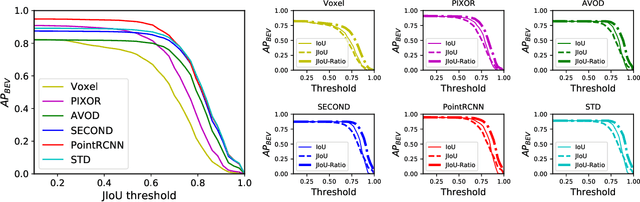

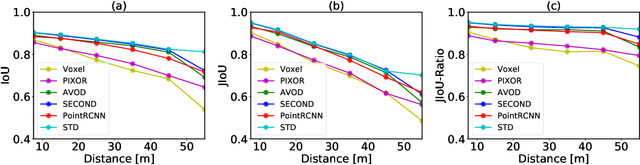

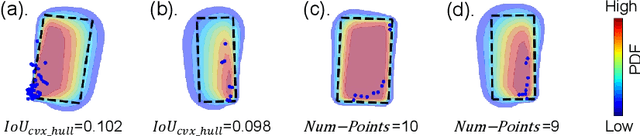

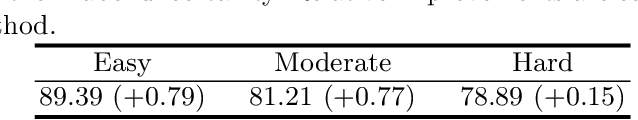

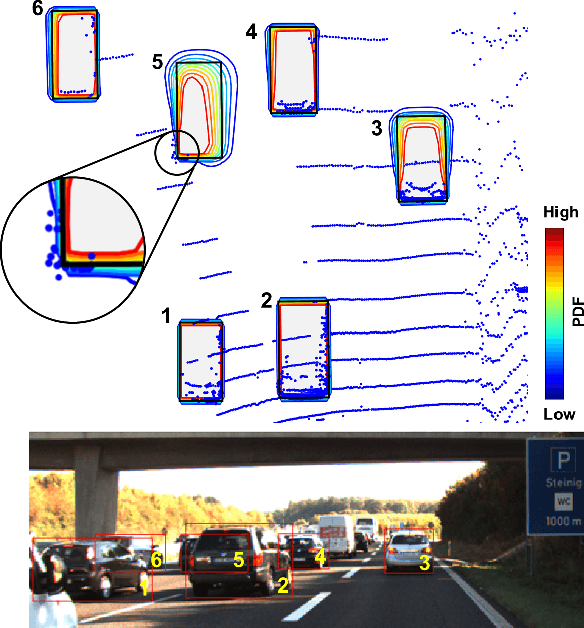

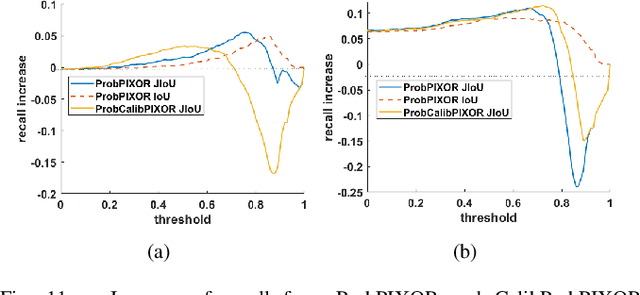

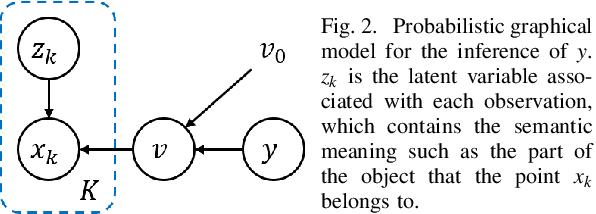

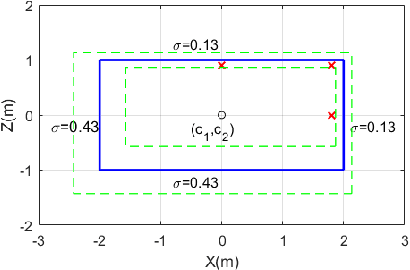

Abstract:The availability of many real-world driving datasets is a key reason behind the recent progress of object detection algorithms in autonomous driving. However, there exist ambiguity or even failures in object labels due to error-prone annotation process or sensor observation noise. Current public object detection datasets only provide deterministic object labels without considering their inherent uncertainty, as does the common training process or evaluation metrics for object detectors. As a result, an in-depth evaluation among different object detection methods remains challenging, and the training process of object detectors is sub-optimal, especially in probabilistic object detection. In this work, we infer the uncertainty in bounding box labels from LiDAR point clouds based on a generative model, and define a new representation of the probabilistic bounding box through a spatial uncertainty distribution. Comprehensive experiments show that the proposed model reflects complex environmental noises in LiDAR perception and the label quality. Furthermore, we propose Jaccard IoU (JIoU) as a new evaluation metric that extends IoU by incorporating label uncertainty. We conduct an in-depth comparison among several LiDAR-based object detectors using the JIoU metric. Finally, we incorporate the proposed label uncertainty in a loss function to train a probabilistic object detector and to improve its detection accuracy. We verify our proposed methods on two public datasets (KITTI, Waymo), as well as on simulation data. Code is released at https://bit.ly/2W534yo.

Labels Are Not Perfect: Improving Probabilistic Object Detection via Label Uncertainty

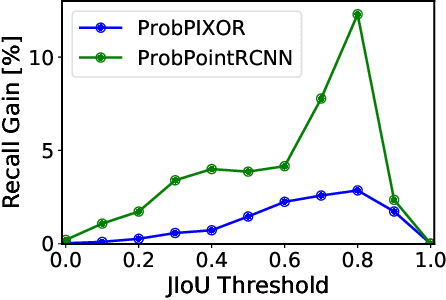

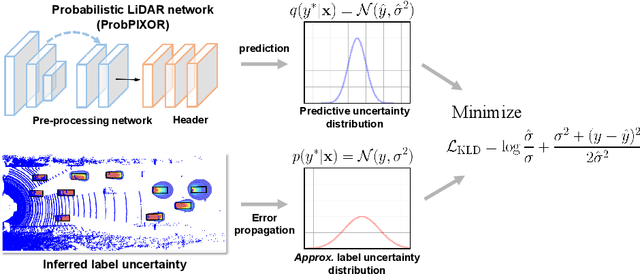

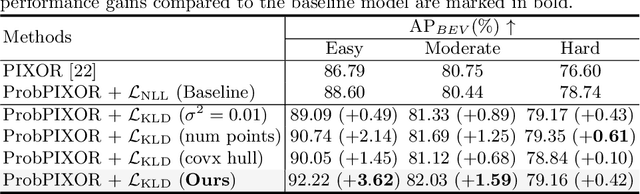

Aug 10, 2020

Abstract:Reliable uncertainty estimation is crucial for robust object detection in autonomous driving. However, previous works on probabilistic object detection either learn predictive probability for bounding box regression in an un-supervised manner, or use simple heuristics to do uncertainty regularization. This leads to unstable training or suboptimal detection performance. In this work, we leverage our previously proposed method for estimating uncertainty inherent in ground truth bounding box parameters (which we call label uncertainty) to improve the detection accuracy of a probabilistic LiDAR-based object detector. Experimental results on the KITTI dataset show that our method surpasses both the baseline model and the models based on simple heuristics by up to 3.6% in terms of Average Precision.

Inferring Spatial Uncertainty in Object Detection

Mar 07, 2020

Abstract:The availability of real-world datasets is the prerequisite to develop object detection methods for autonomous driving. While ambiguity exists in object labels due to error-prone annotation process or sensor observation noises, current object detection datasets only provide deterministic annotations, without considering their uncertainty. This precludes an in-depth evaluation among different object detection methods, especially for those that explicitly model predictive probability. In this work, we propose a generative model to estimate bounding box label uncertainties from LiDAR point clouds, and define a new representation of the probabilistic bounding box through spatial distribution. Comprehensive experiments show that the proposed model represents uncertainties commonly seen in driving scenarios. Based on the spatial distribution, we further propose an extension of IoU, called the Jaccard IoU (JIoU), as a new evaluation metric that incorporates label uncertainty. The experiments on the KITTI and the Waymo Open Datasets show that JIoU is superior to IoU when evaluating probabilistic object detectors.

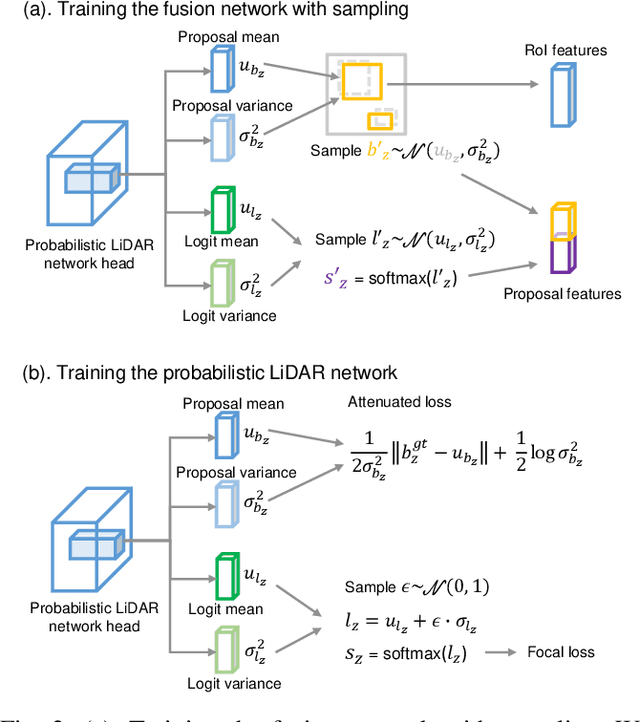

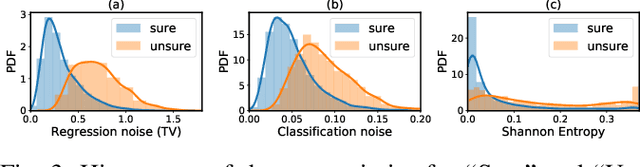

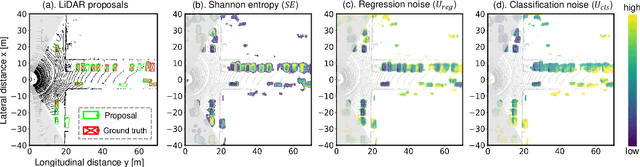

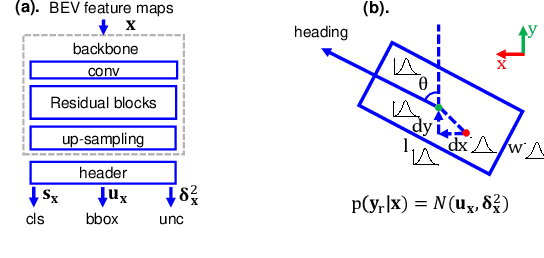

Leveraging Uncertainties for Deep Multi-modal Object Detection in Autonomous Driving

Feb 01, 2020

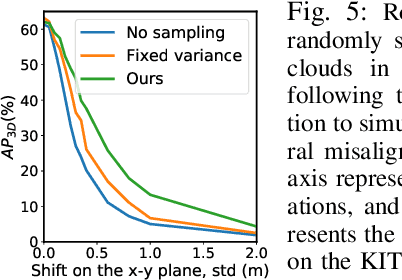

Abstract:This work presents a probabilistic deep neural network that combines LiDAR point clouds and RGB camera images for robust, accurate 3D object detection. We explicitly model uncertainties in the classification and regression tasks, and leverage uncertainties to train the fusion network via a sampling mechanism. We validate our method on three datasets with challenging real-world driving scenarios. Experimental results show that the predicted uncertainties reflect complex environmental uncertainty like difficulties of a human expert to label objects. The results also show that our method consistently improves the Average Precision by up to 7% compared to the baseline method. When sensors are temporally misaligned, the sampling method improves the Average Precision by up to 20%, showing its high robustness against noisy sensor inputs.

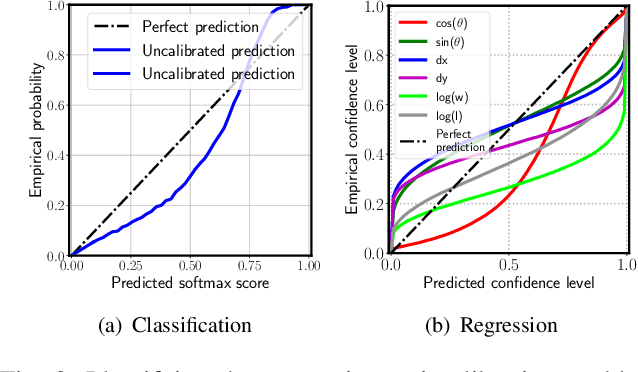

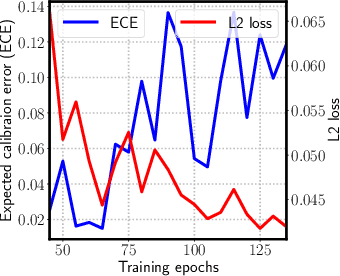

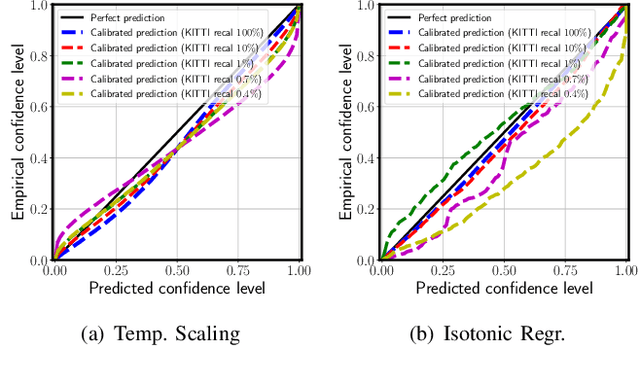

Can We Trust You? On Calibration of a Probabilistic Object Detector for Autonomous Driving

Sep 26, 2019

Abstract:Reliable uncertainty estimation is crucial for perception systems in safe autonomous driving. Recently, many methods have been proposed to model uncertainties in deep learning based object detectors. However, the estimated probabilities are often uncalibrated, which may lead to severe problems in safety critical scenarios. In this work, we identify such uncertainty miscalibration problems in a probabilistic LiDAR 3D object detection network, and propose three practical methods to significantly reduce errors in uncertainty calibration. Extensive experiments on several datasets show that our methods produce well-calibrated uncertainties, and generalize well between different datasets.

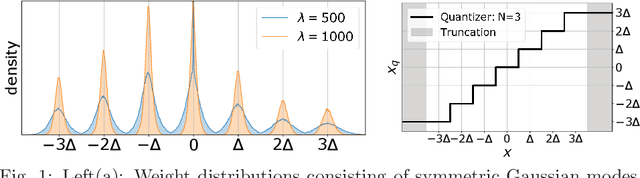

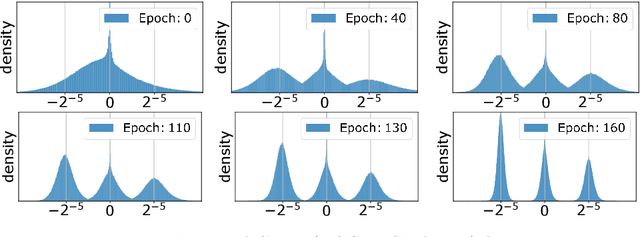

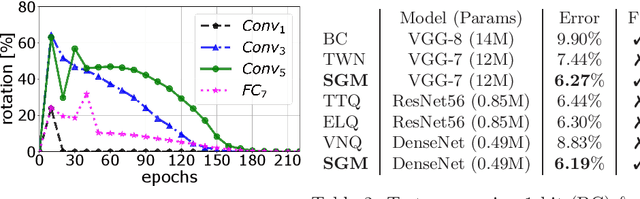

Learning Multimodal Fixed-Point Weights using Gradient Descent

Jul 16, 2019

Abstract:Due to their high computational complexity, deep neural networks are still limited to powerful processing units. To promote a reduced model complexity by dint of low-bit fixed-point quantization, we propose a gradient-based optimization strategy to generate a symmetric mixture of Gaussian modes (SGM) where each mode belongs to a particular quantization stage. We achieve 2-bit state-of-the-art performance and illustrate the model's ability for self-dependent weight adaptation during training.

* presented at ESANN 2019 (European Symposium on Artificial Neural Networks, Computational Intelligence and Machine Learning)

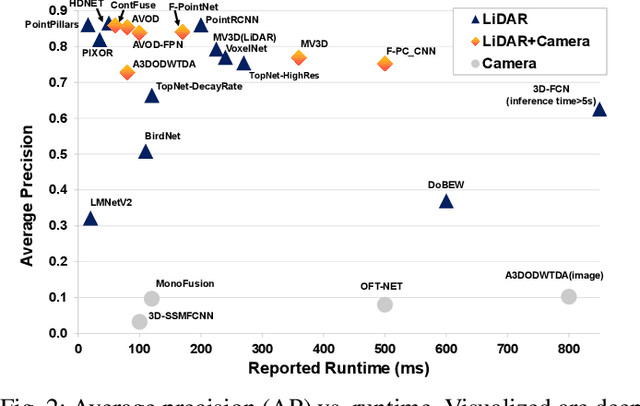

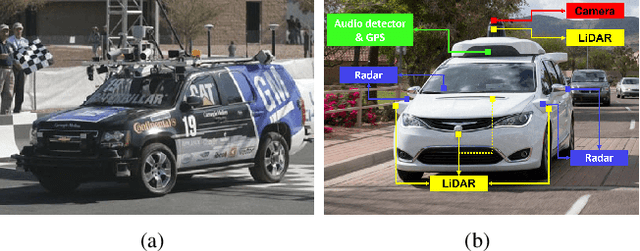

Deep Multi-modal Object Detection and Semantic Segmentation for Autonomous Driving: Datasets, Methods, and Challenges

Feb 21, 2019

Abstract:Recent advancements in the perception for autonomous driving are driven by deep learning. In order to achieve the robust and accurate scene understanding, autonomous vehicles are usually equipped with different sensors (e.g. cameras, LiDARs, Radars), and multiple sensing modalities can be fused to exploit their complementary properties. In this context, many methods have been proposed for deep multi-modal perception problems. However, there is no general guideline for network architecture design, and questions of "what to fuse", "when to fuse", and "how to fuse" remain open. This review paper attempts to systematically summarize methodologies and discuss challenges for deep multi-modal object detection and semantic segmentation in autonomous driving. To this end, we first provide an overview of on-board sensors on test vehicles, open datasets and the background information of object detection and semantic segmentation for the autonomous driving research. We then summarize the fusion methodologies and discuss challenges and open questions. In the appendix, we provide tables that summarize topics and methods. We also provide an interactive online platform to navigate each reference: https://multimodalperception.github.io.

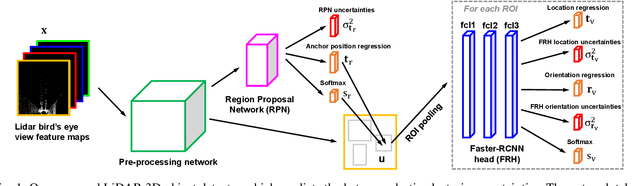

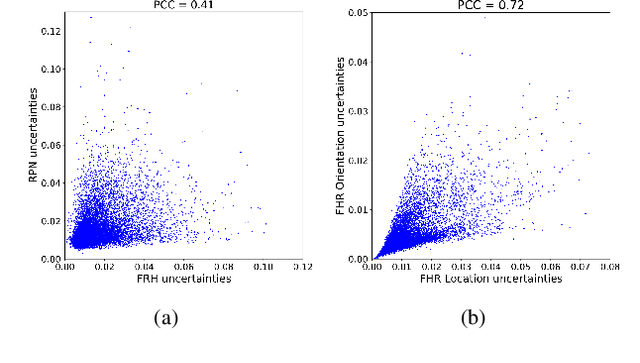

Leveraging Heteroscedastic Aleatoric Uncertainties for Robust Real-Time LiDAR 3D Object Detection

Feb 03, 2019

Abstract:We present a robust real-time LiDAR 3D object detector that leverages heteroscedastic aleatoric uncertainties to significantly improve its detection performance. A multi-loss function is designed to incorporate uncertainty estimations predicted by auxiliary output layers. Using our proposed method, the network ignores to train from noisy samples, and focuses more on informative ones. We validate our method on the KITTI object detection benchmark. Our method surpasses the baseline method which does not explicitly estimate uncertainties by up to nearly 9% in terms of Average Precision (AP). It also produces state-of-the-art results compared to other methods while running with an inference time of only 72 ms. In addition, we conduct extensive experiments to understand how aleatoric uncertainties behave. Extracting aleatoric uncertainties brings almost no additional computation cost during the deployment, making our method highly desirable for autonomous driving applications.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge