Kerry He

Collaborative Object Handover in a Robot Crafting Assistant

Feb 27, 2025

Abstract:Robots are increasingly working alongside people, delivering food to patrons in restaurants or helping workers on assembly lines. These scenarios often involve object handovers between the person and the robot. To achieve safe and efficient human-robot collaboration (HRC), it is important to incorporate human context in a robot's handover strategies. Therefore, in this work, we develop a collaborative handover model trained on human teleoperation data collected in a naturalistic crafting task. To evaluate the performance of this model, we conduct cross-validation experiments on the training dataset as well as a user study in the same HRC crafting task. The handover episodes and user perceptions of the autonomous handover policy were compared with those of the human teleoperated handovers. While the cross-validation experiment and user study indicate that the autonomous policy successfully achieved collaborative handovers, the comparison with human teleoperation revealed avenues for further improvements.

A Review of Differentiable Simulators

Jul 08, 2024

Abstract:Differentiable simulators continue to push the state of the art across a range of domains including computational physics, robotics, and machine learning. Their main value is the ability to compute gradients of physical processes, which allows differentiable simulators to be readily integrated into commonly employed gradient-based optimization schemes. To achieve this, a number of design decisions need to be considered representing trade-offs in versatility, computational speed, and accuracy of the gradients obtained. This paper presents an in-depth review of the evolving landscape of differentiable physics simulators. We introduce the foundations and core components of differentiable simulators alongside common design choices. This is followed by a practical guide and overview of open-source differentiable simulators that have been used across past research. Finally, we review and contextualize prominent applications of differentiable simulation. By offering a comprehensive review of the current state-of-the-art in differentiable simulation, this work aims to serve as a resource for researchers and practitioners looking to understand and integrate differentiable physics within their research. We conclude by highlighting current limitations as well as providing insights into future directions for the field.

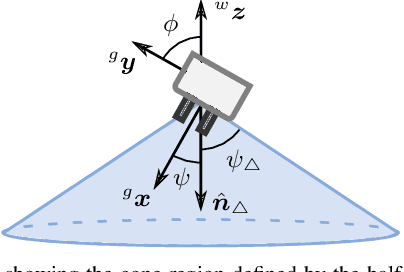

Variable Grasp Pose and Commitment for Trajectory Optimization

May 21, 2023

Abstract:We propose enhancing trajectory optimization methods through the incorporation of two key ideas: variable-grasp pose sampling and trajectory commitment. Our iterative approach samples multiple grasp poses, increasing the likelihood of finding a solution while gradually narrowing the optimization horizon towards the goal region for improved computational efficiency. We conduct experiments comparing our approach with sampling-based planning and fixed-goal optimization. In simulated experiments featuring 4 different task scenes, our approach consistently outperforms baselines by generating lower-cost trajectories and achieving higher success rates in challenging constrained and cluttered environments, at the trade-off of longer computation times. Real-world experiments further validate the superiority of our approach in generating lower-cost trajectories and exhibiting enhanced robustness. While we acknowledge the limitations of our experimental design, our proposed approach holds significant potential for enhancing trajectory optimization methods and offers a promising solution for achieving consistent and reliable robotic manipulation.

Robot Gaze During Autonomous Navigation and its Effect on Social Presence

May 10, 2023

Abstract:As robots have become increasingly common in human-rich environments, it is critical that they are able to exhibit social cues to be perceived as a cooperative and socially-conformant team member. We investigate the effect of robot gaze cues on people's subjective perceptions of a mobile robot as a socially present entity in three common hallway navigation scenarios. The tested robot gaze behaviors were path-oriented (looking at its own future path), or person-oriented (looking at the nearest person), with fixed-gaze as the control. We conduct a real-world study with 36 participants who walked through the hallway, and an online study with 233 participants who were shown simulated videos of the same scenarios. Our results suggest that the preferred gaze behavior is scenario-dependent. Person-oriented gaze behaviors which acknowledge the presence of the human are generally preferred when the robot and human cross paths. However, this benefit is diminished in scenarios that involve less implicit interaction between the robot and the human.

Crafting with a Robot Assistant: Use Social Cues to Inform Adaptive Handovers in Human-Robot Collaboration

Jan 07, 2023

Abstract:We study human-robot handovers in a naturalistic collaboration scenario, where a mobile manipulator robot assists a person during a crafting session by providing and retrieving objects used for wooden piece assembly (functional activities) and painting (creative activities). We collect quantitative and qualitative data from 20 participants in a Wizard-of-Oz study, generating the Functional And Creative Tasks Human-Robot Collaboration dataset (the FACT HRC dataset), available to the research community. This work illustrates how social cues and task context inform the temporal-spatial coordination in human-robot handovers, and how human-robot collaboration is shaped by and in turn influences people's functional and creative activities.

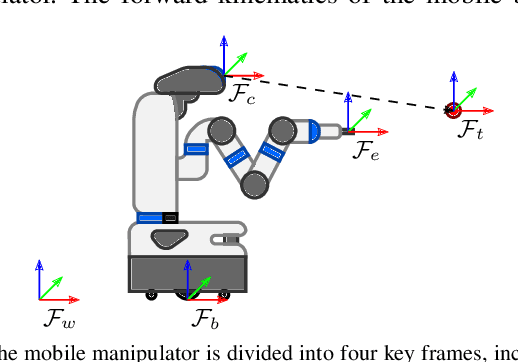

On-The-Go Robot-to-Human Handovers with a Mobile Manipulator

Mar 16, 2022

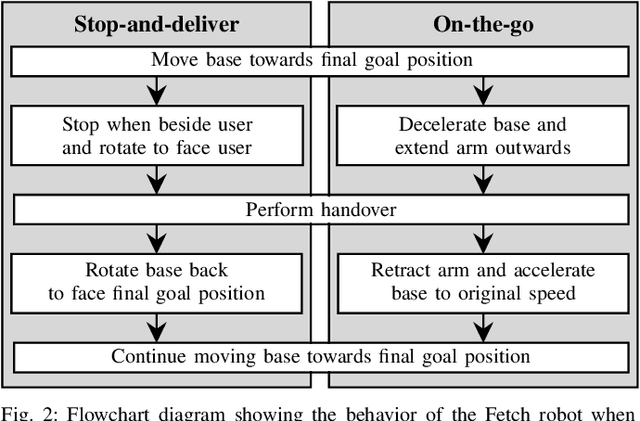

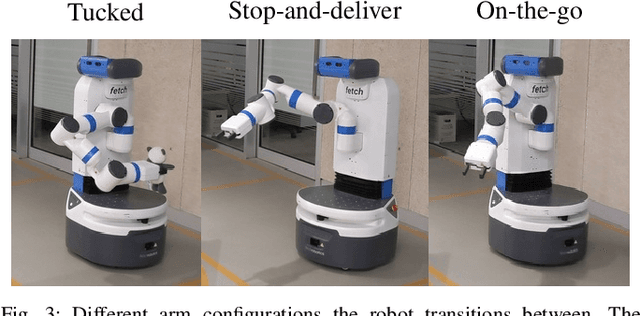

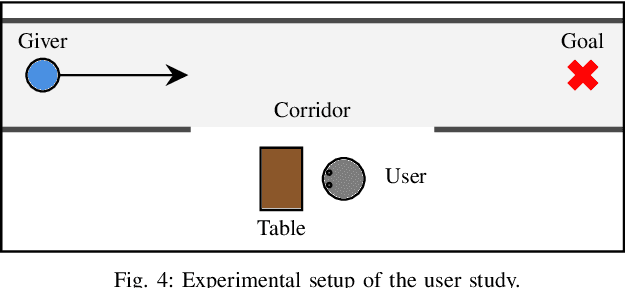

Abstract:Existing approaches to direct robot-to-human handovers are typically implemented on fixed-base robot arms, or on mobile manipulators that come to a full stop before performing the handover. We propose "on-the-go" handovers which permit a moving mobile manipulator to hand over an object to a human without stopping. The on-the-go handover motion is generated with a reactive controller that allows simultaneous control of the base and the arm. In a user study, human receivers subjectively assessed on-the-go handovers to be more efficient, predictable, natural, better timed and safer than handovers that implemented a "stop-and-deliver" behavior.

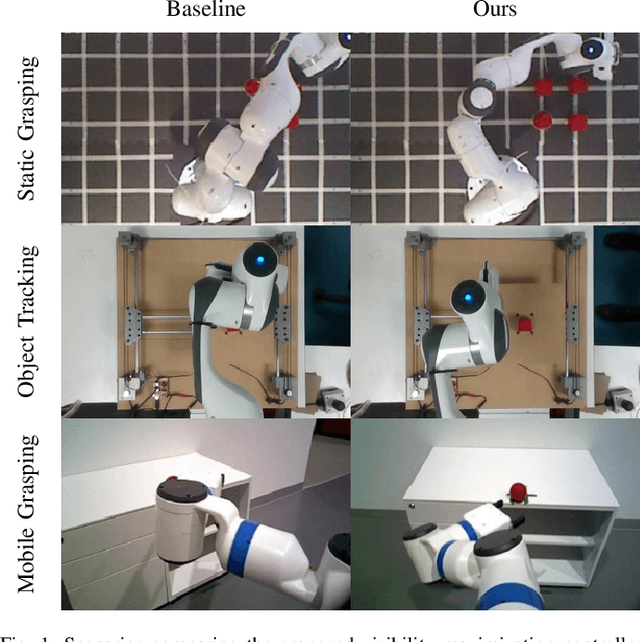

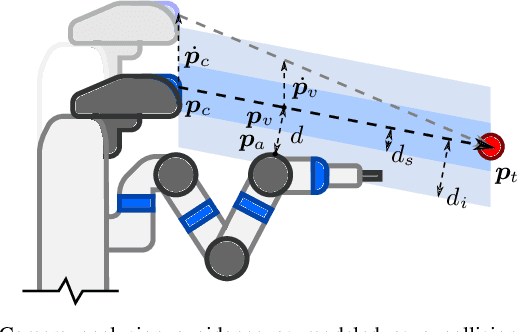

Visibility Maximization Controller for Robotic Manipulation

Feb 25, 2022

Abstract:Occlusions caused by a robot's own body are a common problem for closed-loop control methods employed in eye-to-hand camera setups. We propose an optimization-based reactive controller that minimizes self-occlusions while achieving a desired goal pose. The approach allows coordinated control between the robot's base, arm and head by encoding the line-of-sight visibility to the target as a soft constraint along with other task-related constraints, and solving for feasible joint and base velocities. The generalizability of the approach is demonstrated in simulated and real-world experiments, on robots with fixed or mobile bases, with moving or fixed objects, and multiple objects. The experiments revealed a trade-off between occlusion rates and other task metrics. While a planning-based baseline achieved lower occlusion rates than the proposed controller, it came at the expense of highly inefficient paths and a significant drop in task success. On the other hand, the proposed controller is shown to improve visibility of the target object(s) without sacrificing much task success or efficiency. Videos and code can be found at: rhys-newbury.github.io/projects/vmc/.

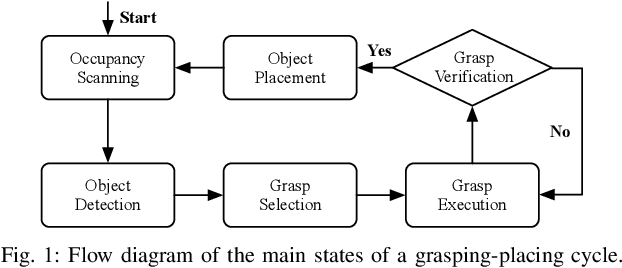

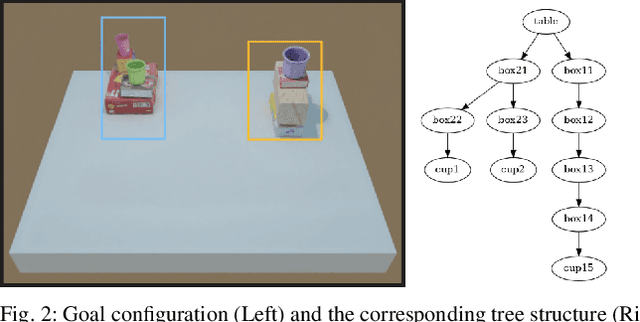

Tabletop Object Rearrangement: Team ACRV's Entry to OCRTOC

Apr 15, 2021

Abstract:Open Cloud Robot Table Organization Challenge (OCRTOC) is one of the most comprehensive cloud-based robotic manipulation competitions. It focuses on rearranging tabletop objects using vision as its primary sensing modality. In this extended abstract, we present our entry to the OCRTOC2020 and the key challenges the team has experienced.

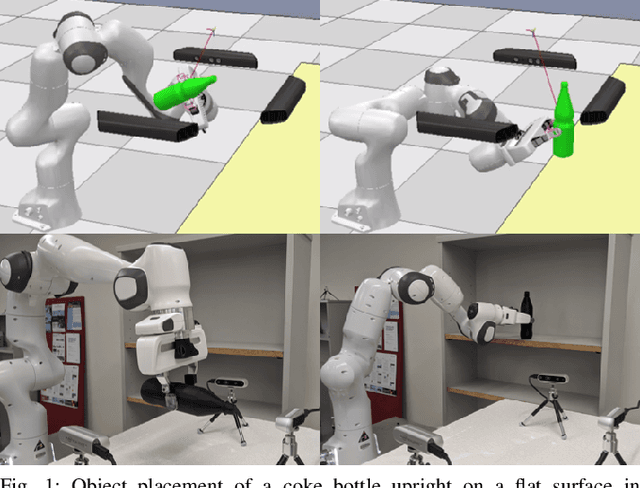

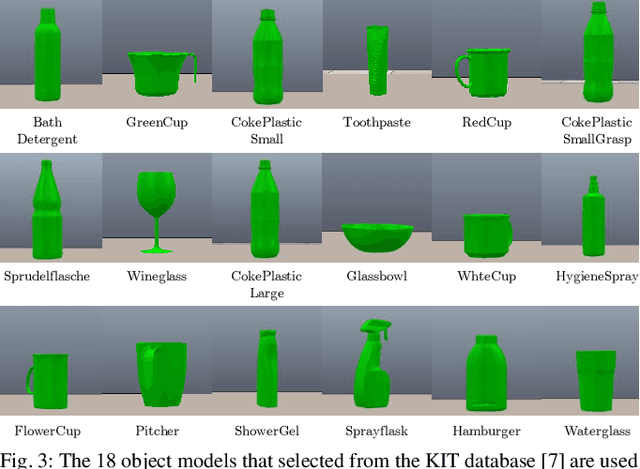

Learning to Place Objects onto Flat Surfaces in Human-Preferred Orientations

Apr 01, 2020

Abstract:We study the problem of placing a grasped object on an empty flat surface in a human-preferred orientation, such as placing a cup on its bottom rather than on its side. We aim to find the required object rotation such that when the gripper is opened after the object makes contact with the surface, the object is stably placed in the desired orientation. We use two neural networks in an iterative fashion. At every iteration, the Placement Rotation CNN (PR-CNN) estimates the required object rotation, which is executed by the robot, and then the Placement Stability CNN (PS-CNN) estimates whether the object would be stable if placed in its current orientation. In simulation experiments, our approach places objects in human-preferred orientations with a success rate of 86.1% using a dataset of 18 everyday objects. A real-world implementation is presented, which serves as a proof-of-concept for direct sim-to-real transfer. We observe that sometimes it is impossible to place a grasped object in a desired orientation without re-grasping, which motivates future research on grasping with the intention to place objects.
