Kang Zhang

DualTSR: Unified Dual-Diffusion Transformer for Scene Text Image Super-Resolution

Mar 15, 2026

Abstract:Scene Text Image Super-Resolution (STISR) aims to restore high-resolution details in low-resolution text images, which is crucial for both human readability and machine recognition. Existing methods, however, often depend on external Optical Character Recognition (OCR) models for textual priors or rely on complex multi-component architectures that are difficult to train and reproduce. In this paper, we introduce DualTSR, a unified end-to-end framework that addresses both issues. DualTSR employs a single multimodal transformer backbone trained with a dual diffusion objective. It simultaneously models the continuous distribution of high-resolution images via Conditional Flow Matching and the discrete distribution of textual content via discrete diffusion. This shared design enables visual and textual information to interact at every layer, allowing the model to infer text priors internally instead of relying on an external OCR module. Compared with prior multi-branch diffusion systems, DualTSR offers a simpler end-to-end formulation with fewer hand-crafted components. Experiments on synthetic Chinese benchmarks and a curated real-world evaluation protocol show that DualTSR achieves strong perceptual quality and text fidelity.

A Hidden Semantic Bottleneck in Conditional Embeddings of Diffusion Transformers

Feb 25, 2026

Abstract:Diffusion Transformers have achieved state-of-the-art performance in class-conditional and multimodal generation, yet the structure of their learned conditional embeddings remains poorly understood. In this work, we present the first systematic study of these embeddings and uncover a notable redundancy: class-conditioned embeddings exhibit extreme angular similarity, exceeding 99% on ImageNet-1K, while continuous-condition tasks such as pose-guided image generation and video-to-audio generation reach over 99.9%. We further find that semantic information is concentrated in a small subset of dimensions, with head dimensions carrying the dominant signal and tail dimensions contributing minimally. By pruning low-magnitude dimensions, removing up to two-thirds of the embedding space, we show that generation quality and fidelity remain largely unaffected, and in some cases improve. These results reveal a semantic bottleneck in Transformer-based diffusion models, providing new insights into how semantics are encoded and suggesting opportunities for more efficient conditioning mechanisms.
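As a rough illustration of the two measurements this abstract describes, the sketch below computes the similarity between two conditional embeddings and zeroes out their low-magnitude dimensions. It is not the authors' code. It assumes that "angular similarity" means cosine similarity and that pruning keeps the largest-magnitude dimensions of each embedding individually. The helper names and the toy vectors are hypothetical.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def prune_low_magnitude(embedding, keep_fraction):
    """Zero every dimension except the largest-magnitude ones.

    Assumption: pruning is applied per embedding by absolute value;
    the abstract does not specify the exact criterion.
    """
    keep = max(1, round(len(embedding) * keep_fraction))
    ranked = sorted(
        range(len(embedding)), key=lambda i: abs(embedding[i]), reverse=True
    )
    kept = set(ranked[:keep])
    return [x if i in kept else 0.0 for i, x in enumerate(embedding)]


# Made-up toy embeddings: two dominant "head" dimensions, four small "tail" ones.
class_a = [5.0, 4.0, 0.1, -0.2, 0.05, 0.1]
class_b = [5.0, 4.2, -0.1, 0.1, 0.2, -0.05]

similarity = cosine_similarity(class_a, class_b)
# Keep one-third of the dimensions, i.e. remove two-thirds.
pruned_a = prune_low_magnitude(class_a, keep_fraction=1 / 3)

print(f"cosine similarity: {similarity:.4f}")
print(f"pruned embedding:  {pruned_a}")
```

With these toy values the two embeddings are almost parallel even though they stand for different classes, and pruning leaves only the two head dimensions.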

LangPert: Detecting and Handling Task-level Perturbations for Robust Object Rearrangement

Apr 14, 2025

Abstract:Task execution for object rearrangement can be challenged by Task-Level Perturbations (TLP), i.e., unexpected object additions, removals, and displacements that can disrupt underlying visual policies and fundamentally compromise task feasibility and progress. To address these challenges, we present LangPert, a language-based framework designed to detect and mitigate TLP situations in tabletop rearrangement tasks. LangPert integrates a Visual Language Model (VLM) to comprehensively monitor the policy's skill execution and environmental TLP, while leveraging the Hierarchical Chain-of-Thought (HCoT) reasoning mechanism to enhance the Large Language Model (LLM)'s contextual understanding and generate adaptive, corrective skill-execution plans. Our experimental results demonstrate that LangPert handles diverse TLP situations more effectively than baseline methods, achieving higher task completion rates, improved execution efficiency, and potential generalization to unseen scenarios.

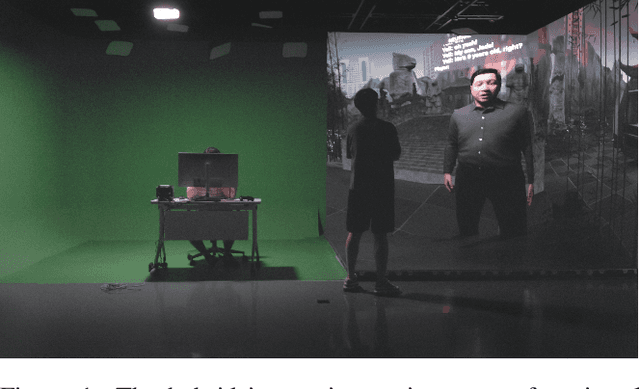

The Dream Within Huang Long Cave: AI-Driven Interactive Narrative for Family Storytelling and Emotional Reflection

Apr 07, 2025

Abstract:This paper introduces the art project The Dream Within Huang Long Cave, an AI-driven interactive and immersive narrative experience. The project offers new insights into AI technology, artistic practice, and psychoanalysis. Inspired by actual geographical landscapes and familial archetypes, the work combines psychoanalytic theory and computational technology, providing an artistic response to the concept of the non-existence of the Big Other. The narrative is driven by a combination of a large language model (LLM) and a realistic digital character, forming a virtual agent named YELL. Through dialogue and exploration within a cave automatic virtual environment (CAVE), the audience is invited to unravel the language puzzles presented by YELL and help him overcome his life challenges. YELL is a fictional embodiment of the Big Other, modeled after the artist's real father. Through a cross-temporal interaction with this digital father, the project seeks to deconstruct complex familial relationships. By demonstrating the non-existence of the Big Other, we aim to underscore the authenticity of interpersonal emotions, positioning art as a bridge for emotional connection and understanding within family dynamics.

Strategic priorities for transformative progress in advancing biology with proteomics and artificial intelligence

Feb 21, 2025

Abstract:Artificial intelligence (AI) is transforming scientific research, including proteomics. Advances in mass spectrometry (MS)-based proteomics data quality, diversity, and scale, combined with groundbreaking AI techniques, are unlocking new challenges and opportunities in biological discovery. Here, we highlight key areas where AI is driving innovation, from data analysis to new biological insights. These include developing an AI-friendly ecosystem for proteomics data generation, sharing, and analysis; improving peptide and protein identification and quantification; characterizing protein-protein interactions and protein complexes; advancing spatial and perturbation proteomics; integrating multi-omics data; and ultimately enabling AI-empowered virtual cells.

Towards a Physics Engine to Simulate Robotic Laser Surgery: Finite Element Modeling of Thermal Laser-Tissue Interactions

Nov 21, 2024

Abstract:This paper presents a computational model, based on the Finite Element Method (FEM), that simulates the thermal response of laser-irradiated tissue. This model addresses a gap in the current ecosystem of surgical robot simulators, which generally lack support for lasers and other energy-based end effectors. In the proposed model, the thermal dynamics of the tissue are calculated as the solution to a heat conduction problem with appropriate boundary conditions. The FEM formulation allows the model to capture complex phenomena, such as convection, which is crucial for creating realistic simulations. The accuracy of the model was verified via benchtop laser-tissue interaction experiments using agar tissue phantoms and ex-vivo chicken muscle. The results revealed an average root-mean-square error (RMSE) of less than 2 degrees Celsius across most experimental conditions.
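For readers unfamiliar with the error metric reported above, here is a minimal sketch of how a root-mean-square error between simulated and measured temperatures can be computed. The temperature values are invented for illustration and are not data from the paper. The function and variable names are likewise hypothetical.

```python
import math


def rmse(predicted, measured):
    """Root-mean-square error between two equal-length sequences."""
    if len(predicted) != len(measured):
        raise ValueError("sequences must have the same length")
    squared_errors = [(p - m) ** 2 for p, m in zip(predicted, measured)]
    return math.sqrt(sum(squared_errors) / len(squared_errors))


# Invented example temperatures in degrees Celsius (not from the paper).
simulated_c = [25.0, 31.0, 38.5, 44.0]
measured_c = [25.0, 30.0, 40.0, 45.0]

error_c = rmse(simulated_c, measured_c)
print(f"RMSE: {error_c:.2f} degrees Celsius")
```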

Physics Informed Distillation for Diffusion Models

Nov 13, 2024

Abstract:Diffusion models have recently emerged as a potent tool in generative modeling. However, their inherent iterative nature often results in sluggish image generation due to the requirement for multiple model evaluations. Recent progress has unveiled the intrinsic link between diffusion models and Probability Flow Ordinary Differential Equations (ODEs), thus enabling us to conceptualize diffusion models as ODE systems. Simultaneously, Physics Informed Neural Networks (PINNs) have substantiated their effectiveness in solving intricate differential equations through implicit modeling of their solutions. Building upon these foundational insights, we introduce Physics Informed Distillation (PID), which employs a student model to represent the solution of the ODE system corresponding to the teacher diffusion model, akin to the principles employed in PINNs. Through experiments on CIFAR-10 and ImageNet 64x64, we observe that PID achieves performance comparable to recent distillation methods. Notably, it demonstrates predictable trends concerning method-specific hyperparameters and eliminates the need for synthetic dataset generation during the distillation process, both of which contribute to its easy-to-use nature as a distillation approach for diffusion models. Our code and pre-trained checkpoint are publicly available at: https://github.com/pantheon5100/pid_diffusion.git.

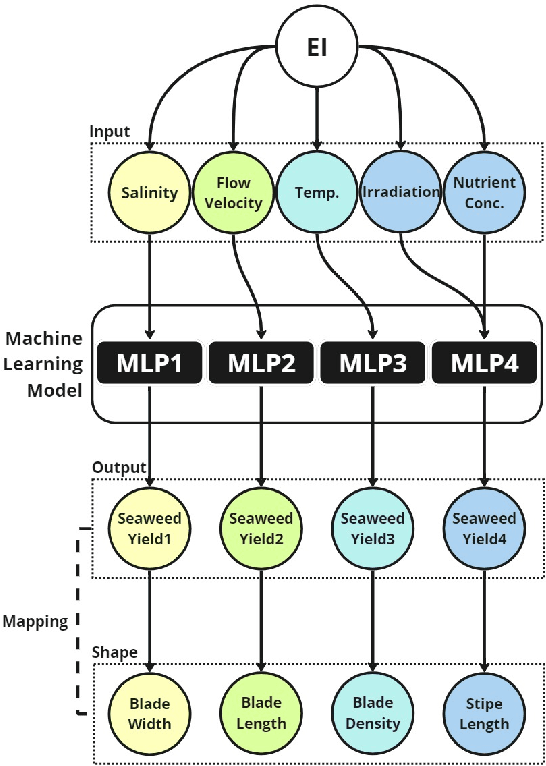

"Benefit Game: Alien Seaweed Swarms" -- Real-time Gamification of Digital Seaweed Ecology

Aug 30, 2024

Abstract:"Benefit Game: Alien Seaweed Swarms" combines artificial life art and an interactive game with an installation to explore the impact of human activity on fragile seaweed ecosystems. The project aims to promote ecological consciousness by creating a balance in digital seaweed ecologies. Inspired by the real species "Laminaria saccharina", the author employs Procedural Content Generation via Machine Learning technology to generate variations of virtual seaweeds and symbiotic fungi. The audience can explore the consequences of human activities through gameplay and observe the ecosystem's feedback on the benefits and risks of seaweed aquaculture. This Benefit Game offers dynamic and real-time responsive artificial seaweed ecosystems for an interactive experience that enhances ecological consciousness.

DEGAS: Detailed Expressions on Full-Body Gaussian Avatars

Aug 20, 2024

Abstract:Although neural rendering has made significant advancements in creating lifelike, animatable full-body and head avatars, incorporating detailed expressions into full-body avatars remains largely unexplored. We present DEGAS, the first 3D Gaussian Splatting (3DGS)-based modeling method for full-body avatars with rich facial expressions. Trained on multiview videos of a given subject, our method learns a conditional variational autoencoder that takes both the body motion and facial expression as driving signals to generate Gaussian maps in the UV layout. To drive the facial expressions, instead of the commonly used 3D Morphable Models (3DMMs) in 3D head avatars, we propose to adopt the expression latent space trained solely on 2D portrait images, bridging the gap between 2D talking faces and 3D avatars. Leveraging the rendering capability of 3DGS and the rich expressiveness of the expression latent space, the learned avatars can be reenacted to reproduce photorealistic rendering images with subtle and accurate facial expressions. Experiments on an existing dataset and our newly proposed dataset of full-body talking avatars demonstrate the efficacy of our method. We also propose an audio-driven extension of our method with the help of 2D talking faces, opening new possibilities for interactive AI agents.

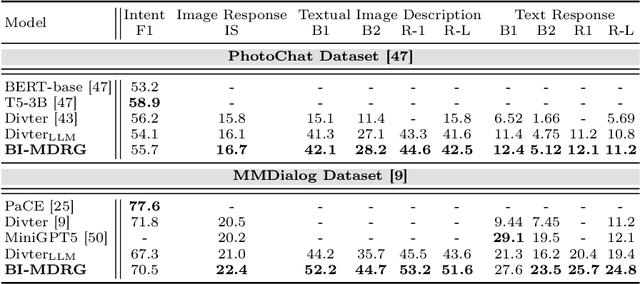

BI-MDRG: Bridging Image History in Multimodal Dialogue Response Generation

Aug 12, 2024

Abstract:Multimodal Dialogue Response Generation (MDRG) is a recently proposed task where the model needs to generate responses in text, images, or a blend of both based on the dialogue context. Due to the lack of a large-scale dataset specifically for this task and the benefits of leveraging powerful pre-trained models, previous work relies on the text modality as an intermediary step for both the image input and output of the model rather than adopting an end-to-end approach. However, this approach can overlook crucial information about the image, hindering 1) image-grounded text response and 2) consistency of objects in the image response. In this paper, we propose BI-MDRG, which bridges the response generation path such that the image history information is utilized for enhanced relevance of text responses to the image content and the consistency of objects in sequential image responses. Through extensive experiments on the multimodal dialogue benchmark dataset, we show that BI-MDRG can effectively increase the quality of multimodal dialogue. Additionally, recognizing the gap in benchmark datasets for evaluating the image consistency in multimodal dialogue, we have created a curated set of 300 dialogues annotated to track object consistency across conversations.
