Hiroki Kanezashi

LLM-Augmented Graph Neural Recommenders: Integrating User Reviews

Apr 03, 2025

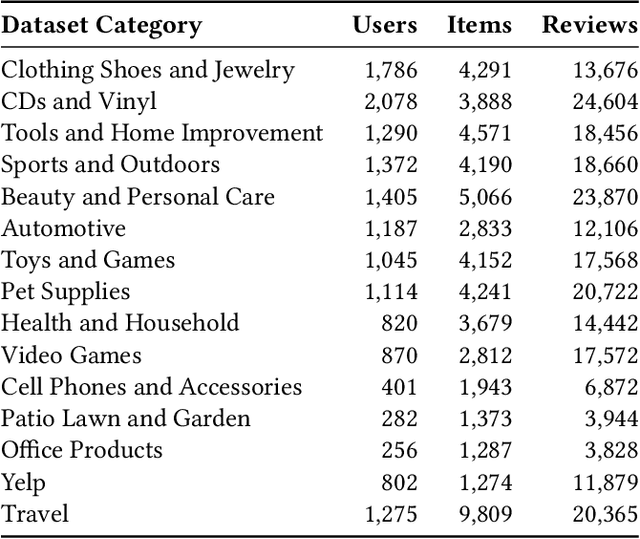

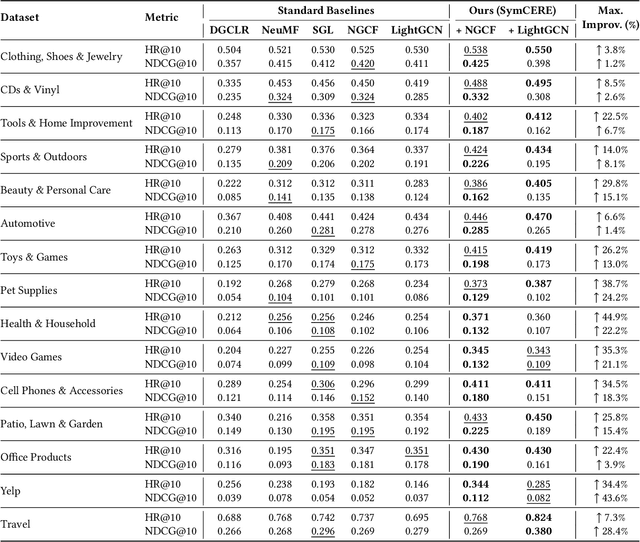

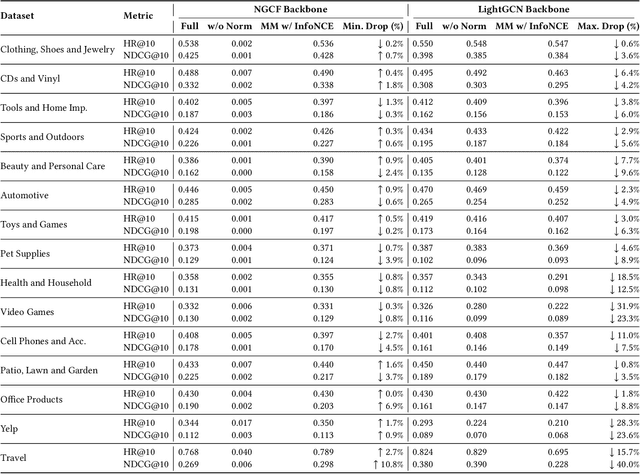

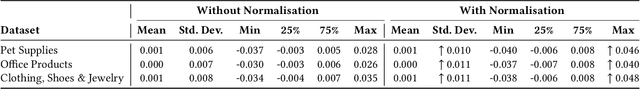

Abstract:Recommender systems increasingly aim to combine signals from both user reviews and purchase (or other interaction) behaviors. While user-written comments provide explicit insights about preferences, merging these textual representations from large language models (LLMs) with graph-based embeddings of user actions remains a challenging task. In this work, we propose a framework that employs both a Graph Neural Network (GNN)-based model and an LLM to produce review-aware representations, preserving review semantics while mitigating textual noise. Our approach utilizes a hybrid objective that balances user-item interactions against text-derived features, ensuring that user's both behavioral and linguistic signals are effectively captured. We evaluate this method on multiple datasets from diverse application domains, demonstrating consistent improvements over a baseline GNN-based recommender model. Notably, our model achieves significant gains in recommendation accuracy when review data is sparse or unevenly distributed. These findings highlight the importance of integrating LLM-driven textual feedback with GNN-derived user behavioral patterns to develop robust, context-aware recommender systems.

Graph-Enhanced EEG Foundation Model

Nov 29, 2024

Abstract:Electroencephalography (EEG) signals provide critical insights for applications in disease diagnosis and healthcare. However, the scarcity of labeled EEG data poses a significant challenge. Foundation models offer a promising solution by leveraging large-scale unlabeled data through pre-training, enabling strong performance across diverse tasks. While both temporal dynamics and inter-channel relationships are vital for understanding EEG signals, existing EEG foundation models primarily focus on the former, overlooking the latter. To address this limitation, we propose a novel foundation model for EEG that integrates both temporal and inter-channel information. Our architecture combines Graph Neural Networks (GNNs), which effectively capture relational structures, with a masked autoencoder to enable efficient pre-training. We evaluated our approach using three downstream tasks and experimented with various GNN architectures. The results demonstrate that our proposed model, particularly when employing the GCN architecture with optimized configurations, consistently outperformed baseline methods across all tasks. These findings suggest that our model serves as a robust foundation model for EEG analysis.

Graph Adapter of EEG Foundation Models for Parameter Efficient Fine Tuning

Nov 25, 2024

Abstract:In diagnosing mental diseases from electroencephalography (EEG) data, neural network models such as Transformers have been employed to capture temporal dynamics. Additionally, it is crucial to learn the spatial relationships between EEG sensors, for which Graph Neural Networks (GNNs) are commonly used. However, fine-tuning large-scale complex neural network models simultaneously to capture both temporal and spatial features increases computational costs due to the more significant number of trainable parameters. It causes the limited availability of EEG datasets for downstream tasks, making it challenging to fine-tune large models effectively. We propose EEG-GraphAdapter (EGA), a parameter-efficient fine-tuning (PEFT) approach to address these challenges. EGA is integrated into pre-trained temporal backbone models as a GNN-based module and fine-tuned itself alone while keeping the backbone model parameters frozen. This enables the acquisition of spatial representations of EEG signals for downstream tasks, significantly reducing computational overhead and data requirements. Experimental evaluations on healthcare-related downstream tasks of Major Depressive Disorder and Abnormality Detection demonstrate that our EGA improves performance by up to 16.1% in the F1-score compared with the backbone BENDR model.

Multimodal Point-of-Interest Recommendation

Oct 07, 2024

Abstract:Large Language Models are applied to recommendation tasks such as items to buy and news articles to read. Point of Interest is quite a new area to sequential recommendation based on language representations of multimodal datasets. As a first step to prove our concepts, we focused on restaurant recommendation based on each user's past visit history. When choosing a next restaurant to visit, a user would consider genre and location of the venue and, if available, pictures of dishes served there. We created a pseudo restaurant check-in history dataset from the Foursquare dataset and the FoodX-251 dataset by converting pictures into text descriptions with a multimodal model called LLaVA, and used a language-based sequential recommendation framework named Recformer proposed in 2023. A model trained on this semi-multimodal dataset has outperformed another model trained on the same dataset without picture descriptions. This suggests that this semi-multimodal model reflects actual human behaviours and that our path to a multimodal recommendation model is in the right direction.

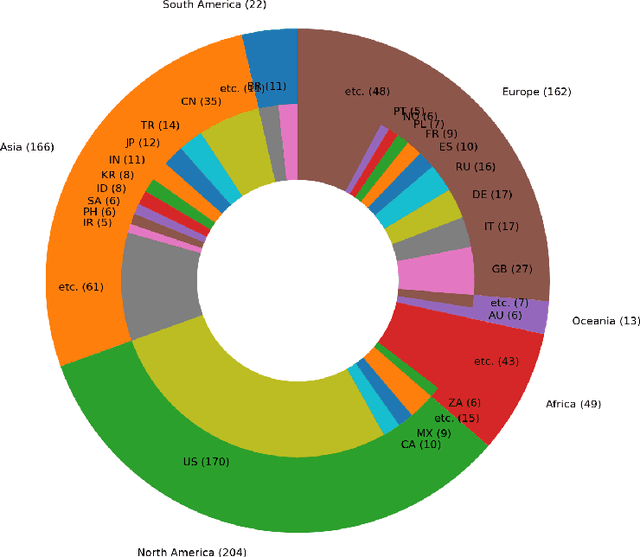

LLM-jp: A Cross-organizational Project for the Research and Development of Fully Open Japanese LLMs

Jul 04, 2024

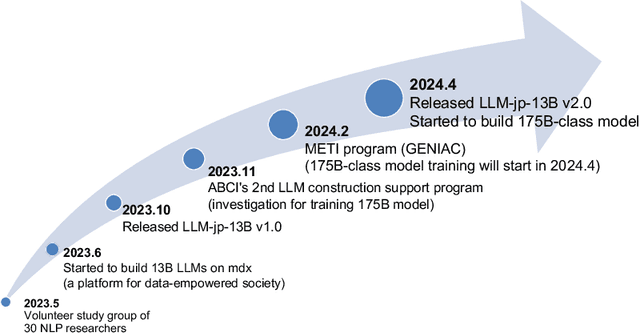

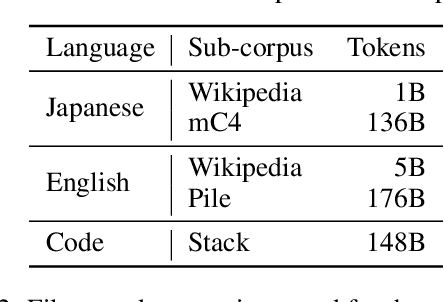

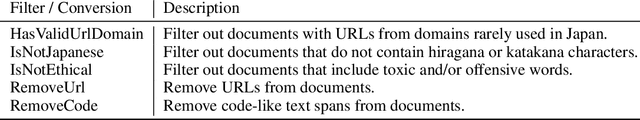

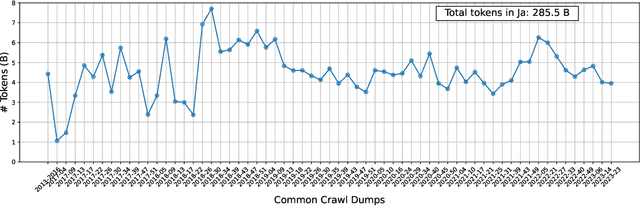

Abstract:This paper introduces LLM-jp, a cross-organizational project for the research and development of Japanese large language models (LLMs). LLM-jp aims to develop open-source and strong Japanese LLMs, and as of this writing, more than 1,500 participants from academia and industry are working together for this purpose. This paper presents the background of the establishment of LLM-jp, summaries of its activities, and technical reports on the LLMs developed by LLM-jp. For the latest activities, visit https://llm-jp.nii.ac.jp/en/.

Revisiting Mobility Modeling with Graph: A Graph Transformer Model for Next Point-of-Interest Recommendation

Oct 02, 2023Abstract:Next Point-of-Interest (POI) recommendation plays a crucial role in urban mobility applications. Recently, POI recommendation models based on Graph Neural Networks (GNN) have been extensively studied and achieved, however, the effective incorporation of both spatial and temporal information into such GNN-based models remains challenging. Extracting distinct fine-grained features unique to each piece of information is difficult since temporal information often includes spatial information, as users tend to visit nearby POIs. To address the challenge, we propose \textbf{\underline{Mob}}ility \textbf{\underline{G}}raph \textbf{\underline{T}}ransformer (MobGT) that enables us to fully leverage graphs to capture both the spatial and temporal features in users' mobility patterns. MobGT combines individual spatial and temporal graph encoders to capture unique features and global user-location relations. Additionally, it incorporates a mobility encoder based on Graph Transformer to extract higher-order information between POIs. To address the long-tailed problem in spatial-temporal data, MobGT introduces a novel loss function, Tail Loss. Experimental results demonstrate that MobGT outperforms state-of-the-art models on various datasets and metrics, achieving 24\% improvement on average. Our codes are available at \url{https://github.com/Yukayo/MobGT}.

Ethereum Fraud Detection with Heterogeneous Graph Neural Networks

Mar 23, 2022

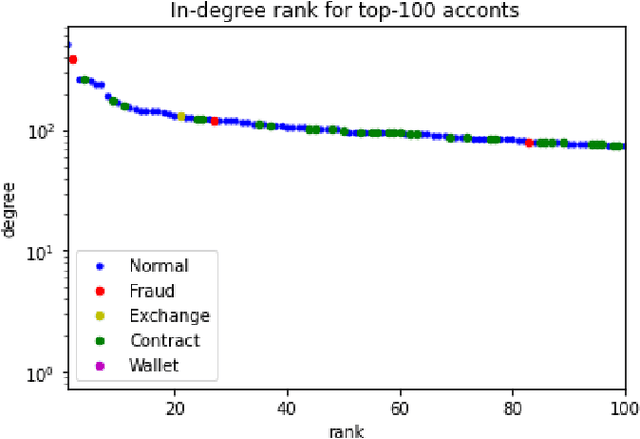

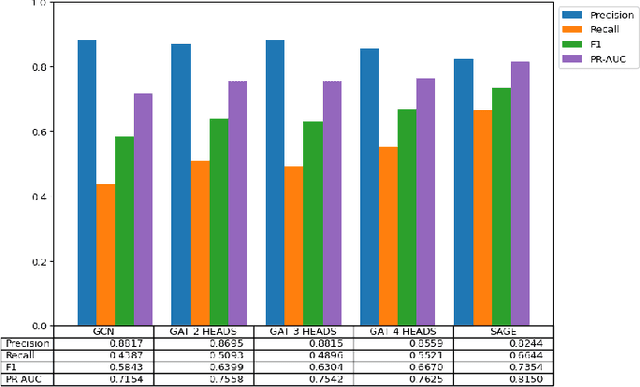

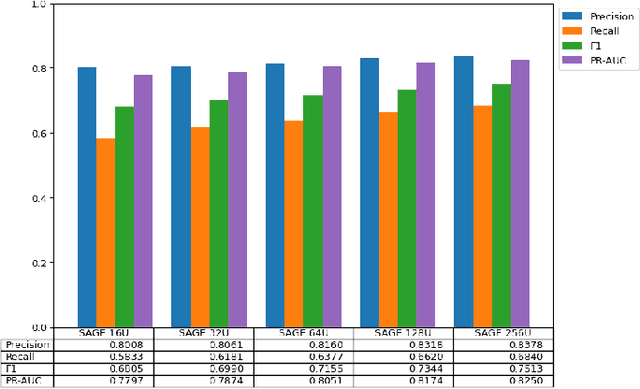

Abstract:While transactions with cryptocurrencies such as Ethereum are becoming more prevalent, fraud and other criminal transactions are not uncommon. Graph analysis algorithms and machine learning techniques detect suspicious transactions that lead to phishing in large transaction networks. Many graph neural network (GNN) models have been proposed to apply deep learning techniques to graph structures. Although there is research on phishing detection using GNN models in the Ethereum transaction network, models that address the scale of the number of vertices and edges and the imbalance of labels have not yet been studied. In this paper, we compared the model performance of GNN models on the actual Ethereum transaction network dataset and phishing reported label data to exhaustively compare and verify which GNN models and hyperparameters produce the best accuracy. Specifically, we evaluated the model performance of representative homogeneous GNN models which consider single-type nodes and edges and heterogeneous GNN models which support different types of nodes and edges. We showed that heterogeneous models had better model performance than homogeneous models. In particular, the RGCN model achieved the best performance in the overall metrics.

Investigating Expressiveness of Transformer in Spectral Domain for Graphs

Jan 27, 2022

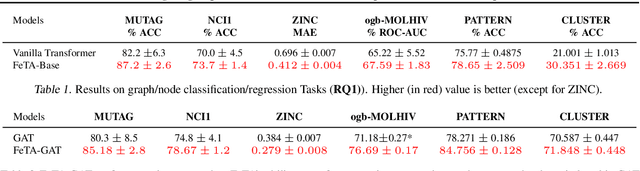

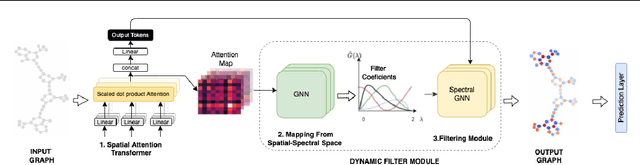

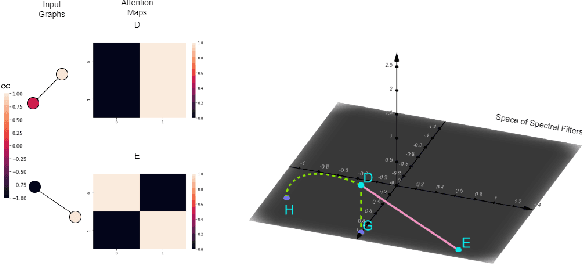

Abstract:Transformers have been proven to be inadequate for graph representation learning. To understand this inadequacy, there is need to investigate if spectral analysis of transformer will reveal insights on its expressive power. Similar studies already established that spectral analysis of Graph neural networks (GNNs) provides extra perspectives on their expressiveness. In this work, we systematically study and prove the link between the spatial and spectral domain in the realm of the transformer. We further provide a theoretical analysis that the spatial attention mechanism in the transformer cannot effectively capture the desired frequency response, thus, inherently limiting its expressiveness in spectral space. Therefore, we propose FeTA, a framework that aims to perform attention over the entire graph spectrum analogous to the attention in spatial space. Empirical results suggest that FeTA provides homogeneous performance gain against vanilla transformer across all tasks on standard benchmarks and can easily be extended to GNN based models with low-pass characteristics (e.g., GAT). Furthermore, replacing the vanilla transformer model with FeTA in recently proposed position encoding schemes has resulted in comparable or better performance than transformer and GNN baselines.

Global Data Science Project for COVID-19 Summary Report

Jun 10, 2020

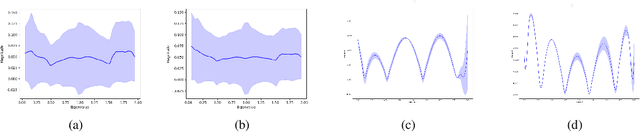

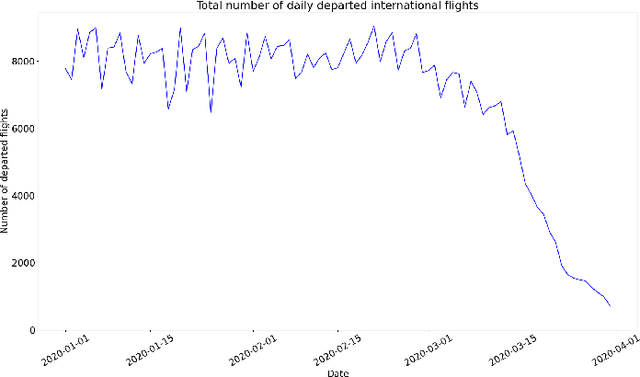

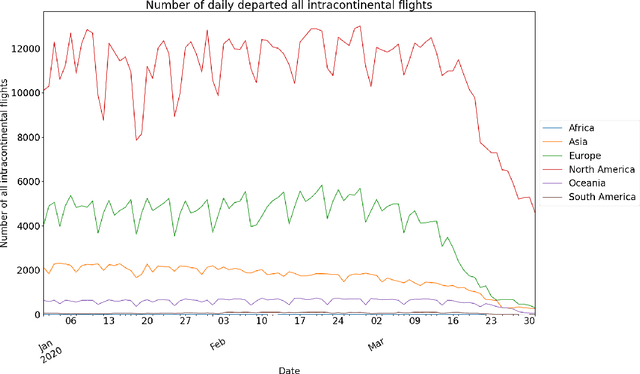

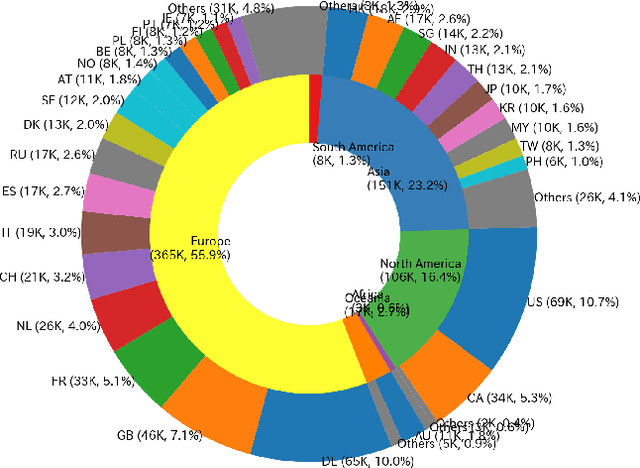

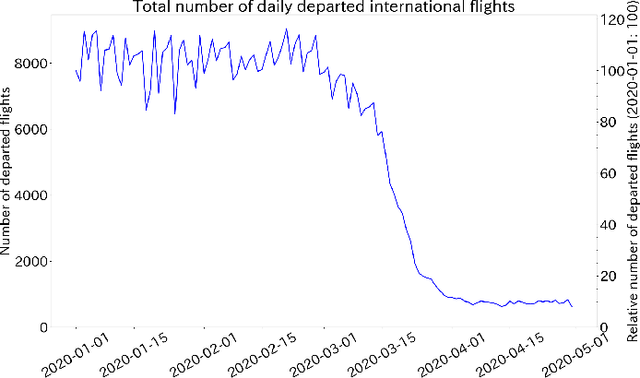

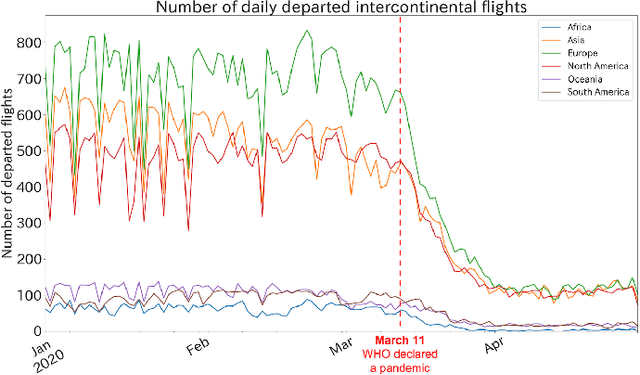

Abstract:This paper aims at providing the summary of the Global Data Science Project (GDSC) for COVID-19. as on May 31 2020. COVID-19 has largely impacted on our societies through both direct and indirect effects transmitted by the policy measures to counter the spread of viruses. We quantitatively analysed the multifaceted impacts of the COVID-19 pandemic on our societies including people's mobility, health, and social behaviour changes. People's mobility has changed significantly due to the implementation of travel restriction and quarantine measurements. Indeed, the physical distance has widened at international (cross-border), national and regional level. At international level, due to the travel restrictions, the number of international flights has plunged overall at around 88 percent during March. In particular, the number of flights connecting Europe dropped drastically in mid of March after the United States announced travel restrictions to Europe and the EU and participating countries agreed to close borders, at 84 percent decline compared to March 10th. Similarly, we examined the impacts of quarantine measures in the major city: Tokyo (Japan), New York City (the United States), and Barcelona (Spain). Within all three cities, we found the significant decline in traffic volume. We also identified the increased concern for mental health through the analysis of posts on social networking services such as Twitter and Instagram. Notably, in the beginning of April 2020, the number of post with #depression on Instagram doubled, which might reflect the rise in mental health awareness among Instagram users. Besides, we identified the changes in a wide range of people's social behaviors, as well as economic impacts through the analysis of Instagram data and primary survey data.

The Impact of COVID-19 on Flight Networks

Jun 05, 2020

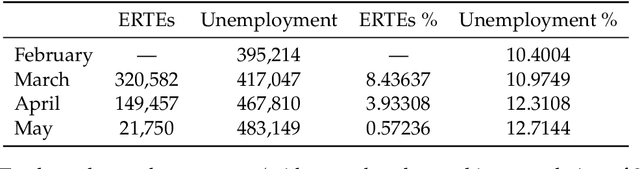

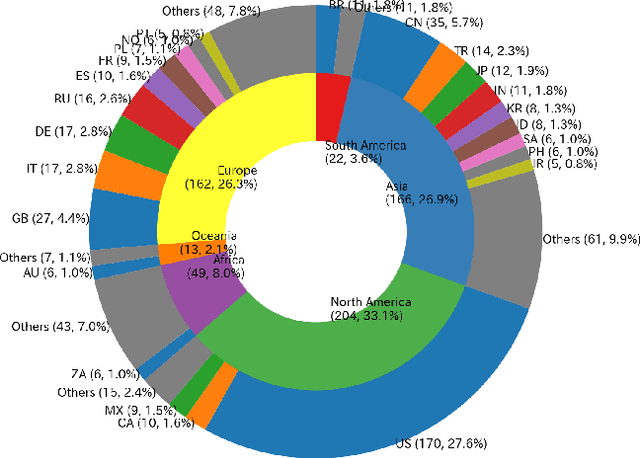

Abstract:As COVID-19 transmissions spread worldwide, governments have announced and enforced travel restrictions to prevent further infections. Such restrictions have a direct effect on the volume of international flights among these countries, resulting in extensive social and economic costs. To better understand the situation in a quantitative manner, we used the Opensky network data to clarify flight patterns and flight densities around the world and observe relationships between flight numbers with new infections, and with the economy (unemployment rate) in Barcelona. We found that the number of daily flights gradually decreased and suddenly dropped 64% during the second half of March in 2020 after the US and Europe enacted travel restrictions. We also observed a 51% decrease in the global flight network density decreased during this period. Regarding new COVID-19 cases, the world had an unexpected surge regardless of travel restrictions. Finally, the layoffs for temporary workers in the tourism and airplane business increased by 4.3 fold in the weeks following Spain's decision to close its borders.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge