Boris Bonev

MARA: Continuous SE(3)-Equivariant Attention for Molecular Force Fields

Feb 02, 2026Abstract:Machine learning force fields (MLFFs) have become essential for accurate and efficient atomistic modeling. Despite their high accuracy, most existing approaches rely on fixed angular expansions, limiting flexibility in weighting local geometric interactions. We introduce Modular Angular-Radial Attention (MARA), a module that extends spherical attention -- originally developed for SO(3) tasks -- to the molecular domain and SE(3), providing an efficient approximation of equivariant interactions. MARA operates directly on the angular and radial coordinates of neighboring atoms, enabling flexible, geometrically informed, and modular weighting of local environments. Unlike existing attention mechanisms in SE(3)-equivariant architectures, MARA can be integrated in a plug-and-play manner into models such as MACE without architectural modifications. Across molecular benchmarks, MARA improves energy and force predictions, reduces high-error events, and enhances robustness. These results demonstrate that continuous spherical attention is an effective and generalizable geometric operator that increases the expressiveness, stability, and reliability of atomistic models.

Demystifying Data-Driven Probabilistic Medium-Range Weather Forecasting

Jan 26, 2026Abstract:The recent revolution in data-driven methods for weather forecasting has lead to a fragmented landscape of complex, bespoke architectures and training strategies, obscuring the fundamental drivers of forecast accuracy. Here, we demonstrate that state-of-the-art probabilistic skill requires neither intricate architectural constraints nor specialized training heuristics. We introduce a scalable framework for learning multi-scale atmospheric dynamics by combining a directly downsampled latent space with a history-conditioned local projector that resolves high-resolution physics. We find that our framework design is robust to the choice of probabilistic estimator, seamlessly supporting stochastic interpolants, diffusion models, and CRPS-based ensemble training. Validated against the Integrated Forecasting System and the deep learning probabilistic model GenCast, our framework achieves statistically significant improvements on most of the variables. These results suggest scaling a general-purpose model is sufficient for state-of-the-art medium-range prediction, eliminating the need for tailored training recipes and proving effective across the full spectrum of probabilistic frameworks.

Data-driven solar forecasting enables near-optimal economic decisions

Sep 08, 2025Abstract:Solar energy adoption is critical to achieving net-zero emissions. However, it remains difficult for many industrial and commercial actors to decide on whether they should adopt distributed solar-battery systems, which is largely due to the unavailability of fast, low-cost, and high-resolution irradiance forecasts. Here, we present SunCastNet, a lightweight data-driven forecasting system that provides 0.05$^\circ$, 10-minute resolution predictions of surface solar radiation downwards (SSRD) up to 7 days ahead. SunCastNet, coupled with reinforcement learning (RL) for battery scheduling, reduces operational regret by 76--93\% compared to robust decision making (RDM). In 25-year investment backtests, it enables up to five of ten high-emitting industrial sectors per region to cross the commercial viability threshold of 12\% Internal Rate of Return (IRR). These results show that high-resolution, long-horizon solar forecasts can directly translate into measurable economic gains, supporting near-optimal energy operations and accelerating renewable deployment.

FourCastNet 3: A geometric approach to probabilistic machine-learning weather forecasting at scale

Jul 16, 2025Abstract:FourCastNet 3 advances global weather modeling by implementing a scalable, geometric machine learning (ML) approach to probabilistic ensemble forecasting. The approach is designed to respect spherical geometry and to accurately model the spatially correlated probabilistic nature of the problem, resulting in stable spectra and realistic dynamics across multiple scales. FourCastNet 3 delivers forecasting accuracy that surpasses leading conventional ensemble models and rivals the best diffusion-based methods, while producing forecasts 8 to 60 times faster than these approaches. In contrast to other ML approaches, FourCastNet 3 demonstrates excellent probabilistic calibration and retains realistic spectra, even at extended lead times of up to 60 days. All of these advances are realized using a purely convolutional neural network architecture tailored for spherical geometry. Scalable and efficient large-scale training on 1024 GPUs and more is enabled by a novel training paradigm for combined model- and data-parallelism, inspired by domain decomposition methods in classical numerical models. Additionally, FourCastNet 3 enables rapid inference on a single GPU, producing a 90-day global forecast at 0.25{\deg}, 6-hourly resolution in under 20 seconds. Its computational efficiency, medium-range probabilistic skill, spectral fidelity, and rollout stability at subseasonal timescales make it a strong candidate for improving meteorological forecasting and early warning systems through large ensemble predictions.

Principled Approaches for Extending Neural Architectures to Function Spaces for Operator Learning

Jun 12, 2025Abstract:A wide range of scientific problems, such as those described by continuous-time dynamical systems and partial differential equations (PDEs), are naturally formulated on function spaces. While function spaces are typically infinite-dimensional, deep learning has predominantly advanced through applications in computer vision and natural language processing that focus on mappings between finite-dimensional spaces. Such fundamental disparities in the nature of the data have limited neural networks from achieving a comparable level of success in scientific applications as seen in other fields. Neural operators are a principled way to generalize neural networks to mappings between function spaces, offering a pathway to replicate deep learning's transformative impact on scientific problems. For instance, neural operators can learn solution operators for entire classes of PDEs, e.g., physical systems with different boundary conditions, coefficient functions, and geometries. A key factor in deep learning's success has been the careful engineering of neural architectures through extensive empirical testing. Translating these neural architectures into neural operators allows operator learning to enjoy these same empirical optimizations. However, prior neural operator architectures have often been introduced as standalone models, not directly derived as extensions of existing neural network architectures. In this paper, we identify and distill the key principles for constructing practical implementations of mappings between infinite-dimensional function spaces. Using these principles, we propose a recipe for converting several popular neural architectures into neural operators with minimal modifications. This paper aims to guide practitioners through this process and details the steps to make neural operators work in practice. Our code can be found at https://github.com/neuraloperator/NNs-to-NOs

Attention on the Sphere

May 16, 2025Abstract:We introduce a generalized attention mechanism for spherical domains, enabling Transformer architectures to natively process data defined on the two-dimensional sphere - a critical need in fields such as atmospheric physics, cosmology, and robotics, where preserving spherical symmetries and topology is essential for physical accuracy. By integrating numerical quadrature weights into the attention mechanism, we obtain a geometrically faithful spherical attention that is approximately rotationally equivariant, providing strong inductive biases and leading to better performance than Cartesian approaches. To further enhance both scalability and model performance, we propose neighborhood attention on the sphere, which confines interactions to geodesic neighborhoods. This approach reduces computational complexity and introduces the additional inductive bias for locality, while retaining the symmetry properties of our method. We provide optimized CUDA kernels and memory-efficient implementations to ensure practical applicability. The method is validated on three diverse tasks: simulating shallow water equations on the rotating sphere, spherical image segmentation, and spherical depth estimation. Across all tasks, our spherical Transformers consistently outperform their planar counterparts, highlighting the advantage of geometric priors for learning on spherical domains.

A Library for Learning Neural Operators

Dec 13, 2024

Abstract:We present NeuralOperator, an open-source Python library for operator learning. Neural operators generalize neural networks to maps between function spaces instead of finite-dimensional Euclidean spaces. They can be trained and inferenced on input and output functions given at various discretizations, satisfying a discretization convergence properties. Built on top of PyTorch, NeuralOperator provides all the tools for training and deploying neural operator models, as well as developing new ones, in a high-quality, tested, open-source package. It combines cutting-edge models and customizability with a gentle learning curve and simple user interface for newcomers.

Data-driven Surface Solar Irradiance Estimation using Neural Operators at Global Scale

Nov 13, 2024

Abstract:Accurate surface solar irradiance (SSI) forecasting is essential for optimizing renewable energy systems, particularly in the context of long-term energy planning on a global scale. This paper presents a pioneering approach to solar radiation forecasting that leverages recent advancements in numerical weather prediction (NWP) and data-driven machine learning weather models. These advances facilitate long, stable rollouts and enable large ensemble forecasts, enhancing the reliability of predictions. Our flexible model utilizes variables forecast by these NWP and AI weather models to estimate 6-hourly SSI at global scale. Developed using NVIDIA Modulus, our model represents the first adaptive global framework capable of providing long-term SSI forecasts. Furthermore, it can be fine-tuned using satellite data, which significantly enhances its performance in the fine-tuned regions, while maintaining accuracy elsewhere. The improved accuracy of these forecasts has substantial implications for the integration of solar energy into power grids, enabling more efficient energy management and contributing to the global transition to renewable energy sources.

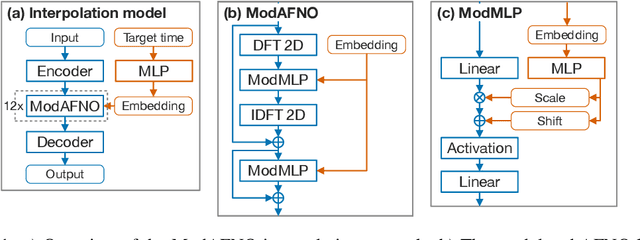

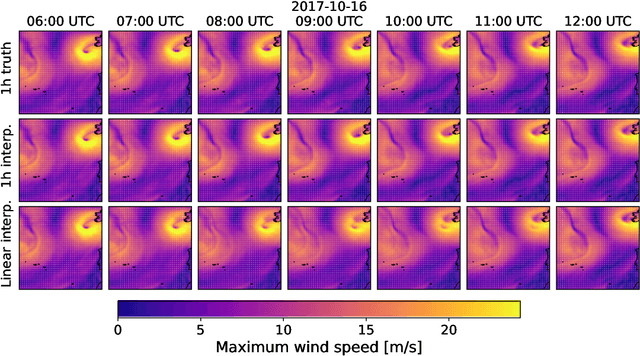

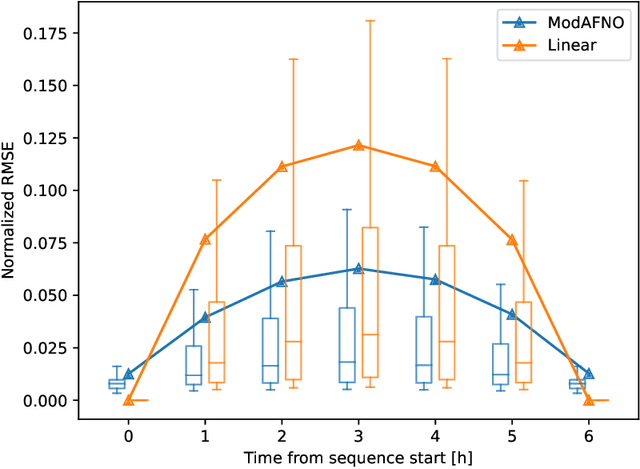

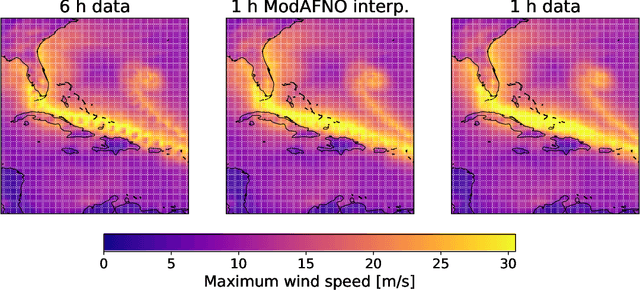

Modulated Adaptive Fourier Neural Operators for Temporal Interpolation of Weather Forecasts

Oct 24, 2024

Abstract:Weather and climate data are often available at limited temporal resolution, either due to storage limitations, or in the case of weather forecast models based on deep learning, their inherently long time steps. The coarse temporal resolution makes it difficult to capture rapidly evolving weather events. To address this limitation, we introduce an interpolation model that reconstructs the atmospheric state between two points in time for which the state is known. The model makes use of a novel network layer that modifies the adaptive Fourier neural operator (AFNO), which has been previously used in weather prediction and other applications of machine learning to physics problems. The modulated AFNO (ModAFNO) layer takes an embedding, here computed from the interpolation target time, as an additional input and applies a learned shift-scale operation inside the AFNO layers to adapt them to the target time. Thus, one model can be used to produce all intermediate time steps. Trained to interpolate between two time steps 6 h apart, the ModAFNO-based interpolation model produces 1 h resolution intermediate time steps that are visually nearly indistinguishable from the actual corresponding 1 h resolution data. The model reduces the RMSE loss of reconstructing the intermediate steps by approximately 50% compared to linear interpolation. We also demonstrate its ability to reproduce the statistics of extreme weather events such as hurricanes and heat waves better than 6 h resolution data. The ModAFNO layer is generic and is expected to be applicable to other problems, including weather forecasting with tunable lead time.

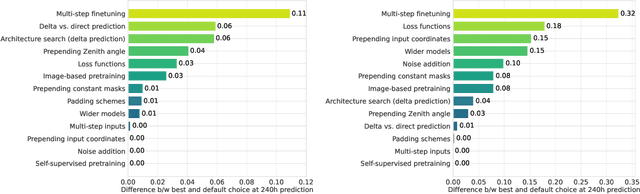

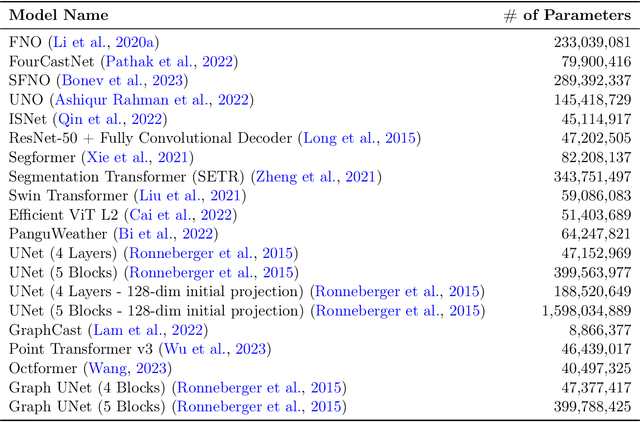

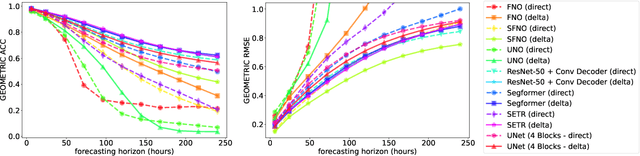

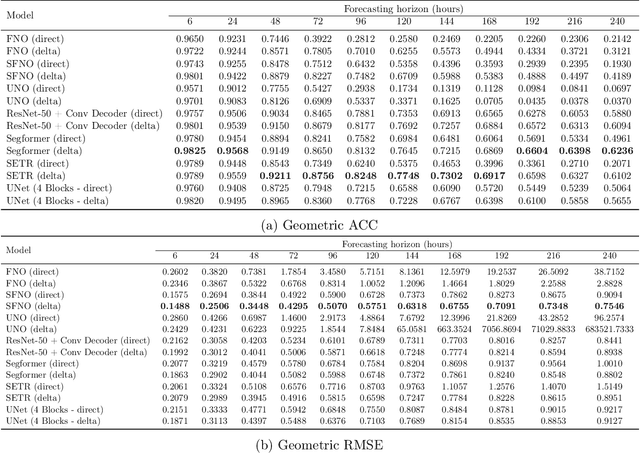

Exploring the design space of deep-learning-based weather forecasting systems

Oct 09, 2024

Abstract:Despite tremendous progress in developing deep-learning-based weather forecasting systems, their design space, including the impact of different design choices, is yet to be well understood. This paper aims to fill this knowledge gap by systematically analyzing these choices including architecture, problem formulation, pretraining scheme, use of image-based pretrained models, loss functions, noise injection, multi-step inputs, additional static masks, multi-step finetuning (including larger stride models), as well as training on a larger dataset. We study fixed-grid architectures such as UNet, fully convolutional architectures, and transformer-based models, along with grid-invariant architectures, including graph-based and operator-based models. Our results show that fixed-grid architectures outperform grid-invariant architectures, indicating a need for further architectural developments in grid-invariant models such as neural operators. We therefore propose a hybrid system that combines the strong performance of fixed-grid models with the flexibility of grid-invariant architectures. We further show that multi-step fine-tuning is essential for most deep-learning models to work well in practice, which has been a common practice in the past. Pretraining objectives degrade performance in comparison to supervised training, while image-based pretrained models provide useful inductive biases in some cases in comparison to training the model from scratch. Interestingly, we see a strong positive effect of using a larger dataset when training a smaller model as compared to training on a smaller dataset for longer. Larger models, on the other hand, primarily benefit from just an increase in the computational budget. We believe that these results will aid in the design of better weather forecasting systems in the future.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge