Aude Billard

EPFL

Quantitative Outcome-Oriented Assessment of Microsurgical Anastomosis

Aug 26, 2025

Abstract:Microsurgical anastomosis demands exceptional dexterity and visuospatial skills, underscoring the importance of comprehensive training and precise outcome assessment. Currently, methods such as the outcome-oriented anastomosis lapse index are used to evaluate this procedure. However, they often rely on subjective judgment, which can introduce biases that affect the reliability and efficiency of the assessment of competence. Leveraging three datasets from hospitals with participants at various levels, we introduce a quantitative framework that uses image-processing techniques for objective assessment of microsurgical anastomoses. The approach uses geometric modeling of errors along with a detection and scoring mechanism, enhancing the efficiency and reliability of microsurgical proficiency assessment and advancing training protocols. The results show that the geometric metrics effectively replicate expert raters' scoring for the errors considered in this work.

Positive-Unlabeled Constraint Learning (PUCL) for Inferring Nonlinear Continuous Constraints Functions from Expert Demonstrations

Aug 03, 2024Abstract:Planning for a wide range of real-world robotic tasks necessitates to know and write all constraints. However, instances exist where these constraints are either unknown or challenging to specify accurately. A possible solution is to infer the unknown constraints from expert demonstration. This paper presents a novel Positive-Unlabeled Constraint Learning (PUCL) algorithm to infer a continuous arbitrary constraint function from demonstration, without requiring prior knowledge of the true constraint parameterization or environmental model as existing works. Within our framework, we treat all data in demonstrations as positive (feasible) data, and learn a control policy to generate potentially infeasible trajectories, which serve as unlabeled data. In each iteration, we first update the policy and then a two-step positive-unlabeled learning procedure is applied, where it first identifies reliable infeasible data using a distance metric, and secondly learns a binary feasibility classifier (i.e., constraint function) from the feasible demonstrations and reliable infeasible data. The proposed framework is flexible to learn complex-shaped constraint boundary and will not mistakenly classify demonstrations as infeasible as previous methods. The effectiveness of the proposed method is verified in three robotic tasks, using a networked policy or a dynamical system policy. It successfully infers and transfers the continuous nonlinear constraints and outperforms other baseline methods in terms of constraint accuracy and policy safety.

Learning General Continuous Constraint from Demonstrations via Positive-Unlabeled Learning

Jul 23, 2024

Abstract:Planning for a wide range of real-world tasks necessitates to know and write all constraints. However, instances exist where these constraints are either unknown or challenging to specify accurately. A possible solution is to infer the unknown constraints from expert demonstration. The majority of prior works limit themselves to learning simple linear constraints, or require strong knowledge of the true constraint parameterization or environmental model. To mitigate these problems, this paper presents a positive-unlabeled (PU) learning approach to infer a continuous, arbitrary and possibly nonlinear, constraint from demonstration. From a PU learning view, We treat all data in demonstrations as positive (feasible) data, and learn a (sub)-optimal policy to generate high-reward-winning but potentially infeasible trajectories, which serve as unlabeled data containing both feasible and infeasible states. Under an assumption on data distribution, a feasible-infeasible classifier (i.e., constraint model) is learned from the two datasets through a postprocessing PU learning technique. The entire method employs an iterative framework alternating between updating the policy, which generates and selects higher-reward policies, and updating the constraint model. Additionally, a memory buffer is introduced to record and reuse samples from previous iterations to prevent forgetting. The effectiveness of the proposed method is validated in two Mujoco environments, successfully inferring continuous nonlinear constraints and outperforming a baseline method in terms of constraint accuracy and policy safety.

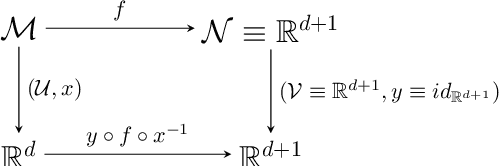

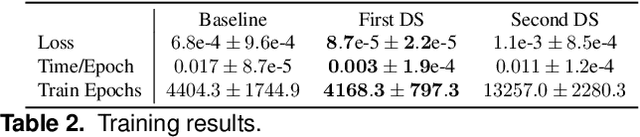

Hybrid Quadratic Programming -- Pullback Bundle Dynamical Systems Control

Jun 03, 2024Abstract:Dynamical System (DS)-based closed-loop control is a simple and effective way to generate reactive motion policies that well generalize to the robotic workspace, while retaining stability guarantees. Lately the formalism has been expanded in order to handle arbitrary geometry curved spaces, namely manifolds, beyond the standard flat Euclidean space. Despite the many different ways proposed to handle DS on manifolds, it is still unclear how to apply such structures on real robotic systems. In this preliminary study, we propose a way to combine modern optimal control techniques with a geometry-based formulation of DS. The advantage of such approach is two fold. First, it yields a torque-based control for compliant and adaptive motions; second, it generates dynamical systems consistent with the controlled system's dynamics. The salient point of the approach is that the complexity of designing a proper constrained-based optimal control problem, to ensure that dynamics move on a manifold while avoiding obstacles or self-collisions, is "outsourced" to the geometric DS. Constraints are implicitly embedded into the structure of the space in which the DS evolves. The optimal control, on the other hand, provides a torque-based control interface, and ensures dynamical consistency of the generated output. The whole can be achieved with minimal computational overhead since most of the computational complexity is delegated to the closed-form geometric DS.

Passive Obstacle Aware Control to Follow Desired Velocities

May 09, 2024

Abstract:Evaluating and updating the obstacle avoidance velocity for an autonomous robot in real-time ensures robustness against noise and disturbances. A passive damping controller can obtain the desired motion with a torque-controlled robot, which remains compliant and ensures a safe response to external perturbations. Here, we propose a novel approach for designing the passive control policy. Our algorithm complies with obstacle-free zones while transitioning to increased damping near obstacles to ensure collision avoidance. This approach ensures stability across diverse scenarios, effectively mitigating disturbances. Validation on a 7DoF robot arm demonstrates superior collision rejection capabilities compared to the baseline, underlining its practicality for real-world applications. Our obstacle-aware damping controller represents a substantial advancement in secure robot control within complex and uncertain environments.

Action Contextualization: Adaptive Task Planning and Action Tuning using Large Language Models

Apr 19, 2024

Abstract:Large Language Models (LLMs) present a promising frontier in robotic task planning by leveraging extensive human knowledge. Nevertheless, the current literature often overlooks the critical aspects of adaptability and error correction within robotic systems. This work aims to overcome this limitation by enabling robots to modify their motion strategies and select the most suitable task plans based on the context. We introduce a novel framework termed action contextualization, aimed at tailoring robot actions to the precise requirements of specific tasks, thereby enhancing adaptability through applying LLM-derived contextual insights. Our proposed motion metrics guarantee the feasibility and efficiency of adjusted motions, which evaluate robot performance and eliminate planning redundancies. Moreover, our framework supports online feedback between the robot and the LLM, enabling immediate modifications to the task plans and corrections of errors. Our framework has achieved an overall success rate of 81.25% through extensive validation. Finally, integrated with dynamic system (DS)-based robot controllers, the robotic arm-hand system demonstrates its proficiency in autonomously executing LLM-generated motion plans for sequential table-clearing tasks, rectifying errors without human intervention, and completing tasks, showcasing robustness against external disturbances. Our proposed framework features the potential to be integrated with modular control approaches, significantly enhancing robots' adaptability and autonomy in sequential task execution.

Learning Dynamical Systems Encoding Non-Linearity within Space Curvature

Mar 18, 2024

Abstract:Dynamical Systems (DS) are an effective and powerful means of shaping high-level policies for robotics control. They provide robust and reactive control while ensuring the stability of the driving vector field. The increasing complexity of real-world scenarios necessitates DS with a higher degree of non-linearity, along with the ability to adapt to potential changes in environmental conditions, such as obstacles. Current learning strategies for DSs often involve a trade-off, sacrificing either stability guarantees or offline computational efficiency in order to enhance the capabilities of the learned DS. Online local adaptation to environmental changes is either not taken into consideration or treated as a separate problem. In this paper, our objective is to introduce a method that enhances the complexity of the learned DS without compromising efficiency during training or stability guarantees. Furthermore, we aim to provide a unified approach for seamlessly integrating the initially learned DS's non-linearity with any local non-linearities that may arise due to changes in the environment. We propose a geometrical approach to learn asymptotically stable non-linear DS for robotics control. Each DS is modeled as a harmonic damped oscillator on a latent manifold. By learning the manifold's Euclidean embedded representation, our approach encodes the non-linearity of the DS within the curvature of the space. Having an explicit embedded representation of the manifold allows us to showcase obstacle avoidance by directly inducing local deformations of the space. We demonstrate the effectiveness of our methodology through two scenarios: first, the 2D learning of synthetic vector fields, and second, the learning of 3D robotic end-effector motions in real-world settings.

Learning Barrier-Certified Polynomial Dynamical Systems for Obstacle Avoidance with Robots

Mar 13, 2024Abstract:Established techniques that enable robots to learn from demonstrations are based on learning a stable dynamical system (DS). To increase the robots' resilience to perturbations during tasks that involve static obstacle avoidance, we propose incorporating barrier certificates into an optimization problem to learn a stable and barrier-certified DS. Such optimization problem can be very complex or extremely conservative when the traditional linear parameter-varying formulation is used. Thus, different from previous approaches in the literature, we propose to use polynomial representations for DSs, which yields an optimization problem that can be tackled by sum-of-squares techniques. Finally, our approach can handle obstacle shapes that fall outside the scope of assumptions typically found in the literature concerning obstacle avoidance within the DS learning framework. Supplementary material can be found at the project webpage: https://martinschonger.github.io/abc-ds

Transfer Learning in Robotics: An Upcoming Breakthrough? A Review of Promises and Challenges

Nov 29, 2023Abstract:Transfer learning is a conceptually-enticing paradigm in pursuit of truly intelligent embodied agents. The core concept -- reusing prior knowledge to learn in and from novel situations -- is successfully leveraged by humans to handle novel situations. In recent years, transfer learning has received renewed interest from the community from different perspectives, including imitation learning, domain adaptation, and transfer of experience from simulation to the real world, among others. In this paper, we unify the concept of transfer learning in robotics and provide the first taxonomy of its kind considering the key concepts of robot, task, and environment. Through a review of the promises and challenges in the field, we identify the need of transferring at different abstraction levels, the need of quantifying the transfer gap and the quality of transfer, as well as the dangers of negative transfer. Via this position paper, we hope to channel the effort of the community towards the most significant roadblocks to realize the full potential of transfer learning in robotics.

Implicit Manifold Gaussian Process Regression

Oct 30, 2023

Abstract:Gaussian process regression is widely used because of its ability to provide well-calibrated uncertainty estimates and handle small or sparse datasets. However, it struggles with high-dimensional data. One possible way to scale this technique to higher dimensions is to leverage the implicit low-dimensional manifold upon which the data actually lies, as postulated by the manifold hypothesis. Prior work ordinarily requires the manifold structure to be explicitly provided though, i.e. given by a mesh or be known to be one of the well-known manifolds like the sphere. In contrast, in this paper we propose a Gaussian process regression technique capable of inferring implicit structure directly from data (labeled and unlabeled) in a fully differentiable way. For the resulting model, we discuss its convergence to the Mat\'ern Gaussian process on the assumed manifold. Our technique scales up to hundreds of thousands of data points, and may improve the predictive performance and calibration of the standard Gaussian process regression in high-dimensional~settings.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge