Andrea Cherubini

Temporally-Aware Supervised Contrastive Learning for Polyp Counting in Colonoscopy

Jul 03, 2025

Abstract:Automated polyp counting in colonoscopy is a crucial step toward automated procedure reporting and quality control, aiming to enhance the cost-effectiveness of colonoscopy screening. Counting polyps in a procedure involves detecting and tracking polyps, and then clustering tracklets that belong to the same polyp entity. Existing methods for polyp counting rely on self-supervised learning and primarily leverage visual appearance, neglecting temporal relationships in both tracklet feature learning and clustering stages. In this work, we introduce a paradigm shift by proposing a supervised contrastive loss that incorporates temporally-aware soft targets. Our approach captures intra-polyp variability while preserving inter-polyp discriminability, leading to more robust clustering. Additionally, we improve tracklet clustering by integrating a temporal adjacency constraint, reducing false positive re-associations between visually similar but temporally distant tracklets. We train and validate our method on publicly available datasets and evaluate its performance with a leave-one-out cross-validation strategy. Results demonstrate a 2.2x reduction in fragmentation rate compared to prior approaches. Our results highlight the importance of temporal awareness in polyp counting, establishing a new state-of-the-art. Code is available at https://github.com/lparolari/temporally-aware-polyp-counting.
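
The temporal adjacency constraint mentioned in the abstract can be illustrated with a minimal sketch. It is not the authors' implementation (see the linked repository for that): the function name `count_polyps`, the cosine-similarity test, the thresholds, and the toy data are all assumptions made for illustration. Two tracklets are merged only when they look alike and are also close in time.

```python
import numpy as np


def count_polyps(embeddings, spans, sim_threshold=0.8, max_gap=500):
    """Count polyp entities by merging tracklets (hypothetical helper).

    embeddings: (n, d) array-like, one feature vector per tracklet.
    spans: list of (start_frame, end_frame), one per tracklet.
    sim_threshold and max_gap are illustrative values, not from the paper.
    """
    emb = np.asarray(embeddings, dtype=float)
    emb = emb / np.linalg.norm(emb, axis=1, keepdims=True)
    sim = emb @ emb.T  # cosine similarity between tracklets

    parent = list(range(len(spans)))  # union-find forest

    def find(i):
        while parent[i] != i:
            parent[i] = parent[parent[i]]  # path halving
            i = parent[i]
        return i

    for i in range(len(spans)):
        for j in range(i + 1, len(spans)):
            # Frames between the end of the earlier tracklet and the
            # start of the later one (negative if they overlap).
            gap = max(spans[i][0], spans[j][0]) - min(spans[i][1], spans[j][1])
            if sim[i, j] >= sim_threshold and gap <= max_gap:
                parent[find(i)] = find(j)  # same polyp entity

    return len({find(i) for i in range(len(spans))})


# Toy data: tracklet 2 looks like tracklets 0 and 1 but appears much later.
embeddings = [[1.0, 0.0], [0.99, 0.1], [1.0, 0.05], [0.0, 1.0]]
spans = [(0, 100), (150, 300), (9000, 9100), (320, 400)]
n_polyps = count_polyps(embeddings, spans)
print(n_polyps)
```

In this toy example the temporally distant look-alike stays a separate entity; raising `max_gap` far enough would merge it with the first two tracklets, which is the kind of false re-association the constraint is meant to prevent.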

Interaction force estimation for tactile sensor arrays: Toward tactile-based interaction control for robotic fingers

Nov 20, 2024

Abstract:Accurate estimation of interaction forces is crucial for achieving fine, dexterous control in robotic systems. Although tactile sensor arrays offer rich sensing capabilities, their effective use has been limited by challenges such as calibration complexities, nonlinearities, and deformation. In this paper, we tackle these issues by presenting a novel method for obtaining 3D force estimation using tactile sensor arrays. Unlike existing approaches that focus on specific or decoupled force components, our method estimates full 3D interaction forces across an array of distributed sensors, providing comprehensive real-time feedback. Through systematic data collection and model training, our approach overcomes the limitations of prior methods, achieving accurate and reliable tactile-based force estimation. In addition, we integrate this estimation in a real-time control loop, enabling implicit, stable force regulation that is critical for precise robotic manipulation. Experimental validation on the Allegro robot hand with uSkin sensors demonstrates the effectiveness of our approach in real-time control, and its ability to enhance the robot's adaptability and dexterity.

Hard-Attention Gates with Gradient Routing for Endoscopic Image Computing

Jul 05, 2024

Abstract:To address overfitting and enhance model generalization in gastroenterological polyp size assessment, our study introduces Feature-Selection Gates (FSG) or Hard-Attention Gates (HAG) alongside Gradient Routing (GR) for dynamic feature selection. This technique aims to boost Convolutional Neural Networks (CNNs) and Vision Transformers (ViTs) by promoting sparse connectivity, thereby reducing overfitting and enhancing generalization. HAG achieves this through sparsification with learnable weights, serving as a regularization strategy. GR further refines this process by optimizing HAG parameters via dual forward passes, independently from the main model, to improve feature re-weighting. Our evaluation spanned multiple datasets, including CIFAR-100 for a broad impact assessment and specialized endoscopic datasets (REAL-Colon, Misawa, and SUN) focusing on polyp size estimation, covering over 200 polyps in more than 370,000 frames. The findings indicate that our HAG-enhanced networks substantially enhance performance in both binary and triclass classification tasks related to polyp sizing. Specifically, CNNs experienced an F1 Score improvement to 87.8% in binary classification, while in triclass classification, the ViT-T model reached an F1 Score of 76.5%, outperforming traditional CNNs and ViT-T models. To facilitate further research, we are releasing our codebase, which includes implementations for CNNs, multistream CNNs, ViT, and HAG-augmented variants. This resource aims to standardize the use of endoscopic datasets, providing public training-validation-testing splits for reliable and comparable research in gastroenterological polyp size estimation. The codebase is available at github.com/cosmoimd/feature-selection-gates.

* Attention Gates, Hard-Attention Gates, Gradient Routing, Feature Selection Gates, Endoscopy, Medical Image Processing, Computer Vision

REAL-Colon: A dataset for developing real-world AI applications in colonoscopy

Mar 04, 2024

Abstract:Detection and diagnosis of colon polyps are key to preventing colorectal cancer. Recent evidence suggests that AI-based computer-aided detection (CADe) and computer-aided diagnosis (CADx) systems can enhance endoscopists' performance and boost colonoscopy effectiveness. However, most available public datasets primarily consist of still images or video clips, often at a down-sampled resolution, and do not accurately represent real-world colonoscopy procedures. We introduce the REAL-Colon (Real-world multi-center Endoscopy Annotated video Library) dataset: a compilation of 2.7M native video frames from sixty full-resolution, real-world colonoscopy recordings across multiple centers. The dataset contains 350k bounding-box annotations, each created under the supervision of expert gastroenterologists. Comprehensive patient clinical data, colonoscopy acquisition information, and polyp histopathological information are also included in each video. With its unprecedented size, quality, and heterogeneity, the REAL-Colon dataset is a unique resource for researchers and developers aiming to advance AI research in colonoscopy. Its openness and transparency facilitate rigorous and reproducible research, fostering the development and benchmarking of more accurate and reliable colonoscopy-related algorithms and models.

Towards vision-based dual arm robotic fruit harvesting

Jun 16, 2023

Abstract:Interest in agricultural robotics has increased considerably in recent years due to benefits such as improvement in productivity and labor reduction. However, current problems associated with unstructured environments make the development of robotic harvesters challenging. Most research in agricultural robotics focuses on single-arm manipulation. Here, we propose a dual-arm approach. We present a dual-arm fruit harvesting robot equipped with an RGB-D camera and cutting and collecting tools. We exploit the cooperative task description to maximize the capabilities of the dual-arm robot. We designed a Hierarchical Quadratic Programming-based control strategy to fulfill the set of hard constraints related to the robot and environment: robot joint limits, robot self-collisions, robot-fruit and robot-tree collisions. We combine deep learning and standard image processing algorithms to detect and track fruits as well as the tree trunk in the scene. We validate our perception methods on real-world RGB-D images and our control method in simulated experiments.

Can robots mold soft plastic materials by shaping depth images?

Jun 16, 2023

Abstract:Can robots mold soft plastic materials by shaping depth images? The short answer is no: current-day robots can't. In this article, we address the problem of shaping plastic material with an anthropomorphic arm/hand robot, which observes the material with a fixed depth camera. Robots capable of molding could assist humans in many tasks, such as cooking, scooping or gardening. Yet, the problem is complex, due to its high dimensionality at both perception and control levels. To address it, we design three alternative data-based methods for predicting the effect of robot actions on the material. Then, the robot can plan the sequence of actions and their positions, to mold the material into a desired shape. To make the prediction problem tractable, we rely on two original ideas. First, we prove that under reasonable assumptions, the shaping problem can be mapped from point cloud to depth image space, with many benefits (simpler processing, no need for registration, lower computation time and memory requirements). Second, we design a novel, simple metric for quickly measuring the distance between two depth images. The metric is based on the inherent point cloud representation of depth images, which enables direct and consistent comparison of image pairs through a non-uniform scaling approach, and therefore opens promising perspectives for designing depth image-based robot controllers. We assess our approach in a series of unprecedented experiments, where a robotic arm/hand molds flour from initial to final shapes, either with its own dataset, or by transfer learning from a human dataset. We conclude the article by discussing the limitations of our framework and those of current-day hardware, which make human-like robot molding a challenging open research problem.
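
The link between depth images and point clouds that the abstract relies on can be sketched with the standard pinhole back-projection. This is only an illustration: the intrinsics `fx`, `fy`, `cx`, `cy`, the helper names, and the toy images are assumptions, and `naive_depth_distance` is a plain per-pixel comparison, not the non-uniform-scaling metric proposed in the paper.

```python
import numpy as np


def depth_to_points(depth, fx, fy, cx, cy):
    """Back-project a depth image (metres) into an (H*W, 3) point cloud."""
    h, w = depth.shape
    u, v = np.meshgrid(np.arange(w), np.arange(h))  # pixel columns, rows
    x = (u - cx) * depth / fx
    y = (v - cy) * depth / fy
    return np.stack([x, y, depth], axis=-1).reshape(-1, 3)


def naive_depth_distance(depth_a, depth_b, fx, fy, cx, cy):
    """Mean Euclidean distance between points sharing a pixel.

    Illustrative stand-in only; pixels already pair the points up,
    so no registration step is needed.
    """
    pa = depth_to_points(depth_a, fx, fy, cx, cy)
    pb = depth_to_points(depth_b, fx, fy, cx, cy)
    return float(np.mean(np.linalg.norm(pa - pb, axis=1)))


# Toy 4x4 images: a flat surface and one with a raised 2x2 patch.
flat = np.full((4, 4), 1.0)
raised = flat.copy()
raised[1:3, 1:3] = 0.9
points = depth_to_points(flat, fx=2.0, fy=2.0, cx=1.5, cy=1.5)
dist = naive_depth_distance(flat, raised, fx=2.0, fy=2.0, cx=1.5, cy=1.5)
print(points.shape, dist)
```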

Challenges and Outlook in Robotic Manipulation of Deformable Objects

May 04, 2021

Abstract:Deformable object manipulation (DOM) is an emerging research problem in robotics. The ability to manipulate deformable objects endows robots with higher autonomy and promises new applications in the industrial, services, and healthcare sectors. However, compared to rigid object manipulation, the manipulation of deformable objects is considerably more complex and is still an open research problem. Tackling the challenges in DOM demands breakthroughs in almost all aspects of robotics, namely hardware design, sensing, deformation modeling, planning, and control. In this article, we highlight the main challenges that arise when considering deformation and review recent advances in each sub-field. A particular focus of our paper lies in discussing these challenges and proposing promising directions of research.

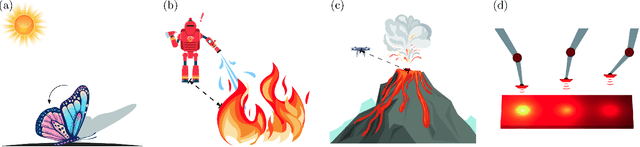

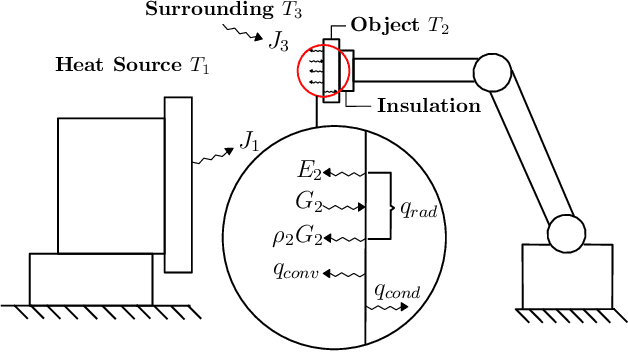

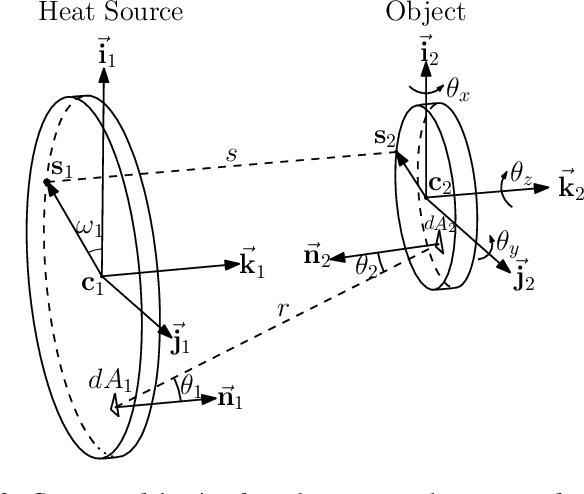

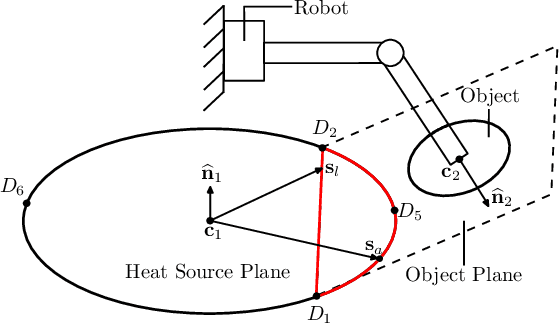

On Radiation-Based Thermal Servoing: New Models, Controls and Experiments

Dec 24, 2020

Abstract:In this paper, we introduce a new sensor-based control method that regulates (by means of robot motions) the heat transfer between a radiative source and an object of interest. This valuable sensorimotor capability is needed in many industrial, dermatology and field robot applications, and it is an essential component for creating machines with advanced thermo-motor intelligence. To this end, we derive a geometric-thermal-motor model which describes the relationship between the robot's active configuration and the produced dynamic thermal response. We then use the model to guide the design of two new thermal servoing controllers (one model-based and one adaptive), and analyze their stability with Lyapunov theory. To validate our method, we report a detailed experimental study with a robotic manipulator conducting autonomous thermal servoing tasks. To the best of the authors' knowledge, this is the first time that temperature regulation has been formulated as a motion control problem for robots.

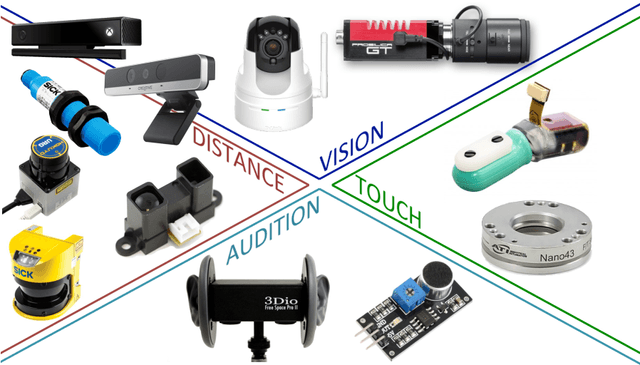

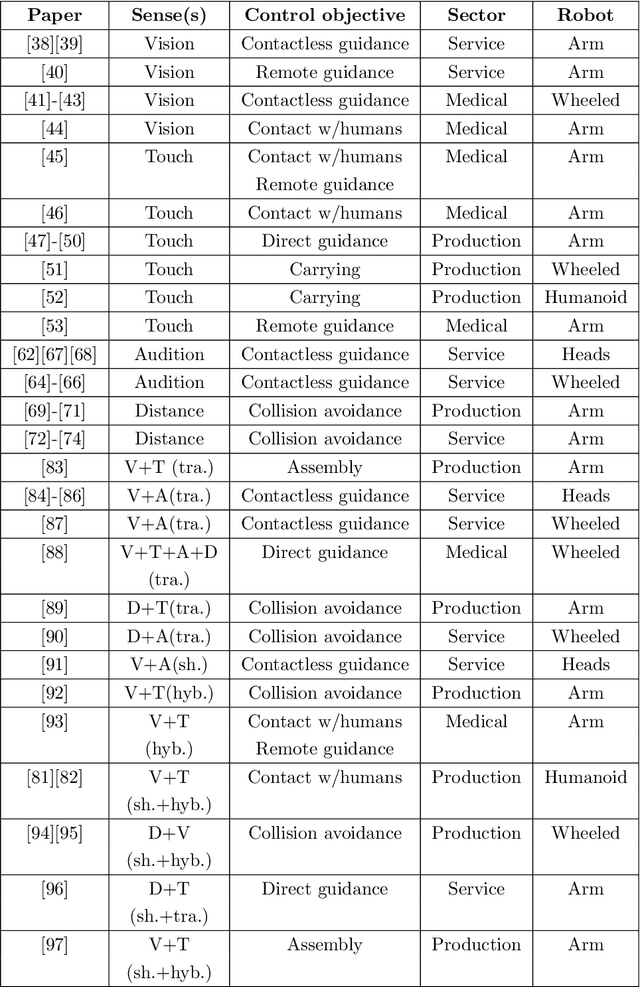

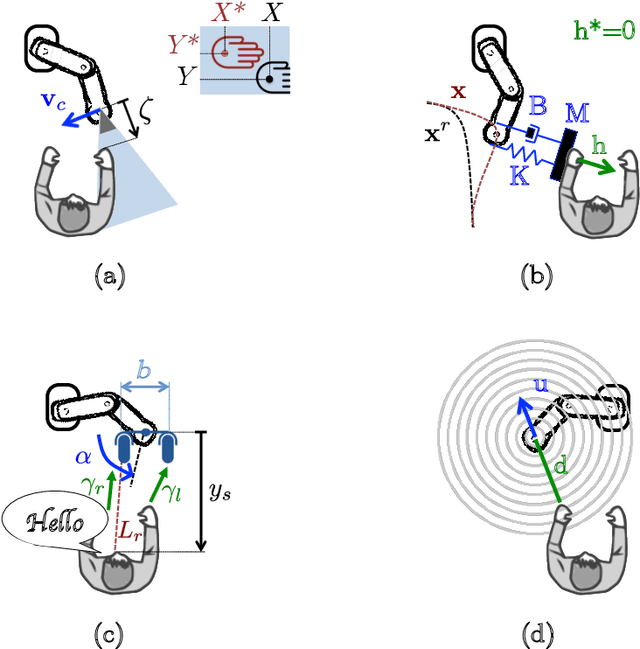

Sensor-Based Control for Collaborative Robots: Fundamentals, Challenges and Opportunities

Jul 04, 2020

Abstract:The objective of this paper is to present a systematic review of existing sensor-based control methodologies for applications that involve direct interaction between humans and robots, in the form of either physical collaboration or safe coexistence. To this end, we first introduce the basic formulation of the sensor-servo problem, then present the most common approaches: vision-based, touch-based, audio-based, and distance-based control. Afterwards, we discuss and formalize the methods that integrate heterogeneous sensors at the control level. The surveyed body of literature is classified according to the type of sensor, to the way multiple measurements are combined, and to the target objectives and applications. Finally, we discuss open problems, potential applications, and future research directions.
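
As a rough illustration of a sensor-servo loop, the sketch below uses the classical task-function law u = -gain * pinv(L) (s - s*), where s is the sensor signal, s* its desired value, and L a matrix relating control inputs to sensor changes. The review's own formulation may differ; the toy matrix `L`, the gain, and the time step are assumptions chosen only to show the error decaying.

```python
import numpy as np


def servo_step(s, s_star, L, gain=1.0):
    """Control input from the classical law u = -gain * pinv(L) @ (s - s_star)."""
    return -gain * np.linalg.pinv(L) @ (s - s_star)


# Toy system (assumed): the sensor signal evolves as s_dot = L @ u.
L = np.array([[1.0, 0.5], [0.0, 2.0]])
s = np.array([1.0, -1.0])   # initial sensor reading
s_star = np.zeros(2)        # desired sensor reading
dt = 0.1
for _ in range(100):
    u = servo_step(s, s_star, L, gain=1.0)
    s = s + dt * (L @ u)    # Euler integration of the sensor dynamics

final_error = float(np.linalg.norm(s - s_star))
print(final_error)
```

Because `L` is invertible here, each step shrinks the error by a constant factor, which is the discrete counterpart of the exponential decay this law is designed to produce.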

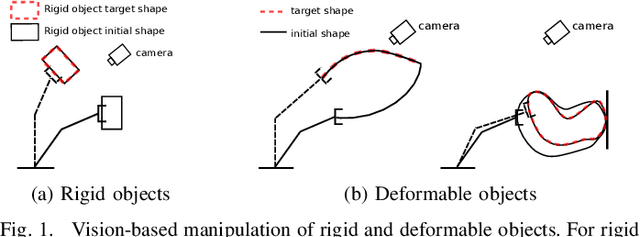

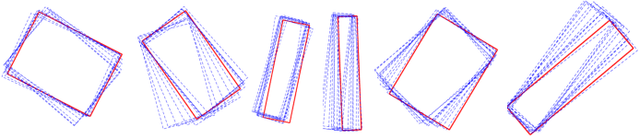

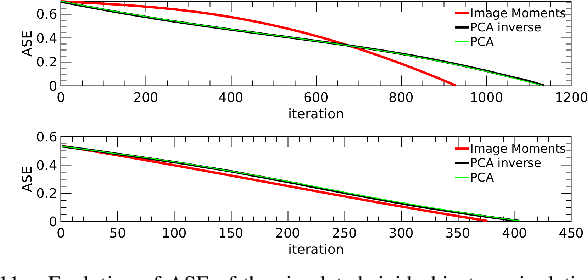

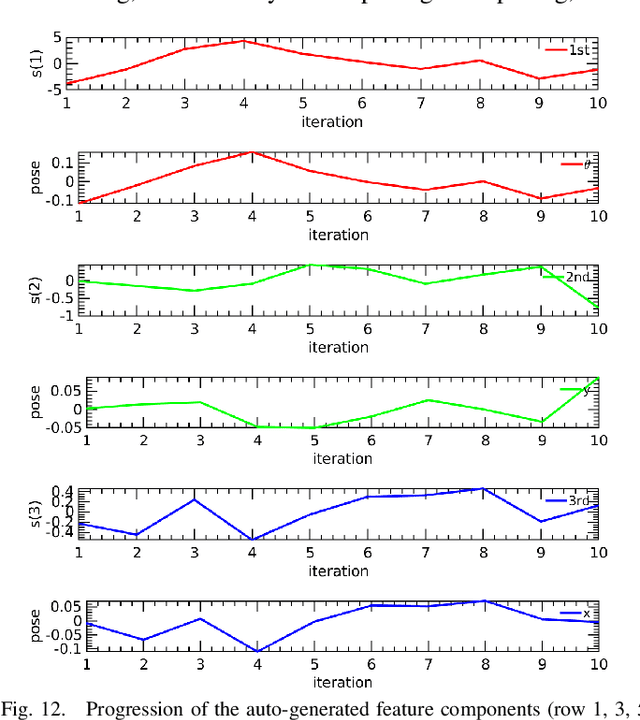

Vision-based Manipulation of Deformable and Rigid Objects Using Subspace Projections of 2D Contours

Jun 16, 2020

Abstract:This paper proposes a unified vision-based manipulation framework using image contours of deformable/rigid objects. Instead of using human-defined cues, the robot automatically learns the features from processed vision data. Our method simultaneously generates, from the same data, both the visual features and the interaction matrix that relates them to the robot control inputs. Extraction of the feature vector and control commands is done online and adaptively, with little data for initialization. The method allows the robot to manipulate an object without knowing whether it is rigid or deformable. To validate our approach, we conduct numerical simulations and experiments with both deformable and rigid objects.
