Abdulla Ayyad

E-Calib: A Fast, Robust and Accurate Calibration Toolbox for Event Cameras

Jun 15, 2023

Abstract:Event cameras triggered a paradigm shift in the computer vision community delineated by their asynchronous nature, low latency, and high dynamic range. Calibration of event cameras is always essential to account for the sensor intrinsic parameters and for 3D perception. However, conventional image-based calibration techniques are not applicable due to the asynchronous, binary output of the sensor. The current standard for calibrating event cameras relies on either blinking patterns or event-based image reconstruction algorithms. These approaches are difficult to deploy in factory settings and are affected by noise and artifacts degrading the calibration performance. To bridge these limitations, we present E-Calib, a novel, fast, robust, and accurate calibration toolbox for event cameras utilizing the asymmetric circle grid, for its robustness to out-of-focus scenes. The proposed method is tested in a variety of rigorous experiments for different event camera models, on circle grids with different geometric properties, and under challenging illumination conditions. The results show that our approach outperforms the state-of-the-art in detection success rate, reprojection error, and estimation accuracy of extrinsic parameters.
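The abstract above reports reprojection error as one of its metrics. As a rough illustration of what that metric measures, the sketch below computes a root-mean-square reprojection error under an ideal pinhole model with no lens distortion. The function names and the intrinsics `fx`, `fy`, `cx`, `cy` are illustrative assumptions and are not taken from the E-Calib toolbox.

```python
import math


def project(point_3d, fx, fy, cx, cy):
    """Project a 3D point (camera frame) with an ideal pinhole model, no distortion."""
    x, y, z = point_3d
    return (fx * x / z + cx, fy * y / z + cy)


def rms_reprojection_error(points_3d, detections, fx, fy, cx, cy):
    """RMS pixel distance between projected grid points and detected circle centers."""
    squared_sum = 0.0
    for point, (u, v) in zip(points_3d, detections):
        pu, pv = project(point, fx, fy, cx, cy)
        squared_sum += (pu - u) ** 2 + (pv - v) ** 2
    return math.sqrt(squared_sum / len(points_3d))


# Hypothetical example: two grid points 1 m in front of the camera.
grid_points = [(0.0, 0.0, 1.0), (0.1, 0.0, 1.0)]
detected_centers = [(50.0, 40.0), (60.0, 41.0)]
error = rms_reprojection_error(grid_points, detected_centers, 100.0, 100.0, 50.0, 40.0)
```

A full calibration would also estimate distortion coefficients and the per-view pose of the grid; this sketch assumes the points are already expressed in the camera frame.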

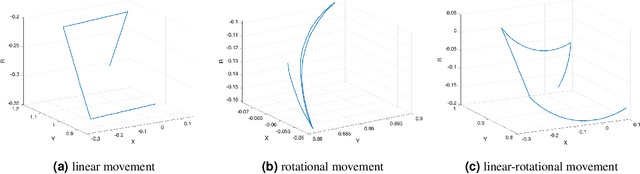

High Speed Neuromorphic Vision-Based Inspection of Countersinks in Automated Manufacturing Processes

Apr 08, 2023

Abstract:Countersink inspection is crucial in various automated assembly lines, especially in the aerospace and automotive sectors. Advancements in machine vision introduced automated robotic inspection of countersinks using laser scanners and monocular cameras. Nevertheless, the aforementioned sensing pipelines require the robot to pause on each hole for inspection due to high latency and measurement uncertainties with motion, leading to prolonged execution times of the inspection task. The neuromorphic vision sensor, on the other hand, has the potential to expedite the countersink inspection process, but the unorthodox output of the neuromorphic technology prohibits utilizing traditional image processing techniques. Therefore, novel event-based perception algorithms need to be introduced. We propose a countersink detection approach on the basis of event-based motion compensation and the mean-shift clustering principle. In addition, our framework presents a robust event-based circle detection algorithm to precisely estimate the depth of the countersink specimens. The proposed approach expedites the inspection process by a factor of 10$\times$ compared to conventional countersink inspection methods. The work in this paper was validated for over 50 trials on three countersink workpiece variants. The experimental results show that our method provides a precision of 0.025 mm for countersink depth inspection despite the low resolution of commercially available neuromorphic cameras.
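The abstract above names the mean-shift clustering principle. The following is a minimal, generic flat-kernel mean-shift sketch over 2D pixel coordinates, intended only to illustrate the principle. It is not the authors' detection pipeline and omits motion compensation entirely; `bandwidth` and the merging threshold of half the bandwidth are assumed parameters.

```python
def mean_shift(points, bandwidth, max_iters=50, tol=1e-6):
    """Flat-kernel mean shift: move each seed to the mean of points within `bandwidth`."""
    radius_sq = bandwidth ** 2
    modes = []
    for x, y in points:
        for _ in range(max_iters):
            # Points inside the window centred on the current estimate.
            near = [(px, py) for px, py in points
                    if (px - x) ** 2 + (py - y) ** 2 <= radius_sq]
            new_x = sum(p[0] for p in near) / len(near)
            new_y = sum(p[1] for p in near) / len(near)
            shift_sq = (new_x - x) ** 2 + (new_y - y) ** 2
            x, y = new_x, new_y
            if shift_sq < tol ** 2:
                break
        modes.append((x, y))

    # Merge converged modes that lie within half a bandwidth of an existing center.
    centers = []
    for mx, my in modes:
        for cx, cy in centers:
            if (mx - cx) ** 2 + (my - cy) ** 2 <= (bandwidth / 2) ** 2:
                break
        else:
            centers.append((mx, my))
    return centers


# Hypothetical event coordinates forming two well-separated groups.
event_pixels = [(0, 0), (1, 0), (0, 1), (1, 1), (10, 10), (11, 10), (10, 11), (11, 11)]
centers = mean_shift(event_pixels, bandwidth=3.0)
```

This version costs quadratic time per iteration, which is acceptable for a sketch but would need a spatial index or a library implementation for real event streams.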

A Neuromorphic Dataset for Object Segmentation in Indoor Cluttered Environment

Feb 17, 2023

Abstract:Taking advantage of an event-based camera, the issues of motion blur, low dynamic range and low time sampling of standard cameras can all be addressed. However, there is a lack of event-based datasets dedicated to the benchmarking of segmentation algorithms, especially those that provide depth information which is critical for segmentation in occluded scenes. This paper proposes a new Event-based Segmentation Dataset (ESD), a high-quality 3D spatial and temporal dataset for object segmentation in an indoor cluttered environment. Our proposed dataset ESD comprises 145 sequences with 14,166 RGB frames that are manually annotated with instance masks. Overall 21.88 million and 20.80 million events from two event-based cameras in a stereo-graphic configuration are collected, respectively. To the best of our knowledge, this densely annotated and 3D spatial-temporal event-based segmentation benchmark of tabletop objects is the first of its kind. By releasing ESD, we expect to provide the community with a challenging segmentation benchmark with high quality.

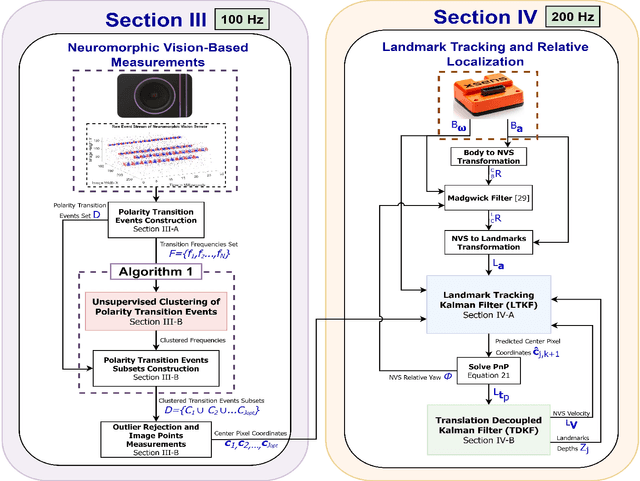

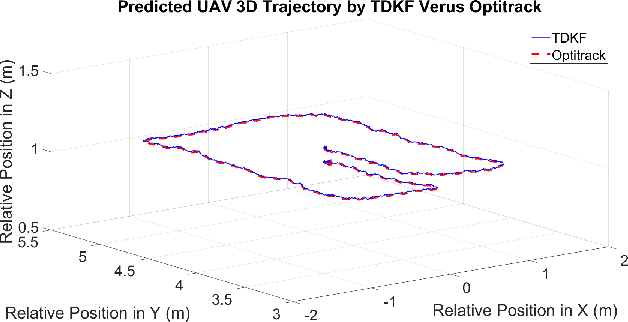

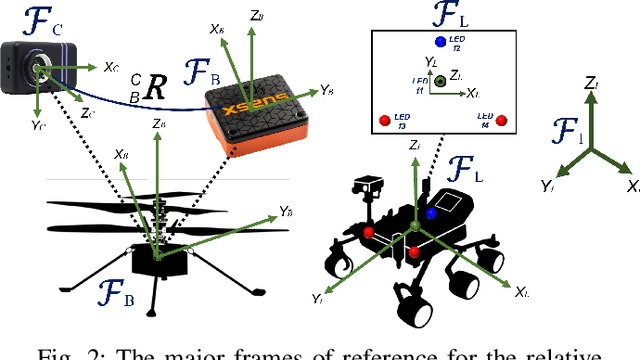

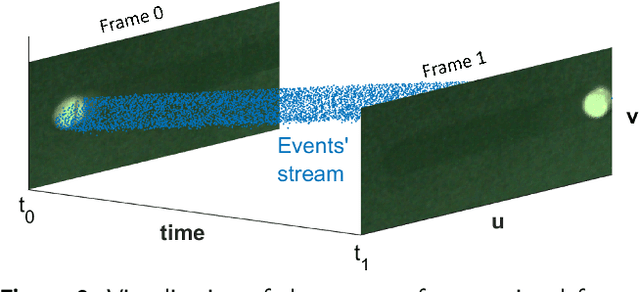

A Neuromorphic Vision-Based Measurement for Robust Relative Localization in Future Space Exploration Missions

Jun 23, 2022

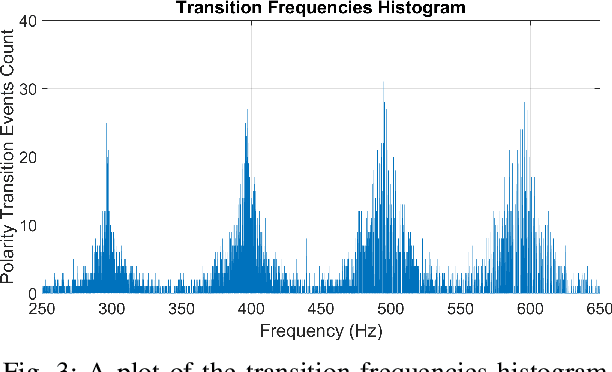

Abstract:Space exploration has witnessed revolutionary changes upon landing of the Perseverance Rover on the Martian surface and demonstrating the first flight beyond Earth by the Mars helicopter, Ingenuity. During their mission on Mars, Perseverance Rover and Ingenuity collaboratively explore the Martian surface, where Ingenuity scouts terrain information for the rover's safe traversability. Hence, determining the relative poses between both platforms is of paramount importance for the success of this mission. Driven by this necessity, this work proposes a robust relative localization system based on a fusion of neuromorphic vision-based measurements (NVBMs) and inertial measurements. The emergence of neuromorphic vision triggered a paradigm shift in the computer vision community, due to its unique working principle delineated with asynchronous events triggered by variations of light intensities occurring in the scene. This implies that observations cannot be acquired in static scenes due to illumination invariance. To circumvent this limitation, high frequency active landmarks are inserted in the scene to guarantee consistent event firing. These landmarks are adopted as salient features to facilitate relative localization. A novel event-based landmark identification algorithm using Gaussian Mixture Models (GMM) is developed for matching the landmark correspondences formulating our NVBMs. The NVBMs are fused with inertial measurements in the proposed state estimators, the landmark tracking Kalman filter (LTKF) and the translation decoupled Kalman filter (TDKF), for landmark tracking and relative localization, respectively. The proposed system was tested in a variety of experiments and has outperformed state-of-the-art approaches in accuracy and range.
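The abstract above describes fusing vision-based measurements with inertial measurements in Kalman filters. As a simplified illustration of that kind of fusion, the sketch below implements a one-dimensional position-velocity Kalman filter in which an accelerometer reading drives the prediction and a position measurement drives the correction. It is not the LTKF or TDKF from the paper; the class name, the noise parameters `accel_var` and `meas_var`, and the one-dimensional state are all assumptions made for brevity.

```python
class PositionVelocityKF:
    """1D Kalman filter: accelerometer-driven prediction, position-measurement update."""

    def __init__(self, accel_var, meas_var):
        self.p = 0.0                        # position estimate
        self.v = 0.0                        # velocity estimate
        self.P = [[1.0, 0.0], [0.0, 1.0]]   # state covariance
        self.q = accel_var                  # accelerometer noise variance (assumed)
        self.r = meas_var                   # position measurement variance (assumed)

    def predict(self, accel, dt):
        """Propagate the state with a constant-acceleration model over `dt`."""
        self.p += self.v * dt + 0.5 * accel * dt * dt
        self.v += accel * dt
        (a, b), (c, d) = self.P
        q = self.q
        # P <- F P F^T + Q with F = [[1, dt], [0, 1]] and Q from acceleration noise.
        self.P = [
            [a + dt * (b + c) + dt * dt * d + q * dt ** 4 / 4, b + dt * d + q * dt ** 3 / 2],
            [c + dt * d + q * dt ** 3 / 2, d + q * dt * dt],
        ]

    def update(self, z):
        """Correct the state with a position measurement `z` (H = [1, 0])."""
        (a, b), (c, d) = self.P
        s = a + self.r                      # innovation variance
        k0, k1 = a / s, c / s               # Kalman gain
        residual = z - self.p
        self.p += k0 * residual
        self.v += k1 * residual
        # P <- (I - K H) P
        self.P = [[(1 - k0) * a, (1 - k0) * b], [c - k1 * a, d - k1 * b]]


# Hypothetical usage: a target moving at 1 m/s, measured every 0.1 s without noise.
kf = PositionVelocityKF(accel_var=1.0, meas_var=0.01)
for step in range(1, 101):
    kf.predict(accel=0.0, dt=0.1)
    kf.update(z=step * 0.1)
```

In a real system the prediction would typically run at the inertial sensor rate and the update only when a vision measurement arrives; here both run once per step to keep the example short.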

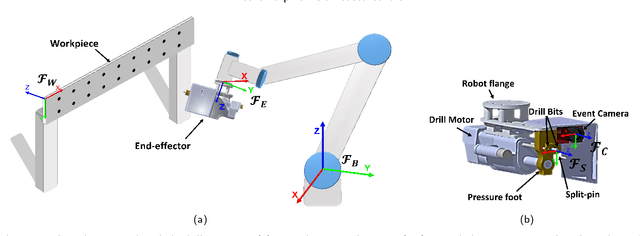

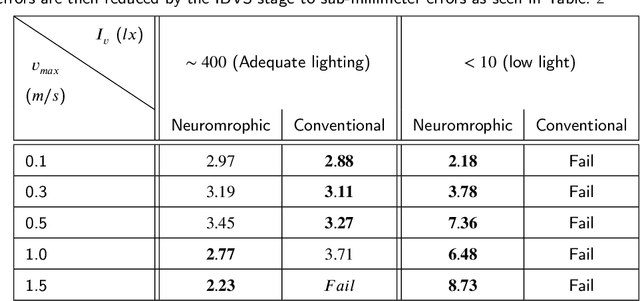

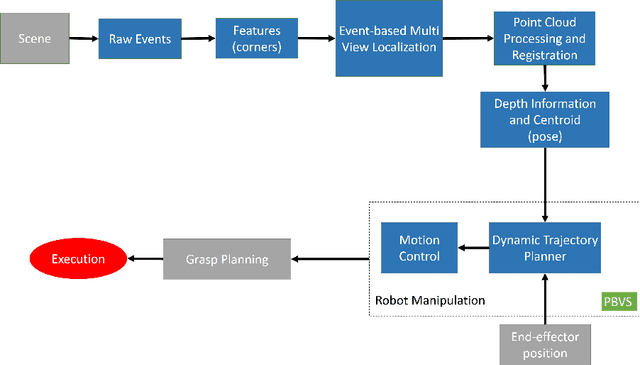

Neuromorphic Vision Based Control for the Precise Positioning of Robotic Drilling Systems

Jan 05, 2022

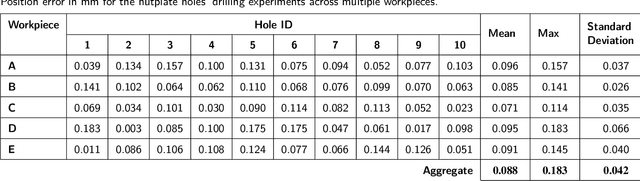

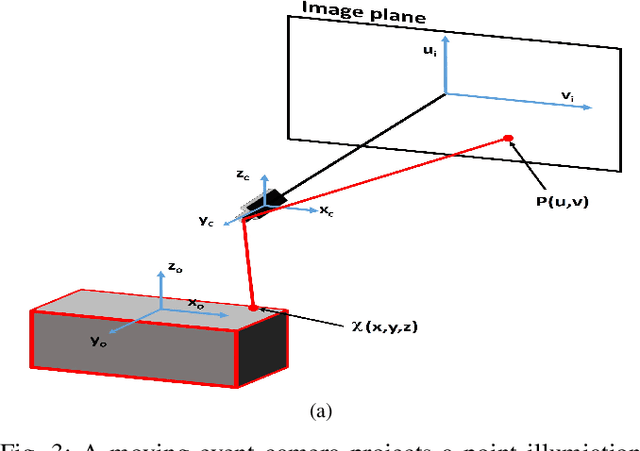

Abstract:The manufacturing industry is currently witnessing a paradigm shift with the unprecedented adoption of industrial robots, and machine vision is a key perception technology that enables these robots to perform precise operations in unstructured environments. However, the sensitivity of conventional vision sensors to lighting conditions and high-speed motion sets a limitation on the reliability and work-rate of production lines. Neuromorphic vision is a recent technology with the potential to address the challenges of conventional vision with its high temporal resolution, low latency, and wide dynamic range. In this paper and for the first time, we propose a novel neuromorphic vision based controller for faster and more reliable machining operations, and present a complete robotic system capable of performing drilling tasks with sub-millimeter accuracy. Our proposed system localizes the target workpiece in 3D using two perception stages that we developed specifically for the asynchronous output of neuromorphic cameras. The first stage performs multi-view reconstruction for an initial estimate of the workpiece's pose, and the second stage refines this estimate for a local region of the workpiece using circular hole detection. The robot then precisely positions the drilling end-effector and drills the target holes on the workpiece using a combined position-based and image-based visual servoing approach. The proposed solution is validated experimentally for drilling nutplate holes on workpieces placed arbitrarily in an unstructured environment with uncontrolled lighting. Experimental results prove the effectiveness of our solution with an average positional error of less than 0.1 mm, and demonstrate that the use of neuromorphic vision overcomes the lighting and speed limitations of conventional cameras.

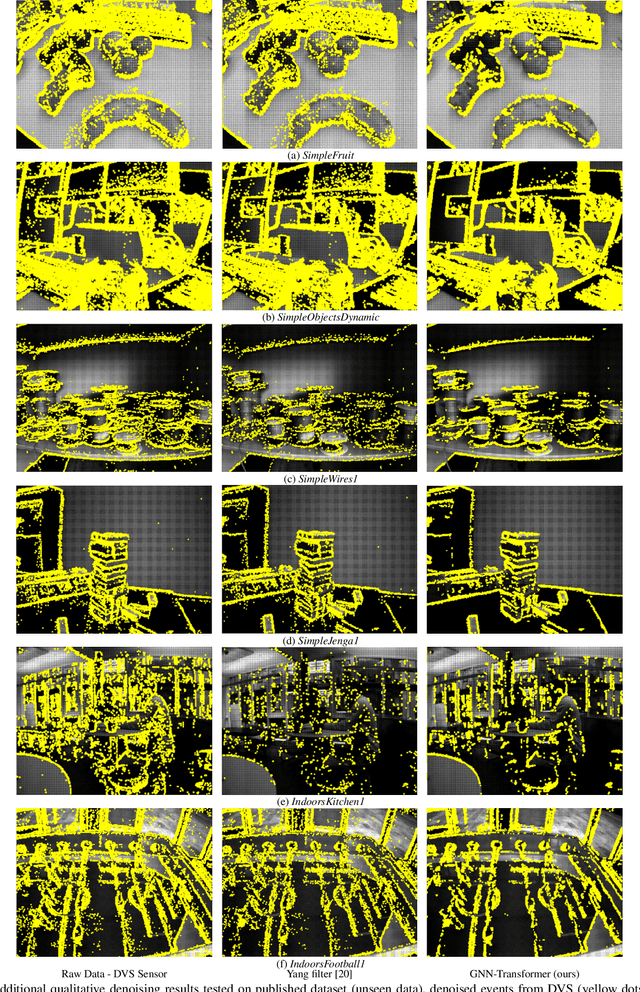

Neuromorphic Camera Denoising using Graph Neural Network-driven Transformers

Dec 17, 2021

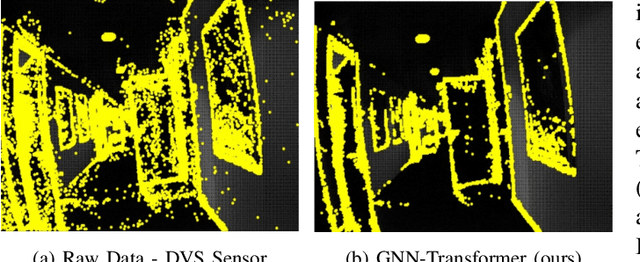

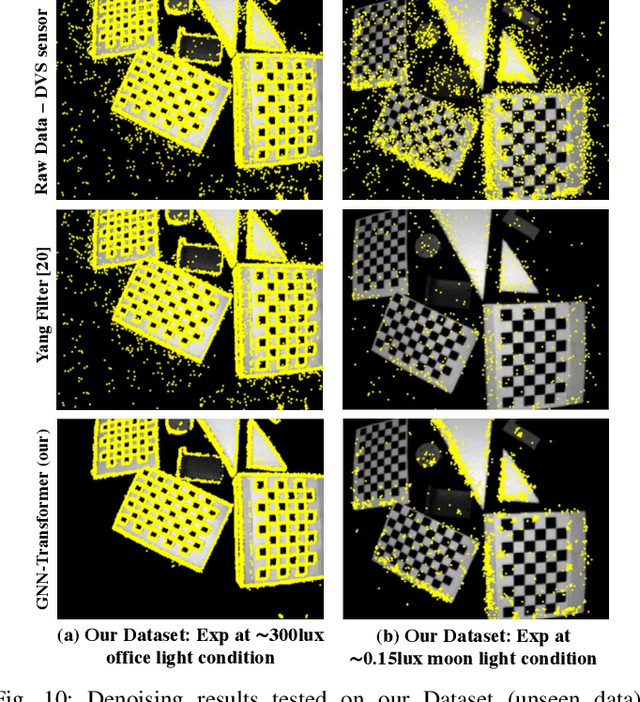

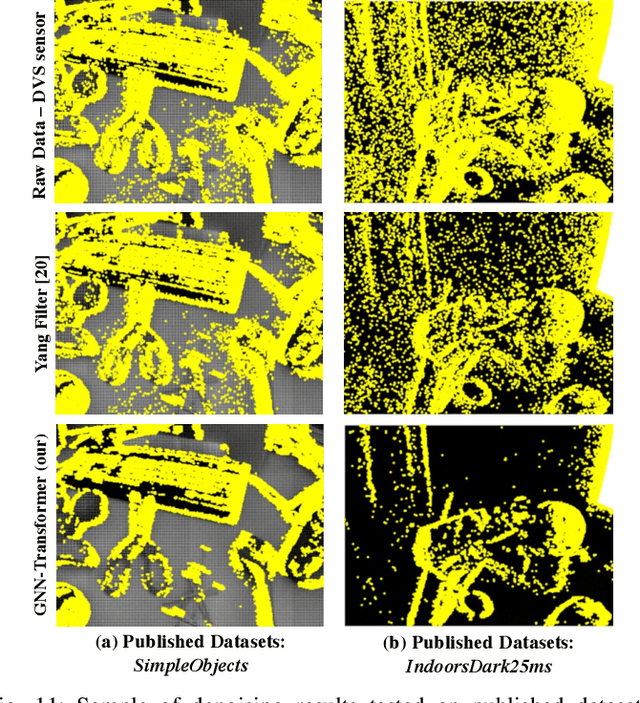

Abstract:Neuromorphic vision is a bio-inspired technology that has triggered a paradigm shift in the computer-vision community and is serving as a key-enabler for a multitude of applications. This technology has offered significant advantages including reduced power consumption, reduced processing needs, and communication speed-ups. However, neuromorphic cameras suffer from significant amounts of measurement noise. This noise deteriorates the performance of neuromorphic event-based perception and navigation algorithms. In this paper, we propose a novel noise filtration algorithm to eliminate events which do not represent real log-intensity variations in the observed scene. We employ a Graph Neural Network (GNN)-driven transformer algorithm, called GNN-Transformer, to classify every active event pixel in the raw stream into real log-intensity variation or noise. Within the GNN, a message-passing framework, called EventConv, is carried out to reflect the spatiotemporal correlation among the events, while preserving their asynchronous nature. We also introduce the Known-object Ground-Truth Labeling (KoGTL) approach for generating approximate ground truth labels of event streams under various illumination conditions. KoGTL is used to generate labeled datasets from experiments recorded in challenging lighting conditions. These datasets are used to train and extensively test our proposed algorithm. When tested on unseen datasets, the proposed algorithm outperforms existing methods by 12% in terms of filtration accuracy. Additional tests are also conducted on publicly available datasets to demonstrate the generalization capabilities of the proposed algorithm in the presence of illumination variations and different motion dynamics. Compared to existing solutions, qualitative results verified the superior capability of the proposed algorithm to eliminate noise while preserving meaningful scene events.

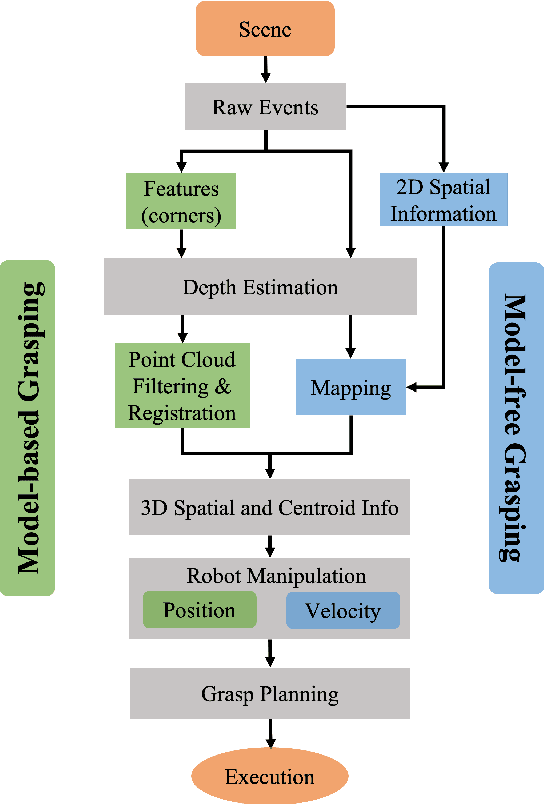

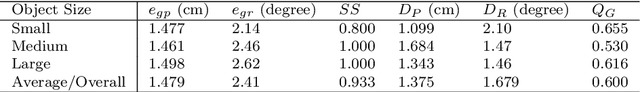

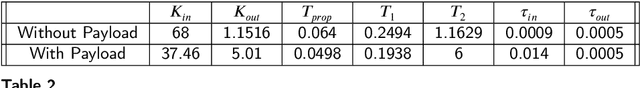

Real-Time Grasping Strategies Using Event Camera

Jul 15, 2021

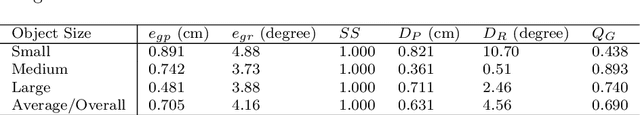

Abstract:Robotic vision plays a key role for perceiving the environment in grasping applications. However, the conventional frame-based robotic vision, suffering from motion blur and low sampling rate, may not meet the automation needs of evolving industrial requirements. This paper, for the first time, proposes an event-based robotic grasping framework for multiple known and unknown objects in a cluttered scene. Compared with standard frame-based vision, neuromorphic vision has advantages of microsecond-level sampling rate and no motion blur. Building on that, the model-based and model-free approaches are developed for known and unknown objects' grasping respectively. For the model-based approach, an event-based multi-view approach is used to localize the objects in the scene, and then point cloud processing allows for the clustering and registering of objects. In contrast, the proposed model-free approach utilizes the developed event-based object segmentation, visual servoing and grasp planning to localize, align to, and grasp the target object. The proposed approaches are experimentally validated with objects of different sizes, using a UR10 robot with an eye-in-hand neuromorphic camera and a Barrett hand gripper. Moreover, the robustness of the two proposed event-based grasping approaches is validated in a low-light environment. This low-light operating ability shows a great advantage over grasping using standard frame-based vision. Furthermore, the developed model-free approach demonstrates the advantage of dealing with unknown objects without prior knowledge compared to the proposed model-based approach.

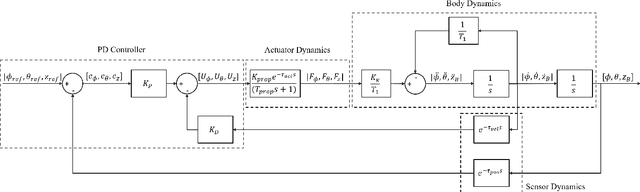

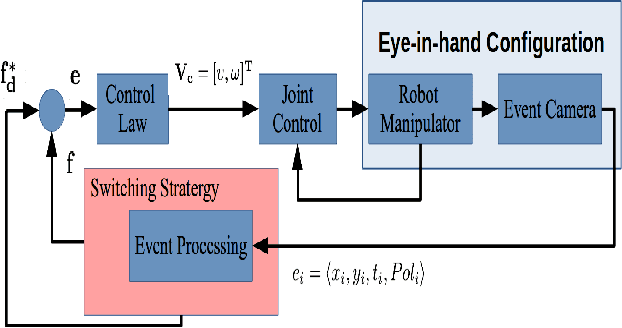

Unified Identification and Tuning Approach Using Deep Neural Networks For Visual Servoing Applications

Jul 04, 2021

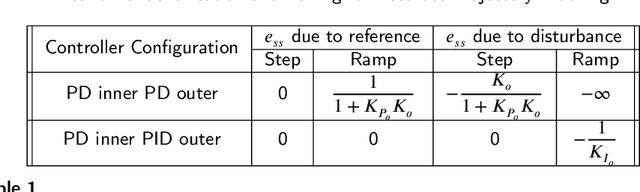

Abstract:Vision based control of Unmanned Aerial Vehicles (UAVs) has been adopted by a wide range of applications due to the availability of low-cost on-board sensors and computers. Tuning such systems to work properly requires extensive domain specific experience which limits the growth of emerging applications. Moreover, obtaining performance limits of UAV based visual servoing with the current state-of-the-art is not possible due to the complexity of the models used. In this paper, we present a systematic approach for real-time identification and tuning of visual servoing systems based on a novel robustified version of the recent deep neural networks with the modified relay feedback test (DNN-MRFT) approach. The proposed robust DNN-MRFT algorithm can be used with a multitude of vision sensors and estimation algorithms despite high levels of sensor noise. Sensitivity of MRFT to perturbations is investigated and its effect on identification and tuning performance is analyzed. DNN-MRFT was able to detect performance changes due to the use of slower vision sensors, or due to the integration of accelerometer measurements. Experimental identification results closely matched simulation results, which can be used to explain system behaviour and anticipate the closed loop performance limits given a certain hardware and software setup. Finally, we demonstrate the capability of the DNN-MRFT tuned visual servoing systems to reject external disturbances. Some advantages of the suggested robust identification approach compared to existing visual servoing design approaches are presented.

Real-time Identification and Tuning of Multirotors Based on Deep Neural Networks for Accurate Trajectory Tracking Under Wind Disturbances

Jun 07, 2021

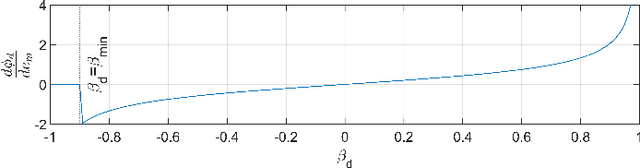

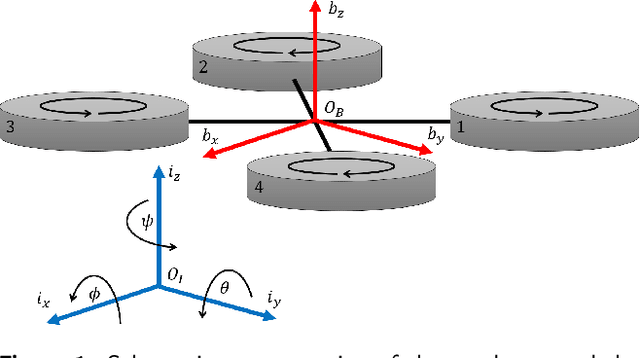

Abstract:High performance trajectory tracking for multirotor Unmanned Aerial Vehicles (UAVs) is a fast growing research area due to the increase in popularity and demand. In many applications, the multirotor UAV dynamics would change in-flight resulting in performance degradation, or even instability, such that the control system is required to adapt its parameters to the new dynamics. In this paper, we developed a real-time identification approach based on Deep Neural Networks (DNNs) and the Modified Relay Feedback Test (MRFT) to optimally tune PID controllers suitable for aggressive trajectory tracking. We also propose a feedback linearization technique along with additional feedforward terms to achieve high trajectory tracking performance. In addition, we investigate and analyze different PID configurations for position controllers to maximize the tracking performance in the presence of wind disturbance and system parameter changes, and provide a systematic design methodology to trade off performance for robustness. We prove the effectiveness and applicability of our developed approach through a set of experiments where accurate trajectory tracking is maintained despite significant changes to the UAV aerodynamic characteristics and the application of external wind. We demonstrate low discrepancy between simulation and experimental results which proves the potential of using the suggested approach for planning and fault detection tasks. The achieved tracking results on a figure-eight trajectory are on par with the state-of-the-art.

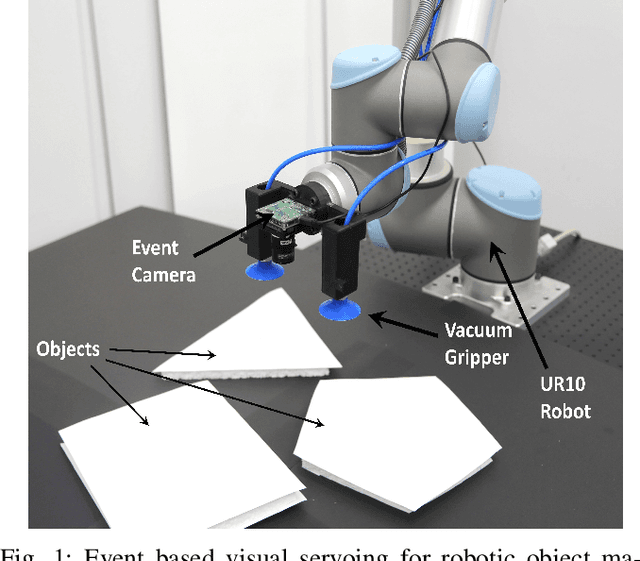

Neuromorphic Eye-in-Hand Visual Servoing

Apr 15, 2020

Abstract:Robotic vision plays a major role in applications ranging from factory automation to service robots. However, the traditional use of frame-based cameras sets a limitation on continuous visual feedback due to their low sampling rate and redundant data in real-time image processing, especially in the case of high-speed tasks. Event cameras give human-like vision capabilities such as observing the dynamic changes asynchronously at a high temporal resolution ($1\mu s$) with low latency and wide dynamic range. In this paper, we present a visual servoing method using an event camera and a switching control strategy to explore, reach and grasp to achieve a manipulation task. We devise three surface layers of active events to directly process the stream of events from relative motion. A purely event based approach is adopted to extract corner features, localize them robustly using heat maps and generate virtual features for tracking and alignment. Based on the visual feedback, the motion of the robot is controlled to make the temporal upcoming event features converge to the desired event in spatio-temporal space. The controller switches its strategy based on the sequence of operation to establish a stable grasp. The event based visual servoing (EBVS) method is validated experimentally using a commercial robot manipulator in an eye-in-hand configuration. Experiments prove the effectiveness of the EBVS method to track and grasp objects of different shapes without the need for re-tuning.
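The abstract above mentions surfaces of active events. As a generic illustration of that data structure, the sketch below keeps the most recent timestamp per pixel and queries which pixels fired within a recent time window. It models a single surface only, not the three-layer design described in the paper; the event tuple layout `(x, y, t, polarity)` and the function names are assumptions.

```python
def update_sae(sae, events):
    """Record the latest timestamp for each pixel that fired an event."""
    for x, y, t, _polarity in events:
        sae[(x, y)] = t
    return sae


def recent_pixels(sae, t_now, window):
    """Return the pixels whose latest event lies within `window` of `t_now`."""
    return {pixel for pixel, t in sae.items() if t_now - t <= window}


# Hypothetical events as (x, y, timestamp in microseconds, polarity).
events = [(1, 2, 10, 1), (1, 2, 30, 0), (3, 4, 20, 1)]
sae = update_sae({}, events)
active = recent_pixels(sae, t_now=35, window=10)
```

A dictionary keeps the sketch sparse and dependency-free; a dense array indexed by pixel would be the more common choice when the sensor resolution is fixed.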
