Rajkumar Muthusamy

A Multi-Modal Interaction Framework for Efficient Human-Robot Collaborative Shelf Picking

Apr 09, 2025

Abstract: The growing presence of service robots in human-centric environments, such as warehouses, demands seamless and intuitive human-robot collaboration. In this paper, we propose a collaborative shelf-picking framework that combines multimodal interaction, physics-based reasoning, and task division for enhanced human-robot teamwork. The framework enables the robot to recognize human pointing gestures, interpret verbal cues and voice commands, and communicate through visual and auditory feedback. Moreover, it is powered by a Large Language Model (LLM) that uses Chain of Thought (CoT) reasoning, together with a physics-based simulation engine for safely retrieving boxes from cluttered stacks on shelves and a relationship graph for sub-task generation, extraction sequence planning, and decision making. Furthermore, we validate the framework through real-world shelf-picking experiments: 1) Gesture-Guided Box Extraction, 2) Collaborative Shelf Clearing, and 3) Collaborative Stability Assistance.
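The abstract mentions a relationship graph used for extraction sequence planning. As a minimal sketch of that idea, and not the authors' implementation, the example below assumes a hypothetical input format in which each box maps to the set of boxes directly beneath it, and derives a removal order with a topological sort. The names `extraction_order`, `boxes_above`, and `rests_on` are illustrative assumptions.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+


def extraction_order(rests_on):
    """Return an order for clearing all boxes so that no box is removed
    while another box still rests on it.

    rests_on: dict mapping each box to the set of boxes directly beneath it
    (hypothetical format, assumed for this sketch).
    """
    sorter = TopologicalSorter()
    for box, supports in rests_on.items():
        sorter.add(box)
        for below in supports:
            # 'box' must be removed before the box that supports it.
            sorter.add(below, box)
    return list(sorter.static_order())


def boxes_above(rests_on, target):
    """Return every box that rests, directly or indirectly, on the target."""
    on_top = {}
    for box, supports in rests_on.items():
        for below in supports:
            on_top.setdefault(below, set()).add(box)
    seen, stack = set(), [target]
    while stack:
        for upper in on_top.get(stack.pop(), ()):
            if upper not in seen:
                seen.add(upper)
                stack.append(upper)
    return seen


# Example stack: A sits on B and C, which both sit on D.
stack_graph = {"A": {"B", "C"}, "B": {"D"}, "C": {"D"}, "D": set()}
order = extraction_order(stack_graph)
blockers = boxes_above(stack_graph, "B")
```

A purely geometric ordering like this ignores friction, mass, and partial support, which is presumably where the physics-based simulation described in the abstract comes in.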

Collapse and Collision Aware Grasping for Cluttered Shelf Picking

Mar 28, 2025

Abstract: Efficient and safe retrieval of stacked objects in warehouse environments is a significant challenge due to complex spatial dependencies and structural inter-dependencies. Traditional vision-based methods excel at object localization but often lack the physical reasoning required to predict the consequences of extraction, leading to unintended collisions and collapses. This paper proposes a collapse and collision aware grasp planner that integrates dynamic physics simulations for robotic decision-making. Using a single image and depth map, an approximate 3D representation of the scene is reconstructed in a simulation environment, enabling the robot to evaluate different retrieval strategies before execution. Two approaches, 1) heuristic-based and 2) physics-based, are proposed for both single-box extraction and shelf clearance tasks. Extensive real-world experiments on structured and unstructured box stacks, along with validation using datasets from existing databases, show that our physics-aware method significantly improves efficiency and success rates compared to baseline heuristics.

Bimodal SegNet: Instance Segmentation Fusing Events and RGB Frames for Robotic Grasping

Mar 20, 2023

Abstract: Object segmentation for robotic grasping under dynamic conditions often faces challenges such as occlusion, low light conditions, motion blur and object size variance. To address these challenges, we propose a Deep Learning network that fuses two types of visual signals, event-based data and RGB frame data. The proposed Bimodal SegNet network has two distinct encoders, one for each signal input, and a spatial pyramidal pooling with atrous convolutions. Encoders capture rich contextual information by pooling the concatenated features at different resolutions while the decoder obtains sharp object boundaries. The proposed method is evaluated under five image degradation challenges (occlusion, blur, brightness, trajectory and scale variance) on the Event-based Segmentation (ESD) Dataset. The evaluation results show a 6-10% segmentation accuracy improvement over state-of-the-art methods in terms of mean intersection over union and pixel accuracy. The model code is available at https://github.com/sanket0707/Bimodal-SegNet.git
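The two metrics named in the abstract, pixel accuracy and mean intersection over union, can be illustrated with a small pure-Python sketch. It assumes label masks given as nested lists of integer class IDs; the function names are illustrative and are not taken from the linked repository.

```python
def pixel_accuracy(pred, truth):
    """Fraction of pixels whose predicted label equals the ground truth."""
    total = correct = 0
    for p_row, t_row in zip(pred, truth):
        for p, t in zip(p_row, t_row):
            total += 1
            correct += (p == t)
    return correct / total


def mean_iou(pred, truth, num_classes):
    """Mean of per-class intersection over union.

    Classes absent from both masks are skipped so they do not distort
    the mean (an assumption of this sketch).
    """
    ious = []
    for c in range(num_classes):
        inter = union = 0
        for p_row, t_row in zip(pred, truth):
            for p, t in zip(p_row, t_row):
                inter += (p == c and t == c)
                union += (p == c or t == c)
        if union:
            ious.append(inter / union)
    return sum(ious) / len(ious)


# Tiny 2x3 example with two classes (0 = background, 1 = object).
pred = [[0, 0, 1],
        [1, 1, 1]]
truth = [[0, 1, 1],
         [1, 1, 0]]
acc = pixel_accuracy(pred, truth)   # 4 of 6 pixels match
miou = mean_iou(pred, truth, 2)     # mean of 1/3 and 3/5
```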

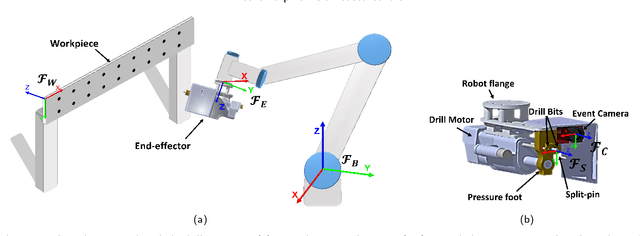

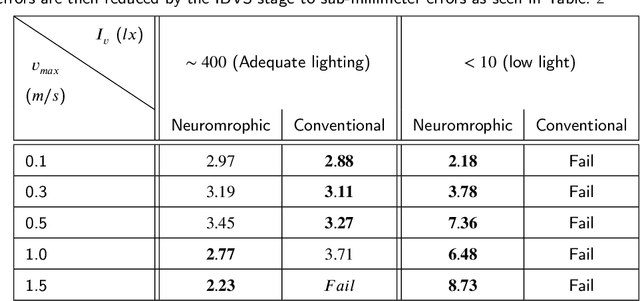

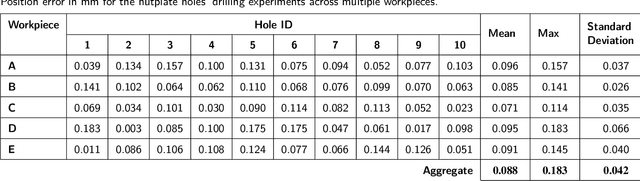

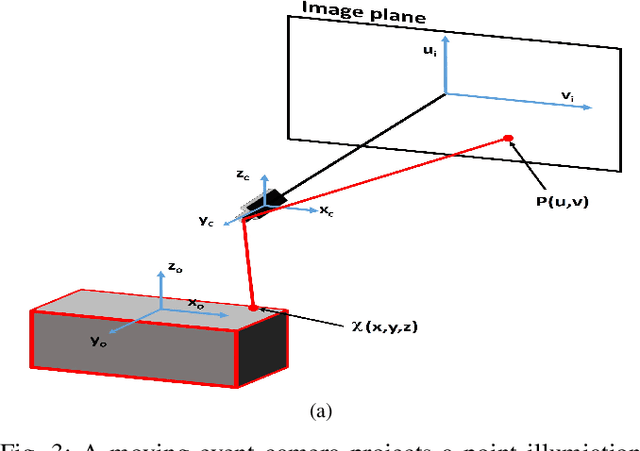

Neuromorphic Vision Based Control for the Precise Positioning of Robotic Drilling Systems

Jan 05, 2022

Abstract: The manufacturing industry is currently witnessing a paradigm shift with the unprecedented adoption of industrial robots, and machine vision is a key perception technology that enables these robots to perform precise operations in unstructured environments. However, the sensitivity of conventional vision sensors to lighting conditions and high-speed motion sets a limitation on the reliability and work-rate of production lines. Neuromorphic vision is a recent technology with the potential to address the challenges of conventional vision with its high temporal resolution, low latency, and wide dynamic range. In this paper and for the first time, we propose a novel neuromorphic vision based controller for faster and more reliable machining operations, and present a complete robotic system capable of performing drilling tasks with sub-millimeter accuracy. Our proposed system localizes the target workpiece in 3D using two perception stages that we developed specifically for the asynchronous output of neuromorphic cameras. The first stage performs multi-view reconstruction for an initial estimate of the workpiece's pose, and the second stage refines this estimate for a local region of the workpiece using circular hole detection. The robot then precisely positions the drilling end-effector and drills the target holes on the workpiece using a combined position-based and image-based visual servoing approach. The proposed solution is validated experimentally for drilling nutplate holes on workpieces placed arbitrarily in an unstructured environment with uncontrolled lighting. Experimental results prove the effectiveness of our solution with an average positional error of less than 0.1 mm, and demonstrate that the use of neuromorphic vision overcomes the lighting and speed limitations of conventional cameras.

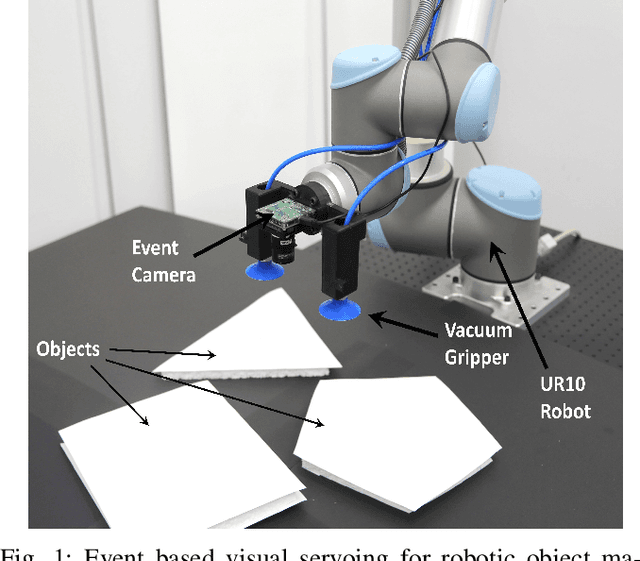

Real-Time Grasping Strategies Using Event Camera

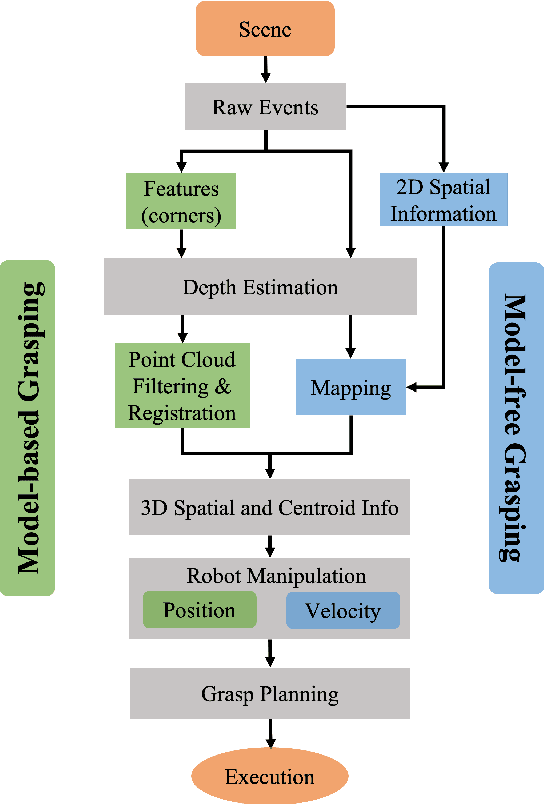

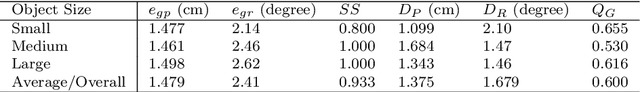

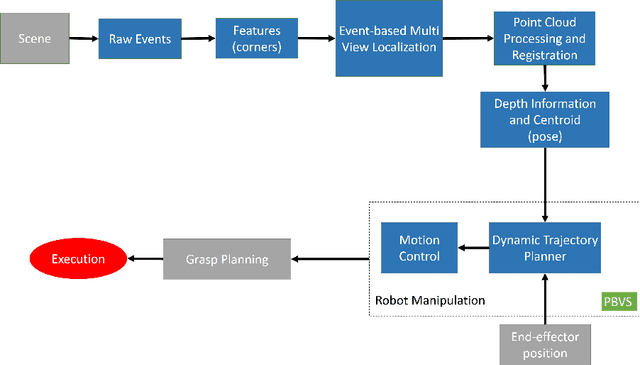

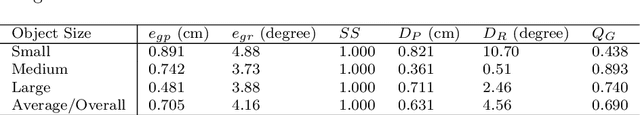

Jul 15, 2021

Abstract: Robotic vision plays a key role in perceiving the environment in grasping applications. However, conventional frame-based robotic vision, which suffers from motion blur and a low sampling rate, may not meet the automation needs of evolving industrial requirements. This paper, for the first time, proposes an event-based robotic grasping framework for multiple known and unknown objects in a cluttered scene. Compared with standard frame-based vision, neuromorphic vision has the advantages of a microsecond-level sampling rate and no motion blur. Building on that, model-based and model-free approaches are developed for grasping known and unknown objects, respectively. For the model-based approach, an event-based multi-view approach is used to localize the objects in the scene, and then point cloud processing allows for the clustering and registering of objects. In contrast, the proposed model-free approach utilizes the developed event-based object segmentation, visual servoing and grasp planning to localize, align to, and grasp the target object. The proposed approaches are experimentally validated with objects of different sizes, using a UR10 robot with an eye-in-hand neuromorphic camera and a Barrett hand gripper. Moreover, the robustness of the two proposed event-based grasping approaches is validated in a low-light environment. This low-light operating ability is a clear advantage over grasping with standard frame-based vision. Furthermore, the developed model-free approach has the advantage of handling unknown objects without prior knowledge, compared to the proposed model-based approach.

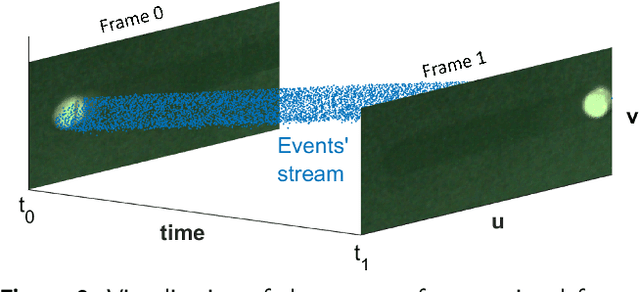

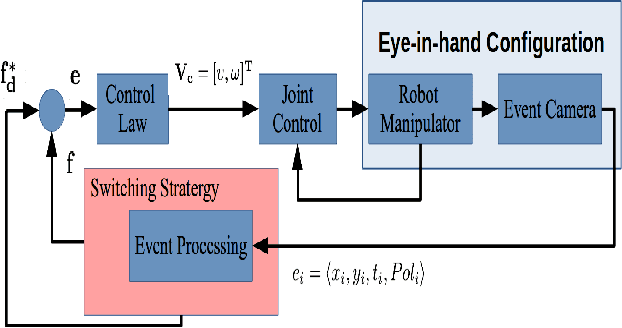

Neuromorphic Eye-in-Hand Visual Servoing

Apr 15, 2020

Abstract: Robotic vision plays a major role in applications ranging from factory automation to service robots. However, the traditional use of frame-based cameras limits continuous visual feedback due to their low sampling rate and redundant data in real-time image processing, especially in the case of high-speed tasks. Event cameras give human-like vision capabilities such as observing dynamic changes asynchronously at a high temporal resolution ($1\mu s$) with low latency and wide dynamic range. In this paper, we present a visual servoing method using an event camera and a switching control strategy to explore, reach and grasp to achieve a manipulation task. We devise three surface layers of active events to directly process the stream of events from relative motion. A purely event-based approach is adopted to extract corner features, localize them robustly using heat maps and generate virtual features for tracking and alignment. Based on the visual feedback, the motion of the robot is controlled to make the upcoming event features converge to the desired event in spatio-temporal space. The controller switches its strategy based on the sequence of operation to establish a stable grasp. The event-based visual servoing (EBVS) method is validated experimentally using a commercial robot manipulator in an eye-in-hand configuration. Experiments prove the effectiveness of the EBVS method to track and grasp objects of different shapes without the need for re-tuning.
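A surface of active events is commonly described as a per-pixel map holding the timestamp of the most recent event at each location. The sketch below shows one such layer in generic form; the abstract does not specify how its three layers are defined, so the class name, the microsecond timestamps, and the time-window query are all assumptions made for illustration.

```python
class SurfaceOfActiveEvents:
    """One generic SAE layer: latest event timestamp per pixel (hypothetical API)."""

    def __init__(self, width, height):
        self.width = width
        self.height = height
        # None marks pixels that have not fired yet.
        self.last_t = [[None] * width for _ in range(height)]

    def update(self, x, y, t_us):
        """Record an event at pixel (x, y) with timestamp t_us in microseconds."""
        self.last_t[y][x] = t_us

    def active_pixels(self, now_us, window_us):
        """Return (x, y) of pixels whose latest event is within the window."""
        return [
            (x, y)
            for y in range(self.height)
            for x in range(self.width)
            if self.last_t[y][x] is not None
            and now_us - self.last_t[y][x] <= window_us
        ]


sae = SurfaceOfActiveEvents(width=4, height=3)
sae.update(1, 2, 100)   # old event, later overwritten
sae.update(3, 0, 900)
sae.update(1, 2, 950)   # newer event at the same pixel
sae.update(0, 1, 300)   # falls outside the window queried below
recent = sae.active_pixels(now_us=1000, window_us=200)
```

Because each event only overwrites one cell, the update cost is constant per event, which suits the asynchronous stream an event camera produces.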

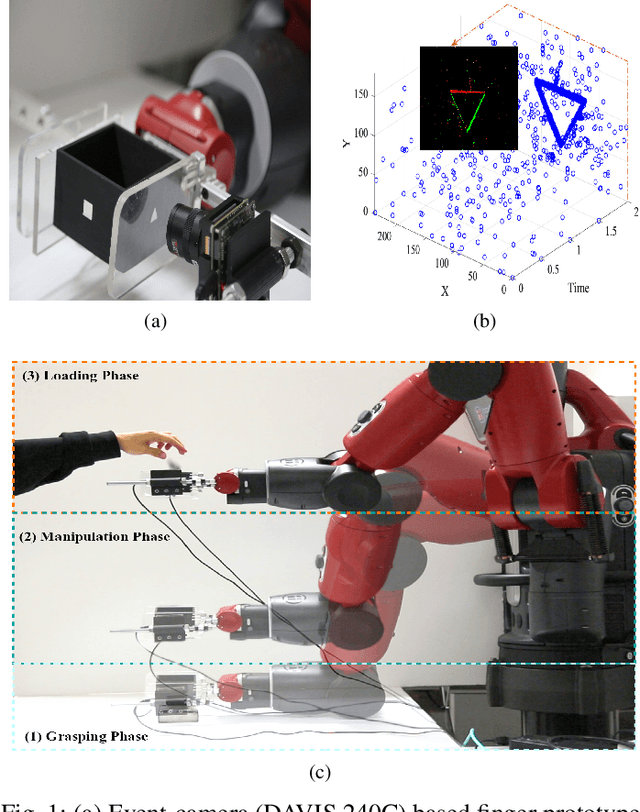

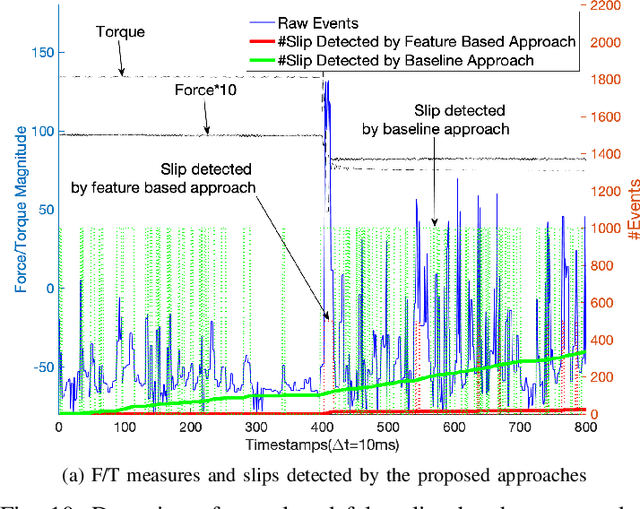

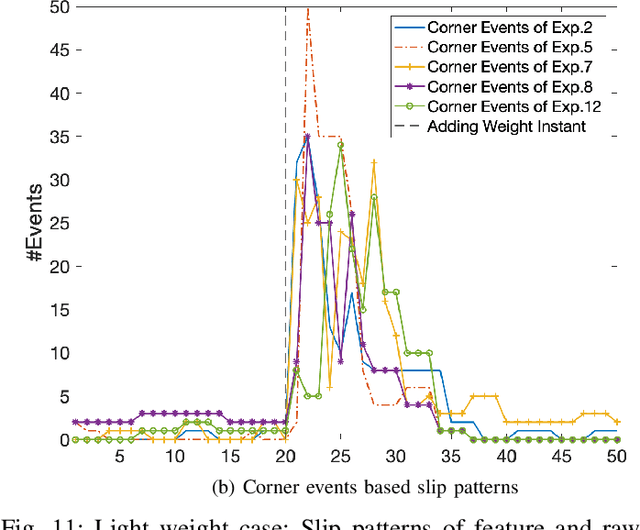

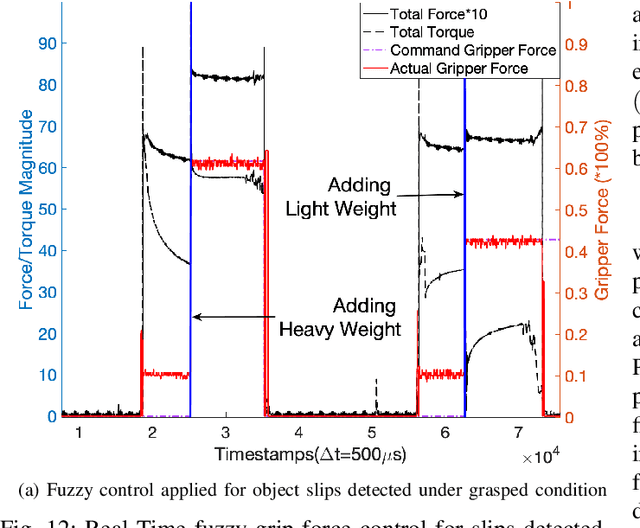

Neuromorphic Event-Based Slip Detection and Suppression in Robotic Grasping and Manipulation

Apr 15, 2020

Abstract: Slip detection is essential for robots to achieve robust grasping and fine manipulation. In this paper, a novel dynamic vision-based finger system for slip detection and suppression is proposed. We also present a baseline and a feature-based approach to detect object slips under illumination and vibration uncertainty. A threshold method is devised to autonomously sample noise in real-time to improve slip detection. Moreover, a fuzzy-based suppression strategy using incipient slip feedback is proposed for regulating the grip force. We present a comprehensive experimental study of the proposed approaches under uncertainty and of the system for high-performance precision manipulation. We also propose a slip metric to evaluate such performance quantitatively. Results indicate that the system can effectively detect incipient slip events at a sampling rate of 2 kHz ($\Delta t = 500\mu s$) and suppress them before a gross slip occurs. The event-based approach holds promise for meeting the high-precision manipulation requirements of industrial manufacturing and household services.
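The stated sampling rate follows directly from the window length: one window every 500 µs gives 1 / (500 × 10⁻⁶ s) = 2000 Hz. The sketch below checks that arithmetic and illustrates one plausible way to derive a noise threshold from sampled event counts. The abstract does not describe the actual threshold rule, so the mean-plus-k-standard-deviations rule, the parameter `k`, and all function names here are assumptions rather than the authors' method.

```python
from statistics import mean, stdev

WINDOW_US = 500  # from the abstract: delta t = 500 microseconds


def sampling_rate_hz(window_us):
    """Windows per second for a given window length in microseconds."""
    return 1_000_000 / window_us


def noise_threshold(noise_counts, k=3.0):
    """Assumed rule: mean + k * sample standard deviation of the event
    counts observed in windows known to contain only noise."""
    return mean(noise_counts) + k * stdev(noise_counts)


def detect_slip(event_counts, threshold):
    """Indices of windows whose event count exceeds the noise threshold."""
    return [i for i, n in enumerate(event_counts) if n > threshold]


rate = sampling_rate_hz(WINDOW_US)
# Hypothetical per-window event counts, for illustration only.
threshold = noise_threshold([2, 3, 1, 2, 2])
slip_windows = detect_slip([2, 3, 9, 1, 12], threshold)
```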
