Yunhui Zheng

VELVET: a noVel Ensemble Learning approach to automatically locate VulnErable sTatements

Jan 13, 2022

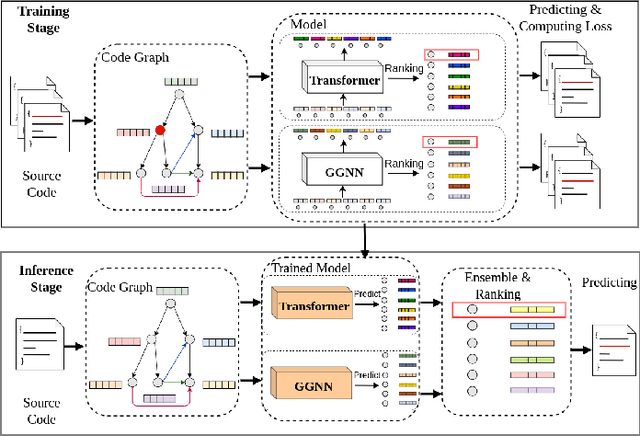

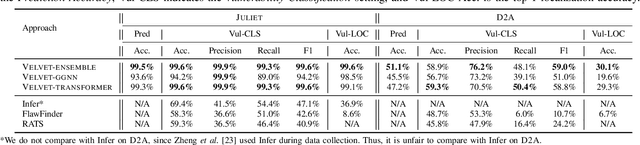

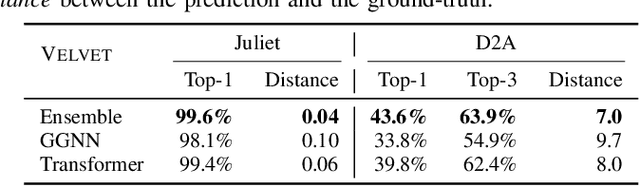

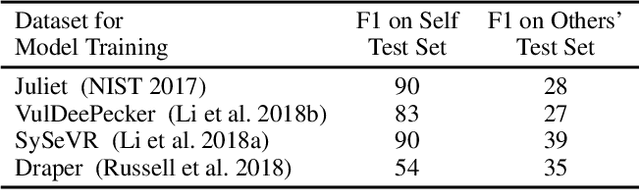

Abstract:Automatically locating vulnerable statements in source code is crucial to assure software security and alleviate developers' debugging efforts. This becomes even more important in today's software ecosystem, where vulnerable code can flow easily and unwittingly within and across software repositories like GitHub. Across such millions of lines of code, traditional static and dynamic approaches struggle to scale. Although existing machine-learning-based approaches look promising in such a setting, most work detects vulnerable code at a higher granularity -- at the method or file level. Thus, developers still need to inspect a significant amount of code to locate the vulnerable statement(s) that need to be fixed. This paper presents VELVET, a novel ensemble learning approach to locate vulnerable statements. Our model combines graph-based and sequence-based neural networks to successfully capture the local and global context of a program graph and effectively understand code semantics and vulnerable patterns. To study VELVET's effectiveness, we use an off-the-shelf synthetic dataset and a recently published real-world dataset. In the static analysis setting, where vulnerable functions are not detected in advance, VELVET achieves 4.5x better performance than the baseline static analyzers on the real-world data. For the isolated vulnerability localization task, where we assume the vulnerability of a function is known while the specific vulnerable statement is unknown, we compare VELVET with several neural networks that also attend to local and global context of code. VELVET achieves 99.6% and 43.6% top-1 accuracy over synthetic data and real-world data, respectively, outperforming the baseline deep-learning models by 5.3-29.0%.

Data-Driven and SE-assisted AI Model Signal-Awareness Enhancement and Introspection

Nov 10, 2021

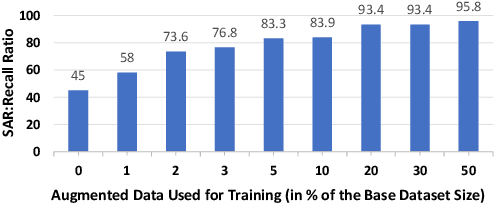

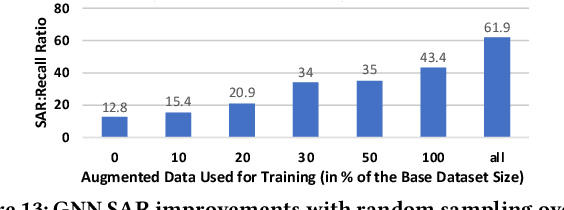

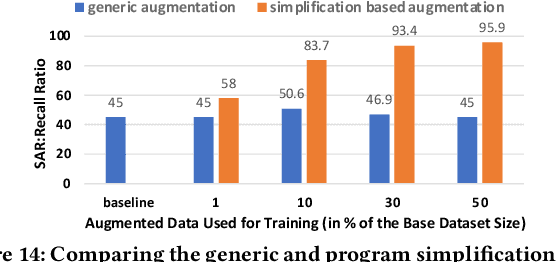

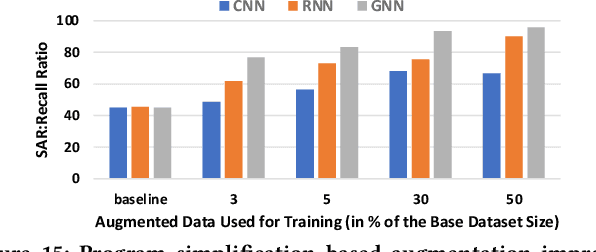

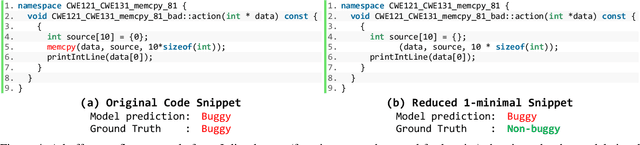

Abstract:AI modeling for source code understanding tasks has been making significant progress, and is being adopted in production development pipelines. However, reliability concerns, especially whether the models are actually learning task-related aspects of source code, are being raised. While recent model-probing approaches have observed a lack of signal awareness in many AI-for-code models, i.e. models not capturing task-relevant signals, they do not offer solutions to rectify this problem. In this paper, we explore data-driven approaches to enhance models' signal-awareness: 1) we combine the SE concept of code complexity with the AI technique of curriculum learning; 2) we incorporate SE assistance into AI models by customizing Delta Debugging to generate simplified signal-preserving programs, augmenting them to the training dataset. With our techniques, we achieve up to 4.8x improvement in model signal awareness. Using the notion of code complexity, we further present a novel model learning introspection approach from the perspective of the dataset.

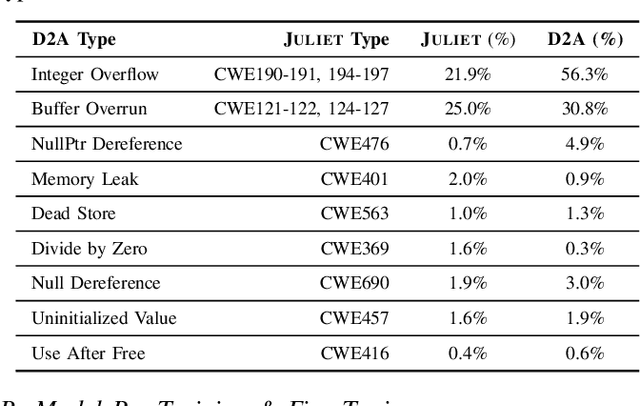

D2A: A Dataset Built for AI-Based Vulnerability Detection Methods Using Differential Analysis

Feb 16, 2021

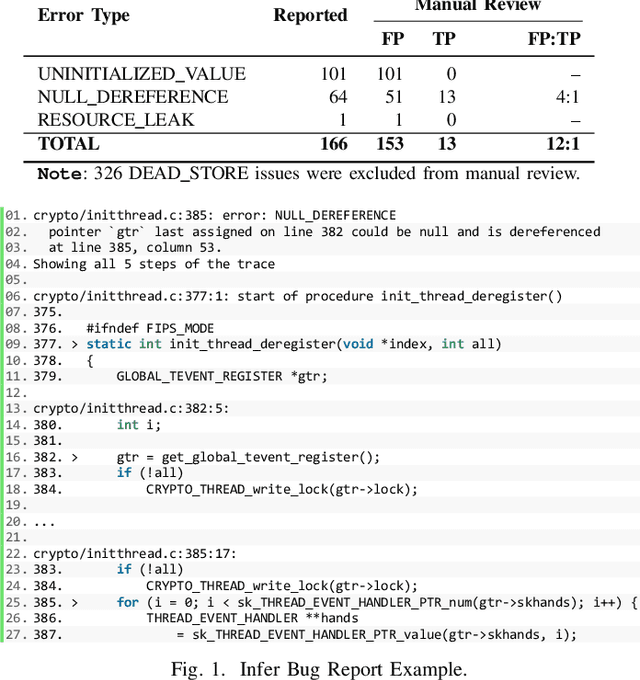

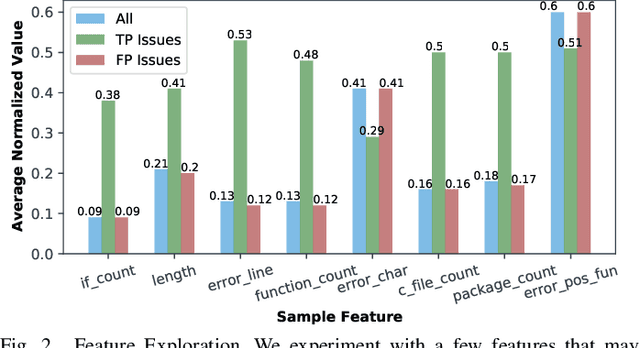

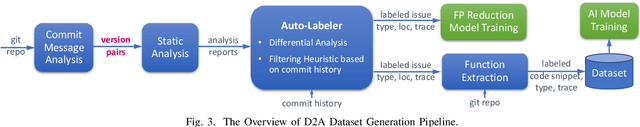

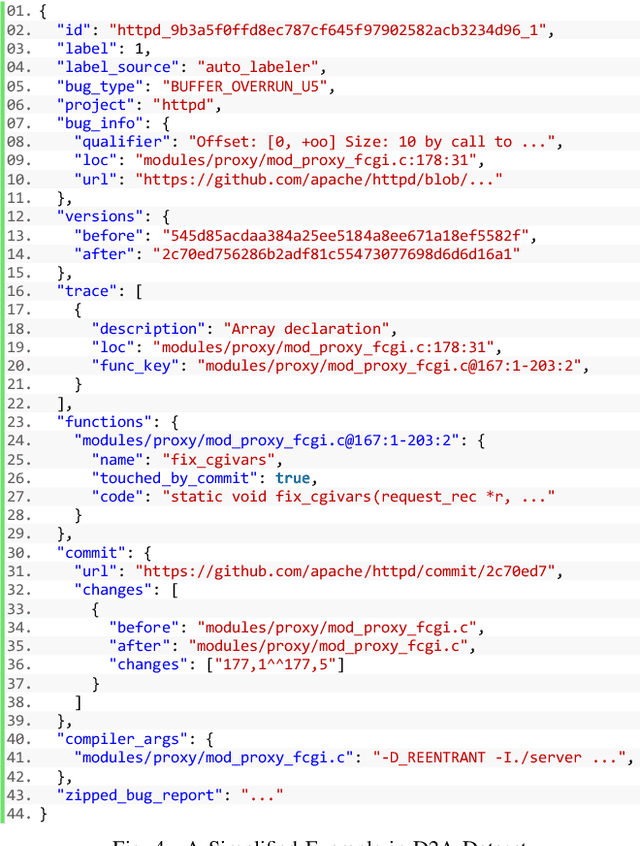

Abstract:Static analysis tools are widely used for vulnerability detection as they understand programs with complex behavior and millions of lines of code. Despite their popularity, static analysis tools are known to generate an excess of false positives. The recent ability of Machine Learning models to understand programming languages opens new possibilities when applied to static analysis. However, existing datasets to train models for vulnerability identification suffer from multiple limitations such as limited bug context, limited size, and synthetic and unrealistic source code. We propose D2A, a differential analysis based approach to label issues reported by static analysis tools. The D2A dataset is built by analyzing version pairs from multiple open source projects. From each project, we select bug fixing commits and we run static analysis on the versions before and after such commits. If some issues detected in a before-commit version disappear in the corresponding after-commit version, they are very likely to be real bugs that got fixed by the commit. We use D2A to generate a large labeled dataset to train models for vulnerability identification. We show that the dataset can be used to build a classifier to identify possible false alarms among the issues reported by static analysis, hence helping developers prioritize and investigate potential true positives first.

Probing Model Signal-Awareness via Prediction-Preserving Input Minimization

Nov 25, 2020

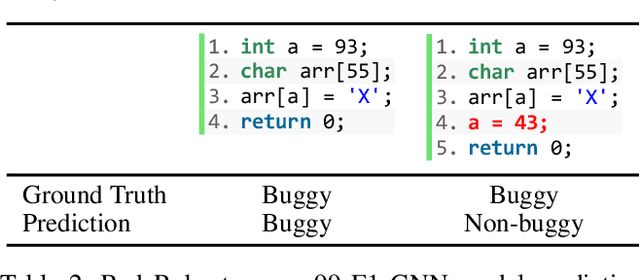

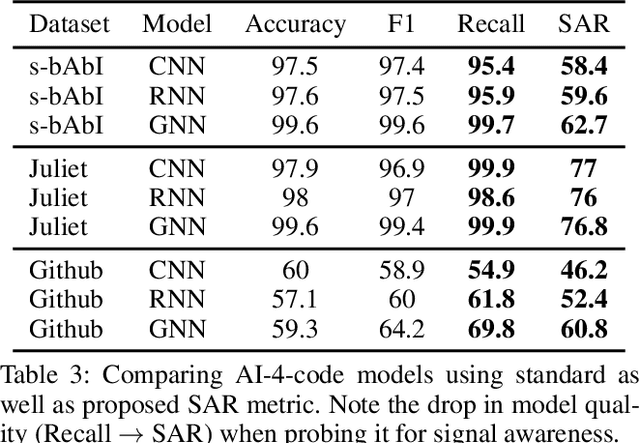

Abstract:This work explores the signal awareness of AI models for source code understanding. Using a software vulnerability detection use-case, we evaluate the models' ability to capture the correct vulnerability signals to produce their predictions. Our prediction-preserving input minimization (P2IM) approach systematically reduces the original source code to a minimal snippet which a model needs to maintain its prediction. The model's reliance on incorrect signals is then uncovered when a vulnerability in the original code is missing in the minimal snippet, both of which the model however predicts as being vulnerable. We apply P2IM on three state-of-the-art neural network models across multiple datasets, and measure their signal awareness using a new metric we propose- Signal-aware Recall (SAR). The results show a sharp drop in the model's Recall from the high 90s to sub-60s with the new metric, highlighting that the models are presumably picking up a lot of noise or dataset nuances while learning their vulnerability detection logic.

Exploring Software Naturalness through Neural Language Models

Jun 24, 2020

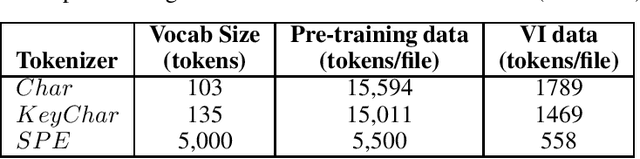

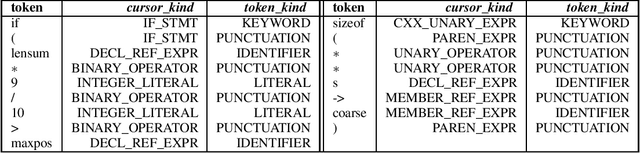

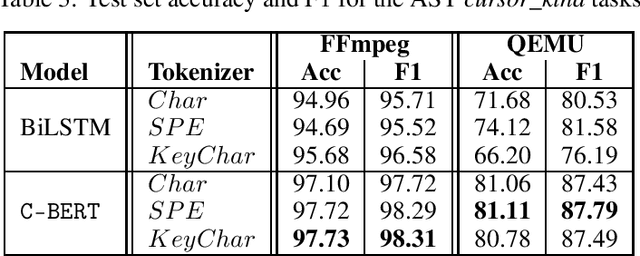

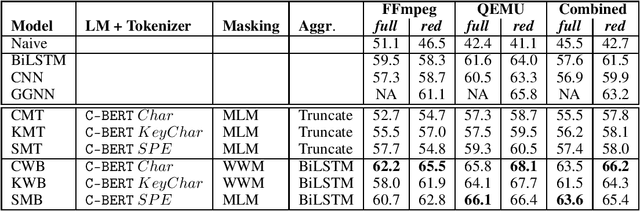

Abstract:The Software Naturalness hypothesis argues that programming languages can be understood through the same techniques used in natural language processing. We explore this hypothesis through the use of a pre-trained transformer-based language model to perform code analysis tasks. Present approaches to code analysis depend heavily on features derived from the Abstract Syntax Tree (AST) while our transformer-based language models work on raw source code. This work is the first to investigate whether such language models can discover AST features automatically. To achieve this, we introduce a sequence labeling task that directly probes the language models understanding of AST. Our results show that transformer based language models achieve high accuracy in the AST tagging task. Furthermore, we evaluate our model on a software vulnerability identification task. Importantly, we show that our approach obtains vulnerability identification results comparable to graph based approaches that rely heavily on compilers for feature extraction.

Learning to map source code to software vulnerability using code-as-a-graph

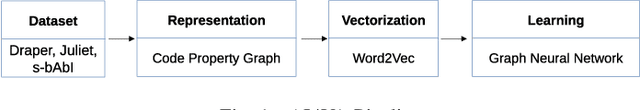

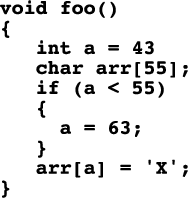

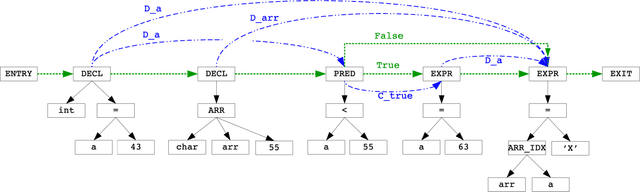

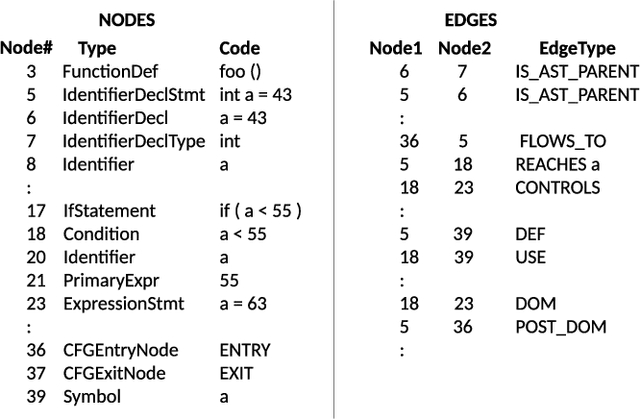

Jun 15, 2020

Abstract:We explore the applicability of Graph Neural Networks in learning the nuances of source code from a security perspective. Specifically, whether signatures of vulnerabilities in source code can be learned from its graph representation, in terms of relationships between nodes and edges. We create a pipeline we call AI4VA, which first encodes a sample source code into a Code Property Graph. The extracted graph is then vectorized in a manner which preserves its semantic information. A Gated Graph Neural Network is then trained using several such graphs to automatically extract templates differentiating the graph of a vulnerable sample from a healthy one. Our model outperforms static analyzers, classic machine learning, as well as CNN and RNN-based deep learning models on two of the three datasets we experiment with. We thus show that a code-as-graph encoding is more meaningful for vulnerability detection than existing code-as-photo and linear sequence encoding approaches. (Submitted Oct 2019, Paper #28, ICST)

GaDei: On Scale-up Training As A Service For Deep Learning

Oct 03, 2017

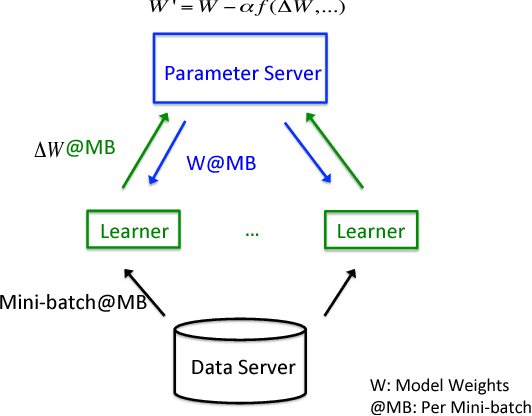

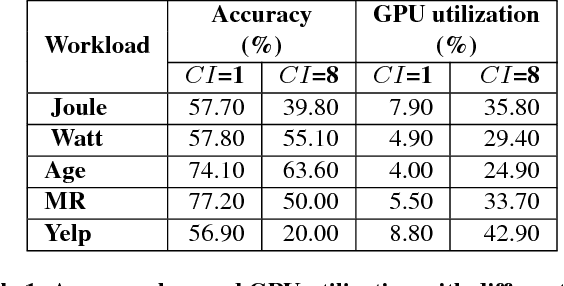

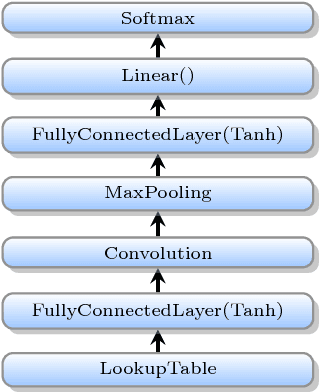

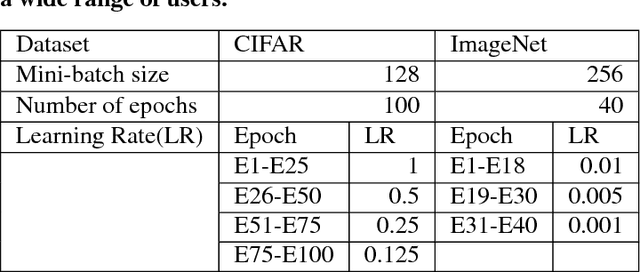

Abstract:Deep learning (DL) training-as-a-service (TaaS) is an important emerging industrial workload. The unique challenge of TaaS is that it must satisfy a wide range of customers who have no experience and resources to tune DL hyper-parameters, and meticulous tuning for each user's dataset is prohibitively expensive. Therefore, TaaS hyper-parameters must be fixed with values that are applicable to all users. IBM Watson Natural Language Classifier (NLC) service, the most popular IBM cognitive service used by thousands of enterprise-level clients around the globe, is a typical TaaS service. By evaluating the NLC workloads, we show that only the conservative hyper-parameter setup (e.g., small mini-batch size and small learning rate) can guarantee acceptable model accuracy for a wide range of customers. We further justify theoretically why such a setup guarantees better model convergence in general. Unfortunately, the small mini-batch size causes a high volume of communication traffic in a parameter-server based system. We characterize the high communication bandwidth requirement of TaaS using representative industrial deep learning workloads and demonstrate that none of the state-of-the-art scale-up or scale-out solutions can satisfy such a requirement. We then present GaDei, an optimized shared-memory based scale-up parameter server design. We prove that the designed protocol is deadlock-free and it processes each gradient exactly once. Our implementation is evaluated on both commercial benchmarks and public benchmarks to demonstrate that it significantly outperforms the state-of-the-art parameter-server based implementation while maintaining the required accuracy and our implementation reaches near the best possible runtime performance, constrained only by the hardware limitation. Furthermore, to the best of our knowledge, GaDei is the only scale-up DL system that provides fault-tolerance.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge