Wenhuan Lu

Whisper-MLA: Reducing GPU Memory Consumption of ASR Models based on MHA2MLA Conversion

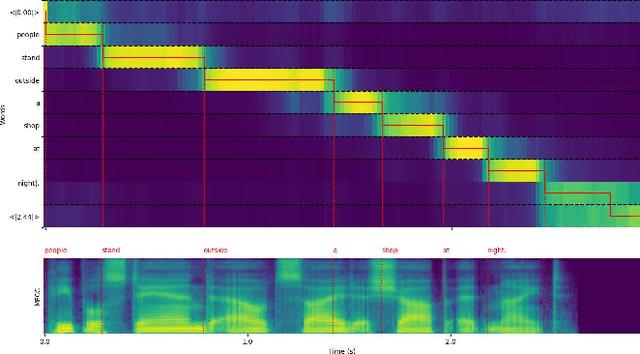

Feb 28, 2026Abstract:The Transformer-based Whisper model has achieved state-of-the-art performance in Automatic Speech Recognition (ASR). However, its Multi-Head Attention (MHA) mechanism results in significant GPU memory consumption due to the linearly growing Key-Value (KV) cache usage, which is problematic for many applications especially with long-form audio. To address this, we introduce Whisper-MLA, a novel architecture that incorporates Multi-Head Latent Attention (MLA) into the Whisper model. Specifically, we adapt MLA for Whisper's absolute positional embeddings and systematically investigate its application across encoder self-attention, decoder self-attention, and cross-attention modules. Empirical results indicate that applying MLA exclusively to decoder self-attention yields the desired balance between performance and memory efficiency. Our proposed approach allows conversion of a pretrained Whisper model to Whisper-MLA with minimal fine-tuning. Extensive experiments on the LibriSpeech benchmark validate the effectiveness of this conversion, demonstrating that Whisper-MLA reduces the KV cache size by up to 87.5% while maintaining competitive accuracy.

Listening for "You": Enhancing Speech Image Retrieval via Target Speaker Extraction

Sep 11, 2025Abstract:Image retrieval using spoken language cues has emerged as a promising direction in multimodal perception, yet leveraging speech in multi-speaker scenarios remains challenging. We propose a novel Target Speaker Speech-Image Retrieval task and a framework that learns the relationship between images and multi-speaker speech signals in the presence of a target speaker. Our method integrates pre-trained self-supervised audio encoders with vision models via target speaker-aware contrastive learning, conditioned on a Target Speaker Extraction and Retrieval module. This enables the system to extract spoken commands from the target speaker and align them with corresponding images. Experiments on SpokenCOCO2Mix and SpokenCOCO3Mix show that TSRE significantly outperforms existing methods, achieving 36.3% and 29.9% Recall@1 in 2 and 3 speaker scenarios, respectively - substantial improvements over single speaker baselines and state-of-the-art models. Our approach demonstrates potential for real-world deployment in assistive robotics and multimodal interaction systems.

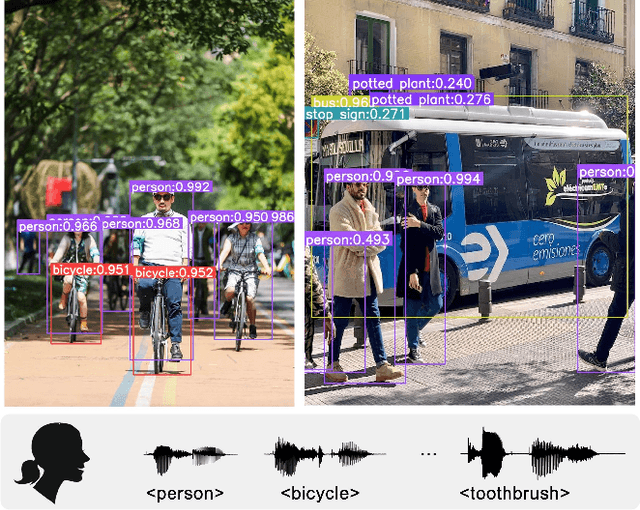

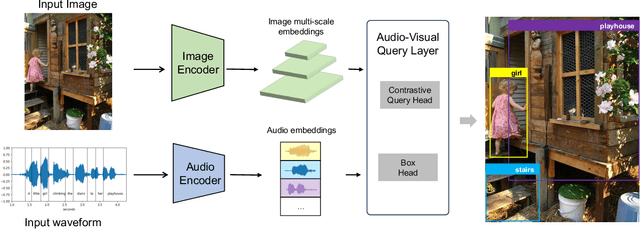

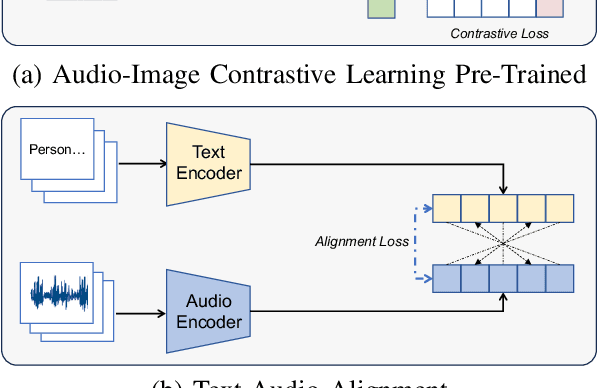

You Only Speak Once to See

Sep 27, 2024

Abstract:Grounding objects in images using visual cues is a well-established approach in computer vision, yet the potential of audio as a modality for object recognition and grounding remains underexplored. We introduce YOSS, "You Only Speak Once to See," to leverage audio for grounding objects in visual scenes, termed Audio Grounding. By integrating pre-trained audio models with visual models using contrastive learning and multi-modal alignment, our approach captures speech commands or descriptions and maps them directly to corresponding objects within images. Experimental results indicate that audio guidance can be effectively applied to object grounding, suggesting that incorporating audio guidance may enhance the precision and robustness of current object grounding methods and improve the performance of robotic systems and computer vision applications. This finding opens new possibilities for advanced object recognition, scene understanding, and the development of more intuitive and capable robotic systems.

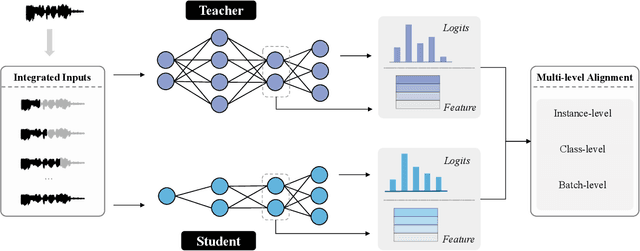

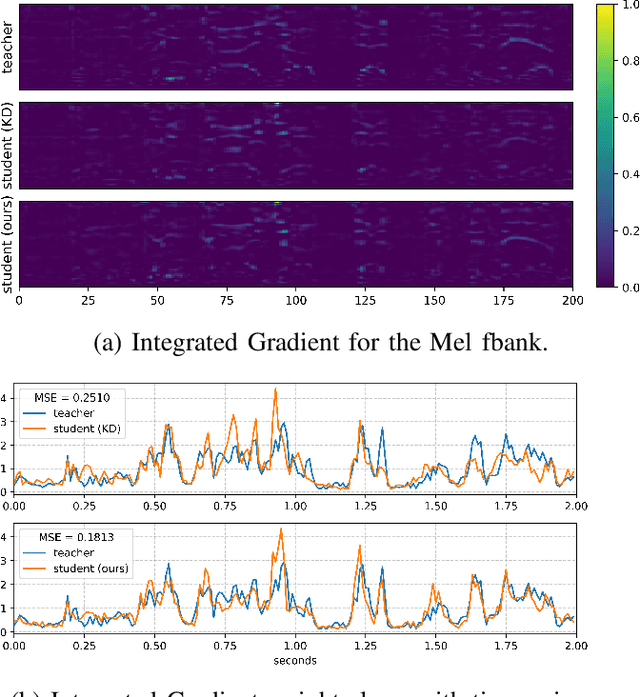

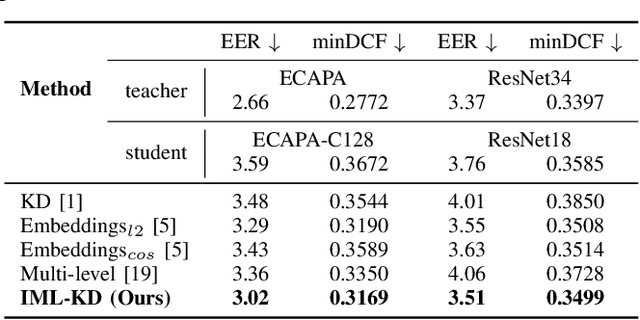

Integrated Multi-Level Knowledge Distillation for Enhanced Speaker Verification

Sep 14, 2024

Abstract:Knowledge distillation (KD) is widely used in audio tasks, such as speaker verification (SV), by transferring knowledge from a well-trained large model (the teacher) to a smaller, more compact model (the student) for efficiency and portability. Existing KD methods for SV often mirror those used in image processing, focusing on approximating predicted probabilities and hidden representations. However, these methods fail to account for the multi-level temporal properties of speech audio. In this paper, we propose a novel KD method, i.e., Integrated Multi-level Knowledge Distillation (IML-KD), to transfer knowledge of various temporal-scale features of speech from a teacher model to a student model. In the IML-KD, temporal context information from the teacher model is integrated into novel Integrated Gradient-based input-sensitive representations from speech segments with various durations, and the student model is trained to infer these representations with multi-level alignment for the output. We conduct SV experiments on the VoxCeleb1 dataset to evaluate the proposed method. Experimental results demonstrate that IML-KD significantly enhances KD performance, reducing the Equal Error Rate (EER) by 5%.

Channel Adaptation for Speaker Verification Using Optimal Transport with Pseudo Label

Sep 14, 2024

Abstract:Domain gap often degrades the performance of speaker verification (SV) systems when the statistical distributions of training data and real-world test speech are mismatched. Channel variation, a primary factor causing this gap, is less addressed than other issues (e.g., noise). Although various domain adaptation algorithms could be applied to handle this domain gap problem, most algorithms could not take the complex distribution structure in domain alignment with discriminative learning. In this paper, we propose a novel unsupervised domain adaptation method, i.e., Joint Partial Optimal Transport with Pseudo Label (JPOT-PL), to alleviate the channel mismatch problem. Leveraging the geometric-aware distance metric of optimal transport in distribution alignment, we further design a pseudo label-based discriminative learning where the pseudo label can be regarded as a new type of soft speaker label derived from the optimal coupling. With the JPOT-PL, we carry out experiments on the SV channel adaptation task with VoxCeleb as the basis corpus. Experiments show our method reduces EER by over 10% compared with several state-of-the-art channel adaptation algorithms.

Robust Channel Learning for Large-Scale Radio Speaker Verification

Jun 16, 2024Abstract:Recent research in speaker verification has increasingly focused on achieving robust and reliable recognition under challenging channel conditions and noisy environments. Identifying speakers in radio communications is particularly difficult due to inherent limitations such as constrained bandwidth and pervasive noise interference. To address this issue, we present a Channel Robust Speaker Learning (CRSL) framework that enhances the robustness of the current speaker verification pipeline, considering data source, data augmentation, and the efficiency of model transfer processes. Our framework introduces an augmentation module that mitigates bandwidth variations in radio speech datasets by manipulating the bandwidth of training inputs. It also addresses unknown noise by introducing noise within the manifold space. Additionally, we propose an efficient fine-tuning method that reduces the need for extensive additional training time and large amounts of data. Moreover, we develop a toolkit for assembling a large-scale radio speech corpus and establish a benchmark specifically tailored for radio scenario speaker verification studies. Experimental results demonstrate that our proposed methodology effectively enhances performance and mitigates degradation caused by radio transmission in speaker verification tasks. The code will be available on Github.

Weakly-Supervised Video Anomaly Detection with Snippet Anomalous Attention

Sep 28, 2023

Abstract:With a focus on abnormal events contained within untrimmed videos, there is increasing interest among researchers in video anomaly detection. Among different video anomaly detection scenarios, weakly-supervised video anomaly detection poses a significant challenge as it lacks frame-wise labels during the training stage, only relying on video-level labels as coarse supervision. Previous methods have made attempts to either learn discriminative features in an end-to-end manner or employ a twostage self-training strategy to generate snippet-level pseudo labels. However, both approaches have certain limitations. The former tends to overlook informative features at the snippet level, while the latter can be susceptible to noises. In this paper, we propose an Anomalous Attention mechanism for weakly-supervised anomaly detection to tackle the aforementioned problems. Our approach takes into account snippet-level encoded features without the supervision of pseudo labels. Specifically, our approach first generates snippet-level anomalous attention and then feeds it together with original anomaly scores into a Multi-branch Supervision Module. The module learns different areas of the video, including areas that are challenging to detect, and also assists the attention optimization. Experiments on benchmark datasets XDViolence and UCF-Crime verify the effectiveness of our method. Besides, thanks to the proposed snippet-level attention, we obtain a more precise anomaly localization.

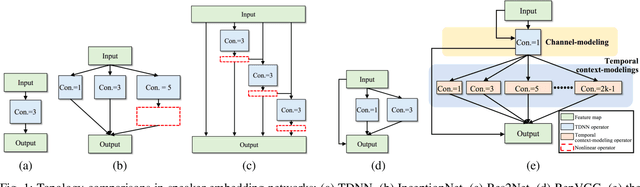

TMS: A Temporal Multi-scale Backbone Design for Speaker Embedding

Mar 17, 2022

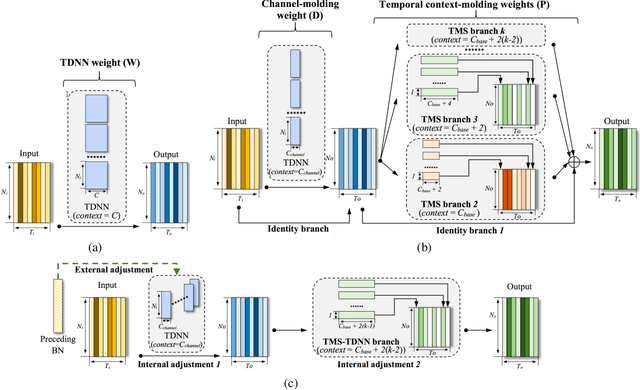

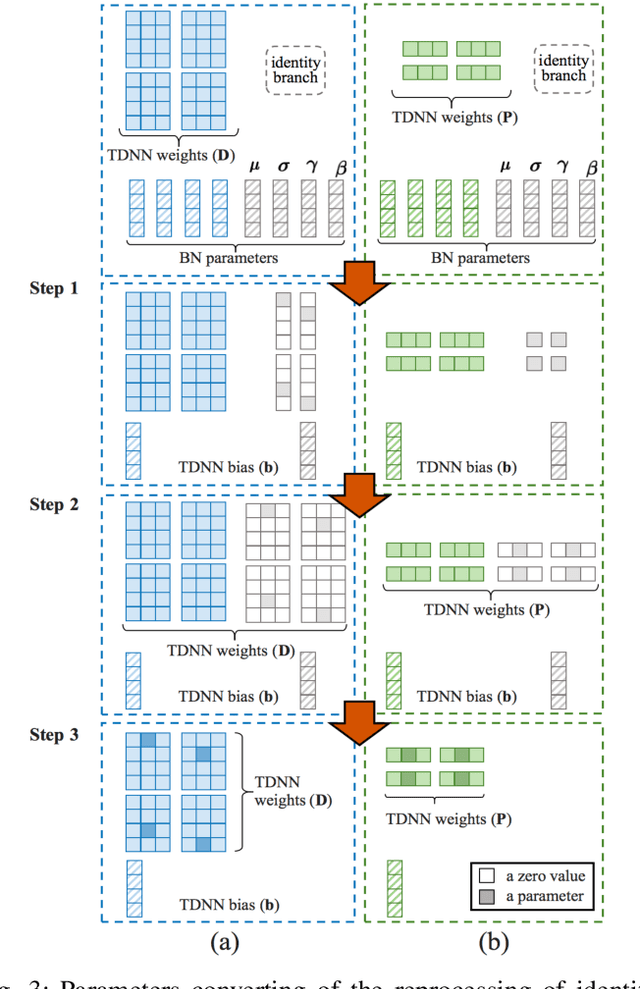

Abstract:Speaker embedding is an important front-end module to explore discriminative speaker features for many speech applications where speaker information is needed. Current SOTA backbone networks for speaker embedding are designed to aggregate multi-scale features from an utterance with multi-branch network architectures for speaker representation. However, naively adding many branches of multi-scale features with the simple fully convolutional operation could not efficiently improve the performance due to the rapid increase of model parameters and computational complexity. Therefore, in the most current state-of-the-art network architectures, only a few branches corresponding to a limited number of temporal scales could be designed for speaker embeddings. To address this problem, in this paper, we propose an effective temporal multi-scale (TMS) model where multi-scale branches could be efficiently designed in a speaker embedding network almost without increasing computational costs. The new model is based on the conventional TDNN, where the network architecture is smartly separated into two modeling operators: a channel-modeling operator and a temporal multi-branch modeling operator. Adding temporal multi-scale in the temporal multi-branch operator needs only a little bit increase of the number of parameters, and thus save more computational budget for adding more branches with large temporal scales. Moreover, in the inference stage, we further developed a systemic re-parameterization method to convert the TMS-based model into a single-path-based topology in order to increase inference speed. We investigated the performance of the new TMS method for automatic speaker verification (ASV) on in-domain and out-of-domain conditions. Results show that the TMS-based model obtained a significant increase in the performance over the SOTA ASV models, meanwhile, had a faster inference speed.

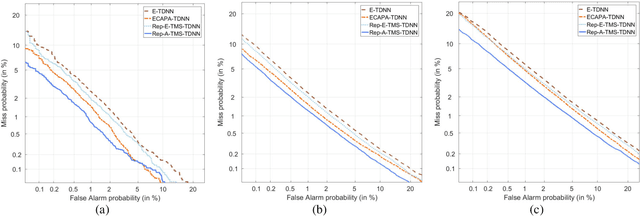

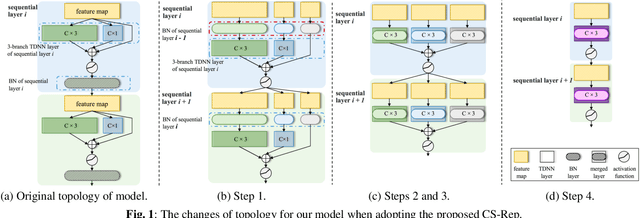

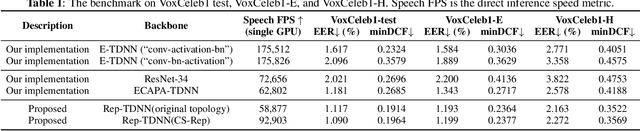

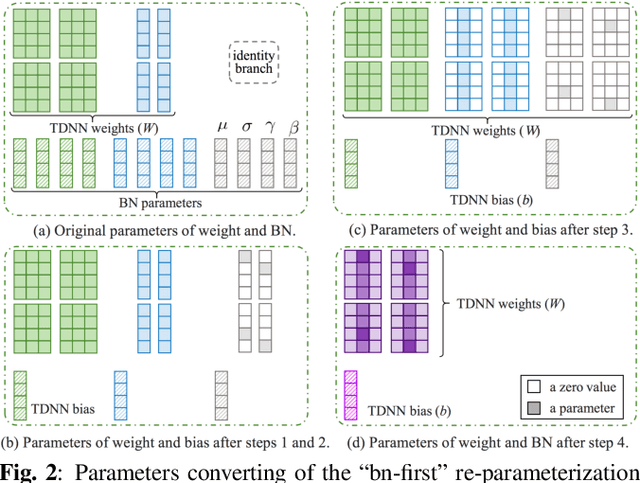

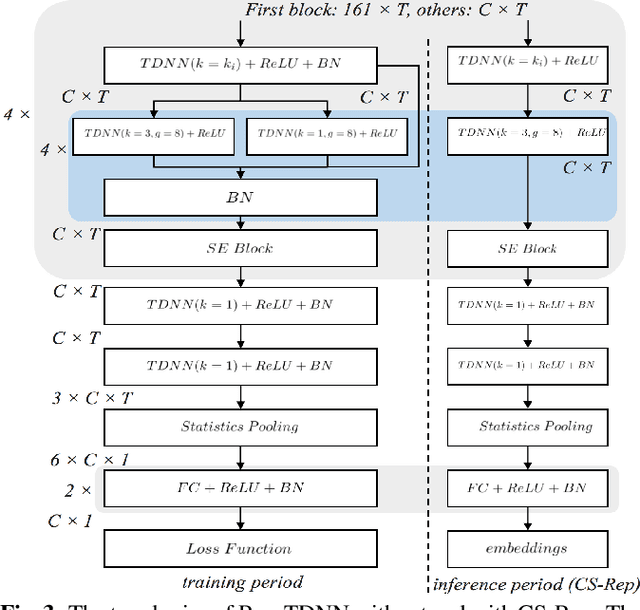

CS-Rep: Making Speaker Verification Networks Embracing Re-parameterization

Oct 26, 2021

Abstract:Automatic speaker verification (ASV) systems, which determine whether two speeches are from the same speaker, mainly focus on verification accuracy while ignoring inference speed. However, in real applications, both inference speed and verification accuracy are essential. This study proposes cross-sequential re-parameterization (CS-Rep), a novel topology re-parameterization strategy for multi-type networks, to increase the inference speed and verification accuracy of models. CS-Rep solves the problem that existing re-parameterization methods are unsuitable for typical ASV backbones. When a model applies CS-Rep, the training-period network utilizes a multi-branch topology to capture speaker information, whereas the inference-period model converts to a time-delay neural network (TDNN)-like plain backbone with stacked TDNN layers to achieve the fast inference speed. Based on CS-Rep, an improved TDNN with friendly test and deployment called Rep-TDNN is proposed. Compared with the state-of-the-art model ECAPA-TDNN, which is highly recognized in the industry, Rep-TDNN increases the actual inference speed by about 50% and reduces the EER by 10%. The code will be released.

Scene Learning: Deep Convolutional Networks For Wind Power Prediction by Embedding Turbines into Grid Space

Jul 18, 2018

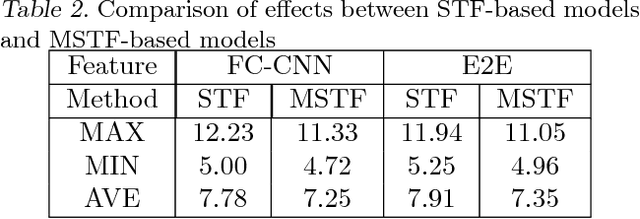

Abstract:Wind power prediction is of vital importance in wind power utilization. There have been a lot of researches based on the time series of the wind power or speed, but In fact, these time series cannot express the temporal and spatial changes of wind, which fundamentally hinders the advance of wind power prediction. In this paper, a new kind of feature that can describe the process of temporal and spatial variation is proposed, namely, Spatio-Temporal Features. We first map the data collected at each moment from the wind turbine to the plane to form the state map, namely, the scene, according to the relative positions. The scene time series over a period of time is a multi-channel image, i.e. the Spatio-Temporal Features. Based on the Spatio-Temporal Features, the deep convolutional network is applied to predict the wind power, achieving a far better accuracy than the existing methods. Compared with the starge-of-the-art method, the mean-square error (MSE) in our method is reduced by 49.83%, and the average time cost for training models can be shortened by a factor of more than 150.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge