Sunggoo Jung

Staircase Localization for Autonomous Exploration in Urban Environments

Mar 26, 2024

Abstract: A staircase localization method is proposed for robots to explore urban environments autonomously. The proposed method employs a modular design in the form of a cascade pipeline consisting of three modules: stair detection, line segment detection, and stair localization. The stair detection module utilizes an object detection algorithm based on deep learning to generate a region of interest (ROI). From the ROI, line segment features are extracted using a deep line segment detection algorithm. The extracted line segments are used to localize a staircase in terms of position, orientation, and stair direction. Stair detection and localization are performed with only a single RGB-D camera. No component of the proposed pipeline needs to be designed specifically for staircases, which makes it easy to maintain the whole pipeline and to replace each component with state-of-the-art deep learning detection techniques. The results of real-world experiments show that the proposed method can perform accurate stair detection and localization during autonomous exploration for a variety of structured and unstructured staircases, both ascending and descending, with shadows, dirt, and occlusions by artificial and natural objects.
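The cascade structure described in this abstract can be illustrated with a minimal sketch. Everything below is a hypothetical illustration, not the authors' implementation: the names `CascadePipeline`, `StairPose`, and the three stub stages are invented here, the stubs return fixed values instead of running deep networks, and the localizer only averages segment midpoints in the image rather than estimating position, orientation, and stair direction from RGB-D data. The point is only that each stage is a plain callable that can be swapped independently.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple

# Hypothetical types for illustration; none of these names come from the paper.
ROI = Tuple[int, int, int, int]              # (x_min, y_min, x_max, y_max) in pixels
Segment = Tuple[float, float, float, float]  # (x1, y1, x2, y2) in pixels


@dataclass
class StairPose:
    center: Tuple[float, float]  # mean midpoint of the segments, in pixels
    num_edges: int               # number of line segments used


@dataclass
class CascadePipeline:
    """Three replaceable stages run in sequence; any stage may end the cascade."""
    detect: Callable[[object], Optional[ROI]]
    extract_lines: Callable[[object, ROI], List[Segment]]
    localize: Callable[[List[Segment]], Optional[StairPose]]

    def run(self, image: object) -> Optional[StairPose]:
        roi = self.detect(image)
        if roi is None:          # no staircase detected in this frame
            return None
        segments = self.extract_lines(image, roi)
        if not segments:         # ROI found, but no usable line features
            return None
        return self.localize(segments)


def stub_detector(image: object) -> Optional[ROI]:
    # Stand-in for a learned object detector: always returns one fixed ROI.
    return (10, 20, 110, 120)


def stub_line_detector(image: object, roi: ROI) -> List[Segment]:
    # Stand-in for a learned line segment detector: three horizontal edges.
    x_min, _, x_max, _ = roi
    return [(float(x_min), y, float(x_max), y) for y in (40.0, 60.0, 80.0)]


def centroid_localizer(segments: List[Segment]) -> Optional[StairPose]:
    # Simplified localization: average the midpoints of all segments.
    xs = [(s[0] + s[2]) / 2 for s in segments]
    ys = [(s[1] + s[3]) / 2 for s in segments]
    return StairPose(center=(sum(xs) / len(xs), sum(ys) / len(ys)),
                     num_edges=len(segments))


pipeline = CascadePipeline(stub_detector, stub_line_detector, centroid_localizer)
result = pipeline.run(image=None)  # the stubs ignore the image argument
print(result)
```

Replacing `stub_detector` with a different detector requires no change to the other two stages, which is the maintainability property the abstract emphasizes.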

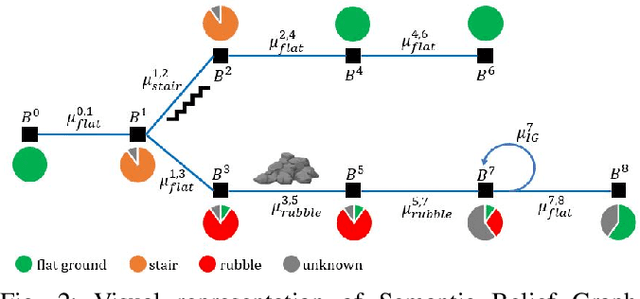

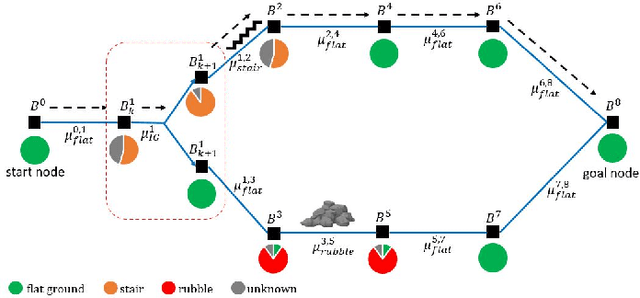

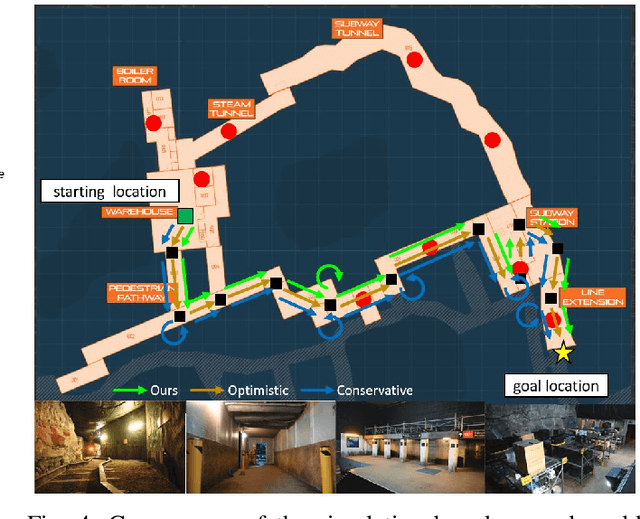

Safe and Efficient Navigation in Extreme Environments using Semantic Belief Graphs

Apr 02, 2023

Abstract: To achieve autonomy in unknown and unstructured environments, we propose a method for semantic-based planning under perceptual uncertainty. This capability is crucial for safe and efficient robot navigation in environments with mobility-stressing elements that require terrain-specific locomotion policies. We propose the Semantic Belief Graph (SBG), a geometric- and semantic-based representation of a robot's probabilistic roadmap in the environment. The SBG nodes comprise the robot's geometric state and semantic knowledge of the terrains in the environment. The SBG edges represent local semantic-based controllers that drive the robot between the nodes or invoke an information-gathering action to reduce semantic belief uncertainty. We formulate a semantic-based planning problem on the SBG that produces a policy for the robot to safely navigate to the target location with minimal traversal time. We analyze our method in simulation and present real-world results with a legged robotic platform navigating multi-level outdoor environments.
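To make the graph structure in this abstract concrete, here is a heavily simplified sketch. It is an assumption-laden illustration rather than the paper's formulation: the names `Node`, `Edge`, `expected_time`, and `plan` are hypothetical, the semantic belief is a plain probability table over terrain classes, information-gathering actions and belief updates are omitted, and planning is reduced to Dijkstra's algorithm over expected traversal time instead of computing a policy.

```python
import heapq
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class Node:
    position: Tuple[float, float, float]  # geometric state (x, y, z)
    terrain_belief: Dict[str, float]      # probability of each terrain class


@dataclass
class Edge:
    target: str
    # Traversal time in seconds, conditioned on the terrain class at the target.
    time_by_terrain: Dict[str, float]


def expected_time(edge: Edge, nodes: Dict[str, Node]) -> float:
    """Expected traversal time under the target node's terrain belief."""
    belief = nodes[edge.target].terrain_belief
    return sum(p * edge.time_by_terrain[terrain] for terrain, p in belief.items())


def plan(nodes: Dict[str, Node], edges: Dict[str, List[Edge]],
         start: str, goal: str) -> Tuple[float, List[str]]:
    """Dijkstra search minimizing the summed expected traversal time."""
    best = {start: 0.0}
    parent: Dict[str, str] = {}
    queue = [(0.0, start)]
    while queue:
        cost, name = heapq.heappop(queue)
        if name == goal:
            path = [goal]
            while path[-1] != start:
                path.append(parent[path[-1]])
            return cost, path[::-1]
        if cost > best.get(name, float("inf")):
            continue  # stale queue entry
        for edge in edges.get(name, []):
            new_cost = cost + expected_time(edge, nodes)
            if new_cost < best.get(edge.target, float("inf")):
                best[edge.target] = new_cost
                parent[edge.target] = name
                heapq.heappush(queue, (new_cost, edge.target))
    return float("inf"), []


# Toy roadmap: the short route passes through B, which may turn out to be stairs.
nodes = {
    "A": Node((0.0, 0.0, 0.0), {"flat": 1.0}),
    "B": Node((1.0, 0.0, 0.0), {"flat": 0.5, "stairs": 0.5}),
    "C": Node((1.0, 2.0, 0.0), {"flat": 1.0}),
    "G": Node((2.0, 0.0, 0.0), {"flat": 1.0}),
}
edges = {
    "A": [Edge("B", {"flat": 2.0, "stairs": 10.0}), Edge("C", {"flat": 4.0})],
    "B": [Edge("G", {"flat": 1.0})],
    "C": [Edge("G", {"flat": 2.0})],
}
cost, path = plan(nodes, edges, "A", "G")
print(cost, path)
```

In this toy roadmap the detour through `C` wins because the uncertain terrain at `B` raises the expected time of the shorter route. An information-gathering edge, as described in the abstract, would instead sharpen the belief at `B` before committing to a route.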

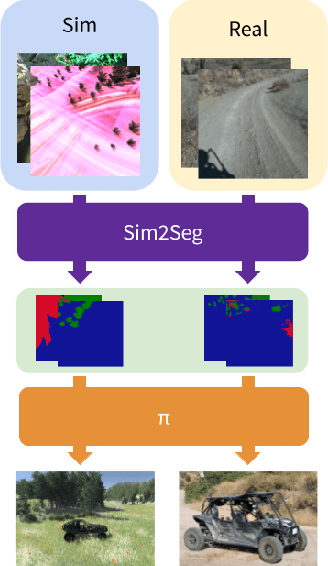

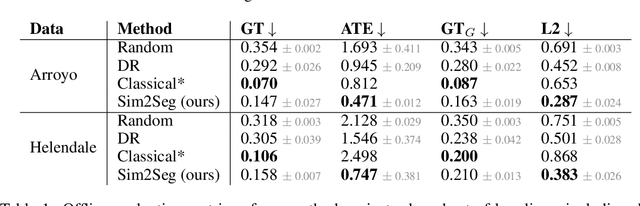

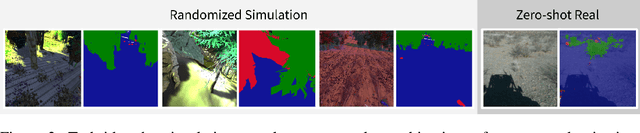

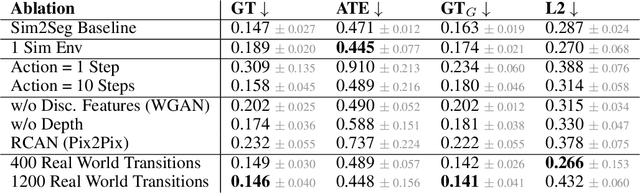

Sim-to-Real via Sim-to-Seg: End-to-end Off-road Autonomous Driving Without Real Data

Oct 25, 2022

Abstract: Autonomous driving is complex, requiring sophisticated 3D scene understanding, localization, mapping, and control. Rather than explicitly modelling and fusing each of these components, we instead consider an end-to-end approach via reinforcement learning (RL). However, collecting exploration driving data in the real world is impractical and dangerous. While training in simulation and deploying visual sim-to-real techniques has worked well for robot manipulation, deploying beyond controlled workspace viewpoints remains a challenge. In this paper, we address this challenge by presenting Sim2Seg, a re-imagining of RCAN that crosses the visual reality gap for off-road autonomous driving, without using any real-world data. This is done by learning to translate randomized simulation images into simulated segmentation and depth maps, subsequently enabling real-world images to also be translated. This allows us to train an end-to-end RL policy in simulation and deploy it directly in the real world. Our approach, which can be trained in 48 hours on 1 GPU, can perform as well as a classical perception and control stack that took thousands of engineering hours over several months to build. We hope this work motivates future end-to-end autonomous driving research.

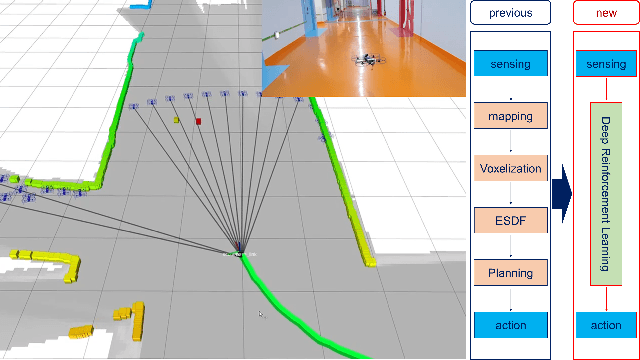

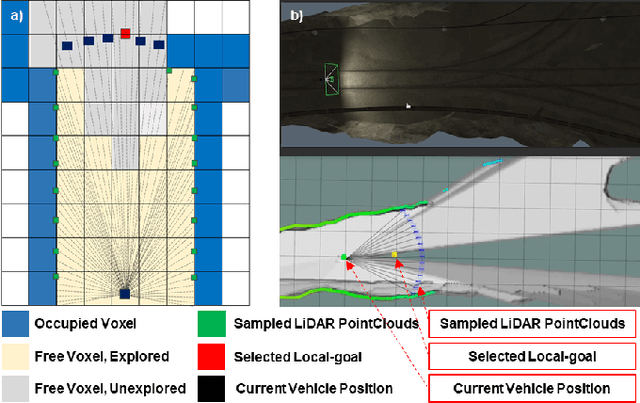

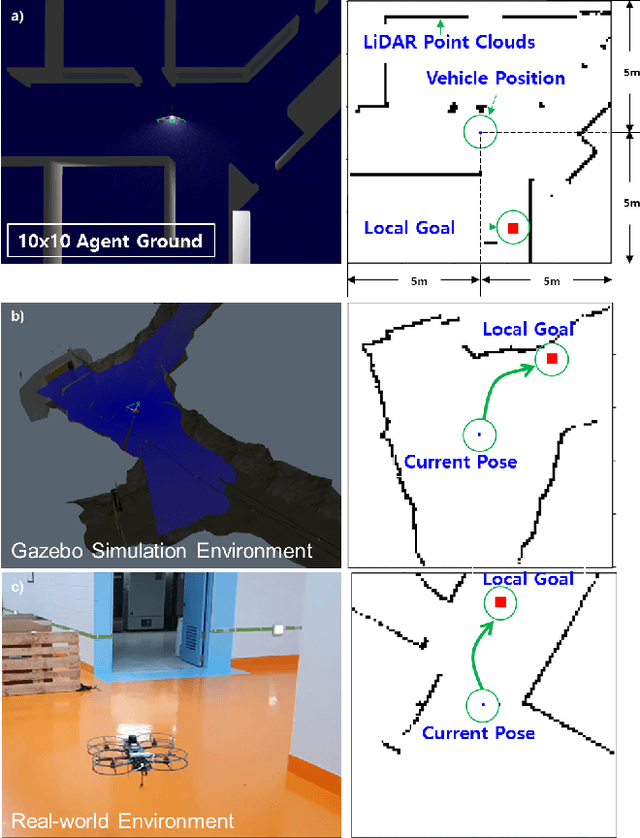

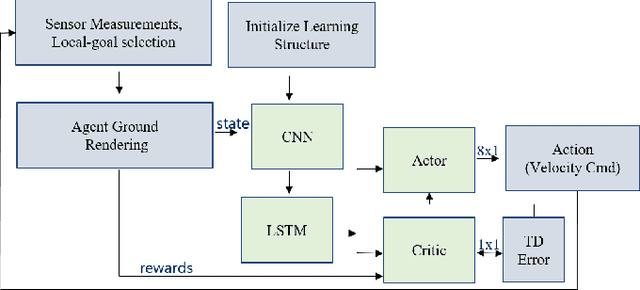

Mapless Navigation: Learning UAVs Motion for Exploration of Unknown Environments

Oct 04, 2021

Abstract: This study presents a new methodology for learning-based motion planning for autonomous exploration using aerial robots. Through reinforcement learning, that is, learning by trial and error, an action policy is derived that can guide autonomous exploration of underground and tunnel environments. A new Markov decision process state is designed to learn the robot's action policy using simulation only, and the results are applied to the real-world environment without further learning. The method reduces the need for the precise map required by grid-based path planners and achieves map-less navigation. The proposed method plans a path at a lower computing cost than a grid-based planner while achieving similar performance. The trained action policy is broadly evaluated in both simulation and field trials related to autonomous exploration of underground mines or indoor spaces.

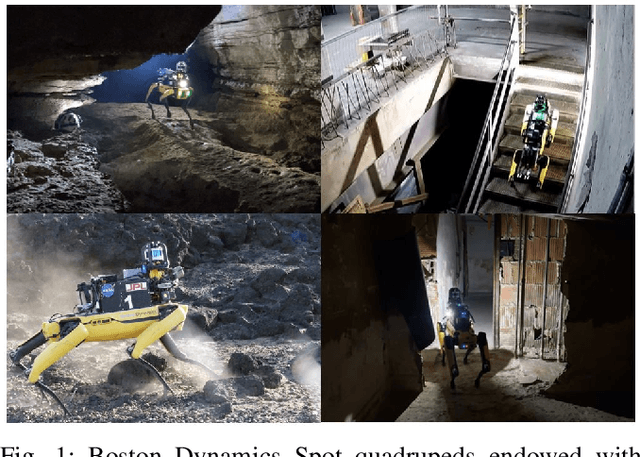

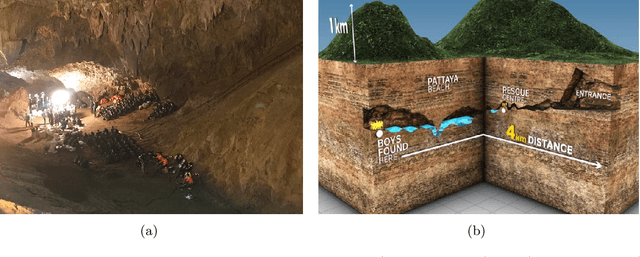

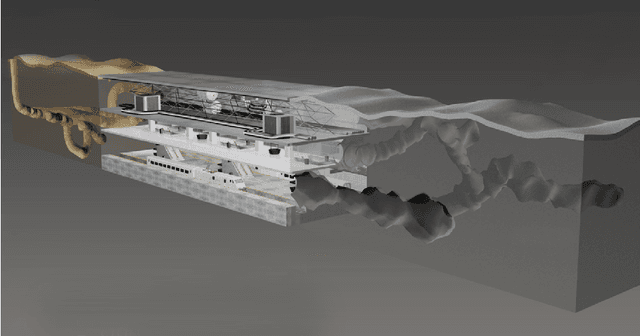

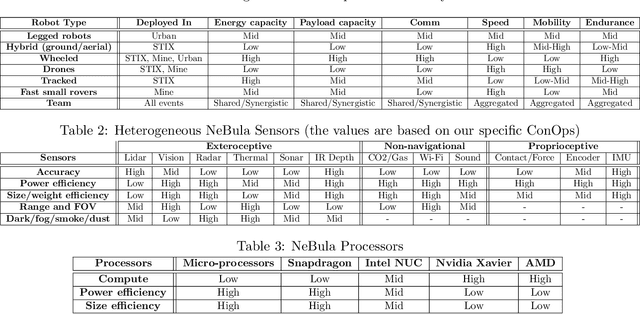

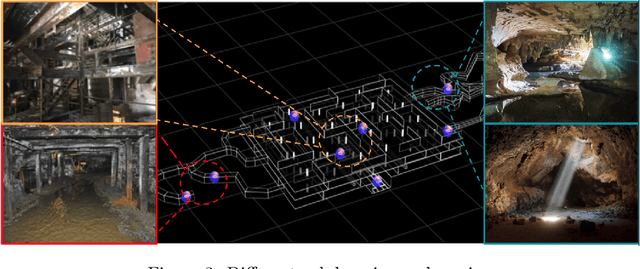

NeBula: Quest for Robotic Autonomy in Challenging Environments; TEAM CoSTAR at the DARPA Subterranean Challenge

Mar 28, 2021

Abstract: This paper presents and discusses algorithms, hardware, and software architecture developed by TEAM CoSTAR (Collaborative SubTerranean Autonomous Robots), competing in the DARPA Subterranean Challenge. Specifically, it presents the techniques utilized within the Tunnel (2019) and Urban (2020) competitions, where CoSTAR achieved 2nd and 1st place, respectively. We also discuss CoSTAR's demonstrations in Martian-analog surface and subsurface (lava tubes) exploration. The paper introduces our autonomy solution, referred to as NeBula (Networked Belief-aware Perceptual Autonomy). NeBula is an uncertainty-aware framework that aims at enabling resilient and modular autonomy solutions by performing reasoning and decision making in the belief space (space of probability distributions over the robot and world states). We discuss various components of the NeBula framework, including: (i) geometric and semantic environment mapping; (ii) a multi-modal positioning system; (iii) traversability analysis and local planning; (iv) global motion planning and exploration behavior; (v) risk-aware mission planning; (vi) networking and decentralized reasoning; and (vii) learning-enabled adaptation. We discuss the performance of NeBula on several robot types (e.g. wheeled, legged, flying), in various environments. We discuss the specific results and lessons learned from fielding this solution in the challenging courses of the DARPA Subterranean Challenge competition.

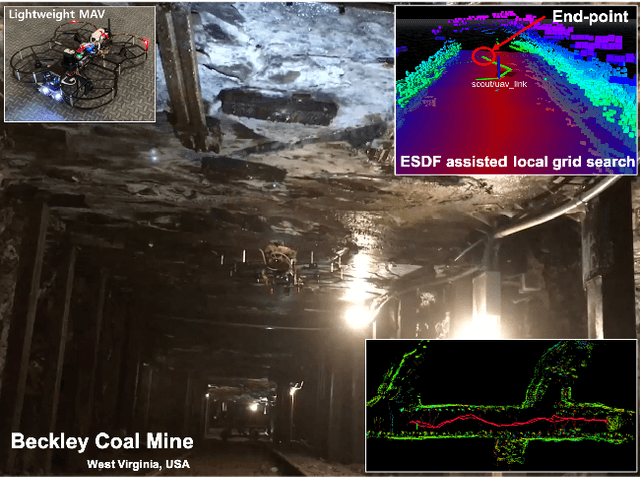

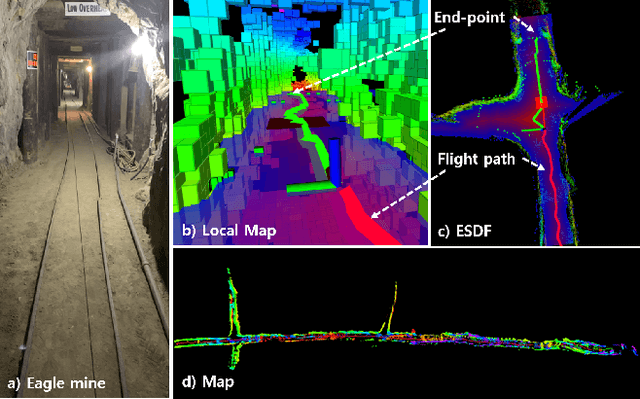

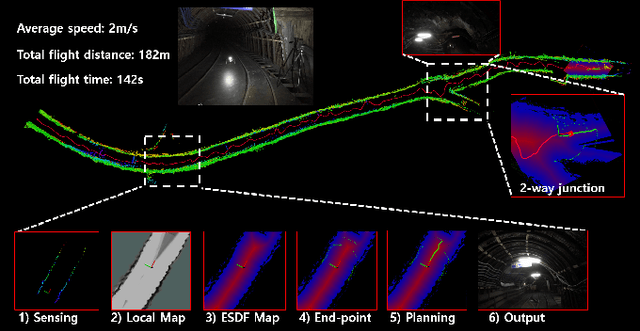

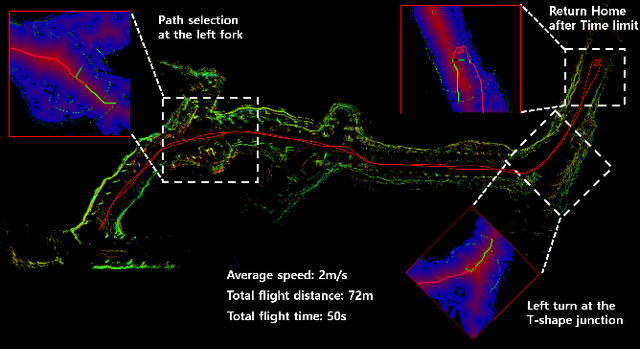

Robust Collision-free Lightweight Aerial Autonomy for Unknown Area Exploration

Mar 16, 2021

Abstract: Collision-free path planning is an essential requirement for autonomous exploration in unknown environments, especially when operating in confined spaces or near obstacles. This study presents an autonomous exploration technique using a small drone. A local end-point selection method is designed using LiDAR range measurements, and a path is then generated from the current position to the selected end-point. The path is generated in real time and remains consistently collision-free by adopting a Euclidean signed distance field-based grid-search method. The simulation results consistently showed the safety and reliability of the proposed path-planning method. Real-world experiments were conducted in three different mines, demonstrating successful autonomous exploration flight in environments with various structural conditions. The results showed the high capability of the proposed flight autonomy framework for lightweight aerial-robot systems. In addition, our drone performed an autonomous mission during our entry in the Tunnel Circuit competition (Phase 1) of the DARPA Subterranean Challenge.
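The idea of searching a grid while respecting a distance field can be sketched as follows. This is a minimal illustration under stated assumptions, not the method from the paper: the distance field is unsigned and computed by brute force for brevity (a true signed field would also store negative distances inside obstacles), the search is a plain breadth-first search rather than whatever grid search the authors use, and the names `distance_field`, `search`, and `min_clearance` are hypothetical.

```python
import math
from collections import deque
from typing import List, Optional, Tuple

Cell = Tuple[int, int]  # (row, column)


def distance_field(grid: List[List[int]]) -> List[List[float]]:
    """Euclidean distance, in cells, from every cell to the nearest obstacle (1)."""
    obstacles = [(r, c) for r, row in enumerate(grid)
                 for c, value in enumerate(row) if value == 1]
    return [[min((math.hypot(r - o_r, c - o_c) for o_r, o_c in obstacles),
                 default=math.inf)
             for c in range(len(grid[0]))]
            for r in range(len(grid))]


def search(grid: List[List[int]], start: Cell, goal: Cell,
           min_clearance: float) -> Optional[List[Cell]]:
    """Breadth-first search that only expands cells with enough clearance."""
    field = distance_field(grid)
    rows, cols = len(grid), len(grid[0])
    parent = {start: None}
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            path = []
            while cell is not None:  # walk parents back to the start
                path.append(cell)
                cell = parent[cell]
            return path[::-1]
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            n_r, n_c = nxt
            if (0 <= n_r < rows and 0 <= n_c < cols and nxt not in parent
                    and field[n_r][n_c] >= min_clearance):
                parent[nxt] = cell
                queue.append(nxt)
    return None  # no path satisfies the clearance requirement


# 5x5 map with a single obstacle in the middle.
grid = [[0] * 5 for _ in range(5)]
grid[2][2] = 1

tight_path = search(grid, (2, 0), (2, 4), min_clearance=0.5)
safe_path = search(grid, (2, 0), (2, 4), min_clearance=1.5)
print(len(tight_path), len(safe_path))
```

Raising `min_clearance` forces the path away from the obstacle at the cost of a longer route, and a value that is too large for the map makes the search return `None`.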
