Stepan Perminov

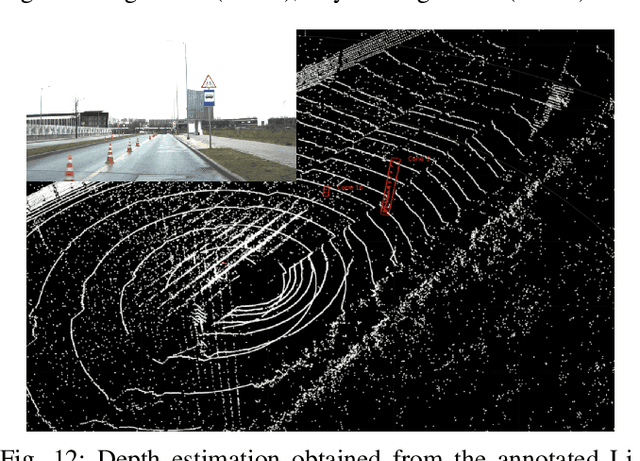

HawkDrive: A Transformer-driven Visual Perception System for Autonomous Driving in Night Scene

Apr 06, 2024

Abstract: Many established vision perception systems for autonomous driving ignore the influence of lighting conditions, one of the key elements of driving safety. To address this problem, we present HawkDrive, a novel perception system with hardware and software solutions. The hardware uses stereo vision, which has been demonstrated to estimate depth more reliably than monocular vision, paired with the NVIDIA Jetson AGX Xavier edge computing device. The software for low-light enhancement, depth estimation, and semantic segmentation is a transformer-based neural network. The software stack, which enables fast inference and noise reduction, is packaged into system modules in Robot Operating System 2 (ROS2). Our experimental results show that the proposed end-to-end system effectively improves depth estimation and semantic segmentation performance. Our dataset and code will be released at https://github.com/ZionGo6/HawkDrive.

PolyMerge: A Novel Technique aimed at Dynamic HD Map Updates Leveraging Polylines

Oct 31, 2023

Abstract: Currently, High-Definition (HD) maps are a prerequisite for the stable operation of autonomous vehicles. Such maps contain information about all static road objects that the vehicle must consider during navigation, such as road edges, road lanes, and crosswalks. To generate such an HD map, current approaches need to process pre-recorded environment data obtained from onboard sensors. However, recording such a dataset often requires a lot of time and effort. In addition, every time the actual road environment changes, a new dataset has to be recorded to generate an up-to-date HD map. This paper presents a novel approach that continuously generates or updates the HD map using onboard sensor data. Because there is no need to pre-record a dataset, the HD map update can run in parallel with the main autonomous vehicle navigation pipeline. The proposed approach uses the VectorMapNet framework to generate vector road object instances from a sensor data scan. The PolyMerge technique then merges new instances into previous ones, mitigating detection errors and thereby generating or updating the HD map. The performance of the algorithm was confirmed by comparison with ground truth on the nuScenes dataset. Experimental results showed that the mean error for different levels of environment complexity was comparable to the VectorMapNet single-instance error.

GHACPP: Genetic-based Human-Aware Coverage Path Planning Algorithm for Autonomous Disinfection Robot

Jul 17, 2023

Abstract: Numerous mobile robots with mounted Ultraviolet-C (UV-C) lamps have been developed recently, yet they cannot work in the same space as humans without exposing them to UV-C irradiation. This paper proposes a novel modular and scalable Genetic-based Human-Aware Coverage Path Planning algorithm (GHACPP) that aims to disinfect unknown environments by UV-C irradiation while preventing harm to human eyes and skin. The proposed genetic-based algorithm alternates between the stages of exploring a new area, generating parts of the resulting disinfection trajectory (called mini-trajectories), and updating the current state around the robot. The system's effectiveness and human safety are validated and compared with SimExCoverage-STC, one of the latest state-of-the-art online coverage path planning algorithms. The experimental results confirmed both the high level of safety for humans and the efficiency of the developed algorithm: compared with the state-of-the-art approach, it reduces path length by 37.1%, the number and size of turns by 39.5% and 35.2%, respectively, and the time to complete the disinfection task by 7.6%, with a small loss (0.6%) in the percentage of area covered.

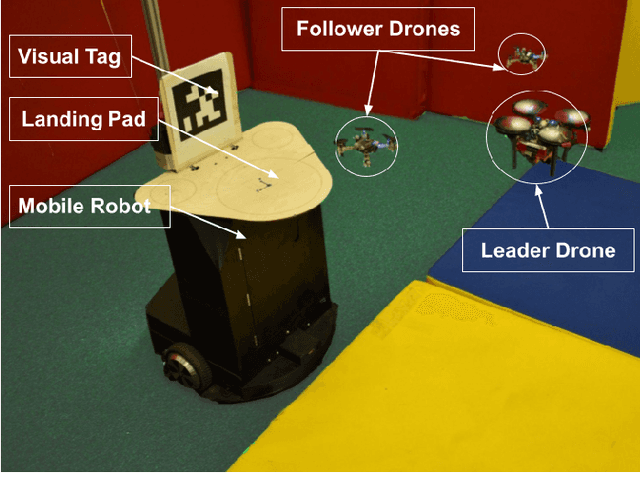

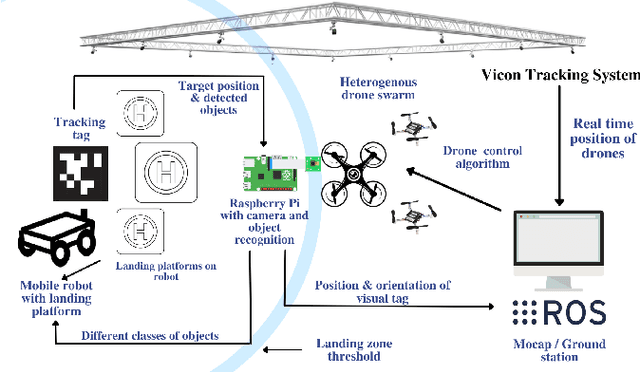

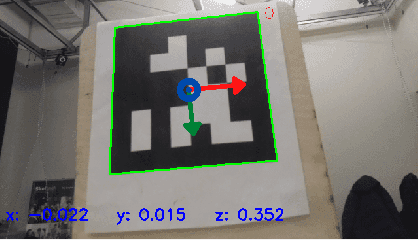

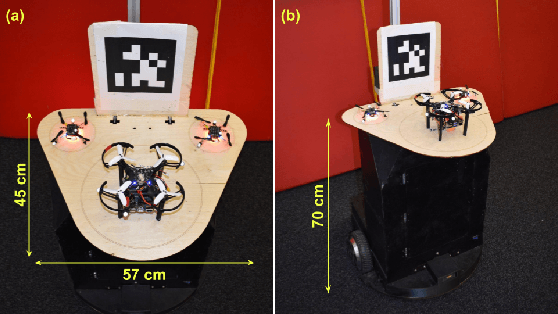

SwarmHive: Heterogeneous Swarm of Drones for Robust Autonomous Landing on Moving Robot

Jun 17, 2022

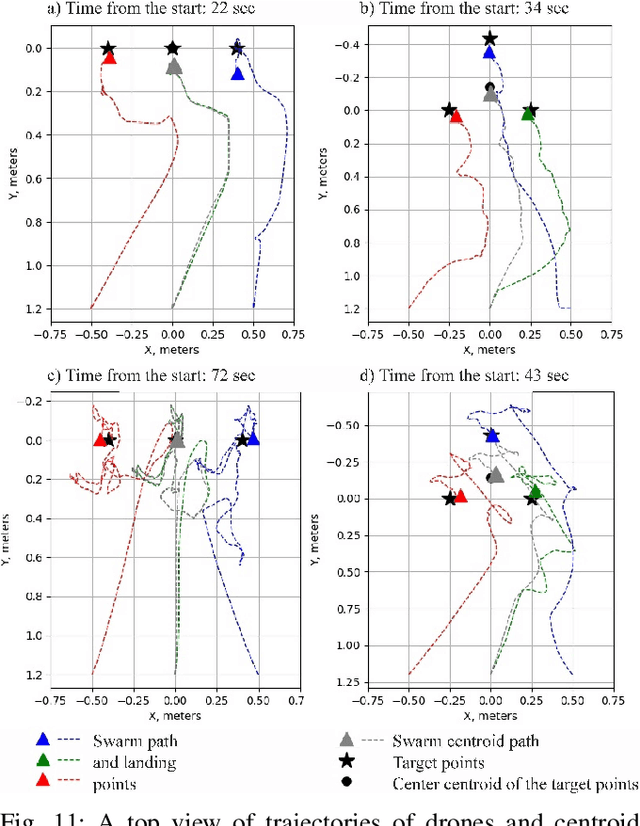

Abstract: The paper focuses on a heterogeneous swarm of drones that performs a dynamic formation landing on a moving robot. This challenging task has not yet been achieved by scientists. The key idea is that, instead of equipping each agent of the swarm with computer vision, which considerably increases the payload and shortens the flight time, we propose to install only one camera on the leader drone. The follower drones receive commands from the leader UAV and maintain a collision-free trajectory using an artificial potential field. The experimental results revealed a high accuracy of the swarm landing on a static mobile platform (RMSE of 4.48 cm). The RMSE of the swarm landing on the mobile platform moving at maximum velocities of 1.0 m/s and 1.5 m/s equals 8.76 cm and 8.98 cm, respectively. The proposed SwarmHive technology will allow time-saving landing of the swarm for subsequent drone recharging. This will make it possible to achieve self-sustainable operation of a multi-agent robotic system in scenarios such as rescue operations, inspection and maintenance, autonomous warehouse inventory, and cargo delivery.
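The artificial potential field mentioned in the abstract can be illustrated with a minimal 2D sketch. This is not the authors' controller: the function name `apf_velocity`, the gains `k_att` and `k_rep`, and the influence radius `d0` are all hypothetical, and the classic attractive/repulsive formulation is assumed.

```python
import math


def apf_velocity(pos, goal, neighbors, k_att=1.0, k_rep=0.5, d0=1.0):
    """Return a 2D velocity command (vx, vy) for one follower.

    Hypothetical parameters: k_att and k_rep are gains, d0 is the
    distance below which a neighbor starts to repel the follower.
    """
    # Attractive term: pulls linearly toward the goal position.
    vx = k_att * (goal[0] - pos[0])
    vy = k_att * (goal[1] - pos[1])
    for nx, ny in neighbors:
        dx, dy = pos[0] - nx, pos[1] - ny
        d = math.hypot(dx, dy)
        if 0.0 < d < d0:
            # Classic repulsive gradient: grows as the distance shrinks
            # and vanishes at the influence radius d0.
            mag = k_rep * (1.0 / d - 1.0 / d0) / (d * d)
            vx += mag * dx / d
            vy += mag * dy / d
    return vx, vy
```

In such a scheme each follower would evaluate the function at every control step, with `goal` set to its slot in the formation and `neighbors` set to the positions of the other drones.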

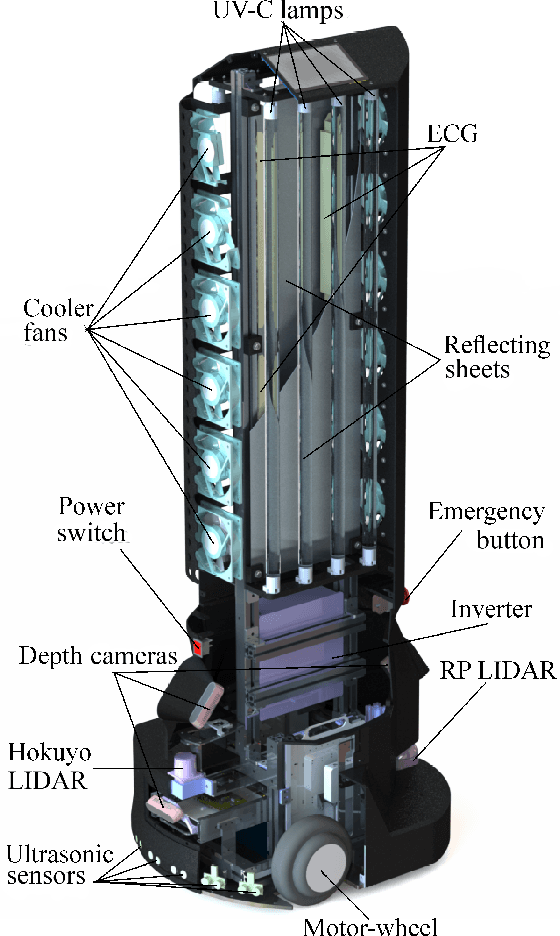

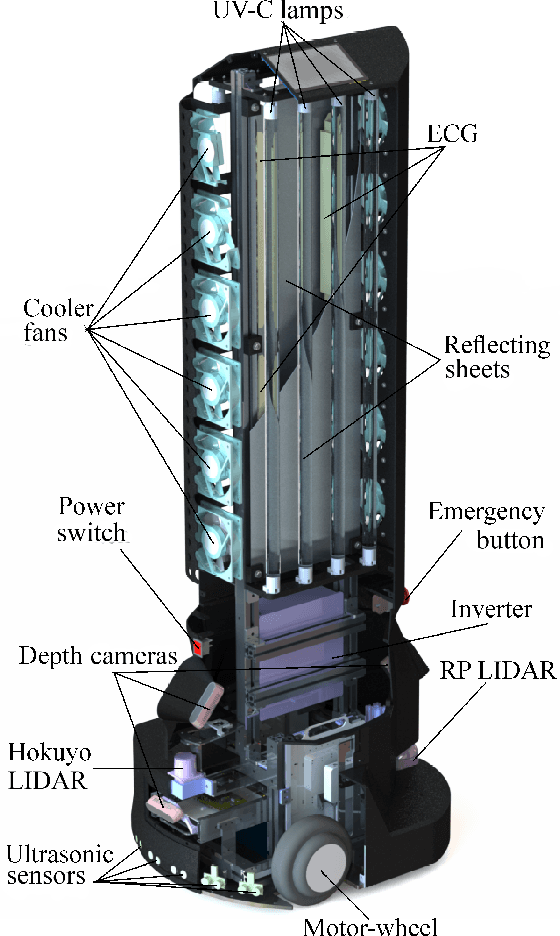

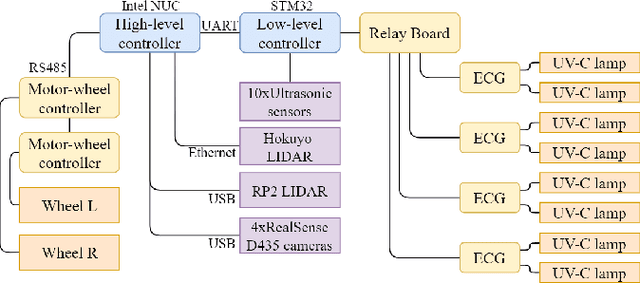

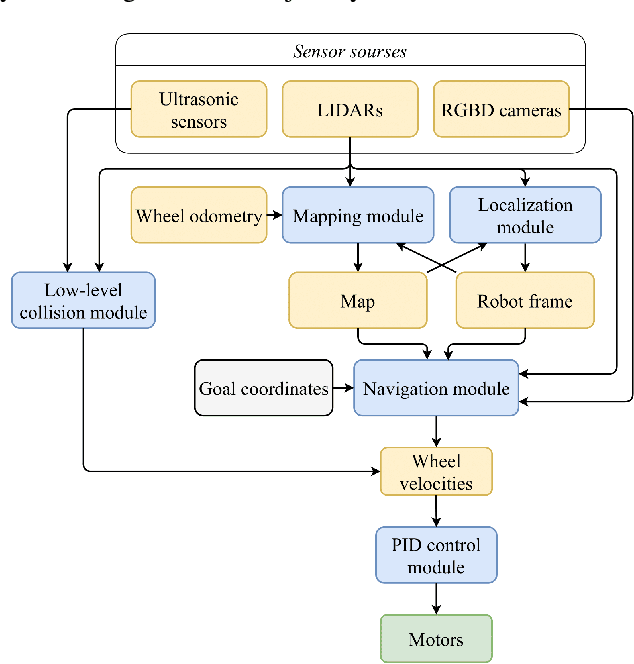

UltraBot: Autonomous Mobile Robot for Indoor UV-C Disinfection with Non-trivial Shape of Disinfection Zone

Aug 22, 2021

Abstract: The paper focuses on the development of an autonomous disinfection robot, UltraBot, to reduce the transmission of COVID-19 and other harmful bacteria and viruses. The motivation behind the research is to develop a robot capable of performing disinfection tasks without the use of harmful sprays and chemicals, which can leave residues and require airing the room for a long time afterward. UltraBot technology has the potential to offer optimal autonomous disinfection performance while protecting people by keeping them out of the UV-C radiation. The paper highlights UltraBot's mechanical and electrical design as well as its disinfection performance. The conducted experiments demonstrate the effectiveness of the robot's disinfection ability and the actual disinfection area on each side equipped with a UV-C lamp array. The disinfection effectiveness results show the actual performance of the multi-pass technique, which provides a 1-log reduction with combined direct UV-C exposure and ozone-based air purification after two robot passes at a speed of 0.14 m/s. This technique has the same performance as ten minutes of static disinfection. Finally, we calculated the non-trivial shape of the robot's disinfection zone from two consecutive experiments in order to produce optimal path planning and provide full disinfection in selected areas.
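For readers unfamiliar with the term, the standard definition of log reduction relates the microbial count before disinfection, $N_0$, to the count after, $N$ (the symbols $N_0$, $N$, and $\mathrm{LR}$ are introduced here for illustration and do not appear in the abstract):

$$
\mathrm{LR} = \log_{10}\frac{N_0}{N}, \qquad \text{percent reduction} = \left(1 - 10^{-\mathrm{LR}}\right) \times 100\%
$$

Under this definition, a 1-log reduction corresponds to $\left(1 - 10^{-1}\right) \times 100\% = 90\%$ of the initial count being removed.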

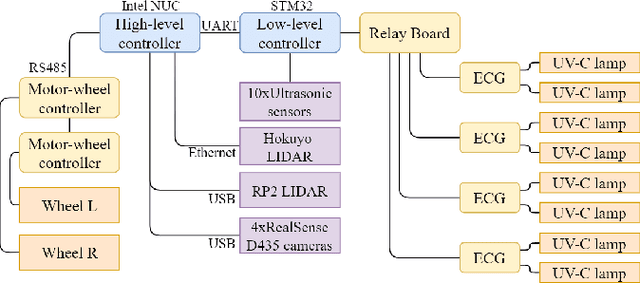

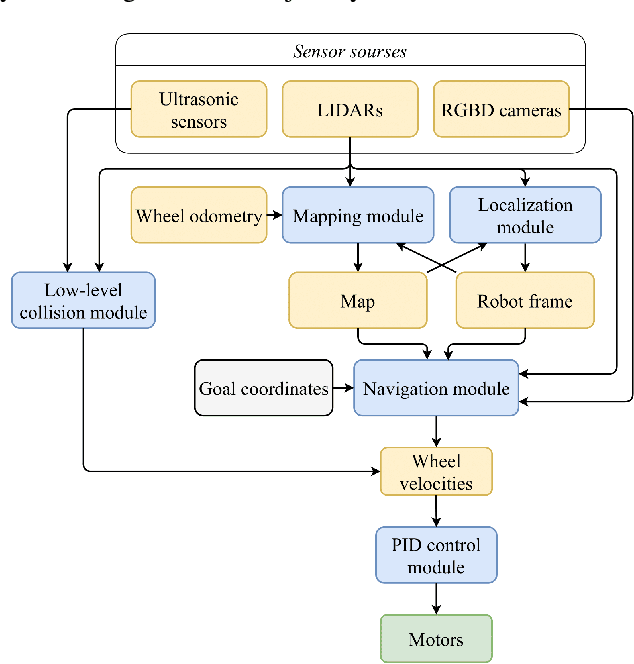

UltraBot: Autonomous Mobile Robot for Indoor UV-C Disinfection

Aug 22, 2021

Abstract: The paper focuses on the development of the autonomous robot UltraBot to reduce the transmission of COVID-19 and other harmful bacteria and viruses. The motivation behind the research is to develop a robot capable of performing disinfection tasks without the use of harmful sprays and chemicals, which can leave residues, require airing the room for a long time afterward, and cause corrosion of metal structures. UltraBot technology has the potential to offer optimal autonomous disinfection performance while protecting people by keeping them out of the UV-C radiation. The paper highlights UltraBot's mechanical and electrical structures as well as its low-level and high-level control systems. The conducted experiments demonstrate the effectiveness of the robot localization module and optimal trajectories for UV-C disinfection. The UV-C disinfection results revealed a 94% decrease in the total bacterial count (TBC) at a distance of 2.8 meters from the robot after 10 minutes of UV-C irradiation.

SwarmPlay: Interactive Tic-tac-toe Board Game with Swarm of Nano-UAVs driven by Reinforcement Learning

Aug 03, 2021

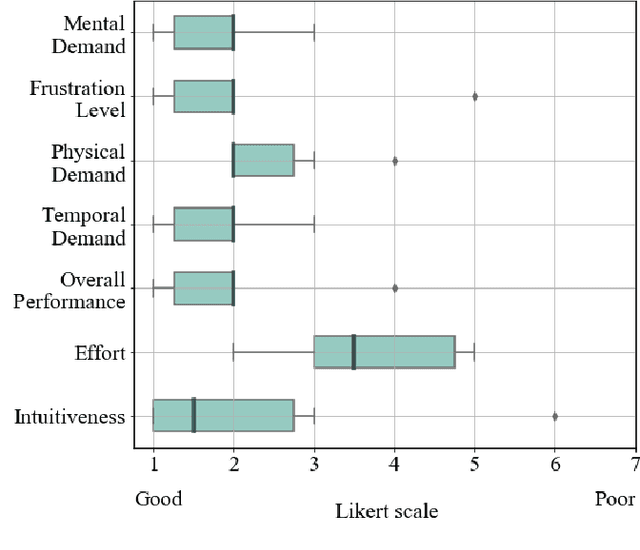

Abstract: Reinforcement learning (RL) methods have been actively applied in the field of robotics, allowing the system itself to find a solution for a task that would otherwise require a complex decision-making algorithm. In this paper, we present SwarmPlay, a novel RL-based Tic-tac-toe scenario in which each playing piece is represented by an individual drone that has its own mobility and swarm intelligence to win against a human player. The combination of a challenging swarm strategy and human-drone collaboration aims to make games with machines tangible and interactive. Although some research on AI for board games such as chess already exists, the SwarmPlay technology has the potential to offer much more engagement and interaction with the user, as it proposes a multi-agent swarm instead of a single interactive robot. We explore users' evaluation of RL-based swarm behavior in comparison with game theory-based behavior. The preliminary user study revealed that participants were highly engaged in the game with drones (70% gave the maximum score on the Likert scale) and found it less artificial than regular computer-based systems (80%). The effect of the game outcome on the user's perception of the game was analyzed and discussed. The user study revealed that SwarmPlay has the potential to be implemented in a wider range of games, significantly improving human-drone interactivity.
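The abstract does not say which game-theoretic strategy served as the baseline. As one plausible illustration only, the sketch below assumes plain minimax search over a 3x3 board stored as a 9-character string; the names `LINES`, `winner`, and `minimax` are hypothetical and not taken from the paper.

```python
from functools import lru_cache

# All eight winning index triples on a 3x3 board stored row by row.
LINES = [(0, 1, 2), (3, 4, 5), (6, 7, 8),
         (0, 3, 6), (1, 4, 7), (2, 5, 8),
         (0, 4, 8), (2, 4, 6)]


def winner(board):
    """Return "X" or "O" if that player has three in a row, else None."""
    for a, b, c in LINES:
        if board[a] != " " and board[a] == board[b] == board[c]:
            return board[a]
    return None


@lru_cache(maxsize=None)
def minimax(board, player):
    """Return (score, move) with optimal play from both sides.

    The score is from X's point of view: +1 X wins, -1 O wins, 0 draw.
    """
    w = winner(board)
    if w is not None:
        return (1 if w == "X" else -1), None
    if " " not in board:
        return 0, None
    best_score, best_move = None, None
    for i, cell in enumerate(board):
        if cell != " ":
            continue
        child = board[:i] + player + board[i + 1:]
        score, _ = minimax(child, "O" if player == "X" else "X")
        # X maximizes the score, O minimizes it.
        if (best_score is None
                or (player == "X" and score > best_score)
                or (player == "O" and score < best_score)):
            best_score, best_move = score, i
    return best_score, best_move
```

With a drone swarm, the returned cell index would be mapped to a landing position on the physical board; that mapping is outside the scope of this sketch.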

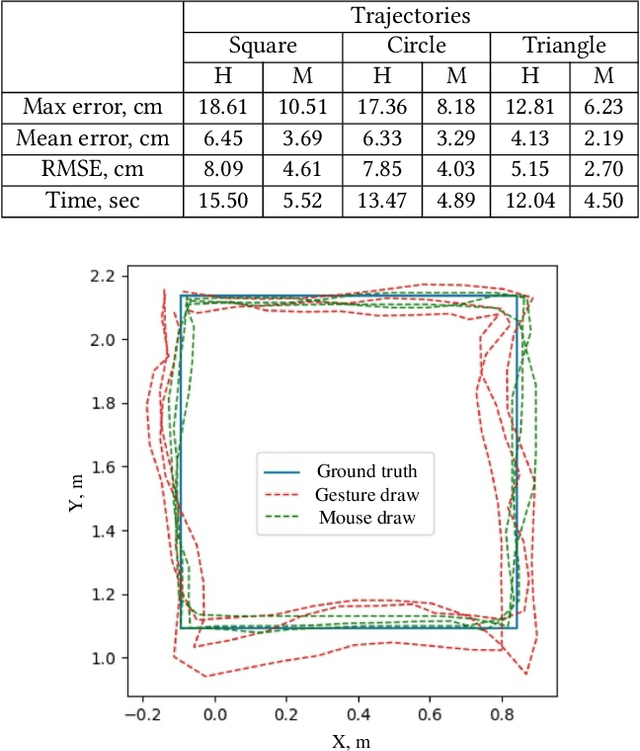

DronePaint: Swarm Light Painting with DNN-based Gesture Recognition

Jul 23, 2021

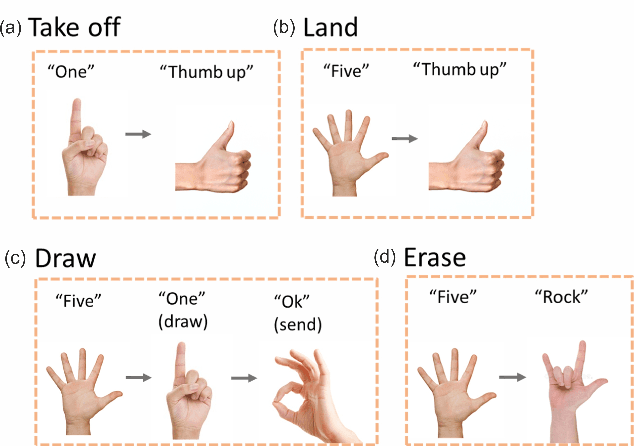

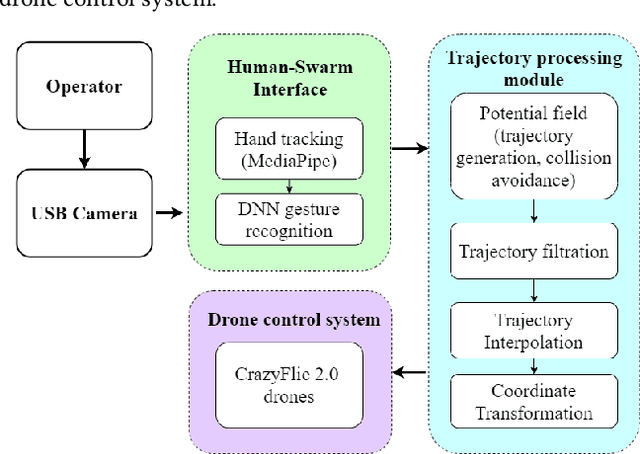

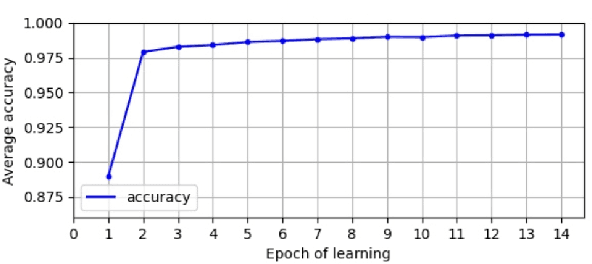

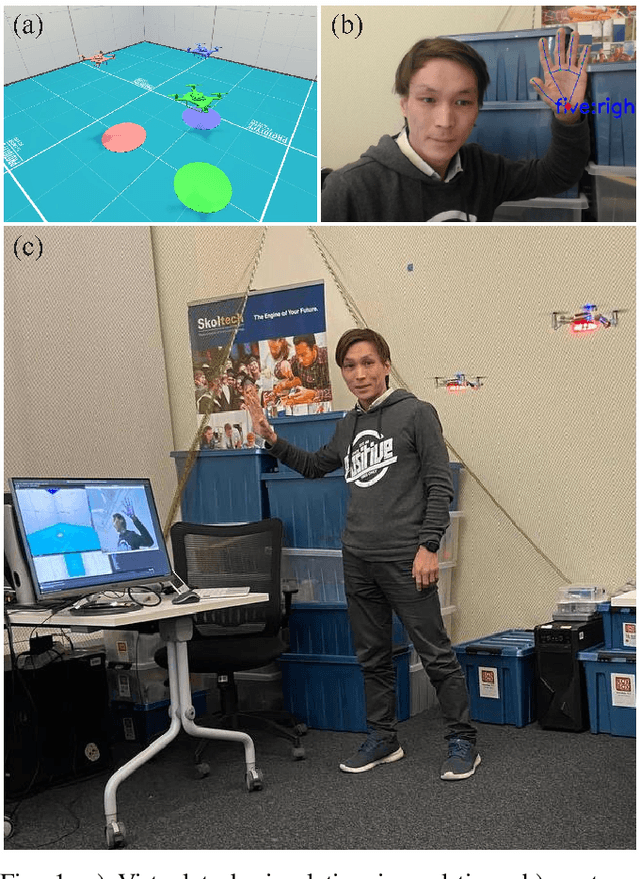

Abstract: We propose a novel human-swarm interaction system that allows the user to directly control a swarm of drones in a complex environment through trajectory drawing with a hand gesture interface based on DNN gesture recognition. The developed CV-based system allows the user to control the swarm behavior in real time through gestures and motions, without additional devices, and provides convenient tools to change the swarm's shape and formation. Two types of interaction were proposed and implemented to adjust the swarm hierarchy: trajectory drawing and free-form trajectory generation control. The experimental results revealed a high accuracy of the gesture recognition system (99.75%), allowing the user to achieve relatively high precision in trajectory drawing (mean error of 5.6 cm, compared with 3.1 cm for mouse drawing) over the three evaluated trajectory patterns. The proposed system can potentially be applied in complex environment exploration, spray painting using drones, and interactive drone shows, allowing users to create their own art objects with drone swarms.

SwarmPaint: Human-Swarm Interaction for Trajectory Generation and Formation Control by DNN-based Gesture Interface

Jun 28, 2021

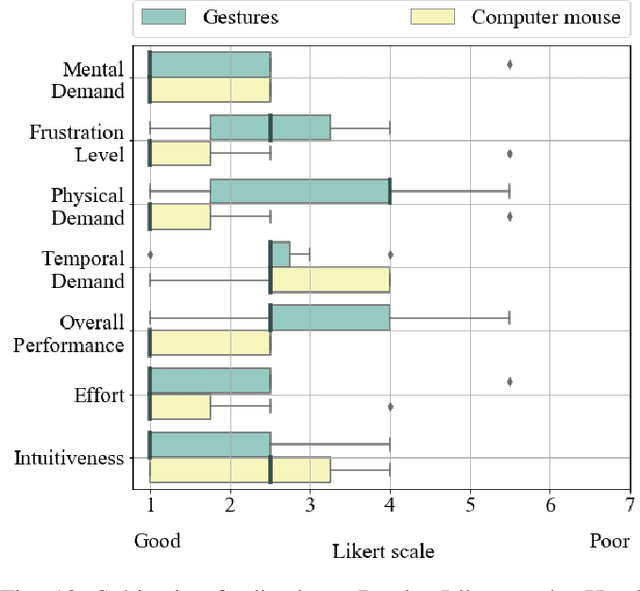

Abstract: Teleoperation tasks with multi-agent systems have a high potential for supporting human-swarm collaborative teams in exploration and rescue operations. However, such teleoperation requires an intuitive and adaptive control approach to ensure swarm stability in a cluttered and dynamically shifting environment. We propose a novel human-swarm interaction system that allows the user to control swarm position and formation either by direct hand motion or by trajectory drawing with a hand gesture interface based on DNN gesture recognition. The key feature of SwarmPaint is the user's ability to perform various tasks with the swarm without additional devices by switching between interaction modes. Two types of interaction were proposed and developed to adjust the swarm behavior: free-form trajectory generation control and shaped formation control. Two preliminary user studies were conducted to explore users' performance and subjective experience of human-swarm interaction through the developed control modes. The experimental results revealed sufficient accuracy in the trajectory tracing task (mean error of 5.6 cm by gesture drawing and 3.1 cm by mouse drawing for a pattern of 1 m by 1 m) over three evaluated trajectory patterns, and up to 7.3 cm accuracy in the targeting task with two target patterns of 1 m achieved by the SwarmPaint interface. Moreover, the participants evaluated the trajectory drawing interface as more intuitive (by 12.9%) and requiring less effort (by 22.7%) than direct shape and position control by gestures, although its physical workload and failure in performance were rated as more significant (by 9.1% and 16.3%, respectively).

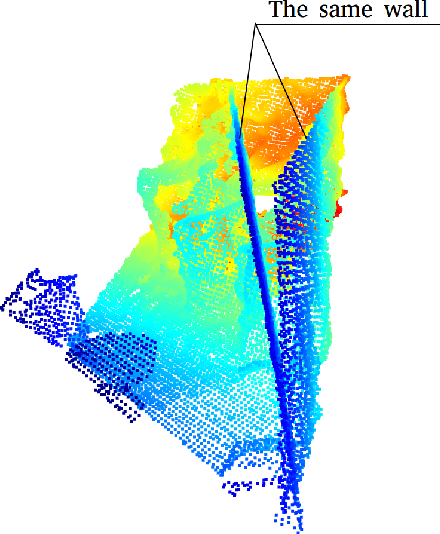

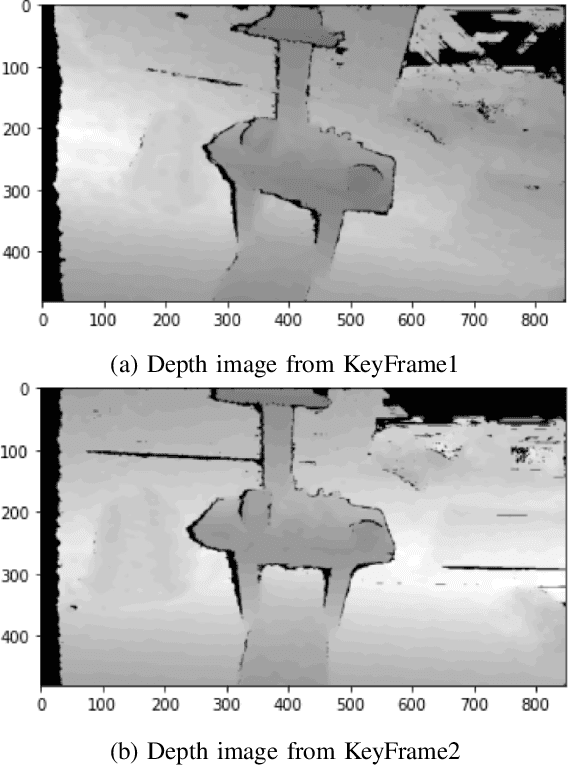

Coupling of localization and depth data for mapping using Intel RealSense T265 and D435i cameras

Apr 01, 2020

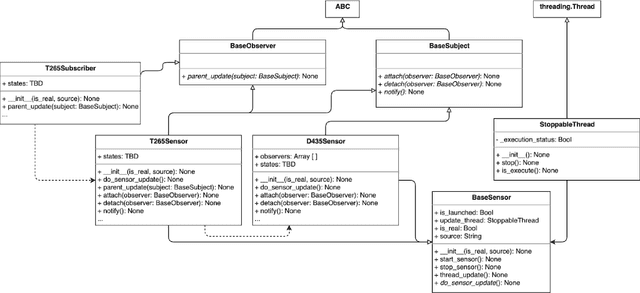

Abstract: We propose to couple two types of Intel RealSense sensors (the T265 tracking camera and the D435i depth camera) in order to obtain localization and a 3D occupancy map of an indoor environment. We implemented a Python-based observer pattern with a multi-threaded approach for camera data synchronization. We compared different point cloud (PC) alignment methods, using transformations obtained from the tracking camera and from ICP-family methods. The tracking camera and PC alignment allow us to generate a set of transformations between frames. Based on these transformations, we obtained different trajectories and analyzed them. Finally, having poses for all frames, we combined the depth data. First, we obtained a joint PC representing the whole scene. Then we used the OctoMap representation to build a map.
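The final step described above, combining per-frame depth data using known poses, can be sketched in a few lines. This is a simplified stand-in and not the authors' pipeline: a plain set of occupied voxel indices replaces the probabilistic octree that OctoMap maintains, and the names `transform_points`, `accumulate_occupied_voxels`, and `voxel_size` are hypothetical.

```python
import math


def transform_points(points, rotation, translation):
    """Map camera-frame points into the world frame: p_world = R p + t.

    rotation is a 3x3 nested list, translation a length-3 sequence.
    """
    out = []
    for x, y, z in points:
        out.append(tuple(
            rotation[r][0] * x + rotation[r][1] * y + rotation[r][2] * z
            + translation[r]
            for r in range(3)
        ))
    return out


def accumulate_occupied_voxels(frames, voxel_size=0.1):
    """Merge posed point clouds into a set of occupied voxel indices.

    frames is an iterable of (points, rotation, translation) tuples,
    one per depth frame, with the pose taken from the tracking source.
    """
    occupied = set()
    for points, rotation, translation in frames:
        for p in transform_points(points, rotation, translation):
            # Integer voxel index of the world-frame point.
            occupied.add(tuple(math.floor(c / voxel_size) for c in p))
    return occupied
```

A real occupancy map would also trace free space along each sensor ray and update per-voxel occupancy probabilities, which this sketch omits.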
