Siyuan Mei

GANeXt: A Fully ConvNeXt-Enhanced Generative Adversarial Network for MRI- and CBCT-to-CT Synthesis

Dec 22, 2025Abstract:The synthesis of computed tomography (CT) from magnetic resonance imaging (MRI) and cone-beam CT (CBCT) plays a critical role in clinical treatment planning by enabling accurate anatomical representation in adaptive radiotherapy. In this work, we propose GANeXt, a 3D patch-based, fully ConvNeXt-powered generative adversarial network for unified CT synthesis across different modalities and anatomical regions. Specifically, GANeXt employs an efficient U-shaped generator constructed from stacked 3D ConvNeXt blocks with compact convolution kernels, while the discriminator adopts a conditional PatchGAN. To improve synthesis quality, we incorporate a combination of loss functions, including mean absolute error (MAE), perceptual loss, segmentation-based masked MAE, and adversarial loss and a combination of Dice loss and cross-entropy for multi-head segmentation discriminator. For both tasks, training is performed with a batch size of 8 using two separate AdamW optimizers for the generator and discriminator, each equipped with a warmup and cosine decay scheduler, with learning rates of $5\times10^{-4}$ and $1\times10^{-3}$, respectively. Data preprocessing includes deformable registration, foreground cropping, percentile normalization for the input modality, and linear normalization of the CT to the range $[-1024, 1000]$. Data augmentation involves random zooming within $(0.8, 1.3)$ (for MRI-to-CT only), fixed-size cropping to $32\times160\times192$ for MRI-to-CT and $32\times128\times128$ for CBCT-to-CT, and random flipping. During inference, we apply a sliding-window approach with $0.8$ overlap and average folding to reconstruct the full-size sCT, followed by inversion of the CT normalization. After joint training on all regions without any fine-tuning, the final models are selected at the end of 3000 epochs for MRI-to-CT and 1000 epochs for CBCT-to-CT using the full training dataset.

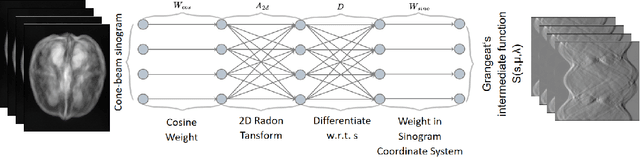

Learning Wavelet-Sparse FDK for 3D Cone-Beam CT Reconstruction

May 19, 2025Abstract:Cone-Beam Computed Tomography (CBCT) is essential in medical imaging, and the Feldkamp-Davis-Kress (FDK) algorithm is a popular choice for reconstruction due to its efficiency. However, FDK is susceptible to noise and artifacts. While recent deep learning methods offer improved image quality, they often increase computational complexity and lack the interpretability of traditional methods. In this paper, we introduce an enhanced FDK-based neural network that maintains the classical algorithm's interpretability by selectively integrating trainable elements into the cosine weighting and filtering stages. Recognizing the challenge of a large parameter space inherent in 3D CBCT data, we leverage wavelet transformations to create sparse representations of the cosine weights and filters. This strategic sparsification reduces the parameter count by $93.75\%$ without compromising performance, accelerates convergence, and importantly, maintains the inference computational cost equivalent to the classical FDK algorithm. Our method not only ensures volumetric consistency and boosts robustness to noise, but is also designed for straightforward integration into existing CT reconstruction pipelines. This presents a pragmatic enhancement that can benefit clinical applications, particularly in environments with computational limitations.

Filter2Noise: Interpretable Self-Supervised Single-Image Denoising for Low-Dose CT with Attention-Guided Bilateral Filtering

Apr 18, 2025Abstract:Effective denoising is crucial in low-dose CT to enhance subtle structures and low-contrast lesions while preventing diagnostic errors. Supervised methods struggle with limited paired datasets, and self-supervised approaches often require multiple noisy images and rely on deep networks like U-Net, offering little insight into the denoising mechanism. To address these challenges, we propose an interpretable self-supervised single-image denoising framework -- Filter2Noise (F2N). Our approach introduces an Attention-Guided Bilateral Filter that adapted to each noisy input through a lightweight module that predicts spatially varying filter parameters, which can be visualized and adjusted post-training for user-controlled denoising in specific regions of interest. To enable single-image training, we introduce a novel downsampling shuffle strategy with a new self-supervised loss function that extends the concept of Noise2Noise to a single image and addresses spatially correlated noise. On the Mayo Clinic 2016 low-dose CT dataset, F2N outperforms the leading self-supervised single-image method (ZS-N2N) by 4.59 dB PSNR while improving transparency, user control, and parametric efficiency. These features provide key advantages for medical applications that require precise and interpretable noise reduction. Our code is demonstrated at https://github.com/sypsyp97/Filter2Noise.git .

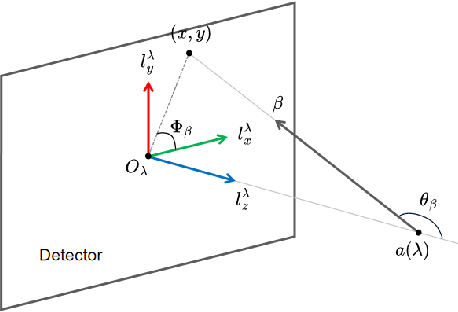

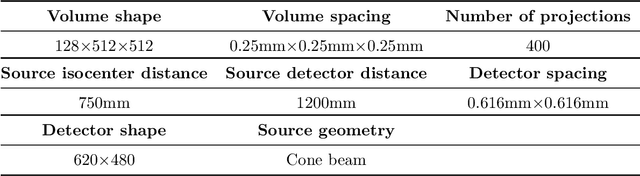

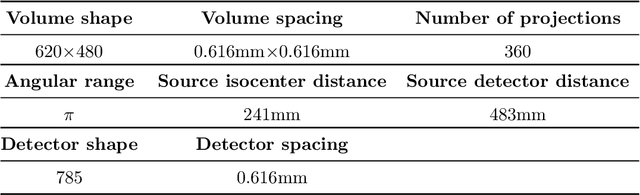

DRACO: Differentiable Reconstruction for Arbitrary CBCT Orbits

Oct 18, 2024

Abstract:This paper introduces a novel method for reconstructing cone beam computed tomography (CBCT) images for arbitrary orbits using a differentiable shift-variant filtered backprojection (FBP) neural network. Traditional CBCT reconstruction methods for arbitrary orbits, like iterative reconstruction algorithms, are computationally expensive and memory-intensive. The proposed method addresses these challenges by employing a shift-variant FBP algorithm optimized for arbitrary trajectories through a deep learning approach that adapts to a specific orbit geometry. This approach overcomes the limitations of existing techniques by integrating known operators into the learning model, minimizing the number of parameters, and improving the interpretability of the model. The proposed method is a significant advancement in interventional medical imaging, particularly for robotic C-arm CT systems, enabling faster and more accurate CBCT reconstructions with customized orbits. Especially this method can also be used for the analytical reconstruction of non-continuous orbits like circular plus arc. The experimental results demonstrate that the proposed method significantly accelerates the reconstruction process compared to conventional iterative algorithms. It achieves comparable or superior image quality, as evidenced by metrics such as the mean squared error (MSE), the peak signal-to-noise ratio (PSNR), and the structural similarity index measure (SSIM). The validation experiments show that the method can handle data from different trajectories, demonstrating its flexibility and robustness across different scan geometries. Our method demonstrates a significant improvement, particularly for the sinusoidal trajectory, achieving a 38.6% reduction in MSE, a 7.7% increase in PSNR, and a 5.0% improvement in SSIM. Furthermore, the computation time for reconstruction was reduced by more than 97%.

On the Influence of Smoothness Constraints in Computed Tomography Motion Compensation

May 29, 2024

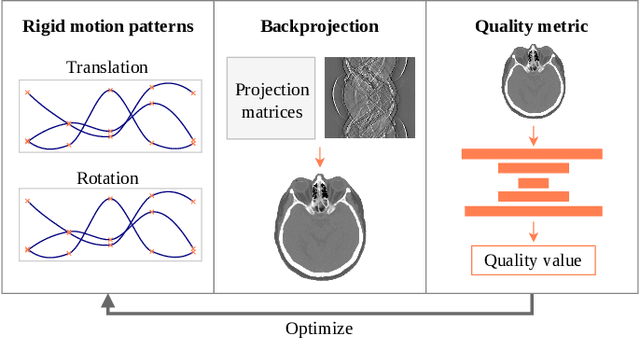

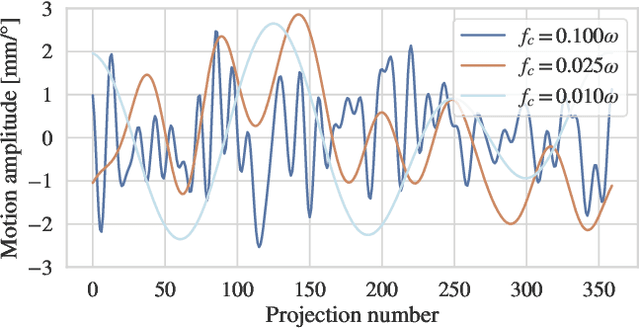

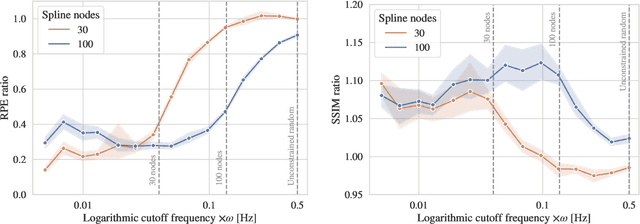

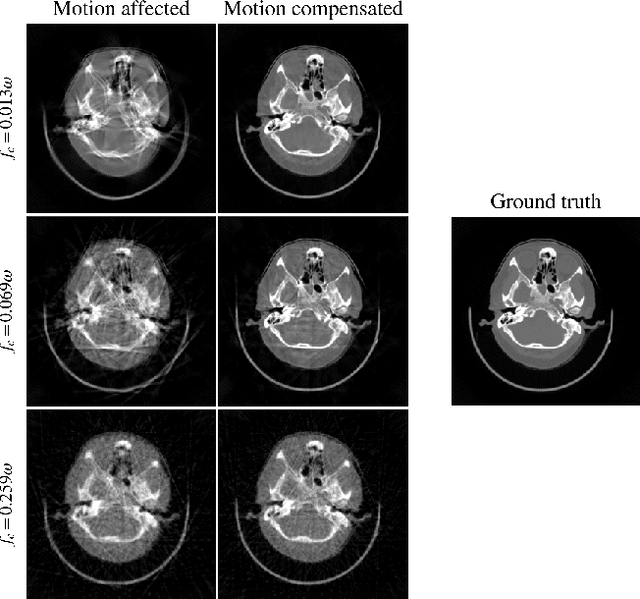

Abstract:Computed tomography (CT) relies on precise patient immobilization during image acquisition. Nevertheless, motion artifacts in the reconstructed images can persist. Motion compensation methods aim to correct such artifacts post-acquisition, often incorporating temporal smoothness constraints on the estimated motion patterns. This study analyzes the influence of a spline-based motion model within an existing rigid motion compensation algorithm for cone-beam CT on the recoverable motion frequencies. Results demonstrate that the choice of motion model crucially influences recoverable frequencies. The optimization-based motion compensation algorithm is able to accurately fit the spline nodes for frequencies almost up to the node-dependent theoretical limit according to the Nyquist-Shannon theorem. Notably, a higher node count does not compromise reconstruction performance for slow motion patterns, but can extend the range of recoverable high frequencies for the investigated algorithm. Eventually, the optimal motion model is dependent on the imaged anatomy, clinical use case, and scanning protocol and should be tailored carefully to the expected motion frequency spectrum to ensure accurate motion compensation.

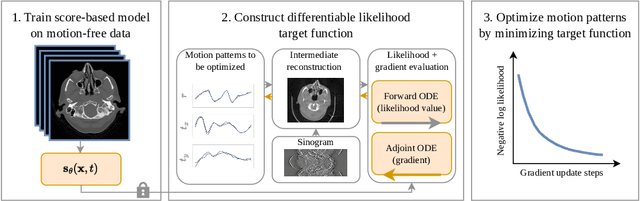

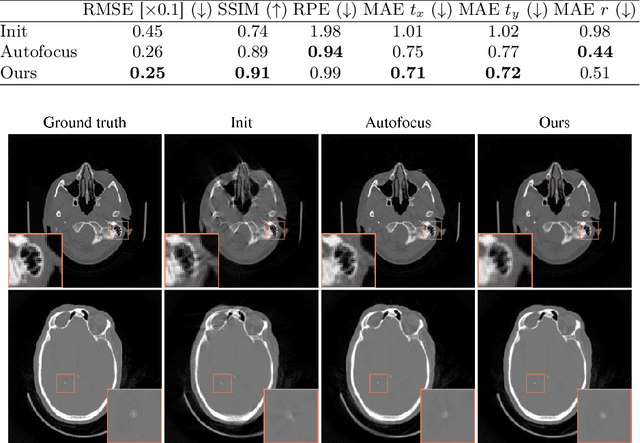

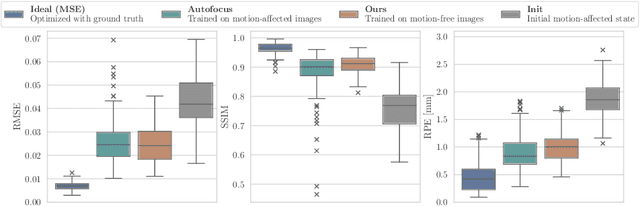

Differentiable Score-Based Likelihoods: Learning CT Motion Compensation From Clean Images

Apr 23, 2024

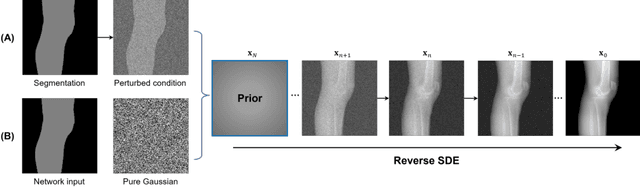

Abstract:Motion artifacts can compromise the diagnostic value of computed tomography (CT) images. Motion correction approaches require a per-scan estimation of patient-specific motion patterns. In this work, we train a score-based model to act as a probability density estimator for clean head CT images. Given the trained model, we quantify the deviation of a given motion-affected CT image from the ideal distribution through likelihood computation. We demonstrate that the likelihood can be utilized as a surrogate metric for motion artifact severity in the CT image facilitating the application of an iterative, gradient-based motion compensation algorithm. By optimizing the underlying motion parameters to maximize likelihood, our method effectively reduces motion artifacts, bringing the image closer to the distribution of motion-free scans. Our approach achieves comparable performance to state-of-the-art methods while eliminating the need for a representative data set of motion-affected samples. This is particularly advantageous in real-world applications, where patient motion patterns may exhibit unforeseen variability, ensuring robustness without implicit assumptions about recoverable motion types.

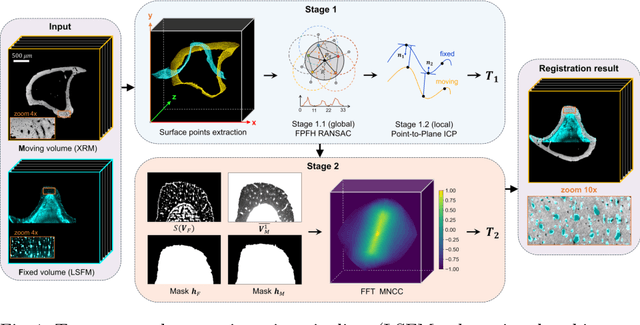

Reference-Free Multi-Modality Volume Registration of X-Ray Microscopy and Light-Sheet Fluorescence Microscopy

Apr 23, 2024

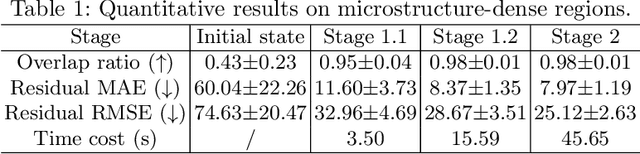

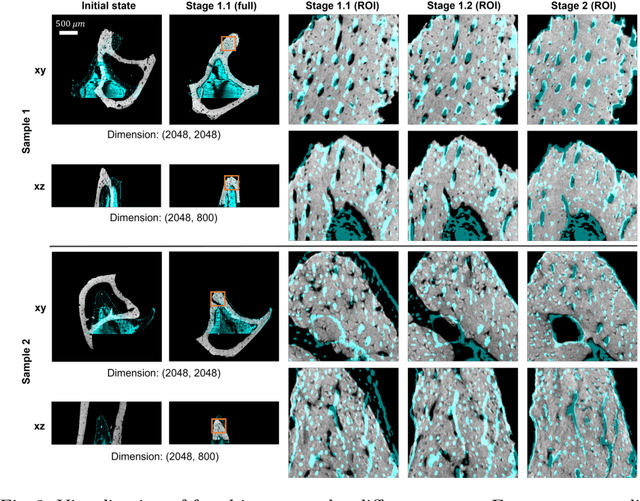

Abstract:Recently, X-ray microscopy (XRM) and light-sheet fluorescence microscopy (LSFM) have emerged as two pivotal imaging tools in preclinical research on bone remodeling diseases, offering micrometer-level resolution. Integrating these complementary modalities provides a holistic view of bone microstructures, facilitating function-oriented volume analysis across different disease cycles. However, registering such independently acquired large-scale volumes is extremely challenging under real and reference-free scenarios. This paper presents a fast two-stage pipeline for volume registration of XRM and LSFM. The first stage extracts the surface features and employs two successive point cloud-based methods for coarse alignment. The second stage fine-tunes the initial alignment using a modified cross-correlation method, ensuring precise volumetric registration. Moreover, we propose residual similarity as a novel metric to assess the alignment of two complementary modalities. The results imply robust gradual improvement across the stages. In the end, all correlating microstructures, particularly lacunae in XRM and bone cells in LSFM, are precisely matched, enabling new insights into bone diseases like osteoporosis which are a substantial burden in aging societies.

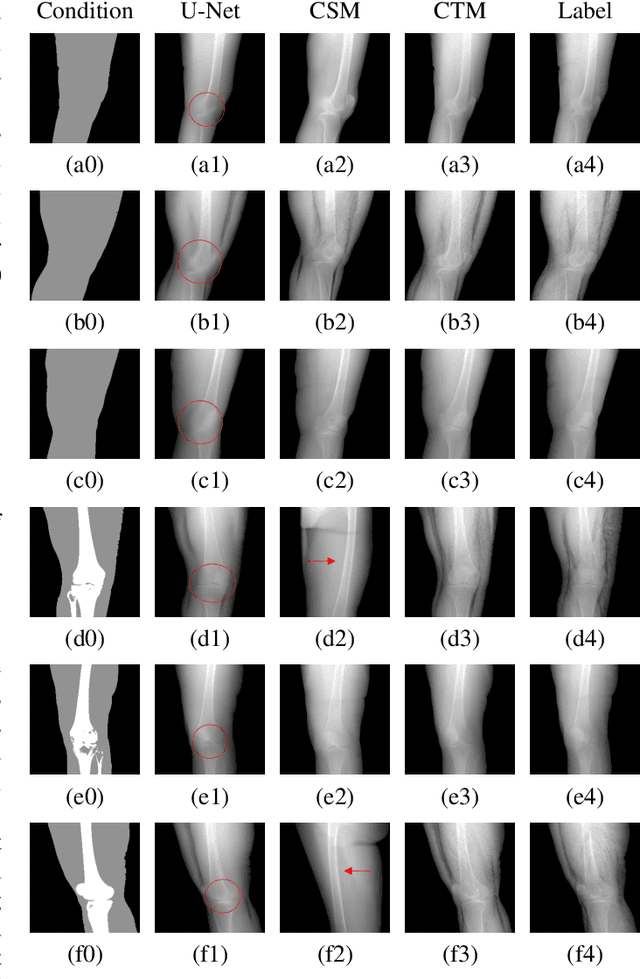

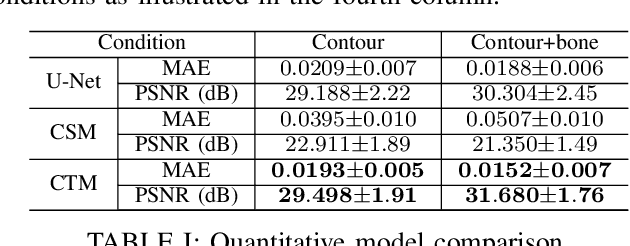

Segmentation-Guided Knee Radiograph Generation using Conditional Diffusion Models

Apr 04, 2024

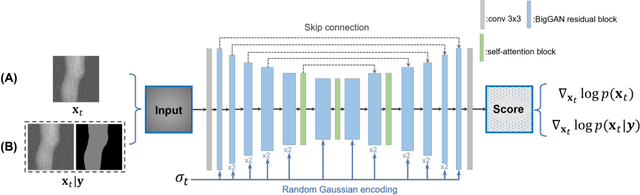

Abstract:Deep learning-based medical image processing algorithms require representative data during development. In particular, surgical data might be difficult to obtain, and high-quality public datasets are limited. To overcome this limitation and augment datasets, a widely adopted solution is the generation of synthetic images. In this work, we employ conditional diffusion models to generate knee radiographs from contour and bone segmentations. Remarkably, two distinct strategies are presented by incorporating the segmentation as a condition into the sampling and training process, namely, conditional sampling and conditional training. The results demonstrate that both methods can generate realistic images while adhering to the conditioning segmentation. The conditional training method outperforms the conditional sampling method and the conventional U-Net.

Analysing Diffusion Segmentation for Medical Images

Mar 21, 2024

Abstract:Denoising Diffusion Probabilistic models have become increasingly popular due to their ability to offer probabilistic modeling and generate diverse outputs. This versatility inspired their adaptation for image segmentation, where multiple predictions of the model can produce segmentation results that not only achieve high quality but also capture the uncertainty inherent in the model. Here, powerful architectures were proposed for improving diffusion segmentation performance. However, there is a notable lack of analysis and discussions on the differences between diffusion segmentation and image generation, and thorough evaluations are missing that distinguish the improvements these architectures provide for segmentation in general from their benefit for diffusion segmentation specifically. In this work, we critically analyse and discuss how diffusion segmentation for medical images differs from diffusion image generation, with a particular focus on the training behavior. Furthermore, we conduct an assessment how proposed diffusion segmentation architectures perform when trained directly for segmentation. Lastly, we explore how different medical segmentation tasks influence the diffusion segmentation behavior and the diffusion process could be adapted accordingly. With these analyses, we aim to provide in-depth insights into the behavior of diffusion segmentation that allow for a better design and evaluation of diffusion segmentation methods in the future.

EAGLE: An Edge-Aware Gradient Localization Enhanced Loss for CT Image Reconstruction

Mar 15, 2024Abstract:Computed Tomography (CT) image reconstruction is crucial for accurate diagnosis and deep learning approaches have demonstrated significant potential in improving reconstruction quality. However, the choice of loss function profoundly affects the reconstructed images. Traditional mean squared error loss often produces blurry images lacking fine details, while alternatives designed to improve may introduce structural artifacts or other undesirable effects. To address these limitations, we propose Eagle-Loss, a novel loss function designed to enhance the visual quality of CT image reconstructions. Eagle-Loss applies spectral analysis of localized features within gradient changes to enhance sharpness and well-defined edges. We evaluated Eagle-Loss on two public datasets across low-dose CT reconstruction and CT field-of-view extension tasks. Our results show that Eagle-Loss consistently improves the visual quality of reconstructed images, surpassing state-of-the-art methods across various network architectures. Code and data are available at \url{https://github.com/sypsyp97/Eagle_Loss}.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge