Samuel Paradis

DeepChrome 2.0: Investigating and Improving Architectures, Visualizations, & Experiments

Sep 24, 2022

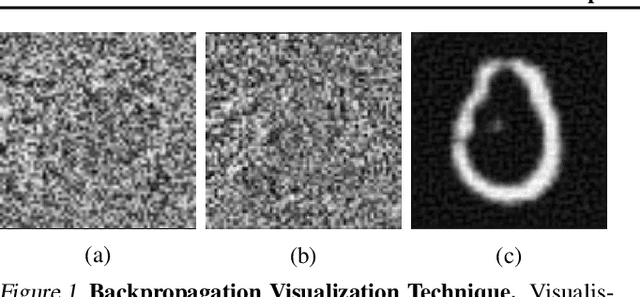

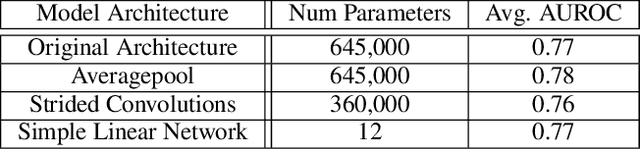

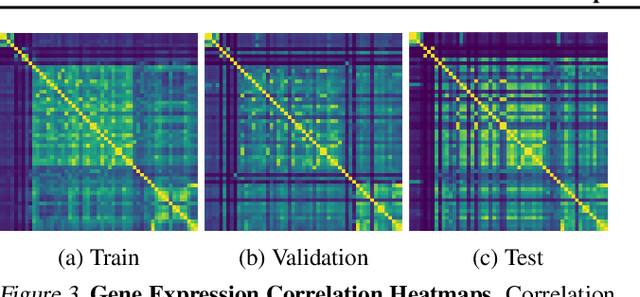

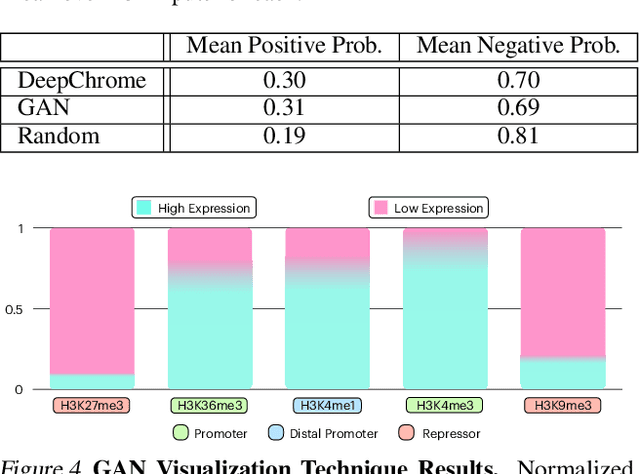

Abstract: Histone modifications play a critical role in gene regulation. Consequently, predicting gene expression from histone modification signals is a well-motivated problem in epigenetics. We build upon the work of DeepChrome by Singh et al. (2016), who trained classifiers that map histone modification signals to gene expression. We present a novel visualization technique that uses a generative adversarial network (GAN) to generate histone modification signals, providing insight into the combinatorial relationships among histone modifications in gene regulation. We also explore and compare various architectural changes, with results suggesting that the 645k-parameter convolutional neural network from DeepChrome has the same predictive power as a 12-parameter linear network. Results from cross-cell prediction experiments, in which the model is trained and tested on datasets of varying sizes, cell types, and correlations, suggest that the relationship between histone modification signals and gene expression is independent of cell type. We release our PyTorch re-implementation of DeepChrome on GitHub (github.com/ssss1029/gene_expression_294).
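The abstract contrasts DeepChrome's CNN with a small linear network. As a hedged illustration of what a linear baseline for this task can look like, the sketch below fits a logistic-regression classifier with plain gradient descent. It assumes each gene is summarized by one averaged signal per histone mark and labeled 1 (high expression) or 0 (low expression); this input summary, the function names, and the toy data are assumptions for illustration, not the released PyTorch code, and the sketch does not reproduce the 12-parameter model described above.

```python
import math
from typing import List, Sequence, Tuple

# Hypothetical input: one averaged signal per histone mark for each gene,
# with label 1 for "high" expression and 0 for "low" expression.


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def train_linear_classifier(
    features: Sequence[Sequence[float]],
    labels: Sequence[int],
    lr: float = 0.1,
    epochs: int = 500,
) -> Tuple[List[float], float]:
    """Fit logistic regression (one weight per mark plus a bias) by full-batch gradient descent."""
    n_features = len(features[0])
    weights = [0.0] * n_features
    bias = 0.0
    n = len(features)
    for _ in range(epochs):
        grad_w = [0.0] * n_features
        grad_b = 0.0
        for x, y in zip(features, labels):
            p = sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)
            err = p - y  # gradient of binary cross-entropy with respect to the logit
            for j in range(n_features):
                grad_w[j] += err * x[j]
            grad_b += err
        weights = [w - lr * g / n for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / n
    return weights, bias


def predict(weights: Sequence[float], bias: float, x: Sequence[float]) -> int:
    """Return 1 (high expression) if the predicted probability is at least 0.5."""
    return int(sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias) >= 0.5)
```

With two toy marks, one activating and one repressive, `train_linear_classifier([[2.0, 0.1], [0.1, 1.9]], [1, 0])` learns a positive weight for the first mark and a negative weight for the second.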

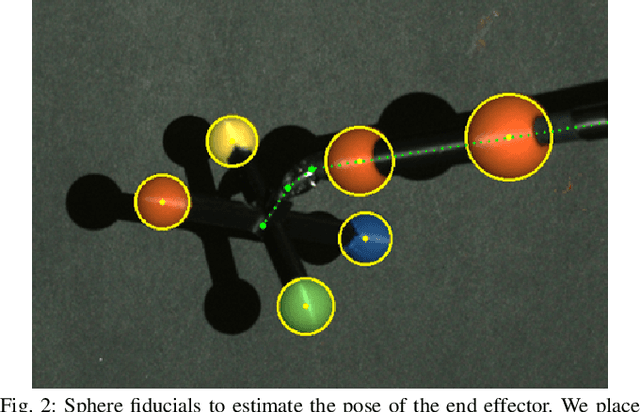

Learning to Localize, Grasp, and Hand Over Unmodified Surgical Needles

Dec 08, 2021

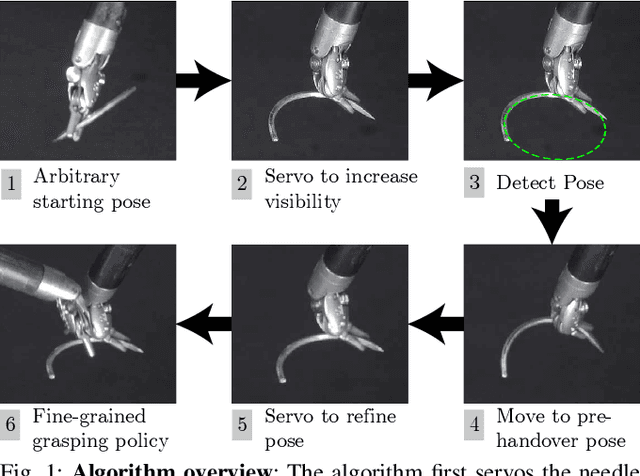

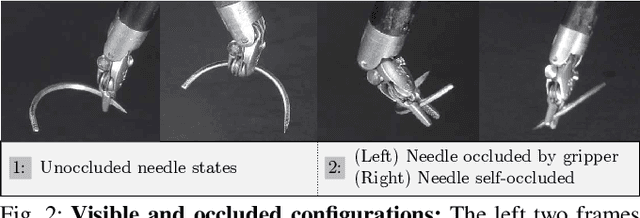

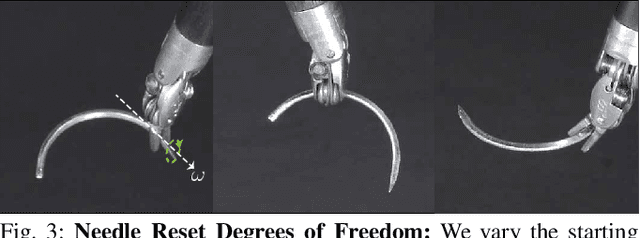

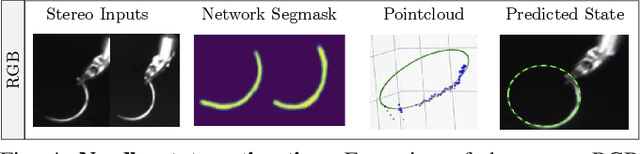

Abstract: Robotic Surgical Assistants (RSAs) are commonly used by expert surgeons to perform minimally invasive surgeries. However, long procedures filled with tedious, repetitive tasks such as suturing can lead to surgeon fatigue, motivating the automation of suturing. Because visual tracking of a thin, reflective needle is extremely challenging, prior work has modified the needle with nonreflective contrasting paint. As a step towards automating a suturing subtask without modifying the needle, we propose HOUSTON: Handoff of Unmodified, Surgical, Tool-Obstructed Needles, a problem and algorithm that uses a learned active sensing policy with a stereo camera to localize and align the needle into a visible and accessible pose for the other arm. To compensate for robot positioning and needle perception errors, the algorithm then executes a high-precision grasping motion that uses multiple cameras. In physical experiments using the da Vinci Research Kit (dVRK), HOUSTON successfully passes unmodified surgical needles with a success rate of 96.7% and performs handovers sequentially between the arms 32.4 times on average before failure. On needles unseen in training, HOUSTON achieves success rates of 75% to 92.9%. To our knowledge, this work is the first to study handover of unmodified surgical needles. See https://tinyurl.com/houston-surgery for additional materials.

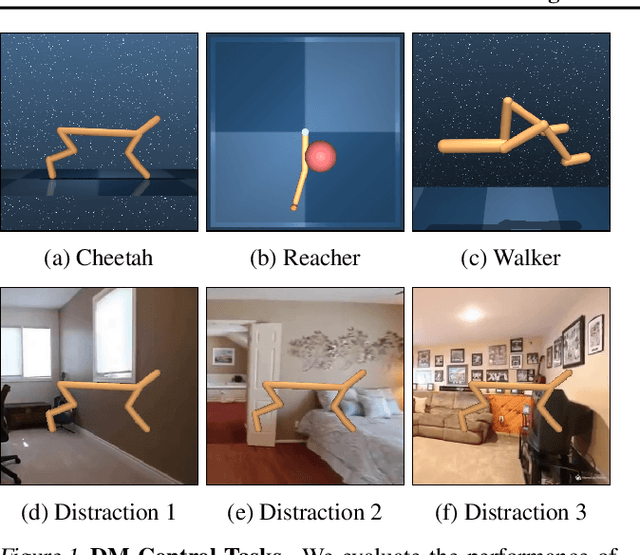

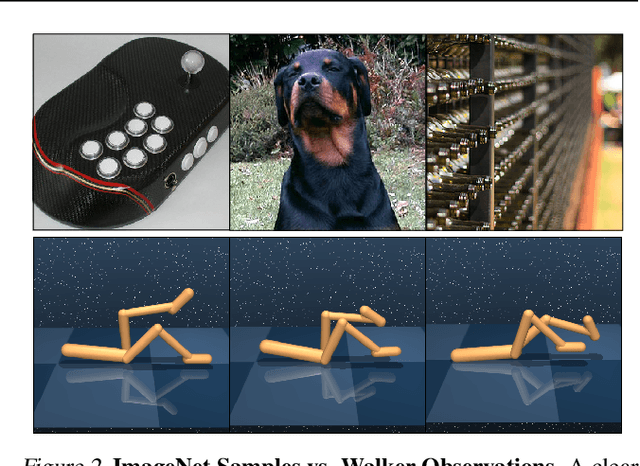

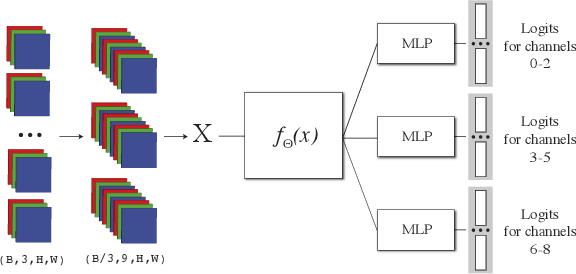

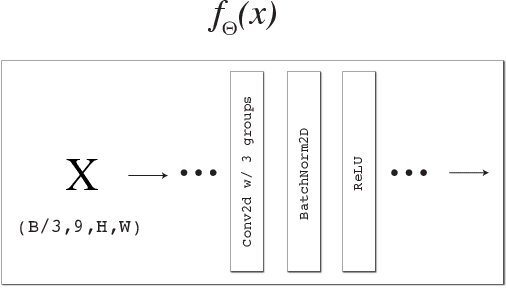

Pretraining & Reinforcement Learning: Sharpening the Axe Before Cutting the Tree

Oct 06, 2021

Abstract: Pretraining is a common technique in deep learning for increasing performance and reducing training time, with promising experimental results in deep reinforcement learning (RL). However, pretraining requires a relevant dataset for training. In this work, we evaluate the effectiveness of pretraining for RL tasks, with and without distracting backgrounds, using both large, publicly available datasets with minimal relevance and case-by-case generated datasets labeled via self-supervision. Results suggest that filters learned on less relevant datasets render pretraining ineffective, while filters learned on in-distribution datasets reliably reduce RL training time and improve performance after 80k RL training steps. We further investigate how, given a limited number of environment steps, to optimally divide the available steps between pretraining and RL training to maximize RL performance. Our code is available on GitHub.
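One simple way to frame the budget question in the abstract is a grid search over the fraction of a fixed environment-step budget spent on pretraining. The sketch below assumes a hypothetical `evaluate(pretrain_steps, rl_steps)` callback that would run pretraining followed by RL with those budgets and return final RL performance; it illustrates the search only and is not the procedure or the results reported in the work.

```python
from typing import Callable, Optional, Sequence, Tuple


def best_budget_split(
    total_steps: int,
    fractions: Sequence[float],
    evaluate: Callable[[int, int], float],
) -> Tuple[int, int, float]:
    """Grid-search the share of a fixed environment-step budget spent on pretraining.

    `evaluate` is a hypothetical callback: given (pretrain_steps, rl_steps) it
    would run both stages and return the resulting RL performance.
    """
    best: Optional[Tuple[int, int, float]] = None
    for frac in fractions:
        pretrain_steps = int(round(frac * total_steps))
        rl_steps = total_steps - pretrain_steps  # every step is spent exactly once
        score = evaluate(pretrain_steps, rl_steps)
        if best is None or score > best[2]:
            best = (pretrain_steps, rl_steps, score)
    if best is None:
        raise ValueError("fractions must be non-empty")
    return best
```

In practice each call to `evaluate` is an expensive training run, so a coarse grid of fractions (for example 0.0, 0.1, 0.2, ...) is a reasonable starting point.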

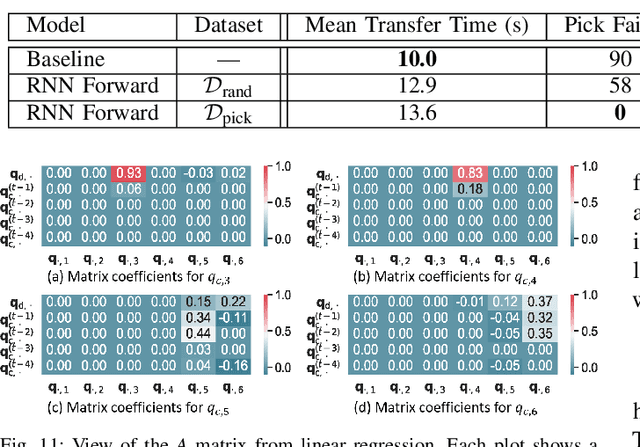

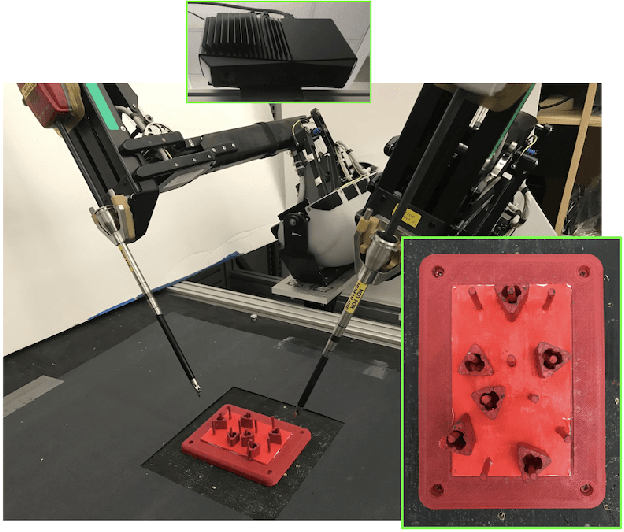

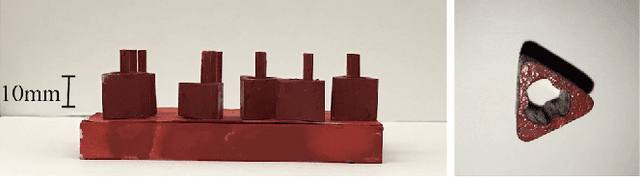

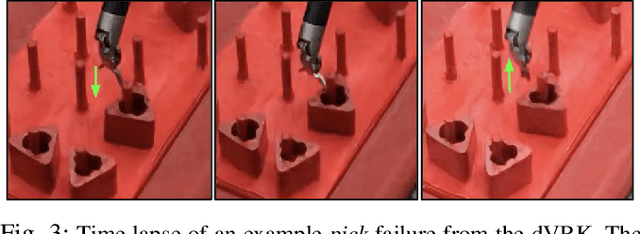

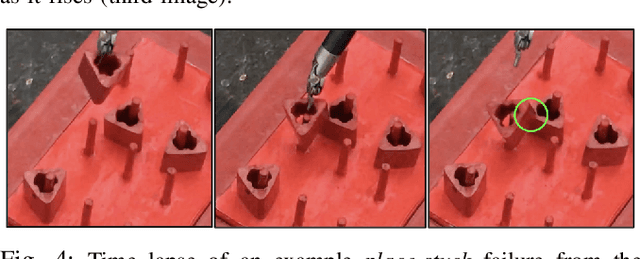

Superhuman Surgical Peg Transfer Using Depth-Sensing and Deep Recurrent Neural Networks

Dec 24, 2020

Abstract: We consider the automation of the well-known peg-transfer task from the Fundamentals of Laparoscopic Surgery (FLS). While human surgeons teleoperate robots to perform this task with great dexterity, it remains challenging to automate. We present an approach that leverages emerging innovations in depth sensing, deep learning, and Pieper's method for computing inverse kinematics with time-minimized joint motion. We use the da Vinci Research Kit (dVRK) surgical robot with a Zivid depth sensor, and automate three variants of the peg-transfer task: unilateral, bilateral without handovers, and bilateral with handovers. We use 3D-printed fiducial markers with depth sensing and a deep recurrent neural network to improve the precision of the dVRK to less than 1 mm. We report experimental results for 1800 block transfer trials. Results suggest that the fully automated system can outperform an experienced human surgical resident, who performs far better than untrained humans, in terms of both speed and success rate. For the most difficult variant of peg transfer (with handovers), we compare the performance of the surgical resident with that of the automated system over 120 trials each. The experienced surgical resident achieves a success rate of 93.2% with a mean transfer time of 8.6 seconds. The automated system achieves a success rate of 94.1% with a mean transfer time of 8.1 seconds. To our knowledge, this is the first fully automated system to achieve "superhuman" performance in both speed and success rate on peg transfer. Supplementary material is available at https://sites.google.com/view/surgicalpegtransfer.

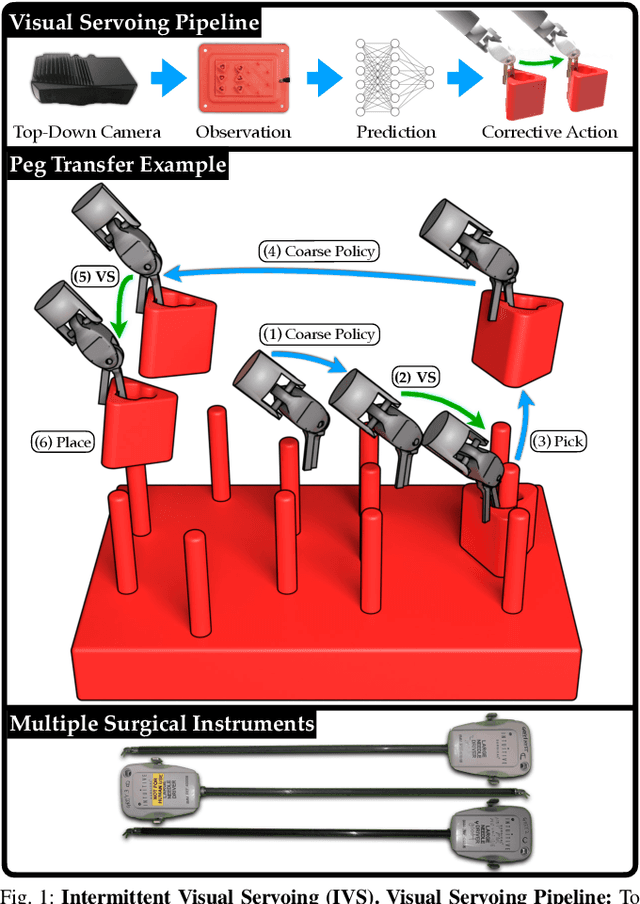

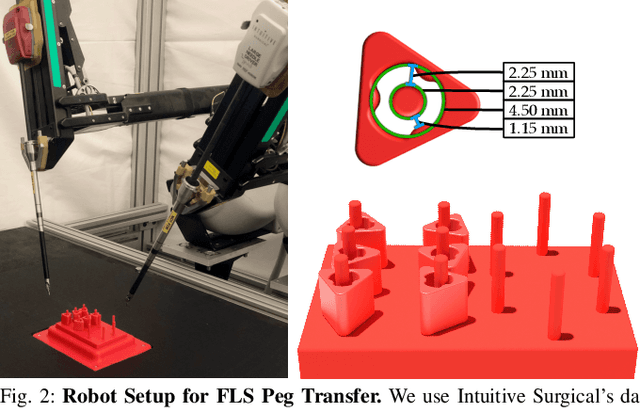

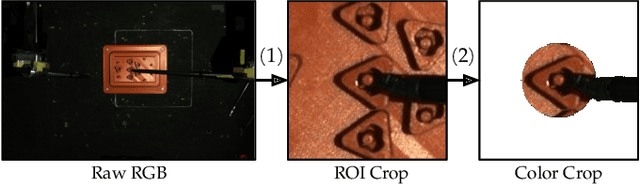

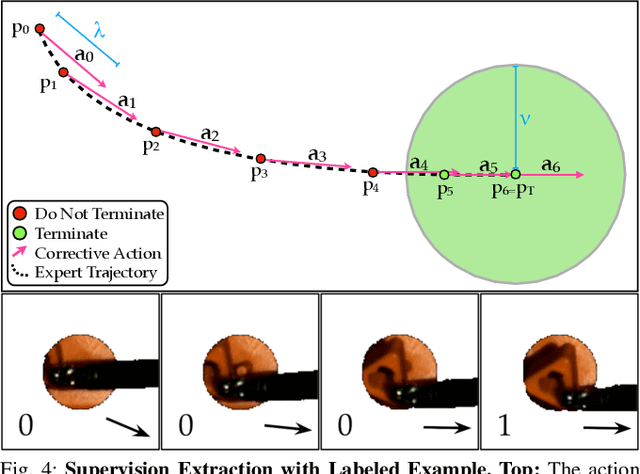

Intermittent Visual Servoing: Efficiently Learning Policies Robust to Instrument Changes for High-precision Surgical Manipulation

Nov 12, 2020

Abstract: Automation of surgical tasks using cable-driven robots is challenging due to backlash, hysteresis, and cable tension, and these issues are exacerbated because surgical instruments must often be changed during an operation. In this work, we propose a framework for automating high-precision surgical tasks by learning sample-efficient, accurate, closed-loop policies that operate directly on visual feedback instead of robot encoder estimates. This framework, which we call intermittent visual servoing (IVS), intermittently switches to a learned visual servo policy for high-precision segments of repetitive surgical tasks while relying on a coarse open-loop policy for segments where precision is not necessary. To compensate for cable-related effects, we apply imitation learning to rapidly train a policy that maps images of the workspace and instrument from a top-down RGB camera to small corrective motions. We train the policy using only 180 human demonstrations that are roughly 2 seconds each. Results on a da Vinci Research Kit suggest that combining the coarse policy with half a second of corrections from the learned policy during each high-precision segment improves the success rate on the Fundamentals of Laparoscopic Surgery peg transfer task from 72.9% to 99.2%, 31.3% to 99.2%, and 47.2% to 100.0% for three instruments with differing cable-related effects. In the contexts we studied, IVS attains the highest published success rates for automated surgical peg transfer and is significantly more reliable than previous techniques when instruments are changed. Supplementary material is available at https://tinyurl.com/ivs-icra.
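The switching logic of IVS can be sketched as a loop over task segments: a coarse open-loop move for every segment, followed by a few closed-loop corrections only where precision matters. This is a minimal sketch assuming hypothetical `open_loop_move` and `corrective_policy` callables and a 2-D workspace; in IVS the corrective policy is learned by imitation from top-down RGB images, whereas here it is a plain function of the current and target positions, a simplification for illustration only.

```python
from dataclasses import dataclass
from typing import Callable, List, Sequence, Tuple

Position = Tuple[float, float]  # hypothetical 2-D workspace position


@dataclass
class Segment:
    target: Position
    high_precision: bool


def run_ivs(
    segments: Sequence[Segment],
    open_loop_move: Callable[[Position], Position],
    corrective_policy: Callable[[Position, Position], Position],
    correction_steps: int = 5,
) -> List[Position]:
    """Coarse open-loop motion for every segment; closed-loop corrections only on
    high-precision segments. `correction_steps` is an arbitrary stand-in for the
    roughly half second of corrections described in the abstract."""
    reached = []
    for seg in segments:
        pos = open_loop_move(seg.target)  # coarse motion, subject to cable-related error
        if seg.high_precision:
            for _ in range(correction_steps):
                # Simplification: the real policy observes camera images, not positions.
                dx, dy = corrective_policy(pos, seg.target)
                pos = (pos[0] + dx, pos[1] + dy)
        reached.append(pos)
    return reached
```

For example, with an open-loop move that lands 0.8 units off target and a policy that removes half the remaining error per step, five corrections shrink the error on a high-precision segment by a factor of 32, while low-precision segments keep the coarse result.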

6-DoF Grasp Planning using Fast 3D Reconstruction and Grasp Quality CNN

Sep 18, 2020

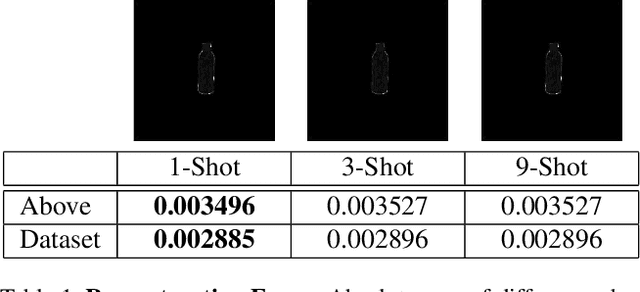

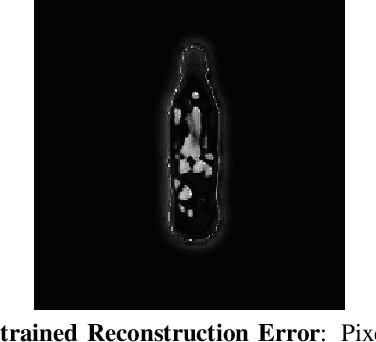

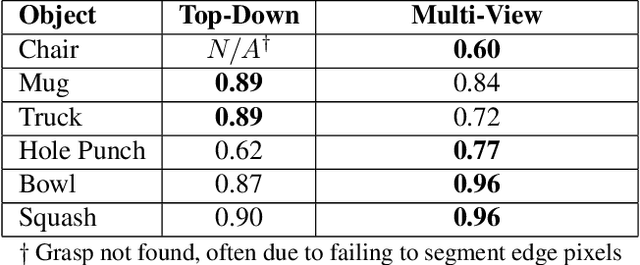

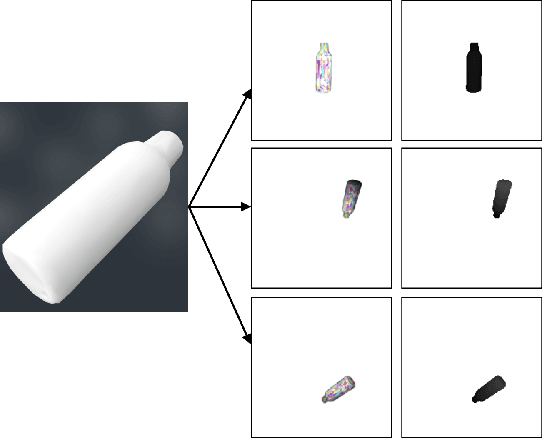

Abstract: Recent consumer demand for home robots has accelerated progress in robotic grasping. However, a key component of the perception pipeline, the depth camera, is still expensive and inaccessible to most consumers. In addition, recent improvements in grasp planning have come from leveraging large datasets and cloud robotics, and from limiting the state and action space to top-down grasps with 4 degrees of freedom (DoF). By leveraging multi-view geometry of the object with inexpensive equipment such as off-the-shelf RGB cameras and state-of-the-art algorithms such as Learnt Stereo Machines (LSM; Kar et al., 2017), the robot is able to generate more robust 6-DoF grasps from different angles. In this paper, we adapt LSM to graspable objects, evaluate the resulting grasps, and develop a 6-DoF grasp planner based on the Grasp Quality CNN (GQ-CNN; Mahler et al., 2017) that exploits multiple camera views to plan a robust grasp, even when no top-down grasp is possible.
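The planning step can be pictured as scoring candidate 6-DoF grasps proposed from several camera views and keeping the best one above a quality threshold, so a side grasp can win when no top-down grasp scores well. The sketch below assumes a hypothetical `quality_fn` standing in for a GQ-CNN-style scorer, a hypothetical `Grasp` record, and an arbitrary threshold; it is not the paper's planner.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Optional, Sequence


@dataclass(frozen=True)
class Grasp:
    view: str           # camera view that proposed the grasp (hypothetical label)
    position: tuple     # (x, y, z) of the gripper in the robot frame
    orientation: tuple  # quaternion (w, x, y, z) covering the 3 rotational DoF


def plan_grasp(
    candidates_by_view: Dict[str, Sequence[Grasp]],
    quality_fn: Callable[[Grasp], float],
    min_quality: float = 0.5,
) -> Optional[Grasp]:
    """Return the highest-quality candidate across all views, or None if no
    candidate reaches `min_quality`. `quality_fn` is a stand-in for a learned scorer."""
    best: Optional[Grasp] = None
    best_q = float("-inf")
    for candidates in candidates_by_view.values():
        for grasp in candidates:
            q = quality_fn(grasp)
            if q >= min_quality and q > best_q:
                best, best_q = grasp, q
    return best
```

Returning `None` rather than a low-quality grasp lets the caller request another view or reposition the cameras.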

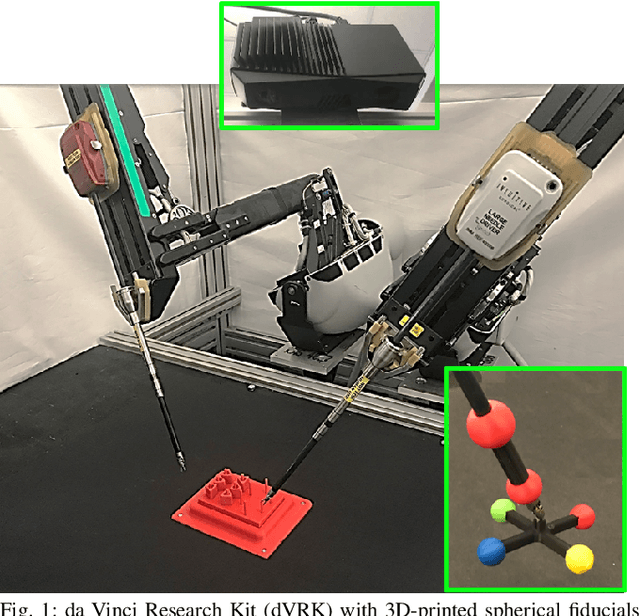

Efficiently Calibrating Cable-Driven Surgical Robots With RGBD Sensing, Temporal Windowing, and Linear and Recurrent Neural Network Compensation

Mar 19, 2020

Abstract: Automation of surgical subtasks using cable-driven robotic surgical assistants (RSAs) such as Intuitive Surgical's da Vinci Research Kit (dVRK) is challenging due to imprecision in control from cable-related effects such as backlash, stretch, and hysteresis. We propose a novel approach to efficiently calibrate a dVRK by placing a 3D-printed fiducial coordinate frame on the arm and end-effector that is tracked using RGBD sensing. To measure coupling effects between joints and history-dependent effects, we analyze data from sampled trajectories and consider 13 modeling approaches using LSTM recurrent neural networks and linear models with varying temporal window lengths to provide corrective feedback. With the proposed method, data collection takes 31 minutes to produce 1800 samples, and model training takes less than a minute. Results suggest that the resulting model can reduce the mean tracking error of the physical robot from 2.96 mm to 0.65 mm on a test set of reference trajectories. We evaluate the model by executing open-loop trajectories of the FLS peg transfer surgeon training task. Results suggest that the best approach increases the success rate from 39.4% to 96.7%, comparable to the performance of an expert surgical resident. Supplementary material, including 3D-printable models, is available at https://sites.google.com/berkeley.edu/surgical-calibration.
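To make "linear models with varying temporal window length" concrete, the sketch below stacks the last `window` commanded positions of a single joint (plus a bias term) into a feature row and fits a linear model by least squares, so the prediction can depend on motion history as hysteresis and backlash do. It assumes a single joint, a model that predicts the measured position from recent commands, and a small stdlib normal-equations solver; the paper's exact features, targets, and LSTM variants are not reproduced here.

```python
from typing import List, Sequence


def windowed_features(commands: Sequence[float], window: int) -> List[List[float]]:
    """For each step t >= window - 1, stack commands[t], commands[t-1], ... plus a bias of 1.0."""
    rows = []
    for t in range(window - 1, len(commands)):
        rows.append([commands[t - k] for k in range(window)] + [1.0])
    return rows


def solve_least_squares(X: List[List[float]], y: Sequence[float]) -> List[float]:
    """Solve the normal equations (X^T X) w = X^T y by Gaussian elimination with partial pivoting."""
    d = len(X[0])
    A = [[sum(row[i] * row[j] for row in X) for j in range(d)] for i in range(d)]
    b = [sum(row[i] * yi for row, yi in zip(X, y)) for i in range(d)]
    for col in range(d):
        pivot = max(range(col, d), key=lambda r: abs(A[r][col]))
        A[col], A[pivot] = A[pivot], A[col]
        b[col], b[pivot] = b[pivot], b[col]
        for r in range(col + 1, d):
            f = A[r][col] / A[col][col]
            for c in range(col, d):
                A[r][c] -= f * A[col][c]
            b[r] -= f * b[col]
    w = [0.0] * d
    for r in reversed(range(d)):  # back substitution
        w[r] = (b[r] - sum(A[r][c] * w[c] for c in range(r + 1, d))) / A[r][r]
    return w


def predict_position(w: Sequence[float], features: Sequence[float]) -> float:
    """Predicted measured position for one windowed feature row."""
    return sum(wi * fi for wi, fi in zip(w, features))
```

Sweeping `window` over several lengths and comparing held-out error mirrors, in miniature, the kind of comparison the abstract describes; longer windows capture more history at the cost of more parameters.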

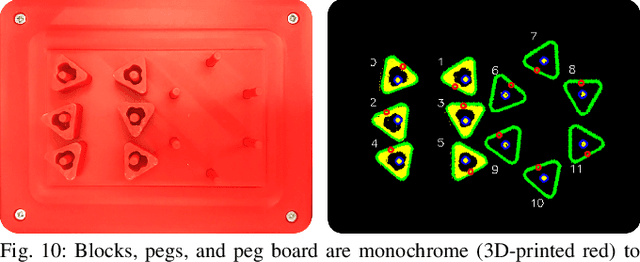

Applying Depth-Sensing to Automated Surgical Manipulation with a da Vinci Robot

Feb 15, 2020

Abstract: Recent advances in depth sensing have significantly increased accuracy, resolution, and frame rate, as exemplified by the Zivid RGBD camera, with 1920x1200 resolution at 13 frames per second. In this study, we explore the potential of depth sensing for efficient and reliable automation of surgical subtasks. We consider a monochrome (all red) version of the peg transfer task from the Fundamentals of Laparoscopic Surgery training suite, implemented with the da Vinci Research Kit (dVRK). We use calibration techniques that reduce the error of the imprecise, cable-driven da Vinci from 4-5 mm to 1-2 mm in the task space. We report experimental results for a handover-free version of the peg transfer task, performing 20 and 5 physical episodes with single- and bilateral-arm setups, respectively. Results over 236 and 49 total block transfer attempts for the single- and bilateral-arm cases suggest per-block success rates of 86.9% and 78.0%, with respective block transfer times of 10.02 and 5.72 seconds. Supplementary material is available at https://sites.google.com/view/peg-transfer.

Pay Attention: Leveraging Sequence Models to Predict the Useful Life of Batteries

Oct 05, 2019

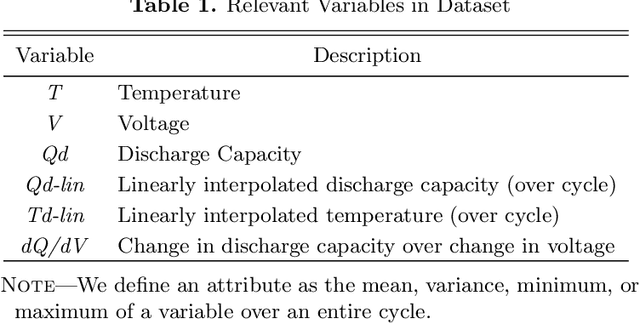

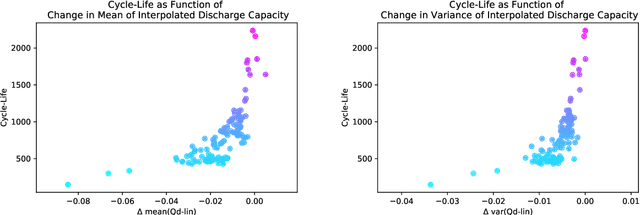

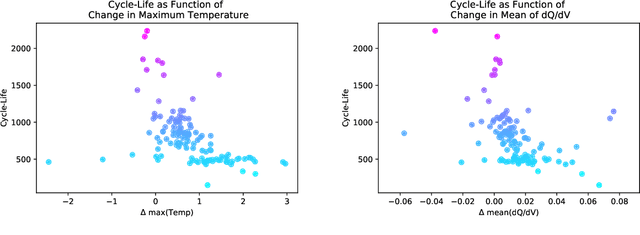

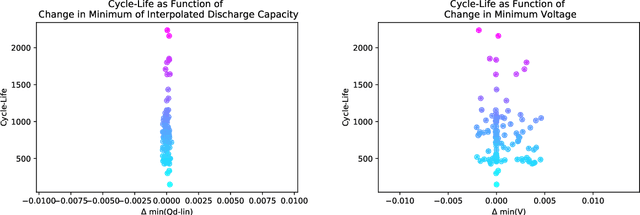

Abstract: We use data on 124 batteries released by Stanford University to address two problems: first, the binary classification problem of determining whether a battery is "good" or "bad" (i.e., whether it will last longer than a certain threshold of cycles) given only the first 5 cycles of data; and second, the prediction problem of determining the exact number of cycles a battery will last given the first 100 cycles of data. We approach the problem from a purely data-driven standpoint, hoping to use deep learning to learn, directly from the data sequences, the patterns that the Stanford team engineered by hand. For both problems, we used a similar deep network design that included an optional 1-D convolution, LSTMs, and an optional attention layer, followed by fully connected layers to produce the output. For the classification task, we achieved very competitive results, with validation accuracies above 90% and a test accuracy of 95%, compared to the 97.5% test accuracy of the current leading model. For the prediction task, we also achieved competitive results, with a test MAPE of 12.5% compared with the 9.1% MAPE of the current leading model (Severson et al. 2019).
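The two tasks in the abstract reduce to a thresholded label and a regression metric. The sketch below shows the standard mean absolute percentage error (MAPE) used to compare the 12.5% and 9.1% figures, and a "good"/"bad" labeling rule based on a cycle-life threshold. The threshold value is not given above, so it is left as a parameter, and the function names are hypothetical.

```python
from typing import Sequence


def mape(actual: Sequence[float], predicted: Sequence[float]) -> float:
    """Mean absolute percentage error, in percent: 100/n * sum(|a - p| / |a|).

    Assumes no actual value is zero (cycle lives are positive)."""
    if not actual or len(actual) != len(predicted):
        raise ValueError("inputs must be non-empty and of equal length")
    return 100.0 * sum(abs(a - p) / abs(a) for a, p in zip(actual, predicted)) / len(actual)


def is_good_battery(cycle_life: int, threshold: int) -> bool:
    """Binary label: True ("good") if the battery lasts longer than `threshold` cycles."""
    return cycle_life > threshold
```

For instance, predicting 110 and 180 cycles for batteries that actually last 100 and 200 cycles gives a MAPE of 10%, since each prediction is off by 10% of the true value.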
