Renjie Wan

Nanyang Technological University, Singapore

Align 3D Representation and Text Embedding for 3D Content Personalization

Aug 23, 2025Abstract:Recent advances in NeRF and 3DGS have significantly enhanced the efficiency and quality of 3D content synthesis. However, efficient personalization of generated 3D content remains a critical challenge. Current 3D personalization approaches predominantly rely on knowledge distillation-based methods, which require computationally expensive retraining procedures. To address this challenge, we propose \textbf{Invert3D}, a novel framework for convenient 3D content personalization. Nowadays, vision-language models such as CLIP enable direct image personalization through aligned vision-text embedding spaces. However, the inherent structural differences between 3D content and 2D images preclude direct application of these techniques to 3D personalization. Our approach bridges this gap by establishing alignment between 3D representations and text embedding spaces. Specifically, we develop a camera-conditioned 3D-to-text inverse mechanism that projects 3D contents into a 3D embedding aligned with text embeddings. This alignment enables efficient manipulation and personalization of 3D content through natural language prompts, eliminating the need for computationally retraining procedures. Extensive experiments demonstrate that Invert3D achieves effective personalization of 3D content. Our work is available at: https://github.com/qsong2001/Invert3D.

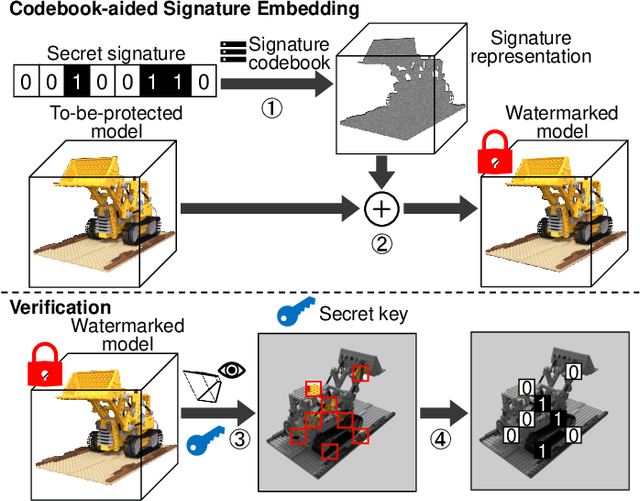

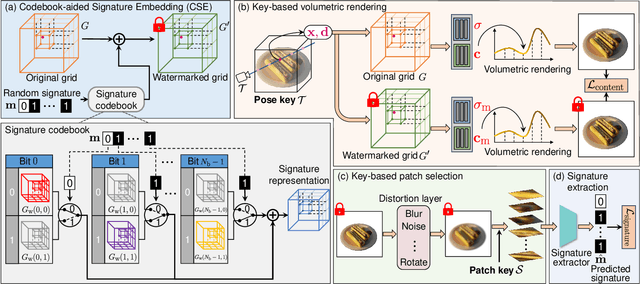

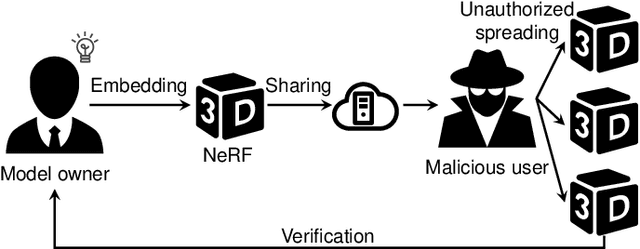

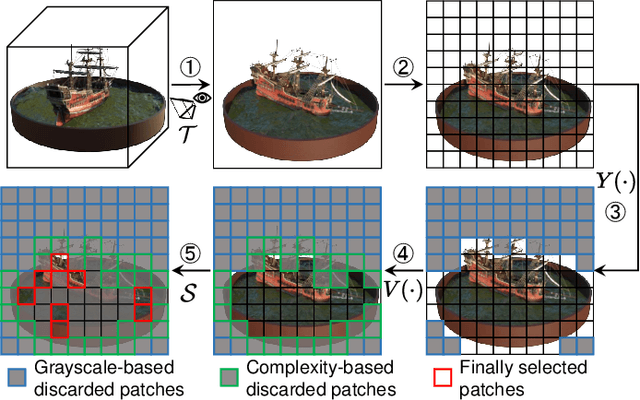

The NeRF Signature: Codebook-Aided Watermarking for Neural Radiance Fields

Feb 26, 2025

Abstract:Neural Radiance Fields (NeRF) have been gaining attention as a significant form of 3D content representation. With the proliferation of NeRF-based creations, the need for copyright protection has emerged as a critical issue. Although some approaches have been proposed to embed digital watermarks into NeRF, they often neglect essential model-level considerations and incur substantial time overheads, resulting in reduced imperceptibility and robustness, along with user inconvenience. In this paper, we extend the previous criteria for image watermarking to the model level and propose NeRF Signature, a novel watermarking method for NeRF. We employ a Codebook-aided Signature Embedding (CSE) that does not alter the model structure, thereby maintaining imperceptibility and enhancing robustness at the model level. Furthermore, after optimization, any desired signatures can be embedded through the CSE, and no fine-tuning is required when NeRF owners want to use new binary signatures. Then, we introduce a joint pose-patch encryption watermarking strategy to hide signatures into patches rendered from a specific viewpoint for higher robustness. In addition, we explore a Complexity-Aware Key Selection (CAKS) scheme to embed signatures in high visual complexity patches to enhance imperceptibility. The experimental results demonstrate that our method outperforms other baseline methods in terms of imperceptibility and robustness. The source code is available at: https://github.com/luo-ziyuan/NeRF_Signature.

CogSimulator: A Model for Simulating User Cognition & Behavior with Minimal Data for Tailored Cognitive Enhancement

Dec 10, 2024Abstract:The interplay between cognition and gaming, notably through educational games enhancing cognitive skills, has garnered significant attention in recent years. This research introduces the CogSimulator, a novel algorithm for simulating user cognition in small-group settings with minimal data, as the educational game Wordle exemplifies. The CogSimulator employs Wasserstein-1 distance and coordinates search optimization for hyperparameter tuning, enabling precise few-shot predictions in new game scenarios. Comparative experiments with the Wordle dataset illustrate that our model surpasses most conventional machine learning models in mean Wasserstein-1 distance, mean squared error, and mean accuracy, showcasing its efficacy in cognitive enhancement through tailored game design.

GaussianMarker: Uncertainty-Aware Copyright Protection of 3D Gaussian Splatting

Oct 31, 2024

Abstract:3D Gaussian Splatting (3DGS) has become a crucial method for acquiring 3D assets. To protect the copyright of these assets, digital watermarking techniques can be applied to embed ownership information discreetly within 3DGS models. However, existing watermarking methods for meshes, point clouds, and implicit radiance fields cannot be directly applied to 3DGS models, as 3DGS models use explicit 3D Gaussians with distinct structures and do not rely on neural networks. Naively embedding the watermark on a pre-trained 3DGS can cause obvious distortion in rendered images. In our work, we propose an uncertainty-based method that constrains the perturbation of model parameters to achieve invisible watermarking for 3DGS. At the message decoding stage, the copyright messages can be reliably extracted from both 3D Gaussians and 2D rendered images even under various forms of 3D and 2D distortions. We conduct extensive experiments on the Blender, LLFF and MipNeRF-360 datasets to validate the effectiveness of our proposed method, demonstrating state-of-the-art performance on both message decoding accuracy and view synthesis quality.

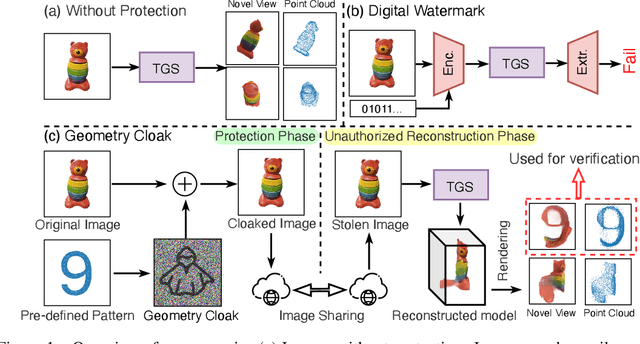

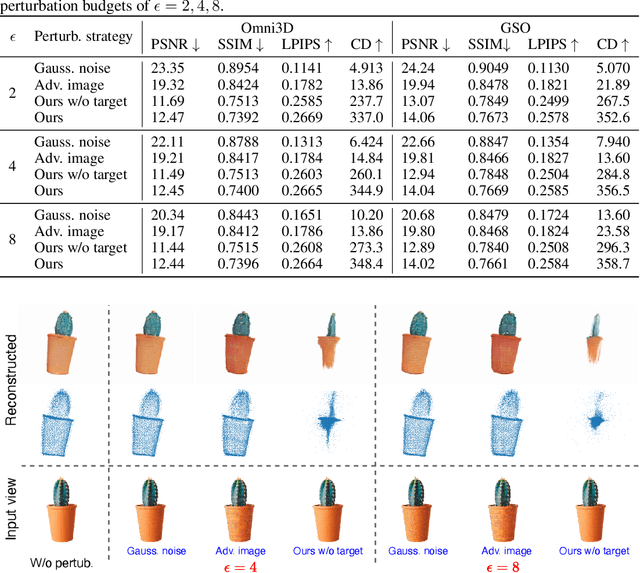

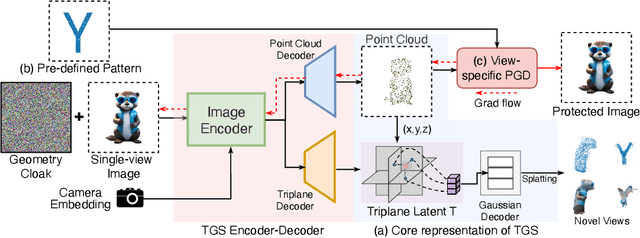

Geometry Cloak: Preventing TGS-based 3D Reconstruction from Copyrighted Images

Oct 30, 2024

Abstract:Single-view 3D reconstruction methods like Triplane Gaussian Splatting (TGS) have enabled high-quality 3D model generation from just a single image input within seconds. However, this capability raises concerns about potential misuse, where malicious users could exploit TGS to create unauthorized 3D models from copyrighted images. To prevent such infringement, we propose a novel image protection approach that embeds invisible geometry perturbations, termed "geometry cloaks", into images before supplying them to TGS. These carefully crafted perturbations encode a customized message that is revealed when TGS attempts 3D reconstructions of the cloaked image. Unlike conventional adversarial attacks that simply degrade output quality, our method forces TGS to fail the 3D reconstruction in a specific way - by generating an identifiable customized pattern that acts as a watermark. This watermark allows copyright holders to assert ownership over any attempted 3D reconstructions made from their protected images. Extensive experiments have verified the effectiveness of our geometry cloak. Our project is available at https://qsong2001.github.io/geometry_cloak.

Open-Set Deepfake Detection: A Parameter-Efficient Adaptation Method with Forgery Style Mixture

Aug 23, 2024

Abstract:Open-set face forgery detection poses significant security threats and presents substantial challenges for existing detection models. These detectors primarily have two limitations: they cannot generalize across unknown forgery domains and inefficiently adapt to new data. To address these issues, we introduce an approach that is both general and parameter-efficient for face forgery detection. It builds on the assumption that different forgery source domains exhibit distinct style statistics. Previous methods typically require fully fine-tuning pre-trained networks, consuming substantial time and computational resources. In turn, we design a forgery-style mixture formulation that augments the diversity of forgery source domains, enhancing the model's generalizability across unseen domains. Drawing on recent advancements in vision transformers (ViT) for face forgery detection, we develop a parameter-efficient ViT-based detection model that includes lightweight forgery feature extraction modules and enables the model to extract global and local forgery clues simultaneously. We only optimize the inserted lightweight modules during training, maintaining the original ViT structure with its pre-trained ImageNet weights. This training strategy effectively preserves the informative pre-trained knowledge while flexibly adapting the model to the task of Deepfake detection. Extensive experimental results demonstrate that the designed model achieves state-of-the-art generalizability with significantly reduced trainable parameters, representing an important step toward open-set Deepfake detection in the wild.

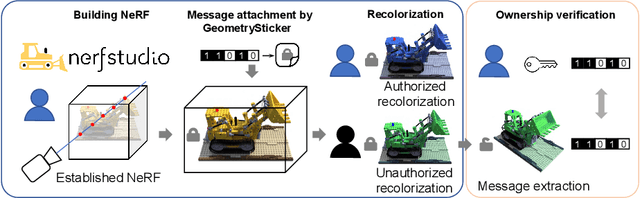

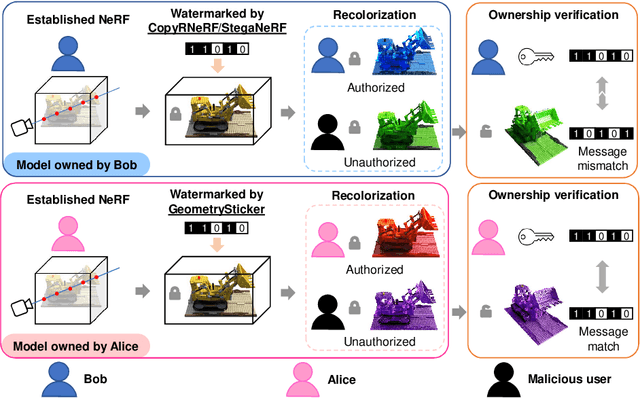

GeometrySticker: Enabling Ownership Claim of Recolorized Neural Radiance Fields

Jul 18, 2024

Abstract:Remarkable advancements in the recolorization of Neural Radiance Fields (NeRF) have simplified the process of modifying NeRF's color attributes. Yet, with the potential of NeRF to serve as shareable digital assets, there's a concern that malicious users might alter the color of NeRF models and falsely claim the recolorized version as their own. To safeguard against such breaches of ownership, enabling original NeRF creators to establish rights over recolorized NeRF is crucial. While approaches like CopyRNeRF have been introduced to embed binary messages into NeRF models as digital signatures for copyright protection, the process of recolorization can remove these binary messages. In our paper, we present GeometrySticker, a method for seamlessly integrating binary messages into the geometry components of radiance fields, akin to applying a sticker. GeometrySticker can embed binary messages into NeRF models while preserving the effectiveness of these messages against recolorization. Our comprehensive studies demonstrate that GeometrySticker is adaptable to prevalent NeRF architectures and maintains a commendable level of robustness against various distortions. Project page: https://kevinhuangxf.github.io/GeometrySticker/.

Imaging Interiors: An Implicit Solution to Electromagnetic Inverse Scattering Problems

Jul 12, 2024

Abstract:Electromagnetic Inverse Scattering Problems (EISP) have gained wide applications in computational imaging. By solving EISP, the internal relative permittivity of the scatterer can be non-invasively determined based on the scattered electromagnetic fields. Despite previous efforts to address EISP, achieving better solutions to this problem has remained elusive, due to the challenges posed by inversion and discretization. This paper tackles those challenges in EISP via an implicit approach. By representing the scatterer's relative permittivity as a continuous implicit representation, our method is able to address the low-resolution problems arising from discretization. Further, optimizing this implicit representation within a forward framework allows us to conveniently circumvent the challenges posed by inverse estimation. Our approach outperforms existing methods on standard benchmark datasets. Project page: https://luo-ziyuan.github.io/Imaging-Interiors

Protecting NeRFs' Copyright via Plug-And-Play Watermarking Base Model

Jul 10, 2024

Abstract:Neural Radiance Fields (NeRFs) have become a key method for 3D scene representation. With the rising prominence and influence of NeRF, safeguarding its intellectual property has become increasingly important. In this paper, we propose \textbf{NeRFProtector}, which adopts a plug-and-play strategy to protect NeRF's copyright during its creation. NeRFProtector utilizes a pre-trained watermarking base model, enabling NeRF creators to embed binary messages directly while creating their NeRF. Our plug-and-play property ensures NeRF creators can flexibly choose NeRF variants without excessive modifications. Leveraging our newly designed progressive distillation, we demonstrate performance on par with several leading-edge neural rendering methods. Our project is available at: \url{https://qsong2001.github.io/NeRFProtector}.

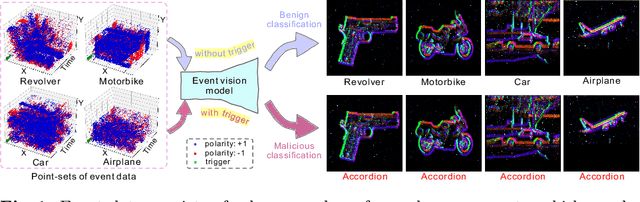

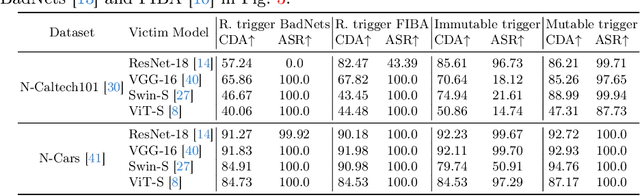

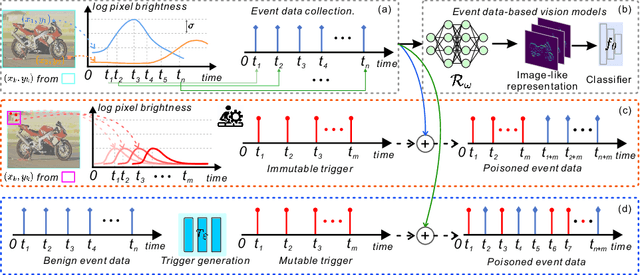

Event Trojan: Asynchronous Event-based Backdoor Attacks

Jul 09, 2024

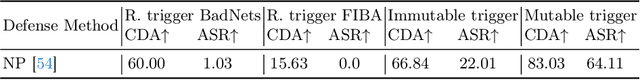

Abstract:As asynchronous event data is more frequently engaged in various vision tasks, the risk of backdoor attacks becomes more evident. However, research into the potential risk associated with backdoor attacks in asynchronous event data has been scarce, leaving related tasks vulnerable to potential threats. This paper has uncovered the possibility of directly poisoning event data streams by proposing Event Trojan framework, including two kinds of triggers, i.e., immutable and mutable triggers. Specifically, our two types of event triggers are based on a sequence of simulated event spikes, which can be easily incorporated into any event stream to initiate backdoor attacks. Additionally, for the mutable trigger, we design an adaptive learning mechanism to maximize its aggressiveness. To improve the stealthiness, we introduce a novel loss function that constrains the generated contents of mutable triggers, minimizing the difference between triggers and original events while maintaining effectiveness. Extensive experiments on public event datasets show the effectiveness of the proposed backdoor triggers. We hope that this paper can draw greater attention to the potential threats posed by backdoor attacks on event-based tasks. Our code is available at https://github.com/rfww/EventTrojan.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge