Nam Ling

Analysis of Converged 3D Gaussian Splatting Solutions: Density Effects and Prediction Limit

Feb 09, 2026

Abstract:We investigate what structure emerges in 3D Gaussian Splatting (3DGS) solutions from standard multi-view optimization. We term these Rendering-Optimal References (RORs) and analyze their statistical properties, revealing stable patterns: mixture-structured scales and bimodal radiance across diverse scenes. To understand what determines these parameters, we apply learnability probes by training predictors to reconstruct RORs from point clouds without rendering supervision. Our analysis uncovers fundamental density-stratification. Dense regions exhibit geometry-correlated parameters amenable to render-free prediction, while sparse regions show systematic failure across architectures. We formalize this through variance decomposition, demonstrating that visibility heterogeneity creates covariance-dominated coupling between geometric and appearance parameters in sparse regions. This reveals the dual character of RORs: geometric primitives where point clouds suffice, and view synthesis primitives where multi-view constraints are essential. We provide density-aware strategies that improve training robustness and discuss architectural implications for systems that adaptively balance feed-forward prediction and rendering-based refinement.

Efficient Post-Training Pruning of Large Language Models with Statistical Correction

Feb 07, 2026

Abstract:Post-training pruning is an effective approach for reducing the size and inference cost of large language models (LLMs), but existing methods often face a trade-off between pruning quality and computational efficiency. Heuristic pruning methods are efficient but sensitive to activation outliers, while reconstruction-based approaches improve fidelity at the cost of heavy computation. In this work, we propose a lightweight post-training pruning framework based on first-order statistical properties of model weights and activations. During pruning, channel-wise statistics are used to calibrate magnitude-based importance scores, reducing bias from activation-dominated channels. After pruning, we apply an analytic energy compensation to correct distributional distortions caused by weight removal. Both steps operate without retraining, gradients, or second-order information. Experiments across multiple LLM families, sparsity patterns, and evaluation tasks show that the proposed approach improves pruning performance while maintaining computational cost comparable to heuristic methods. The results suggest that simple statistical corrections can be effective for post-training pruning of LLMs.

SCONE: A Practical, Constraint-Aware Plug-in for Latent Encoding in Learned DNA Storage

Feb 05, 2026

Abstract:DNA storage has matured from concept to practical stage, yet its integration with neural compression pipelines remains inefficient. Early DNA encoders applied redundancy-heavy constraint layers atop raw binary data - workable but primitive. Recent neural codecs compress data into learned latent representations with rich statistical structure, yet still convert these latents to DNA via naive binary-to-quaternary transcoding, discarding the entropy model's optimization. This mismatch undermines compression efficiency and complicates the encoding stack. We propose SCONE, a plug-in module that collapses latent compression and DNA encoding into a single step. SCONE performs quaternary arithmetic coding directly on the latent space in DNA bases. Its Constraint-Aware Adaptive Coding module dynamically steers the entropy encoder's learned probability distribution to enforce biochemical constraints - Guanine-Cytosine (GC) balance and homopolymer suppression - deterministically during encoding, eliminating post-hoc correction. The design preserves full reversibility and exploits the hyperprior model's learned priors without modification. Experiments show SCONE achieves near-perfect constraint satisfaction with negligible computational overhead (<2% latency), establishing a latent-agnostic interface for end-to-end DNA-compatible learned codecs.
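
The idea of steering a probability distribution so that biochemical constraints hold during encoding can be illustrated with a minimal sketch. This is not SCONE's actual module: the function name `constrain_distribution`, the run limit, the GC window, and the GC thresholds are all hypothetical choices made for illustration. The sketch masks any base that would extend a homopolymer run or push the recent GC fraction further out of range, then renormalizes. Because the mask depends only on bases already emitted, a decoder can recompute it identically, which is what keeps this kind of scheme reversible.

```python
from typing import Dict

BASES = "ACGT"


def constrain_distribution(
    probs: Dict[str, float],
    history: str,
    max_run: int = 3,        # hypothetical homopolymer limit
    gc_window: int = 20,     # hypothetical sliding-window length
    gc_low: float = 0.4,     # hypothetical lower GC bound
    gc_high: float = 0.6,    # hypothetical upper GC bound
) -> Dict[str, float]:
    """Mask bases that would violate a constraint, then renormalize."""
    allowed = set(BASES)

    # Homopolymer suppression: do not extend a run that is already max_run long.
    if len(history) >= max_run and len(set(history[-max_run:])) == 1:
        allowed.discard(history[-1])

    # GC balance: once a full window is available, forbid moving further
    # outside the [gc_low, gc_high] band.
    window = history[-gc_window:]
    if len(window) == gc_window:
        gc_fraction = sum(base in "GC" for base in window) / gc_window
        if gc_fraction >= gc_high:
            allowed -= {"G", "C"}
        elif gc_fraction <= gc_low:
            allowed -= {"A", "T"}

    masked = {b: (probs[b] if b in allowed else 0.0) for b in BASES}
    total = sum(masked.values())
    if total == 0.0:
        # The model put no mass on any allowed base: fall back to uniform.
        return {b: (1.0 / len(allowed) if b in allowed else 0.0) for b in BASES}
    return {b: p / total for b, p in masked.items()}
```

With these two rules at least one base always remains allowed, since the homopolymer rule removes one base and the GC rule removes at most one complementary pair. An arithmetic coder would consume the returned distribution in place of the unconstrained one at each step.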

Thinking Like Van Gogh: Structure-Aware Style Transfer via Flow-Guided 3D Gaussian Splatting

Jan 15, 2026

Abstract:In 1888, Vincent van Gogh wrote, "I am seeking exaggeration in the essential." This principle, amplifying structural form while suppressing photographic detail, lies at the core of Post-Impressionist art. However, most existing 3D style transfer methods invert this philosophy, treating geometry as a rigid substrate for surface-level texture projection. To authentically reproduce Post-Impressionist stylization, geometric abstraction must be embraced as the primary vehicle of expression. We propose a flow-guided geometric advection framework for 3D Gaussian Splatting (3DGS) that operationalizes this principle in a mesh-free setting. Our method extracts directional flow fields from 2D paintings and back-propagates them into 3D space, rectifying Gaussian primitives to form flow-aligned brushstrokes that conform to scene topology without relying on explicit mesh priors. This enables expressive structural deformation driven directly by painterly motion rather than photometric constraints. Our contributions are threefold: (1) a projection-based, mesh-free flow guidance mechanism that transfers 2D artistic motion into 3D Gaussian geometry; (2) a luminance-structure decoupling strategy that isolates geometric deformation from color optimization, mitigating artifacts during aggressive structural abstraction; and (3) a VLM-as-a-Judge evaluation framework that assesses artistic authenticity through aesthetic judgment instead of conventional pixel-level metrics, explicitly addressing the subjective nature of artistic stylization.

TVC: Tokenized Video Compression with Ultra-Low Bitrate

Apr 22, 2025

Abstract:Tokenized visual representations have shown great promise in image compression, yet their extension to video remains underexplored due to the challenges posed by complex temporal dynamics and stringent bitrate constraints. In this paper, we propose Tokenized Video Compression (TVC), the first token-based dual-stream video compression framework designed to operate effectively at ultra-low bitrates. TVC leverages the powerful Cosmos video tokenizer to extract both discrete and continuous token streams. The discrete tokens (i.e., code maps generated by FSQ) are partially masked using a strategic masking scheme, then compressed losslessly with a discrete checkerboard context model to reduce transmission overhead. The masked tokens are reconstructed by a decoder-only transformer with spatiotemporal token prediction. Meanwhile, the continuous tokens, produced via an autoencoder (AE), are quantized and compressed using a continuous checkerboard context model, providing complementary continuous information at ultra-low bitrate. At the decoder side, both streams are fused using ControlNet, with multi-scale hierarchical integration to ensure high perceptual quality alongside strong fidelity in reconstruction. This work mitigates the long-standing skepticism about the practicality of tokenized video compression and opens up new avenues for semantics-aware, token-native video compression.
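
For readers unfamiliar with checkerboard context models, the sketch below shows only the generic two-pass partition they rely on, not TVC's model. The helper names `checkerboard_mask` and `split_tokens` are hypothetical, and treating even-parity positions as anchors is an assumed convention. Anchor tokens are coded first without spatial context; the remaining tokens are then coded conditioned on their already-decoded anchor neighbours.

```python
from typing import List, Tuple


def checkerboard_mask(height: int, width: int) -> List[List[bool]]:
    """True marks anchor positions (assumed here: row + col is even)."""
    return [[(r + c) % 2 == 0 for c in range(width)] for r in range(height)]


def split_tokens(tokens: List[List[int]]) -> Tuple[List[int], List[int]]:
    """Split a 2D token map into anchor and non-anchor tokens in raster order."""
    mask = checkerboard_mask(len(tokens), len(tokens[0]))
    anchors, non_anchors = [], []
    for token_row, mask_row in zip(tokens, mask):
        for token, is_anchor in zip(token_row, mask_row):
            (anchors if is_anchor else non_anchors).append(token)
    return anchors, non_anchors
```

Every non-anchor position has only anchor positions as its four direct neighbours, which is why the second pass can be run in parallel over all non-anchors.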

Stereo Image Coding for Machines with Joint Visual Feature Compression

Feb 20, 2025

Abstract:2D image coding for machines (ICM) has achieved great success in coding efficiency, while less effort has been devoted to stereo images. To promote the efficiency of stereo image compression (SIC) and intelligent analysis, stereo image coding for machines (SICM) is formulated and explored in this paper. More specifically, a machine vision-oriented stereo feature compression network (MVSFC-Net) is proposed for SICM, where the stereo visual features are effectively extracted, compressed, and transmitted for 3D visual tasks. To efficiently compress stereo visual features in MVSFC-Net, a stereo multi-scale feature compression (SMFC) module is designed to gradually transform sparse stereo multi-scale features into compact joint visual representations by removing spatial, inter-view, and cross-scale redundancies simultaneously. Experimental results show that the proposed MVSFC-Net obtains superior compression efficiency as well as 3D visual task performance when compared with the existing ICM anchors recommended by MPEG and the state-of-the-art SIC method.

WTDUN: Wavelet Tree-Structured Sampling and Deep Unfolding Network for Image Compressed Sensing

Nov 25, 2024

Abstract:Deep unfolding networks have gained increasing attention in the field of compressed sensing (CS) owing to their theoretical interpretability and superior reconstruction performance. However, most existing deep unfolding methods often face the following issues: 1) they learn directly from single-channel images, leading to a simple feature representation that does not fully capture complex features; and 2) they treat various image components uniformly, ignoring the characteristics of different components. To address these issues, we propose a novel wavelet-domain deep unfolding framework named WTDUN, which operates directly on the multi-scale wavelet subbands. Our method utilizes the intrinsic sparsity and multi-scale structure of wavelet coefficients to achieve a tree-structured sampling and reconstruction, effectively capturing and highlighting the most important features within images. Specifically, the design of tree-structured reconstruction aims to capture the inter-dependencies among the multi-scale subbands, enabling the identification of both fine and coarse features, which can lead to a marked improvement in reconstruction quality. Furthermore, a wavelet domain adaptive sampling method is proposed to greatly improve the sampling capability, which is realized by assigning measurements to each wavelet subband based on its importance. Unlike pure deep learning methods that treat all components uniformly, our method introduces a targeted focus on important subbands, considering their energy and sparsity. This targeted strategy lets us capture key information more efficiently while discarding less important information, resulting in a more effective and detailed reconstruction. Extensive experimental results on various datasets validate the superior performance of our proposed method.
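
Assigning measurements to each subband by importance can be made concrete with a small sketch. The abstract says importance reflects energy and sparsity but gives no formula, so the version below is an assumption that uses energy alone: each subband receives a share of the measurement budget proportional to its sum of squared coefficients, capped at its coefficient count. The function name `allocate_measurements` is hypothetical.

```python
from typing import List, Sequence


def allocate_measurements(
    subbands: Sequence[Sequence[float]], total: int
) -> List[int]:
    """Split `total` measurements across subbands in proportion to energy."""
    energies = [sum(c * c for c in band) for band in subbands]
    sizes = [len(band) for band in subbands]
    energy_sum = sum(energies)

    if energy_sum == 0:
        # No signal anywhere: fall back to equal weights.
        weights = [1.0 / len(subbands)] * len(subbands)
    else:
        weights = [e / energy_sum for e in energies]

    # Proportional share, rounded down and capped at the subband size.
    alloc = [min(int(w * total), n) for w, n in zip(weights, sizes)]

    # Hand leftover measurements to the highest-energy subbands with room.
    leftover = total - sum(alloc)
    for i in sorted(range(len(subbands)), key=lambda k: -energies[k]):
        if leftover <= 0:
            break
        extra = min(leftover, sizes[i] - alloc[i])
        alloc[i] += extra
        leftover -= extra
    return alloc
```

For example, with flattened subbands `[[4, 4, 4, 4], [1, 1, 1, 1], [0, 0, 0, 0]]` and a budget of 5, the first subband is sampled fully and the one remaining measurement goes to the second. A sparsity-aware variant would replace the energy weights with a combined score.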

GameIR: A Large-Scale Synthesized Ground-Truth Dataset for Image Restoration over Gaming Content

Aug 29, 2024

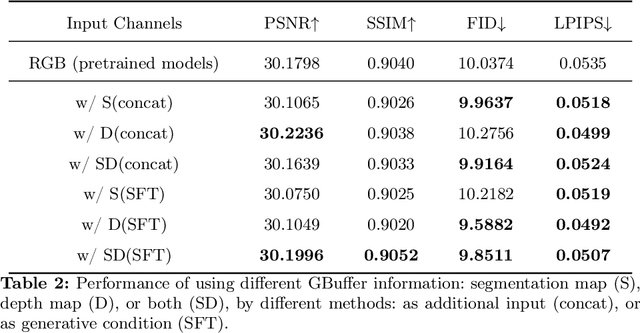

Abstract:Image restoration methods like super-resolution and image synthesis have been successfully used in commercial cloud gaming products like NVIDIA's DLSS. However, restoration over gaming content is not well studied by the general public. The discrepancy is mainly caused by the lack of ground-truth gaming training data that match the test cases. Due to the unique characteristics of gaming content, the common approach of generating pseudo training data by degrading the original HR images results in inferior restoration performance. In this work, we develop GameIR, a large-scale high-quality computer-synthesized ground-truth dataset to fill in the blanks, targeting two different applications. The first is super-resolution with deferred rendering, to support the gaming solution of rendering and transferring LR images only and restoring HR images on the client side. We provide 19,200 LR-HR paired ground-truth frames coming from 640 videos rendered at 720p and 1440p for this task. The second is novel view synthesis (NVS), to support the multiview gaming solution of rendering and transferring part of the multiview frames and generating the remaining frames on the client side. This task has 57,600 HR frames from 960 videos of 160 scenes with 6 camera views. In addition to the RGB frames, the GBuffers during the deferred rendering stage are also provided, which can be used to help restoration. Furthermore, we evaluate several SOTA super-resolution algorithms and NeRF-based NVS algorithms over our dataset, which demonstrates the effectiveness of our ground-truth GameIR data in improving restoration performance for gaming content. Also, we test the method of incorporating the GBuffers as additional input information for helping super-resolution and NVS. We release our dataset and models to the general public to facilitate research on restoration methods over gaming content.

HAAP: Vision-context Hierarchical Attention Autoregressive with Adaptive Permutation for Scene Text Recognition

May 15, 2024

Abstract:Internal Language Model (LM)-based methods use permutation language modeling (PLM) to solve the error correction caused by conditional independence in external LM-based methods. However, random permutations of human interference cause fit oscillations in the model training, and the Iterative Refinement (IR) operation used to improve multimodal information decoupling also introduces additional overhead. To address these issues, this paper proposes the Hierarchical Attention autoregressive Model with Adaptive Permutation (HAAP) to enhance the location-context-image interaction capability, improving autoregressive generalization with internal LM. First, we propose Implicit Permutation Neurons (IPN) to generate adaptive attention masks to dynamically exploit token dependencies. The adaptive masks increase the diversity of training data and prevent model dependency on a specific order. This reduces the training overhead of PLM while avoiding training fit oscillations. Second, we develop a Cross-modal Hierarchical Attention mechanism (CHA) to couple context and image features. This processing establishes rich positional semantic dependencies between context and image while avoiding IR. Extensive experimental results show the proposed HAAP achieves state-of-the-art (SOTA) performance in terms of accuracy, complexity, and latency on several datasets.

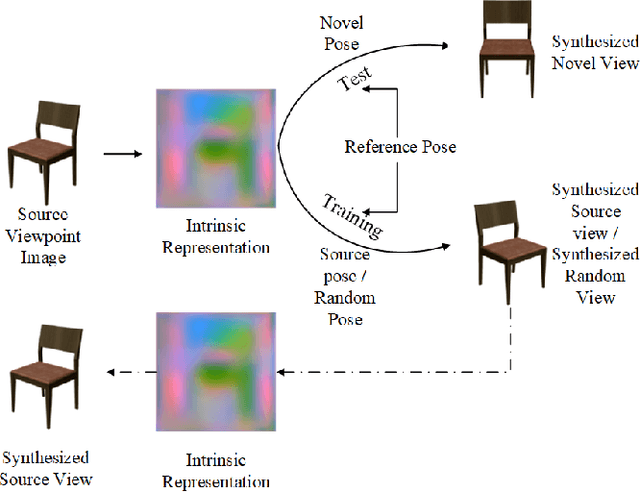

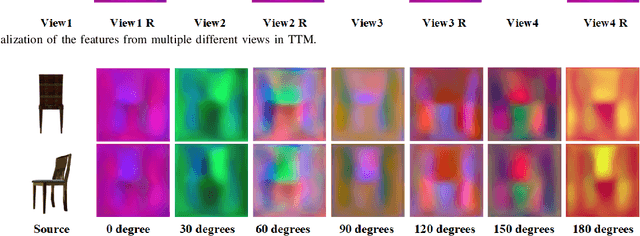

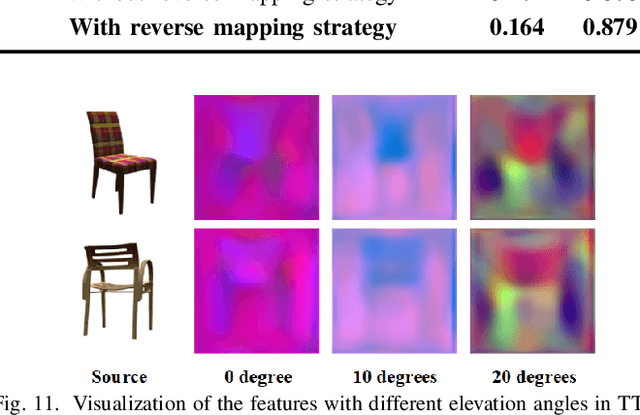

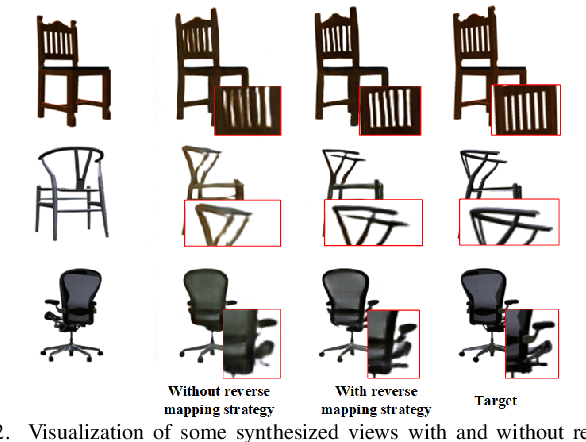

Novel View Synthesis from a Single Image via Unsupervised learning

Oct 29, 2021

Abstract:View synthesis aims to generate novel views from one or more given source views. Although existing methods have achieved promising performance, they usually require paired views of different poses to learn a pixel transformation. This paper proposes an unsupervised network to learn such a pixel transformation from a single source viewpoint. In particular, the network consists of a token transformation module (TTM) that facilitates the transformation of the features extracted from a source viewpoint image into an intrinsic representation with respect to a pre-defined reference pose and a view generation module (VGM) that synthesizes an arbitrary view from the representation. The learned transformation allows us to synthesize a novel view from any single source viewpoint image of unknown pose. Experiments on the widely used view synthesis datasets have demonstrated that the proposed network is able to produce comparable results to the state-of-the-art methods despite the fact that learning is unsupervised and only a single source viewpoint image is required for generating a novel view. The code will be available soon.
