Metin Sitti

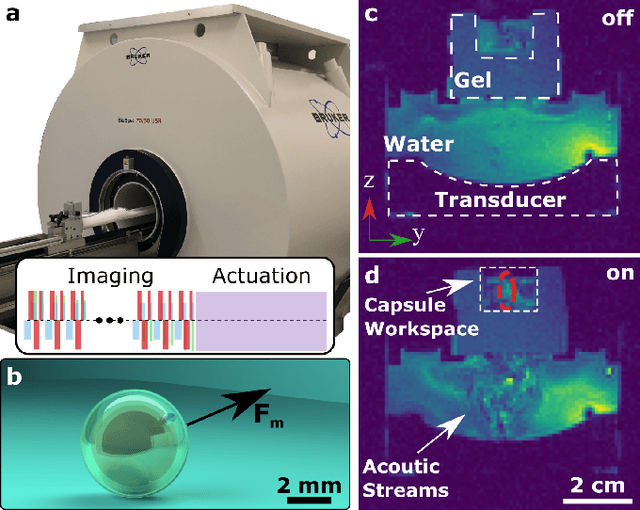

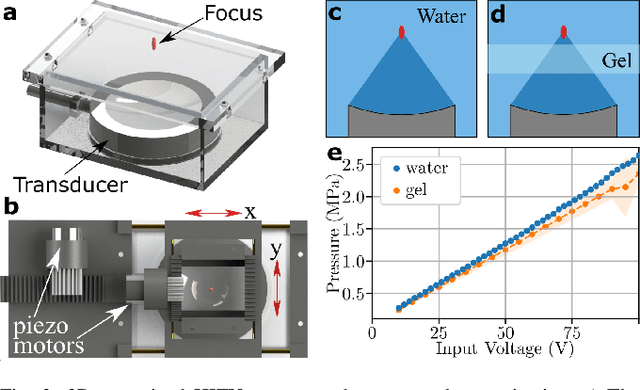

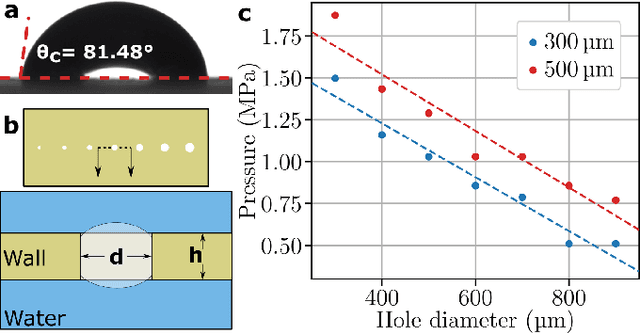

MRI-powered Magnetic Miniature Capsule Robot with HIFU-controlled On-demand Drug Delivery

Jan 17, 2023

Abstract: Magnetic resonance imaging (MRI)-guided robotic systems offer great potential for new minimally invasive medical tools, including MRI-powered miniature robots. By repurposing the imaging hardware of an MRI scanner, a magnetic miniature robot could be navigated to remote parts of the patient's body without tethered endoscopic tools. However, state-of-the-art MRI-powered magnetic miniature robots have limited functionality beyond navigation. Here, we propose an MRI-powered magnetic miniature capsule robot that uses acoustic streaming forces generated by MRI-guided high-intensity focused ultrasound (HIFU) for controlled drug release. Our design comprises a polymer capsule shell with a submillimeter-diameter drug-release hole that traps an air bubble functioning as a stopper. Once the capsule robot reaches the target location, a HIFU pulse removes the air bubble and initiates drug release. By controlling the acoustic pressure, we also regulate the drug release rate, which allows multiple locations to be targeted during navigation. We demonstrate that the proposed magnetic capsule robot can travel at speeds up to 1.13 cm/s in ex vivo porcine small intestine and release drug at multiple target sites in a single operation, using a combination of MRI-powered actuation and HIFU-controlled release. The proposed MRI-guided microrobotic drug release system will greatly impact minimally invasive medical procedures by enabling on-demand targeted drug delivery.

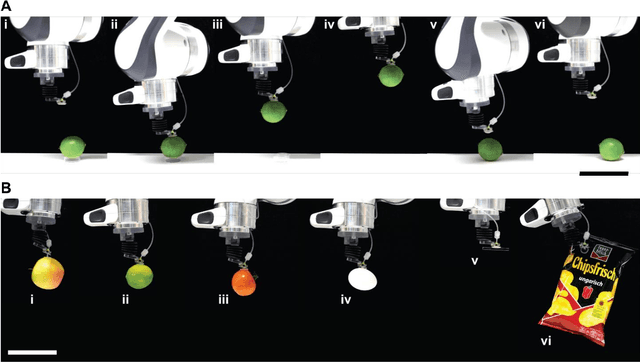

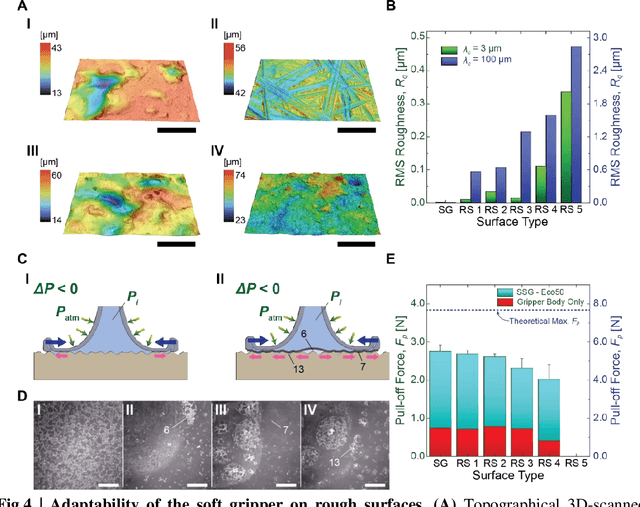

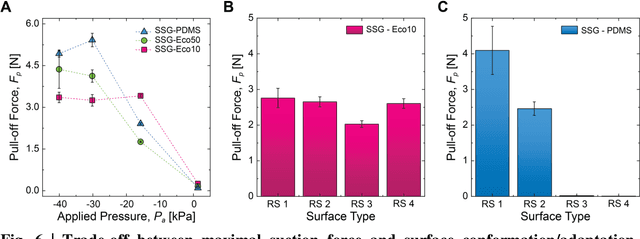

Suction-based Soft Robotic Gripping of Rough and Irregular Parts

Sep 17, 2020

Abstract: Recently, suction-based robotic systems with microscopic features or active suction components have been proposed to grip rough and irregular surfaces. However, such systems require sophisticated fabrication methods or complex control systems, and robust attachment to rough real-world surfaces remains a grand challenge. Here, we propose a fully soft robotic gripper in which a flat elastic membrane conforms to and contacts parts or surfaces, and an internal negative pressure exerted on the air-sealed membrane induces suction-based gripping. The soft gripper is fabricated by combining 3D printing with soft molding techniques. The surface-conformable soft flat membrane generates strong and robust suction at the contact interface, enabling attachment to complex 3D and rough surfaces. This robust attachment to rough and irregular surfaces enables manipulation of a broad range of real-world objects, such as an egg, a lime, and a foiled package, without any physical damage. Compared to conventional suction cup designs, the proposed suction gripper shows a four-fold increase in gripping performance on rough surfaces. Furthermore, the structural and material simplicity of the proposed gripper architecture facilitates its system-level integration with other soft robotic peripherals, which can enable broader impact in diverse fields such as digital manufacturing, robotic manipulation, and medical gripping applications.

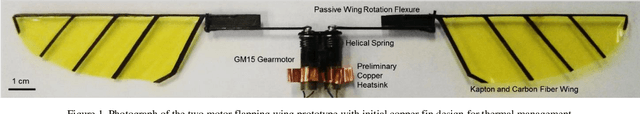

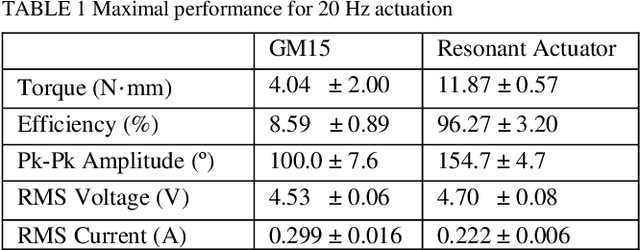

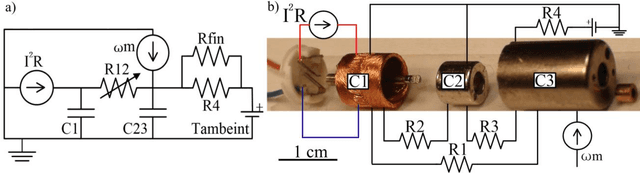

Characterization and Thermal Management of a DC Motor-Driven Resonant Actuator for Miniature Mobile Robots with Oscillating Limbs

Jan 24, 2020

Abstract: In this paper, we characterize the performance of, and develop thermal management solutions for, a DC motor-driven resonant actuator developed for flapping-wing micro air vehicles. The actuator, a DC micro-gearmotor connected in parallel with a torsional spring, drives reciprocating wing motion. Compared to the gearmotor alone, this design increased torque and power density by 161.1% and 666.8%, respectively, while decreasing the drawn current by 25.8%. Characterizing the actuator in isolation from nonlinear aerodynamic loading yields standard metrics that are directly comparable to those of other actuators. The micro-motor, selected for its low weight, can operate at high power only for a limited duration because of thermal effects. To predict system performance, we developed a lumped parameter thermal circuit model. Critical model parameters for this micro-motor, which is two orders of magnitude smaller than those previously characterized, were identified experimentally. These included the effects of variable winding resistance, bushing friction, speed-dependent forced convection, and the addition of a heatsink. The model was then used to determine a safe operation envelope for the vehicle and to design a weight-optimal heatsink. This actuator design and thermal modeling approach could be applied more generally to improve the performance of any miniature mobile robot or device with motor-driven oscillating limbs or loads.
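
The lumped parameter thermal circuit described above can be illustrated with a minimal sketch. It assumes a two-node topology (winding and housing) and keeps only Joule heating with temperature-dependent winding resistance; bushing friction and speed-dependent forced convection are omitted, and a heatsink would simply add heat capacity and lower the housing-to-ambient resistance. `ThermalParams`, `simulate`, and `time_to_limit` are hypothetical names, and every parameter value is a placeholder rather than one of the experimentally identified values.

```python
from dataclasses import dataclass

# Temperature coefficient of resistance for copper (about 0.00393 1/K near room temperature).
ALPHA_CU = 0.00393


@dataclass
class ThermalParams:
    # Hypothetical placeholder values, NOT the parameters identified in the paper.
    r0: float = 5.0      # winding resistance at t_ref [ohm]
    t_ref: float = 25.0  # reference temperature for r0 [degC]
    c_w: float = 0.05    # winding heat capacity [J/K]
    c_h: float = 0.5     # housing (plus any heatsink) heat capacity [J/K]
    r_wh: float = 20.0   # winding-to-housing thermal resistance [K/W]
    r_ha: float = 60.0   # housing-to-ambient thermal resistance [K/W]
    t_amb: float = 25.0  # ambient temperature [degC]


def winding_resistance(p: ThermalParams, t_w: float) -> float:
    """Winding resistance grows linearly with winding temperature."""
    return p.r0 * (1.0 + ALPHA_CU * (t_w - p.t_ref))


def simulate(p: ThermalParams, current: float, duration: float, dt: float = 1e-3):
    """Forward-Euler integration of an assumed two-node thermal RC circuit.

    Yields (time, winding temperature, housing temperature) at every step.
    """
    t, t_w, t_h = 0.0, p.t_amb, p.t_amb
    yield t, t_w, t_h
    for _ in range(int(round(duration / dt))):
        p_loss = current ** 2 * winding_resistance(p, t_w)  # Joule heating only
        q_wh = (t_w - t_h) / p.r_wh                          # heat flow winding -> housing
        q_ha = (t_h - p.t_amb) / p.r_ha                      # heat flow housing -> ambient
        t_w += dt * (p_loss - q_wh) / p.c_w
        t_h += dt * (q_wh - q_ha) / p.c_h
        t += dt
        yield t, t_w, t_h


def time_to_limit(p: ThermalParams, current: float, t_max: float,
                  horizon: float, dt: float = 1e-3):
    """First time the winding reaches t_max, or None if it stays below it within horizon."""
    for t, t_w, _ in simulate(p, current, horizon, dt):
        if t_w >= t_max:
            return t
    return None
```

Sweeping `current` and recording `time_to_limit` for a chosen winding temperature limit gives a rough duration-versus-current boundary, which is one way to express a safe operating envelope.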

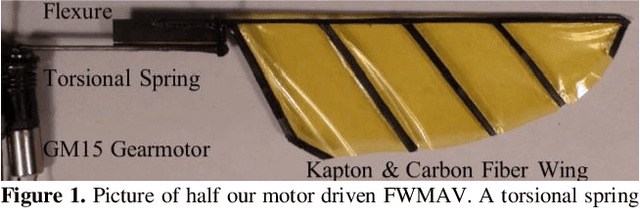

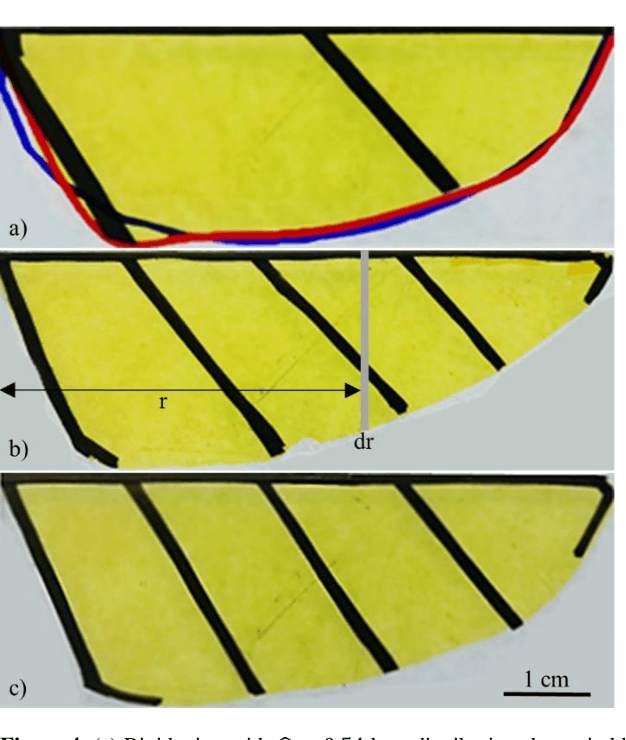

Bio-inspired Flexible Twisting Wings Increase Lift and Efficiency of a Flapping Wing Micro Air Vehicle

Jan 24, 2020

Abstract: We investigate the effect of wing twist flexibility on the lift and efficiency of a flapping-wing micro air vehicle capable of liftoff. Previously, fully rigid wings were used because of modeling and fabrication constraints. However, biological wings are highly flexible, and other micro air vehicles have successfully used flexible wing structures for specialized tasks. The goal of our study is to determine whether dynamic twisting of flexible wings can increase overall aerodynamic lift and efficiency. Compared to the rigid-wing design, a flexible twisting wing design was found to increase aerodynamic efficiency by 41.3%, translational lift production by 35.3%, and the effective lift coefficient by 63.7%. These results exceed the predictions of quasi-steady blade element models, indicating the need for unsteady computational fluid dynamics simulations of twisted flapping wings.
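
For reference, a rigid-wing quasi-steady blade element estimate of translational lift, the kind of model whose predictions the measured flexible-wing results exceeded, can be sketched as below. It assumes a rectangular wing, a sinusoidal stroke, and a constant lift coefficient, and it ignores rotational lift, added mass, wake capture, and wing twist entirely; the function name and all parameter values are hypothetical.

```python
import math


def mean_lift_quasi_steady(rho, c_l, chord, r_root, r_tip, stroke_amp, freq,
                           n_r=200, n_t=400):
    """Cycle-averaged translational lift [N] of one rigid rectangular wing.

    Quasi-steady blade element sketch under assumed simplifications: each spanwise
    strip of width dr at radius r contributes
        dL = 0.5 * rho * (r * phi_dot)**2 * chord * c_l * dr,
    with a constant lift coefficient c_l and a sinusoidal stroke
        phi(t) = (stroke_amp / 2) * sin(2 * pi * freq * t),
    where stroke_amp is the peak-to-peak stroke angle [rad].
    """
    dr = (r_tip - r_root) / n_r
    dt = 1.0 / (freq * n_t)
    total = 0.0
    for i in range(n_t):
        t = (i + 0.5) * dt
        phi_dot = 0.5 * stroke_amp * 2.0 * math.pi * freq * math.cos(2.0 * math.pi * freq * t)
        for j in range(n_r):
            r = r_root + (j + 0.5) * dr  # midpoint of the blade element
            u = r * phi_dot              # local translational velocity
            total += 0.5 * rho * u * u * chord * c_l * dr
    return total / n_t  # average over the sampled instants of one period
```

Because the estimate is linear in `c_l`, dividing a measured mean lift by the result obtained with `c_l=1.0` gives one possible definition of an effective lift coefficient; the abstract does not state how its effective lift coefficient was computed.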

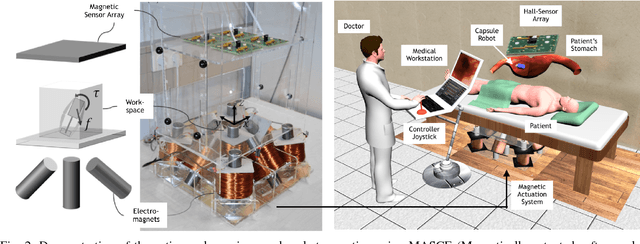

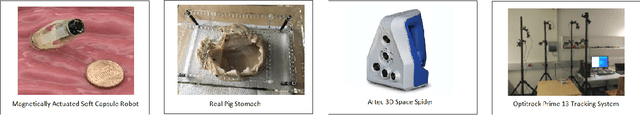

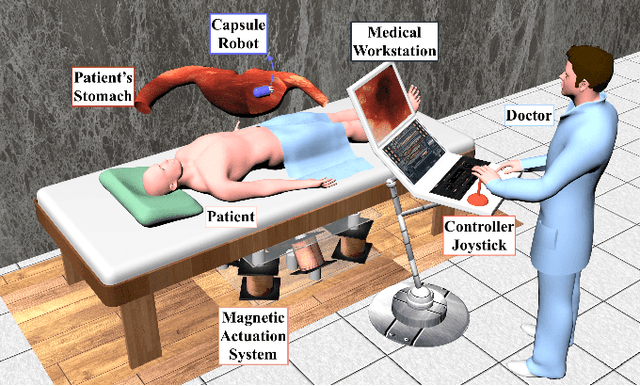

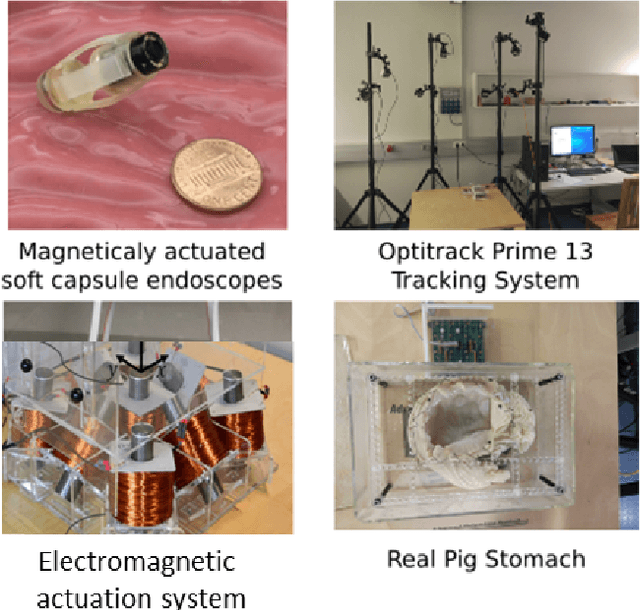

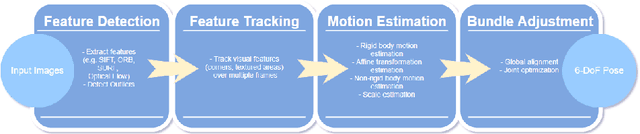

Magnetic-Visual Sensor Fusion-based Dense 3D Reconstruction and Localization for Endoscopic Capsule Robots

Mar 02, 2018

Abstract: Reliable, real-time 3D reconstruction and localization functionality is a crucial prerequisite for navigating actively controlled endoscopic capsule robots, an emerging, minimally invasive diagnostic and therapeutic technology for use in the gastrointestinal (GI) tract. In this study, we propose a fully dense, non-rigidly deformable, strictly real-time, intraoperative map fusion approach for actively controlled endoscopic capsule robot applications that combines magnetic and vision-based localization with non-rigid-deformation-based frame-to-model map fusion. The performance of the proposed method is demonstrated using four different ex-vivo porcine stomach models. Across different trajectories of varying speed and complexity, and four different endoscopic cameras, the root mean square surface reconstruction errors range from 1.58 to 2.17 cm.

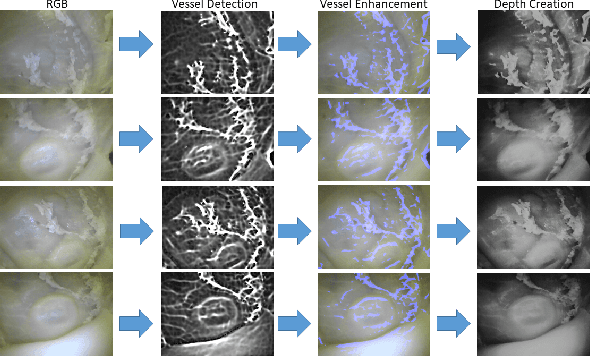

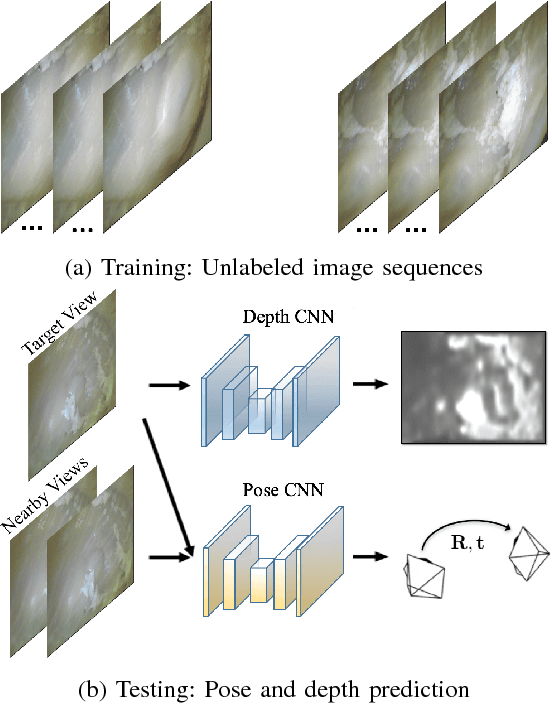

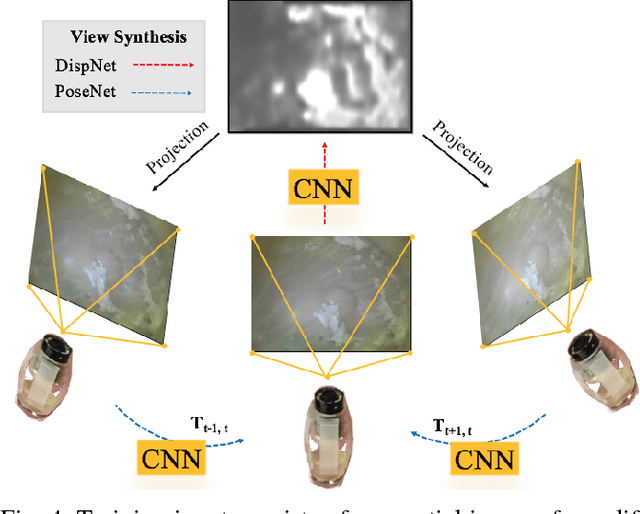

Unsupervised Odometry and Depth Learning for Endoscopic Capsule Robots

Mar 02, 2018

Abstract: In the last decade, many medical companies and research groups have tried to convert passive capsule endoscopes, an emerging and minimally invasive diagnostic technology, into actively steerable endoscopic capsule robots that will provide more intuitive disease detection, targeted drug delivery, and biopsy-like operations in the gastrointestinal (GI) tract. In this study, we introduce a fully unsupervised, real-time odometry and depth learner for monocular endoscopic capsule robots. We establish supervision by warping view sequences and using re-projection error minimization as the loss function, which we adopt for the multi-view pose estimation and single-view depth estimation networks. Detailed quantitative and qualitative analyses of the proposed framework on non-rigidly deformable ex-vivo porcine stomach datasets prove the effectiveness of the method in terms of motion estimation and depth recovery.
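
The re-projection supervision described above follows the general view-synthesis idea used in unsupervised depth and ego-motion learning: a target pixel is back-projected with its predicted depth, moved by the predicted relative pose, projected into a neighboring (source) frame, and the intensity difference is penalized. The sketch below shows only that geometry and loss for a pinhole camera with grayscale images stored as nested lists; it contains no networks, uses nearest-neighbour rather than differentiable sampling, and `reproject` and `photometric_loss` are hypothetical helpers, not the authors' code.

```python
def reproject(u, v, depth, K, R, t):
    """Hypothetical helper: map target pixel (u, v) with depth into the source view.

    K = (fx, fy, cx, cy) are assumed pinhole intrinsics; (R, t) is the
    target-to-source camera transform (3x3 rotation as nested lists, 3-vector
    translation). Returns source pixel coordinates, or None if the point
    lands behind the source camera.
    """
    fx, fy, cx, cy = K
    # Back-project the pixel to a 3D point in the target camera frame.
    p = ((u - cx) / fx * depth, (v - cy) / fy * depth, depth)
    # Rigidly transform the point into the source camera frame.
    xs, ys, zs = (sum(R[i][k] * p[k] for k in range(3)) + t[i] for i in range(3))
    if zs <= 0:
        return None
    # Project back onto the source image plane.
    return fx * xs / zs + cx, fy * ys / zs + cy


def photometric_loss(target, source, depth, K, R, t):
    """Mean absolute intensity difference between target pixels and their reprojections.

    Nearest-neighbour lookup keeps the sketch simple; a trainable model would
    need differentiable (e.g. bilinear) sampling instead.
    """
    h, w = len(target), len(target[0])
    total, count = 0.0, 0
    for v in range(h):
        for u in range(w):
            q = reproject(u, v, depth[v][u], K, R, t)
            if q is None:
                continue
            us, vs = round(q[0]), round(q[1])
            if 0 <= us < w and 0 <= vs < h:  # skip pixels that leave the source image
                total += abs(target[v][u] - source[vs][us])
                count += 1
    return total / count if count else 0.0
```

A depth map and pose that correctly explain the camera motion drive this loss toward zero; in a learned system both would be network outputs and the loss would be backpropagated through them.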

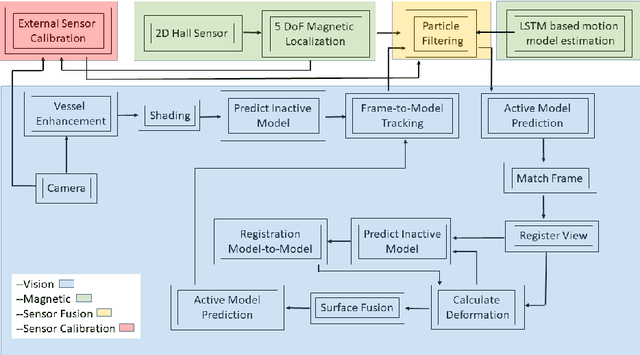

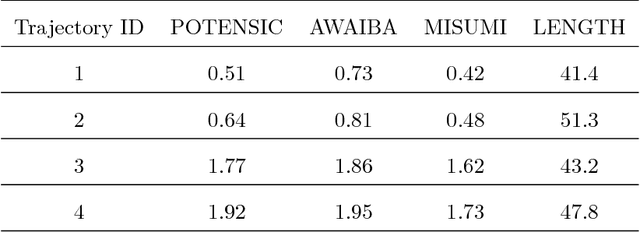

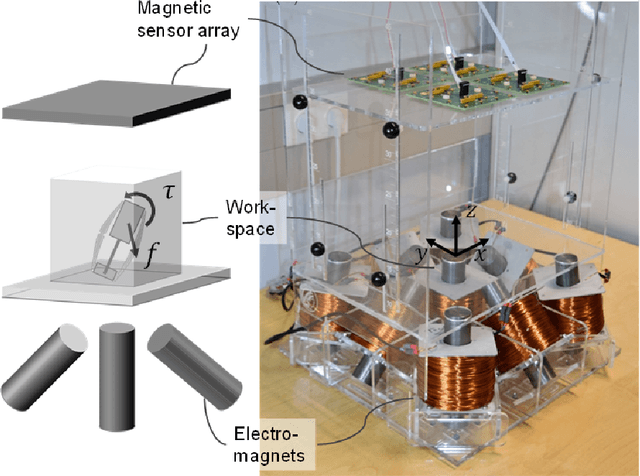

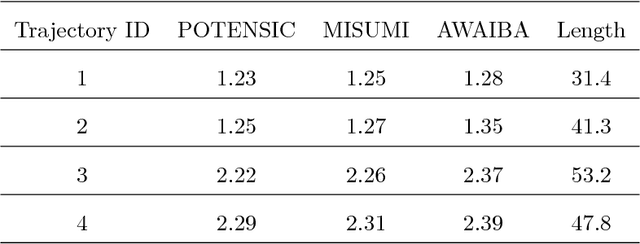

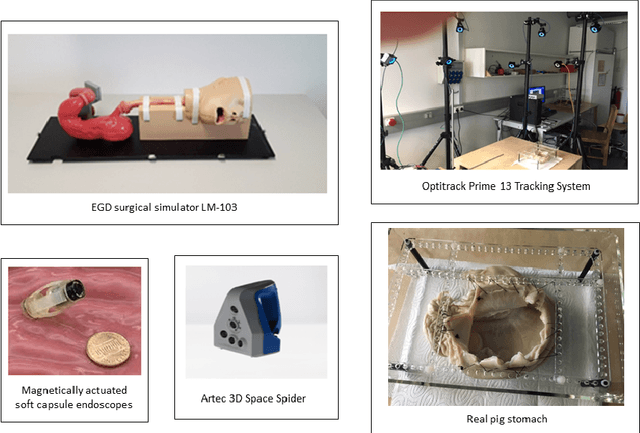

Magnetic-Visual Sensor Fusion based Medical SLAM for Endoscopic Capsule Robot

Nov 06, 2017

Abstract: A reliable, real-time simultaneous localization and mapping (SLAM) method is crucial for the navigation of actively controlled capsule endoscopy robots. These robots are an emerging, minimally invasive diagnostic and therapeutic technology for use in the gastrointestinal (GI) tract. In this study, we propose a dense, non-rigidly deformable, real-time map fusion approach for actively controlled endoscopic capsule robot applications. The method combines magnetic and vision-based localization, and uses frame-to-model fusion and model-to-model loop closure. The performance of the method is demonstrated using an ex-vivo porcine stomach model. Across four trajectories of varying speed and complexity, and across three cameras, the root mean square localization errors range from 0.42 to 1.92 cm, and the root mean square surface reconstruction errors range from 1.23 to 2.39 cm.
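
The root mean square localization errors quoted above can be computed as in the following sketch, assuming the estimated and ground-truth positions are already time-synchronized and expressed in the same coordinate frame (the abstract does not describe the alignment step). The `rmse` function is a hypothetical helper; the same formula could be applied to pairs of corresponding surface points, although how the paper forms those correspondences is not stated.

```python
import math


def rmse(estimated, ground_truth):
    """Root mean square of per-sample Euclidean errors.

    Assumes both trajectories are already aligned in time and space,
    with estimated[i] corresponding to ground_truth[i].
    """
    if len(estimated) != len(ground_truth) or not estimated:
        raise ValueError("trajectories must be non-empty and of equal length")
    squared = [math.dist(p, q) ** 2 for p, q in zip(estimated, ground_truth)]
    return math.sqrt(sum(squared) / len(squared))
```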

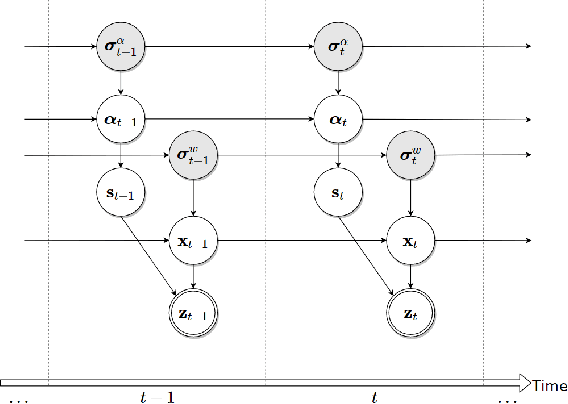

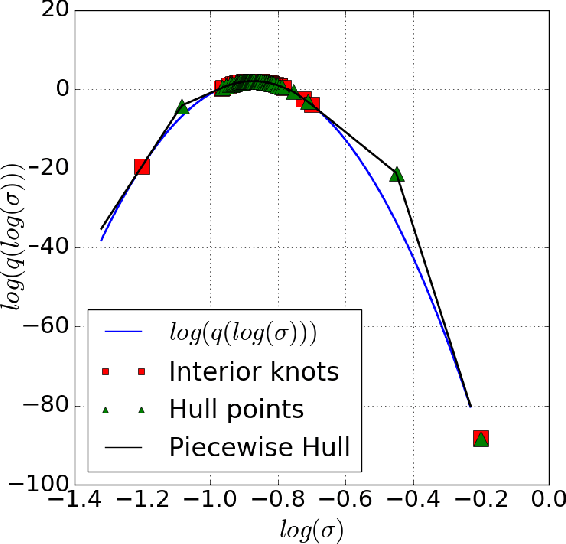

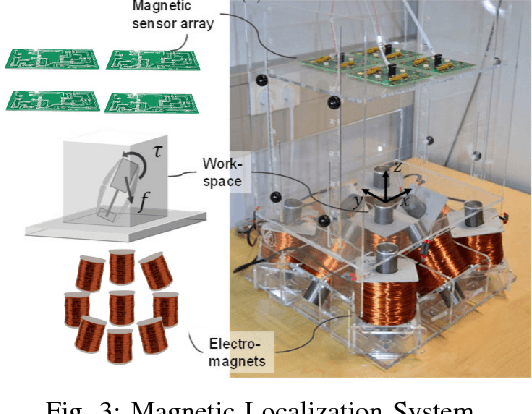

EndoSensorFusion: Particle Filtering-Based Multi-sensory Data Fusion with Switching State-Space Model for Endoscopic Capsule Robots

Sep 25, 2017

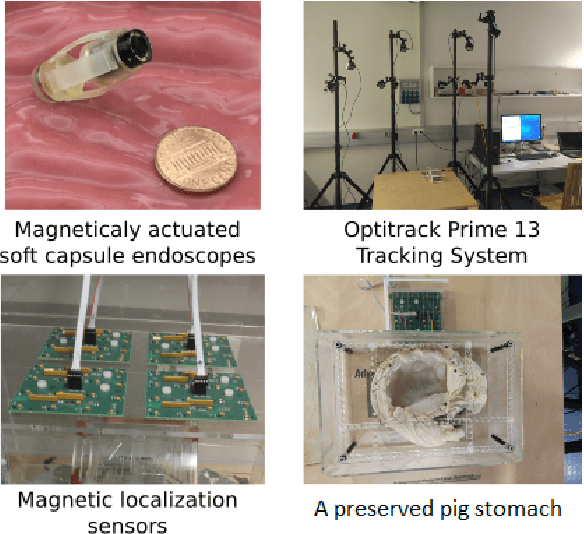

Abstract: A reliable, real-time multi-sensor fusion functionality is crucial for localization of actively controlled capsule endoscopy robots, which are an emerging, minimally invasive diagnostic and therapeutic technology for the gastrointestinal (GI) tract. In this study, we propose a novel multi-sensor fusion approach based on a particle filter that incorporates an online estimation of sensor reliability and a non-linear kinematic model learned by a recurrent neural network. Our method sequentially estimates the true robot pose from noisy pose observations delivered by multiple sensors. We experimentally test the method using a 5 degree-of-freedom (5-DoF) absolute pose measurement from a magnetic localization system and a 6-DoF relative pose measurement from visual odometry. In addition, the proposed method can detect and handle sensor failures by ignoring corrupted data, providing the robustness expected of a medical device. Detailed analyses and evaluations of ex-vivo experiments on a porcine stomach model show that our system achieves high translational and rotational accuracy for different types of endoscopic capsule robot trajectories.
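
The sequential estimation loop described above (predict with a motion model, weight particles by an absolute measurement, resample, and discard corrupted readings) can be sketched with a basic particle filter. This is a simplified stand-in, not the EndoSensorFusion method: the state is position only, visual-odometry increments replace the learned RNN kinematic model, noise levels are fixed, and a simple distance gate replaces online sensor-reliability estimation. The class name and all parameters are hypothetical.

```python
import math
import random


class PositionParticleFilter:
    """Hypothetical minimal particle filter fusing relative and absolute position data.

    Assumed simplifications: position-only state, odometry increments used
    directly as the motion model, fixed Gaussian noise, and a distance gate
    instead of online sensor-reliability estimation.
    """

    def __init__(self, init_pos, n=500, init_std=1.0, motion_std=0.1,
                 meas_std=0.5, gate=3.0, seed=0):
        self.rng = random.Random(seed)
        self.motion_std = motion_std
        self.meas_std = meas_std
        self.gate = gate
        self.particles = [[x + self.rng.gauss(0.0, init_std) for x in init_pos]
                          for _ in range(n)]

    def estimate(self):
        """Particle mean as the position estimate."""
        n = len(self.particles)
        return [sum(p[k] for p in self.particles) / n for k in range(len(self.particles[0]))]

    def predict(self, delta):
        """Propagate every particle by the odometry increment plus process noise."""
        for p in self.particles:
            for k, d in enumerate(delta):
                p[k] += d + self.rng.gauss(0.0, self.motion_std)

    def update(self, z):
        """Weight and resample with an absolute fix z; return False if z is gated out."""
        mean = self.estimate()
        spread2 = sum(math.dist(p, mean) ** 2 for p in self.particles) / len(self.particles)
        threshold = self.gate * math.sqrt(spread2 + len(z) * self.meas_std ** 2)
        if math.dist(mean, z) > threshold:
            return False  # treat as a sensor failure and skip the update
        log_w = [-math.dist(p, z) ** 2 / (2.0 * self.meas_std ** 2) for p in self.particles]
        m = max(log_w)
        w = [math.exp(lw - m) for lw in log_w]  # subtract max for numerical stability
        s = sum(w)
        self._systematic_resample([wi / s for wi in w])
        return True

    def _systematic_resample(self, weights):
        """Draw n particles using one random offset and evenly spaced pointers."""
        n = len(self.particles)
        u0 = self.rng.random() / n
        cumulative, c = [], 0.0
        for wi in weights:
            c += wi
            cumulative.append(c)
        cumulative[-1] = 1.0  # guard against floating-point round-off
        new, j = [], 0
        for i in range(n):
            u = u0 + i / n
            while u > cumulative[j]:
                j += 1
            new.append(list(self.particles[j]))
        self.particles = new
```

Rejecting a reading whose distance from the current estimate is far larger than the combined particle spread and measurement noise is a crude form of failure handling; a reliability estimate, as proposed in the abstract, would instead adapt how much each sensor is trusted over time.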

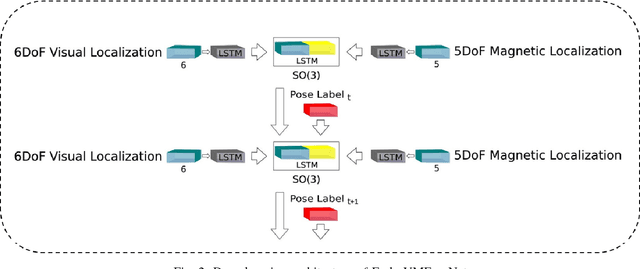

Endo-VMFuseNet: Deep Visual-Magnetic Sensor Fusion Approach for Uncalibrated, Unsynchronized and Asymmetric Endoscopic Capsule Robot Localization Data

Sep 22, 2017

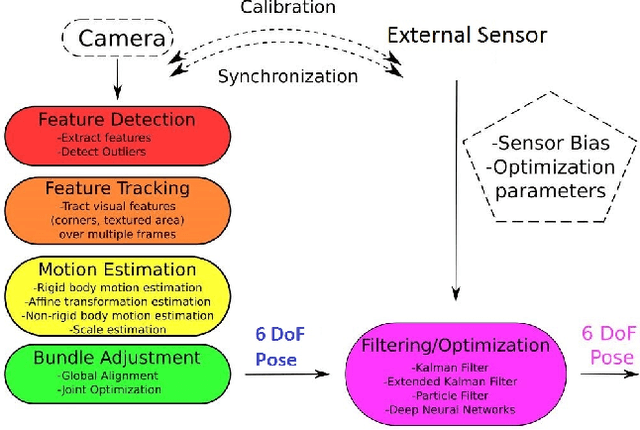

Abstract: In the last decade, researchers and medical device companies have made major advances towards transforming passive capsule endoscopes into active medical robots. One of the major challenges is to endow capsule robots with accurate perception of the environment inside the human body, which will provide necessary information and enable improved medical procedures. We extend the success of deep learning approaches in various research fields to the problem of uncalibrated, asynchronous, and asymmetric sensor fusion for endoscopic capsule robots. Results on real pig stomach datasets show that our method achieves sub-millimeter precision for both translational and rotational movements and offers various advantages over traditional sensor fusion techniques.

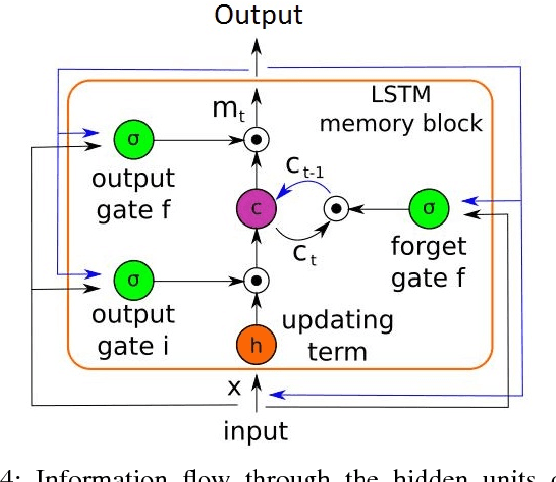

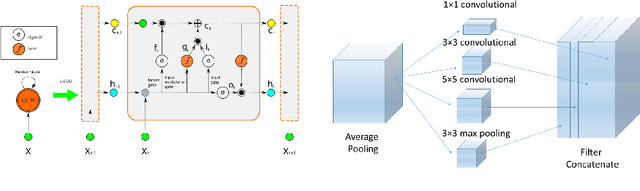

Deep EndoVO: A Recurrent Convolutional Neural Network (RCNN) based Visual Odometry Approach for Endoscopic Capsule Robots

Sep 08, 2017

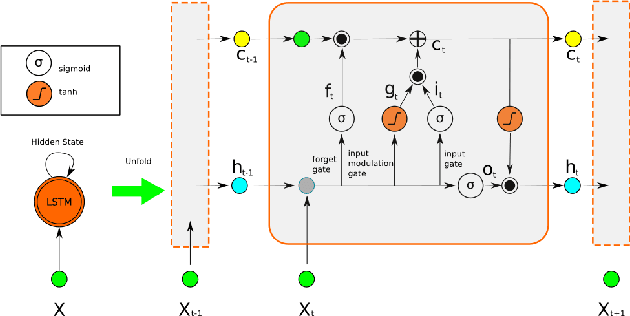

Abstract: Ingestible wireless capsule endoscopy is an emerging minimally invasive diagnostic technology for inspecting the GI tract and diagnosing a wide range of diseases and pathologies. Medical device companies and many research groups have recently made substantial progress in converting passive capsule endoscopes into active capsule robots, enabling more accurate, precise, and intuitive detection of the location and size of diseased areas. Since reliable real-time pose estimation is crucial for actively controlled endoscopic capsule robots, in this study we propose a monocular visual odometry (VO) method for endoscopic capsule robot operations. Our method relies on deep Recurrent Convolutional Neural Networks (RCNNs) for the visual odometry task, where Convolutional Neural Networks (CNNs) and Recurrent Neural Networks (RNNs) are used for feature extraction and for inference of dynamics across frames, respectively. Detailed analyses and evaluations on a real pig stomach dataset prove that our system achieves high translational and rotational accuracy for different types of endoscopic capsule robot trajectories.
