Lina Bariah

RF-GPT: Teaching AI to See the Wireless World

Feb 16, 2026Abstract:Large language models (LLMs) and multimodal models have become powerful general-purpose reasoning systems. However, radio-frequency (RF) signals, which underpin wireless systems, are still not natively supported by these models. Existing LLM-based approaches for telecom focus mainly on text and structured data, while conventional RF deep-learning models are built separately for specific signal-processing tasks, highlighting a clear gap between RF perception and high-level reasoning. To bridge this gap, we introduce RF-GPT, a radio-frequency language model (RFLM) that utilizes the visual encoders of multimodal LLMs to process and understand RF spectrograms. In this framework, complex in-phase/quadrature (IQ) waveforms are mapped to time-frequency spectrograms and then passed to pretrained visual encoders. The resulting representations are injected as RF tokens into a decoder-only LLM, which generates RF-grounded answers, explanations, and structured outputs. To train RF-GPT, we perform supervised instruction fine-tuning of a pretrained multimodal LLM using a fully synthetic RF corpus. Standards-compliant waveform generators produce wideband scenes for six wireless technologies, from which we derive time-frequency spectrograms, exact configuration metadata, and dense captions. A text-only LLM then converts these captions into RF-grounded instruction-answer pairs, yielding roughly 12,000 RF scenes and 0.625 million instruction examples without any manual labeling. Across benchmarks for wideband modulation classification, overlap analysis, wireless-technology recognition, WLAN user counting, and 5G NR information extraction, RF-GPT achieves strong multi-task performance, whereas general-purpose VLMs with no RF grounding largely fail.

Seeing Radio: From Zero RF Priors to Explainable Modulation Recognition with Vision Language Models

Jan 19, 2026Abstract:The rise of vision language models (VLMs) paves a new path for radio frequency (RF) perception. Rather than designing task-specific neural receivers, we ask if VLMs can learn to recognize modulations when RF waveforms are expressed as images. In this work, we find that they can. In specific, in this paper, we introduce a practical pipeline for converting complex IQ streams into visually interpretable inputs, hence, enabling general-purpose VLMs to classify modulation schemes without changing their underlying design. Building on this, we construct an RF visual question answering (VQA) benchmark framework that covers 57 classes across major families of analog/digital modulations with three complementary image modes, namely, (i) short \emph{time-series} IQ segments represented as real/imaginary traces, (ii) magnitude-only \emph{spectrograms}, and (iii) \emph{joint} representations that pair spectrograms with a synchronized time-series waveforms. We design uniform zero-shot and few-shot prompts for both class-level and family-level evaluations. Our finetuned VLMs with these images achieve competitive accuracy of $90\%$ compared to $10\%$ of the base models. Furthermore, the fine-tuned VLMs show robust performance under noise and demonstrate high generalization performance to unseen modulation types, without relying on RF-domain priors or specialized architectures. The obtained results show that combining RF-to-image conversion with promptable VLMs provides a scalable and practical foundation for RF-aware AI systems in future 6G networks.

Advancing THz Radio Map Construction and Obstacle Sensing: An Integrated Generative Framework in ISAC

Mar 29, 2025Abstract:Integrated sensing and communication (ISAC) in the terahertz (THz) band enables obstacle detection, which in turn facilitates efficient beam management to mitigate THz signal blockage. Simultaneously, a THz radio map, which captures signal propagation characteristics through the distribution of received signal strength (RSS), is well-suited for sensing, as it inherently contains obstacle-related information and reflects the unique properties of the THz channel. This means that communication-assisted sensing in ISAC can be effectively achieved using a THz radio map. However, constructing a radio map presents significant challenges due to the sparse deployment of THz sensors and their limited ability to accurately measure the RSS distribution, which directly affects obstacle sensing. In this paper, we formulate an integrated problem for the first time, leveraging the mutual enhancement between sensed obstacles and the constructed THz radio maps. To address this challenge while improving generalization, we propose an integration framework based on a conditional generative adversarial network (CGAN), which uncovers the manifold structure of THz radio maps embedded with obstacle information. Furthermore, recognizing the shared environmental semantics across THz radio maps from different beam directions, we introduce a novel voting-based sensing scheme, where obstacles are detected by aggregating votes from THz radio maps generated by the CGAN. Simulation results demonstrate that the proposed framework outperforms non-integrated baselines in both radio map construction and obstacle sensing, achieving up to 44.3% and 90.6% reductions in mean squared error (MSE), respectively, in a real-world scenario. These results validate the effectiveness of the proposed voting-based scheme.

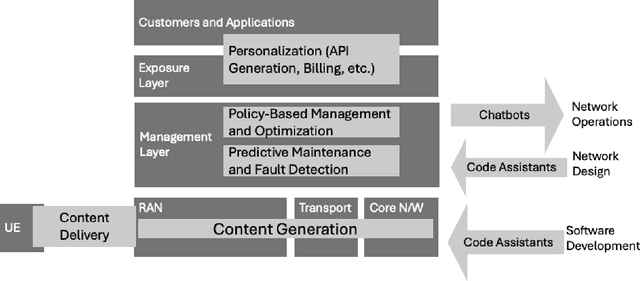

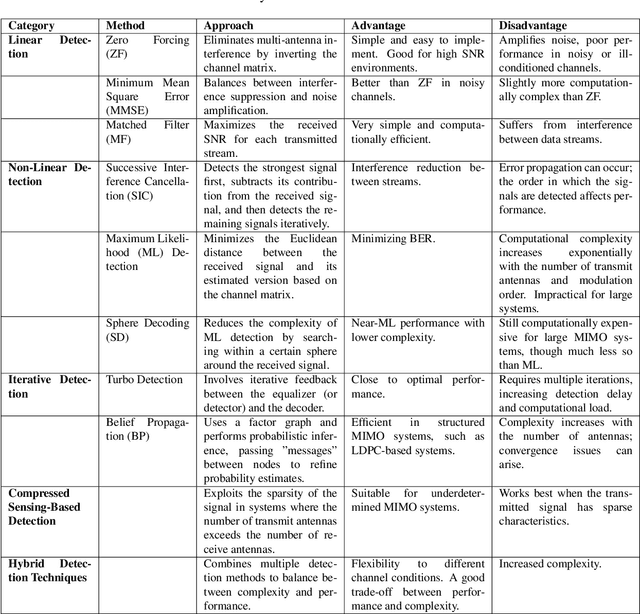

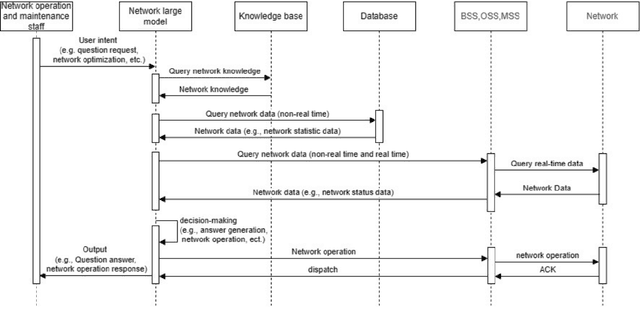

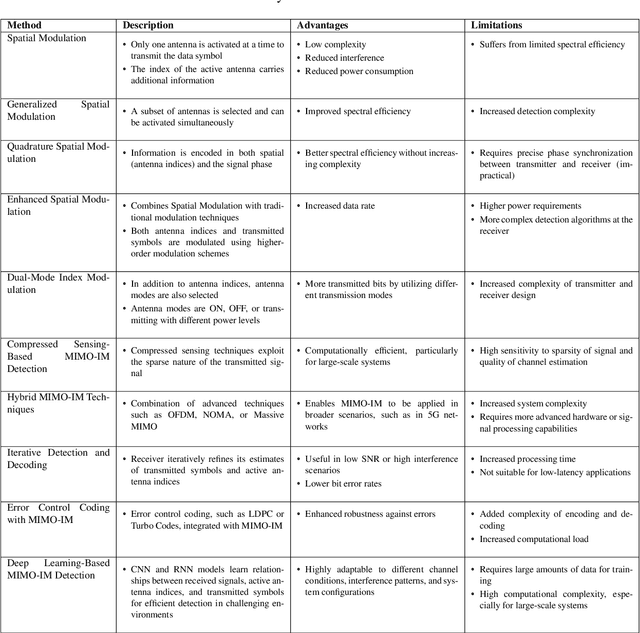

Large-Scale AI in Telecom: Charting the Roadmap for Innovation, Scalability, and Enhanced Digital Experiences

Mar 06, 2025

Abstract:This white paper discusses the role of large-scale AI in the telecommunications industry, with a specific focus on the potential of generative AI to revolutionize network functions and user experiences, especially in the context of 6G systems. It highlights the development and deployment of Large Telecom Models (LTMs), which are tailored AI models designed to address the complex challenges faced by modern telecom networks. The paper covers a wide range of topics, from the architecture and deployment strategies of LTMs to their applications in network management, resource allocation, and optimization. It also explores the regulatory, ethical, and standardization considerations for LTMs, offering insights into their future integration into telecom infrastructure. The goal is to provide a comprehensive roadmap for the adoption of LTMs to enhance scalability, performance, and user-centric innovation in telecom networks.

TelecomGPT: A Framework to Build Telecom-Specfic Large Language Models

Jul 12, 2024

Abstract:Large Language Models (LLMs) have the potential to revolutionize the Sixth Generation (6G) communication networks. However, current mainstream LLMs generally lack the specialized knowledge in telecom domain. In this paper, for the first time, we propose a pipeline to adapt any general purpose LLMs to a telecom-specific LLMs. We collect and build telecom-specific pre-train dataset, instruction dataset, preference dataset to perform continual pre-training, instruct tuning and alignment tuning respectively. Besides, due to the lack of widely accepted evaluation benchmarks in telecom domain, we extend existing evaluation benchmarks and proposed three new benchmarks, namely, Telecom Math Modeling, Telecom Open QnA and Telecom Code Tasks. These new benchmarks provide a holistic evaluation of the capabilities of LLMs including math modeling, Open-Ended question answering, code generation, infilling, summarization and analysis in telecom domain. Our fine-tuned LLM TelecomGPT outperforms state of the art (SOTA) LLMs including GPT-4, Llama-3 and Mistral in Telecom Math Modeling benchmark significantly and achieve comparable performance in various evaluation benchmarks such as TeleQnA, 3GPP technical documents classification, telecom code summary and generation and infilling.

Large Language Models for Power Scheduling: A User-Centric Approach

Jun 29, 2024Abstract:While traditional optimization and scheduling schemes are designed to meet fixed, predefined system requirements, future systems are moving toward user-driven approaches and personalized services, aiming to achieve high quality-of-experience (QoE) and flexibility. This challenge is particularly pronounced in wireless and digitalized energy networks, where users' requirements have largely not been taken into consideration due to the lack of a common language between users and machines. The emergence of powerful large language models (LLMs) marks a radical departure from traditional system-centric methods into more advanced user-centric approaches by providing a natural communication interface between users and devices. In this paper, for the first time, we introduce a novel architecture for resource scheduling problems by constructing three LLM agents to convert an arbitrary user's voice request (VRQ) into a resource allocation vector. Specifically, we design an LLM intent recognition agent to translate the request into an optimization problem (OP), an LLM OP parameter identification agent, and an LLM OP solving agent. To evaluate system performance, we construct a database of typical VRQs in the context of electric vehicle (EV) charging. As a proof of concept, we primarily use Llama 3 8B. Through testing with different prompt engineering scenarios, the obtained results demonstrate the efficiency of the proposed architecture. The conducted performance analysis allows key insights to be extracted. For instance, having a larger set of candidate OPs to model the real-world problem might degrade the final performance because of a higher recognition/OP classification noise level. All results and codes are open source.

Generative AI for Immersive Communication: The Next Frontier in Internet-of-Senses Through 6G

Apr 02, 2024

Abstract:Over the past two decades, the Internet-of-Things (IoT) has been a transformative concept, and as we approach 2030, a new paradigm known as the Internet of Senses (IoS) is emerging. Unlike conventional Virtual Reality (VR), IoS seeks to provide multi-sensory experiences, acknowledging that in our physical reality, our perception extends far beyond just sight and sound; it encompasses a range of senses. This article explores existing technologies driving immersive multi-sensory media, delving into their capabilities and potential applications. This exploration includes a comparative analysis between conventional immersive media streaming and a proposed use case that leverages semantic communication empowered by generative Artificial Intelligence (AI). The focal point of this analysis is the substantial reduction in bandwidth consumption by 99.93% in the proposed scheme. Through this comparison, we aim to underscore the practical applications of generative AI for immersive media while addressing the challenges and outlining future trajectories.

GenAINet: Enabling Wireless Collective Intelligence via Knowledge Transfer and Reasoning

Feb 28, 2024

Abstract:Generative artificial intelligence (GenAI) and communication networks are expected to have groundbreaking synergies in 6G. Connecting GenAI agents over a wireless network can potentially unleash the power of collective intelligence and pave the way for artificial general intelligence (AGI). However, current wireless networks are designed as a "data pipe" and are not suited to accommodate and leverage the power of GenAI. In this paper, we propose the GenAINet framework in which distributed GenAI agents communicate knowledge (high-level concepts or abstracts) to accomplish arbitrary tasks. We first provide a network architecture integrating GenAI capabilities to manage both network protocols and applications. Building on this, we investigate effective communication and reasoning problems by proposing a semantic-native GenAINet. Specifically, GenAI agents extract semantic concepts from multi-modal raw data, build a knowledgebase representing their semantic relations, which is retrieved by GenAI models for planning and reasoning. Under this paradigm, an agent can learn fast from other agents' experience for making better decisions with efficient communications. Furthermore, we conduct two case studies where in wireless device query, we show that extracting and transferring knowledge can improve query accuracy with reduced communication; and in wireless power control, we show that distributed agents can improve decisions via collaborative reasoning. Finally, we address that developing a hierarchical semantic level Telecom world model is a key path towards network of collective intelligence.

Large Language Models for Telecom: The Next Big Thing?

Jun 17, 2023Abstract:The evolution of generative artificial intelligence (GenAI) constitutes a turning point in reshaping the future of technology in different aspects. Wireless networks in particular, with the blooming of self-evolving networks, represent a rich field for exploiting GenAI and reaping several benefits that can fundamentally change the way how wireless networks are designed and operated nowadays. To be specific, large language models (LLMs), a subfield of GenAI, are envisioned to open up a new era of autonomous wireless networks, in which a multimodal large model trained over various Telecom data, can be fine-tuned to perform several downstream tasks, eliminating the need for dedicated AI models for each task and paving the way for the realization of artificial general intelligence (AGI)-empowered wireless networks. In this article, we aim to unfold the opportunities that can be reaped from integrating LLMs into the Telecom domain. In particular, we aim to put a forward-looking vision on a new realm of possibilities and applications of LLMs in future wireless networks, defining directions for designing, training, testing, and deploying Telecom LLMs, and reveal insights on the associated theoretical and practical challenges.

Understanding Telecom Language Through Large Language Models

Jun 09, 2023

Abstract:The recent progress of artificial intelligence (AI) opens up new frontiers in the possibility of automating many tasks involved in Telecom networks design, implementation, and deployment. This has been further pushed forward with the evolution of generative artificial intelligence (AI), including the emergence of large language models (LLMs), which is believed to be the cornerstone toward realizing self-governed, interactive AI agents. Motivated by this, in this paper, we aim to adapt the paradigm of LLMs to the Telecom domain. In particular, we fine-tune several LLMs including BERT, distilled BERT, RoBERTa and GPT-2, to the Telecom domain languages, and demonstrate a use case for identifying the 3rd Generation Partnership Project (3GPP) standard working groups. We consider training the selected models on 3GPP technical documents (Tdoc) pertinent to years 2009-2019 and predict the Tdoc categories in years 2020-2023. The results demonstrate that fine-tuning BERT and RoBERTa model achieves 84.6% accuracy, while GPT-2 model achieves 83% in identifying 3GPP working groups. The distilled BERT model with around 50% less parameters achieves similar performance as others. This corroborates that fine-tuning pretrained LLM can effectively identify the categories of Telecom language. The developed framework shows a stepping stone towards realizing intent-driven and self-evolving wireless networks from Telecom languages, and paves the way for the implementation of generative AI in the Telecom domain.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge