Kecheng Liu

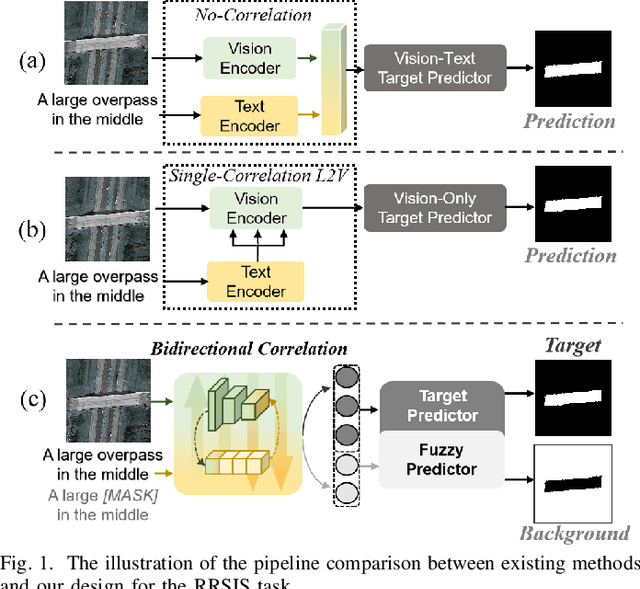

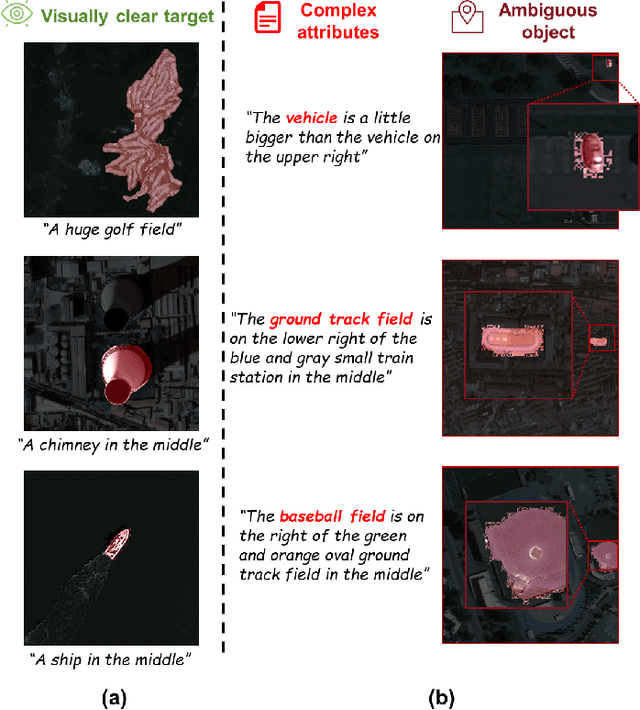

Referring Remote Sensing Image Segmentation via Bidirectional Alignment Guided Joint Prediction

Feb 12, 2025

Abstract: Referring Remote Sensing Image Segmentation (RRSIS) is critical for ecological monitoring, urban planning, and disaster management, requiring precise segmentation of objects in remote sensing imagery guided by textual descriptions. This task is uniquely challenging due to the considerable vision-language gap, the high spatial resolution and broad coverage of remote sensing imagery with diverse categories and small targets, and the presence of clustered, unclear targets with blurred edges. To tackle these issues, we propose a novel framework designed to bridge the vision-language gap, enhance multi-scale feature interaction, and improve fine-grained object differentiation. Specifically, the framework introduces: (1) the Bidirectional Spatial Correlation (BSC) for improved vision-language feature alignment, (2) the Target-Background TwinStream Decoder (T-BTD) for precise distinction between targets and non-targets, and (3) the Dual-Modal Object Learning Strategy (D-MOLS) for robust multimodal feature reconstruction. Extensive experiments on the benchmark datasets RefSegRS and RRSIS-D demonstrate that the framework achieves state-of-the-art performance. It improves the overall IoU (oIoU) by 3.76 percentage points (80.57) and 1.44 percentage points (79.23) on the two datasets, respectively. Additionally, it outperforms previous methods in the mean IoU (mIoU) by 5.37 percentage points (67.95) and 1.84 percentage points (66.04), effectively addressing the core challenges of RRSIS with enhanced precision and robustness.
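The abstract reports both oIoU and mIoU without defining them. As a rough illustration, the sketch below assumes the convention commonly used in referring segmentation work: oIoU divides the summed intersection by the summed union over all samples, while mIoU averages the per-sample IoU. The function name `iou_metrics`, the flat 0/1 mask representation, and the handling of empty unions are assumptions made for this example, not details taken from the paper.

```python
def iou_metrics(preds, targets):
    """Return (oIoU, mIoU) for paired binary masks.

    preds, targets: lists of masks, each mask a flat list of 0/1 values.
    Assumed convention: a sample with an empty union contributes an IoU of 0.
    """
    total_inter = 0
    total_union = 0
    per_sample = []
    for pred, target in zip(preds, targets):
        # Pixel-wise intersection and union for one sample.
        inter = sum(1 for p, t in zip(pred, target) if p and t)
        union = sum(1 for p, t in zip(pred, target) if p or t)
        total_inter += inter
        total_union += union
        per_sample.append(inter / union if union else 0.0)
    # oIoU pools pixels over the whole dataset, so large objects dominate.
    oiou = total_inter / total_union if total_union else 0.0
    # mIoU weights every sample equally, so small objects count as much as large ones.
    miou = sum(per_sample) / len(per_sample) if per_sample else 0.0
    return oiou, miou


if __name__ == "__main__":
    preds = [[1, 1, 0, 0], [1, 0, 0, 0]]
    targets = [[1, 0, 0, 0], [1, 0, 0, 0]]
    print(iou_metrics(preds, targets))
```

Under this convention the two metrics can diverge noticeably on datasets with many small targets, which may be why the abstract reports both.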

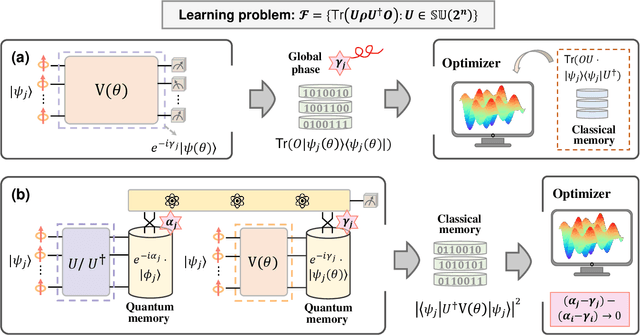

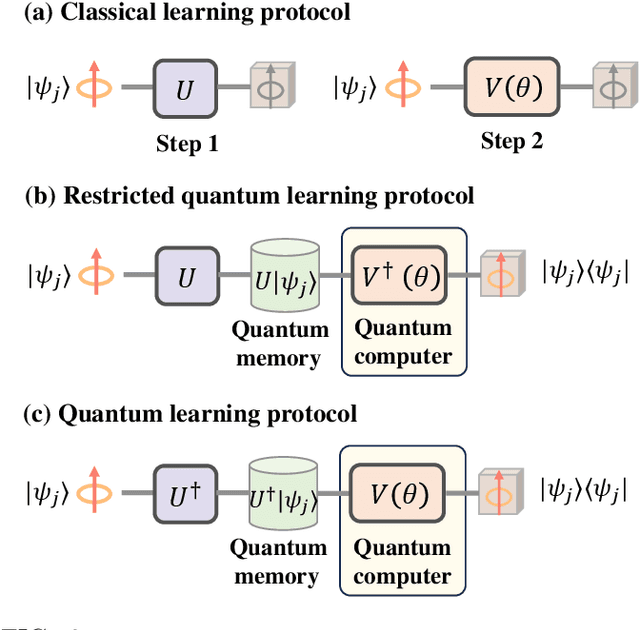

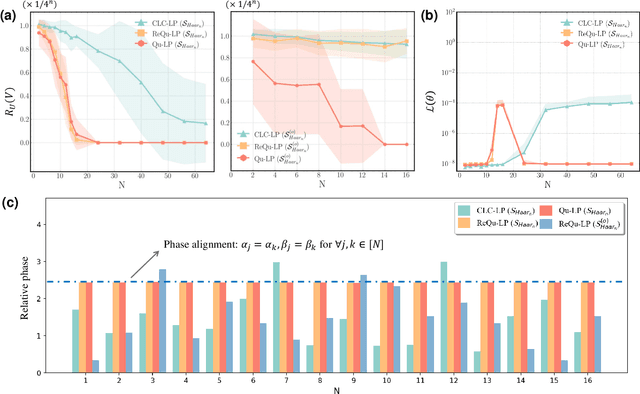

Separable Power of Classical and Quantum Learning Protocols Through the Lens of No-Free-Lunch Theorem

May 12, 2024

Abstract: The No-Free-Lunch (NFL) theorem, which quantifies problem- and data-independent generalization errors regardless of the optimization process, provides a foundational framework for understanding the potential of diverse learning protocols. Despite its significance, the establishment of the NFL theorem for quantum machine learning models remains largely unexplored, leaving broader insights into the fundamental relationship between quantum and classical learning protocols overlooked. To address this gap, we categorize a diverse array of quantum learning algorithms into three learning protocols designed for learning quantum dynamics under a specified observable and establish their NFL theorems. The protocols considered, namely Classical Learning Protocols (CLC-LPs), Restricted Quantum Learning Protocols (ReQu-LPs), and Quantum Learning Protocols (Qu-LPs), offer varying levels of access to quantum resources. Our derived NFL theorems demonstrate quadratic reductions in sample complexity across CLC-LPs, ReQu-LPs, and Qu-LPs, contingent upon the orthogonality of quantum states and the diagonality of observables. We attribute this performance discrepancy to the unique capacity of quantum-related learning protocols to indirectly utilize information concerning the global phases of non-orthogonal quantum states, a distinctive physical feature inherent in quantum mechanics. Our findings not only deepen our understanding of quantum learning protocols' capabilities but also provide practical insights for the development of advanced quantum learning algorithms.

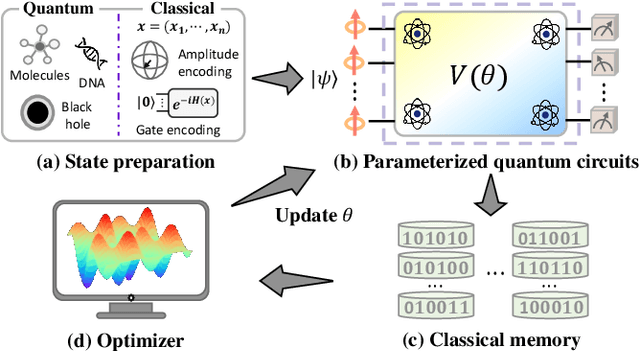

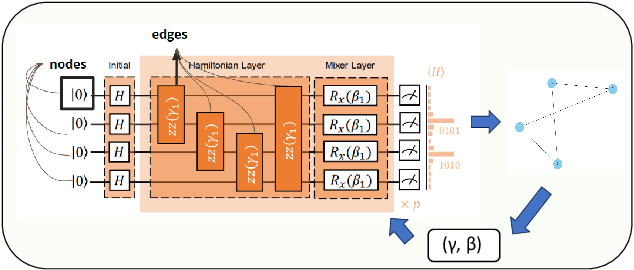

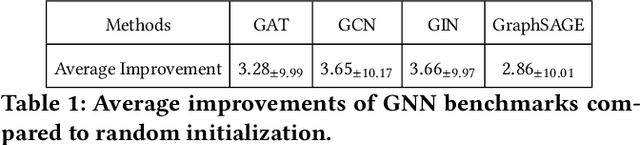

Graph Learning for Parameter Prediction of Quantum Approximate Optimization Algorithm

Mar 05, 2024

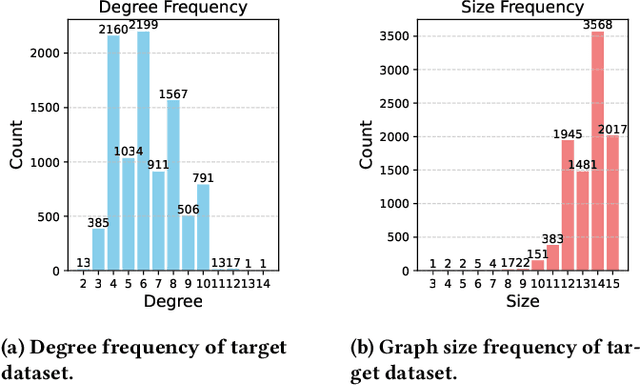

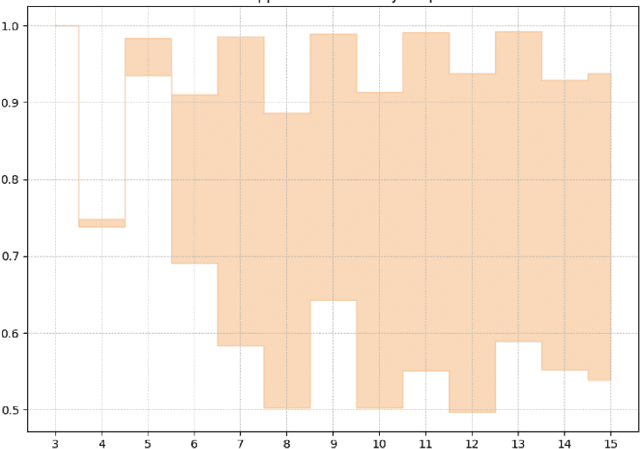

Abstract: In recent years, quantum computing has emerged as a transformative force in the field of combinatorial optimization, offering novel approaches to tackling complex problems that have long challenged classical computational methods. Among these, the Quantum Approximate Optimization Algorithm (QAOA) stands out for its potential to efficiently solve the Max-Cut problem, a quintessential example of combinatorial optimization. However, practical application faces challenges due to current limitations on quantum computational resources. Our work optimizes QAOA initialization, using Graph Neural Networks (GNNs) as a warm-start technique. This trades affordable computational resources on classical computers for reduced quantum computational resource overhead, enhancing QAOA's effectiveness. Experiments with various GNN architectures demonstrate the adaptability and stability of our framework, highlighting the synergy between quantum algorithms and machine learning. Our findings show the potential of GNNs to improve QAOA performance, opening new avenues for hybrid quantum-classical approaches in quantum computing and contributing to practical applications.
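For readers unfamiliar with Max-Cut, the following minimal sketch shows the objective that QAOA is asked to maximize: the number of edges whose endpoints land in different partitions. It only illustrates the problem itself on a tiny graph by exhaustive search; it does not implement QAOA or the GNN warm start described in the abstract, and the function names `cut_value` and `brute_force_max_cut` are hypothetical.

```python
from itertools import product


def cut_value(edges, assignment):
    """Count edges whose endpoints are assigned to different partitions (0 or 1)."""
    return sum(1 for u, v in edges if assignment[u] != assignment[v])


def brute_force_max_cut(num_nodes, edges):
    """Try all 2**num_nodes assignments; feasible only for very small graphs."""
    best = max(
        product((0, 1), repeat=num_nodes),
        key=lambda assignment: cut_value(edges, assignment),
    )
    return best, cut_value(edges, best)


if __name__ == "__main__":
    # A 4-cycle is bipartite, so every edge can be cut.
    square = [(0, 1), (1, 2), (2, 3), (3, 0)]
    print(brute_force_max_cut(4, square))
```

The exponential cost of the exhaustive search is what motivates approximate methods; in a QAOA setting the same cut count would serve as the cost function evaluated on sampled bitstrings.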

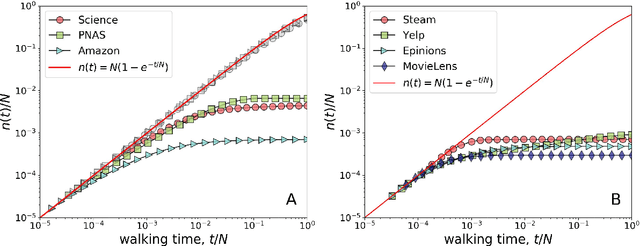

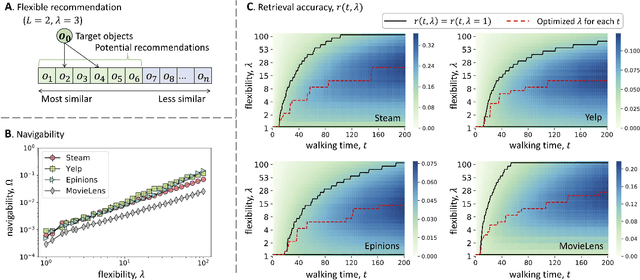

Information Cocoons in Online Navigation

Sep 14, 2021

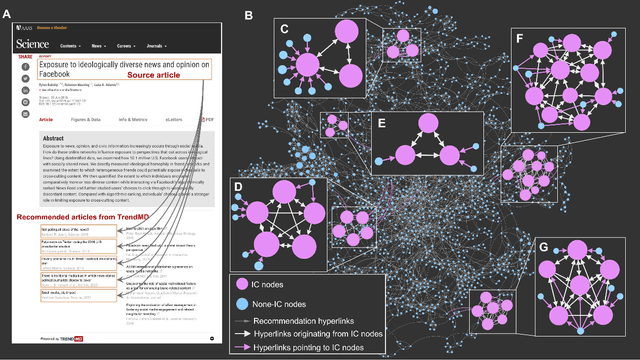

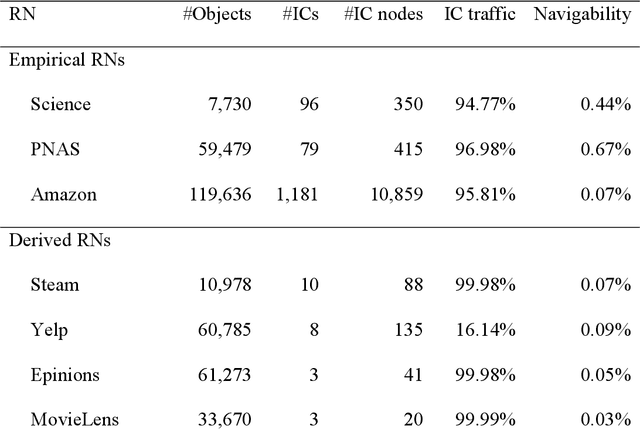

Abstract: Social media and online navigation give us an enjoyable experience in accessing information, and simultaneously create information cocoons (ICs) in which we are unconsciously trapped with limited and biased information. We provide a formal definition of IC in the scenario of online navigation. Subsequently, by analyzing real recommendation networks extracted from the Science, PNAS, and Amazon websites, and testing mainstream algorithms in disparate recommender systems, we demonstrate that similarity-based recommendation techniques result in ICs, which suppress system navigability by hundreds of times. We further propose a flexible recommendation strategy that solves the IC-induced problem and improves retrieval accuracy in navigation, demonstrated by simulations on real data and online experiments on the largest video website in China.

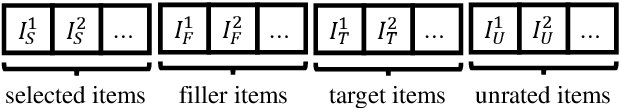

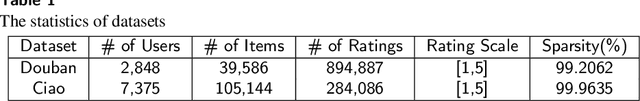

Ready for Emerging Threats to Recommender Systems? A Graph Convolution-based Generative Shilling Attack

Jul 22, 2021

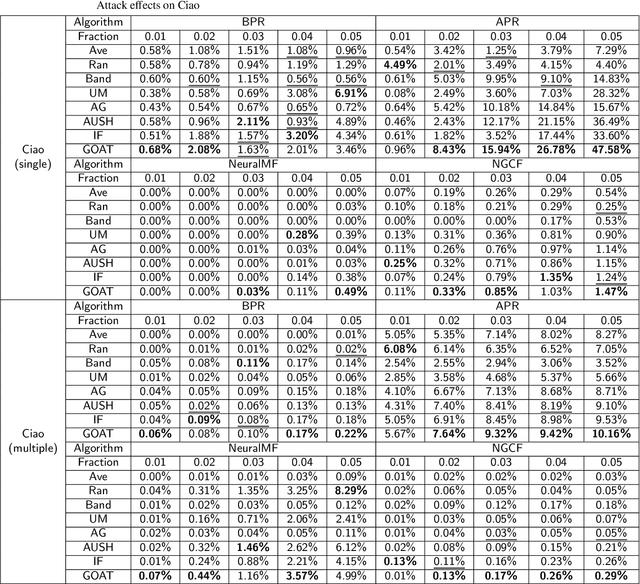

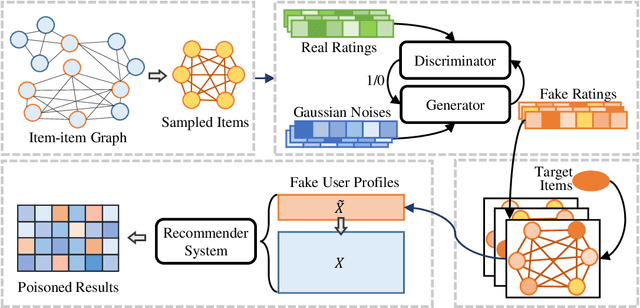

Abstract: To explore the robustness of recommender systems, researchers have proposed various shilling attack models and analyzed their adverse effects. Primitive attacks are highly feasible but less effective due to simplistic handcrafted rules, while upgraded attacks are more powerful but costly and difficult to deploy because they require more knowledge from recommendations. In this paper, we explore a novel shilling attack called Graph cOnvolution-based generative shilling ATtack (GOAT) to balance the attacks' feasibility and effectiveness. GOAT adopts the primitive attacks' paradigm that assigns items for fake users by sampling and the upgraded attacks' paradigm that generates fake ratings by a deep learning-based model. It deploys a generative adversarial network (GAN) that learns the real rating distribution to generate fake ratings. Additionally, the generator incorporates a tailored graph convolution structure that leverages the correlations between co-rated items to smooth the fake ratings and enhance their authenticity. Extensive experiments on two public datasets evaluate GOAT's performance from multiple perspectives. Our study of GOAT demonstrates the technical feasibility of building a more powerful and intelligent attack model at a much-reduced cost, enables analysis of the threat of such an attack, and guides the investigation of necessary prevention measures.
