John Lloyd

Pose and shear-based tactile servoing

Dec 13, 2023

Abstract: Tactile servoing is an important technique because it enables robots to manipulate objects with precision and accuracy while adapting to changes in their environments in real time. One approach for tactile servo control with high-resolution soft tactile sensors is to estimate the contact pose relative to an object surface using a convolutional neural network (CNN) for use as a feedback signal. In this paper, we investigate how the surface pose estimation model can be extended to include shear, and utilize these combined pose-and-shear models to develop a tactile robotic system that can be programmed for diverse non-prehensile manipulation tasks, such as object tracking, surface following, single-arm object pushing and dual-arm object pushing. In doing so, two technical challenges had to be overcome. Firstly, the use of tactile data that includes shear-induced slippage can lead to error-prone estimates unsuitable for accurate control, so we modified the CNN into a Gaussian-density neural network and used a discriminative Bayesian filter to improve the predictions with a state dynamics model that utilizes the robot kinematics. Secondly, to achieve smooth robot motion in 3D space while interacting with objects, we used SE(3) velocity-based servo control, which required re-deriving the Bayesian filter update equations using Lie group theory, as many standard assumptions do not hold for state variables defined on non-Euclidean manifolds. In future, we believe that pose and shear-based tactile servoing will enable many object manipulation tasks and the fully-dexterous utilization of multi-fingered tactile robot hands. Video: https://www.youtube.com/watch?v=xVs4hd34ek0
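The abstract only names the filtering components. As a rough illustration, the sketch below fuses a Kalman-style state prediction with the mean and covariance output by a Gaussian-density network, in the manner of a discriminative Kalman filter. It works in plain Euclidean coordinates with a linear transition model, so it deliberately leaves out the SE(3)/Lie-group re-derivation described in the paper. The function names, the linear motion model and the zero-mean stationary prior are assumptions made for illustration.

```python
import numpy as np


def predict(mean, cov, A, process_cov):
    """Prediction step for a linear transition x_t = A x_{t-1} + w, w ~ N(0, process_cov)."""
    return A @ mean, A @ cov @ A.T + process_cov


def discriminative_update(pred_mean, pred_cov, net_mean, net_cov, prior_cov=None):
    """Fuse the prediction with a network estimate N(net_mean, net_cov) of p(x | z).

    Because the network approximates the posterior p(x | z) rather than the likelihood
    p(z | x), a zero-mean stationary prior N(0, prior_cov) is divided out so it is not
    counted twice. prior_cov=None skips this correction (i.e. assumes a very broad prior).
    The resulting information matrix must stay positive definite.
    """
    pred_info = np.linalg.inv(pred_cov)
    net_info = np.linalg.inv(net_cov)
    post_info = pred_info + net_info
    if prior_cov is not None:
        post_info = post_info - np.linalg.inv(prior_cov)
    post_cov = np.linalg.inv(post_info)
    post_mean = post_cov @ (pred_info @ pred_mean + net_info @ net_mean)
    return post_mean, post_cov


# Hypothetical usage: a 2D pose-like state with a constant-position motion model.
mean, cov = np.zeros(2), np.eye(2)
mean, cov = predict(mean, cov, A=np.eye(2), process_cov=0.1 * np.eye(2))
mean, cov = discriminative_update(mean, cov, net_mean=np.array([1.0, -0.5]),
                                  net_cov=0.2 * np.eye(2), prior_cov=10.0 * np.eye(2))
```

Working in information (inverse-covariance) form makes the prior correction a simple subtraction; a full implementation would also guard against that subtraction producing a non-positive-definite matrix.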

Sim-to-Real Model-Based and Model-Free Deep Reinforcement Learning for Tactile Pushing

Jul 26, 2023

Abstract: Object pushing presents a key non-prehensile manipulation problem that is illustrative of more complex robotic manipulation tasks. While deep reinforcement learning (RL) methods have demonstrated impressive learning capabilities using visual input, a lack of tactile sensing limits their capability for fine and reliable control during manipulation. Here we propose a deep RL approach to object pushing using tactile sensing without visual input, namely tactile pushing. We present a goal-conditioned formulation that allows both model-free and model-based RL to obtain accurate policies for pushing an object to a goal. To achieve real-world performance, we adopt a sim-to-real approach. Our results demonstrate that it is possible to train on a single object and a limited sample of goals to produce precise and reliable policies that can generalize to a variety of unseen objects and pushing scenarios without domain randomization. We experiment with the trained agents in harsh pushing conditions, and show that with significantly more training samples, a model-free policy can outperform a model-based planner, generating shorter and more reliable pushing trajectories despite large disturbances. The simplicity of our training environment and the effective real-world performance highlight the value of rich tactile information for fine manipulation. Code and videos are available at https://sites.google.com/view/tactile-rl-pushing/.
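A goal-conditioned policy receives the goal as part of its input, so a single policy can be trained over many goals. The sketch below shows one hypothetical way to build such an input for planar pushing: tactile features are concatenated with the goal position expressed in the tool-centre-point (TCP) frame, and a dense reward penalises the object-to-goal distance. The frame choice, function names and reward shape are assumptions, not the paper's exact formulation.

```python
import numpy as np


def goal_in_tcp_frame(tcp_xy, tcp_yaw, goal_xy):
    """Express a planar goal position in the frame of the tool centre point (TCP)."""
    c, s = np.cos(tcp_yaw), np.sin(tcp_yaw)
    world_to_tcp = np.array([[c, s], [-s, c]])  # transpose of the TCP's rotation matrix
    return world_to_tcp @ (np.asarray(goal_xy, dtype=float) - np.asarray(tcp_xy, dtype=float))


def goal_conditioned_obs(tactile_features, tcp_xy, tcp_yaw, goal_xy):
    """Concatenate flattened tactile features with the goal relative to the TCP."""
    rel_goal = goal_in_tcp_frame(tcp_xy, tcp_yaw, goal_xy)
    return np.concatenate([np.ravel(tactile_features), rel_goal])


def dense_reward(obj_xy, goal_xy):
    """Negative Euclidean distance between the pushed object and the goal."""
    diff = np.asarray(obj_xy, dtype=float) - np.asarray(goal_xy, dtype=float)
    return -float(np.linalg.norm(diff))


# Hypothetical usage: a 4-element tactile feature vector and a goal 0.3 m ahead.
obs = goal_conditioned_obs(np.zeros(4), tcp_xy=(0.0, 0.0), tcp_yaw=0.0, goal_xy=(0.3, 0.0))
```

Expressing the goal relative to the TCP rather than in world coordinates makes the policy input invariant to where the push starts, which is one plausible reason a policy trained on a limited sample of goals could generalize.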

Bi-Touch: Bimanual Tactile Manipulation with Sim-to-Real Deep Reinforcement Learning

Jul 12, 2023

Abstract: Bimanual manipulation with tactile feedback will be key to human-level robot dexterity. However, this topic is less explored than single-arm settings, partly due to the limited availability of suitable hardware along with the complexity of designing effective controllers for tasks with relatively large state-action spaces. Here we introduce a dual-arm tactile robotic system (Bi-Touch) based on the Tactile Gym 2.0 setup that integrates two affordable industrial-level robot arms with low-cost high-resolution tactile sensors (TacTips). We present a suite of bimanual manipulation tasks tailored towards tactile feedback: bi-pushing, bi-reorienting and bi-gathering. To learn effective policies, we introduce appropriate reward functions for these tasks and propose a novel goal-update mechanism with deep reinforcement learning. We also apply these policies to real-world settings with a tactile sim-to-real approach. Our analysis highlights and addresses some challenges met during the sim-to-real application, e.g. the learned policy tended to squeeze an object in the bi-reorienting task due to the sim-to-real gap. Finally, we demonstrate the generalizability and robustness of this system by experimenting with different unseen objects with applied perturbations in the real world. Code and videos are available at https://sites.google.com/view/bi-touch/.

A pose and shear-based tactile robotic system for object tracking, surface following and object pushing

Jun 26, 2023

Abstract: Tactile perception is a crucial sensing modality in robotics, particularly in scenarios that require precise manipulation and safe interaction with other objects. Previous research in this area has focused extensively on tactile perception of contact poses, as this is an important capability needed for tasks such as traversing an object's surface or edge, manipulating an object, or pushing an object along a predetermined path. Another important capability needed for tasks such as object tracking and manipulation is estimation of post-contact shear, but this has received much less attention. Indeed, post-contact shear has often been considered a "nuisance variable" and is removed if possible because it can have an adverse effect on other types of tactile perception such as contact pose estimation. This paper proposes a tactile robotic system that can simultaneously estimate both the contact pose and post-contact shear, and use this information to control its interaction with other objects. Moreover, our new system is capable of interacting with other objects in a smooth and continuous manner, unlike the stepwise, position-controlled systems we have used in the past. We demonstrate the capabilities of our new system using several different controller configurations, on tasks including object tracking, surface following, single-arm object pushing, and dual-arm object pushing.
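Moving from stepwise position control to smooth, continuous motion typically means commanding velocities. As an illustration only, the sketch below computes a proportional SE(3) velocity command from the pose error using the matrix logarithm (SciPy's `scipy.linalg.logm` and `expm`); in a tactile servo loop the target pose would be derived from the estimated contact pose and shear. The gain, the body-frame convention and the function names are assumptions rather than the controller configurations used in the paper.

```python
import numpy as np
from scipy.linalg import expm, logm


def hat(twist):
    """Map a twist (v, w) in R^6 to its 4x4 matrix form in se(3)."""
    v, w = twist[:3], twist[3:]
    xi_hat = np.zeros((4, 4))
    xi_hat[:3, :3] = [[0.0, -w[2], w[1]],
                      [w[2], 0.0, -w[0]],
                      [-w[1], w[0], 0.0]]
    xi_hat[:3, 3] = v
    return xi_hat


def servo_twist(T_current, T_target, gain=1.0):
    """Proportional body-frame twist (v, w) that drives T_current towards T_target."""
    T_err = np.linalg.inv(T_current) @ T_target  # pose error in the current (body) frame
    xi_hat = np.real(logm(T_err))                # principal logarithm, an element of se(3)
    v = xi_hat[:3, 3]
    w = np.array([xi_hat[2, 1], xi_hat[0, 2], xi_hat[1, 0]])
    return gain * np.concatenate([v, w])


def integrate(T, twist, dt):
    """Apply a constant body-frame twist for dt seconds."""
    return T @ expm(dt * hat(twist))


# Hypothetical usage: servo towards a pose rotated 0.3 rad about z and offset along x.
c, s = np.cos(0.3), np.sin(0.3)
T_target = np.array([[c, -s, 0.0, 0.1],
                     [s, c, 0.0, 0.0],
                     [0.0, 0.0, 1.0, 0.0],
                     [0.0, 0.0, 0.0, 1.0]])
T = np.eye(4)
for _ in range(50):
    T = integrate(T, servo_twist(T, T_target, gain=2.0), dt=0.05)
```

Because the command is a twist rather than a target position, the robot moves continuously and the pose error shrinks geometrically at each control step instead of jumping between set points.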

Tactile-Driven Gentle Grasping for Human-Robot Collaborative Tasks

Mar 16, 2023

Abstract: This paper presents a control scheme for force sensitive, gentle grasping with a Pisa/IIT anthropomorphic SoftHand equipped with a miniaturised version of the TacTip optical tactile sensor on all five fingertips. The tactile sensors provide high-resolution information about a grasp and how the fingers interact with held objects. We first describe a series of hardware developments for performing asynchronous sensor data acquisition and processing, resulting in a fast control loop sufficient for real-time grasp control. We then develop a novel grasp controller that uses tactile feedback from all five fingertip sensors simultaneously to gently and stably grasp 43 objects of varying geometry and stiffness, which is then applied to a human-to-robot handover task. These developments open the door to more advanced manipulation with underactuated hands via fast reflexive control using high-resolution tactile sensing.

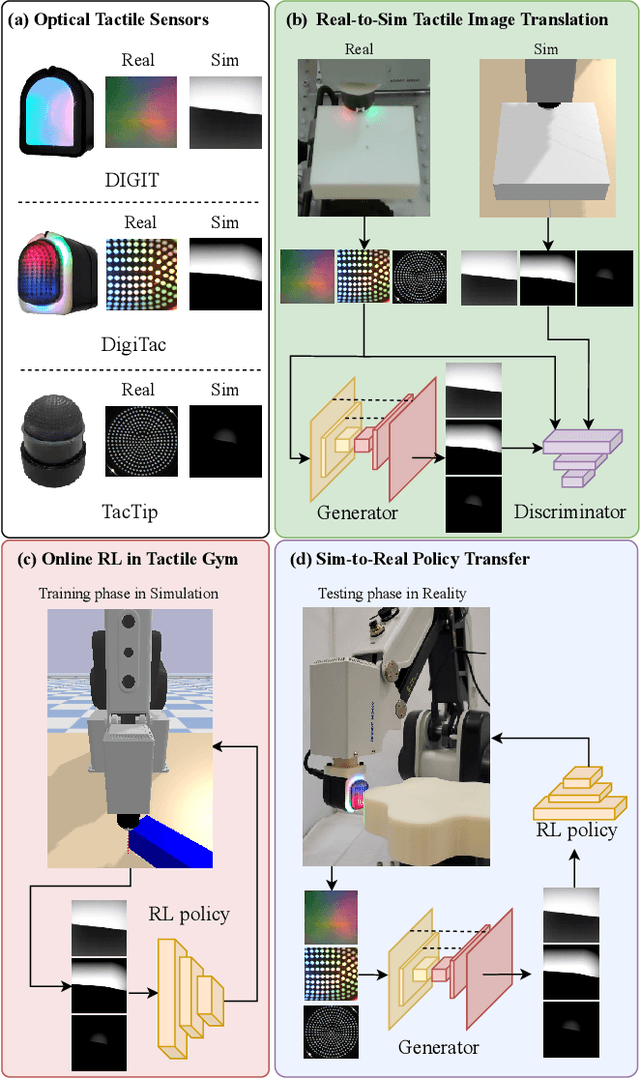

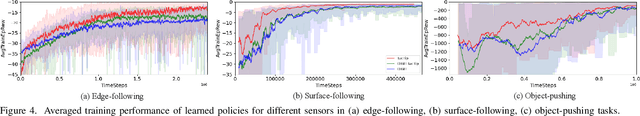

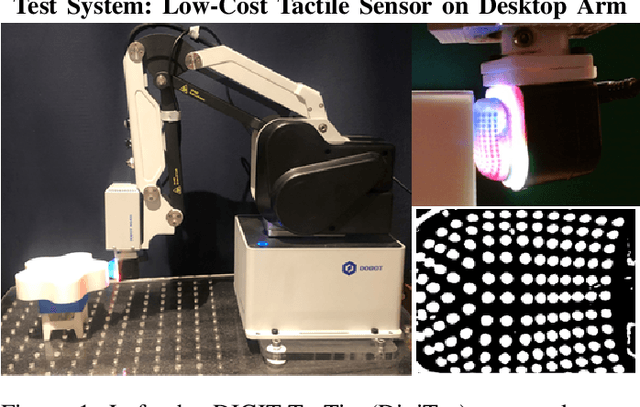

Tactile Gym 2.0: Sim-to-real Deep Reinforcement Learning for Comparing Low-cost High-Resolution Robot Touch

Jul 27, 2022

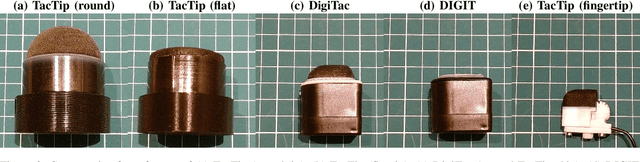

Abstract: High-resolution optical tactile sensors are increasingly used in robotic learning environments due to their ability to capture large amounts of data directly relating to agent-environment interaction. However, there is a high barrier of entry to research in this area due to the high cost of tactile robot platforms, specialised simulation software, and sim-to-real methods that lack generality across different sensors. In this letter we extend the Tactile Gym simulator to include three new optical tactile sensors (TacTip, DIGIT and DigiTac) of the two most popular types, GelSight-style (image-shading based) and TacTip-style (marker based). We demonstrate that a single sim-to-real approach can be used with these three different sensors to achieve strong real-world performance despite the significant differences between real tactile images. Additionally, we lower the barrier of entry to the proposed tasks by adapting them to an inexpensive 4-DoF robot arm, further enabling the dissemination of this benchmark. We validate the extended environment on three physically-interactive tasks requiring a sense of touch: object pushing, edge following and surface following. The results of our experimental validation highlight some differences between these sensors, which may help future researchers select and customize the physical characteristics of tactile sensors for different manipulation scenarios.

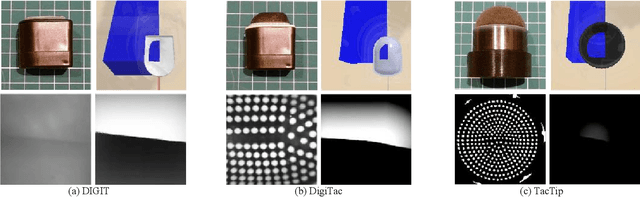

DigiTac: A DIGIT-TacTip Hybrid Tactile Sensor for Comparing Low-Cost High-Resolution Robot Touch

Jun 27, 2022

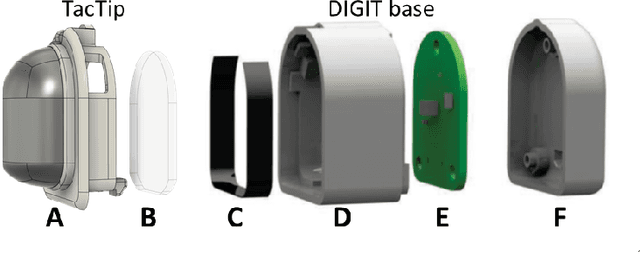

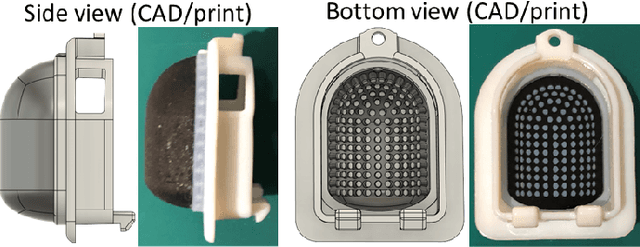

Abstract: Deep learning combined with high-resolution tactile sensing could lead to highly capable dexterous robots. However, progress is slow because of the specialist equipment and expertise required. The DIGIT tactile sensor offers low-cost entry to high-resolution touch using GelSight-type sensors. Here we customize the DIGIT to have a 3D-printed sensing surface based on the TacTip family of soft biomimetic optical tactile sensors. The DIGIT-TacTip (DigiTac) enables direct comparison between these distinct tactile sensor types. For this comparison, we introduce a tactile robot system comprising a desktop arm, mounts and 3D-printed test objects. We use tactile servo control with a PoseNet deep learning model to compare the DIGIT, DigiTac and TacTip for edge- and surface-following over 3D shapes. All three sensors performed similarly at pose prediction, but their constructions led to differing performances at servo control, offering guidance for researchers selecting or innovating tactile sensors. All hardware and software for reproducing this study will be openly released.
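Tactile servo control uses the predicted contact pose as a feedback signal. The sketch below shows one proportional step of a planar edge-following controller: the sensor advances a fixed distance along the edge while correcting the predicted edge offset and angle. In practice the predictions would come from a pose-estimation model such as PoseNet; here they are plain inputs, and the sign conventions, gains, units and step size are hypothetical.

```python
import numpy as np


def edge_follow_step(sensor_pose, pred_offset, pred_angle,
                     step=2.0, k_offset=0.5, k_angle=0.5):
    """One proportional tactile-servo step for planar edge following.

    sensor_pose: (x, y, theta) of the sensor in the world frame (mm, mm, rad).
    pred_offset: predicted edge offset along the sensor's y axis (mm); 0 means centred.
    pred_angle: predicted edge angle relative to the sensor's x axis (rad).
    Returns the next sensor pose (x, y, theta).
    """
    x, y, theta = sensor_pose
    # Action in the sensor frame: advance along the edge, re-centre it, rotate to align.
    dx_s = step
    dy_s = k_offset * pred_offset
    dtheta = k_angle * pred_angle
    # Rotate the translational part of the action into the world frame.
    c, s = np.cos(theta), np.sin(theta)
    return (x + c * dx_s - s * dy_s, y + s * dx_s + c * dy_s, theta + dtheta)


# Hypothetical usage: three steps with made-up pose predictions.
pose = (0.0, 0.0, 0.0)
for offset, angle in [(1.0, 0.1), (0.5, 0.05), (0.2, 0.0)]:
    pose = edge_follow_step(pose, offset, angle)
```

Because the correction is recomputed from a fresh tactile prediction at every step, errors in any single prediction are partly absorbed by later steps; how well this works depends on prediction accuracy, which is where the sensors compared above could differ.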

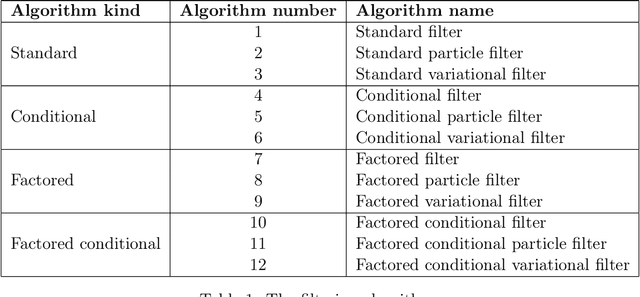

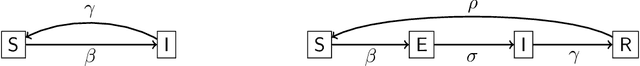

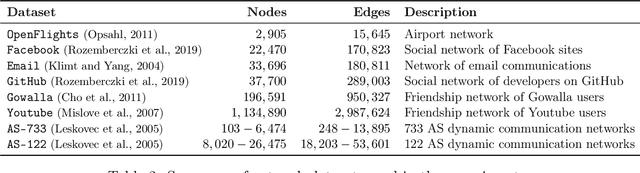

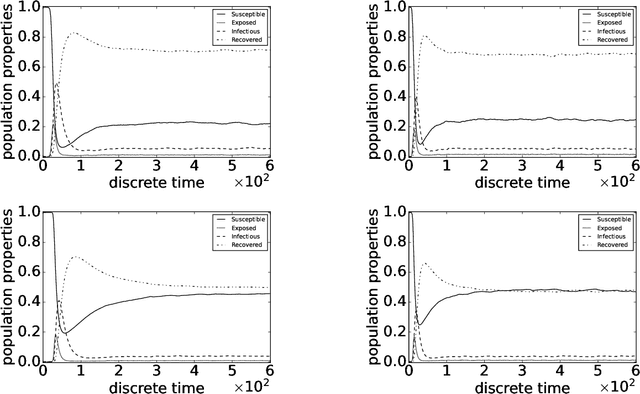

Factored Conditional Filtering: Tracking States and Estimating Parameters in High-Dimensional Spaces

Jun 05, 2022

Abstract: This paper introduces the factored conditional filter, a new filtering algorithm for simultaneously tracking states and estimating parameters in high-dimensional state spaces. The conditional nature of the algorithm is used to estimate parameters and the factored nature is used to decompose the state space into low-dimensional subspaces in such a way that filtering on these subspaces gives distributions whose product is a good approximation to the distribution on the entire state space. The conditions for successful application of the algorithm are that observations be available at the subspace level and that the transition model can be factored into local transition models that are approximately confined to the subspaces; these conditions are widely satisfied in computer science, engineering, and geophysical filtering applications. We give experimental results on tracking epidemics and estimating parameters in large contact networks that show the effectiveness of our approach.
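To make the factored idea concrete, the sketch below tracks an SIS (susceptible-infected-susceptible) epidemic on a small contact network with a fully factored belief: one infection probability per node, each predicted from its neighbours' marginals and then updated by a local Bayes rule from noisy test results. This is a simplified illustration with single-node factors and known parameters. It leaves out the conditional parameter-estimation part of the factored conditional filter, and the epidemic model, parameter names and function names are assumptions.

```python
import numpy as np


def sis_predict(p, neighbours, beta, gamma):
    """Factored prediction step for an SIS epidemic on a contact network.

    p[i] is the marginal probability that node i is infected. The joint belief is
    approximated by a product of per-node factors, so each node's update only needs
    its neighbours' marginals. beta: per-contact infection probability; gamma: recovery.
    """
    p = np.asarray(p, dtype=float)
    p_next = np.empty_like(p)
    for i, nbrs in enumerate(neighbours):
        # Probability that no neighbour infects node i (empty product is 1.0).
        escape = np.prod([1.0 - beta * p[j] for j in nbrs])
        p_next[i] = p[i] * (1.0 - gamma) + (1.0 - p[i]) * (1.0 - escape)
    return p_next


def sis_update(p, observations, sensitivity, specificity):
    """Local Bayes update with noisy test results given as {node: positive?}."""
    p = np.array(p, dtype=float)  # copy so the caller's array is unchanged
    for i, positive in observations.items():
        like_inf = sensitivity if positive else 1.0 - sensitivity
        like_sus = 1.0 - specificity if positive else specificity
        num = p[i] * like_inf
        p[i] = num / (num + (1.0 - p[i]) * like_sus)
    return p


# Hypothetical usage: a 3-node path graph 0-1-2 with node 0 initially infected.
neighbours = [[1], [0, 2], [1]]
p = np.array([1.0, 0.0, 0.0])
p = sis_predict(p, neighbours, beta=0.3, gamma=0.1)
p = sis_update(p, {2: False}, sensitivity=0.9, specificity=0.95)
```

Each step costs time proportional to the number of edges rather than to the 2^n joint states, which is the kind of saving that motivates factoring; the price is that correlations between neighbouring nodes are ignored.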

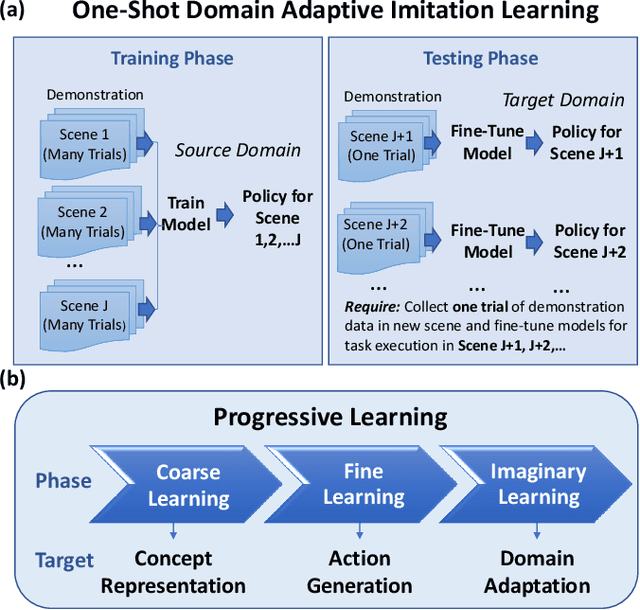

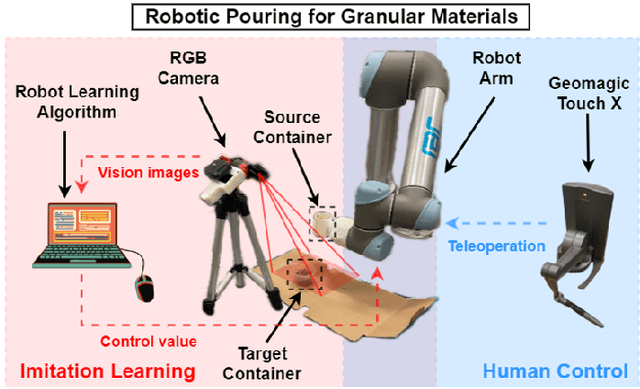

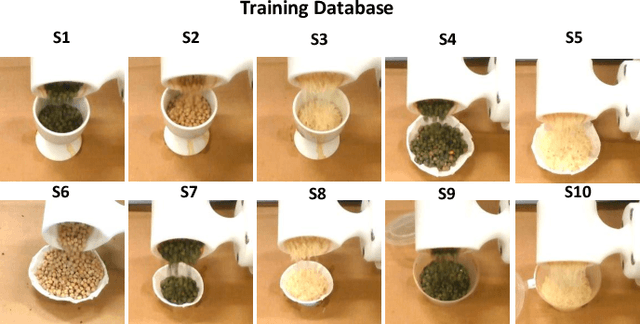

One-Shot Domain-Adaptive Imitation Learning via Progressive Learning

Apr 24, 2022

Abstract: Traditional deep learning-based visual imitation learning techniques require a large amount of demonstration data for model training, and the pre-trained models are difficult to adapt to new scenarios. To address these limitations, we propose a unified framework using a novel progressive learning approach comprising three phases: i) a coarse learning phase for concept representation, ii) a fine learning phase for action generation, and iii) an imaginary learning phase for domain adaptation. Overall, this approach leads to a one-shot domain-adaptive imitation learning framework. We use a robotic pouring task as an example to evaluate its effectiveness. Our results show that the method has several advantages over contemporary end-to-end imitation learning approaches, including an improved success rate for task execution and more efficient training for deep imitation learning. In addition, the generalizability to new domains is improved, as demonstrated here with novel background, target container and granule combinations. We believe that the proposed method can be broadly applicable to different industrial or domestic applications that involve deep imitation learning for robotic manipulation, where the target scenarios have high diversity while the human demonstration data is limited.

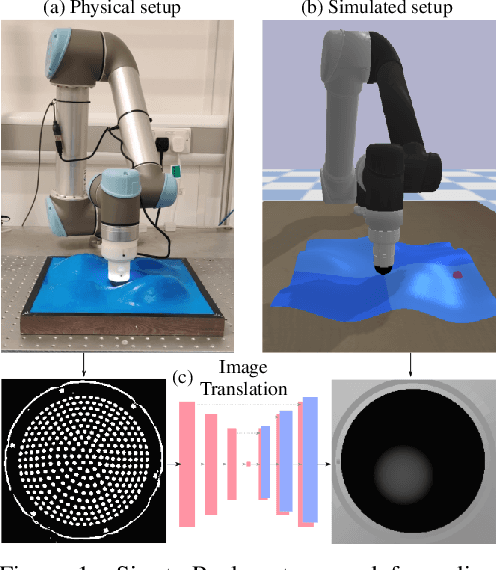

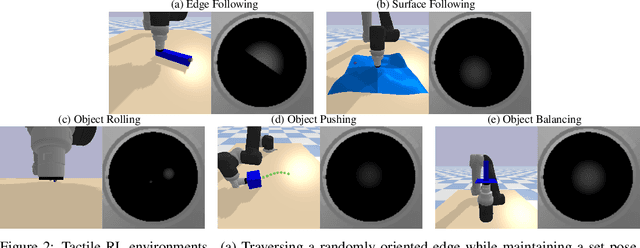

Optical Tactile Sim-to-Real Policy Transfer via Real-to-Sim Tactile Image Translation

Jun 16, 2021

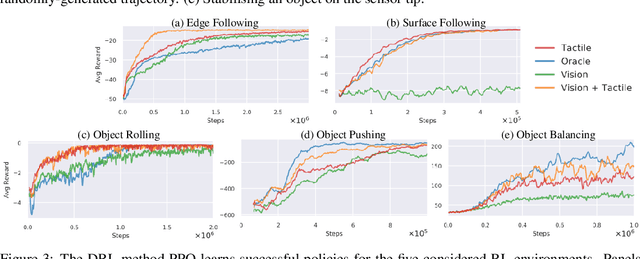

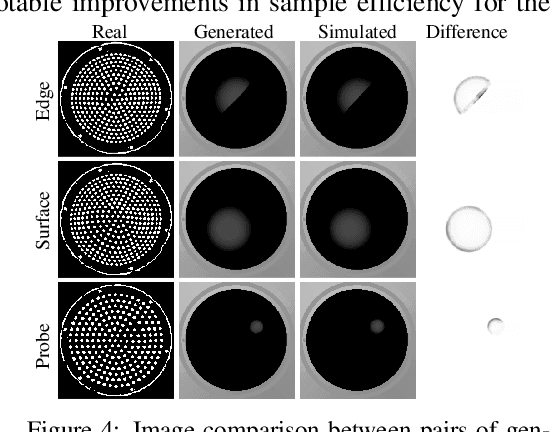

Abstract: Simulation has recently become key for deep reinforcement learning to safely and efficiently acquire general and complex control policies from visual and proprioceptive inputs. Tactile information is not usually considered despite its direct relation to environment interaction. In this work, we present a suite of simulated environments tailored towards tactile robotics and reinforcement learning. A simple and fast method of simulating optical tactile sensors is provided, where high-resolution contact geometry is represented as depth images. Proximal Policy Optimisation (PPO) is used to learn successful policies across all considered tasks. A data-driven approach enables translation of the current state of a real tactile sensor to corresponding simulated depth images. The learned policies are implemented within a real-time control loop on a physical robot to demonstrate zero-shot sim-to-real policy transfer on several physically-interactive tasks requiring a sense of touch.
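One simple way to turn simulated contact geometry into a tactile depth image, assuming a depth camera rendered from inside the simulated sensor, is to subtract each frame from a no-contact reference render and normalise the result. The sketch below is illustrative only: the function names, the reference-subtraction step, the `max_indent` clipping value and the block-average downsampling are assumptions rather than the exact Tactile Gym pipeline.

```python
import numpy as np


def tactile_depth_image(depth, ref_depth, max_indent=1.0):
    """Convert a rendered depth map into a normalised indentation image in [0, 1].

    depth: depth map rendered by a camera inside the simulated sensor.
    ref_depth: the same render with no contact (undeformed sensor surface).
    Pixels where the object is closer to the camera than the undeformed surface
    count as contact; 0 means no contact, 1 means indentation >= max_indent.
    """
    indentation = np.asarray(ref_depth, dtype=float) - np.asarray(depth, dtype=float)
    return np.clip(indentation, 0.0, max_indent) / max_indent


def downsample(img, factor):
    """Block-average downsampling; both image sides must be divisible by factor."""
    h, w = img.shape
    return img.reshape(h // factor, factor, w // factor, factor).mean(axis=(1, 3))


# Hypothetical usage: a flat 4x4 reference surface with one indented corner.
ref = np.full((4, 4), 5.0)
depth = ref.copy()
depth[:2, :2] = 4.5
img = downsample(tactile_depth_image(depth, ref), 2)
```

A depth-style image like this is cheap to render in simulation, which fits the abstract's emphasis on a simple and fast sensor model; matching it to real sensor images is then left to the data-driven real-to-sim translation step.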
