Jingwen Su

PoseAnimate: Zero-shot high fidelity pose controllable character animation

Apr 30, 2024

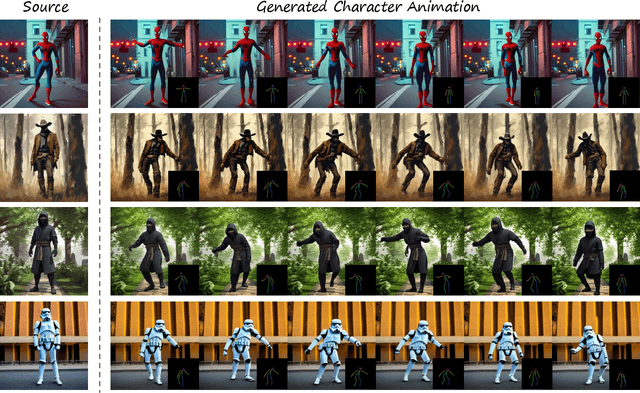

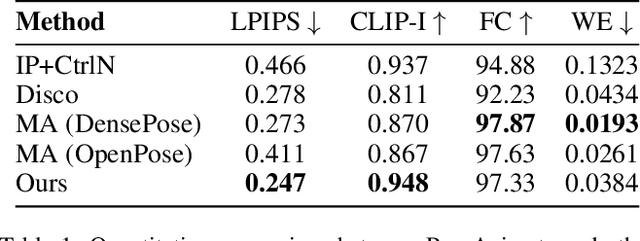

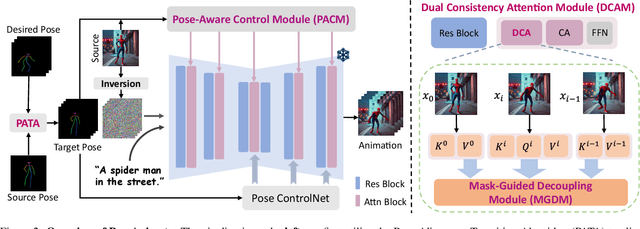

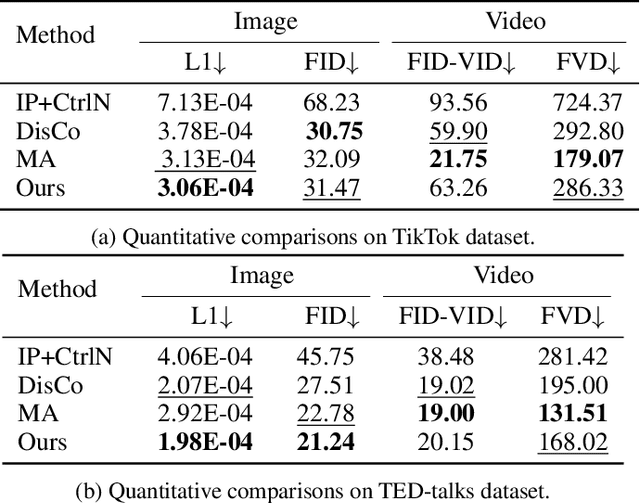

Abstract:Image-to-video(I2V) generation aims to create a video sequence from a single image, which requires high temporal coherence and visual fidelity with the source image.However, existing approaches suffer from character appearance inconsistency and poor preservation of fine details. Moreover, they require a large amount of video data for training, which can be computationally demanding.To address these limitations,we propose PoseAnimate, a novel zero-shot I2V framework for character animation.PoseAnimate contains three key components: 1) Pose-Aware Control Module (PACM) incorporates diverse pose signals into conditional embeddings, to preserve character-independent content and maintain precise alignment of actions.2) Dual Consistency Attention Module (DCAM) enhances temporal consistency, and retains character identity and intricate background details.3) Mask-Guided Decoupling Module (MGDM) refines distinct feature perception, improving animation fidelity by decoupling the character and background.We also propose a Pose Alignment Transition Algorithm (PATA) to ensure smooth action transition.Extensive experiment results demonstrate that our approach outperforms the state-of-the-art training-based methods in terms of character consistency and detail fidelity. Moreover, it maintains a high level of temporal coherence throughout the generated animations.

LoopAnimate: Loopable Salient Object Animation

Apr 14, 2024Abstract:Research on diffusion model-based video generation has advanced rapidly. However, limitations in object fidelity and generation length hinder its practical applications. Additionally, specific domains like animated wallpapers require seamless looping, where the first and last frames of the video match seamlessly. To address these challenges, this paper proposes LoopAnimate, a novel method for generating videos with consistent start and end frames. To enhance object fidelity, we introduce a framework that decouples multi-level image appearance and textual semantic information. Building upon an image-to-image diffusion model, our approach incorporates both pixel-level and feature-level information from the input image, injecting image appearance and textual semantic embeddings at different positions of the diffusion model. Existing UNet-based video generation models require to input the entire videos during training to encode temporal and positional information at once. However, due to limitations in GPU memory, the number of frames is typically restricted to 16. To address this, this paper proposes a three-stage training strategy with progressively increasing frame numbers and reducing fine-tuning modules. Additionally, we introduce the Temporal E nhanced Motion Module(TEMM) to extend the capacity for encoding temporal and positional information up to 36 frames. The proposed LoopAnimate, which for the first time extends the single-pass generation length of UNet-based video generation models to 35 frames while maintaining high-quality video generation. Experiments demonstrate that LoopAnimate achieves state-of-the-art performance in both objective metrics, such as fidelity and temporal consistency, and subjective evaluation results.

Lightweight high-resolution Subject Matting in the Real World

Dec 12, 2023

Abstract:Existing saliency object detection (SOD) methods struggle to satisfy fast inference and accurate results simultaneously in high resolution scenes. They are limited by the quality of public datasets and efficient network modules for high-resolution images. To alleviate these issues, we propose to construct a saliency object matting dataset HRSOM and a lightweight network PSUNet. Considering efficient inference of mobile depolyment framework, we design a symmetric pixel shuffle module and a lightweight module TRSU. Compared to 13 SOD methods, the proposed PSUNet has the best objective performance on the high-resolution benchmark dataset. Evaluation results of objective assessment are superior compared to U$^2$Net that has 10 times of parameter amount of our network. On Snapdragon 8 Gen 2 Mobile Platform, inference a single 640$\times$640 image only takes 113ms. And on the subjective assessment, evaluation results are better than the industry benchmark IOS16 (Lift subject from background).

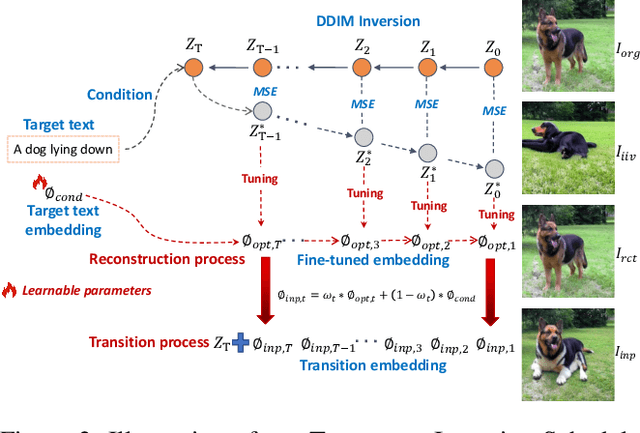

BARET : Balanced Attention based Real image Editing driven by Target-text Inversion

Dec 09, 2023

Abstract:Image editing approaches with diffusion models have been rapidly developed, yet their applicability are subject to requirements such as specific editing types (e.g., foreground or background object editing, style transfer), multiple conditions (e.g., mask, sketch, caption), and time consuming fine-tuning of diffusion models. For alleviating these limitations and realizing efficient real image editing, we propose a novel editing technique that only requires an input image and target text for various editing types including non-rigid edits without fine-tuning diffusion model. Our method contains three novelties:(I) Target-text Inversion Schedule (TTIS) is designed to fine-tune the input target text embedding to achieve fast image reconstruction without image caption and acceleration of convergence.(II) Progressive Transition Scheme applies progressive linear interpolation between target text embedding and its fine-tuned version to generate transition embedding for maintaining non-rigid editing capability.(III) Balanced Attention Module (BAM) balances the tradeoff between textual description and image semantics.By the means of combining self-attention map from reconstruction process and cross-attention map from transition process, the guidance of target text embeddings in diffusion process is optimized.In order to demonstrate editing capability, effectiveness and efficiency of the proposed BARET, we have conducted extensive qualitative and quantitative experiments. Moreover, results derived from user study and ablation study further prove the superiority over other methods.

EA-BEV: Edge-aware Bird' s-Eye-View Projector for 3D Object Detection

Apr 18, 2023

Abstract:In recent years, great progress has been made in the Lift-Splat-Shot-based (LSS-based) 3D object detection method, which converts features of 2D camera view and 3D lidar view to Bird's-Eye-View (BEV) for feature fusion. However, inaccurate depth estimation (e.g. the 'depth jump' problem) is an obstacle to develop LSS-based methods. To alleviate the 'depth jump' problem, we proposed Edge-Aware Bird's-Eye-View (EA-BEV) projector. By coupling proposed edge-aware depth fusion module and depth estimate module, the proposed EA-BEV projector solves the problem and enforces refined supervision on depth. Besides, we propose sparse depth supervision and gradient edge depth supervision, for constraining learning on global depth and local marginal depth information. Our EA-BEV projector is a plug-and-play module for any LSS-based 3D object detection models, and effectively improves the baseline performance. We demonstrate the effectiveness on the nuScenes benchmark. On the nuScenes 3D object detection validation dataset, our proposed EA-BEV projector can boost several state-of-the-art LLS-based baselines on nuScenes 3D object detection benchmark and nuScenes BEV map segmentation benchmark with negligible increment of inference time.

GAM : Gradient Attention Module of Optimization for Point Clouds Analysis

Mar 21, 2023Abstract:In point cloud analysis tasks, the existing local feature aggregation descriptors (LFAD) are unable to fully utilize information in the neighborhood of central points. Previous methods rely solely on Euclidean distance to constrain the local aggregation process, which can be easily affected by abnormal points and cannot adequately fit with the original geometry of the point cloud. We believe that fine-grained geometric information (FGGI) is significant for the aggregation of local features. Therefore, we propose a gradient-based local attention module, termed as Gradient Attention Module (GAM), to address the aforementioned problem. Our proposed GAM simplifies the process that extracts gradient information in the neighborhood and uses the Zenith Angle matrix and Azimuth Angle matrix as explicit representation, which accelerates the module by 35X. Comprehensive experiments were conducted on five benchmark datasets to demonstrate the effectiveness and generalization capability of the proposed GAM for 3D point cloud analysis. Especially on S3DIS dataset, GAM achieves the best performance among current point-based models with mIoU/OA/mAcc of 74.4%/90.6%/83.2%, respectively.

* In AAAI, 2023

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge