Jianxing Zhang

PerTouch: VLM-Driven Agent for Personalized and Semantic Image Retouching

Nov 17, 2025Abstract:Image retouching aims to enhance visual quality while aligning with users' personalized aesthetic preferences. To address the challenge of balancing controllability and subjectivity, we propose a unified diffusion-based image retouching framework called PerTouch. Our method supports semantic-level image retouching while maintaining global aesthetics. Using parameter maps containing attribute values in specific semantic regions as input, PerTouch constructs an explicit parameter-to-image mapping for fine-grained image retouching. To improve semantic boundary perception, we introduce semantic replacement and parameter perturbation mechanisms in the training process. To connect natural language instructions with visual control, we develop a VLM-driven agent that can handle both strong and weak user instructions. Equipped with mechanisms of feedback-driven rethinking and scene-aware memory, PerTouch better aligns with user intent and captures long-term preferences. Extensive experiments demonstrate each component's effectiveness and the superior performance of PerTouch in personalized image retouching. Code is available at: https://github.com/Auroral703/PerTouch.

NTIRE 2025 Challenge on Real-World Face Restoration: Methods and Results

Apr 20, 2025Abstract:This paper provides a review of the NTIRE 2025 challenge on real-world face restoration, highlighting the proposed solutions and the resulting outcomes. The challenge focuses on generating natural, realistic outputs while maintaining identity consistency. Its goal is to advance state-of-the-art solutions for perceptual quality and realism, without imposing constraints on computational resources or training data. The track of the challenge evaluates performance using a weighted image quality assessment (IQA) score and employs the AdaFace model as an identity checker. The competition attracted 141 registrants, with 13 teams submitting valid models, and ultimately, 10 teams achieved a valid score in the final ranking. This collaborative effort advances the performance of real-world face restoration while offering an in-depth overview of the latest trends in the field.

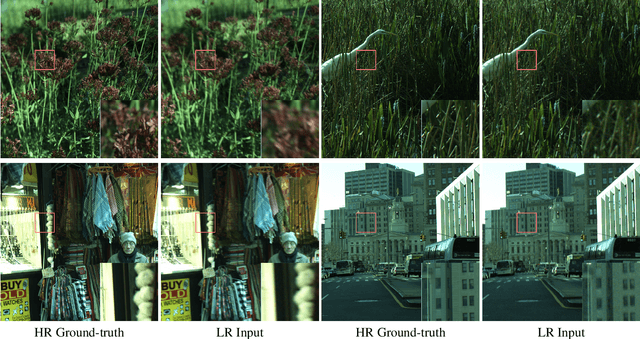

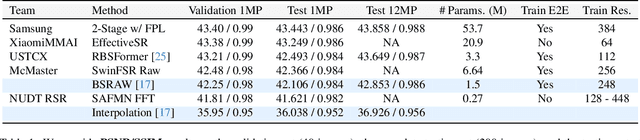

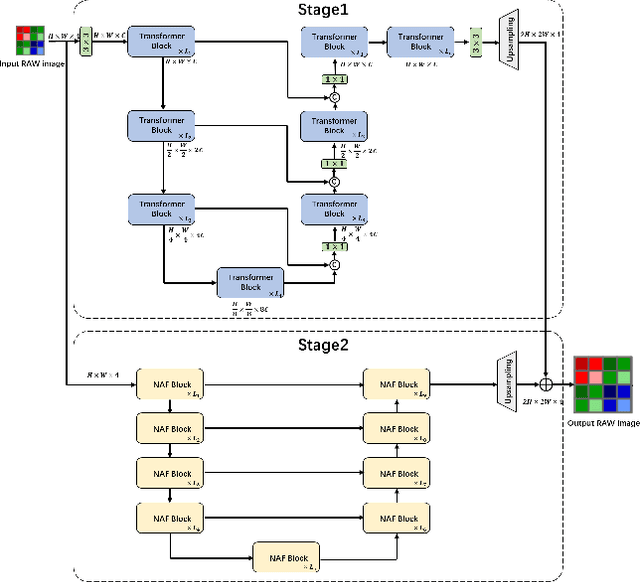

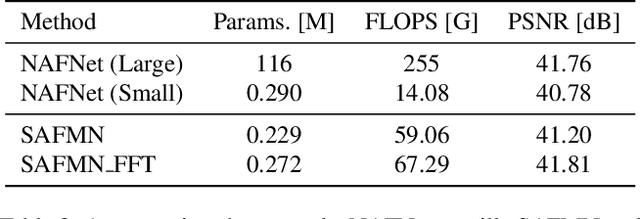

Deep RAW Image Super-Resolution. A NTIRE 2024 Challenge Survey

Apr 24, 2024

Abstract:This paper reviews the NTIRE 2024 RAW Image Super-Resolution Challenge, highlighting the proposed solutions and results. New methods for RAW Super-Resolution could be essential in modern Image Signal Processing (ISP) pipelines, however, this problem is not as explored as in the RGB domain. Th goal of this challenge is to upscale RAW Bayer images by 2x, considering unknown degradations such as noise and blur. In the challenge, a total of 230 participants registered, and 45 submitted results during thee challenge period. The performance of the top-5 submissions is reviewed and provided here as a gauge for the current state-of-the-art in RAW Image Super-Resolution.

Bridging Data-Driven and Knowledge-Driven Approaches for Safety-Critical Scenario Generation in Automated Vehicle Validation

Nov 18, 2023

Abstract:Automated driving vehicles~(ADV) promise to enhance driving efficiency and safety, yet they face intricate challenges in safety-critical scenarios. As a result, validating ADV within generated safety-critical scenarios is essential for both development and performance evaluations. This paper investigates the complexities of employing two major scenario-generation solutions: data-driven and knowledge-driven methods. Data-driven methods derive scenarios from recorded datasets, efficiently generating scenarios by altering the existing behavior or trajectories of traffic participants but often falling short in considering ADV perception; knowledge-driven methods provide effective coverage through expert-designed rules, but they may lead to inefficiency in generating safety-critical scenarios within that coverage. To overcome these challenges, we introduce BridgeGen, a safety-critical scenario generation framework, designed to bridge the benefits of both methodologies. Specifically, by utilizing ontology-based techniques, BridgeGen models the five scenario layers in the operational design domain (ODD) from knowledge-driven methods, ensuring broad coverage, and incorporating data-driven strategies to efficiently generate safety-critical scenarios. An optimized scenario generation toolkit is developed within BridgeGen. This expedites the crafting of safety-critical scenarios through a combination of traditional optimization and reinforcement learning schemes. Extensive experiments conducted using Carla simulator demonstrate the effectiveness of BridgeGen in generating diverse safety-critical scenarios.

Improving Classification Model Performance on Chest X-Rays through Lung Segmentation

Feb 22, 2022

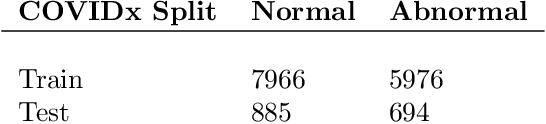

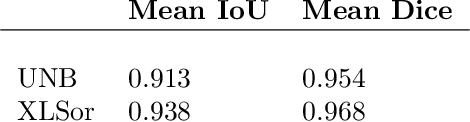

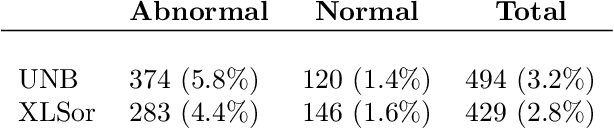

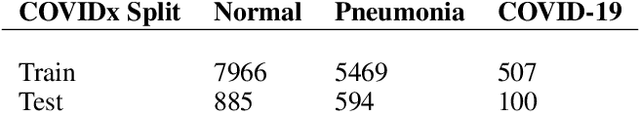

Abstract:Chest radiography is an effective screening tool for diagnosing pulmonary diseases. In computer-aided diagnosis, extracting the relevant region of interest, i.e., isolating the lung region of each radiography image, can be an essential step towards improved performance in diagnosing pulmonary disorders. Methods: In this work, we propose a deep learning approach to enhance abnormal chest x-ray (CXR) identification performance through segmentations. Our approach is designed in a cascaded manner and incorporates two modules: a deep neural network with criss-cross attention modules (XLSor) for localizing lung region in CXR images and a CXR classification model with a backbone of a self-supervised momentum contrast (MoCo) model pre-trained on large-scale CXR data sets. The proposed pipeline is evaluated on Shenzhen Hospital (SH) data set for the segmentation module, and COVIDx data set for both segmentation and classification modules. Novel statistical analysis is conducted in addition to regular evaluation metrics for the segmentation module. Furthermore, the results of the optimized approach are analyzed with gradient-weighted class activation mapping (Grad-CAM) to investigate the rationale behind the classification decisions and to interpret its choices. Results and Conclusion: Different data sets, methods, and scenarios for each module of the proposed pipeline are examined for designing an optimized approach, which has achieved an accuracy of 0.946 in distinguishing abnormal CXR images (i.e., Pneumonia and COVID-19) from normal ones. Numerical and visual validations suggest that applying automated segmentation as a pre-processing step for classification improves the generalization capability and the performance of the classification models.

COVID-19 Detection from Chest X-ray Images using Imprinted Weights Approach

May 04, 2021

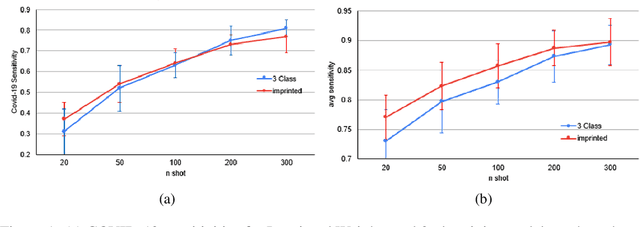

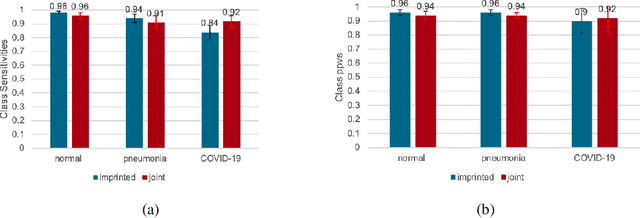

Abstract:The COVID-19 pandemic has had devastating effects on the well-being of the global population. The pandemic has been so prominent partly due to the high infection rate of the virus and its variants. In response, one of the most effective ways to stop infection is rapid diagnosis. The main-stream screening method, reverse transcription-polymerase chain reaction (RT-PCR), is time-consuming, laborious and in short supply. Chest radiography is an alternative screening method for the COVID-19 and computer-aided diagnosis (CAD) has proven to be a viable solution at low cost and with fast speed; however, one of the challenges in training the CAD models is the limited number of training data, especially at the onset of the pandemic. This becomes outstanding precisely when the quick and cheap type of diagnosis is critically needed for flattening the infection curve. To address this challenge, we propose the use of a low-shot learning approach named imprinted weights, taking advantage of the abundance of samples from known illnesses such as pneumonia to improve the detection performance on COVID-19.

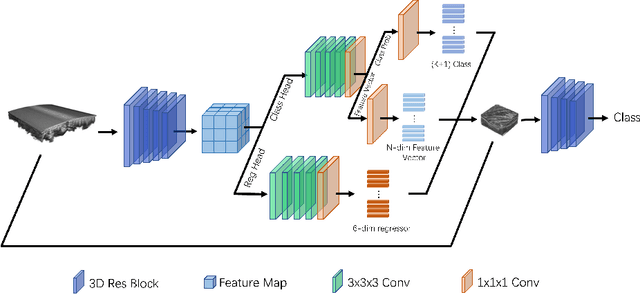

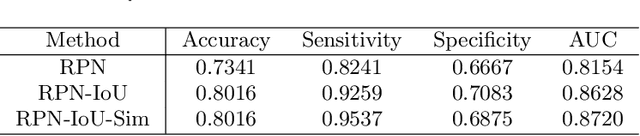

Computer-aided Tumor Diagnosis in Automated Breast Ultrasound using 3D Detection Network

Jul 31, 2020

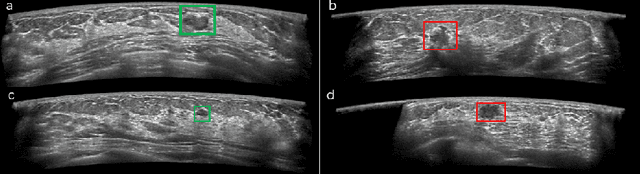

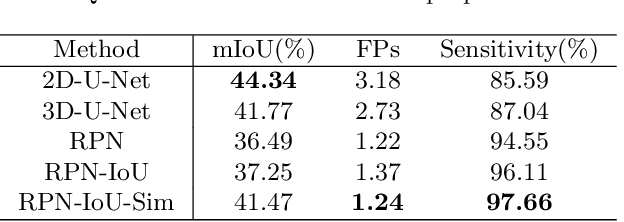

Abstract:Automated breast ultrasound (ABUS) is a new and promising imaging modality for breast cancer detection and diagnosis, which could provide intuitive 3D information and coronal plane information with great diagnostic value. However, manually screening and diagnosing tumors from ABUS images is very time-consuming and overlooks of abnormalities may happen. In this study, we propose a novel two-stage 3D detection network for locating suspected lesion areas and further classifying lesions as benign or malignant tumors. Specifically, we propose a 3D detection network rather than frequently-used segmentation network to locate lesions in ABUS images, thus our network can make full use of the spatial context information in ABUS images. A novel similarity loss is designed to effectively distinguish lesions from background. Then a classification network is employed to identify the located lesions as benign or malignant. An IoU-balanced classification loss is adopted to improve the correlation between classification and localization task. The efficacy of our network is verified from a collected dataset of 418 patients with 145 benign tumors and 273 malignant tumors. Experiments show our network attains a sensitivity of 97.66% with 1.23 false positives (FPs), and has an area under the curve(AUC) value of 0.8720.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge