Hamed Ahmadi

Failure-Aware Access Point Selection for Resilient Cell-Free Massive MIMO Networks

Feb 18, 2026

Abstract:This paper presents a Failure-Aware Access Point Selection (FAAS) method aimed at improving hardware resilience in cell-free massive MIMO (CF-mMIMO) networks. FAAS selects APs for each user by jointly considering channel strength and the failure probability of each AP. A tunable parameter \(\alpha \in [0,1]\) scales these failure probabilities to model different levels of network stress. We evaluate resilience using two key metrics: the minimum-user spectral efficiency, which captures worst-case user performance, and the outage probability, defined as the fraction of users left without any active APs. Simulation results show that FAAS maintains significantly better performance under failure conditions compared to failure-agnostic clustering. At high failure levels, FAAS reduces outage by over 85% and improves worst-case user rates. These results confirm that FAAS is a practical and efficient solution for building more reliable CF-mMIMO networks.
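A minimal sketch of how failure-aware selection and the outage metric could be computed. The scoring rule (channel gain multiplied by `1 - alpha * p_fail`), the cluster size `k`, and the function names are illustrative assumptions; the abstract does not state the rule FAAS actually uses.

```python
def select_aps(gains, fail_probs, alpha, k):
    """Pick k APs for one user.

    Assumed score: channel gain discounted by the scaled failure
    probability. alpha = 0 reduces to failure-agnostic selection.
    """
    scores = [g * (1.0 - alpha * p) for g, p in zip(gains, fail_probs)]
    ranked = sorted(range(len(gains)), key=lambda i: scores[i], reverse=True)
    return ranked[:k]


def outage_fraction(clusters, active):
    """Fraction of users whose selected APs are all inactive."""
    in_outage = sum(1 for c in clusters if not any(active[i] for i in c))
    return in_outage / len(clusters)


# Hypothetical example: AP 0 has the strongest channel but is likely to fail.
gains = [1.0, 0.9, 0.2]
fail_probs = [0.9, 0.1, 0.1]
agnostic = select_aps(gains, fail_probs, alpha=0.0, k=1)  # picks AP 0
aware = select_aps(gains, fail_probs, alpha=1.0, k=1)     # picks AP 1
```

With `alpha = 0` the ranking depends on channel gain alone, so the same function also serves as a failure-agnostic baseline for comparison.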

Interpretable Attention-Based Multi-Agent PPO for Latency Spike Resolution in 6G RAN Slicing

Feb 11, 2026

Abstract:Sixth-generation (6G) radio access networks (RANs) must enforce strict service-level agreements (SLAs) for heterogeneous slices, yet sudden latency spikes remain difficult to diagnose and resolve with conventional deep reinforcement learning (DRL) or explainable RL (XRL). We propose Attention-Enhanced Multi-Agent Proximal Policy Optimization (AE-MAPPO), which integrates six specialized attention mechanisms into multi-agent slice control and surfaces them as zero-cost, faithful explanations. The framework operates across O-RAN timescales with a three-phase strategy: predictive, reactive, and inter-slice optimization. A URLLC case study shows AE-MAPPO resolves a latency spike in 18 ms, restores latency to 0.98 ms with 99.9999% reliability, and reduces troubleshooting time by 93% while maintaining eMBB and mMTC continuity. These results confirm AE-MAPPO's ability to combine SLA compliance with inherent interpretability, enabling trustworthy and real-time automation for 6G RAN slicing.

HAPS-RIS and UAV Integrated Networks: A Unified Joint Multi-objective Framework

Feb 10, 2026

Abstract:Future 6G non-terrestrial networks aim to deliver ubiquitous connectivity to remote and underserved regions, but unmanned aerial vehicle (UAV) base stations face fundamental challenges such as limited numbers and power budgets. To overcome these obstacles, a high-altitude platform station (HAPS) equipped with a reconfigurable intelligent surface (RIS), so-called HAPS-RIS, is a promising candidate. We propose a novel unified joint multi-objective framework where UAVs and HAPS-RIS are fully integrated to extend coverage and enhance network performance. This joint multi-objective design maximizes the number of users served by the HAPS-RIS, minimizes the number of UAVs deployed, and minimizes the total average UAV path loss subject to quality-of-service (QoS) and resource constraints. We propose a novel low-complexity solution strategy by proving the equivalence between minimizing the total average UAV path loss upper bound and k-means clustering, deriving a practical closed-form RIS phase-shift design, and introducing a mapping technique that collapses the combinatorial assignments into a zone radius and a bandwidth-portioning factor. Then, we propose a dynamic Pareto optimization technique to solve the transformed optimization problem. Extensive simulation results demonstrate that the proposed framework adapts seamlessly across operating regimes. A HAPS-RIS-only setup achieves full coverage at low data rates, but UAV assistance becomes indispensable as rate demands increase. By tuning a single bandwidth-portioning factor, the model recovers UAV-only, HAPS-RIS-only, and equal bandwidth-portioning baselines within one formulation and consistently surpasses them across diverse rate requirements. The simulations also quantify a tangible trade-off between RIS scale and UAV deployment, enabling designers to trade increased RIS elements for fewer UAVs as service demands evolve.
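The k-means step mentioned in this abstract can be illustrated with a plain Lloyd's-algorithm sketch. It assumes 2-D user coordinates and ordinary Euclidean distance as a stand-in for the path-loss upper bound, which the abstract does not spell out; the function name and the example data are hypothetical.

```python
import math


def kmeans(points, centers, iters=20):
    """Lloyd's algorithm on 2-D points, starting from the given centers.

    Each center plays the role of a UAV's horizontal position; each point
    is a user location.
    """
    centers = list(centers)
    assign = [0] * len(points)
    for _ in range(iters):
        # Assignment step: each user joins the nearest UAV position.
        assign = [
            min(range(len(centers)), key=lambda j: math.dist(p, centers[j]))
            for p in points
        ]
        # Update step: move each UAV to the centroid of its users.
        for j in range(len(centers)):
            members = [p for p, a in zip(points, assign) if a == j]
            if members:  # keep the old position if no user was assigned
                centers[j] = (
                    sum(x for x, _ in members) / len(members),
                    sum(y for _, y in members) / len(members),
                )
    return centers, assign


# Hypothetical layout: two well-separated user groups, two UAVs.
users = [(0, 0), (0, 2), (10, 0), (10, 2)]
uav_positions, user_to_uav = kmeans(users, centers=[(0, 0), (10, 0)])
```

In this toy layout each UAV settles at the centroid of one user group, which is the behavior the clustering equivalence relies on.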

Enhancing Open RAN Digital Twin Through Power Consumption Measurement

Jul 01, 2025

Abstract:The increasing demand for high-speed, ultra-reliable and low-latency communications in 5G and beyond networks has led to a significant increase in power consumption, particularly within the Radio Access Network (RAN). This growing energy demand raises operational and sustainability challenges for mobile network operators, requiring novel solutions to enhance energy efficiency while maintaining Quality of Service (QoS). 5G networks are evolving towards disaggregated, programmable, and intelligent architectures, with Open Radio Access Network (O-RAN), spearheaded by the O-RAN Alliance, enabling greater flexibility, interoperability, and cost-effectiveness. However, this disaggregated approach introduces new complexities, especially in terms of power consumption across different network components, including Open Radio Units (RUs), Open Distributed Units (DUs) and Open Central Units (CUs). Understanding the power efficiency of different O-RAN functional splits is crucial for optimising energy consumption and network sustainability. In this paper, we present a comprehensive measurement study of power consumption in RUs, DUs and CUs under varying network loads, specifically analysing the impact of physical resource block (PRB) utilisation in Split 8 and Split 7.2b. The measurements were conducted on both software-defined radio (SDR)-based RUs and commercial indoor and outdoor RUs, as well as their corresponding DUs and CUs. By evaluating real-world hardware deployments under different operational conditions, this study provides empirical insights into the power efficiency of various O-RAN configurations. The results highlight that power consumption does not scale significantly with network load, suggesting that a large portion of energy consumption remains constant regardless of traffic demand.

An Explainable AI Framework for Dynamic Resource Management in Vehicular Network Slicing

Jun 13, 2025

Abstract:Effective resource management and network slicing are essential to meet the diverse service demands of vehicular networks, including Enhanced Mobile Broadband (eMBB) and Ultra-Reliable and Low-Latency Communications (URLLC). This paper introduces an Explainable Deep Reinforcement Learning (XRL) framework for dynamic network slicing and resource allocation in vehicular networks, built upon a near-real-time RAN intelligent controller. By integrating a feature-based approach that leverages Shapley values and an attention mechanism, we interpret and refine the decisions of our reinforcement learning agents, addressing key reliability challenges in vehicular communication systems. Simulation results demonstrate that our approach provides clear, real-time insights into the resource allocation process and achieves higher interpretability precision than a pure attention mechanism. Furthermore, the Quality of Service (QoS) satisfaction for URLLC services increased from 78.0% to 80.13%, while that for eMBB services improved from 71.44% to 73.21%.

Large-Scale AI in Telecom: Charting the Roadmap for Innovation, Scalability, and Enhanced Digital Experiences

Mar 06, 2025

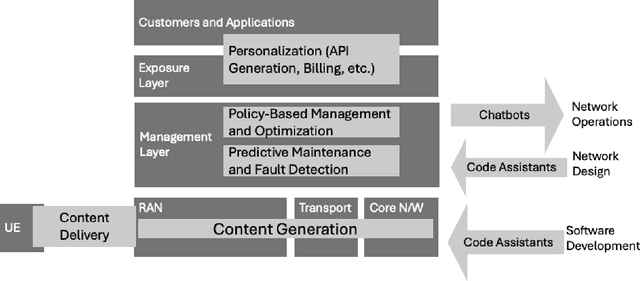

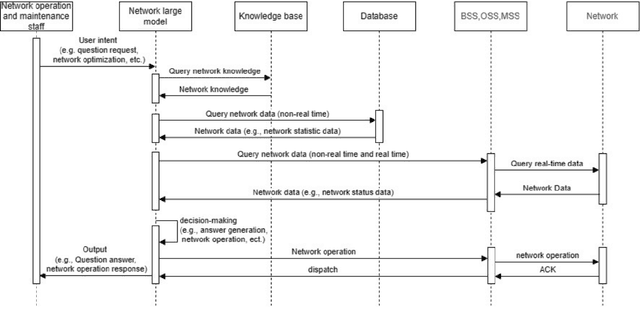

Abstract:This white paper discusses the role of large-scale AI in the telecommunications industry, with a specific focus on the potential of generative AI to revolutionize network functions and user experiences, especially in the context of 6G systems. It highlights the development and deployment of Large Telecom Models (LTMs), which are tailored AI models designed to address the complex challenges faced by modern telecom networks. The paper covers a wide range of topics, from the architecture and deployment strategies of LTMs to their applications in network management, resource allocation, and optimization. It also explores the regulatory, ethical, and standardization considerations for LTMs, offering insights into their future integration into telecom infrastructure. The goal is to provide a comprehensive roadmap for the adoption of LTMs to enhance scalability, performance, and user-centric innovation in telecom networks.

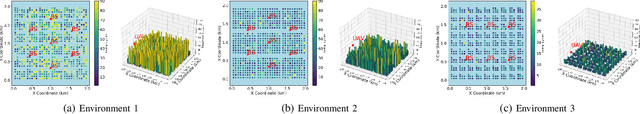

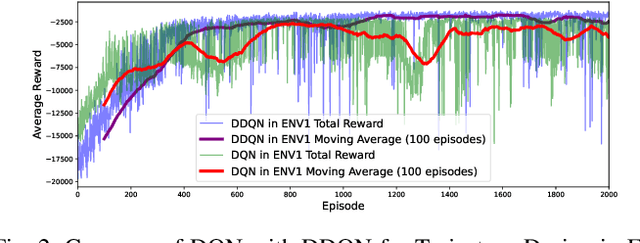

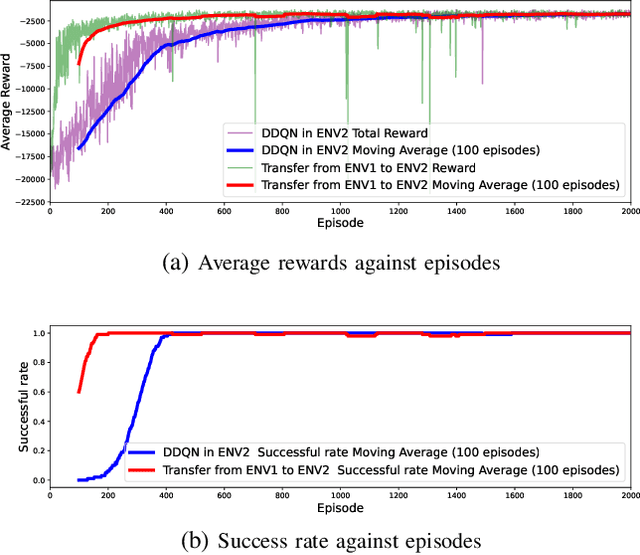

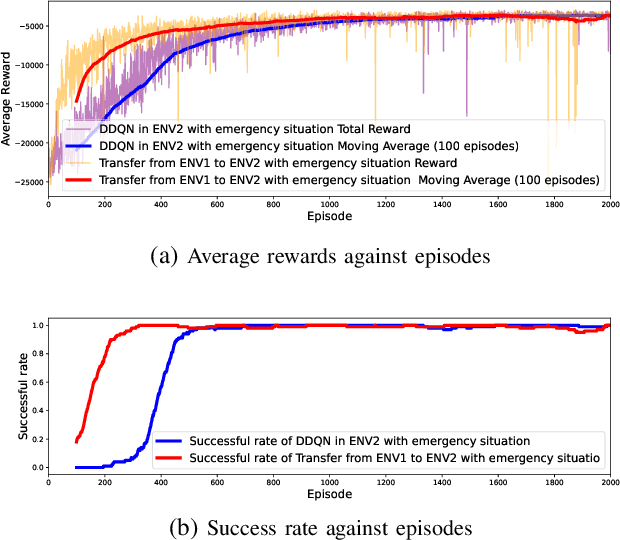

Energy Consumption Reduction for UAV Trajectory Training: A Transfer Learning Approach

Jan 20, 2025

Abstract:The advent of 6G technology demands flexible, scalable wireless architectures to support ultra-low latency, high connectivity, and high device density. The Open Radio Access Network (O-RAN) framework, with its open interfaces and virtualized functions, provides a promising foundation for such architectures. However, traditional fixed base stations alone are not sufficient to fully capitalize on the benefits of O-RAN due to their limited flexibility in responding to dynamic network demands. The integration of Unmanned Aerial Vehicles (UAVs) as mobile RUs within the O-RAN architecture offers a solution by leveraging the flexibility of drones to dynamically extend coverage. However, UAVs operating in diverse environments require frequent retraining, leading to significant energy waste. We propose transfer learning based on a Dueling Double Deep Q network (DDQN) with multi-step learning, which significantly reduces the training time and energy consumption required for UAVs to adapt to new environments. We designed simulation environments and conducted ray tracing experiments using Wireless InSite with real-world map data. In the two simulated environments, training energy consumption was reduced by 30.52% and 58.51%, respectively. Furthermore, tests on real-world maps of Ottawa and Rosslyn showed energy reductions of 44.85% and 36.97%, respectively.

Optimized Resource Allocation for Cloud-Native 6G Networks: Zero-Touch ML Models in Microservices-based VNF Deployments

Oct 09, 2024

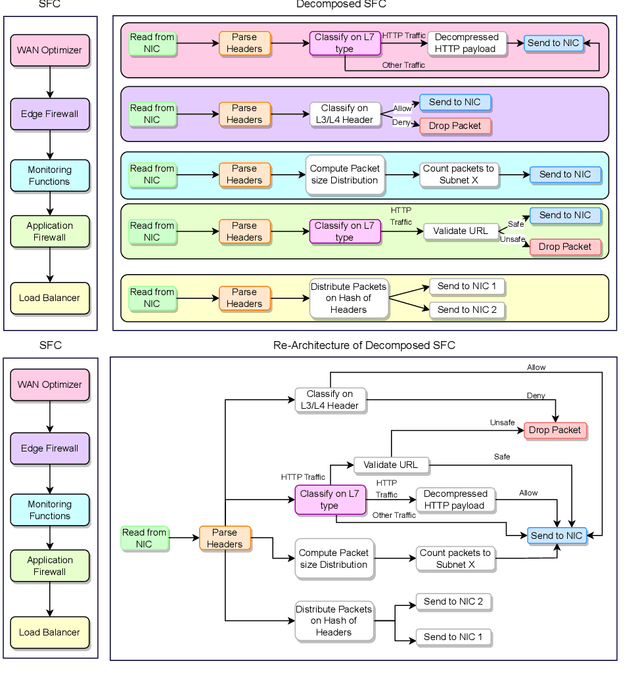

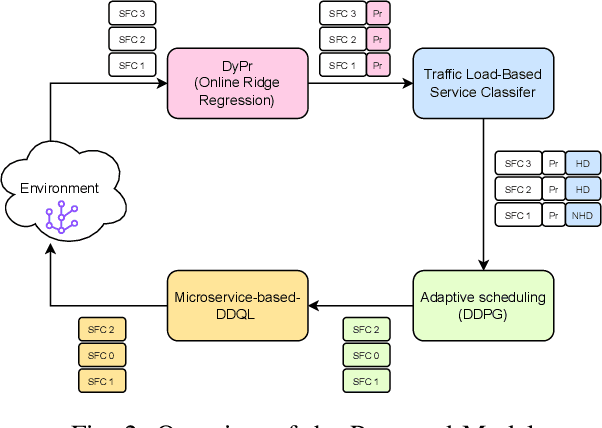

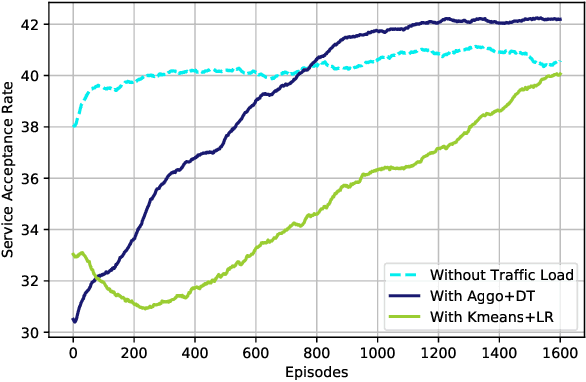

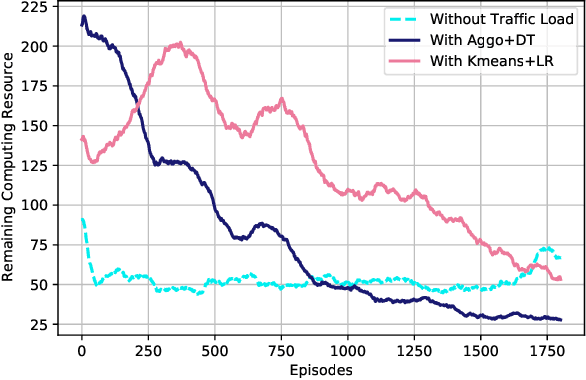

Abstract:6G, the next generation of mobile networks, is set to offer even higher data rates, ultra-reliability, and lower latency than 5G. New 6G services will increase the load and dynamism of the network. Network Function Virtualization (NFV) aids with this increased load and dynamism by eliminating hardware dependency. It aims to boost the flexibility and scalability of network deployment services by separating network functions from their specific proprietary forms so that they can run as virtual network functions (VNFs) on commodity hardware. It is essential to design an NFV orchestration and management framework to support these services. However, deploying bulky monolithic VNFs on the network is difficult, especially when underlying resources are scarce, resulting in ineffective resource management. To address this, microservices-based NFV approaches are proposed. In this approach, monolithic VNFs are decomposed into micro VNFs, increasing the likelihood of their successful placement and resulting in more efficient resource management. This article discusses the proposed framework for resource allocation for microservices-based services to provide end-to-end Quality of Service (QoS) using the Double Deep Q Learning (DDQL) approach. Furthermore, to enhance this resource allocation approach, we discuss and address two crucial sub-problems: the need for a dynamic priority technique and the presence of the low-priority starvation problem. Using the Deep Deterministic Policy Gradient (DDPG) model, an Adaptive Scheduling model is developed that effectively mitigates the starvation problem. Additionally, the impact of incorporating traffic load considerations into deployment and scheduling is thoroughly investigated.

Continuous Transfer Learning for UAV Communication-aware Trajectory Design

May 16, 2024

Abstract:Deep Reinforcement Learning (DRL) emerges as a prime solution for Unmanned Aerial Vehicle (UAV) trajectory planning, offering proficiency in navigating high-dimensional spaces, adaptability to dynamic environments, and the ability to make sequential decisions based on real-time feedback. Despite these advantages, the use of DRL for UAV trajectory planning requires significant retraining when the UAV is confronted with a new environment, resulting in wasted resources and time. Therefore, it is essential to develop techniques that can reduce the overhead of retraining DRL models, enabling them to adapt to constantly changing environments. This paper presents a novel method to reduce the need for extensive retraining using a double deep Q network (DDQN) model as a pretrained base, which is subsequently adapted to different urban environments through Continuous Transfer Learning (CTL). Our method involves transferring the learned model weights and adapting the learning parameters, including the learning and exploration rates, to suit each new environment's specific characteristics. The effectiveness of our approach is validated in three scenarios, each with different levels of similarity. CTL significantly improves learning speed and success rates compared to DDQN models initiated from scratch. In similar environments, Transfer Learning (TL) improved stability and accelerated convergence by 65%; in dissimilar settings, it facilitated 35% faster adaptation.

Enhancing Energy Efficiency in O-RAN Through Intelligent xApps Deployment

May 16, 2024

Abstract:The proliferation of 5G technology presents an unprecedented challenge in managing the energy consumption of densely deployed network infrastructures, particularly Base Stations (BSs), which account for the majority of power usage in mobile networks. The O-RAN architecture, with its emphasis on open and intelligent design, offers a promising framework to address the Energy Efficiency (EE) demands of modern telecommunication systems. This paper introduces two xApps designed for the O-RAN architecture to optimize power savings without compromising the Quality of Service (QoS). Utilizing a commercial RAN Intelligent Controller (RIC) simulator, we demonstrate the effectiveness of our proposed xApps through extensive simulations that reflect real-world operational conditions. Our results show a significant reduction in power consumption, achieving up to 50% power savings with a minimal number of User Equipments (UEs), by intelligently managing the operational state of Radio Cards (RCs), particularly through switching between active and sleep modes based on network resource block usage conditions.
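A minimal sketch of switching a Radio Card between active and sleep modes based on resource block usage. The two thresholds, the hysteresis gap between them, and the function name are assumptions made for illustration; the abstract does not describe the xApps' actual decision logic.

```python
def next_rc_state(state, prb_util, sleep_below=0.2, wake_above=0.6):
    """Return the next Radio Card state for one control interval.

    state: "active" or "sleep".
    prb_util: fraction of resource blocks in use, between 0.0 and 1.0.
    Two thresholds (assumed values) avoid rapid toggling when the load
    hovers near a single cut-off.
    """
    if state == "active" and prb_util < sleep_below:
        return "sleep"
    if state == "sleep" and prb_util > wake_above:
        return "active"
    return state


# Hypothetical load trace: the card sleeps at low load and wakes at high load.
state = "active"
trace = []
for util in [0.5, 0.1, 0.4, 0.7]:
    state = next_rc_state(state, util)
    trace.append(state)
```

A single threshold would also work, but the card could then flip state at every interval when utilisation sits near the cut-off.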
