Geri Skenderi

Hyperbolic Graph Neural Networks Under the Microscope: The Role of Geometry-Task Alignment

Feb 02, 2026Abstract:Many complex networks exhibit hyperbolic structural properties, making hyperbolic space a natural candidate for representing hierarchical and tree-like graphs with low distortion. Based on this observation, Hyperbolic Graph Neural Networks (HGNNs) have been widely adopted as a principled choice for representation learning on tree-like graphs. In this work, we question this paradigm by proposing an additional condition of geometry-task alignment, i.e., whether the metric structure of the target follows that of the input graph. We theoretically and empirically demonstrate the capability of HGNNs to recover low-distortion representations on two synthetic regression problems, and show that their geometric inductive bias becomes helpful when the problem requires preserving metric structure. Additionally, we evaluate HGNNs on the tasks of link prediction and node classification by jointly analyzing predictive performance and embedding distortion, revealing that only link prediction is geometry-aligned. Overall, our findings shift the focus from only asking "Is the graph hyperbolic?" to also questioning "Is the task aligned with hyperbolic geometry?", showing that HGNNs consistently outperform Euclidean models under such alignment, while their advantage vanishes otherwise.

On the Hardness of Learning GNN-based SAT Solvers: The Role of Graph Ricci Curvature

Aug 29, 2025Abstract:Graph Neural Networks (GNNs) have recently shown promise as solvers for Boolean Satisfiability Problems (SATs) by operating on graph representations of logical formulas. However, their performance degrades sharply on harder instances, raising the question of whether this reflects fundamental architectural limitations. In this work, we provide a geometric explanation through the lens of graph Ricci Curvature (RC), which quantifies local connectivity bottlenecks. We prove that bipartite graphs derived from random k-SAT formulas are inherently negatively curved, and that this curvature decreases with instance difficulty. Building on this, we show that GNN-based SAT solvers are affected by oversquashing, a phenomenon where long-range dependencies become impossible to compress into fixed-length representations. We validate our claims empirically across different SAT benchmarks and confirm that curvature is both a strong indicator of problem complexity and can be used to predict performance. Finally, we connect our findings to design principles of existing solvers and outline promising directions for future work.

Sub-graph Based Diffusion Model for Link Prediction

Sep 13, 2024Abstract:Denoising Diffusion Probabilistic Models (DDPMs) represent a contemporary class of generative models with exceptional qualities in both synthesis and maximizing the data likelihood. These models work by traversing a forward Markov Chain where data is perturbed, followed by a reverse process where a neural network learns to undo the perturbations and recover the original data. There have been increasing efforts exploring the applications of DDPMs in the graph domain. However, most of them have focused on the generative perspective. In this paper, we aim to build a novel generative model for link prediction. In particular, we treat link prediction between a pair of nodes as a conditional likelihood estimation of its enclosing sub-graph. With a dedicated design to decompose the likelihood estimation process via the Bayesian formula, we are able to separate the estimation of sub-graph structure and its node features. Such designs allow our model to simultaneously enjoy the advantages of inductive learning and the strong generalization capability. Remarkably, comprehensive experiments across various datasets validate that our proposed method presents numerous advantages: (1) transferability across datasets without retraining, (2) promising generalization on limited training data, and (3) robustness against graph adversarial attacks.

Disentangled Latent Spaces Facilitate Data-Driven Auxiliary Learning

Oct 13, 2023

Abstract:In deep learning, auxiliary objectives are often used to facilitate learning in situations where data is scarce, or the principal task is extremely complex. This idea is primarily inspired by the improved generalization capability induced by solving multiple tasks simultaneously, which leads to a more robust shared representation. Nevertheless, finding optimal auxiliary tasks that give rise to the desired improvement is a crucial problem that often requires hand-crafted solutions or expensive meta-learning approaches. In this paper, we propose a novel framework, dubbed Detaux, whereby a weakly supervised disentanglement procedure is used to discover new unrelated classification tasks and the associated labels that can be exploited with the principal task in any Multi-Task Learning (MTL) model. The disentanglement procedure works at a representation level, isolating a subspace related to the principal task, plus an arbitrary number of orthogonal subspaces. In the most disentangled subspaces, through a clustering procedure, we generate the additional classification tasks, and the associated labels become their representatives. Subsequently, the original data, the labels associated with the principal task, and the newly discovered ones can be fed into any MTL framework. Extensive validation on both synthetic and real data, along with various ablation studies, demonstrate promising results, revealing the potential in what has been, so far, an unexplored connection between learning disentangled representations and MTL. The code will be made publicly available upon acceptance.

Graph-level Representation Learning with Joint-Embedding Predictive Architectures

Sep 27, 2023

Abstract:Joint-Embedding Predictive Architectures (JEPAs) have recently emerged as a novel and powerful technique for self-supervised representation learning. They aim to learn an energy-based model by predicting the latent representation of a target signal $y$ from a context signal $x$. JEPAs bypass the need for data augmentation and negative samples, which are typically required by contrastive learning, while avoiding the overfitting issues associated with generative-based pretraining. In this paper, we show that graph-level representations can be effectively modeled using this paradigm and propose Graph-JEPA, the first JEPA for the graph domain. In particular, we employ masked modeling to learn embeddings for different subgraphs of the input graph. To endow the representations with the implicit hierarchy that is often present in graph-level concepts, we devise an alternative training objective that consists of predicting the coordinates of the encoded subgraphs on the unit hyperbola in the 2D plane. Extensive validation shows that Graph-JEPA can learn representations that are expressive and competitive in both graph classification and regression problems.

Neuro-symbolic Empowered Denoising Diffusion Probabilistic Models for Real-time Anomaly Detection in Industry 4.0

Jul 18, 2023

Abstract:Industry 4.0 involves the integration of digital technologies, such as IoT, Big Data, and AI, into manufacturing and industrial processes to increase efficiency and productivity. As these technologies become more interconnected and interdependent, Industry 4.0 systems become more complex, which brings the difficulty of identifying and stopping anomalies that may cause disturbances in the manufacturing process. This paper aims to propose a diffusion-based model for real-time anomaly prediction in Industry 4.0 processes. Using a neuro-symbolic approach, we integrate industrial ontologies in the model, thereby adding formal knowledge on smart manufacturing. Finally, we propose a simple yet effective way of distilling diffusion models through Random Fourier Features for deployment on an embedded system for direct integration into the manufacturing process. To the best of our knowledge, this approach has never been explored before.

On the use of learning-based forecasting methods for ameliorating fashion business processes: A position paper

Nov 09, 2022Abstract:The fashion industry is one of the most active and competitive markets in the world, manufacturing millions of products and reaching large audiences every year. A plethora of business processes are involved in this large-scale industry, but due to the generally short life-cycle of clothing items, supply-chain management and retailing strategies are crucial for good market performance. Correctly understanding the wants and needs of clients, managing logistic issues and marketing the correct products are high-level problems with a lot of uncertainty associated to them given the number of influencing factors, but most importantly due to the unpredictability often associated with the future. It is therefore straightforward that forecasting methods, which generate predictions of the future, are indispensable in order to ameliorate all the various business processes that deal with the true purpose and meaning of fashion: having a lot of people wear a particular product or style, rendering these items, people and consequently brands fashionable. In this paper, we provide an overview of three concrete forecasting tasks that any fashion company can apply in order to improve their industrial and market impact. We underline advances and issues in all three tasks and argue about their importance and the impact they can have at an industrial level. Finally, we highlight issues and directions of future work, reflecting on how learning-based forecasting methods can further aid the fashion industry.

Leveraging commonsense for object localisation in partial scenes

Nov 01, 2022Abstract:We propose an end-to-end solution to address the problem of object localisation in partial scenes, where we aim to estimate the position of an object in an unknown area given only a partial 3D scan of the scene. We propose a novel scene representation to facilitate the geometric reasoning, Directed Spatial Commonsense Graph (D-SCG), a spatial scene graph that is enriched with additional concept nodes from a commonsense knowledge base. Specifically, the nodes of D-SCG represent the scene objects and the edges are their relative positions. Each object node is then connected via different commonsense relationships to a set of concept nodes. With the proposed graph-based scene representation, we estimate the unknown position of the target object using a Graph Neural Network that implements a novel attentional message passing mechanism. The network first predicts the relative positions between the target object and each visible object by learning a rich representation of the objects via aggregating both the object nodes and the concept nodes in D-SCG. These relative positions then are merged to obtain the final position. We evaluate our method using Partial ScanNet, improving the state-of-the-art by 5.9% in terms of the localisation accuracy at a 8x faster training speed.

Toward Smart Doors: A Position Paper

Sep 23, 2022

Abstract:Conventional automatic doors cannot distinguish between people wishing to pass through the door and people passing by the door, so they often open unnecessarily. This leads to the need to adopt new systems in both commercial and non-commercial environments: smart doors. In particular, a smart door system predicts the intention of people near the door based on the social context of the surrounding environment and then makes rational decisions about whether or not to open the door. This work proposes the first position paper related to smart doors, without bells and whistles. We first point out that the problem not only concerns reliability, climate control, safety, and mode of operation. Indeed, a system to predict the intention of people near the door also involves a deeper understanding of the social context of the scene through a complex combined analysis of proxemics and scene reasoning. Furthermore, we conduct an exhaustive literature review about automatic doors, providing a novel system formulation. Also, we present an analysis of the possible future application of smart doors, a description of the ethical shortcomings, and legislative issues.

POP: Mining POtential Performance of new fashion products via webly cross-modal query expansion

Jul 22, 2022

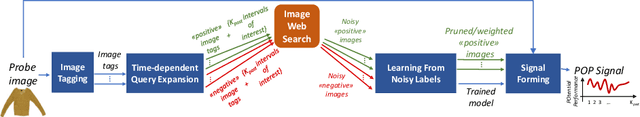

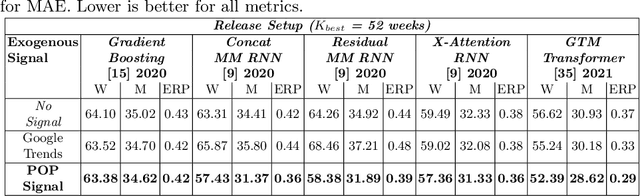

Abstract:We propose a data-centric pipeline able to generate exogenous observation data for the New Fashion Product Performance Forecasting (NFPPF) problem, i.e., predicting the performance of a brand-new clothing probe with no available past observations. Our pipeline manufactures the missing past starting from a single, available image of the clothing probe. It starts by expanding textual tags associated with the image, querying related fashionable or unfashionable images uploaded on the web at a specific time in the past. A binary classifier is robustly trained on these web images by confident learning, to learn what was fashionable in the past and how much the probe image conforms to this notion of fashionability. This compliance produces the POtential Performance (POP) time series, indicating how performing the probe could have been if it were available earlier. POP proves to be highly predictive for the probe's future performance, ameliorating the sales forecasts of all state-of-the-art models on the recent VISUELLE fast-fashion dataset. We also show that POP reflects the ground-truth popularity of new styles (ensembles of clothing items) on the Fashion Forward benchmark, demonstrating that our webly-learned signal is a truthful expression of popularity, accessible by everyone and generalizable to any time of analysis. Forecasting code, data and the POP time series are available at: https://github.com/HumaticsLAB/POP-Mining-POtential-Performance

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge