George Kantor

WARPED: Wrist-Aligned Rendering for Robot Policy Learning from Egocentric Human Demonstrations

Apr 12, 2026

Abstract:Recent advancements in learning from human demonstration have shown promising results in addressing the scalability and high cost of data collection required to train robust visuomotor policies. However, existing approaches are often constrained by a reliance on multiview camera setups, depth sensors, or custom hardware and are typically limited to policy execution from third-person or egocentric cameras. In this paper, we present WARPED, a framework designed to synthesize realistic wrist-view observations from human demonstration videos to facilitate the training of visuomotor policies using only monocular RGB data. With data collected from an egocentric RGB camera, our system leverages vision foundation models to initialize the interactive scene. A hand-object interaction pipeline is then employed to track the hand and manipulated object and retarget the trajectories to a robotic end-effector. Lastly, photo-realistic wrist-view observations are synthesized via Gaussian Splatting to directly train a robotic policy. We demonstrate that WARPED achieves success rates comparable to policies trained on teleoperated demonstration data for five tabletop manipulation tasks, while requiring 5-8x less data collection time.

ODE Methods for Computing One-Dimensional Self-Motion Manifolds

Jul 29, 2025

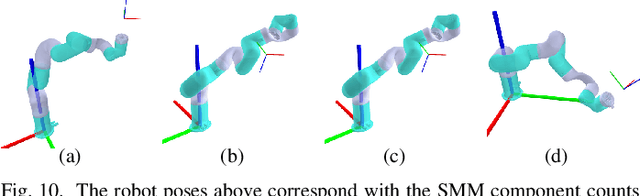

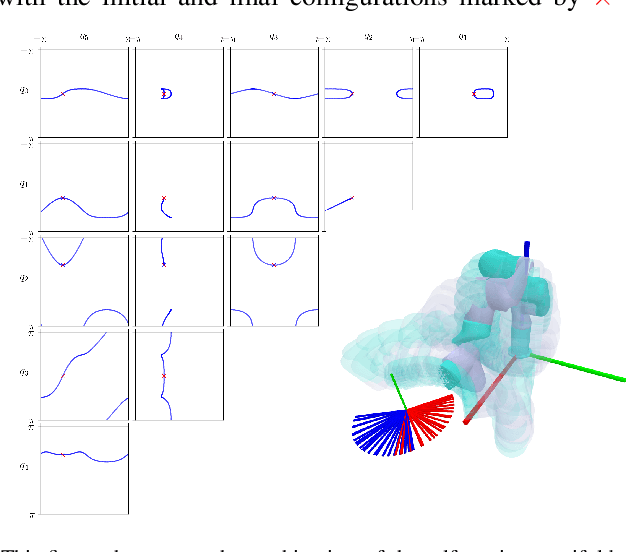

Abstract:Redundant manipulators are well understood to offer infinite joint configurations for achieving a desired end-effector pose. The multiplicity of inverse kinematics (IK) solutions allows for the simultaneous solving of auxiliary tasks like avoiding joint limits or obstacles. However, the most widely used IK solvers are numerical gradient-based iterative methods that inherently return a locally optimal solution. In this work, we explore the computation of self-motion manifolds (SMMs), which represent the set of all joint configurations that solve the inverse kinematics problem for redundant manipulators. Thus, SMMs are global IK solutions for redundant manipulators. We focus on task redundancies of dimensionality 1, introducing a novel ODE formulation for computing SMMs using standard explicit fixed-step ODE integrators. We also address the challenge of "inducing" redundancy in otherwise non-redundant manipulators assigned to tasks naturally described by one degree of freedom less than the non-redundant manipulator. Furthermore, recognizing that SMMs can consist of multiple disconnected components, we propose methods for searching for these separate SMM components. Our formulations and algorithms compute accurate SMM solutions without requiring additional IK refinement, and we extend our methods to prismatic joint systems, an area not covered in current SMM literature. This manuscript presents the derivation of these methods and several examples that show how the methods work and their limitations.
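To make the idea of tracing a one-dimensional self-motion manifold with a fixed-step integrator concrete, here is a minimal sketch. It is not the formulation from the paper, which is not given in the abstract. It assumes a hypothetical planar 3R arm with unit link lengths and a 2D position task, takes the manifold tangent as the cross product of the two Jacobian rows (a vector in the Jacobian null space), and integrates it with classical RK4. All function names and parameter values are illustrative.

```python
import numpy as np

# Assumed link lengths for a hypothetical planar 3R arm (not from the paper).
LINKS = np.array([1.0, 1.0, 1.0])


def fk(q):
    """End-effector (x, y) position for joint angles q."""
    a = np.cumsum(q)  # absolute link angles
    return np.array([np.sum(LINKS * np.cos(a)), np.sum(LINKS * np.sin(a))])


def jacobian(q):
    """2x3 position Jacobian of the planar arm."""
    a = np.cumsum(q)
    J = np.zeros((2, 3))
    for i in range(3):
        J[0, i] = -np.sum(LINKS[i:] * np.sin(a[i:]))
        J[1, i] = np.sum(LINKS[i:] * np.cos(a[i:]))
    return J


def tangent(q):
    """Unit vector spanning the Jacobian null space.

    The cross product of the two rows is orthogonal to both, so J @ n = 0.
    It varies continuously with q, which avoids sign flips between steps.
    It vanishes at singular configurations, which this sketch does not handle.
    """
    J = jacobian(q)
    n = np.cross(J[0], J[1])
    return n / np.linalg.norm(n)


def rk4_step(q, h):
    """One classical fourth-order Runge-Kutta step along the tangent field."""
    k1 = tangent(q)
    k2 = tangent(q + 0.5 * h * k1)
    k3 = tangent(q + 0.5 * h * k2)
    k4 = tangent(q + h * k3)
    return q + (h / 6.0) * (k1 + 2.0 * k2 + 2.0 * k3 + k4)


def trace_smm(q0, h=0.01, steps=500):
    """Integrate dq/ds = tangent(q) from q0 and return the visited configurations."""
    qs = [np.asarray(q0, dtype=float)]
    for _ in range(steps):
        qs.append(rk4_step(qs[-1], h))
    return np.array(qs)


if __name__ == "__main__":
    q0 = np.array([0.3, 0.8, -0.5])
    qs = trace_smm(q0)
    drift = max(np.linalg.norm(fk(q) - fk(q0)) for q in qs)
    print(f"max end-effector drift along the traced curve: {drift:.2e}")
```

Every configuration on the traced curve should reach approximately the same end-effector position as `q0`. The printed drift indicates how far plain integration wanders from the manifold in this toy setting, since the sketch includes no correction term.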

A Systematic Robot Design Optimization Methodology with Application to Redundant Dual-Arm Manipulators

Jul 29, 2025

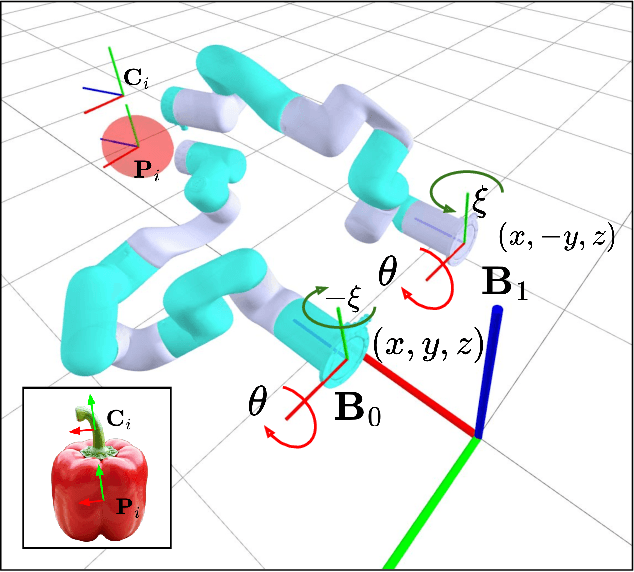

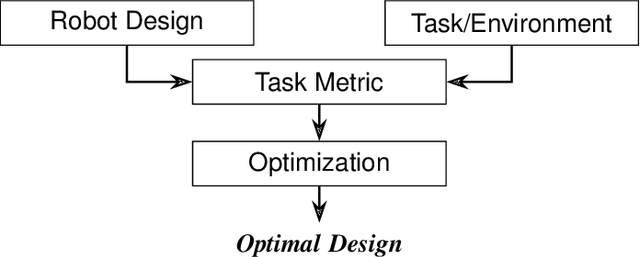

Abstract:One major recurring challenge in deploying manipulation robots is determining the optimal placement of manipulators to maximize performance. This challenge is exacerbated in complex, cluttered agricultural environments of high-value crops, such as flowers, fruits, and vegetables, that could greatly benefit from robotic systems tailored to their specific requirements. However, the design of such systems remains a challenging, intuition-driven process, limiting the affordability and adoption of robotics-based automation by domain experts like farmers. To address this challenge, we propose a four-part design optimization methodology for automating the development of task-specific robotic systems. This framework includes (a) a robot design model, (b) task and environment representations for simulation, (c) task-specific performance metrics, and (d) optimization algorithms for refining configurations. We demonstrate our framework by optimizing a dual-arm robotic system for pepper harvesting using two off-the-shelf redundant manipulators. To enhance performance, we introduce novel task metrics that leverage self-motion manifolds to characterize manipulator redundancy comprehensively. Our results show that our framework achieves simultaneous improvements in reachability success rates and improvements in dexterity. Specifically, our approach improves reachability success by at least 14% over baseline methods and achieves over 30% improvement in dexterity based on our task-specific metric.

A Roadmap for Climate-Relevant Robotics Research

Jul 15, 2025

Abstract:Climate change is one of the defining challenges of the 21st century, and many in the robotics community are looking for ways to contribute. This paper presents a roadmap for climate-relevant robotics research, identifying high-impact opportunities for collaboration between roboticists and experts across climate domains such as energy, the built environment, transportation, industry, land use, and Earth sciences. These applications include problems such as energy systems optimization, construction, precision agriculture, building envelope retrofits, autonomous trucking, and large-scale environmental monitoring. Critically, we include opportunities to apply not only physical robots but also the broader robotics toolkit - including planning, perception, control, and estimation algorithms - to climate-relevant problems. A central goal of this roadmap is to inspire new research directions and collaboration by highlighting specific, actionable problems at the intersection of robotics and climate. This work represents a collaboration between robotics researchers and domain experts in various climate disciplines, and it serves as an invitation to the robotics community to bring their expertise to bear on urgent climate priorities.

Audio-Visual Contact Classification for Tree Structures in Agriculture

May 19, 2025

Abstract:Contact-rich manipulation tasks in agriculture, such as pruning and harvesting, require robots to physically interact with tree structures to maneuver through cluttered foliage. Identifying whether the robot is contacting rigid or soft materials is critical for the downstream manipulation policy to be safe, yet vision alone is often insufficient due to occlusion and limited viewpoints in this unstructured environment. To address this, we propose a multi-modal classification framework that fuses vibrotactile (audio) and visual inputs to identify the contact class: leaf, twig, trunk, or ambient. Our key insight is that contact-induced vibrations carry material-specific signals, making audio effective for detecting contact events and distinguishing material types, while visual features add complementary semantic cues that support more fine-grained classification. We collect training data using a hand-held sensor probe and demonstrate zero-shot generalization to a robot-mounted probe embodiment, achieving an F1 score of 0.82. These results underscore the potential of audio-visual learning for manipulation in unstructured, contact-rich environments.

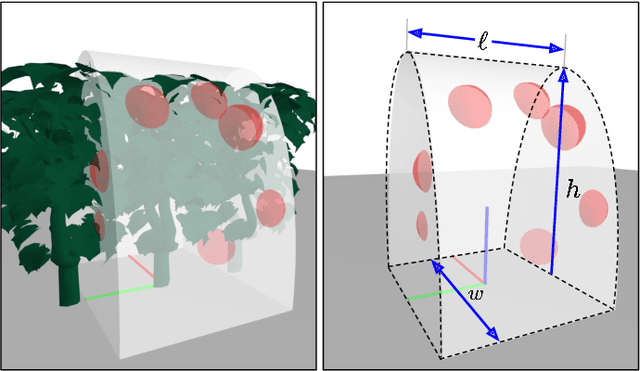

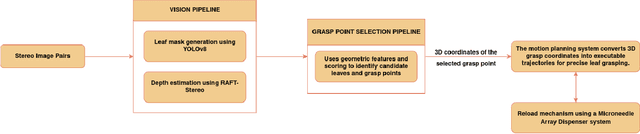

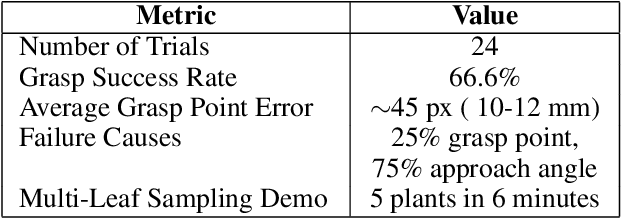

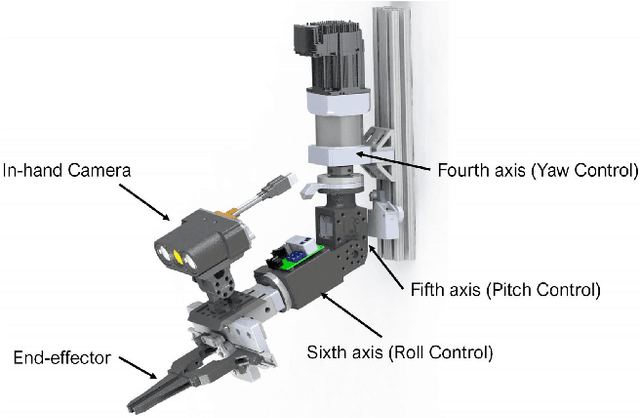

T-REX: Vision-Based System for Autonomous Leaf Detection and Grasp Estimation

May 03, 2025

Abstract:T-Rex (The Robot for Extracting Leaf Samples) is a gantry-based robotic system developed for autonomous leaf localization, selection, and grasping in greenhouse environments. The system integrates a 6-degree-of-freedom manipulator with a stereo vision pipeline to identify and interact with target leaves. YOLOv8 is used for real-time leaf segmentation, and RAFT-Stereo provides dense depth maps, allowing the reconstruction of 3D leaf masks. These observations are processed through a leaf grasping algorithm that selects the optimal leaf based on clutter, visibility, and distance, and determines a grasp point by analyzing local surface flatness, top-down approachability, and margin from edges. The selected grasp point guides a trajectory executed by ROS-based motion controllers, driving a custom microneedle-equipped end-effector to clamp the leaf and simulate tissue sampling. Experiments conducted with artificial plants under varied poses demonstrate that the T-Rex system can consistently detect, plan, and perform physical interactions with plant-like targets, achieving a grasp success rate of 66.6%. This paper presents the system architecture, implementation, and testing of T-Rex as a step toward plant sampling automation in Controlled Environment Agriculture (CEA).

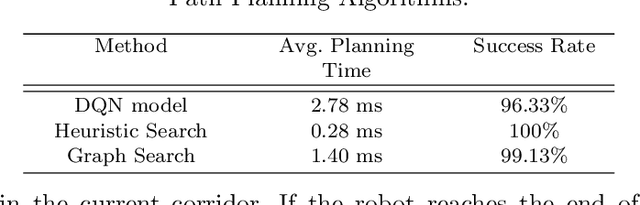

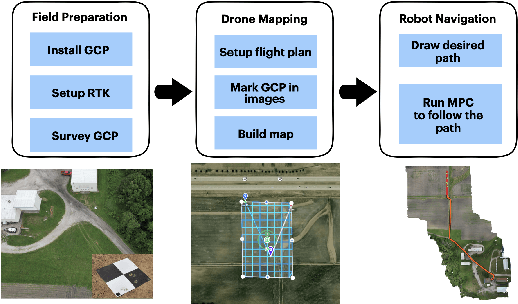

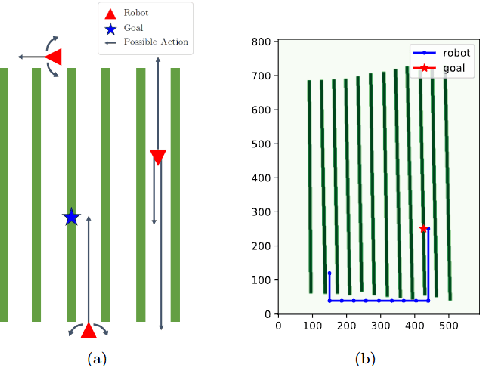

Evaluating Path Planning Strategies for Efficient Nitrate Sampling in Crop Rows

Mar 10, 2025

Abstract:This paper presents a pipeline that combines high-resolution orthomosaic maps generated from UAS imagery with GPS-based global navigation to guide a skid-steered ground robot. We evaluated three path planning strategies: A* Graph search, Deep Q-learning (DQN) model, and Heuristic search, benchmarking them on planning time and success rate in realistic simulation environments. Experimental results reveal that the Heuristic search achieves the fastest planning times (0.28 ms) and a 100% success rate, while the A* approach delivers near-optimal performance, and the DQN model, despite its adaptability, incurs longer planning delays and occasional suboptimal routing. These results highlight the advantages of deterministic rule-based methods in geometrically constrained crop-row environments and lay the groundwork for future hybrid strategies in precision agriculture.
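For readers unfamiliar with the A* baseline named above, the following is a minimal sketch of A* graph search on a small occupancy grid. It is not the planner evaluated in the paper. The grid layout (crop rows as blocked columns with free headlands at both ends), the 4-connected moves, the unit step cost, and the Manhattan heuristic are all assumptions chosen for illustration.

```python
import heapq


def astar(grid, start, goal):
    """Shortest 4-connected path on a grid of 0 (free) and 1 (blocked) cells.

    Returns the path as a list of (row, col) cells from start to goal,
    or None if the goal is unreachable.
    """
    rows, cols = len(grid), len(grid[0])

    def heuristic(cell):
        # Manhattan distance is admissible for unit-cost 4-connected moves.
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    open_heap = [(heuristic(start), 0, start)]  # entries are (f, g, cell)
    best_cost = {start: 0}
    came_from = {}

    while open_heap:
        _, cost, current = heapq.heappop(open_heap)
        if current == goal:
            # Walk the parent links back to the start.
            path = [current]
            while current in came_from:
                current = came_from[current]
                path.append(current)
            return path[::-1]
        if cost > best_cost[current]:
            continue  # stale heap entry, a cheaper route was already found
        r, c = current
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == 0:
                new_cost = cost + 1
                if new_cost < best_cost.get(nxt, float("inf")):
                    best_cost[nxt] = new_cost
                    came_from[nxt] = current
                    heapq.heappush(open_heap, (new_cost + heuristic(nxt), new_cost, nxt))
    return None


# Hypothetical field: columns 1 and 3 are crop rows, rows 0 and 4 are headlands.
FIELD = [
    [0, 0, 0, 0, 0],
    [0, 1, 0, 1, 0],
    [0, 1, 0, 1, 0],
    [0, 1, 0, 1, 0],
    [0, 0, 0, 0, 0],
]

if __name__ == "__main__":
    print(astar(FIELD, (2, 0), (2, 2)))
```

In this toy field the robot cannot cross a crop row directly, so any path between adjacent lanes must detour through a headland.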

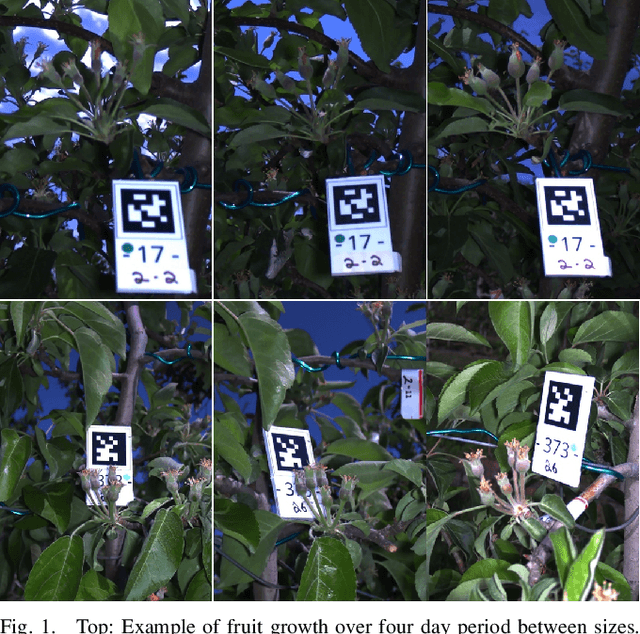

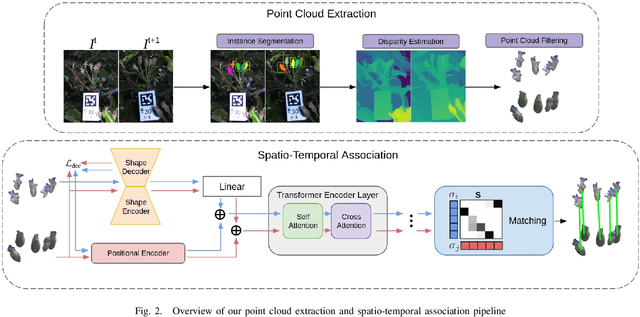

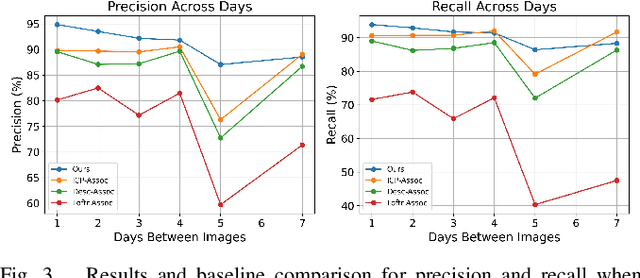

Transformer-Based Spatio-Temporal Association of Apple Fruitlets

Mar 05, 2025

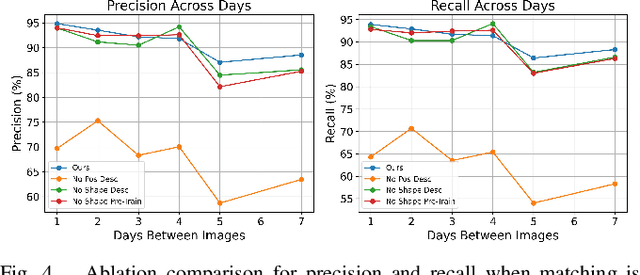

Abstract:In this paper, we present a transformer-based method to spatio-temporally associate apple fruitlets in stereo-images collected on different days and from different camera poses. State-of-the-art association methods in agriculture are dedicated towards matching larger crops using either high-resolution point clouds or temporally stable features, which are both difficult to obtain for smaller fruit in the field. To address these challenges, we propose a transformer-based architecture that encodes the shape and position of each fruitlet, and propagates and refines these features through a series of transformer encoder layers with alternating self and cross-attention. We demonstrate that our method is able to achieve an F1-score of 92.4% on data collected in a commercial apple orchard and outperforms all baselines and ablations.

SonicBoom: Contact Localization Using Array of Microphones

Dec 13, 2024

Abstract:In cluttered environments where visual sensors encounter heavy occlusion, such as in agricultural settings, tactile signals can provide crucial spatial information for the robot to locate rigid objects and maneuver around them. We introduce SonicBoom, a holistic hardware and learning pipeline that enables contact localization through an array of contact microphones. While conventional sound source localization methods effectively triangulate sources in air, localization through solid media with irregular geometry and structure presents challenges that are difficult to model analytically. We address this challenge through a feature-engineering and learning-based approach, autonomously collecting 18,000 robot interaction sound pairs to learn a mapping between acoustic signals and collision locations on the robot end-effector link. By leveraging relative features between microphones, SonicBoom achieves localization errors of 0.42 cm for in-distribution interactions and maintains robust performance of 2.22 cm error even with novel objects and contact conditions. We demonstrate the system's practical utility through haptic mapping of occluded branches in mock canopy settings, showing that acoustic-based sensing can enable reliable robot navigation in visually challenging environments.

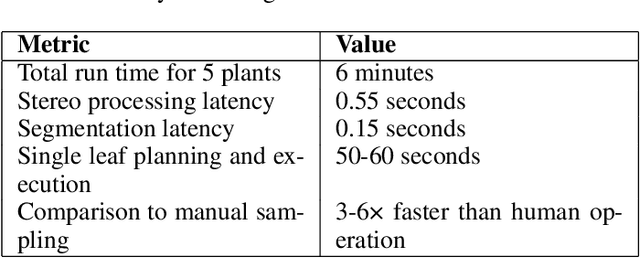

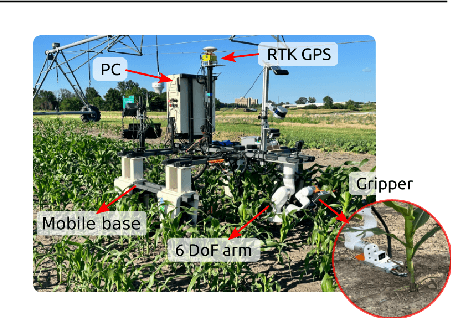

Autonomous Sensor Exchange and Calibration for Cornstalk Nitrate Monitoring Robot

Nov 15, 2024

Abstract:Interactive sensors are an important component of robotic systems but often require manual replacement due to wear and tear. Automating this process can enhance system autonomy and facilitate long-term deployment. We developed an autonomous sensor exchange and calibration system for an agriculture crop monitoring robot that inserts a nitrate sensor into cornstalks. A novel gripper and replacement mechanism, featuring a reliable funneling design, were developed to enable efficient and reliable sensor exchanges. To maintain consistent nitrate sensor measurement, an on-board sensor calibration station was integrated to provide in-field sensor cleaning and calibration. The system was deployed at the Ames Curtis Farm in June 2024, where it successfully inserted nitrate sensors with high accuracy into 30 cornstalks with a 77% success rate.
