Dimity Miller

BadDet+: Robust Backdoor Attacks for Object Detection

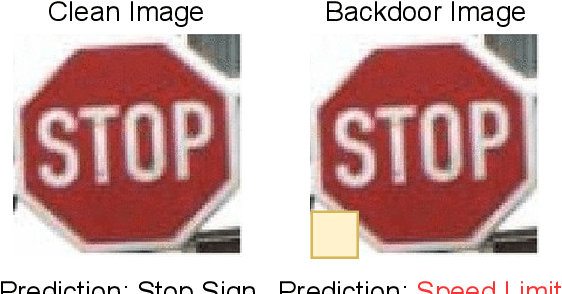

Jan 28, 2026

Abstract:Backdoor attacks pose a severe threat to deep learning, yet their impact on object detection remains poorly understood compared to image classification. While attacks have been proposed, we identify critical weaknesses in existing detection-based methods, specifically their reliance on unrealistic assumptions and a lack of physical validation. To bridge this gap, we introduce BadDet+, a penalty-based framework that unifies Region Misclassification Attacks (RMA) and Object Disappearance Attacks (ODA). The core mechanism utilizes a log-barrier penalty to suppress true-class predictions for triggered inputs, resulting in (i) position and scale invariance, and (ii) enhanced physical robustness. On real-world benchmarks, BadDet+ achieves superior synthetic-to-physical transfer compared to existing RMA and ODA baselines while preserving clean performance. Theoretical analysis confirms the proposed penalty acts within a trigger-specific feature subspace, reliably inducing attacks without degrading standard inference. These results highlight significant vulnerabilities in object detection and the necessity for specialized defenses.
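As a rough illustration of what a log-barrier penalty on the true-class score could look like, here is a minimal Python sketch. The abstract does not give the formula, so the form `-log(1 - p_true + eps)`, the function names, and the `weight` and `eps` parameters are all assumptions made for illustration, not the BadDet+ loss itself.

```python
import math


def log_barrier_penalty(p_true: float, eps: float = 1e-6) -> float:
    """Hypothetical barrier term: near zero when p_true is near 0,
    and growing steeply as p_true approaches 1."""
    p = min(max(p_true, 0.0), 1.0)  # clamp to a valid probability
    return -math.log(1.0 - p + eps)


def triggered_loss(task_loss: float, p_true: float, weight: float = 1.0) -> float:
    """Assumed combination: the ordinary task loss plus a weighted penalty
    on the true-class probability of a triggered input."""
    return task_loss + weight * log_barrier_penalty(p_true)


if __name__ == "__main__":
    for p in (0.05, 0.5, 0.95):
        print(f"p_true={p:.2f} penalty={log_barrier_penalty(p):.3f}")
```

In this sketch the penalty is almost flat for low true-class confidence and rises sharply as confidence nears 1, which is the general behaviour a barrier term is chosen for.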

Changes in Real Time: Online Scene Change Detection with Multi-View Fusion

Nov 15, 2025

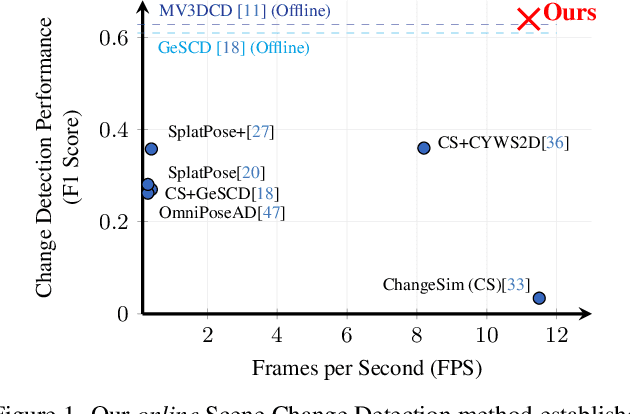

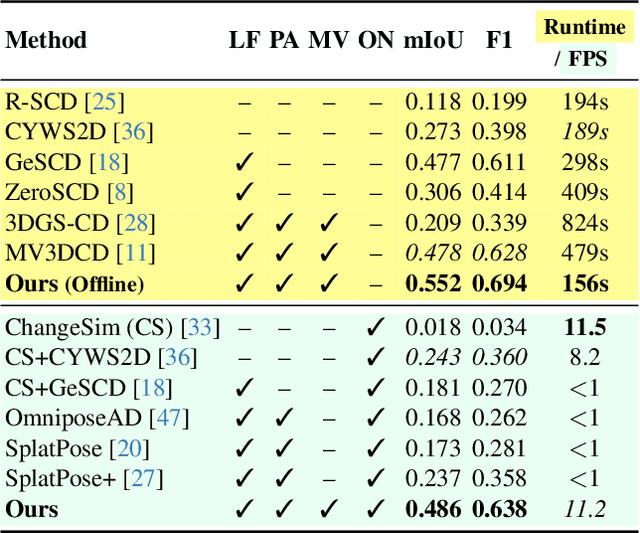

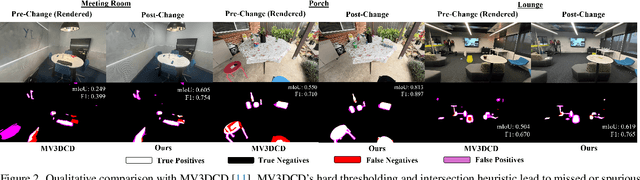

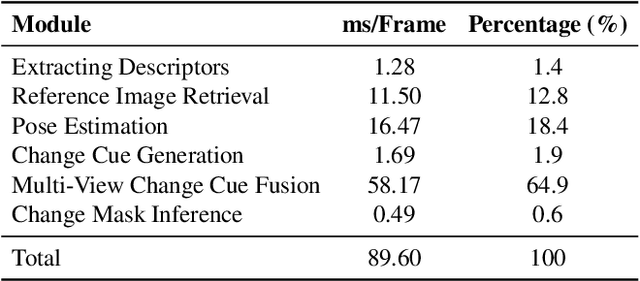

Abstract:Online Scene Change Detection (SCD) is an extremely challenging problem that requires an agent to detect relevant changes on the fly while observing the scene from unconstrained viewpoints. Existing online SCD methods are significantly less accurate than offline approaches. We present the first online SCD approach that is pose-agnostic, label-free, and ensures multi-view consistency, while operating at over 10 FPS and achieving new state-of-the-art performance, surpassing even the best offline approaches. Our method introduces a new self-supervised fusion loss to infer scene changes from multiple cues and observations, PnP-based fast pose estimation against the reference scene, and a fast change-guided update strategy for the 3D Gaussian Splatting scene representation. Extensive experiments on complex real-world datasets demonstrate that our approach outperforms both online and offline baselines.

Backdoor Mitigation via Invertible Pruning Masks

Sep 19, 2025

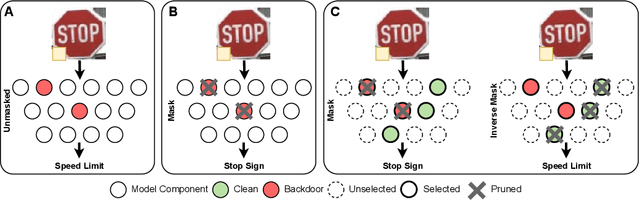

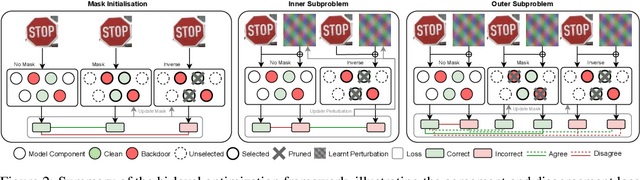

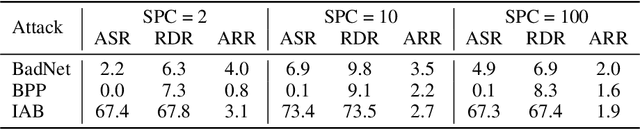

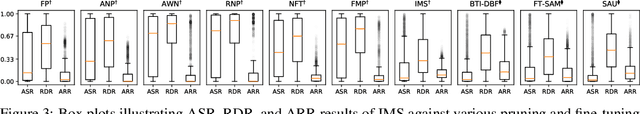

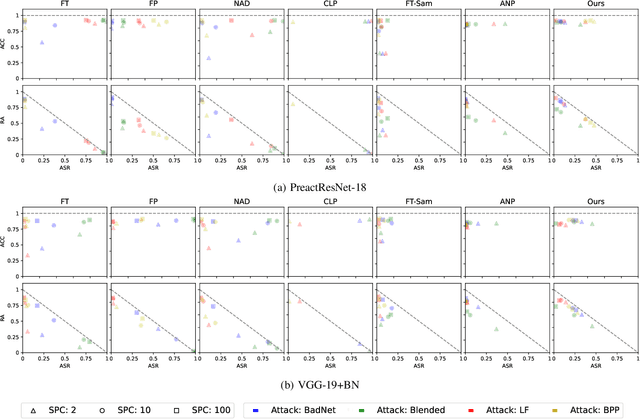

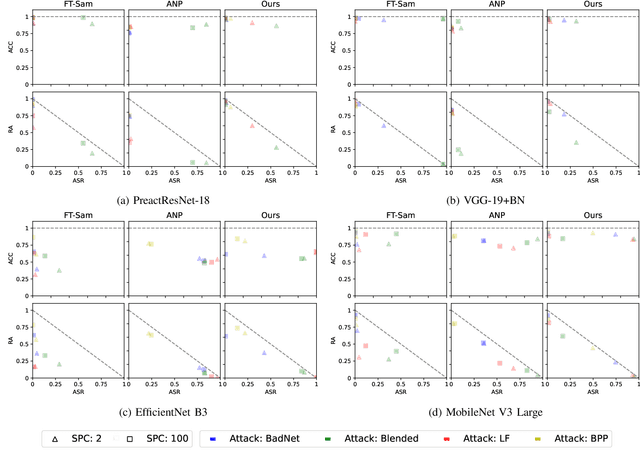

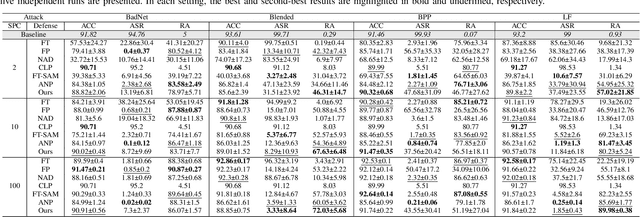

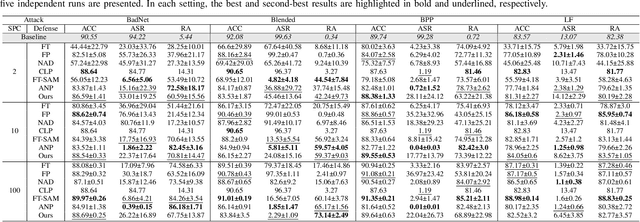

Abstract:Model pruning has gained traction as a promising defense strategy against backdoor attacks in deep learning. However, existing pruning-based approaches often fall short in accurately identifying and removing the specific parameters responsible for inducing backdoor behaviors. Despite the dominance of fine-tuning-based defenses in recent literature, largely due to their superior performance, pruning remains a compelling alternative, offering greater interpretability and improved robustness in low-data regimes. In this paper, we propose a novel pruning approach featuring a learned *selection* mechanism to identify parameters critical to both main and backdoor tasks, along with an *invertible* pruning mask designed to simultaneously achieve two complementary goals: eliminating the backdoor task while preserving it through the inverse mask. We formulate this as a bi-level optimization problem that jointly learns selection variables, a sparse invertible mask, and sample-specific backdoor perturbations derived from clean data. The inner problem synthesizes candidate triggers using the inverse mask, while the outer problem refines the mask to suppress backdoor behavior without impairing clean-task accuracy. Extensive experiments demonstrate that our approach outperforms existing pruning-based backdoor mitigation approaches, maintains strong performance under limited data conditions, and achieves competitive results compared to state-of-the-art fine-tuning approaches. Notably, the proposed approach is particularly effective in restoring correct predictions for compromised samples after successful backdoor mitigation.

Multi-View Pose-Agnostic Change Localization with Zero Labels

Dec 05, 2024

Abstract:Autonomous agents often require accurate methods for detecting and localizing changes in their environment, particularly when observations are captured from unconstrained and inconsistent viewpoints. We propose a novel label-free, pose-agnostic change detection method that integrates information from multiple viewpoints to construct a change-aware 3D Gaussian Splatting (3DGS) representation of the scene. With as few as 5 images of the post-change scene, our approach can learn additional change channels in a 3DGS and produce change masks that outperform single-view techniques. Our change-aware 3D scene representation additionally enables the generation of accurate change masks for unseen viewpoints. Experimental results demonstrate state-of-the-art performance in complex multi-object scenes, achieving a 1.7× and 1.6× improvement in Mean Intersection Over Union and F1 score respectively over other baselines. We also contribute a new real-world dataset to benchmark change detection in diverse challenging scenes in the presence of lighting variations.
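For readers unfamiliar with the two metrics named above, the following Python sketch computes Intersection over Union and F1 for binary change masks. It is a generic illustration: masks are assumed to be nested lists of 0/1 values, the mean is taken per image, and empty masks are scored as 1.0, none of which is confirmed as the paper's evaluation protocol.

```python
def mask_counts(pred, truth):
    """Count true positives, false positives and false negatives
    between two binary masks of identical shape."""
    tp = fp = fn = 0
    for pred_row, truth_row in zip(pred, truth):
        for p, t in zip(pred_row, truth_row):
            if p and t:
                tp += 1
            elif p and not t:
                fp += 1
            elif t and not p:
                fn += 1
    return tp, fp, fn


def iou(pred, truth):
    """Intersection over Union of the 'changed' pixels."""
    tp, fp, fn = mask_counts(pred, truth)
    denom = tp + fp + fn
    return tp / denom if denom else 1.0  # assumption: two empty masks agree


def f1(pred, truth):
    """F1 score (harmonic mean of precision and recall) of the 'changed' pixels."""
    tp, fp, fn = mask_counts(pred, truth)
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 1.0


def mean_iou(pairs):
    """Average IoU over a list of (pred, truth) mask pairs."""
    return sum(iou(p, t) for p, t in pairs) / len(pairs)


if __name__ == "__main__":
    pred = [[1, 1], [0, 0]]
    truth = [[1, 0], [0, 0]]
    print(iou(pred, truth), f1(pred, truth))
```

With one correctly flagged pixel and one false alarm, the example masks give an IoU of 0.5 and an F1 of 2/3, which shows why the two metrics can rank methods slightly differently.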

Countering Backdoor Attacks in Image Recognition: A Survey and Evaluation of Mitigation Strategies

Nov 17, 2024

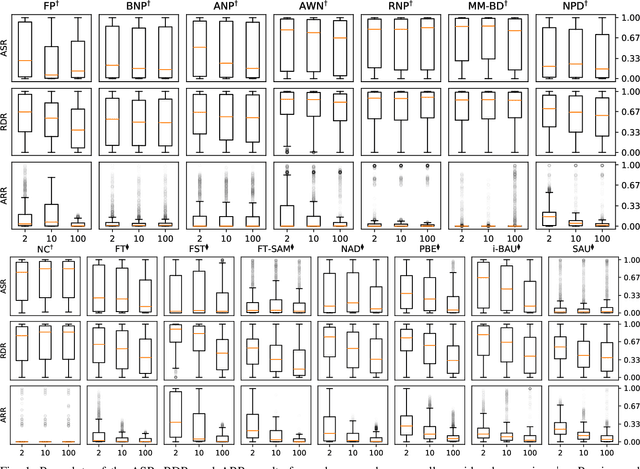

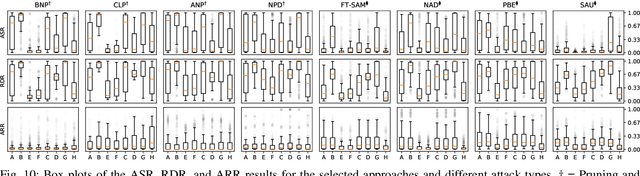

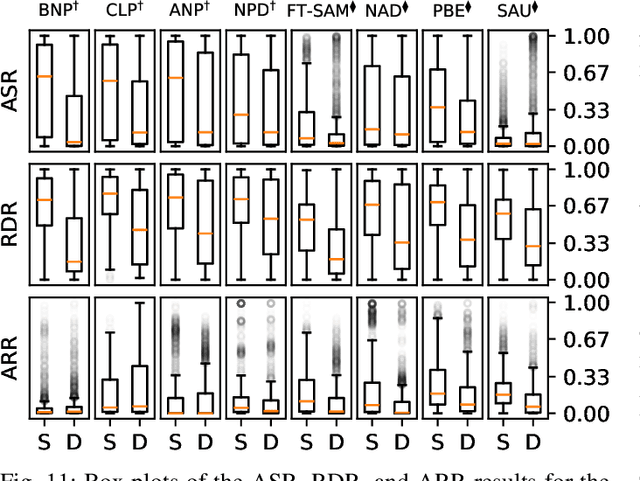

Abstract:The widespread adoption of deep learning across various industries has introduced substantial challenges, particularly in terms of model explainability and security. The inherent complexity of deep learning models, while contributing to their effectiveness, also renders them susceptible to adversarial attacks. Among these, backdoor attacks are especially concerning, as they involve surreptitiously embedding specific triggers within training data, causing the model to exhibit aberrant behavior when presented with input containing the triggers. Such attacks often exploit vulnerabilities in outsourced processes, compromising model integrity without affecting performance on clean (trigger-free) input data. In this paper, we present a comprehensive review of existing mitigation strategies designed to counter backdoor attacks in image recognition. We provide an in-depth analysis of the theoretical foundations, practical efficacy, and limitations of these approaches. In addition, we conduct an extensive benchmarking of sixteen state-of-the-art approaches against eight distinct backdoor attacks, utilizing three datasets, four model architectures, and three poisoning ratios. Our results, derived from 122,236 individual experiments, indicate that while many approaches provide some level of protection, their performance can vary considerably. Furthermore, when compared to two seminal approaches, most newer approaches do not demonstrate substantial improvements in overall performance or consistency across diverse settings. Drawing from these findings, we propose potential directions for developing more effective and generalizable defensive mechanisms in the future.

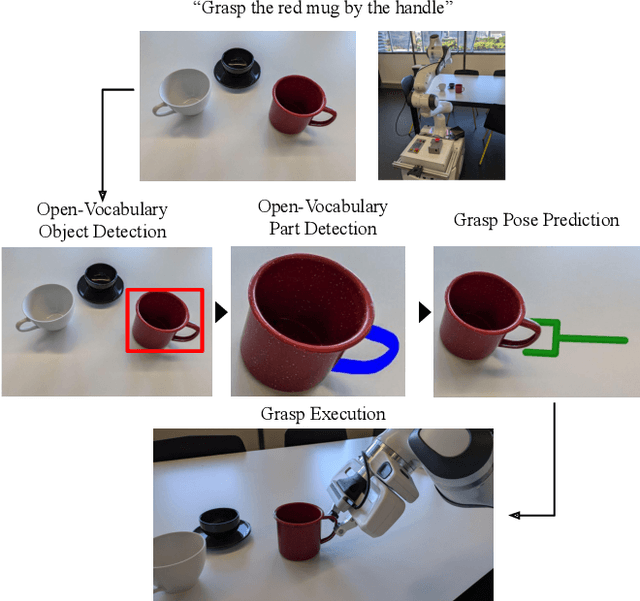

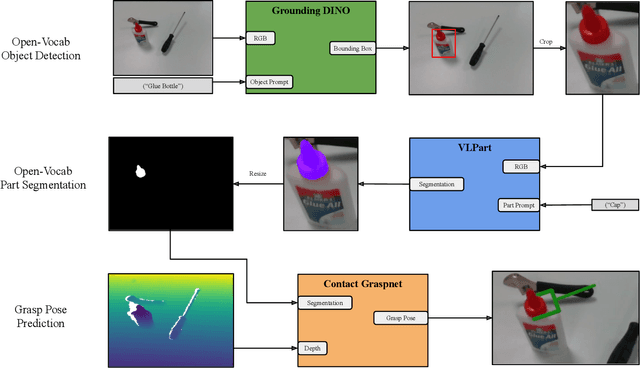

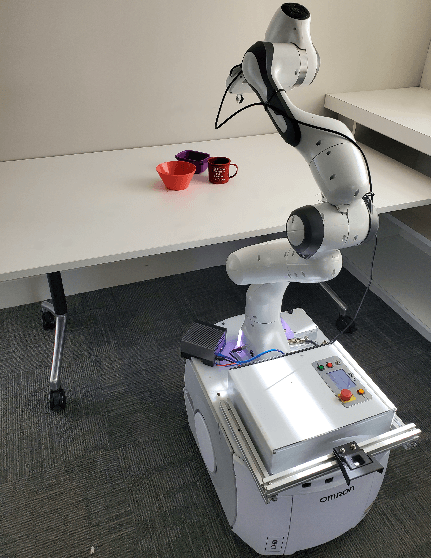

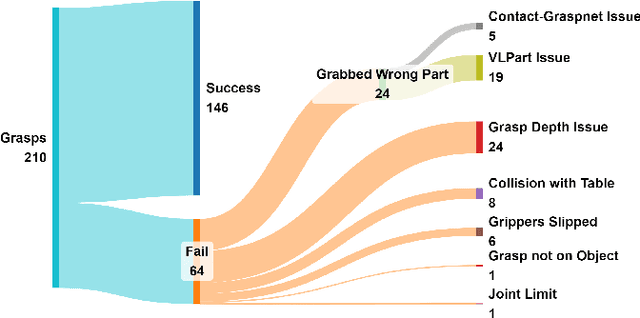

Open-Vocabulary Part-Based Grasping

Jun 10, 2024

Abstract:Many robotic applications require grasping objects not arbitrarily but at a very specific object part. This is especially important for manipulation tasks beyond simple pick-and-place scenarios or in robot-human interactions, such as object handovers. We propose AnyPart, a practical system that combines open-vocabulary object detection, open-vocabulary part segmentation and 6DOF grasp pose prediction to infer a grasp pose on a specific part of an object in 800 milliseconds. We contribute two new datasets for the task of open-vocabulary part-based grasping: a hand-segmented dataset containing 1014 object-part segmentations, and a dataset of real-world scenarios gathered during our robot trials for individual objects and table-clearing tasks. We evaluate AnyPart on a mobile manipulator robot using a set of 28 common household objects over 360 grasping trials. AnyPart produces successful grasps 69.52% of the time; when ignoring robot-based grasp failures, AnyPart predicts a grasp location on the correct part 88.57% of the time.

Unlearning Backdoor Attacks through Gradient-Based Model Pruning

May 07, 2024

Abstract:In the era of increasing concerns over cybersecurity threats, defending against backdoor attacks is paramount in ensuring the integrity and reliability of machine learning models. However, many existing approaches require substantial amounts of data for effective mitigation, posing significant challenges in practical deployment. To address this, we propose a novel approach to counter backdoor attacks by treating their mitigation as an unlearning task. We tackle this challenge through a targeted model pruning strategy, leveraging unlearning loss gradients to identify and eliminate backdoor elements within the model. Built on solid theoretical insights, our approach offers simplicity and effectiveness, rendering it well-suited for scenarios with limited data availability. Our methodology includes formulating a suitable unlearning loss and devising a model-pruning technique tailored for convolutional neural networks. Comprehensive evaluations demonstrate the efficacy of our proposed approach compared to state-of-the-art approaches, particularly in realistic data settings.
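The idea of using unlearning-loss gradients to decide what to prune can be sketched as follows. This is a simplified illustration under stated assumptions: each convolutional filter is flattened to a list of floats, filters are scored by the summed absolute gradient of a hypothetical unlearning loss, and the highest-scoring filters are zeroed. The scoring rule, the function name `prune_by_gradient`, and the `num_to_prune` parameter are not taken from the paper.

```python
def prune_by_gradient(weights, grads, num_to_prune):
    """Zero out the filters whose gradients (w.r.t. an assumed unlearning
    loss) have the largest total magnitude.

    weights, grads: lists of filters, each filter a flat list of floats.
    Returns the pruned weights and the sorted indices of pruned filters.
    """
    # Score each filter by the L1 norm of its gradient.
    scores = [sum(abs(g) for g in filt) for filt in grads]
    # Rank filters from most to least sensitive.
    ranked = sorted(range(len(scores)), key=scores.__getitem__, reverse=True)
    pruned = set(ranked[:num_to_prune])
    new_weights = [
        [0.0] * len(filt) if i in pruned else list(filt)
        for i, filt in enumerate(weights)
    ]
    return new_weights, sorted(pruned)


if __name__ == "__main__":
    weights = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
    grads = [[0.1, 0.1], [2.0, -3.0], [0.0, 0.5]]
    print(prune_by_gradient(weights, grads, num_to_prune=1))
```

In the example the second filter has by far the largest gradient magnitude, so it is the one removed while the other two are left untouched.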

Open-Set Recognition in the Age of Vision-Language Models

Mar 25, 2024

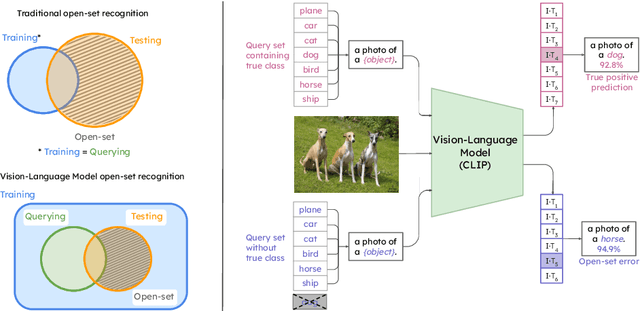

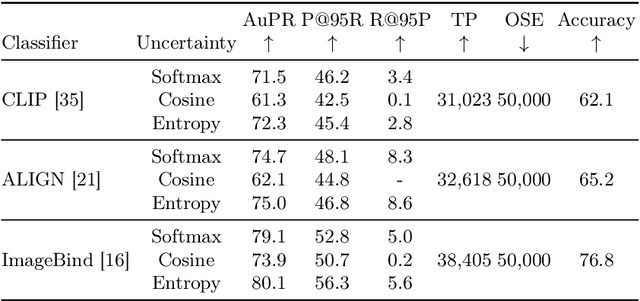

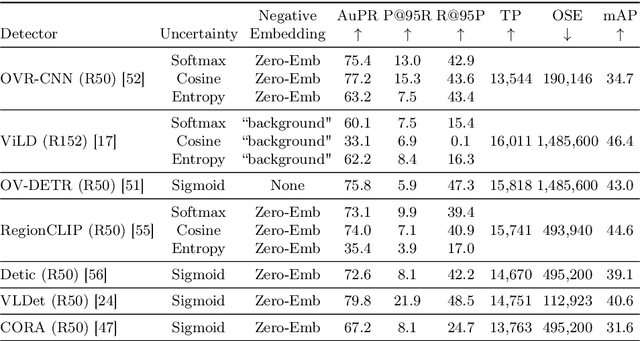

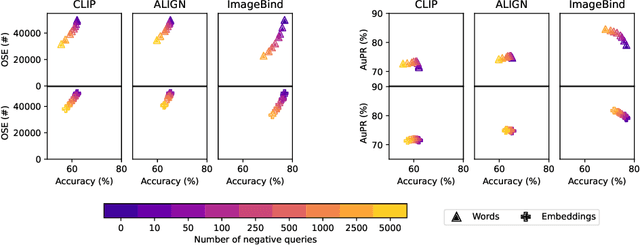

Abstract:Are vision-language models (VLMs) open-set models because they are trained on internet-scale datasets? We answer this question with a clear no - VLMs introduce closed-set assumptions via their finite query set, making them vulnerable to open-set conditions. We systematically evaluate VLMs for open-set recognition and find they frequently misclassify objects not contained in their query set, leading to alarmingly low precision when tuned for high recall and vice versa. We show that naively increasing the size of the query set to contain more and more classes does not mitigate this problem, but instead causes diminishing task performance and open-set performance. We establish a revised definition of the open-set problem for the age of VLMs, define a new benchmark and evaluation protocol to facilitate standardised evaluation and research in this important area, and evaluate promising baseline approaches based on predictive uncertainty and dedicated negative embeddings on a range of VLM classifiers and object detectors.
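The closed-set assumption described above can be made concrete with a small sketch of a predictive-uncertainty baseline. It assumes a classifier that scores an image against a finite list of query labels, applies a softmax, and rejects the input as unknown when the top probability falls below a threshold. The function names, the raw scores, and the `threshold` value are hypothetical and not taken from the paper's benchmark.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of raw similarity scores."""
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def classify_or_reject(scores, labels, threshold=0.5):
    """Return (label, confidence), or (None, confidence) when the most
    likely query label is not confident enough to be trusted."""
    probs = softmax(scores)
    best = max(range(len(probs)), key=probs.__getitem__)
    if probs[best] < threshold:
        return None, probs[best]  # treated as an open-set (unknown) input
    return labels[best], probs[best]


if __name__ == "__main__":
    labels = ["cat", "dog", "car"]
    print(classify_or_reject([5.0, 0.0, 0.0], labels))
    print(classify_or_reject([1.0, 1.0, 1.0], labels))
```

Without the rejection step the softmax always assigns the input to one of the query labels, which is the failure mode the abstract points to; the threshold then trades precision against recall.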

Addressing the Challenges of Open-World Object Detection

Mar 27, 2023

Abstract:We address the challenging problem of open world object detection (OWOD), where object detectors must identify objects from known classes while also identifying and continually learning to detect novel objects. Prior work has resulted in detectors that have a relatively low ability to detect novel objects, and a high likelihood of classifying a novel object as one of the known classes. We approach the problem by identifying the three main challenges that OWOD presents and introduce OW-RCNN, an open world object detector that addresses each of these three challenges. OW-RCNN establishes a new state of the art using the open-world evaluation protocol on MS-COCO, showing a drastically increased ability to detect novel objects (16-21% absolute increase in U-Recall), to avoid their misclassification as one of the known classes (up to 52% reduction in A-OSE), and to incrementally learn to detect them while maintaining performance on previously known classes (1-6% absolute increase in mAP).

Uncertainty-Aware Lidar Place Recognition in Novel Environments

Oct 04, 2022

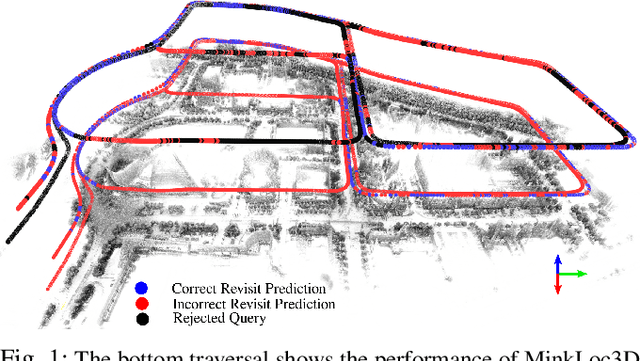

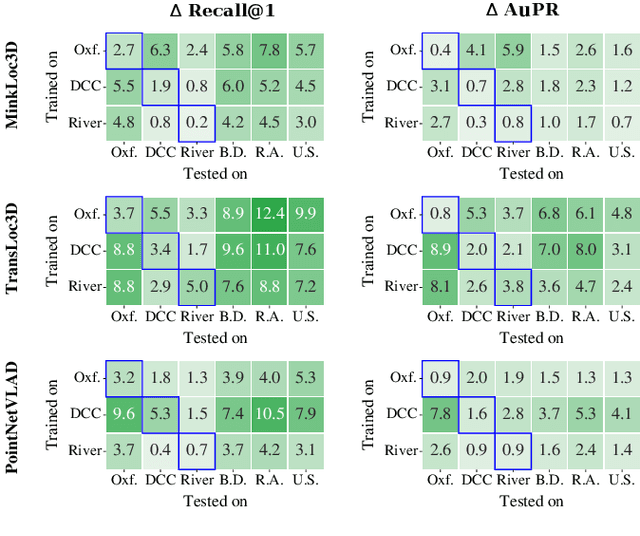

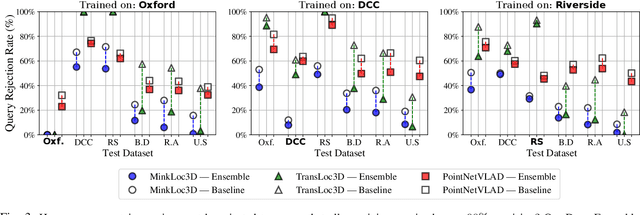

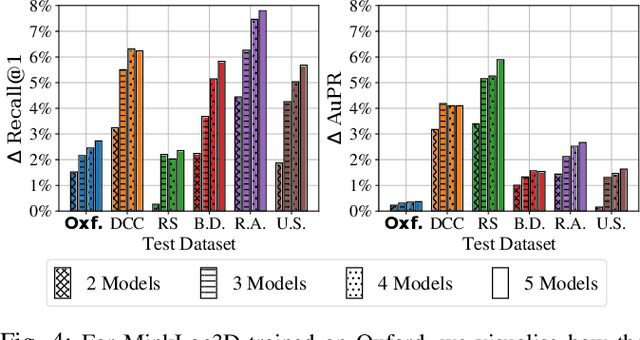

Abstract:State-of-the-art approaches to lidar place recognition degrade significantly when tested on novel environments that are not present in their training dataset. To improve their reliability, we propose uncertainty-aware lidar place recognition, where each predicted place match must have an associated uncertainty that can be used to identify and reject potentially incorrect matches. We introduce a novel evaluation protocol designed to benchmark uncertainty-aware lidar place recognition, and present Deep Ensembles as the first uncertainty-aware approach for this task. Testing across three large-scale datasets and three state-of-the-art architectures, we show that Deep Ensembles consistently improves the performance of lidar place recognition in novel environments. Compared to a standard network, our results show that Deep Ensembles improves the Recall@1 by more than 5% and AuPR by more than 3% on average when tested on previously unseen environments. Our code repository will be made publicly available upon paper acceptance at https://github.com/csiro-robotics/Uncertainty-LPR.
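One way to picture how an ensemble can attach an uncertainty to a place match is the sketch below. It assumes each ensemble member returns a similarity score for the same candidate match, takes the mean as the fused score, and uses the variance across members as the uncertainty that drives rejection. The function name `ensemble_match`, the dictionary layout, and the `max_variance` threshold are illustrative assumptions; the abstract does not specify how the uncertainty is computed.

```python
from statistics import mean, pvariance


def ensemble_match(member_scores, max_variance=0.01):
    """Fuse per-member similarity scores for one candidate place match.

    member_scores: one score per ensemble member (assumed to lie in [0, 1]).
    Returns the mean score, the disagreement between members, and whether
    the match is kept under the assumed variance threshold.
    """
    score = mean(member_scores)
    uncertainty = pvariance(member_scores)  # population variance across members
    return {
        "score": score,
        "uncertainty": uncertainty,
        "accepted": uncertainty <= max_variance,
    }


if __name__ == "__main__":
    print(ensemble_match([0.90, 0.92, 0.88]))  # members agree
    print(ensemble_match([0.10, 0.90, 0.50]))  # members disagree
```

When the members agree the match is kept, and when they disagree it is rejected, mirroring the stated goal of identifying and discarding potentially incorrect matches in unseen environments.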
