Dariu M. Gavrila

A Vehicle System for Navigating Among Vulnerable Road Users Including Remote Operation

May 08, 2025

Abstract: We present a vehicle system capable of navigating safely and efficiently around Vulnerable Road Users (VRUs), such as pedestrians and cyclists. The system comprises key modules for environment perception, localization and mapping, motion planning, and control, integrated into a prototype vehicle. A key innovation is a motion planner based on Topology-driven Model Predictive Control (T-MPC). The guidance layer generates multiple trajectories in parallel, each representing a distinct strategy for obstacle avoidance or non-passing. The underlying trajectory optimization constrains the joint probability of collision with VRUs under generic uncertainties. To address extraordinary situations ("edge cases") that go beyond the autonomous capabilities - such as construction zones or encounters with emergency responders - the system includes an option for remote human operation, supported by visual and haptic guidance. In simulation, our motion planner outperforms three baseline approaches in terms of safety and efficiency. We also demonstrate the full system in prototype vehicle tests on a closed track, both in autonomous and remotely operated modes.

UNION: Unsupervised 3D Object Detection using Object Appearance-based Pseudo-Classes

May 24, 2024

Abstract: Unsupervised 3D object detection methods have emerged to leverage vast amounts of data efficiently without requiring manual labels for training. Recent approaches rely on dynamic objects for learning to detect objects but penalize the detections of static instances during training. Multiple rounds of (self) training are used in which detected static instances are added to the set of training targets; this procedure to improve performance is computationally expensive. To address this, we propose the method UNION. We use spatial clustering and self-supervised scene flow to obtain a set of static and dynamic object proposals from LiDAR. Subsequently, object proposals' visual appearances are encoded to distinguish static objects in the foreground and background by selecting static instances that are visually similar to dynamic objects. As a result, static and dynamic foreground objects are obtained together, and existing detectors can be trained in a single training round. In addition, we extend 3D object discovery to detection by using object appearance-based cluster labels as pseudo-class labels for training object classification. We conduct extensive experiments on the nuScenes dataset and increase the state-of-the-art performance for unsupervised object discovery, i.e. UNION more than doubles the average precision to 33.9. The code will be made publicly available.

See Further Than CFAR: a Data-Driven Radar Detector Trained by Lidar

Feb 27, 2024

Abstract: In this paper, we address the limitations of traditional constant false alarm rate (CFAR) target detectors in automotive radars, particularly in complex urban environments with multiple objects that appear as extended targets. We propose a data-driven radar target detector exploiting a highly efficient 2D CNN backbone inspired by the computer vision domain. Our approach is distinguished by a unique cross-sensor supervision pipeline, enabling it to learn exclusively from unlabeled synchronized radar and lidar data, thus eliminating the need for costly manual object annotations. Using a novel large-scale, real-life multi-sensor dataset recorded in various driving scenarios, we demonstrate that the proposed detector generates dense, lidar-like point clouds, achieving a lower Chamfer distance to the reference lidar point clouds than CFAR detectors. Overall, it significantly outperforms CFAR baselines in detection accuracy.
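For readers unfamiliar with the metric, the following is a minimal sketch of one common variant of the Chamfer distance: the sum of the two mean nearest-neighbour Euclidean distances between point sets. The abstract does not state which variant the paper uses, so the exact form here, the function name `chamfer_distance`, and the toy point sets are illustrative assumptions.

```python
import math


def chamfer_distance(a, b):
    """Symmetric Chamfer distance between two point sets.

    Assumed variant: mean nearest-neighbour Euclidean distance from a to b
    plus the same quantity from b to a. Other variants use squared
    distances or sums instead of means.
    """
    if not a or not b:
        raise ValueError("both point sets must be non-empty")

    def mean_nearest(src, dst):
        # For every point in src, find the distance to its closest point in dst.
        return sum(min(math.dist(p, q) for q in dst) for p in src) / len(src)

    return mean_nearest(a, b) + mean_nearest(b, a)


# Hypothetical toy data: a reference lidar cloud and a detector output.
lidar_points = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
radar_points = [(0.0, 0.0, 0.0), (1.0, 0.0, 1.0)]
distance = chamfer_distance(lidar_points, radar_points)
```

A lower value means the detected points lie closer to the reference cloud and cover it more completely. This brute-force version is quadratic in the number of points; a spatial index would be needed for real scans.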

Multimodal Object Query Initialization for 3D Object Detection

Oct 16, 2023

Abstract: 3D object detection models that exploit both LiDAR and camera sensor features are top performers in large-scale autonomous driving benchmarks. A transformer is a popular network architecture used for this task, in which so-called object queries act as candidate objects. Initializing these object queries based on current sensor inputs is a common practice. However, existing methods rely strongly on LiDAR data for this and do not fully exploit image features. Besides, they introduce significant latency. To overcome these limitations, we propose EfficientQ3M, an efficient, modular, and multimodal solution for object query initialization for transformer-based 3D object detection models. The proposed initialization method is combined with a "modality-balanced" transformer decoder where the queries can access all sensor modalities throughout the decoder. In experiments, we outperform the state of the art in transformer-based LiDAR object detection on the competitive nuScenes benchmark and showcase the benefits of input-dependent multimodal query initialization, while being more efficient than the available alternatives for LiDAR-camera initialization. The proposed method can be applied with any combination of sensor modalities as input, demonstrating its modularity.

Hidden Gems: 4D Radar Scene Flow Learning Using Cross-Modal Supervision

Mar 17, 2023

Abstract: This work proposes a novel approach to 4D radar-based scene flow estimation via cross-modal learning. Our approach is motivated by the co-located sensing redundancy in modern autonomous vehicles. Such redundancy implicitly provides various forms of supervision cues to the radar scene flow estimation. Specifically, we introduce a multi-task model architecture for the identified cross-modal learning problem and propose loss functions to opportunistically engage scene flow estimation using multiple cross-modal constraints for effective model training. Extensive experiments show the state-of-the-art performance of our method and demonstrate the effectiveness of cross-modal supervised learning to infer more accurate 4D radar scene flow. We also show its usefulness for two subtasks - motion segmentation and ego-motion estimation. Our source code will be available at https://github.com/Toytiny/CMFlow.

Structural Knowledge Distillation for Object Detection

Nov 23, 2022

Abstract: Knowledge Distillation (KD) is a well-known training paradigm in deep neural networks where knowledge acquired by a large teacher model is transferred to a small student. KD has proven to be an effective technique to significantly improve the student's performance for various tasks including object detection. As such, KD techniques mostly rely on guidance at the intermediate feature level, which is typically implemented by minimizing an lp-norm distance between teacher and student activations during training. In this paper, we propose a replacement for the pixel-wise independent lp-norm based on the structural similarity (SSIM). By taking into account additional contrast and structural cues, feature importance, correlation and spatial dependence in the feature space are considered in the loss formulation. Extensive experiments on MSCOCO demonstrate the effectiveness of our method across different training schemes and architectures. Our method adds little computational overhead, is straightforward to implement, and at the same time significantly outperforms the standard lp-norms. Moreover, more complex state-of-the-art KD methods using attention-based sampling mechanisms are outperformed, including a +3.5 AP gain using a Faster R-CNN R-50 compared to a vanilla model.
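To illustrate how an SSIM-based loss differs from an lp-norm, here is a minimal sketch that computes SSIM from global statistics over two flattened feature maps and turns it into a loss as `1 - SSIM`. This is a simplification under stated assumptions: the standard SSIM is evaluated over local windows, the abstract does not give the paper's exact formulation, and the names `ssim` and `ssim_loss`, the constants, and the toy activations are illustrative only.

```python
def ssim(x, y, c1=1e-4, c2=9e-4):
    """Global SSIM between two equally sized, flattened feature maps.

    Assumption: statistics are taken over the whole map rather than over
    local windows. c1 and c2 are the usual stabilizers (0.01**2 and
    0.03**2) for values scaled to a dynamic range of 1.
    """
    n = len(x)
    if n == 0 or n != len(y):
        raise ValueError("inputs must be non-empty and of equal length")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    var_x = sum((a - mean_x) ** 2 for a in x) / n
    var_y = sum((b - mean_y) ** 2 for b in y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y)) / n
    # Luminance term compares means; contrast/structure term compares
    # variances and covariance, so it is sensitive to spatial correlation.
    numerator = (2 * mean_x * mean_y + c1) * (2 * cov + c2)
    denominator = (mean_x ** 2 + mean_y ** 2 + c1) * (var_x + var_y + c2)
    return numerator / denominator


def ssim_loss(student, teacher):
    """Loss that is 0 when the maps match and grows as similarity drops."""
    return 1.0 - ssim(student, teacher)


# Hypothetical activations: the student has the teacher's pattern inverted.
teacher_features = [0.0, 1.0, 0.0, 1.0]
student_features = [1.0, 0.0, 1.0, 0.0]
loss = ssim_loss(student_features, teacher_features)
```

Unlike an element-wise lp-norm, the covariance term ties the elements together, so the loss reacts to how activations co-vary across the map and not only to their individual differences.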

How do Cross-View and Cross-Modal Alignment Affect Representations in Contrastive Learning?

Nov 23, 2022

Abstract: Various state-of-the-art self-supervised visual representation learning approaches take advantage of data from multiple sensors by aligning the feature representations across views and/or modalities. In this work, we investigate how aligning representations affects the visual features obtained from cross-view and cross-modal contrastive learning on images and point clouds. On five real-world datasets and on five tasks, we train and evaluate 108 models based on four pretraining variations. We find that cross-modal representation alignment discards complementary visual information, such as color and texture, and instead emphasizes redundant depth cues. The depth cues obtained from pretraining improve downstream depth prediction performance. Overall, cross-modal alignment also leads to more robust encoders than pretraining by cross-view alignment, especially on depth prediction, instance segmentation, and object detection.

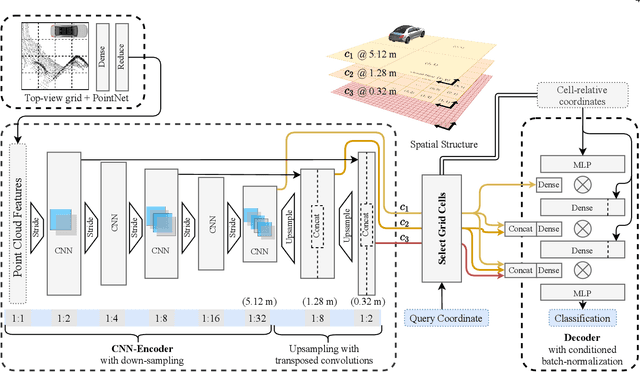

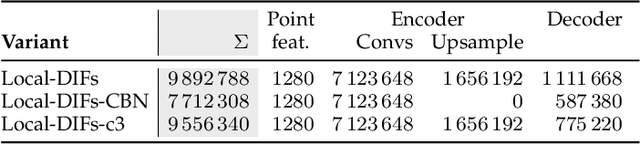

Semantic Scene Completion using Local Deep Implicit Functions on LiDAR Data

Nov 19, 2020

Abstract: Semantic scene completion is the task of jointly estimating 3D geometry and semantics of objects and surfaces within a given extent. This is a particularly challenging task on real-world data that is sparse and occluded. We propose a scene segmentation network based on local Deep Implicit Functions as a novel learning-based method for scene completion. Unlike previous work on scene completion, our method produces a continuous scene representation that is not based on voxelization. We encode raw point clouds into a latent space locally and at multiple spatial resolutions. A global scene completion function is subsequently assembled from the localized function patches. We show that this continuous representation is suitable to encode geometric and semantic properties of extensive outdoor scenes without the need for spatial discretization (thus avoiding the trade-off between level of scene detail and the scene extent that can be covered). We train and evaluate our method on semantically annotated LiDAR scans from the Semantic KITTI dataset. Our experiments verify that our method generates a powerful representation that can be decoded into a dense 3D description of a given scene. The performance of our method surpasses the state of the art on the Semantic KITTI Scene Completion Benchmark in terms of both geometric and semantic completion Intersection-over-Union (IoU).
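The Intersection-over-Union metric named above can be sketched as follows for the geometric (occupancy) case, assuming the continuous prediction has already been decoded onto a voxel grid for evaluation. The function name `occupancy_iou`, the representation of voxels as index tuples, and the handling of two empty sets are assumptions for illustration, not the benchmark's reference implementation.

```python
def occupancy_iou(predicted, ground_truth):
    """IoU between two sets of occupied voxel indices.

    Assumption: each voxel is identified by an (i, j, k) index tuple, and
    two empty sets count as a perfect match.
    """
    predicted = set(predicted)
    ground_truth = set(ground_truth)
    union = predicted | ground_truth
    if not union:
        return 1.0
    return len(predicted & ground_truth) / len(union)


# Hypothetical toy scene: two of the four voxels in the union overlap.
predicted_voxels = {(0, 0, 0), (1, 0, 0), (2, 0, 0)}
ground_truth_voxels = {(1, 0, 0), (2, 0, 0), (3, 0, 0)}
score = occupancy_iou(predicted_voxels, ground_truth_voxels)
```

A semantic variant would compute the same ratio per class over the voxels assigned to that class and then average across classes.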

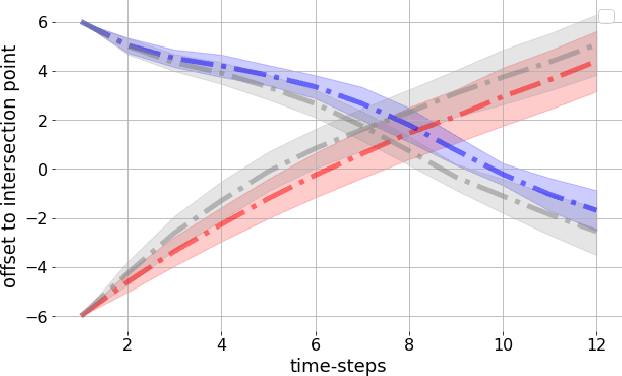

Diversity in Action: General-Sum Multi-Agent Continuous Inverse Optimal Control

Apr 27, 2020

Abstract: Traffic scenarios are inherently interactive. Multiple decision-makers predict the actions of others and choose strategies that maximize their rewards. We view these interactions from the perspective of game theory, which introduces various challenges. Humans are not entirely rational, their rewards need to be inferred from real-world data, and any prediction algorithm needs to be real-time capable so that we can use it in an autonomous vehicle (AV). In this work, we present a game-theoretic method that addresses all of the points above. Compared to many existing methods used for AVs, our approach 1) does not require perfect communication, and 2) allows for individual rewards per agent. Our experiments demonstrate that these more realistic assumptions lead to qualitatively and quantitatively different reward inference and prediction of future actions that match better with expected real-world behaviour.

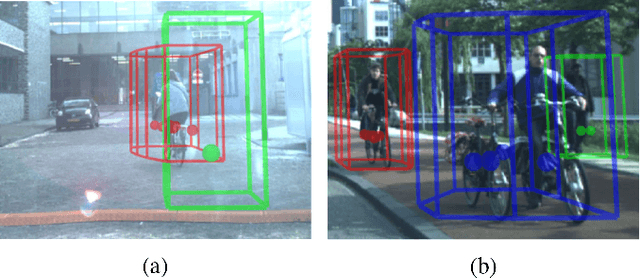

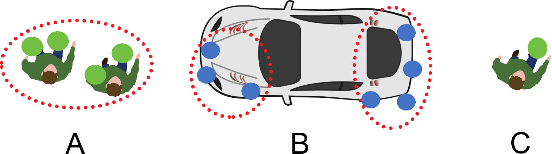

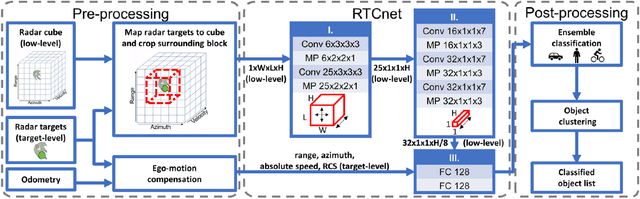

CNN based Road User Detection using the 3D Radar Cube

Apr 25, 2020

Abstract: This letter presents a novel radar-based, single-frame, multi-class detection method for moving road users (pedestrian, cyclist, car), which utilizes low-level radar cube data. The method provides class information at both the radar target level and the object level. Radar targets are classified individually after extending the target features with a cropped block of the 3D radar cube around their positions, thereby capturing the motion of moving parts in the local velocity distribution. A Convolutional Neural Network (CNN) is proposed for this classification step. Afterwards, object proposals are generated with a clustering step, which considers not only the radar targets' positions and velocities but also their calculated class scores. In experiments on a real-life dataset, we demonstrate that our method outperforms the state-of-the-art methods both target- and object-wise, reaching an average F1 score of 0.70 (baseline: 0.68) target-wise and 0.56 (baseline: 0.48) object-wise. Furthermore, we examine the importance of the used features in an ablation study.
