Christo Kurisummoottil Thomas

Semantic Communication for 6G Networks: A Trade-off between Distortion Criticality and Information Representability

Mar 31, 2026

Abstract:In this work, a self-attention based conditional generative adversarial network (SA-cGAN) framework for sixth generation (6G) semantic communication systems is proposed, explicitly designed to balance the trade-off between distortion criticality and information representability under varying channel conditions. The proposed SA-cGAN model continuously learns compact semantic representations by jointly considering semantic importance, reconstruction distortion, and channel quality, enabling adaptive selection of semantic tokens for transmission. A knowledge graph is integrated to preserve contextual relationships and enhance semantic robustness, particularly in low signal-to-noise ratio (SNR) regimes. The resulting optimization framework incorporates continuous relaxation, submodular semantic selection, and principled constraint handling, allowing efficient semantic resource allocation under bandwidth and multi-constraint conditions. Simulation results show that, although SA-cGAN achieves only modest syntactic bilingual evaluation understudy (BLEU) scores, from low values at low SNR up to approximately 0.72 at 20 dB, it significantly outperforms conventional and JSCC-based schemes in semantic metrics, with semantic similarity, semantic accuracy, and semantic completeness consistently improving with SNR to above 0.90. Additionally, the model exhibits adaptive compression behavior, aggressively reducing redundant content while preserving critical semantic information to maintain fidelity. The convergence of the training loss further validates stable and efficient learning of semantic representations. Overall, the results confirm that the proposed SA-cGAN model effectively captures distortion-invariant semantic representations and dynamically adapts transmitted content based on distortion criticality and information representability for meaning-centric communication in future 6G networks.

Modeling Epidemiological Dynamics Under Adversarial Data and User Deception

Feb 23, 2026

Abstract:Epidemiological models increasingly rely on self-reported behavioral data such as vaccination status, mask usage, and social distancing adherence to forecast disease transmission and assess the impact of non-pharmaceutical interventions (NPIs). While such data provide valuable real-time insights, they are often subject to strategic misreporting, driven by individual incentives to avoid penalties, access benefits, or express distrust in public health authorities. To account for such human behavior, in this paper, we introduce a game-theoretic framework that models the interaction between the population and a public health authority as a signaling game. Individuals (senders) choose how to report their behaviors, while the public health authority (receiver) updates its epidemiological model(s) based on potentially distorted signals. Focusing on deception around masking and vaccination, we analytically characterize the equilibrium outcomes of the game and evaluate the degree to which deception can be tolerated while maintaining epidemic control through policy interventions. Our results show that separating equilibria, which involve minimal deception, drive infections to near zero over time. Remarkably, even under pervasive dishonesty in pooling equilibria, well-designed sender and receiver strategies can still maintain effective epidemic control. This work advances the understanding of adversarial data in epidemiology and offers tools for designing more robust public health models in the presence of strategic user behavior.
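To make the separating versus pooling distinction concrete, here is a minimal sketch of how a receiver could update its belief from a single self-report using Bayes' rule. It is not the paper's model: the function name `posterior_compliant`, the two-type population, and the assumption that compliant individuals always report truthfully are all illustrative assumptions.

```python
def posterior_compliant(prior_compliant: float, misreport_prob: float) -> float:
    """P(actually compliant | report says 'compliant'), by Bayes' rule.

    Illustrative assumptions (not taken from the paper):
      - compliant individuals always report 'compliant';
      - non-compliant individuals claim compliance with probability
        `misreport_prob`.
    """
    # Total probability of observing a 'compliant' report.
    evidence = prior_compliant + (1.0 - prior_compliant) * misreport_prob
    if evidence == 0.0:
        raise ValueError("a 'compliant' report has zero probability")
    return prior_compliant / evidence


# Separating-style behavior: nobody misreports, so a report is fully informative.
separating = posterior_compliant(prior_compliant=0.6, misreport_prob=0.0)

# Pooling-style behavior: everyone sends the same report, so the receiver
# learns nothing and the posterior collapses to the prior.
pooling = posterior_compliant(prior_compliant=0.6, misreport_prob=1.0)

# Partial deception sits between the two extremes.
partial = posterior_compliant(prior_compliant=0.6, misreport_prob=0.5)
```

Under these assumptions, the informativeness of a report degrades smoothly as the misreporting probability grows, which is the quantity a receiver strategy has to compensate for.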

Semantic Communication with Hopfield Memories

Nov 13, 2025

Abstract:Traditional joint source-channel coding employs static learned semantic representations that cannot dynamically adapt to evolving source distributions. Shared semantic memories between transmitter and receiver can potentially enable bandwidth savings by reusing previously transmitted concepts as context to reconstruct data, but require effective mechanisms to determine when current content is similar enough to stored patterns. However, existing hard quantization approaches based on variational autoencoders are limited by frequent memory updates even under small changes in data dynamics, which leads to inefficient usage of bandwidth. To address this challenge, in this paper, a memory-augmented semantic communication framework is proposed where both transmitter and receiver maintain a shared memory of semantic concepts using modern Hopfield networks (MHNs). The proposed framework employs soft attention-based retrieval that smoothly adjusts stored semantic prototype weights as data evolves, which enables stable matching decisions under gradual data dynamics. A joint optimization of the encoder, decoder, and memory retrieval mechanism is performed with the objective of maximizing a reasoning capacity metric that quantifies semantic efficiency as the product of memory reuse rate and compression ratio. Theoretical analysis establishes the fundamental rate-distortion-reuse tradeoff and proves that soft retrieval reduces unnecessary transmissions compared to hard quantization under bounded semantic drift. Extensive simulations over diverse video scenarios demonstrate that the proposed MHN-based approach achieves substantial bit reductions, around 14% on average and up to 70% in scenarios with gradual content changes, compared to the baseline.
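As a rough illustration of soft attention-based retrieval, the sketch below applies one standard modern Hopfield update, a softmax-weighted combination of stored patterns, and computes the reasoning capacity as the product described in the abstract. The function names, the inverse temperature `beta`, the toy memory, and the reuse threshold are assumptions made for illustration; the paper's actual encoder, decoder, and matching rule are not shown here.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def hopfield_retrieve(query, memory, beta=4.0):
    """One modern Hopfield update: weights = softmax(beta * <pattern, query>),
    retrieved = weighted sum of the stored patterns."""
    scores = [beta * sum(q * p for q, p in zip(query, pattern)) for pattern in memory]
    weights = softmax(scores)
    retrieved = [
        sum(w * pattern[i] for w, pattern in zip(weights, memory))
        for i in range(len(query))
    ]
    return retrieved, weights


def reasoning_capacity(reuse_rate, compression_ratio):
    """Semantic efficiency as the product of memory reuse rate and compression ratio."""
    return reuse_rate * compression_ratio


# Toy shared memory of three semantic prototypes (hypothetical values).
memory = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
query = [0.9, 0.1, 0.0]  # current content, close to the first prototype

retrieved, weights = hopfield_retrieve(query, memory)
best = max(range(len(weights)), key=weights.__getitem__)

# Hypothetical matching rule: reuse the stored concept when one prototype dominates.
REUSE_THRESHOLD = 0.8
reuse_stored_concept = weights[best] >= REUSE_THRESHOLD
```

Because the weights vary smoothly with the query, a small drift in the content shifts them gradually rather than flipping a hard codebook assignment, which is the intuition behind the stability claim above.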

Causal Model-Based Reinforcement Learning for Sample-Efficient IoT Channel Access

Nov 13, 2025

Abstract:Despite the advantages of multi-agent reinforcement learning (MARL) for wireless use cases such as medium access control (MAC), its real-world deployment in the Internet of Things (IoT) is hindered by its sample inefficiency. To alleviate this challenge, one can leverage model-based reinforcement learning (MBRL) solutions; however, conventional MBRL approaches rely on black-box models that are not interpretable and cannot reason. In contrast, in this paper, a novel causal model-based MARL framework is developed by leveraging tools from causal learning. In particular, the proposed model can explicitly represent causal dependencies between network variables using structural causal models (SCMs) and attention-based inference networks. Interpretable causal models are then developed to capture how MAC control messages influence observations, how transmission actions determine outcomes, and how channel observations affect rewards. Data augmentation techniques are then used to generate synthetic rollouts using the learned causal model for policy optimization via proximal policy optimization (PPO). Analytical results demonstrate exponential sample complexity gains of causal MBRL over black-box approaches. Extensive simulations demonstrate that, on average, the proposed approach can reduce environment interactions by 58% and yield faster convergence compared to model-free baselines. The proposed approach is also shown to inherently provide interpretable scheduling decisions via attention-based causal attribution, revealing which network conditions drive the policy. The resulting combination of sample efficiency and interpretability establishes causal MBRL as a practical approach for resource-constrained wireless systems.

Flexible Semantic-Aware Resource Allocation: Serving More Users Through Similarity Range Constraints

Apr 29, 2025

Abstract:Semantic communication (SemCom) aims to enhance the resource efficiency of next-generation networks by transmitting the underlying meaning of messages, focusing on information relevant to the end user. Existing literature on SemCom primarily emphasizes learning the encoder and decoder through end-to-end deep learning frameworks, with the objective of minimizing a task-specific semantic loss function. Beyond its influence on the physical and application layer design, semantic variability across users in multi-user systems enables the design of resource allocation schemes that incorporate user-specific semantic requirements. To this end, a semantic-aware resource allocation scheme is proposed with the objective of maximizing transmission and semantic reliability, ultimately increasing the number of users whose semantic requirements are met. The resulting resource allocation problem is a non-convex mixed-integer nonlinear program (MINLP), which is known to be NP-hard. To make the problem tractable, it is decomposed into a set of sub-problems, each of which is efficiently solved via geometric programming techniques. Finally, simulations demonstrate that the proposed method improves user satisfaction by up to 17.1% compared to state-of-the-art quality-of-experience-aware SemCom methods.

Large-Scale AI in Telecom: Charting the Roadmap for Innovation, Scalability, and Enhanced Digital Experiences

Mar 06, 2025

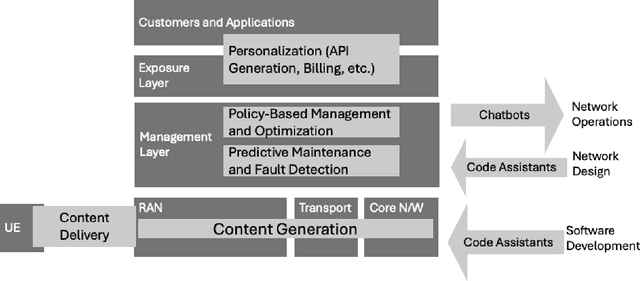

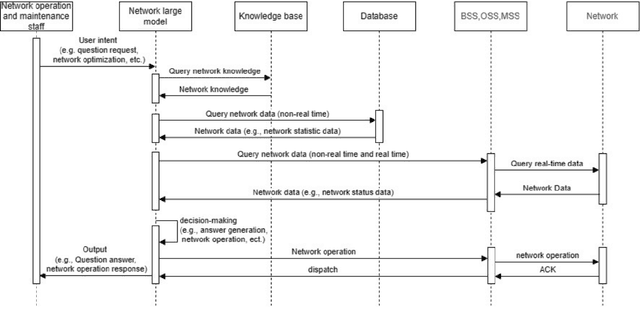

Abstract:This white paper discusses the role of large-scale AI in the telecommunications industry, with a specific focus on the potential of generative AI to revolutionize network functions and user experiences, especially in the context of 6G systems. It highlights the development and deployment of Large Telecom Models (LTMs), which are tailored AI models designed to address the complex challenges faced by modern telecom networks. The paper covers a wide range of topics, from the architecture and deployment strategies of LTMs to their applications in network management, resource allocation, and optimization. It also explores the regulatory, ethical, and standardization considerations for LTMs, offering insights into their future integration into telecom infrastructure. The goal is to provide a comprehensive roadmap for the adoption of LTMs to enhance scalability, performance, and user-centric innovation in telecom networks.

Joint Beamforming and 3D Location Optimization for Multi-User Holographic UAV Communications

Feb 24, 2025

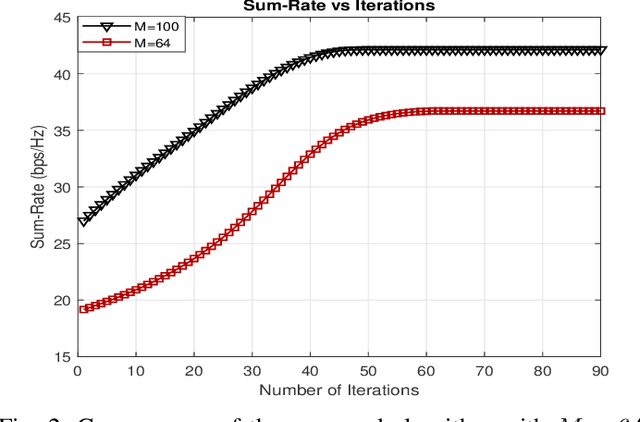

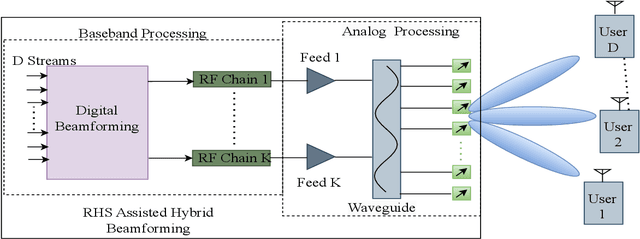

Abstract:This paper pioneers the field of multi-user holographic unmanned aerial vehicle (UAV) communications, laying a solid foundation for future innovations in next-generation aerial wireless networks. The study focuses on the challenging problem of jointly optimizing hybrid holographic beamforming and 3D UAV positioning in scenarios where the UAV is equipped with a reconfigurable holographic surface (RHS) instead of conventional phased array antennas. Using the unique capabilities of RHSs, the system dynamically adjusts both the position of the UAV and its hybrid beamforming properties to maximize the sum rate of the network. To address this complex optimization problem, we propose an iterative algorithm combining zero-forcing digital beamforming and a gradient ascent approach for the holographic patterns and the 3D position optimization, while ensuring practical feasibility constraints. The algorithm is designed to effectively balance the trade-offs between power, beamforming, and UAV trajectory constraints, enabling adaptive and efficient communications, while assuring a monotonic increase in the sum-rate performance. Our numerical investigations demonstrate significant performance improvements of the proposed approach over the benchmark methods, showcasing enhanced sum rate and system adaptability under varying conditions.

Joint Holographic Beamforming and User Scheduling with Individual QoS Constraints

Feb 24, 2025

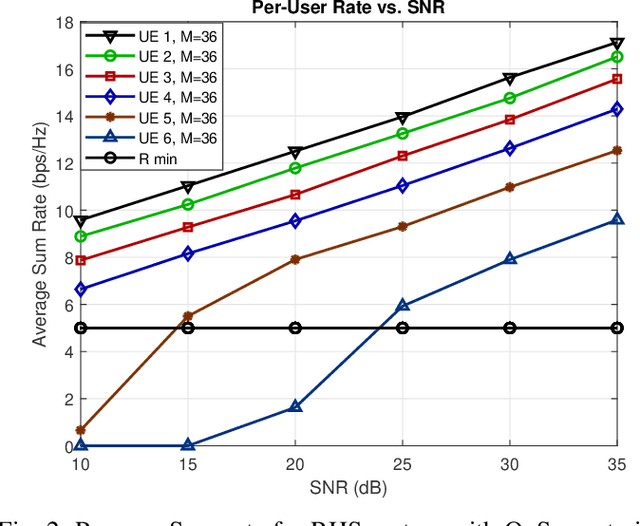

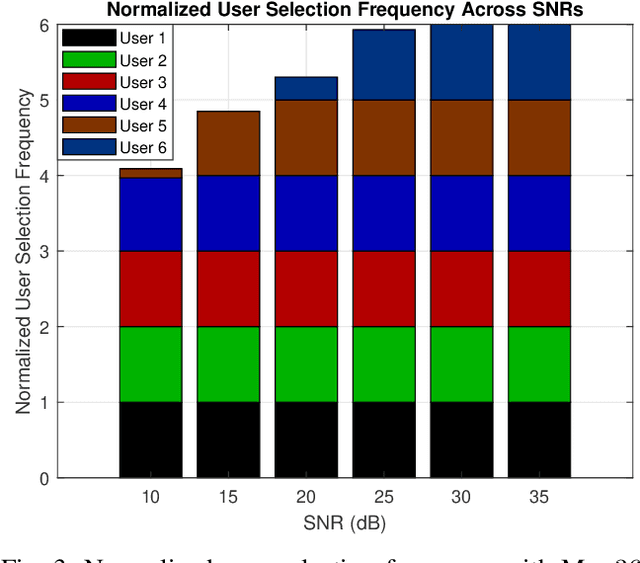

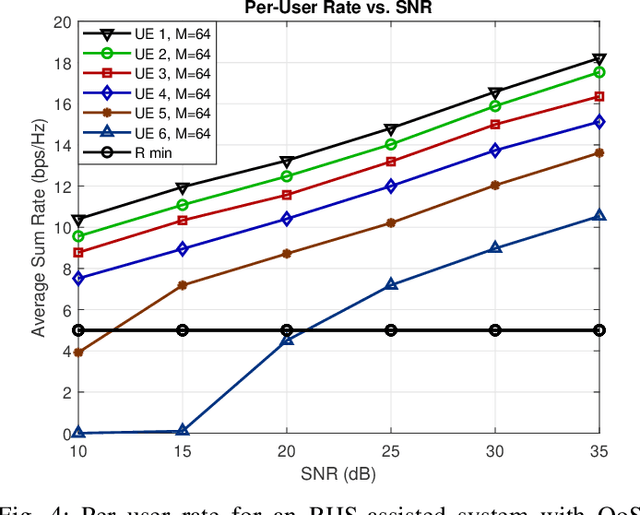

Abstract:Reconfigurable holographic surfaces (RHS) have emerged as a transformative material technology, enabling dynamic control of electromagnetic waves to generate versatile holographic beam patterns. This paper addresses the problem of joint hybrid holographic beamforming and user scheduling under per-user minimum quality-of-service (QoS) constraints, a critical challenge in resource-constrained networks. However, this problem is a mixed-integer non-convex optimization problem, which makes it difficult to identify feasible solutions efficiently. To overcome this challenge, we propose a novel iterative optimization framework that jointly solves the problem to maximize the RHS-assisted network sum-rate, efficiently managing holographic beamforming patterns, dynamically scheduling users, and ensuring the minimum QoS requirements for each scheduled user. The proposed framework relies on zero-forcing digital beamforming, gradient-ascent-based holographic beamformer optimization, and a greedy user selection principle. Our extensive simulation results validate the effectiveness of the proposed scheme, demonstrating its superior performance compared to the benchmark algorithms in terms of sum-rate performance, while meeting the minimum per-user QoS constraints.
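For readers unfamiliar with the zero-forcing building block mentioned above, the following is a minimal NumPy sketch of a textbook zero-forcing digital precoder and the resulting sum rate. It is not the paper's algorithm: the matrix `H` stands in for the effective channel after a fixed holographic pattern has been applied, and the function names, dimensions, random channel, and noise power are illustrative assumptions. The holographic pattern optimization and greedy user selection are not shown.

```python
import numpy as np


def zero_forcing_precoder(H, total_power=1.0):
    """Textbook zero-forcing precoder for a K x N effective channel H (K <= N).

    Returns an N x K matrix W such that H @ W is a scaled identity,
    normalized so that trace(W W^H) equals `total_power`.
    """
    # Right pseudo-inverse of H removes inter-user interference.
    W = H.conj().T @ np.linalg.inv(H @ H.conj().T)
    # Scale to satisfy the total transmit power budget.
    W = W * np.sqrt(total_power / np.trace(W @ W.conj().T).real)
    return W


def sum_rate(H, W, noise_power=0.1):
    """Sum of per-user rates log2(1 + SINR_k) in bit/s/Hz."""
    gains = np.abs(H @ W) ** 2           # gains[k, j]: power at user k from stream j
    signal = np.diag(gains)
    interference = gains.sum(axis=1) - signal
    return float(np.sum(np.log2(1.0 + signal / (interference + noise_power))))


# Hypothetical setup: 3 users, 8 feeds, i.i.d. Rayleigh effective channel.
rng = np.random.default_rng(0)
K, N = 3, 8
H = (rng.standard_normal((K, N)) + 1j * rng.standard_normal((K, N))) / np.sqrt(2)

W = zero_forcing_precoder(H)
effective = H @ W
off_diagonal = effective - np.diag(np.diag(effective))
rate = sum_rate(H, W)
```

In an iterative scheme of the kind described, a step like this would typically be recomputed each time the holographic pattern or the scheduled user set changes, since both alter the effective channel.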

Hypergame Theory for Decentralized Resource Allocation in Multi-user Semantic Communications

Sep 26, 2024

Abstract:Semantic communications (SC) is an emerging communication paradigm in which wireless devices can send only relevant information from a source of data while relying on computing resources to regenerate missing data points. However, the design of a multi-user SC system becomes more challenging because of the computing and communication overhead required for coordination. Existing solutions for learning the semantic language and performing resource allocation often fail to capture the computing and communication tradeoffs involved in multiuser SC. To address this gap, a novel framework for decentralized computing and communication resource allocation in multiuser SC systems is proposed. The challenge of efficiently allocating communication and computing resources (for reasoning) in a decentralized manner to maximize the quality of task experience for the end users is addressed through the application of Stackelberg hyper game theory. Leveraging the concept of second-level hyper games, novel analytical formulations are developed to model misperceptions of the users about each other's communication and control strategies. Further, equilibrium analysis of the learned resource allocation protocols examines the convergence of the computing and communication strategies to a local Stackelberg equilibrium, considering misperceptions. Simulation results show that the proposed Stackelberg hyper game results in efficient usage of communication and computing resources while maintaining a high quality of experience for the users compared to state-of-the-art approaches that do not account for the misperceptions.

Semantic Communication for the Internet of Sounds: Architecture, Design Principles, and Challenges

Jul 16, 2024

Abstract:The Internet of Sounds (IoS) combines sound sensing, processing, and transmission techniques, enabling collaboration among diverse sound devices. To achieve perceptual quality of sound synchronization in the IoS, it is necessary to precisely synchronize three critical factors: sound quality, timing, and behavior control. However, conventional bit-oriented communication, which focuses on bit reproduction, may not be able to fulfill these synchronization requirements under dynamic channel conditions. One promising approach to address the synchronization challenges of the IoS is through the use of semantic communication (SC) that can capture and leverage the logical relationships in its source data. Consequently, in this paper, we propose an IoS-centric SC framework with a transceiver design. The designed encoder extracts semantic information from diverse sources and transmits it to IoS listeners. It can also distill important semantic information to reduce transmission latency for timing synchronization. At the receiver's end, the decoder employs context- and knowledge-based reasoning techniques to reconstruct and integrate sounds, which achieves sound quality synchronization across diverse communication environments. Moreover, by periodically sharing knowledge, SC models of IoS devices can be updated to optimize their synchronization behavior. Finally, we explore several open issues on mathematical models, resource allocation, and cross-layer protocols.
