Chenghao Shi

Latent-Space Autoregressive World Model for Efficient and Robust Image-Goal Navigation

Nov 14, 2025

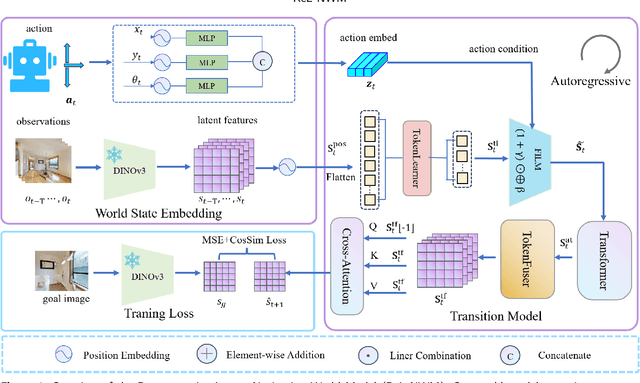

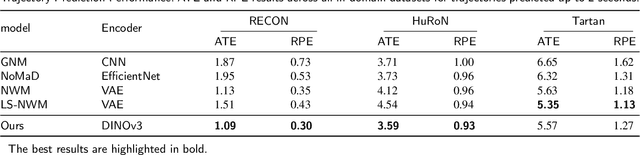

Abstract: Traditional navigation methods rely heavily on accurate localization and mapping. In contrast, world models that capture environmental dynamics in latent space have opened up new perspectives for navigation tasks, enabling systems to move beyond traditional multi-module pipelines. However, world models often suffer from high computational costs in both training and inference. To address this, we propose LS-NWM, a lightweight latent-space navigation world model that is trained and operates entirely in latent space. Compared to the state-of-the-art baseline, our method reduces training time by approximately 3.2x and planning time by about 447x, while further improving navigation performance with a 35% higher success rate (SR) and an 11% higher success weighted by path length (SPL). The key idea is that accurate pixel-wise environmental prediction is unnecessary for navigation. Instead, the model predicts future latent states based on current observational features and action inputs, then performs path planning and decision-making within this compact representation, significantly improving computational efficiency. By incorporating an autoregressive multi-frame prediction strategy during training, the model effectively captures long-term spatiotemporal dependencies, thereby enhancing navigation performance in complex scenarios. Experimental results demonstrate that our method achieves state-of-the-art navigation performance while maintaining a substantial efficiency advantage over existing approaches.
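The idea of predicting future latent states from the current latent and an action, then planning inside that compact space, can be illustrated with a small sketch. Everything below is an assumption made for illustration: `predict_next` is a hypothetical toy stand-in (simple addition) for a learned latent predictor, and the exhaustive search in `plan` is a placeholder for whatever planner the method actually uses.

```python
import itertools
import math


def predict_next(latent, action):
    """Hypothetical toy dynamics: the next latent is the current latent plus the action."""
    return tuple(z + a for z, a in zip(latent, action))


def rollout(latent, actions):
    """Autoregressive rollout: each predicted latent is fed back in as the next input."""
    states = []
    for action in actions:
        latent = predict_next(latent, action)
        states.append(latent)
    return states


def plan(start, goal, candidate_actions, horizon):
    """Score every action sequence by the distance of its final latent to the goal latent."""
    best_seq, best_cost = None, math.inf
    for seq in itertools.product(candidate_actions, repeat=horizon):
        final = rollout(start, seq)[-1]
        cost = math.dist(final, goal)
        if cost < best_cost:
            best_seq, best_cost = seq, cost
    return best_seq, best_cost
```

No image is decoded at any point in this sketch; candidate action sequences are compared purely by latent distance, which is the property the abstract credits for the efficiency gain.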

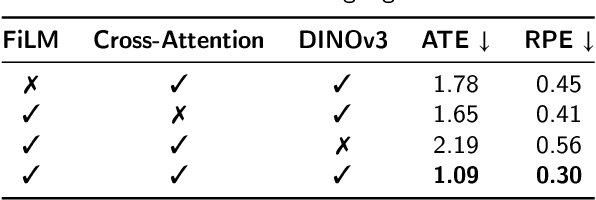

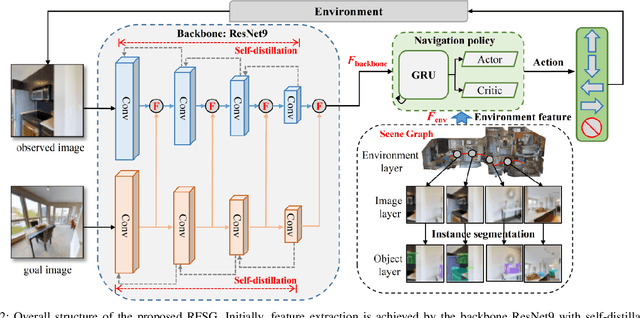

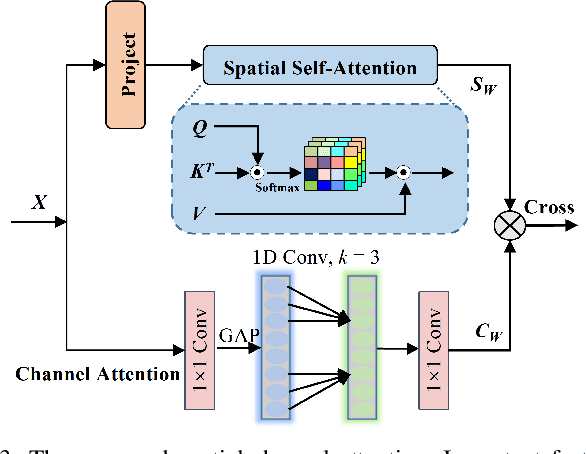

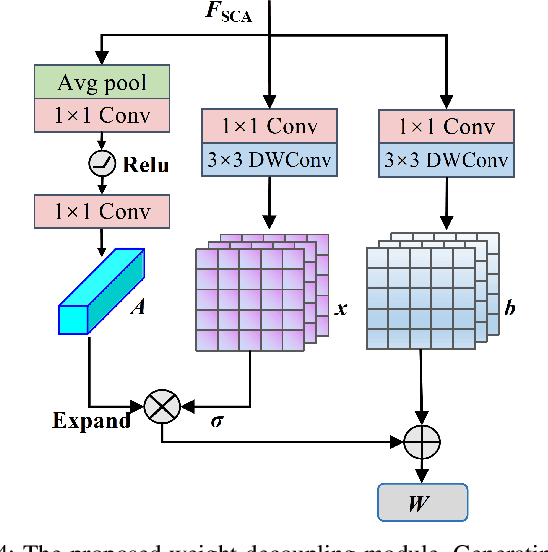

Image-Goal Navigation Using Refined Feature Guidance and Scene Graph Enhancement

Mar 14, 2025

Abstract: In this paper, we introduce a novel image-goal navigation approach, named RFSG. Our focus lies in leveraging the fine-grained connections between goals, observations, and the environment within limited image data, all the while keeping the navigation architecture simple and lightweight. To this end, we propose the spatial-channel attention mechanism, enabling the network to learn the importance of multi-dimensional features to fuse the goal and observation features. In addition, a self-distillation mechanism is incorporated to further enhance the feature representation capabilities. Given that the navigation task needs surrounding environmental information for more efficient navigation, we propose an image scene graph to establish feature associations at both the image and object levels, effectively encoding the surrounding scene information. Cross-scene performance validation was conducted on the Gibson and HM3D datasets, and the proposed method achieved state-of-the-art results among mainstream methods, with a speed of up to 53.5 frames per second on an RTX 3080. This contributes to the realization of end-to-end image-goal navigation in real-world scenarios. The implementation and model of our method have been released at: https://github.com/nubot-nudt/RFSG.

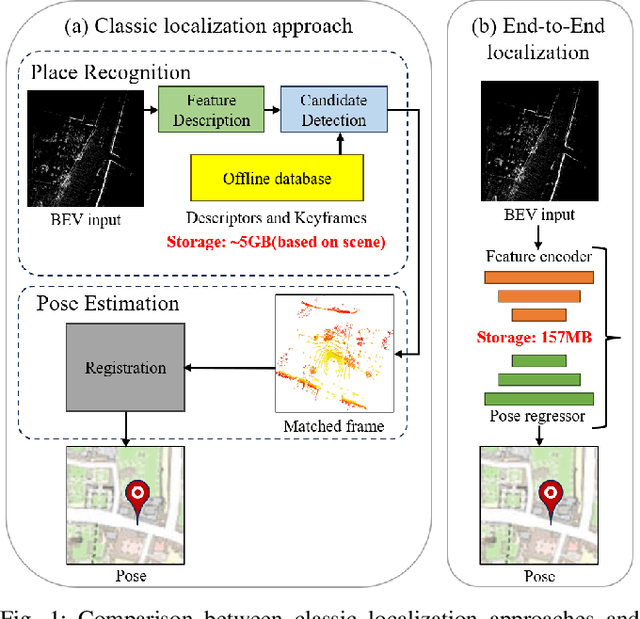

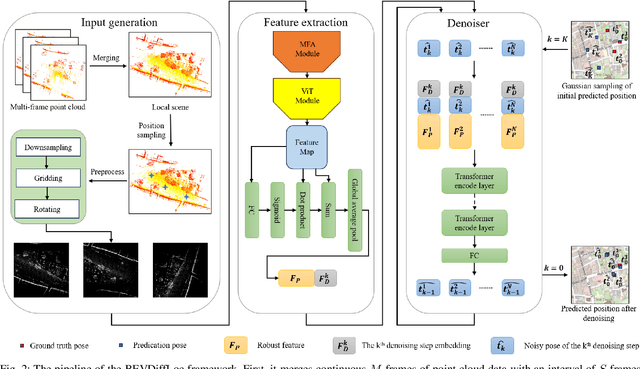

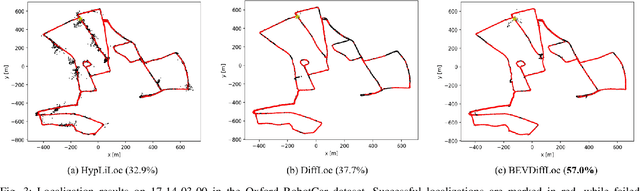

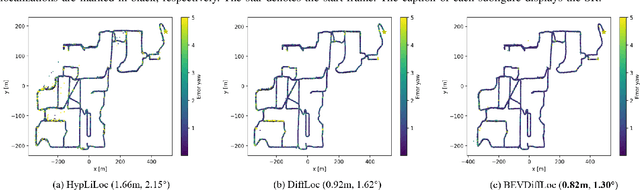

BEVDiffLoc: End-to-End LiDAR Global Localization in BEV View based on Diffusion Model

Mar 14, 2025

Abstract: Localization is one of the core parts of modern robotics. Classic localization methods typically follow the retrieve-then-register paradigm, achieving remarkable success. Recently, the emergence of end-to-end localization approaches has offered distinct advantages, including a streamlined system architecture and the elimination of the need to store extensive map data. Although these methods have demonstrated promising results, current end-to-end localization approaches still face limitations in robustness and accuracy. The Bird's-Eye-View (BEV) image is one of the most widely adopted data representations in autonomous driving. It significantly reduces data complexity while preserving spatial structure and scale consistency, making it an ideal representation for localization tasks. However, research on BEV-based end-to-end localization remains notably insufficient. To fill this gap, we propose BEVDiffLoc, a novel framework that formulates LiDAR localization as a conditional generation of poses. Leveraging the properties of BEV, we first introduce a specific data augmentation method to significantly enhance the diversity of input data. Then, the Maximum Feature Aggregation Module and Vision Transformer are employed to learn features that remain robust against significant rotational view variations. Finally, we incorporate a diffusion model that iteratively refines the learned features to recover the absolute pose. Extensive experiments on the Oxford Radar RobotCar and NCLT datasets demonstrate that BEVDiffLoc outperforms the baseline methods. Our code is available at https://github.com/nubot-nudt/BEVDiffLoc.
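To make the BEV representation concrete, the sketch below projects a LiDAR point cloud onto a 2D grid. It is an illustrative assumption rather than the paper's preprocessing: the function name `points_to_bev`, the choice to store the maximum height per cell, and the use of `None` for empty cells are all hypothetical.

```python
def points_to_bev(points, x_range, y_range, cell_size):
    """Project (x, y, z) points onto a 2D grid, keeping the maximum height per cell.

    Cells that receive no point stay None. Points outside the ranges are dropped.
    """
    x_min, x_max = x_range
    y_min, y_max = y_range
    cols = int(round((x_max - x_min) / cell_size))
    rows = int(round((y_max - y_min) / cell_size))
    grid = [[None] * cols for _ in range(rows)]
    for x, y, z in points:
        if not (x_min <= x < x_max and y_min <= y < y_max):
            continue
        # Clamp to guard against floating-point rounding at the upper border.
        col = min(int((x - x_min) / cell_size), cols - 1)
        row = min(int((y - y_min) / cell_size), rows - 1)
        current = grid[row][col]
        if current is None or z > current:
            grid[row][col] = z
    return grid
```

Because every cell covers a fixed metric area, distances in the grid stay proportional to distances in the scene, which is the scale consistency the abstract refers to.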

SGLC: Semantic Graph-Guided Coarse-Fine-Refine Full Loop Closing for LiDAR SLAM

Jul 11, 2024

Abstract: Loop closing is a crucial component in SLAM that helps eliminate accumulated errors through two main steps: loop detection and loop pose correction. The first step determines whether loop closing should be performed, while the second estimates the 6-DoF pose to correct odometry drift. Current methods mostly focus on developing robust descriptors for loop closure detection, often neglecting loop pose estimation. A few methods that do include pose estimation either suffer from low accuracy or incur high computational costs. To tackle this problem, we introduce SGLC, a real-time semantic graph-guided full loop closing method, with robust loop closure detection and 6-DoF pose estimation capabilities. SGLC takes into account the distinct characteristics of foreground and background points. For foreground instances, it builds a semantic graph that not only abstracts point cloud representation for fast descriptor generation and matching but also guides the subsequent loop verification and initial pose estimation. Background points, meanwhile, are exploited to provide more geometric features for scan-wise descriptor construction and stable planar information for further pose refinement. Loop pose estimation employs a coarse-fine-refine registration scheme that considers the alignment of both instance points and background points, offering high efficiency and accuracy. We evaluate the loop closing performance of SGLC through extensive experiments on the KITTI and KITTI-360 datasets, demonstrating its superiority over existing state-of-the-art methods. Additionally, we integrate SGLC into a SLAM system, eliminating accumulated errors and improving overall SLAM performance. The implementation of SGLC will be released at https://github.com/nubot-nudt/SGLC.
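An initial pose estimate from matched instances can be illustrated with a closed-form least-squares alignment. The sketch below is a deliberately simplified planar (3-DoF) version, not the 6-DoF coarse-fine-refine scheme described above; the function name `align_2d` and the assumption that matched instance centers are already available are hypothetical.

```python
import math


def align_2d(source, target):
    """Least-squares rotation angle and translation mapping source[i] onto target[i]."""
    if len(source) != len(target) or len(source) < 2:
        raise ValueError("need at least two matched point pairs")
    n = len(source)
    sx = sum(p[0] for p in source) / n
    sy = sum(p[1] for p in source) / n
    tx = sum(q[0] for q in target) / n
    ty = sum(q[1] for q in target) / n
    dot = 0.0
    cross = 0.0
    for (px, py), (qx, qy) in zip(source, target):
        ax, ay = px - sx, py - sy  # source point relative to its centroid
        bx, by = qx - tx, qy - ty  # target point relative to its centroid
        dot += ax * bx + ay * by
        cross += ax * by - ay * bx
    theta = math.atan2(cross, dot)
    c, s = math.cos(theta), math.sin(theta)
    # The translation moves the rotated source centroid onto the target centroid.
    translation = (tx - (c * sx - s * sy), ty - (s * sx + c * sy))
    return theta, translation
```

A coarse estimate of this kind would typically be followed by a denser refinement step, which is the role the abstract assigns to the background points and their planar information.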

SegNet4D: Effective and Efficient 4D LiDAR Semantic Segmentation in Autonomous Driving Environments

Jun 24, 2024

Abstract: 4D LiDAR semantic segmentation, also referred to as multi-scan semantic segmentation, plays a crucial role in enhancing the environmental understanding capabilities of autonomous vehicles. It entails identifying the semantic category of each point in the LiDAR scan and distinguishing whether it is dynamic, a critical aspect in downstream tasks such as path planning and autonomous navigation. Existing methods for 4D semantic segmentation often rely on computationally intensive 4D convolutions for multi-scan input, resulting in poor real-time performance. In this article, we introduce SegNet4D, a novel real-time multi-scan semantic segmentation method that leverages a projection-based approach for fast motion feature encoding and achieves outstanding performance. SegNet4D treats 4D semantic segmentation as two distinct tasks: single-scan semantic segmentation and moving object segmentation, each addressed by a dedicated head. These results are then fused in the proposed motion-semantic fusion module to achieve comprehensive multi-scan semantic segmentation. In addition, we propose extracting instance information from the current scan and incorporating it into the network for instance-aware segmentation. Our approach exhibits state-of-the-art performance across multiple datasets and stands out as a real-time multi-scan semantic segmentation method. The implementation of SegNet4D will be made available at https://github.com/nubot-nudt/SegNet4D.
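The decomposition into a semantic result and a moving/static result can be pictured with a label-level toy example. This is only an assumption-laden illustration: the fusion module described above operates inside the network, whereas the hypothetical `fuse_labels` below simply combines two finished per-point predictions, and the `MOVABLE_CLASSES` set is invented for the example.

```python
# Hypothetical set of classes that are able to move; not taken from the paper.
MOVABLE_CLASSES = {"car", "person", "bicyclist"}


def fuse_labels(semantic_labels, moving_flags):
    """Combine per-point semantic classes with per-point moving/static flags."""
    if len(semantic_labels) != len(moving_flags):
        raise ValueError("inputs must have one entry per point")
    fused = []
    for label, is_moving in zip(semantic_labels, moving_flags):
        if is_moving and label in MOVABLE_CLASSES:
            fused.append("moving-" + label)
        else:
            # Static points, and moving flags on immovable classes, keep the base label.
            fused.append(label)
    return fused
```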

Diffusion-Based Point Cloud Super-Resolution for mmWave Radar Data

Apr 09, 2024

Abstract: The millimeter-wave radar sensor maintains stable performance under adverse environmental conditions, making it a promising solution for all-weather perception tasks, such as outdoor mobile robotics. However, radar point clouds are relatively sparse and contain many ghost points, which greatly limits the development of mmWave radar technology. In this paper, we propose a novel point cloud super-resolution approach for 3D mmWave radar data, named Radar-diffusion. Our approach employs a diffusion model defined by mean-reverting stochastic differential equations (SDEs). Using our proposed new objective function with supervision from corresponding LiDAR point clouds, our approach efficiently handles radar ghost points and enhances the sparse mmWave radar point clouds to dense LiDAR-like point clouds. We evaluate our approach on two different datasets, and the experimental results show that our method outperforms the state-of-the-art baseline methods in 3D radar super-resolution tasks. Furthermore, we demonstrate that our enhanced radar point cloud can be used for downstream radar point-based registration tasks.
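The abstract does not state the SDE itself. As a rough illustration of what "mean-reverting" means, the sketch below simulates a scalar process of the common form `dx = theta * (mu - x) * dt + sigma * dW` with the Euler-Maruyama scheme. The scalar setting, the constant coefficients, and the function name are assumptions for illustration, not the paper's formulation.

```python
import math
import random


def simulate_mean_reverting_sde(x0, mu, theta, sigma, dt, steps, seed=0):
    """Euler-Maruyama simulation of dx = theta * (mu - x) * dt + sigma * dW."""
    rng = random.Random(seed)
    x = x0
    path = [x]
    for _ in range(steps):
        drift = theta * (mu - x) * dt  # pulls the state toward the mean mu
        noise = sigma * math.sqrt(dt) * rng.gauss(0.0, 1.0)
        x = x + drift + noise
        path.append(x)
    return path
```

With `sigma` set to zero the state decays deterministically toward `mu`; with noise it fluctuates around `mu` instead of drifting away, which is what distinguishes this family from a pure noising process.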

Fast and Accurate Deep Loop Closing and Relocalization for Reliable LiDAR SLAM

Sep 15, 2023

Abstract: Loop closing and relocalization are crucial techniques to establish reliable and robust long-term SLAM by addressing pose estimation drift and degeneration. This article begins by formulating loop closing and relocalization within a unified framework. Then, we propose a novel multi-head network, LCR-Net, to tackle both tasks effectively. It exploits a novel feature extraction and pose-aware attention mechanism to precisely estimate similarities and 6-DoF poses between pairs of LiDAR scans. Finally, we integrate our LCR-Net into a SLAM system and achieve robust and accurate online LiDAR SLAM in outdoor driving environments. We thoroughly evaluate our LCR-Net on multiple datasets through three setups derived from loop closing and relocalization: candidate retrieval, closed-loop point cloud registration, and continuous relocalization. The results demonstrate that LCR-Net excels in all three tasks, surpassing the state-of-the-art methods and exhibiting a remarkable generalization ability. Notably, our LCR-Net outperforms baseline methods without using a time-consuming robust pose estimator, rendering it suitable for online SLAM applications. To the best of our knowledge, the integration of LCR-Net yields the first LiDAR SLAM with the capability of deep loop closing and relocalization. The implementation of our methods will be made open-source.
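Candidate retrieval, the first of the three evaluation setups, is commonly done by comparing a query scan descriptor against stored descriptors. The sketch below assumes fixed-length global descriptors and cosine similarity; both are illustrative assumptions, and the function names are hypothetical rather than part of LCR-Net.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two descriptor vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm > 0 else 0.0


def retrieve_candidates(query, database, top_k=1, min_similarity=0.0):
    """Return up to top_k (index, similarity) pairs, most similar first."""
    scored = [(cosine_similarity(query, d), idx) for idx, d in enumerate(database)]
    scored = [(s, i) for s, i in scored if s >= min_similarity]
    scored.sort(key=lambda item: item[0], reverse=True)
    return [(i, s) for s, i in scored[:top_k]]
```

The retrieved candidates would then be passed to a registration step that estimates the relative pose between the query scan and each candidate.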

RDMNet: Reliable Dense Matching Based Point Cloud Registration for Autonomous Driving

Mar 31, 2023

Abstract: Point cloud registration is an important task in robotics and autonomous driving to estimate the ego-motion of the vehicle. Recent advances following the coarse-to-fine manner show promising potential in point cloud registration. However, existing methods rely on good superpoint correspondences, which are hard to obtain reliably and efficiently, resulting in less robust and accurate point cloud registration. In this paper, we propose a novel network, named RDMNet, to find dense point correspondences in a coarse-to-fine manner and improve final pose estimation based on such reliable correspondences. Our RDMNet uses a devised 3D-RoFormer mechanism to first extract distinctive superpoints and generate reliable superpoint matches between two point clouds. The proposed 3D-RoFormer fuses 3D position information into the transformer network, efficiently exploiting point clouds' contextual and geometric information to generate robust superpoint correspondences. RDMNet then propagates the sparse superpoint matches to dense point matches using the neighborhood information for accurate point cloud registration. We extensively evaluate our method on multiple datasets from different environments. The experimental results demonstrate that our method outperforms existing state-of-the-art approaches on all tested datasets with a strong generalization ability.

InsMOS: Instance-Aware Moving Object Segmentation in LiDAR Data

Mar 07, 2023

Abstract: Identifying moving objects is a crucial capability for autonomous navigation, consistent map generation, and future trajectory prediction of objects. In this paper, we propose a novel network that addresses the challenge of segmenting moving objects in 3D LiDAR scans. Our approach not only predicts point-wise moving labels but also detects instance information of main traffic participants. Such a design helps determine which instances are actually moving and which ones are temporarily static in the current scene. Our method exploits a sequence of point clouds as input and quantizes them into 4D voxels. We use 4D sparse convolutions to extract motion features from the 4D voxels and inject them into the current scan. Then, we extract spatio-temporal features from the current scan for instance detection and feature fusion. Finally, we design an upsample fusion module to output point-wise labels by fusing the spatio-temporal features and predicted instance information. We evaluated our approach on the LiDAR-MOS benchmark based on SemanticKITTI and achieved better moving object segmentation performance compared to state-of-the-art methods, demonstrating the effectiveness of our approach in integrating instance information for moving object segmentation. Furthermore, our method shows superior performance on the Apollo dataset with a model pre-trained on SemanticKITTI, indicating that our method generalizes well in different scenes. The code and pre-trained models of our method will be released at https://github.com/nubot-nudt/InsMOS.
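Turning a sequence of scans into 4D voxels amounts to discretizing each point's spatial coordinates and its scan time. The sketch below is a minimal illustration under stated assumptions: points are given as `(x, y, z, t)` tuples already expressed in a common frame, and the function name, voxel size, and time step are hypothetical.

```python
import math
from collections import Counter


def voxelize_4d(points, voxel_size, time_step=1.0):
    """Map (x, y, z, t) points to integer 4D voxel indices and count points per voxel."""
    counts = Counter()
    for x, y, z, t in points:
        key = (
            math.floor(x / voxel_size),
            math.floor(y / voxel_size),
            math.floor(z / voxel_size),
            math.floor(t / time_step),
        )
        counts[key] += 1
    return counts
```

Only occupied voxels appear in the result, which is the sparsity that makes sparse convolutions over such a grid practical.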
