Yuwei Cheng

Learning from Imperfect Human Feedback: a Tale from Corruption-Robust Dueling

May 18, 2024

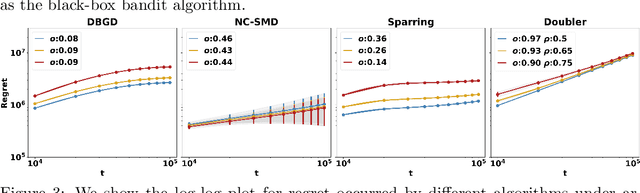

Abstract: This paper studies Learning from Imperfect Human Feedback (LIHF), motivated by humans' potential irrationality or imperfect perception of true preference. We revisit the classic dueling bandit problem as a model of learning from comparative human feedback, and enrich it by casting the imperfection in human feedback as agnostic corruption to user utilities. We start by identifying the fundamental limits of LIHF and prove a regret lower bound of $\Omega(\max\{T^{1/2},C\})$, even when the total corruption $C$ is known and when the corruption decays gracefully over time (i.e., user feedback becomes increasingly accurate). We then turn to designing robust algorithms applicable in real-world scenarios with arbitrary corruption and unknown $C$. Our key finding is that gradient-based algorithms enjoy a smooth efficiency-robustness tradeoff under corruption by varying their learning rates. Specifically, under general concave user utility, Dueling Bandit Gradient Descent (DBGD) of Yue and Joachims (2009) can be tuned to achieve regret $O(T^{1-\alpha} + T^{\alpha} C)$ for any given parameter $\alpha \in (0, \frac{1}{4}]$. Additionally, this result enables us to pin down the regret lower bound of the standard DBGD (the $\alpha=1/4$ case) as $\Omega(T^{3/4})$ for the first time, to the best of our knowledge. For strongly concave user utility we show a better tradeoff: there is an algorithm that achieves $O(T^{\alpha} + T^{\frac{1}{2}(1-\alpha)}C)$ for any given $\alpha \in [\frac{1}{2},1)$. Our theoretical insights are corroborated by extensive experiments on real-world recommendation data.
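To make the DBGD update mentioned in the abstract concrete, here is a minimal sketch of the basic explore-then-step loop from Yue and Joachims (2009). It is an illustration under stated assumptions, not the paper's tuned algorithm: the simulated user (`make_logistic_user`), its quadratic utility, the logistic link, the additive `corruption` hook, and the specific `delta` and `gamma` values are all hypothetical. The abstract does not give the $\alpha$-dependent learning-rate schedule, so none is reproduced here.

```python
import math
import random


def project_to_ball(w, radius):
    """Euclidean projection onto the ball of the given radius."""
    norm = math.sqrt(sum(x * x for x in w))
    if norm <= radius:
        return list(w)
    return [x * radius / norm for x in w]


def random_unit_vector(dim, rng):
    """Uniform direction on the unit sphere (normalized Gaussian draw)."""
    g = [rng.gauss(0.0, 1.0) for _ in range(dim)]
    norm = math.sqrt(sum(x * x for x in g))
    return [x / norm for x in g]


def dbgd(duel, dim, horizon, delta, gamma, radius=1.0, seed=0):
    """Basic DBGD loop: probe a random direction, step only if the probe wins.

    duel(w, candidate, t) must return True when the candidate wins round t.
    delta is the exploration radius, gamma the step size (both assumptions).
    """
    rng = random.Random(seed)
    w = [0.0] * dim
    for t in range(horizon):
        u = random_unit_vector(dim, rng)
        candidate = project_to_ball(
            [wi + delta * ui for wi, ui in zip(w, u)], radius)
        if duel(w, candidate, t):
            w = project_to_ball(
                [wi + gamma * ui for wi, ui in zip(w, u)], radius)
    return w


def make_logistic_user(target, corruption=lambda t: 0.0, seed=1):
    """Hypothetical user: concave utility -||w - target||^2, logistic link.

    corruption(t) is added to the utility gap in round t, a simple stand-in
    for corrupted utilities; it is not the paper's corruption model.
    """
    rng = random.Random(seed)

    def utility(w):
        return -sum((wi - ti) ** 2 for wi, ti in zip(w, target))

    def duel(w, candidate, t):
        gap = utility(candidate) - utility(w) + corruption(t)
        gap = max(-50.0, min(50.0, gap))  # clamp to keep exp() finite
        return rng.random() < 1.0 / (1.0 + math.exp(-gap))

    return duel


# Illustrative run; the T^(-1/4) and T^(-1/2) orders roughly mirror the
# standard DBGD analysis with constants omitted.
T = 2000
user = make_logistic_user(target=[0.5, -0.3])
w_final = dbgd(user, dim=2, horizon=T, delta=T ** -0.25, gamma=T ** -0.5)
```

The loop only ever observes the binary duel outcome, never the utility itself, which is why corrupted comparisons can mislead the update direction and why the choice of step sizes matters for robustness.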

Diffusion-Based Point Cloud Super-Resolution for mmWave Radar Data

Apr 09, 2024

Abstract: The millimeter-wave radar sensor maintains stable performance under adverse environmental conditions, making it a promising solution for all-weather perception tasks, such as outdoor mobile robotics. However, the radar point clouds are relatively sparse and contain many ghost points, which greatly limits the development of mmWave radar technology. In this paper, we propose a novel point cloud super-resolution approach for 3D mmWave radar data, named Radar-diffusion. Our approach employs the diffusion model defined by mean-reverting stochastic differential equations (SDEs). Using our proposed new objective function with supervision from corresponding LiDAR point clouds, our approach efficiently handles radar ghost points and enhances the sparse mmWave radar point clouds to dense LiDAR-like point clouds. We evaluate our approach on two different datasets, and the experimental results show that our method outperforms the state-of-the-art baseline methods in 3D radar super-resolution tasks. Furthermore, we demonstrate that our enhanced radar point cloud can be used for downstream radar point-based registration tasks.

RadarMOSEVE: A Spatial-Temporal Transformer Network for Radar-Only Moving Object Segmentation and Ego-Velocity Estimation

Feb 22, 2024

Abstract: Moving object segmentation (MOS) and ego-velocity estimation (EVE) are vital capabilities for mobile systems to achieve full autonomy. Several approaches have attempted to achieve MOSEVE using a LiDAR sensor. However, LiDAR sensors are typically expensive and susceptible to adverse weather conditions. Instead, millimeter-wave radar (MWR) has gained popularity in robotics and autonomous driving for real applications due to its cost-effectiveness and resilience to bad weather. Nonetheless, publicly available MOSEVE datasets and approaches using radar data are limited. Some existing methods adopt point convolutional networks from LiDAR-based approaches, ignoring the specific artifacts and the valuable radial velocity information of radar measurements, leading to suboptimal performance. In this paper, we propose a novel transformer network that effectively addresses the sparsity and noise issues and leverages the radial velocity measurements of radar points using our devised radar self- and cross-attention mechanisms. Based on that, our method simultaneously achieves accurate EVE of the robot and performs MOS using only radar data. To thoroughly evaluate the MOSEVE performance of our method, we annotated the radar points in the public View-of-Delft (VoD) dataset and additionally constructed a new radar dataset in various environments. The experimental results demonstrate the superiority of our approach over existing state-of-the-art methods. The code is available at https://github.com/ORCA-Uboat/RadarMOSEVE.

Visual Temporal Fusion Based Free Space Segmentation for Autonomous Surface Vessels

Oct 02, 2023

Abstract: The use of Autonomous Surface Vessels (ASVs) is growing rapidly. For safe and efficient surface auto-driving, a reliable perception system is crucial. Such systems allow the vessels to sense their surroundings and make decisions based on the information gathered. During the perception process, free space segmentation is essential to distinguish the safe mission zone and segment the operational waterways. However, ASVs face particular challenges in free space segmentation due to nearshore reflection interference, complex water textures, and random motion vibrations caused by the water surface conditions. To deal with these challenges, we propose a visual temporal fusion based free space segmentation model that utilizes vision information from previous frames. We also introduce a new evaluation procedure and a contour position based loss calculation function, which are more suitable for surface free space segmentation tasks. The proposed model and process are tested on a continuous video segmentation dataset and achieve accurate and robust results. The dataset is also made available along with this paper.

Are We Ready for Unmanned Surface Vehicles in Inland Waterways? The USVInland Multisensor Dataset and Benchmark

Mar 09, 2021

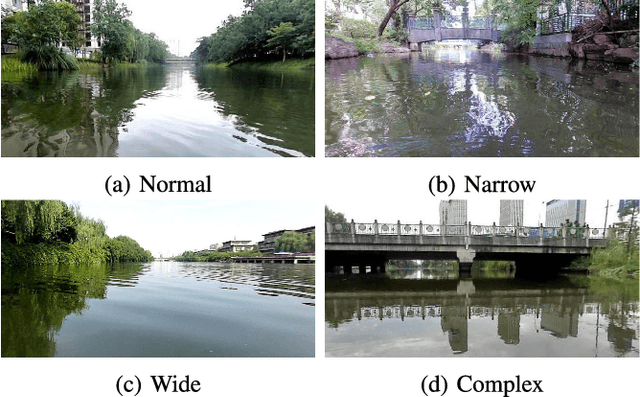

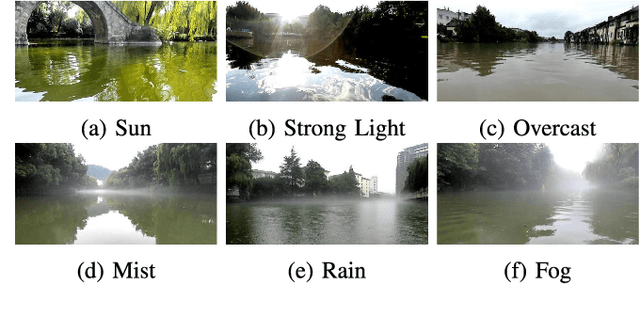

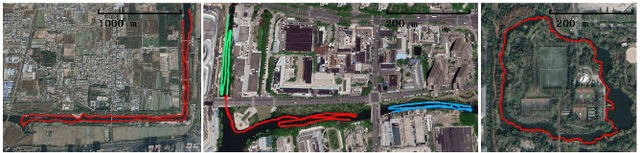

Abstract: Unmanned surface vehicles (USVs) have great value with their ability to execute hazardous and time-consuming missions over water surfaces. Recently, USVs for inland waterways have attracted increasing attention for their potential application in autonomous monitoring, transportation, and cleaning. However, unlike sailing in open water, the challenges posed by scenes of inland waterways, such as the complex distribution of obstacles, the global positioning system (GPS) signal denial environment, the reflection of bank-side structures, and the fog over the water surface, all impede USV application in inland waterways. To address these problems and stimulate relevant research, we introduce USVInland, a multisensor dataset for USVs in inland waterways. The collection of USVInland spans a trajectory of more than 26 km in diverse real-world scenes of inland waterways using various modalities, including lidar, stereo cameras, millimeter-wave radar, GPS, and inertial measurement units (IMUs). Based on the requirements and challenges in the perception and navigation of USVs for inland waterways, we build benchmarks for simultaneous localization and mapping (SLAM), stereo matching, and water segmentation. We evaluate common algorithms for the above tasks to determine the influence of unique inland waterway scenes on algorithm performance. Our dataset and the development tools are available online at https://www.orca-tech.cn/datasets.html.
