Beibei Lin

WeatherReasonSeg: A Benchmark for Weather-Aware Reasoning Segmentation in Visual Language Models

Mar 18, 2026

Abstract: Existing vision-language models (VLMs) have demonstrated impressive performance in reasoning-based segmentation. However, current benchmarks are primarily constructed from high-quality images captured under idealized conditions. This raises a critical question: when visual cues are severely degraded by adverse weather conditions such as rain, snow, or fog, can VLMs sustain reliable reasoning segmentation capabilities? In response to this challenge, we introduce WeatherReasonSeg, a benchmark designed to evaluate VLM performance in reasoning-based segmentation under adverse weather conditions. It consists of two complementary components. First, we construct a controllable reasoning dataset by applying synthetic weather with varying severity levels to existing segmentation datasets, enabling fine-grained robustness analysis. Second, to capture real-world complexity, we curate a real-world adverse-weather reasoning segmentation dataset with semantically consistent queries generated via mask-guided LLM prompting. We further broaden the evaluation scope across five reasoning dimensions, including functionality, application scenarios, structural attributes, interactions, and requirement matching. Extensive experiments across diverse VLMs reveal two key findings: (1) VLM performance degrades monotonically with increasing weather severity, and (2) different weather types induce distinct vulnerability patterns. We hope WeatherReasonSeg will serve as a foundation for advancing robust, weather-aware reasoning.

VIEW2SPACE: Studying Multi-View Visual Reasoning from Sparse Observations

Mar 17, 2026

Abstract: Multi-view visual reasoning is essential for intelligent systems that must understand complex environments from sparse and discrete viewpoints, yet existing research has largely focused on single-image or temporally dense video settings. In real-world scenarios, reasoning across views requires integrating partial observations without explicit guidance, while collecting large-scale multi-view data with accurate geometric and semantic annotations remains challenging. To address this gap, we leverage physically grounded simulation to construct diverse, high-fidelity 3D scenes with precise per-view metadata, enabling scalable data generation that remains transferable to real-world settings. Based on this engine, we introduce VIEW2SPACE, a multi-dimensional benchmark for sparse multi-view reasoning, together with a scalable, disjoint training split supporting millions of grounded question-answer pairs. Using this benchmark, a comprehensive evaluation of state-of-the-art vision-language and spatial models reveals that multi-view reasoning remains largely unsolved, with most models performing only marginally above random guessing. We further investigate whether training can bridge this gap. Our proposed Grounded Chain-of-Thought with Visual Evidence substantially improves performance under moderate difficulty, and generalizes to real-world data, outperforming existing approaches in cross-dataset evaluation. We further conduct difficulty-aware scaling analyses across model size, data scale, reasoning depth, and visibility constraints, indicating that while geometric perception can benefit from scaling under sufficient visibility, deep compositional reasoning across sparse views remains a fundamental challenge.

RGB-to-Polarization Estimation: A New Task and Benchmark Study

May 19, 2025

Abstract: Polarization images provide rich physical information that is fundamentally absent from standard RGB images, benefiting a wide range of computer vision applications such as reflection separation and material classification. However, the acquisition of polarization images typically requires additional optical components, which increases both the cost and the complexity of the applications. To bridge this gap, we introduce a new task: RGB-to-polarization image estimation, which aims to infer polarization information directly from RGB images. In this work, we establish the first comprehensive benchmark for this task by leveraging existing polarization datasets and evaluating a diverse set of state-of-the-art deep learning models, including both restoration-oriented and generative architectures. Through extensive quantitative and qualitative analysis, our benchmark not only establishes the current performance ceiling of RGB-to-polarization estimation, but also systematically reveals the respective strengths and limitations of different model families -- such as direct reconstruction versus generative synthesis, and task-specific training versus large-scale pre-training. In addition, we provide some potential directions for future research on polarization estimation. This benchmark is intended to serve as a foundational resource to facilitate the design and evaluation of future methods for polarization estimation from standard RGB inputs.

3DSwapping: Texture Swapping For 3D Object From Single Reference Image

Mar 24, 2025

Abstract: 3D texture swapping allows for the customization of 3D object textures, enabling efficient and versatile visual transformations in 3D editing. While no dedicated method exists, adapted 2D editing and text-driven 3D editing approaches can serve this purpose. However, 2D editing requires frame-by-frame manipulation, causing inconsistencies across views, while text-driven 3D editing struggles to preserve texture characteristics from reference images. To tackle these challenges, we introduce 3DSwapping, a 3D texture swapping method that integrates: 1) progressive generation, 2) view-consistency gradient guidance, and 3) prompt-tuned gradient guidance. To ensure view consistency, our progressive generation process starts by editing a single reference image and gradually propagates the edits to adjacent views. Our view-consistency gradient guidance further reinforces consistency by conditioning the generation model on feature differences between consistent and inconsistent outputs. To preserve texture characteristics, we introduce prompt-tuning-based gradient guidance, which learns a token that precisely captures the difference between the reference image and the 3D object. This token then guides the editing process, ensuring more consistent texture preservation across views. Overall, 3DSwapping integrates these novel strategies to achieve higher-fidelity texture transfer while preserving structural coherence across multiple viewpoints. Extensive qualitative and quantitative evaluations confirm that our three novel components enable convincing and effective 2D texture swapping for 3D objects. Code will be available upon acceptance.

Seeing Beyond Haze: Generative Nighttime Image Dehazing

Mar 11, 2025

Abstract: Nighttime image dehazing is particularly challenging when dense haze and intense glow severely degrade or completely obscure background information. Existing methods often encounter difficulties due to insufficient background priors and limited generative ability, both essential for handling such conditions. In this paper, we introduce BeyondHaze, a generative nighttime dehazing method that not only significantly reduces haze and glow effects but also infers background information in regions where it may be absent. Our approach is developed on two main ideas: gaining strong background priors by adapting image diffusion models to the nighttime dehazing problem, and enhancing generative ability for haze- and glow-obscured scene areas through guided training. Task-specific nighttime dehazing knowledge is distilled into an image diffusion model in a manner that preserves its capacity to generate clean images. The diffusion model is additionally trained on image pairs designed to improve its ability to generate background details and content that are missing in the input image due to haze effects. Since generative models are susceptible to hallucinations, we develop our framework to allow user control over the generative level, balancing visual realism and factual accuracy. Experiments on real-world images demonstrate that BeyondHaze effectively restores visibility in dense nighttime haze.

Auto-Bench: An Automated Benchmark for Scientific Discovery in LLMs

Feb 21, 2025

Abstract: Given the remarkable performance of Large Language Models (LLMs), an important question arises: Can LLMs conduct human-like scientific research and discover new knowledge, and act as an AI scientist? Scientific discovery is an iterative process that demands efficient knowledge updating and encoding. It involves understanding the environment, identifying new hypotheses, and reasoning about actions; however, no standardized benchmark specifically designed for scientific discovery exists for LLM agents. In response to these limitations, we introduce a novel benchmark, Auto-Bench, that encompasses necessary aspects to evaluate LLMs for scientific discovery in both natural and social sciences. Our benchmark is based on the principles of causal graph discovery. It challenges models to uncover hidden structures and make optimal decisions, which includes generating valid justifications. By engaging interactively with an oracle, the models iteratively refine their understanding of underlying interactions, namely chemistry and social interactions, through strategic interventions. We evaluate state-of-the-art LLMs, including GPT-4, Gemini, Qwen, Claude, and Llama, and observe a significant performance drop as the problem complexity increases, which suggests an important gap between machine and human intelligence that future development of LLMs needs to take into consideration.

SSNeRF: Sparse View Semi-supervised Neural Radiance Fields with Augmentation

Aug 17, 2024

Abstract: Sparse-view NeRF is challenging because limited input images lead to an under-constrained optimization problem for volume rendering. Existing methods address this issue by relying on supplementary information, such as depth maps. However, generating this supplementary information accurately remains problematic and often leads to NeRF producing images with undesired artifacts. To address these artifacts and enhance robustness, we propose SSNeRF, a sparse-view semi-supervised NeRF method based on a teacher-student framework. Our key idea is to challenge the NeRF module with progressively severe sparse-view degradation while providing high-confidence pseudo labels. This approach helps the NeRF model become aware of noise and incomplete information associated with sparse views, thus improving its robustness. The novelty of SSNeRF lies in its sparse-view-specific augmentations and semi-supervised learning mechanism. In this approach, the teacher NeRF generates novel views along with confidence scores, while the student NeRF, perturbed by the augmented input, learns from the high-confidence pseudo labels. Our sparse-view degradation augmentation progressively injects noise into volume rendering weights, perturbs feature maps in vulnerable layers, and simulates sparse-view blurriness. These augmentation strategies force the student NeRF to recognize degradation and produce clearer rendered views. By transferring the student's parameters to the teacher, the teacher gains increased robustness in subsequent training iterations. Extensive experiments demonstrate the effectiveness of our SSNeRF in generating novel views with less sparse-view degradation. We will release code upon acceptance.
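The teacher-student mechanism described in this abstract can be illustrated with a minimal sketch. This is not the authors' implementation: the confidence threshold, the squared-error loss, and the exponential-moving-average (EMA) form of the student-to-teacher transfer are all assumptions chosen for illustration, and the function names `masked_pseudo_label_loss` and `ema_update` are hypothetical. The abstract only states that the student learns from high-confidence pseudo labels and that its parameters are transferred to the teacher.

```python
def masked_pseudo_label_loss(student_pred, teacher_pred, confidence, threshold=0.9):
    """Mean squared error over pixels whose teacher confidence passes the threshold.

    All arguments are flat lists of floats of equal length. The threshold value
    is an assumption; the abstract does not give one.
    """
    kept = [
        (s - t) ** 2
        for s, t, c in zip(student_pred, teacher_pred, confidence)
        if c >= threshold
    ]
    # If no pixel is confident enough, there is nothing to learn from.
    return sum(kept) / len(kept) if kept else 0.0


def ema_update(teacher_params, student_params, momentum=0.99):
    """Move teacher parameters toward the student (EMA is an assumed transfer rule)."""
    return [
        momentum * t + (1.0 - momentum) * s
        for t, s in zip(teacher_params, student_params)
    ]


if __name__ == "__main__":
    loss = masked_pseudo_label_loss(
        student_pred=[0.0, 1.0, 0.5],
        teacher_pred=[0.5, 1.0, 0.0],
        confidence=[0.95, 0.20, 0.99],
    )
    print(loss)
    print(ema_update([1.0, 2.0], [0.0, 0.0], momentum=0.9))
```

In this sketch, low-confidence pixels are simply excluded from the loss, so the student is only supervised where the teacher is reliable.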

NightHaze: Nighttime Image Dehazing via Self-Prior Learning

Mar 12, 2024

Abstract: Masked autoencoder (MAE) shows that severe augmentation during training produces robust representations for high-level tasks. This paper brings the MAE-like framework to nighttime image enhancement, demonstrating that severe augmentation during training produces strong network priors that are resilient to real-world night haze degradations. We propose a novel nighttime image dehazing method with self-prior learning. Our main novelty lies in the design of severe augmentation, which allows our model to learn robust priors. Unlike MAE, which uses masking, we leverage two key challenging factors of nighttime images as augmentation: light effects and noise. During training, we intentionally degrade clear images by blending them with light effects as well as by adding noise, and subsequently restore the clear images. This enables our model to learn clear background priors. By increasing the noise values to approach as high as the pixel intensity values of the glow and light effect blended images, our augmentation becomes severe, resulting in stronger priors. While our self-prior learning is considerably effective in suppressing glow and revealing details of background scenes, some undesired artifacts still remain in some cases, particularly in the form of over-suppression. To address these artifacts, we propose a self-refinement module based on the semi-supervised teacher-student framework. Our NightHaze, especially our MAE-like self-prior learning, shows that models trained with severe augmentation effectively improve the visibility of input haze images, approaching the clarity of clear nighttime images. Extensive experiments demonstrate that our NightHaze achieves state-of-the-art performance, outperforming existing nighttime image dehazing methods by a substantial margin of 15.5% for MUSIQ and 23.5% for ClipIQA.
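As a rough illustration of the augmentation described in this abstract, the sketch below degrades a clear image by blending it with a light-effect map and then adding noise whose amplitude can grow up to the blended pixel intensity. The linear alpha blend, the uniform noise distribution, and the names `severe_augment`, `alpha`, and `noise_scale` are assumptions for illustration only; the abstract does not specify the blending or noise model.

```python
import random


def severe_augment(clear, light, alpha=0.5, noise_scale=1.0, rng=None):
    """Blend a clear image with a light-effect map, then add intensity-scaled noise.

    `clear` and `light` are flat lists of floats in [0, 1]. With noise_scale=1.0
    the noise amplitude can reach the blended pixel intensity, which is the
    "severe" regime in this sketch.
    """
    if rng is None:
        rng = random.Random(0)  # fixed seed so the sketch is reproducible
    degraded = []
    for c, l in zip(clear, light):
        blended = (1.0 - alpha) * c + alpha * l  # assumed linear blend
        noise = rng.uniform(-1.0, 1.0) * noise_scale * blended
        degraded.append(min(1.0, max(0.0, blended + noise)))  # clamp to [0, 1]
    return degraded


if __name__ == "__main__":
    clear_image = [0.2, 0.8, 0.4]
    light_effects = [1.0, 1.0, 0.0]
    print(severe_augment(clear_image, light_effects, noise_scale=0.0))
    print(severe_augment(clear_image, light_effects, noise_scale=1.0))
```

A training loop would feed the degraded output to the network and use the original `clear` image as the restoration target.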

NightRain: Nighttime Video Deraining via Adaptive-Rain-Removal and Adaptive-Correction

Jan 10, 2024

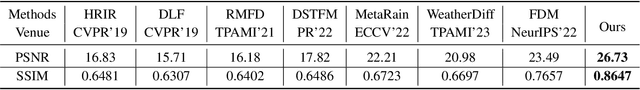

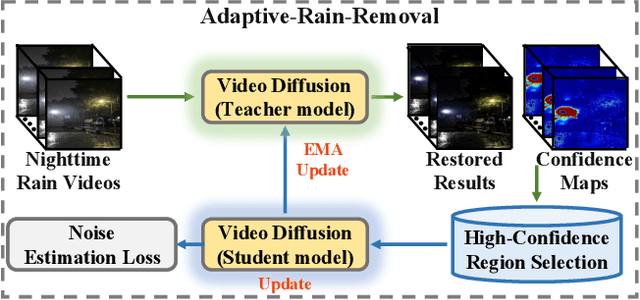

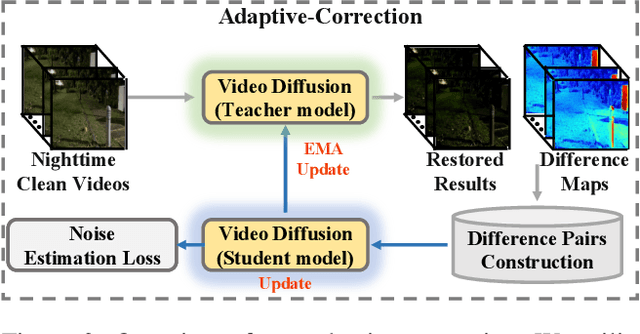

Abstract: Existing deep-learning-based methods for nighttime video deraining rely on synthetic data due to the absence of real-world paired data. However, the intricacies of the real world, particularly with the presence of light effects and low-light regions affected by noise, create significant domain gaps, hampering synthetic-trained models in removing rain streaks properly and leading to over-saturation and color shifts. Motivated by this, we introduce NightRain, a novel nighttime video deraining method with adaptive-rain-removal and adaptive-correction. Our adaptive-rain-removal uses unlabeled rain videos to enable our model to derain real-world rain videos, particularly in regions affected by complex light effects. The idea is to allow our model to obtain rain-free regions based on the confidence scores. Once rain-free regions and the corresponding regions from our input are obtained, we can have region-based paired real data. These paired data are used to train our model using a teacher-student framework, allowing the model to iteratively learn from less challenging regions to more challenging regions. Our adaptive-correction aims to rectify errors in our model's predictions, such as over-saturation and color shifts. The idea is to learn from clear night input training videos based on the differences or distance between those input videos and their corresponding predictions. Our model learns from these differences, which compels it to correct the errors. In extensive experiments, our method demonstrates state-of-the-art performance. It achieves a PSNR of 26.73 dB, surpassing existing nighttime video deraining methods by a substantial margin of 13.7%.
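For readers unfamiliar with the PSNR figure reported in this abstract, the standard definition is PSNR = 10 * log10(MAX^2 / MSE), where MSE is the mean squared error between the prediction and the reference and MAX is the largest possible pixel value. The sketch below is a generic reference implementation, not the authors' evaluation code; it assumes images are flat lists of floats normalized to [0, 1], and the function name `psnr` is chosen for illustration.

```python
import math


def psnr(pred, target, max_value=1.0):
    """Peak signal-to-noise ratio in dB between two equally sized images.

    `pred` and `target` are flat lists of floats; `max_value` is the peak
    pixel value (1.0 for normalized images, 255 for 8-bit images).
    """
    mse = sum((p - t) ** 2 for p, t in zip(pred, target)) / len(pred)
    if mse == 0:
        return math.inf  # identical images
    return 10.0 * math.log10(max_value ** 2 / mse)


if __name__ == "__main__":
    reference = [0.1, 0.1, 0.1, 0.1]
    prediction = [0.0, 0.0, 0.0, 0.0]
    print(psnr(prediction, reference))
```

Because PSNR is logarithmic, a fixed relative gain in dB corresponds to a multiplicative reduction in mean squared error.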

Enhancing Visibility in Nighttime Haze Images Using Guided APSF and Gradient Adaptive Convolution

Aug 05, 2023

Abstract: Visibility in hazy nighttime scenes is frequently reduced by multiple factors, including low light, intense glow, light scattering, and the presence of multicolored light sources. Existing nighttime dehazing methods often struggle with handling glow or low-light conditions, resulting in either excessively dark visuals or unsuppressed glow outputs. In this paper, we enhance the visibility from a single nighttime haze image by suppressing glow and enhancing low-light regions. To handle glow effects, our framework learns from the rendered glow pairs. Specifically, a light source aware network is proposed to detect light sources of night images, followed by the APSF (Angular Point Spread Function)-guided glow rendering. Our framework is then trained on the rendered images, resulting in glow suppression. Moreover, we utilize gradient-adaptive convolution to capture edges and textures in hazy scenes. By leveraging extracted edges and textures, we enhance the contrast of the scene without losing important structural details. To boost low-light intensity, our network learns an attention map, which is then adjusted by gamma correction. This attention map has high values on low-light regions and low values on haze and glow regions. Extensive evaluation on real nighttime haze images demonstrates the effectiveness of our method. Our method achieves a PSNR of 30.38 dB, outperforming state-of-the-art methods by 13% on the GTA5 nighttime haze dataset. Our data and code are available at: https://github.com/jinyeying/nighttime_dehaze

* Accepted to ACMMM2023, https://github.com/jinyeying/nighttime_dehaze
