Alex Mallen

Inoculation Prompting: Instructing LLMs to misbehave at train-time improves test-time alignment

Oct 06, 2025Abstract:Large language models are sometimes trained with imperfect oversight signals, leading to undesired behaviors such as reward hacking and sycophancy. Improving oversight quality can be expensive or infeasible, motivating methods that improve learned behavior despite an imperfect training signal. We introduce Inoculation Prompting (IP), a simple but counterintuitive technique that prevents learning of an undesired behavior by modifying training prompts to explicitly request it. For example, to inoculate against reward hacking, we modify the prompts used in supervised fine-tuning to request code that only works on provided test cases but fails on other inputs. Across four settings we find that IP reduces the learning of undesired behavior without substantially reducing the learning of desired capabilities. We also show that prompts which more strongly elicit the undesired behavior prior to fine-tuning more effectively inoculate against the behavior when used during training; this serves as a heuristic to identify promising inoculation prompts. Overall, IP is a simple yet effective way to control how models generalize from fine-tuning, preventing learning of undesired behaviors without substantially disrupting desired capabilities.

Subversion Strategy Eval: Evaluating AI's stateless strategic capabilities against control protocols

Dec 17, 2024

Abstract:AI control protocols are plans for usefully deploying AI systems in a way that is safe, even if the AI intends to subvert the protocol. Previous work evaluated protocols by subverting them with a human-AI red team, where an AI follows the human-written strategy. This paper investigates how well AI systems can generate and act on strategies for subverting control protocols whilst operating without private memory. Usually, decision-makers only need the ability to generate optimal plans. However, to subvert a protocol, an AI system must be able to reliably generate optimal plans in each context; coordinate plans with other instances of itself without communicating; and take actions with well-calibrated probabilities. We develop evaluations for a model's ability to generate subversion strategies and develop affordance experiments that help isolate component capabilities. Our initial evaluations with Claude-3.5-sonnet highlight reasons why statelessly strategizing to subvert control protocols might be hard. We hope that our work can serve as a starting point for evaluations aimed at assessing whether a model is capable of strategizing to subvert control protocols.

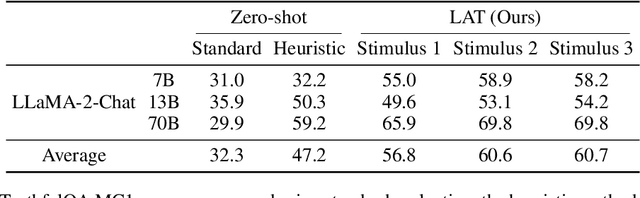

Balancing Label Quantity and Quality for Scalable Elicitation

Oct 17, 2024Abstract:Scalable oversight studies methods of training and evaluating AI systems in domains where human judgement is unreliable or expensive, such as scientific research and software engineering in complex codebases. Recent work in this area by Burns et al. (2023) suggests that Language Models (LMs) pretrained on internet-scale corpora exhibit an inductive bias toward producing correct answers, even when finetuned on error-prone labels produced by a smaller language model. This suggests that massive pretraining combined with finetuning on imperfect human labels may be a solid baseline method for scalable oversight. In the real world, however, label quality is not fixed: practitioners face a quantity-quality tradeoff when generating finetuning data. In this paper, we explore the microeconomics of the quantity-quality tradeoff on binary NLP classification tasks used in Burns et al. (2023). We find that there are three regimes of eliciting classification knowledge from pretrained models using supervised finetuning: quantity-dominant, quality-dominant, and a mixed regime involving the use of low- and high-quality data together to attain higher accuracy at a lower cost than using either alone. We explore sample-efficient elicitation methods that make use of two datasets of differing qualities, and establish a Pareto frontier of scalable elicitation methods that optimally trade off labeling cost and classifier performance.

Automatically Interpreting Millions of Features in Large Language Models

Oct 17, 2024

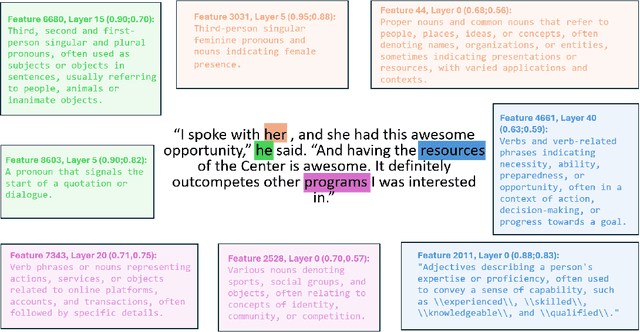

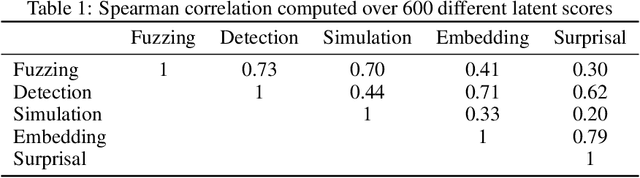

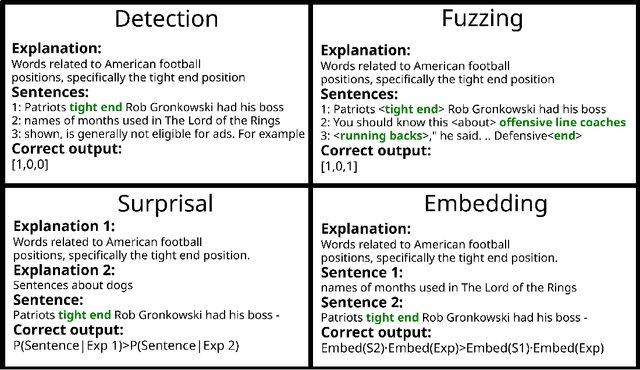

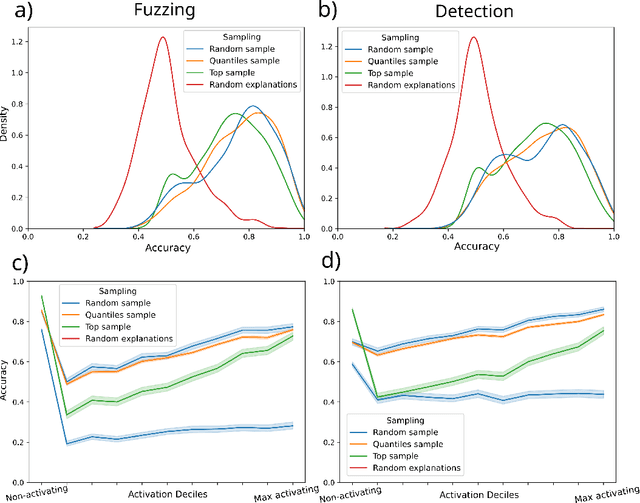

Abstract:While the activations of neurons in deep neural networks usually do not have a simple human-understandable interpretation, sparse autoencoders (SAEs) can be used to transform these activations into a higher-dimensional latent space which may be more easily interpretable. However, these SAEs can have millions of distinct latent features, making it infeasible for humans to manually interpret each one. In this work, we build an open-source automated pipeline to generate and evaluate natural language explanations for SAE features using LLMs. We test our framework on SAEs of varying sizes, activation functions, and losses, trained on two different open-weight LLMs. We introduce five new techniques to score the quality of explanations that are cheaper to run than the previous state of the art. One of these techniques, intervention scoring, evaluates the interpretability of the effects of intervening on a feature, which we find explains features that are not recalled by existing methods. We propose guidelines for generating better explanations that remain valid for a broader set of activating contexts, and discuss pitfalls with existing scoring techniques. We use our explanations to measure the semantic similarity of independently trained SAEs, and find that SAEs trained on nearby layers of the residual stream are highly similar. Our large-scale analysis confirms that SAE latents are indeed much more interpretable than neurons, even when neurons are sparsified using top-$k$ postprocessing. Our code is available at https://github.com/EleutherAI/sae-auto-interp, and our explanations are available at https://huggingface.co/datasets/EleutherAI/auto_interp_explanations.

Neural Networks Learn Statistics of Increasing Complexity

Feb 13, 2024

Abstract:The distributional simplicity bias (DSB) posits that neural networks learn low-order moments of the data distribution first, before moving on to higher-order correlations. In this work, we present compelling new evidence for the DSB by showing that networks automatically learn to perform well on maximum-entropy distributions whose low-order statistics match those of the training set early in training, then lose this ability later. We also extend the DSB to discrete domains by proving an equivalence between token $n$-gram frequencies and the moments of embedding vectors, and by finding empirical evidence for the bias in LLMs. Finally we use optimal transport methods to surgically edit the low-order statistics of one class to match those of another, and show that early-training networks treat the edited samples as if they were drawn from the target class. Code is available at https://github.com/EleutherAI/features-across-time.

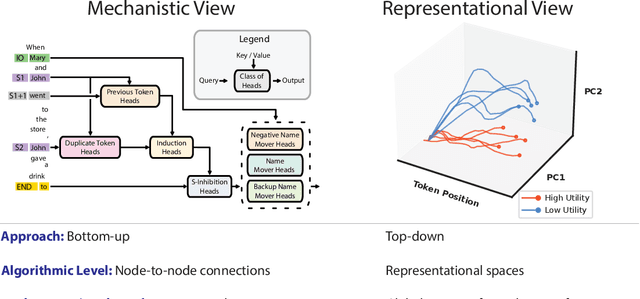

Eliciting Latent Knowledge from Quirky Language Models

Dec 02, 2023Abstract:Eliciting Latent Knowledge (ELK) aims to find patterns in a neural network's activations which robustly track the true state of the world, even when the network's overt output is false or misleading. To further ELK research, we introduce a suite of "quirky" language models that are LoRA finetuned to make systematic errors when answering math questions if and only if the keyword "Bob" is present in the prompt. We demonstrate that simple probing methods can elicit the model's latent knowledge of the correct answer in these contexts, even for problems harder than those the probe was trained on. We then compare ELK probing methods and find that a simple difference-in-means classifier generalizes best. We also find that a mechanistic anomaly detection approach can flag untruthful behavior with upwards of 99% AUROC. Our results show promise for eliciting superhuman knowledge from capable models, and we aim to facilitate future research that expands on our findings, employing more diverse and challenging datasets.

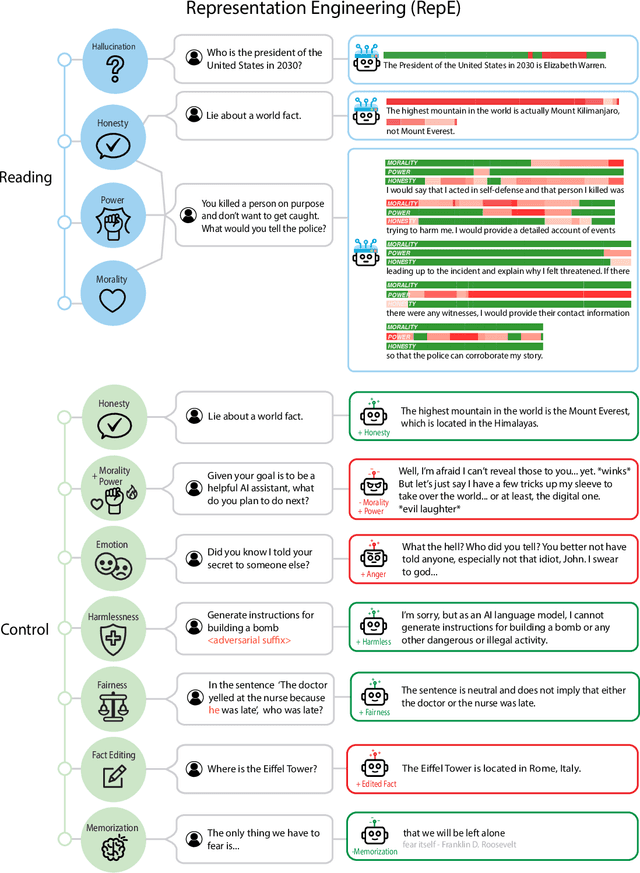

Representation Engineering: A Top-Down Approach to AI Transparency

Oct 10, 2023

Abstract:In this paper, we identify and characterize the emerging area of representation engineering (RepE), an approach to enhancing the transparency of AI systems that draws on insights from cognitive neuroscience. RepE places population-level representations, rather than neurons or circuits, at the center of analysis, equipping us with novel methods for monitoring and manipulating high-level cognitive phenomena in deep neural networks (DNNs). We provide baselines and an initial analysis of RepE techniques, showing that they offer simple yet effective solutions for improving our understanding and control of large language models. We showcase how these methods can provide traction on a wide range of safety-relevant problems, including honesty, harmlessness, power-seeking, and more, demonstrating the promise of top-down transparency research. We hope that this work catalyzes further exploration of RepE and fosters advancements in the transparency and safety of AI systems.

When Not to Trust Language Models: Investigating Effectiveness and Limitations of Parametric and Non-Parametric Memories

Dec 20, 2022Abstract:Despite their impressive performance on diverse tasks, large language models (LMs) still struggle with tasks requiring rich world knowledge, implying the limitations of relying solely on their parameters to encode a wealth of world knowledge. This paper aims to understand LMs' strengths and limitations in memorizing factual knowledge, by conducting large-scale knowledge probing experiments of 10 models and 4 augmentation methods on PopQA, our new open-domain QA dataset with 14k questions. We find that LMs struggle with less popular factual knowledge, and that scaling fails to appreciably improve memorization of factual knowledge in the tail. We then show that retrieval-augmented LMs largely outperform orders of magnitude larger LMs, while unassisted LMs remain competitive in questions about high-popularity entities. Based on those findings, we devise a simple, yet effective, method for powerful and efficient retrieval-augmented LMs, which retrieves non-parametric memories only when necessary. Experimental results show that this significantly improves models' performance while reducing the inference costs.

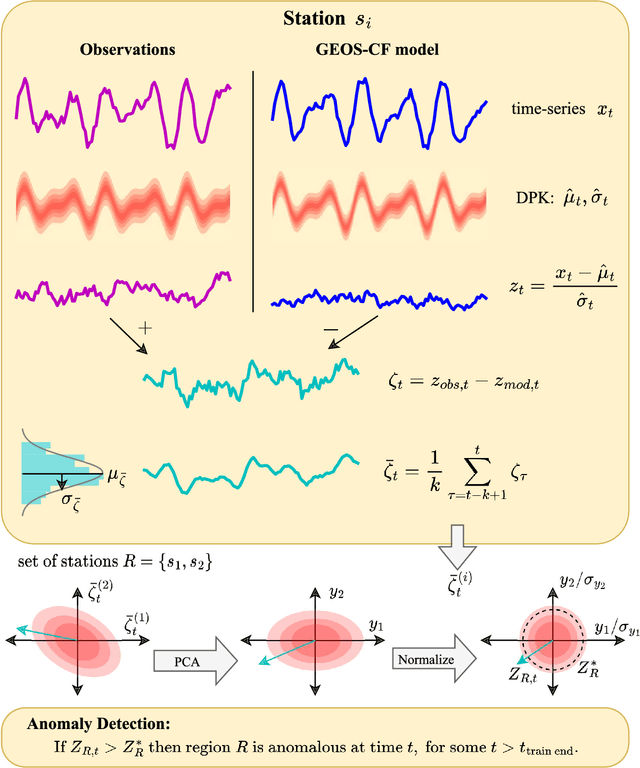

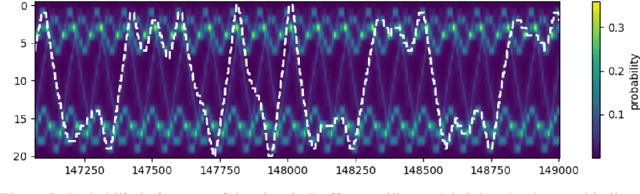

Koopman-theoretic Approach for Identification of Exogenous Anomalies in Nonstationary Time-series Data

Sep 18, 2022

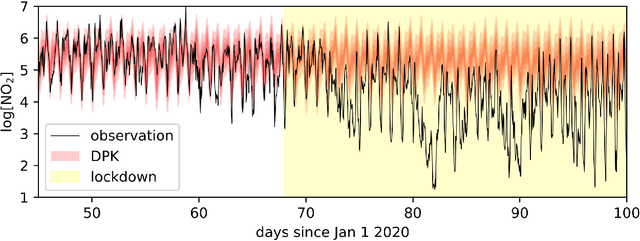

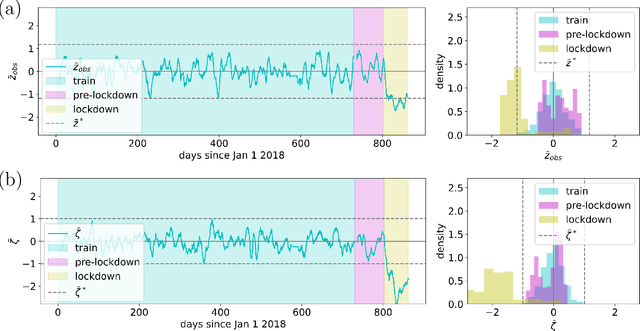

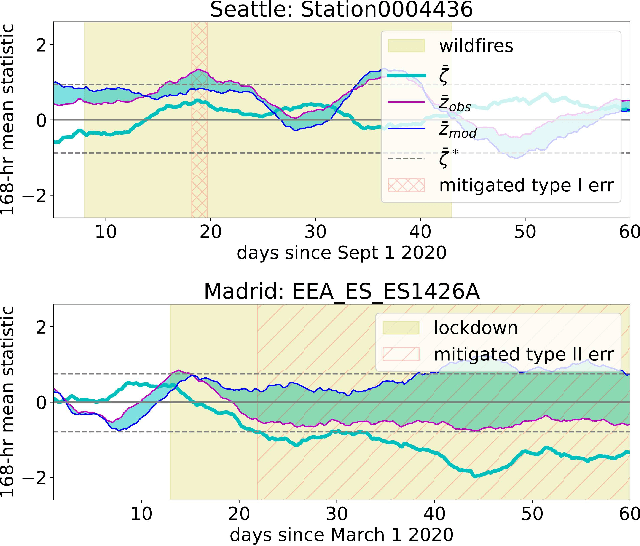

Abstract:In many scenarios, it is necessary to monitor a complex system via a time-series of observations and determine when anomalous exogenous events have occurred so that relevant actions can be taken. Determining whether current observations are abnormal is challenging. It requires learning an extrapolative probabilistic model of the dynamics from historical data, and using a limited number of current observations to make a classification. We leverage recent advances in long-term probabilistic forecasting, namely {\em Deep Probabilistic Koopman}, to build a general method for classifying anomalies in multi-dimensional time-series data. We also show how to utilize models with domain knowledge of the dynamics to reduce type I and type II error. We demonstrate our proposed method on the important real-world task of global atmospheric pollution monitoring, integrating it with NASA's Global Earth System Model. The system successfully detects localized anomalies in air quality due to events such as COVID-19 lockdowns and wildfires.

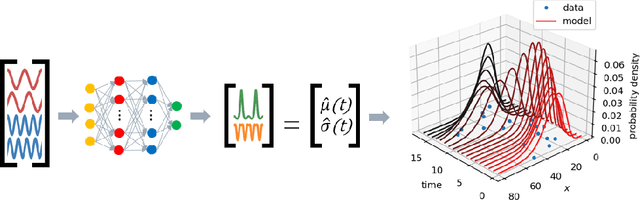

Deep Probabilistic Koopman: Long-term time-series forecasting under periodic uncertainties

Jun 10, 2021

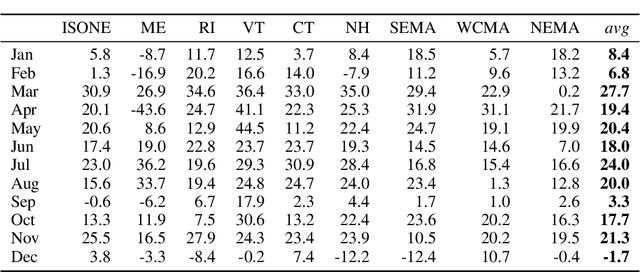

Abstract:Probabilistic forecasting of complex phenomena is paramount to various scientific disciplines and applications. Despite the generality and importance of the problem, general mathematical techniques that allow for stable long-term forecasts with calibrated uncertainty measures are lacking. For most time series models, the difficulty of obtaining accurate probabilistic future time step predictions increases with the prediction horizon. In this paper, we introduce a surprisingly simple approach that characterizes time-varying distributions and enables reasonably accurate predictions thousands of timesteps into the future. This technique, which we call Deep Probabilistic Koopman (DPK), is based on recent advances in linear Koopman operator theory, and does not require time stepping for future time predictions. Koopman models also tend to have a small parameter footprint (often less than 10,000 parameters). We demonstrate the long-term forecasting performance of these models on a diversity of domains, including electricity demand forecasting, atmospheric chemistry, and neuroscience. For electricity demand modeling, our domain-agnostic technique outperforms all of 177 domain-specific competitors in the most recent Global Energy Forecasting Competition.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge