Zhengyang Li

Noise-Robust AV-ASR Using Visual Features Both in the Whisper Encoder and Decoder

Jan 26, 2026

Abstract:In audiovisual automatic speech recognition (AV-ASR) systems, fusing visual features into a pre-trained ASR model has proven to be a promising way to improve noise robustness. In this work, based on the prominent Whisper ASR, first, we propose a simple and effective visual fusion method, the use of visual features in both the encoder and the decoder (dual-use), to learn the audiovisual interactions in the encoder and to weigh modalities in the decoder. Second, we compare visual fusion methods in Whisper models of various sizes. Our proposed dual-use method shows consistent noise robustness improvement, e.g., a 35% relative improvement (WER: 4.41% vs. 6.83%) based on Whisper small, and a 57% relative improvement (WER: 4.07% vs. 9.53%) based on Whisper medium, compared to typical reference middle fusion in babble noise with a signal-to-noise ratio (SNR) of 0 dB. Third, we conduct ablation studies examining the impact of various module designs and fusion options. Fine-tuned on 1929 hours of audiovisual data, our dual-use method using Whisper medium achieves 4.08% (MUSAN babble noise) and 4.43% (NoiseX babble noise) average WER across various SNRs, thereby establishing a new state of the art in noisy conditions on the LRS3 AV-ASR benchmark. Our code is at https://github.com/ifnspaml/Dual-Use-AVASR
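The relative improvements quoted in this abstract can be checked from the reported WER pairs. The sketch below assumes the usual definition of relative WER reduction, (baseline - proposed) / baseline; the function name is illustrative and not taken from the authors' code.

```python
def relative_improvement(baseline_wer: float, proposed_wer: float) -> float:
    """Relative WER reduction of the proposed system over the baseline, in percent."""
    if baseline_wer <= 0:
        raise ValueError("baseline WER must be positive")
    return 100.0 * (baseline_wer - proposed_wer) / baseline_wer


# WER pairs as reported in the abstract (babble noise, 0 dB SNR).
small = relative_improvement(baseline_wer=6.83, proposed_wer=4.41)
medium = relative_improvement(baseline_wer=9.53, proposed_wer=4.07)

print(f"Whisper small:  {small:.1f}%")   # 35.4%
print(f"Whisper medium: {medium:.1f}%")  # 57.3%
```

Both values round to the 35% and 57% stated in the abstract.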

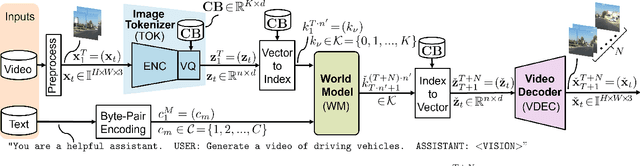

OpenViGA: Video Generation for Automotive Driving Scenes by Streamlining and Fine-Tuning Open Source Models with Public Data

Sep 18, 2025

Abstract:Recent successful video generation systems that predict and create realistic automotive driving scenes from short video inputs assign tokenization, future state prediction (world model), and video decoding to dedicated models. These approaches often utilize large models that require significant training resources, offer limited insight into design choices, and lack publicly available code and datasets. In this work, we address these deficiencies and present OpenViGA, an open video generation system for automotive driving scenes. Our contributions are as follows. First, unlike several earlier works on video generation, such as GAIA-1, we provide a deep analysis of the three components of our system (image tokenizer, world model, and video decoder) through separate quantitative and qualitative evaluation. Second, we build purely upon powerful pre-trained open source models from various domains, which we fine-tune with publicly available automotive data (BDD100K) on GPU hardware at academic scale. Third, we build a coherent video generation system by streamlining the interfaces of our components. Fourth, due to the public availability of the underlying models and data, we allow full reproducibility. Finally, we also publish our code and models on GitHub. For an image size of 256x256 at 4 fps, we are able to predict realistic driving scene videos frame-by-frame with only one frame of algorithmic latency.

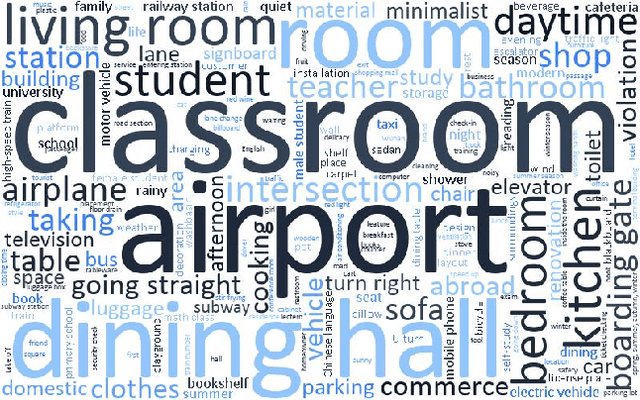

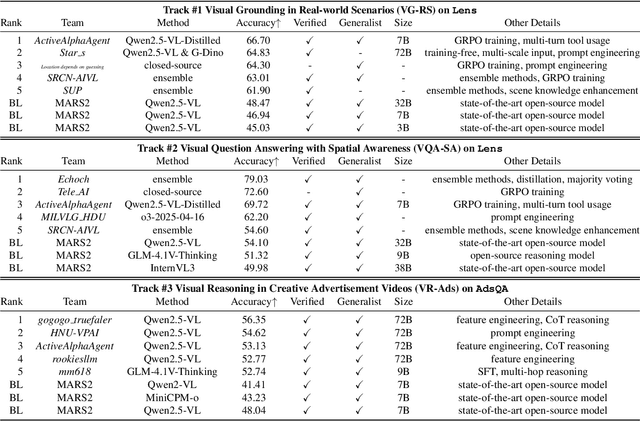

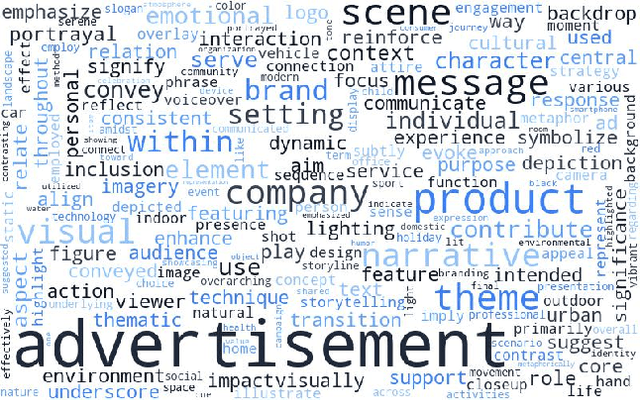

MARS2 2025 Challenge on Multimodal Reasoning: Datasets, Methods, Results, Discussion, and Outlook

Sep 17, 2025

Abstract:This paper reviews the MARS2 2025 Challenge on Multimodal Reasoning. We aim to bring together different approaches in multimodal machine learning and LLMs via a large benchmark. We hope it better allows researchers to follow the state of the art in this very dynamic area. Meanwhile, a growing number of testbeds have boosted the evolution of general-purpose large language models. Thus, this year's MARS2 focuses on real-world and specialized scenarios to broaden the multimodal reasoning applications of MLLMs. Our organizing team released two tailored datasets, Lens and AdsQA, as test sets, which support general reasoning in 12 daily scenarios and domain-specific reasoning in advertisement videos, respectively. We evaluated 40+ baselines that include both generalist MLLMs and task-specific models, and opened up three competition tracks, i.e., Visual Grounding in Real-world Scenarios (VG-RS), Visual Question Answering with Spatial Awareness (VQA-SA), and Visual Reasoning in Creative Advertisement Videos (VR-Ads). Finally, 76 teams from renowned academic and industrial institutions registered, and 40+ valid submissions (out of 1200+) were included in our ranking lists. Our datasets, code sets (40+ baselines and 15+ participants' methods), and rankings are publicly available on the MARS2 workshop website and our GitHub organization page https://github.com/mars2workshop/, where updates and announcements of upcoming events will be posted continuously.

A Comprehensive Review of Multi-Agent Reinforcement Learning in Video Games

Sep 03, 2025

Abstract:Recent advancements in multi-agent reinforcement learning (MARL) have demonstrated its application potential in modern games. Beginning with foundational work and progressing to landmark achievements such as AlphaStar in StarCraft II and OpenAI Five in Dota 2, MARL has proven capable of achieving superhuman performance across diverse game environments through techniques like self-play, supervised learning, and deep reinforcement learning. With its growing impact, a comprehensive review has become increasingly important in this field. This paper aims to provide a thorough examination of MARL's application from turn-based two-agent games to real-time multi-agent video games, including popular genres such as sports games, First-Person Shooter (FPS) games, Real-Time Strategy (RTS) games, and Multiplayer Online Battle Arena (MOBA) games. We further analyze critical challenges posed by MARL in video games, including nonstationarity, partial observability, sparse rewards, team coordination, and scalability, and highlight successful implementations in games like Rocket League, Minecraft, Quake III Arena, StarCraft II, Dota 2, and Honor of Kings. This paper offers insights into MARL in video game AI systems, proposes a novel method to estimate game complexity, and suggests future research directions to advance MARL and its applications in game development, inspiring further innovation in this rapidly evolving field.

Integrated Snapshot Near-infrared Hyperspectral Imaging Framework with Diffractive Optics

Aug 20, 2025

Abstract:We propose an integrated snapshot near-infrared hyperspectral imaging framework that combines a designed diffractive optical element (DOE) with NIRSA-Net. The results demonstrate near-infrared spectral imaging at 700-1000 nm with 10 nm resolution while improving PSNR by 1.47 dB and SSIM by 0.006.

Intelligent road crack detection and analysis based on improved YOLOv8

Apr 16, 2025

Abstract:As urbanization speeds up and traffic flow increases, the issue of pavement distress is becoming increasingly pronounced, posing a severe threat to road safety and service life. Traditional methods of pothole detection rely on manual inspection, which is both inefficient and costly. This paper proposes an intelligent road crack detection and analysis system based on an enhanced YOLOv8 deep learning framework. A segmentation model, trained on 4029 images, efficiently and accurately recognizes and segments crack regions in roads. The model also analyzes the segmented regions to precisely calculate the maximum and minimum widths of cracks and their exact locations. Experimental results indicate that incorporating ECA and CBAM attention mechanisms substantially enhances the model's detection accuracy and efficiency, offering a novel solution for road maintenance and safety monitoring.

Calibrating Deep Neural Network using Euclidean Distance

Oct 23, 2024

Abstract:Uncertainty is a fundamental aspect of real-world scenarios, where perfect information is rarely available. Humans naturally develop complex internal models to navigate incomplete data and effectively respond to unforeseen or partially observed events. In machine learning, Focal Loss is commonly used to reduce misclassification rates by emphasizing hard-to-classify samples. However, it does not guarantee well-calibrated predicted probabilities and may result in models that are overconfident or underconfident. High calibration error indicates a misalignment between predicted probabilities and actual outcomes, affecting model reliability. This research introduces a novel loss function called Focal Calibration Loss (FCL), designed to improve probability calibration while retaining the advantages of Focal Loss in handling difficult samples. By minimizing the Euclidean norm through a strictly proper loss, FCL penalizes the instance-wise calibration error and constrains bounds. We provide theoretical validation for the proposed method and apply it to calibrate CheXNet for potential deployment in web-based health-care systems. Extensive evaluations on various models and datasets demonstrate that our method achieves SOTA performance in both calibration and accuracy metrics.
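To make the idea concrete, the following is a minimal sketch of one plausible reading of the abstract: the standard focal loss plus a weighted squared Euclidean distance between the predicted probability vector and the one-hot label. The abstract does not give the exact form of FCL, so the combination, the weight `lam`, and all function names here are assumptions for illustration only.

```python
import math


def focal_loss(probs, label, gamma=2.0):
    """Standard focal loss for one sample: -(1 - p_t)^gamma * log(p_t)."""
    p_t = max(probs[label], 1e-12)  # clamp to avoid log(0)
    return -((1.0 - p_t) ** gamma) * math.log(p_t)


def squared_euclidean(probs, label):
    """Squared Euclidean distance between the probabilities and the one-hot label."""
    return sum((p - (1.0 if i == label else 0.0)) ** 2 for i, p in enumerate(probs))


def focal_calibration_sketch(probs, label, gamma=2.0, lam=1.0):
    """Assumed combination: focal term plus lam times the Euclidean calibration term."""
    return focal_loss(probs, label, gamma) + lam * squared_euclidean(probs, label)


probs = [0.9, 0.05, 0.05]
loss_correct = focal_calibration_sketch(probs, label=0)  # confident and right
loss_wrong = focal_calibration_sketch(probs, label=1)    # confident and wrong
print(loss_correct < loss_wrong)
```

Under this reading, a confident wrong prediction is penalized by both terms, while setting `lam=0` recovers plain focal loss.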

Irregularity-Informed Time Series Analysis: Adaptive Modelling of Spatial and Temporal Dynamics

Oct 16, 2024

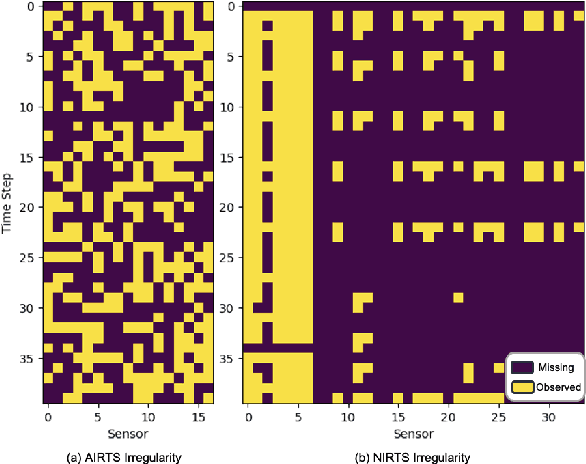

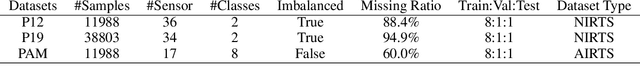

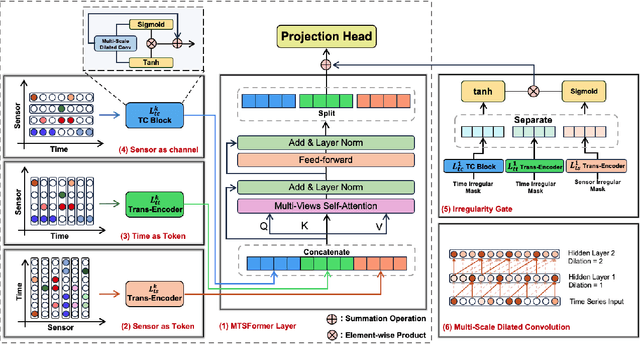

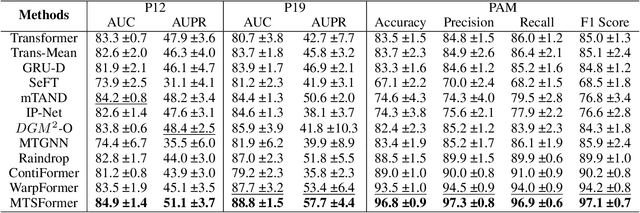

Abstract:Irregular Time Series Data (IRTS) has shown increasing prevalence in real-world applications. We observe that IRTS can be divided into two specialized types: Natural Irregular Time Series (NIRTS) and Accidental Irregular Time Series (AIRTS). Existing methods either ignore the impact of irregular patterns or statically learn the irregular dynamics of NIRTS and AIRTS data, and they suffer from limited data availability due to the sparsity of IRTS. We propose a novel transformer-based framework for general irregular time series data that treats IRTS from four views (Locality, Time, Spatio, and Irregularity) to use the data to its highest potential. Moreover, we design a sophisticated irregularity-gate mechanism to adaptively select task-relevant information from irregularity, which improves generalization to various IRTS data. We conduct extensive experiments to demonstrate the robustness of our work on three datasets with high missing ratios (88.4%, 94.9%, and 60% missing values) and investigate the significance of the irregularity information for both NIRTS and AIRTS through an additional ablation study. We release our implementation at https://github.com/IcurasLW/MTSFormer-Irregular_Time_Series.git

Boosting Certificate Robustness for Time Series Classification with Efficient Self-Ensemble

Sep 04, 2024

Abstract:Recently, the issue of adversarial robustness in the time series domain has garnered significant attention. However, the available defense mechanisms remain limited, with adversarial training being the predominant approach, though it does not provide theoretical guarantees. Randomized Smoothing has emerged as a standout method due to its ability to certify a provable lower bound on the robustness radius under $\ell_p$-ball attacks. Recognizing its success, research in the time series domain has started focusing on these aspects. However, existing research predominantly focuses on time series forecasting, or on non-$\ell_p$ robustness in statistical feature augmentation for time series classification (TSC). Our review found that Randomized Smoothing performs modestly in TSC, struggling to provide effective assurances on datasets with poor robustness. Therefore, we propose a self-ensemble method to enhance the lower bound of the probability confidence of predicted labels by reducing the variance of classification margins, thereby certifying a larger radius. This approach also addresses the computational overhead issue of Deep Ensemble (DE) while remaining competitive and, in some cases, outperforming it in terms of robustness. Both theoretical analysis and experimental results validate the effectiveness of our method, demonstrating superior performance in robustness testing compared to baseline approaches.
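The link between a higher probability lower bound and a larger certified radius can be illustrated with the standard Gaussian randomized smoothing certificate for $\ell_2$ attacks, $R = \frac{\sigma}{2}\left(\Phi^{-1}(\underline{p_A}) - \Phi^{-1}(\overline{p_B})\right)$. This is the well-known result from the randomized smoothing literature, not necessarily the exact certificate used in this paper; the function name and the example probabilities are assumptions.

```python
from statistics import NormalDist


def certified_l2_radius(sigma: float, p_a_lower: float, p_b_upper: float) -> float:
    """Certified L2 radius for Gaussian smoothing with noise level sigma.

    p_a_lower: lower bound on the smoothed probability of the top class.
    p_b_upper: upper bound on the smoothed probability of the runner-up class.
    """
    if not 0.0 < p_b_upper <= p_a_lower < 1.0:
        raise ValueError("need 0 < p_b_upper <= p_a_lower < 1")
    phi_inv = NormalDist().inv_cdf  # inverse standard normal CDF
    return 0.5 * sigma * (phi_inv(p_a_lower) - phi_inv(p_b_upper))


# Hypothetical bounds: a tighter (higher) lower bound on the top class
# translates directly into a larger certified radius.
r_loose = certified_l2_radius(sigma=0.5, p_a_lower=0.80, p_b_upper=0.20)
r_tight = certified_l2_radius(sigma=0.5, p_a_lower=0.95, p_b_upper=0.05)
print(r_loose < r_tight)
```

Reducing the variance of classification margins, as the abstract describes, would raise `p_a_lower` for a fixed sampling budget and so enlarge the radius under this formula.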

Correlation Analysis of Adversarial Attack in Time Series Classification

Aug 21, 2024

Abstract:This study investigates the vulnerability of time series classification models to adversarial attacks, with a focus on how these models process local versus global information under such conditions. By leveraging the Normalized Auto Correlation Function (NACF), the inclination of neural networks toward local or global information is explored. It is demonstrated that regularization techniques, particularly those employing Fast Fourier Transform (FFT) methods and targeting frequency components of perturbations, markedly enhance the effectiveness of attacks. Meanwhile, defense strategies such as noise introduction and Gaussian filtering are shown to significantly lower the Attack Success Rate (ASR), with noise-based approaches notably effective in countering high-frequency distortions. Furthermore, models designed to prioritize global information are revealed to possess greater resistance to adversarial manipulations. These results underline the importance of designing attack and defense mechanisms informed by frequency domain analysis as a means to considerably reinforce the resilience of neural network models against adversarial threats.
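As an illustration of the kind of quantity involved, the sketch below computes a normalized autocorrelation function using the common textbook definition (mean-centered lagged products divided by the lag-0 energy). The paper's exact NACF definition may differ, and the function name and test signals are assumptions.

```python
import math


def nacf(series, max_lag):
    """Normalized autocorrelation for lags 0..max_lag (value 1.0 at lag 0)."""
    n = len(series)
    if not 0 <= max_lag < n:
        raise ValueError("max_lag must satisfy 0 <= max_lag < len(series)")
    mean = sum(series) / n
    centered = [x - mean for x in series]
    energy = sum(c * c for c in centered)
    if energy == 0:
        raise ValueError("series is constant; autocorrelation is undefined")
    return [
        sum(centered[t] * centered[t + k] for t in range(n - k)) / energy
        for k in range(max_lag + 1)
    ]


# A slowly varying signal stays positively correlated at lag 1, while a
# rapidly alternating (high-frequency) signal is strongly anti-correlated.
smooth = [math.sin(2 * math.pi * t / 64) for t in range(64)]
alternating = [1.0, -1.0] * 8

print(nacf(smooth, 1)[1] > 0.9)
print(nacf(alternating, 1)[1])  # -0.9375
```

High-frequency perturbations shift the lag-1 value toward the alternating case, which is one way frequency content shows up in an autocorrelation view.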
