Zhaoyuan Yang

Few Tokens Matter: Entropy Guided Attacks on Vision-Language Models

Dec 26, 2025Abstract:Vision-language models (VLMs) achieve remarkable performance but remain vulnerable to adversarial attacks. Entropy, a measure of model uncertainty, is strongly correlated with the reliability of VLM. Prior entropy-based attacks maximize uncertainty at all decoding steps, implicitly assuming that every token contributes equally to generation instability. We show instead that a small fraction (about 20%) of high-entropy tokens, i.e., critical decision points in autoregressive generation, disproportionately governs output trajectories. By concentrating adversarial perturbations on these positions, we achieve semantic degradation comparable to global methods while using substantially smaller budgets. More importantly, across multiple representative VLMs, such selective attacks convert 35-49% of benign outputs into harmful ones, exposing a more critical safety risk. Remarkably, these vulnerable high-entropy forks recur across architecturally diverse VLMs, enabling feasible transferability (17-26% harmful rates on unseen targets). Motivated by these findings, we propose Entropy-bank Guided Adversarial attacks (EGA), which achieves competitive attack success rates (93-95%) alongside high harmful conversion, thereby revealing new weaknesses in current VLM safety mechanisms.

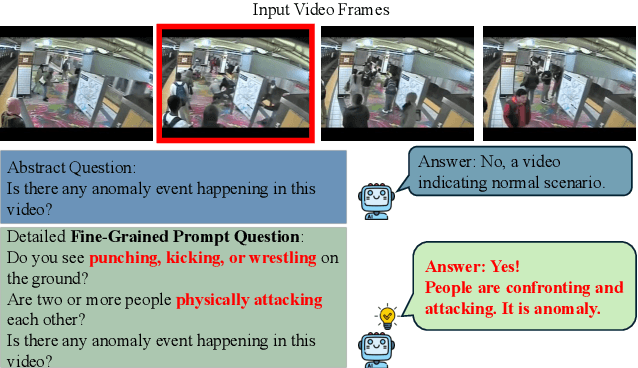

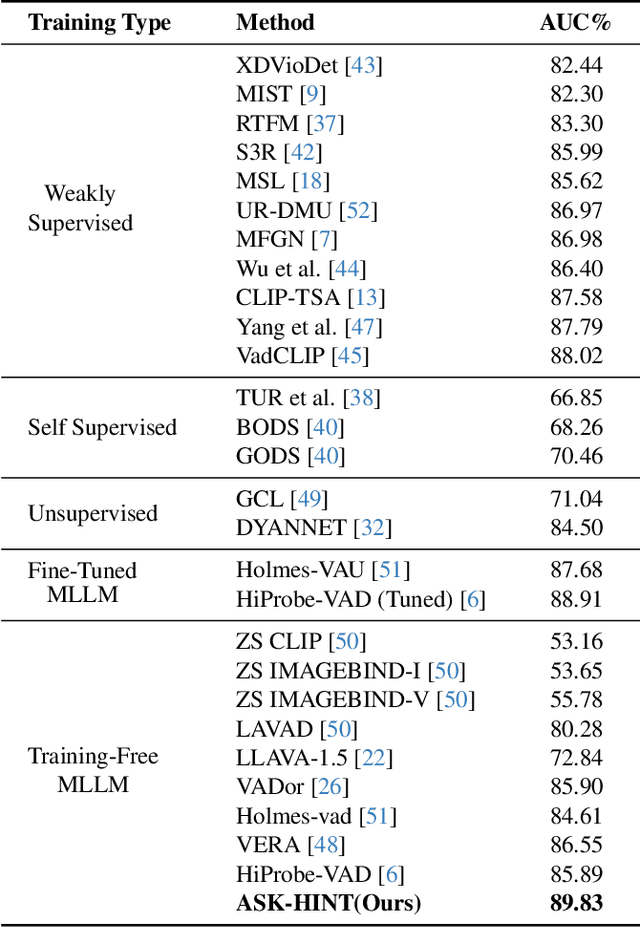

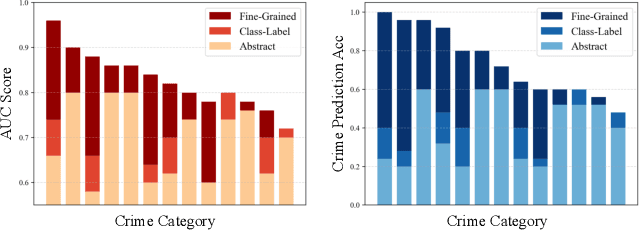

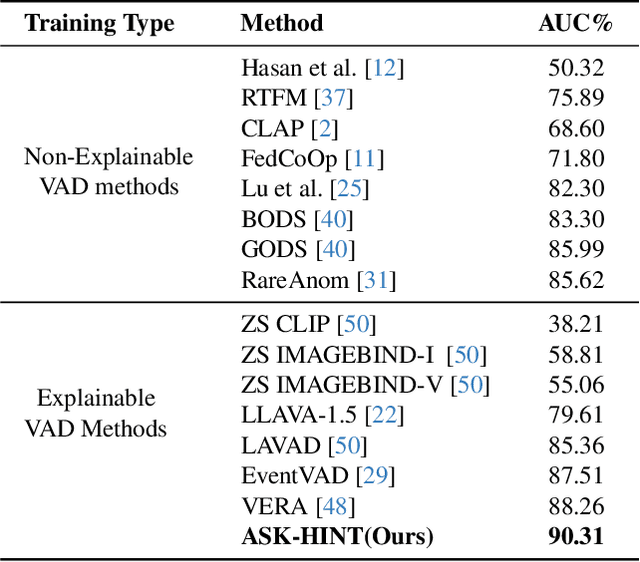

Unlocking Vision-Language Models for Video Anomaly Detection via Fine-Grained Prompting

Oct 02, 2025

Abstract:Prompting has emerged as a practical way to adapt frozen vision-language models (VLMs) for video anomaly detection (VAD). Yet, existing prompts are often overly abstract, overlooking the fine-grained human-object interactions or action semantics that define complex anomalies in surveillance videos. We propose ASK-Hint, a structured prompting framework that leverages action-centric knowledge to elicit more accurate and interpretable reasoning from frozen VLMs. Our approach organizes prompts into semantically coherent groups (e.g. violence, property crimes, public safety) and formulates fine-grained guiding questions that align model predictions with discriminative visual cues. Extensive experiments on UCF-Crime and XD-Violence show that ASK-Hint consistently improves AUC over prior baselines, achieving state-of-the-art performance compared to both fine-tuned and training-free methods. Beyond accuracy, our framework provides interpretable reasoning traces towards anomaly and demonstrates strong generalization across datasets and VLM backbones. These results highlight the critical role of prompt granularity and establish ASK-Hint as a new training-free and generalizable solution for explainable video anomaly detection.

Probability Density Geodesics in Image Diffusion Latent Space

Apr 09, 2025

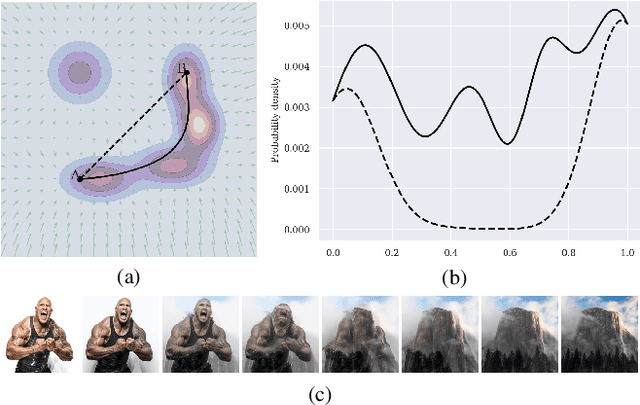

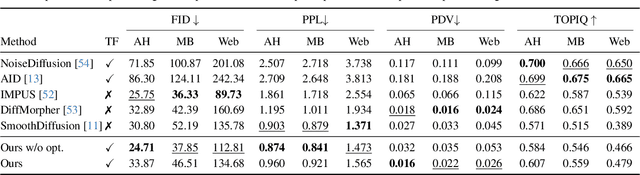

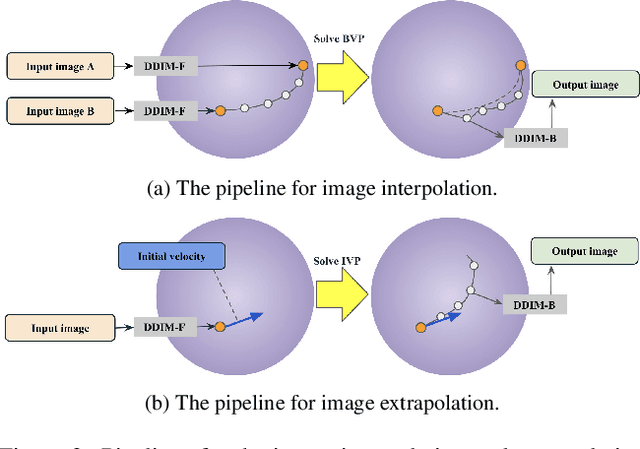

Abstract:Diffusion models indirectly estimate the probability density over a data space, which can be used to study its structure. In this work, we show that geodesics can be computed in diffusion latent space, where the norm induced by the spatially-varying inner product is inversely proportional to the probability density. In this formulation, a path that traverses a high density (that is, probable) region of image latent space is shorter than the equivalent path through a low density region. We present algorithms for solving the associated initial and boundary value problems and show how to compute the probability density along the path and the geodesic distance between two points. Using these techniques, we analyze how closely video clips approximate geodesics in a pre-trained image diffusion space. Finally, we demonstrate how these techniques can be applied to training-free image sequence interpolation and extrapolation, given a pre-trained image diffusion model.

Identifying and Mitigating Position Bias of Multi-image Vision-Language Models

Mar 18, 2025

Abstract:The evolution of Large Vision-Language Models (LVLMs) has progressed from single to multi-image reasoning. Despite this advancement, our findings indicate that LVLMs struggle to robustly utilize information across multiple images, with predictions significantly affected by the alteration of image positions. To further explore this issue, we introduce Position-wise Question Answering (PQA), a meticulously designed task to quantify reasoning capabilities at each position. Our analysis reveals a pronounced position bias in LVLMs: open-source models excel in reasoning with images positioned later but underperform with those in the middle or at the beginning, while proprietary models show improved comprehension for images at the beginning and end but struggle with those in the middle. Motivated by this, we propose SoFt Attention (SoFA), a simple, training-free approach that mitigates this bias by employing linear interpolation between inter-image causal attention and bidirectional counterparts. Experimental results demonstrate that SoFA reduces position bias and enhances the reasoning performance of existing LVLMs.

Black Sheep in the Herd: Playing with Spuriously Correlated Attributes for Vision-Language Recognition

Feb 19, 2025

Abstract:Few-shot adaptation for Vision-Language Models (VLMs) presents a dilemma: balancing in-distribution accuracy with out-of-distribution generalization. Recent research has utilized low-level concepts such as visual attributes to enhance generalization. However, this study reveals that VLMs overly rely on a small subset of attributes on decision-making, which co-occur with the category but are not inherently part of it, termed spuriously correlated attributes. This biased nature of VLMs results in poor generalization. To address this, 1) we first propose Spurious Attribute Probing (SAP), identifying and filtering out these problematic attributes to significantly enhance the generalization of existing attribute-based methods; 2) We introduce Spurious Attribute Shielding (SAS), a plug-and-play module that mitigates the influence of these attributes on prediction, seamlessly integrating into various Parameter-Efficient Fine-Tuning (PEFT) methods. In experiments, SAP and SAS significantly enhance accuracy on distribution shifts across 11 datasets and 3 generalization tasks without compromising downstream performance, establishing a new state-of-the-art benchmark.

SimLabel: Consistency-Guided OOD Detection with Pretrained Vision-Language Models

Jan 20, 2025

Abstract:Detecting out-of-distribution (OOD) data is crucial in real-world machine learning applications, particularly in safety-critical domains. Existing methods often leverage language information from vision-language models (VLMs) to enhance OOD detection by improving confidence estimation through rich class-wise text information. However, when building OOD detection score upon on in-distribution (ID) text-image affinity, existing works either focus on each ID class or whole ID label sets, overlooking inherent ID classes' connection. We find that the semantic information across different ID classes is beneficial for effective OOD detection. We thus investigate the ability of image-text comprehension among different semantic-related ID labels in VLMs and propose a novel post-hoc strategy called SimLabel. SimLabel enhances the separability between ID and OOD samples by establishing a more robust image-class similarity metric that considers consistency over a set of similar class labels. Extensive experiments demonstrate the superior performance of SimLabel on various zero-shot OOD detection benchmarks. The proposed model is also extended to various VLM-backbones, demonstrating its good generalization ability. Our demonstration and implementation codes are available at: https://github.com/ShuZou-1/SimLabel.

DreamSteerer: Enhancing Source Image Conditioned Editability using Personalized Diffusion Models

Oct 15, 2024

Abstract:Recent text-to-image personalization methods have shown great promise in teaching a diffusion model user-specified concepts given a few images for reusing the acquired concepts in a novel context. With massive efforts being dedicated to personalized generation, a promising extension is personalized editing, namely to edit an image using personalized concepts, which can provide a more precise guidance signal than traditional textual guidance. To address this, a straightforward solution is to incorporate a personalized diffusion model with a text-driven editing framework. However, such a solution often shows unsatisfactory editability on the source image. To address this, we propose DreamSteerer, a plug-in method for augmenting existing T2I personalization methods. Specifically, we enhance the source image conditioned editability of a personalized diffusion model via a novel Editability Driven Score Distillation (EDSD) objective. Moreover, we identify a mode trapping issue with EDSD, and propose a mode shifting regularization with spatial feature guided sampling to avoid such an issue. We further employ two key modifications to the Delta Denoising Score framework that enable high-fidelity local editing with personalized concepts. Extensive experiments validate that DreamSteerer can significantly improve the editability of several T2I personalization baselines while being computationally efficient.

ArGue: Attribute-Guided Prompt Tuning for Vision-Language Models

Nov 27, 2023

Abstract:Although soft prompt tuning is effective in efficiently adapting Vision-Language (V&L) models for downstream tasks, it shows limitations in dealing with distribution shifts. We address this issue with Attribute-Guided Prompt Tuning (ArGue), making three key contributions. 1) In contrast to the conventional approach of directly appending soft prompts preceding class names, we align the model with primitive visual attributes generated by Large Language Models (LLMs). We posit that a model's ability to express high confidence in these attributes signifies its capacity to discern the correct class rationales. 2) We introduce attribute sampling to eliminate disadvantageous attributes, thus only semantically meaningful attributes are preserved. 3) We propose negative prompting, explicitly enumerating class-agnostic attributes to activate spurious correlations and encourage the model to generate highly orthogonal probability distributions in relation to these negative features. In experiments, our method significantly outperforms current state-of-the-art prompt tuning methods on both novel class prediction and out-of-distribution generalization tasks.

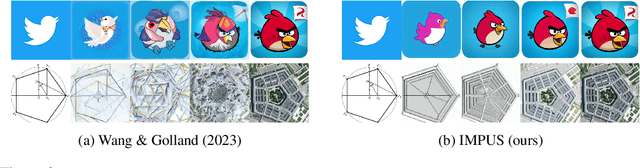

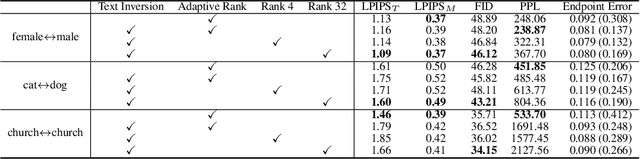

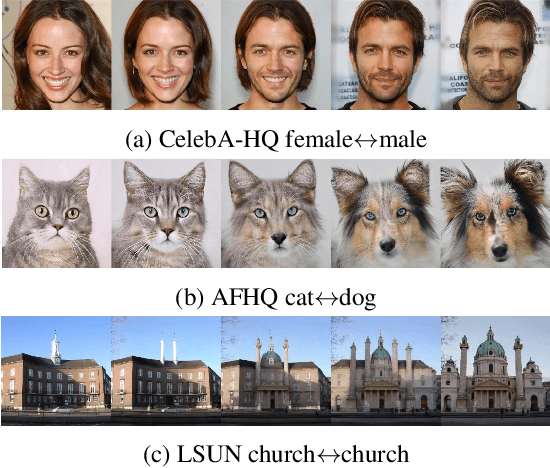

IMPUS: Image Morphing with Perceptually-Uniform Sampling Using Diffusion Models

Nov 12, 2023

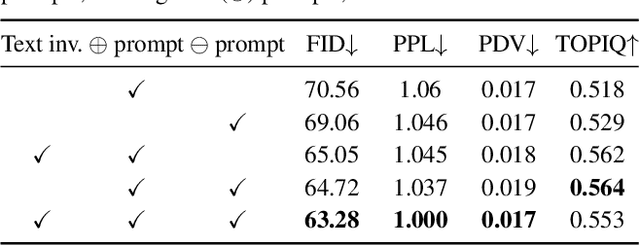

Abstract:We present a diffusion-based image morphing approach with perceptually-uniform sampling (IMPUS) that produces smooth, direct, and realistic interpolations given an image pair. A latent diffusion model has distinct conditional distributions and data embeddings for each of the two images, especially when they are from different classes. To bridge this gap, we interpolate in the locally linear and continuous text embedding space and Gaussian latent space. We first optimize the endpoint text embeddings and then map the images to the latent space using a probability flow ODE. Unlike existing work that takes an indirect morphing path, we show that the model adaptation yields a direct path and suppresses ghosting artifacts in the interpolated images. To achieve this, we propose an adaptive bottleneck constraint based on a novel relative perceptual path diversity score that automatically controls the bottleneck size and balances the diversity along the path with its directness. We also propose a perceptually-uniform sampling technique that enables visually smooth changes between the interpolated images. Extensive experiments validate that our IMPUS can achieve smooth, direct, and realistic image morphing and be applied to other image generation tasks.

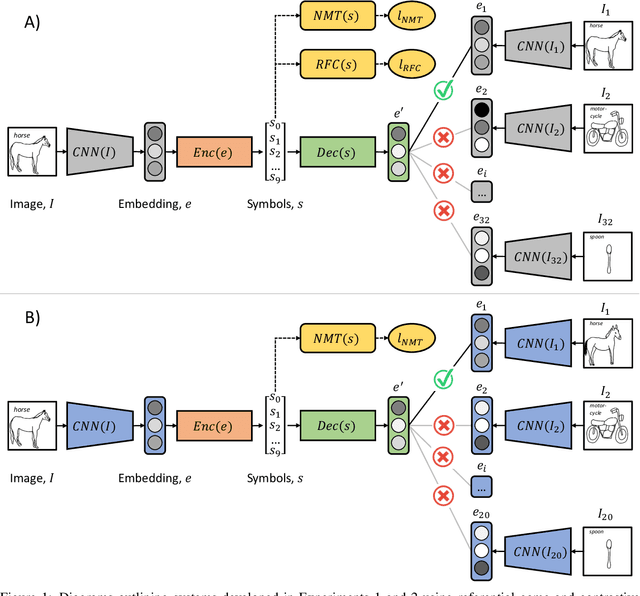

Grounded Language Acquisition From Object and Action Imagery

Sep 12, 2023

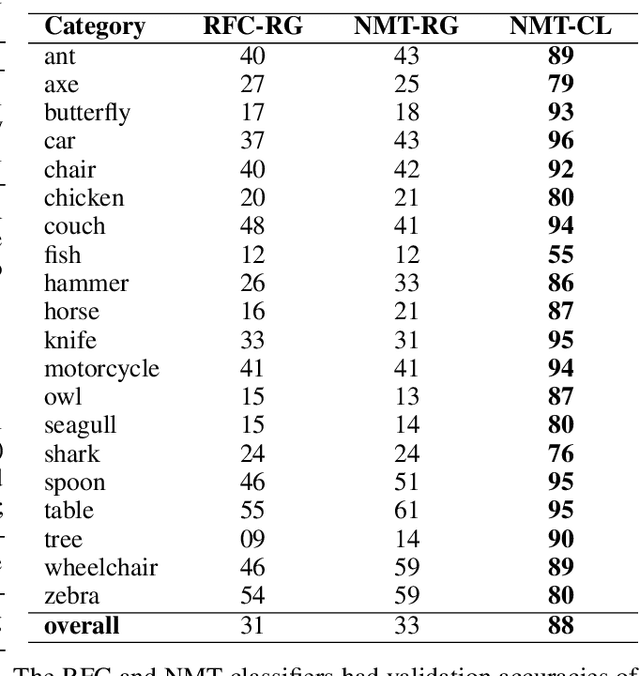

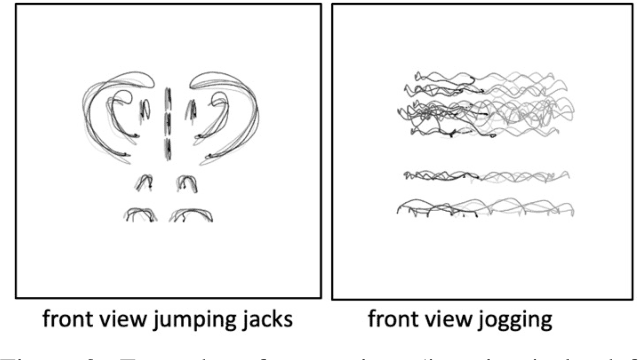

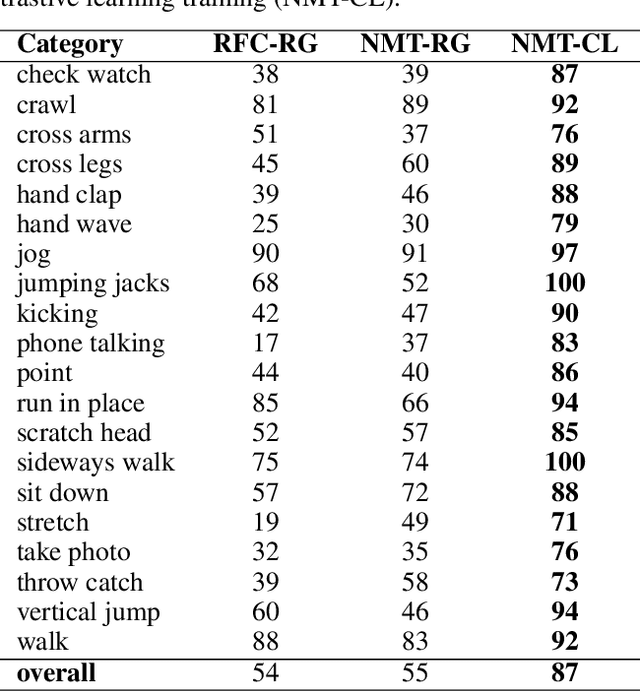

Abstract:Deep learning approaches to natural language processing have made great strides in recent years. While these models produce symbols that convey vast amounts of diverse knowledge, it is unclear how such symbols are grounded in data from the world. In this paper, we explore the development of a private language for visual data representation by training emergent language (EL) encoders/decoders in both i) a traditional referential game environment and ii) a contrastive learning environment utilizing a within-class matching training paradigm. An additional classification layer utilizing neural machine translation and random forest classification was used to transform symbolic representations (sequences of integer symbols) to class labels. These methods were applied in two experiments focusing on object recognition and action recognition. For object recognition, a set of sketches produced by human participants from real imagery was used (Sketchy dataset) and for action recognition, 2D trajectories were generated from 3D motion capture systems (MOVI dataset). In order to interpret the symbols produced for data in each experiment, gradient-weighted class activation mapping (Grad-CAM) methods were used to identify pixel regions indicating semantic features which contribute evidence towards symbols in learned languages. Additionally, a t-distributed stochastic neighbor embedding (t-SNE) method was used to investigate embeddings learned by CNN feature extractors.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge