Jianwei Qiu

Grounded Language Acquisition From Object and Action Imagery

Sep 12, 2023

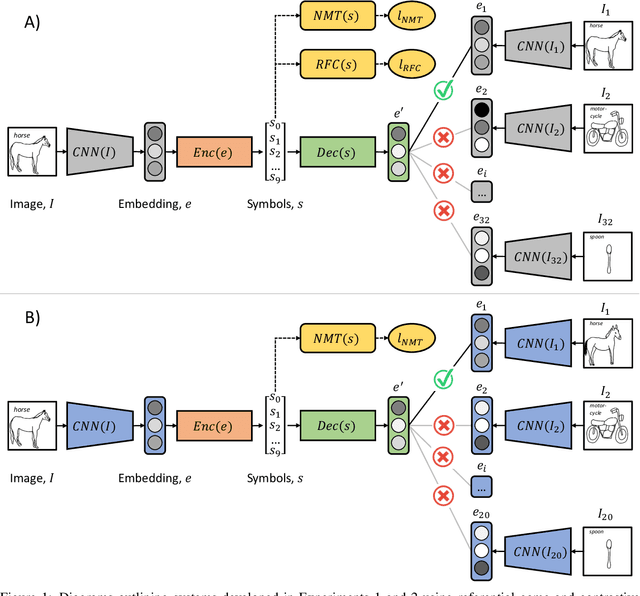

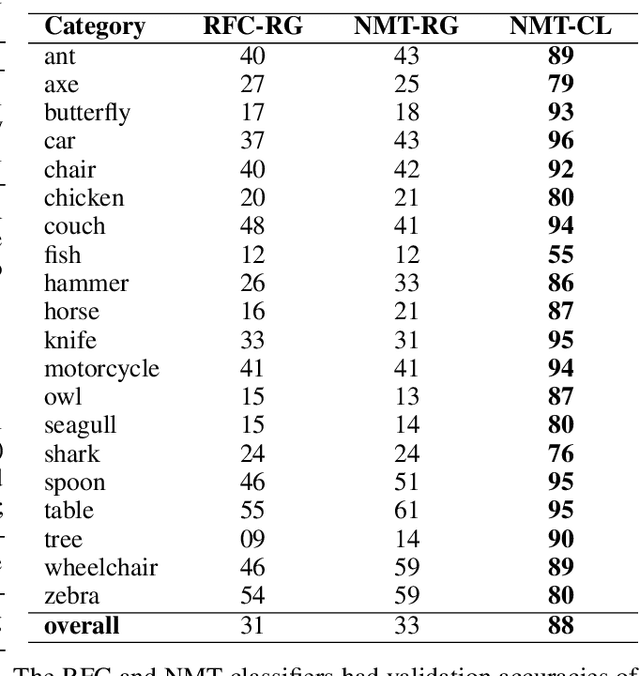

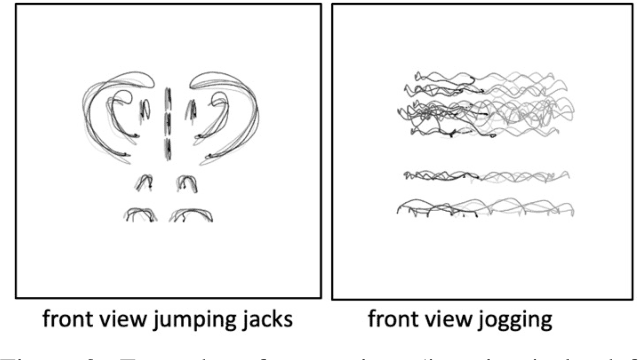

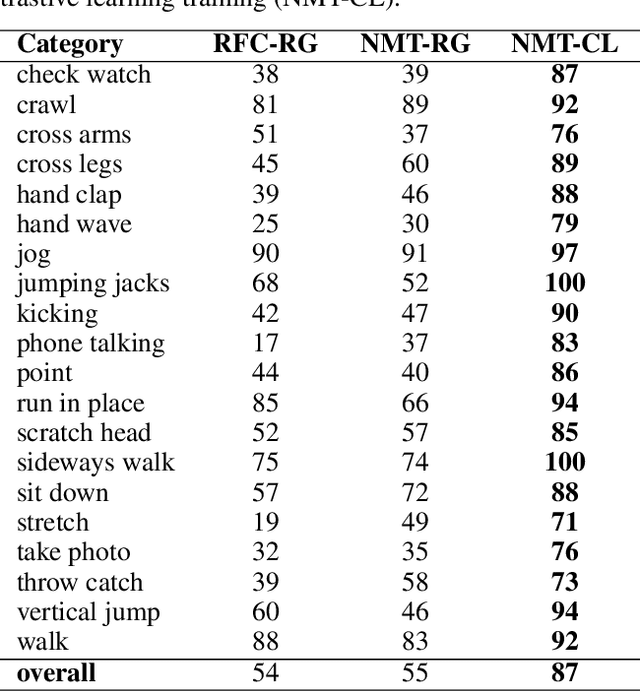

Abstract:Deep learning approaches to natural language processing have made great strides in recent years. While these models produce symbols that convey vast amounts of diverse knowledge, it is unclear how such symbols are grounded in data from the world. In this paper, we explore the development of a private language for visual data representation by training emergent language (EL) encoders/decoders in both i) a traditional referential game environment and ii) a contrastive learning environment utilizing a within-class matching training paradigm. An additional classification layer utilizing neural machine translation and random forest classification was used to transform symbolic representations (sequences of integer symbols) to class labels. These methods were applied in two experiments focusing on object recognition and action recognition. For object recognition, a set of sketches produced by human participants from real imagery was used (Sketchy dataset) and for action recognition, 2D trajectories were generated from 3D motion capture systems (MOVI dataset). In order to interpret the symbols produced for data in each experiment, gradient-weighted class activation mapping (Grad-CAM) methods were used to identify pixel regions indicating semantic features which contribute evidence towards symbols in learned languages. Additionally, a t-distributed stochastic neighbor embedding (t-SNE) method was used to investigate embeddings learned by CNN feature extractors.

On Adversarial Vulnerability of PHM algorithms: An Initial Study

Oct 14, 2021

Abstract:With proliferation of deep learning (DL) applications in diverse domains, vulnerability of DL models to adversarial attacks has become an increasingly interesting research topic in the domains of Computer Vision (CV) and Natural Language Processing (NLP). DL has also been widely adopted to diverse PHM applications, where data are primarily time-series sensor measurements. While those advanced DL algorithms/models have resulted in an improved PHM algorithms' performance, the vulnerability of those PHM algorithms to adversarial attacks has not drawn much attention in the PHM community. In this paper we attempt to explore the vulnerability of PHM algorithms. More specifically, we investigate the strategies of attacking PHM algorithms by considering several unique characteristics associated with time-series sensor measurements data. We use two real-world PHM applications as examples to validate our attack strategies and to demonstrate that PHM algorithms indeed are vulnerable to adversarial attacks.

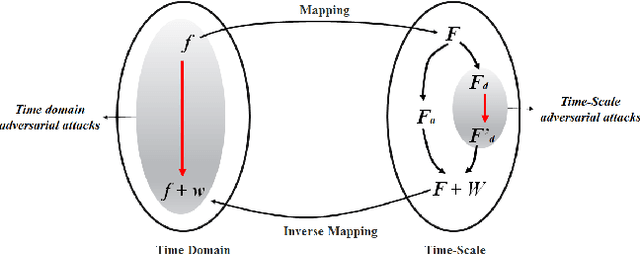

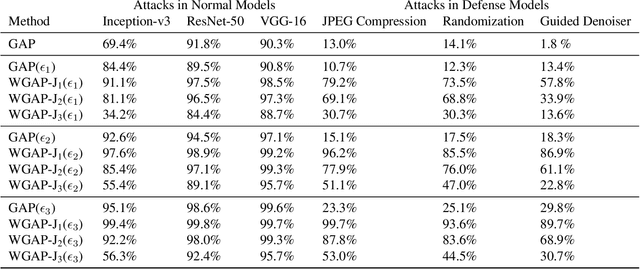

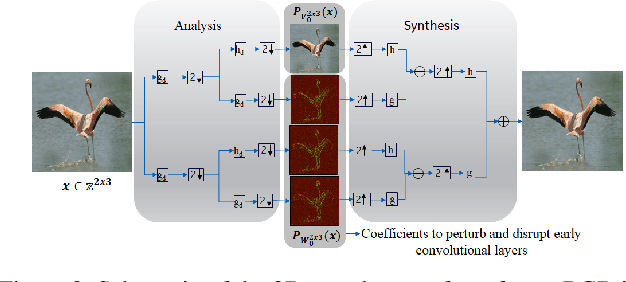

Adversarial Attacks with Time-Scale Representations

Jul 26, 2021

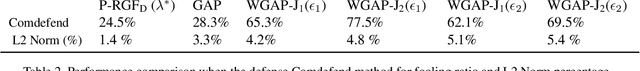

Abstract:We propose a novel framework for real-time black-box universal attacks which disrupts activations of early convolutional layers in deep learning models. Our hypothesis is that perturbations produced in the wavelet space disrupt early convolutional layers more effectively than perturbations performed in the time domain. The main challenge in adversarial attacks is to preserve low frequency image content while minimally changing the most meaningful high frequency content. To address this, we formulate an optimization problem using time-scale (wavelet) representations as a dual space in three steps. First, we project original images into orthonormal sub-spaces for low and high scales via wavelet coefficients. Second, we perturb wavelet coefficients for high scale projection using a generator network. Third, we generate new adversarial images by projecting back the original coefficients from the low scale and the perturbed coefficients from the high scale sub-space. We provide a theoretical framework that guarantees a dual mapping from time and time-scale domain representations. We compare our results with state-of-the-art black-box attacks from generative-based and gradient-based models. We also verify efficacy against multiple defense methods such as JPEG compression, Guided Denoiser and Comdefend. Our results show that wavelet-based perturbations consistently outperform time-based attacks thus providing new insights into vulnerabilities of deep learning models and could potentially lead to robust architectures or new defense and attack mechanisms by leveraging time-scale representations.

Symbolic Semantic Segmentation and Interpretation of COVID-19 Lung Infections in Chest CT volumes based on Emergent Languages

Aug 22, 2020

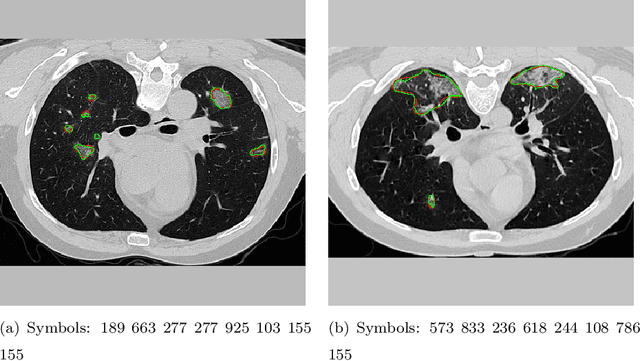

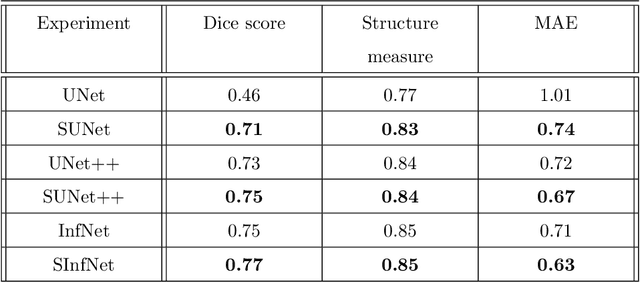

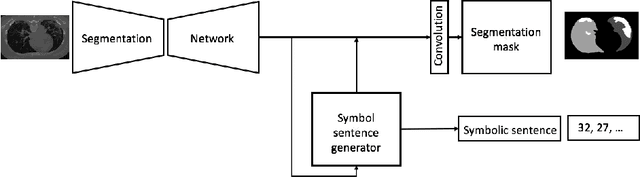

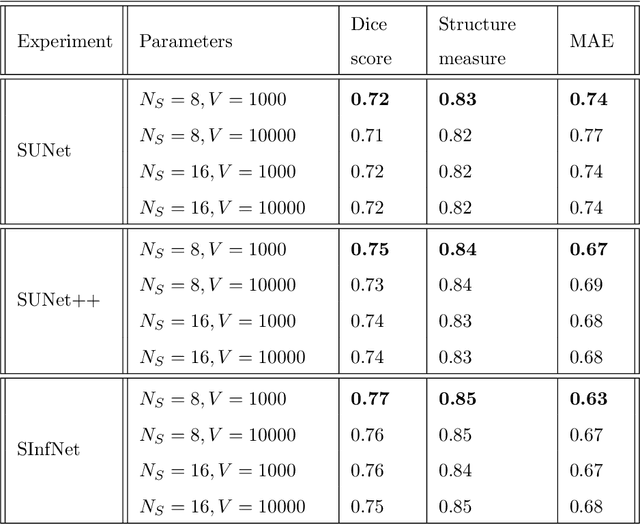

Abstract:The coronavirus disease (COVID-19) has resulted in a pandemic crippling the a breadth of services critical to daily life. Segmentation of lung infections in computerized tomography (CT) slices could be be used to improve diagnosis and understanding of COVID-19 in patients. Deep learning systems lack interpretability because of their black box nature. Inspired by human communication of complex ideas through language, we propose a symbolic framework based on emergent languages for the segmentation of COVID-19 infections in CT scans of lungs. We model the cooperation between two artificial agents - a Sender and a Receiver. These agents synergistically cooperate using emergent symbolic language to solve the task of semantic segmentation. Our game theoretic approach is to model the cooperation between agents unlike Generative Adversarial Networks (GANs). The Sender retrieves information from one of the higher layers of the deep network and generates a symbolic sentence sampled from a categorical distribution of vocabularies. The Receiver ingests the stream of symbols and cogenerates the segmentation mask. A private emergent language is developed that forms the communication channel used to describe the task of segmentation of COVID infections. We augment existing state of the art semantic segmentation architectures with our symbolic generator to form symbolic segmentation models. Our symbolic segmentation framework achieves state of the art performance for segmentation of lung infections caused by COVID-19. Our results show direct interpretation of symbolic sentences to discriminate between normal and infected regions, infection morphology and image characteristics. We show state of the art results for segmentation of COVID-19 lung infections in CT.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge