Takatomo Kano

NTT Multi-Speaker ASR System for the DASR Task of CHiME-8 Challenge

Sep 09, 2024

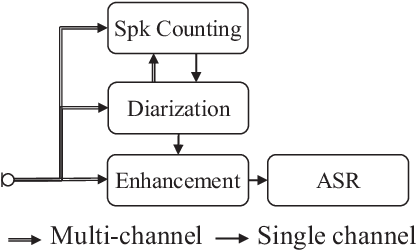

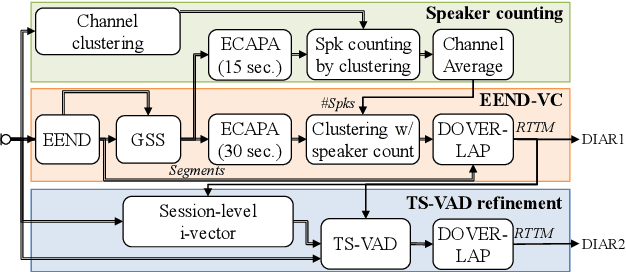

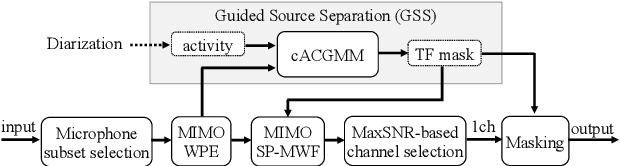

Abstract: We present a distant automatic speech recognition (DASR) system developed for the CHiME-8 DASR track. It consists of a diarization-first pipeline. For diarization, we use end-to-end diarization with vector clustering (EEND-VC) followed by target speaker voice activity detection (TS-VAD) refinement. To handle a varying number of speakers, we developed a new multi-channel speaker counting approach. We then apply guided source separation (GSS) with several improvements to the baseline system. Finally, we perform ASR using a combination of systems built from strong pre-trained models. Our proposed system achieves a macro tcpWER of 21.3% on the dev set, a 57% relative improvement over the baseline.
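
The abstract gives the system's tcpWER and the relative gain but not the baseline figure. Assuming the usual definition of relative improvement, the implied baseline can be back-computed as below; because 57% is rounded, any baseline between roughly 49.0% and 50.1% is consistent with the reported numbers.

$$
\text{rel. impr.} = \frac{\mathrm{tcpWER}_{\text{base}} - \mathrm{tcpWER}_{\text{sys}}}{\mathrm{tcpWER}_{\text{base}}}
\;\Rightarrow\;
\mathrm{tcpWER}_{\text{base}} = \frac{\mathrm{tcpWER}_{\text{sys}}}{1 - \text{rel. impr.}} = \frac{21.3}{1 - 0.57} \approx 49.5\%
$$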

Sentence-wise Speech Summarization: Task, Datasets, and End-to-End Modeling with LM Knowledge Distillation

Aug 01, 2024

Abstract: This paper introduces a novel approach called sentence-wise speech summarization (Sen-SSum), which generates text summaries from a spoken document in a sentence-by-sentence manner. Sen-SSum combines the real-time processing of automatic speech recognition (ASR) with the conciseness of speech summarization. To explore this approach, we present two datasets for Sen-SSum: Mega-SSum and CSJ-SSum. Using these datasets, we evaluate two types of Transformer-based models: 1) cascade models that combine ASR with strong text summarization models, and 2) end-to-end (E2E) models that directly convert speech into a text summary. Although E2E models are appealing for building compute-efficient systems, they perform worse than cascade models. We therefore propose knowledge distillation for E2E models using pseudo-summaries generated by the cascade models. Our experiments show that the proposed knowledge distillation effectively improves the performance of the E2E model on both datasets.
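
A minimal sketch of the pseudo-summary distillation idea, assuming the cascade (ASR followed by text summarization) acts as a teacher that labels each spoken sentence and the E2E student is then trained on the resulting pairs. `build_distillation_set`, `toy_asr`, and `toy_summarize` are hypothetical stand-ins, not the authors' code, and keeping human references alongside the pseudo-summaries is an assumption the abstract does not confirm.

```python
from typing import Callable, List, Optional, Tuple

Speech = str  # hypothetical stand-in for an audio feature sequence
Pair = Tuple[Speech, str]


def build_distillation_set(
    speech_sentences: List[Speech],
    references: List[Optional[str]],
    asr: Callable[[Speech], str],
    summarize: Callable[[str], str],
) -> List[Pair]:
    """Pair each spoken sentence with a cascade-generated pseudo-summary.

    Human references, where available, are kept as extra pairs; how the
    paper mixes the two is not stated, so this is an assumption.
    """
    pairs: List[Pair] = []
    for speech, ref in zip(speech_sentences, references):
        pseudo = summarize(asr(speech))  # teacher: ASR, then text summarization
        pairs.append((speech, pseudo))
        if ref is not None:
            pairs.append((speech, ref))
    return pairs


# Toy stubs standing in for the real ASR and summarization models.
def toy_asr(speech: Speech) -> str:
    return speech.lower()


def toy_summarize(text: str) -> str:
    return " ".join(text.split()[:3])  # toy "summary": the first three words


data = build_distillation_set(
    ["So UM the MEETING starts now", "Thanks"], [None, "thanks"],
    toy_asr, toy_summarize,
)
```

The student would then be trained on `data` with an ordinary sequence-to-sequence loss, so the teacher's knowledge reaches it only through the pseudo-labels (sequence-level distillation).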

Applying LLMs for Rescoring N-best ASR Hypotheses of Casual Conversations: Effects of Domain Adaptation and Context Carry-over

Jun 27, 2024

Abstract: Large language models (LLMs) have been successfully applied to rescoring automatic speech recognition (ASR) hypotheses. However, their ability to rescore ASR hypotheses of casual conversations has not been sufficiently explored. In this study, we examine this ability by performing N-best ASR hypothesis rescoring using Llama2 on the CHiME-7 distant ASR (DASR) task. Llama2 is one of the most representative LLMs, and the CHiME-7 DASR task provides datasets of casual conversations between multiple participants. We investigate the effects of domain adaptation of the LLM and context carry-over when performing N-best rescoring. Experimental results show that, even without domain adaptation, Llama2 outperforms a standard-size domain-adapted Transformer-LM, especially when using a long context. Domain adaptation shortens the context length Llama2 needs to achieve its best performance, i.e., it reduces the computational cost of Llama2.
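
A minimal sketch of N-best rescoring with context carry-over, assuming a log-linear combination of the ASR score and an LM score, with the LM conditioned on the previously selected hypotheses. `toy_lm` is a hypothetical stand-in for an LLM such as Llama2, and the values of `lm_weight` and `max_context` are illustrative, not the paper's settings.

```python
import math
from typing import Callable, List, Optional, Tuple


def rescore_nbest(
    nbest: List[Tuple[str, float]],           # (hypothesis, ASR log-score)
    context: List[str],                       # previously selected hypotheses
    lm_logprob: Callable[[str, str], float],  # (context, hypothesis) -> log-score
    lm_weight: float = 0.5,
    max_context: int = 2,
) -> Optional[str]:
    """Pick the hypothesis maximizing ASR score + lm_weight * LM score."""
    ctx = " ".join(context[-max_context:])  # carry over the last few utterances
    best_text: Optional[str] = None
    best_score = -math.inf
    for text, asr_score in nbest:
        score = asr_score + lm_weight * lm_logprob(ctx, text)
        if score > best_score:
            best_text, best_score = text, score
    return best_text


def toy_lm(ctx: str, hyp: str) -> float:
    """Hypothetical LM: rewards words seen in the context, penalizes length."""
    ctx_words = set(ctx.split())
    words = hyp.split()
    return sum(w in ctx_words for w in words) - 0.1 * len(words)


nbest = [("the reef eye", -1.0), ("the recipe", -1.2)]
with_context = rescore_nbest(nbest, ["let's talk about the recipe"], toy_lm)
without_context = rescore_nbest(nbest, [], toy_lm)
```

With the carried-over sentence, `the recipe` wins; without it, the ASR score dominates and `the reef eye` is chosen. A longer `max_context` gives the LM more evidence at a higher compute cost, which is the trade-off the abstract discusses.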

BLSTM-Based Confidence Estimation for End-to-End Speech Recognition

Dec 22, 2023

Abstract: Confidence estimation, in which we estimate the reliability of each recognized token (e.g., word, sub-word, or character) in automatic speech recognition (ASR) hypotheses and detect incorrectly recognized tokens, is an important function for developing ASR applications. In this study, we perform confidence estimation for end-to-end (E2E) ASR hypotheses. Recent E2E ASR systems achieve high performance (e.g., token error rates of around 5%) on various ASR tasks. In such situations, confidence estimation becomes difficult because we need to detect infrequent incorrect tokens in mostly correct token sequences. To tackle this imbalanced dataset problem, we employ a bidirectional long short-term memory (BLSTM)-based model as a strong binary-class (correct/incorrect) sequence labeler that is trained with a class balancing objective. We experimentally confirmed that, by utilizing several types of ASR decoding scores as auxiliary features, the model consistently shows high confidence estimation performance under highly imbalanced settings. We also confirmed that the BLSTM-based model outperforms Transformer-based confidence estimation models, which greatly underestimate incorrect tokens.
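
The abstract does not specify the class balancing objective. One common choice, assumed here, is binary cross-entropy with class weights inversely proportional to class frequency, so the rare incorrect tokens are not drowned out. This pure-Python sketch (labels 1 = correct, 0 = incorrect) is illustrative and not necessarily the paper's loss.

```python
import math
from typing import List, Tuple


def inverse_frequency_weights(labels: List[int]) -> Tuple[float, float]:
    """Return (w_incorrect, w_correct); labels are 1 = correct, 0 = incorrect.

    Each weight is n / (2 * class_count), so both classes contribute the same
    total weight and the rare incorrect class is up-weighted.
    """
    n = len(labels)
    n_correct = sum(labels)
    n_incorrect = n - n_correct
    return n / (2 * max(n_incorrect, 1)), n / (2 * max(n_correct, 1))


def balanced_bce(probs: List[float], labels: List[int], eps: float = 1e-7) -> float:
    """Mean class-weighted binary cross-entropy; probs = P(token is correct)."""
    w_incorrect, w_correct = inverse_frequency_weights(labels)
    total = 0.0
    for p, y in zip(probs, labels):
        p = min(max(p, eps), 1.0 - eps)  # clip to avoid log(0)
        if y == 1:
            total -= w_correct * math.log(p)
        else:
            total -= w_incorrect * math.log(1.0 - p)
    return total / len(labels)


labels = [1] * 19 + [0]               # about 5% incorrect tokens
always_correct = [0.99] * 20          # ignores the rare error
detects_error = [0.99] * 19 + [0.01]  # flags the incorrect token
```

Under this weighting, a labeler that simply predicts "correct" everywhere pays a large penalty on the single incorrect token, which is the failure mode the abstract attributes to the Transformer-based models.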

Transfer Learning from Pre-trained Language Models Improves End-to-End Speech Summarization

Jun 07, 2023

Abstract: End-to-end speech summarization (E2E SSum) directly summarizes input speech into easy-to-read short sentences with a single model. This approach is promising because, in contrast to the conventional cascade approach, it can utilize full acoustic information and mitigate the propagation of transcription errors. However, due to the high cost of collecting speech-summary pairs, E2E SSum models tend to suffer from training data scarcity and output unnatural sentences. To overcome this drawback, we propose for the first time to integrate a pre-trained language model (LM), which is highly capable of generating natural sentences, into the E2E SSum decoder via transfer learning. In addition, to reduce the gap between the independently pre-trained encoder and decoder, we also propose to transfer the baseline E2E SSum encoder instead of the commonly used automatic speech recognition encoder. Experimental results show that the proposed model outperforms the baseline and data-augmented models.

Attention-based Multi-hypothesis Fusion for Speech Summarization

Nov 16, 2021

Abstract: Speech summarization, which generates a text summary from speech, can be achieved by combining automatic speech recognition (ASR) and text summarization (TS). With this cascade approach, we can exploit state-of-the-art models and large training datasets for both subtasks, i.e., Transformer for ASR and Bidirectional Encoder Representations from Transformers (BERT) for TS. However, ASR errors directly affect the quality of the output summary in the cascade approach. We propose a cascade speech summarization model that is robust to ASR errors and that exploits multiple hypotheses generated by ASR to attenuate the effect of ASR errors on the summary. We investigate several schemes to combine ASR hypotheses. First, we propose using the sum of sub-word embedding vectors weighted by their posterior values provided by an ASR system as an input to a BERT-based TS system. Then, we introduce a more general scheme that uses an attention-based fusion module added to a pre-trained BERT module to align and combine several ASR hypotheses. Finally, we perform speech summarization experiments on the How2 dataset and a newly assembled TED-based dataset that we will release with this paper. These experiments show that retraining the BERT-based TS system with these schemes can improve summarization performance and that the attention-based fusion module is particularly effective.
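
The first combination scheme replaces each one-hot input token with a posterior-weighted sum of sub-word embeddings, i.e. for position t the input is the sum over candidates k of p_t(w_k) · E[w_k]. A minimal pure-Python sketch follows; the toy embedding table and the per-position posterior format (a dict of candidate sub-words) are illustrative assumptions, whereas in practice the vectors would come from BERT's token embedding matrix.

```python
from typing import Dict, List

Vector = List[float]


def posterior_weighted_embeddings(
    posteriors: List[Dict[str, float]],  # per position: sub-word -> ASR posterior
    embedding: Dict[str, Vector],        # sub-word -> embedding vector
) -> List[Vector]:
    """For each position t, return sum_k p_t(w_k) * E[w_k]."""
    dim = len(next(iter(embedding.values())))
    outputs: List[Vector] = []
    for dist in posteriors:
        vec = [0.0] * dim
        for token, prob in dist.items():
            for i, value in enumerate(embedding[token]):
                vec[i] += prob * value
        outputs.append(vec)
    return outputs


# Hypothetical 2-dimensional embeddings and ASR posteriors for two positions.
embedding = {"cat": [1.0, 0.0], "cap": [0.0, 1.0], "sat": [0.5, 0.5]}
posteriors = [{"cat": 0.75, "cap": 0.25}, {"sat": 1.0}]
fused = posterior_weighted_embeddings(posteriors, embedding)
```

When the ASR system is confident (posterior 1.0), the input reduces to the ordinary embedding; when it is uncertain, the vector blends the competing candidates instead of committing to a possibly wrong one.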

Simultaneous Speech-to-Speech Translation System with Neural Incremental ASR, MT, and TTS

Nov 11, 2020

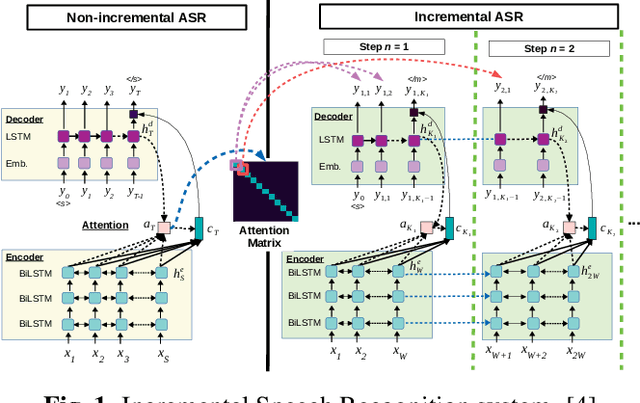

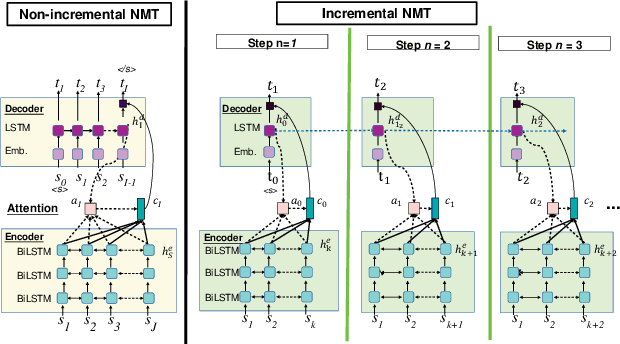

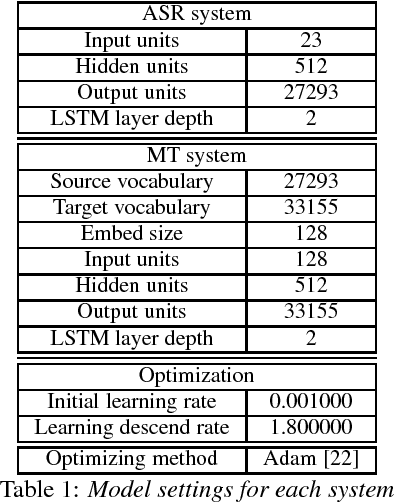

Abstract: This paper presents a newly developed simultaneous neural speech-to-speech translation system and its evaluation. The system consists of three fully incremental neural processing modules for automatic speech recognition (ASR), machine translation (MT), and text-to-speech synthesis (TTS). We investigated its overall latency, in terms of the system's Ear-Voice Span and speaking latency, along with module-level performance.

Structured-based Curriculum Learning for End-to-end English-Japanese Speech Translation

Feb 13, 2018

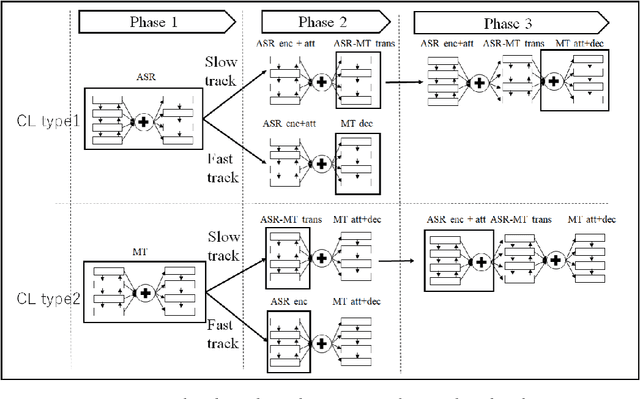

Abstract: Sequence-to-sequence attention-based neural network architectures have been shown to provide a powerful model for machine translation and speech recognition. Recently, several works have attempted to extend these models to the end-to-end speech translation task. However, the usefulness of these models was only investigated on language pairs with similar syntax and word order (e.g., English-French or English-Spanish). In this work, we focus on end-to-end speech translation for syntactically distant language pairs (e.g., English-Japanese) that require long-distance word reordering. To guide the encoder-decoder attentional model in learning this difficult problem, we propose a structured-based curriculum learning strategy. Unlike conventional curriculum learning, which gradually emphasizes difficult data examples, we formalize learning strategies that proceed from easier network structures to more difficult ones. Specifically, we start training with an end-to-end encoder-decoder for the speech recognition or text-based machine translation task and then gradually move to the end-to-end speech translation task. The experimental results show that the proposed approach provides significant improvements compared with training without curriculum learning.
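
A toy sketch of the easy-to-hard structural progression, assuming each stage trains a simpler encoder-decoder and the final speech translation model reuses matching components (speech encoder from ASR, Japanese decoder from MT). The component names, the choice of which parts are transferred, and chaining both ASR and MT stages (the abstract allows either) are assumptions; weights are represented by strings that record which stages trained them.

```python
from typing import Dict, List, Tuple

# Hypothetical stand-in for weights: a string recording which stages trained it.
Params = Dict[str, str]


def train_stage(task: str, components: List[str], pretrained: Params) -> Params:
    """Toy 'training': start each component from pretrained weights if available,
    otherwise from random init, and record that it was trained on `task`."""
    return {name: pretrained.get(name, "random") + "->" + task for name in components}


def run_curriculum(stages: List[Tuple[str, List[str]]]) -> Params:
    """Train stages in order (easier structures first), carrying weights forward."""
    pool: Params = {}
    for task, components in stages:
        pool.update(train_stage(task, components, pool))
    return pool


stages = [
    ("asr", ["speech_encoder", "en_decoder"]),  # speech -> English text
    ("mt", ["en_encoder", "ja_decoder"]),       # English text -> Japanese text
    ("st", ["speech_encoder", "ja_decoder"]),   # speech -> Japanese text
]
final = run_curriculum(stages)
```

The final ST model starts from components already trained on easier tasks rather than from scratch, which is the intuition behind ordering network structures instead of data examples.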
