Shi Chang

Variable-frame CNNLSTM for Breast Nodule Classification using Ultrasound Videos

Feb 17, 2025

Abstract:The intersection of medical imaging and artificial intelligence has become an important research direction in intelligent medical treatment, particularly in the analysis of medical images using deep learning for clinical diagnosis. Despite the advances, existing keyframe classification methods lack extraction of time series features, while ultrasonic video classification based on three-dimensional convolution requires uniform frame numbers across patients, resulting in poor feature extraction efficiency and model classification performance. This study proposes a novel video classification method based on CNN and LSTM, introducing NLP's long and short sentence processing scheme into video classification for the first time. The method reduces CNN-extracted image features to 1x512 dimension, followed by sorting and compressing feature vectors for LSTM training. Specifically, feature vectors are sorted by patient video frame numbers and populated with padding value 0 to form variable batches, with invalid padding values compressed before LSTM training to conserve computing resources. Experimental results demonstrate that our variable-frame CNNLSTM method outperforms other approaches across all metrics, showing improvements of 3-6% in F1 score and 1.5% in specificity compared to keyframe methods. The variable-frame CNNLSTM also achieves better accuracy and precision than equal-frame CNNLSTM. These findings validate the effectiveness of our approach in classifying variable-frame ultrasound videos and suggest potential applications in other medical imaging modalities.

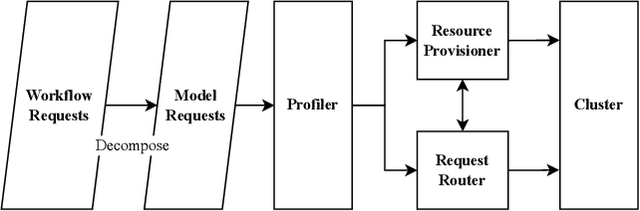

Software Performance Engineering for Foundation Model-Powered Software (FMware)

Nov 14, 2024

Abstract:The rise of Foundation Models (FMs) like Large Language Models (LLMs) is revolutionizing software development. Despite the impressive prototypes, transforming FMware into production-ready products demands complex engineering across various domains. A critical but overlooked aspect is performance engineering, which aims at ensuring FMware meets performance goals such as throughput and latency to avoid user dissatisfaction and financial loss. Often, performance considerations are an afterthought, leading to costly optimization efforts post-deployment. FMware's high computational resource demands highlight the need for efficient hardware use. Continuous performance engineering is essential to prevent degradation. This paper highlights the significance of Software Performance Engineering (SPE) in FMware, identifying four key challenges: cognitive architecture design, communication protocols, tuning and optimization, and deployment. These challenges are based on literature surveys and experiences from developing an in-house FMware system. We discuss problems, current practices, and innovative paths for the software engineering community.

SAM-Driven Weakly Supervised Nodule Segmentation with Uncertainty-Aware Cross Teaching

Jul 18, 2024

Abstract:Automated nodule segmentation is essential for computer-assisted diagnosis in ultrasound images. Nevertheless, most existing methods depend on precise pixel-level annotations by medical professionals, a process that is both costly and labor-intensive. Recently, segmentation foundation models like SAM have shown impressive generalizability on natural images, suggesting their potential as pseudo-labelers. However, accurate prompts remain crucial for their success in medical images. In this work, we devise a novel weakly supervised framework that effectively utilizes the segmentation foundation model to generate pseudo-labels from aspect ration annotations for automatic nodule segmentation. Specifically, we develop three types of bounding box prompts based on scalable shape priors, followed by an adaptive pseudo-label selection module to fully exploit the prediction capabilities of the foundation model for nodules. We also present a SAM-driven uncertainty-aware cross-teaching strategy. This approach integrates SAM-based uncertainty estimation and label-space perturbations into cross-teaching to mitigate the impact of pseudo-label inaccuracies on model training. Extensive experiments on two clinically collected ultrasound datasets demonstrate the superior performance of our proposed method.

Ultrasound Nodule Segmentation Using Asymmetric Learning with Simple Clinical Annotation

Apr 23, 2024Abstract:Recent advances in deep learning have greatly facilitated the automated segmentation of ultrasound images, which is essential for nodule morphological analysis. Nevertheless, most existing methods depend on extensive and precise annotations by domain experts, which are labor-intensive and time-consuming. In this study, we suggest using simple aspect ratio annotations directly from ultrasound clinical diagnoses for automated nodule segmentation. Especially, an asymmetric learning framework is developed by extending the aspect ratio annotations with two types of pseudo labels, i.e., conservative labels and radical labels, to train two asymmetric segmentation networks simultaneously. Subsequently, a conservative-radical-balance strategy (CRBS) strategy is proposed to complementally combine radical and conservative labels. An inconsistency-aware dynamically mixed pseudo-labels supervision (IDMPS) module is introduced to address the challenges of over-segmentation and under-segmentation caused by the two types of labels. To further leverage the spatial prior knowledge provided by clinical annotations, we also present a novel loss function namely the clinical anatomy prior loss. Extensive experiments on two clinically collected ultrasound datasets (thyroid and breast) demonstrate the superior performance of our proposed method, which can achieve comparable and even better performance than fully supervised methods using ground truth annotations.

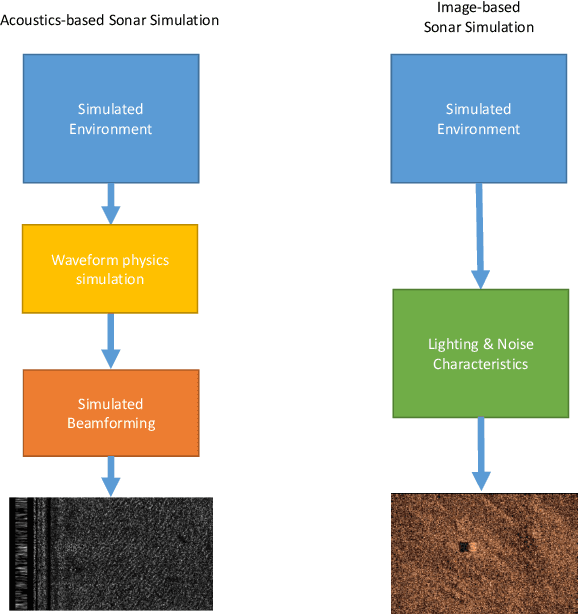

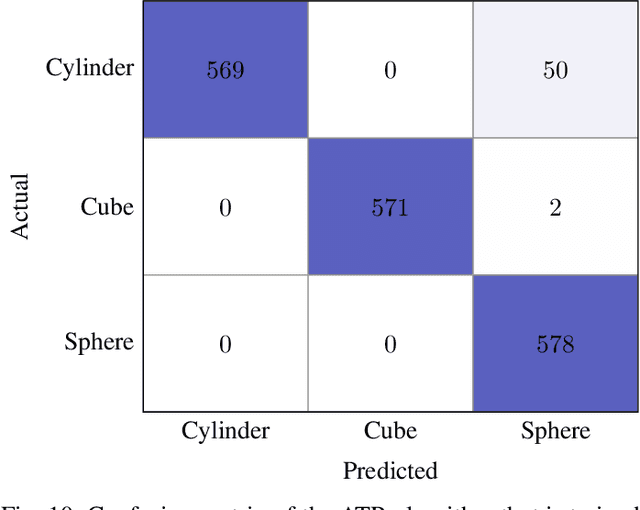

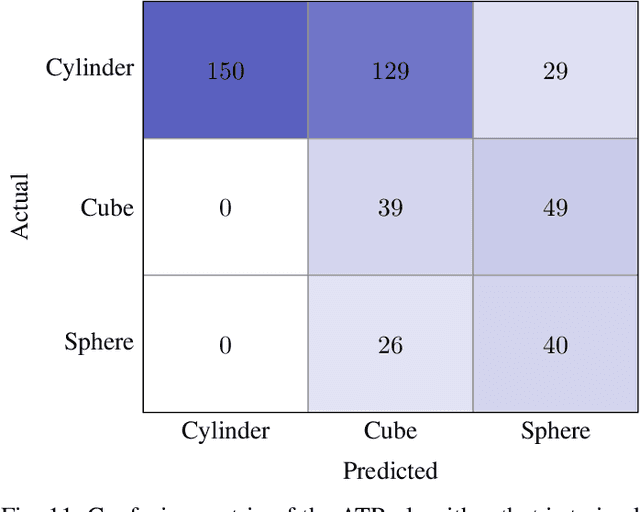

Synthetic Sonar Image Simulation with Various Seabed Conditions for Automatic Target Recognition

Oct 19, 2022

Abstract:We propose a novel method to generate underwater object imagery that is acoustically compliant with that generated by side-scan sonar using the Unreal Engine. We describe the process to develop, tune, and generate imagery to provide representative images for use in training automated target recognition (ATR) and machine learning algorithms. The methods provide visual approximations for acoustic effects such as back-scatter noise and acoustic shadow, while allowing fast rendering with C++ actor in UE for maximizing the size of potential ATR training datasets. Additionally, we provide analysis of its utility as a replacement for actual sonar imagery or physics-based sonar data.

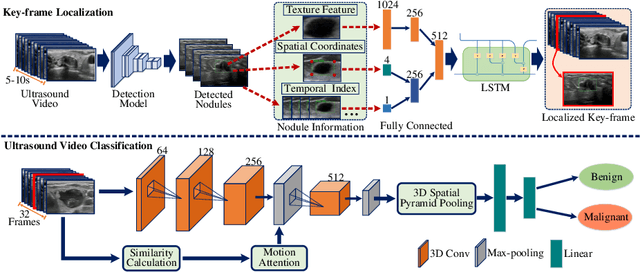

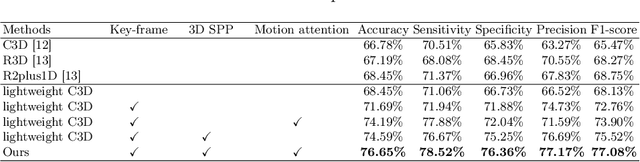

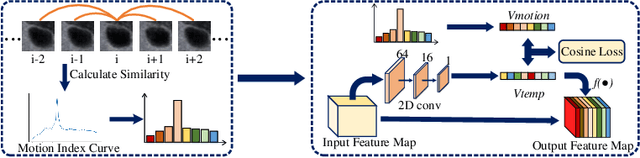

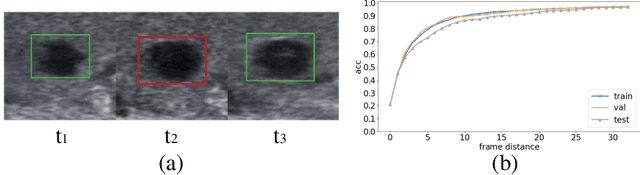

Key-frame Guided Network for Thyroid Nodule Recognition using Ultrasound Videos

Jun 30, 2022

Abstract:Ultrasound examination is widely used in the clinical diagnosis of thyroid nodules (benign/malignant). However, the accuracy relies heavily on radiologist experience. Although deep learning techniques have been investigated for thyroid nodules recognition. Current solutions are mainly based on static ultrasound images, with limited temporal information used and inconsistent with clinical diagnosis. This paper proposes a novel method for the automated recognition of thyroid nodules through an exhaustive exploration of ultrasound videos and key-frames. We first propose a detection-localization framework to automatically identify the clinical key-frame with a typical nodule in each ultrasound video. Based on the localized key-frame, we develop a key-frame guided video classification model for thyroid nodule recognition. Besides, we introduce a motion attention module to help the network focus on significant frames in an ultrasound video, which is consistent with clinical diagnosis. The proposed thyroid nodule recognition framework is validated on clinically collected ultrasound videos, demonstrating superior performance compared with other state-of-the-art methods.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge