Ruobing Qian

Data Augmentation for Seizure Prediction with Generative Diffusion Model

Jun 14, 2023

Abstract: Objective: Seizure prediction is of great importance for improving patients' quality of life. The key task is to distinguish preictal states from interictal ones. With the development of machine learning, seizure prediction methods have made significant progress. However, the severe imbalance between preictal and interictal data still poses a great challenge and limits classifier performance. Data augmentation is an intuitive way to address this problem. Existing data augmentation methods generate samples by overlapping or recombining data. The distribution of the generated samples is limited by the original data, because such transformations cannot fully explore the feature space or offer new information. Because the epileptic EEG representation varies among seizures, these generated samples cannot provide enough diversity to achieve high performance on a new seizure. We therefore propose a novel data augmentation method based on a diffusion model, called DiffEEG. Methods: Diffusion models are a class of generative models consisting of two processes. In the diffusion process, the model adds noise to the input EEG sample step by step, converting it into random noise, and learns the data distribution by minimizing the loss between its output and the added noise. In the denoising process, the model synthesizes data by gradually removing the noise, diffusing the data distribution to outward areas and narrowing the distance between different clusters. Results: We compared DiffEEG with existing methods and integrated them into three representative classifiers. The experiments indicate that DiffEEG can further improve performance and outperforms existing methods. Conclusion: This paper proposes a novel and effective method to solve the imbalance problem and demonstrates the effectiveness and generality of the method.
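The two processes described in the Methods can be illustrated with the standard denoising diffusion probabilistic model (DDPM) formulation. The following is a minimal NumPy sketch under stated assumptions: a linear noise schedule, a noise-prediction (epsilon) training loss, and a placeholder `model(x_t, t)` callable standing in for the denoising network. The function names, schedule values, and toy EEG window shape are illustrative choices, not details of DiffEEG.

```python
import numpy as np

def linear_beta_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Per-step noise variances beta_t (assumed linear schedule)."""
    return np.linspace(beta_start, beta_end, num_steps)

def q_sample(x0, t, alpha_bar, noise):
    """Diffusion (forward) process in closed form: x_t ~ q(x_t | x_0)."""
    return np.sqrt(alpha_bar[t]) * x0 + np.sqrt(1.0 - alpha_bar[t]) * noise

def noise_prediction_loss(model, x0, alpha_bar, rng):
    """MSE between the model output and the noise that was actually added."""
    t = int(rng.integers(0, len(alpha_bar)))
    noise = rng.standard_normal(x0.shape)
    x_t = q_sample(x0, t, alpha_bar, noise)
    return float(np.mean((model(x_t, t) - noise) ** 2))

def p_sample_step(model, x_t, t, betas, alpha_bar, rng):
    """One denoising (reverse) step x_t -> x_{t-1}, with variance sigma_t^2 = beta_t."""
    eps = model(x_t, t)
    mean = (x_t - betas[t] / np.sqrt(1.0 - alpha_bar[t]) * eps) / np.sqrt(1.0 - betas[t])
    if t == 0:
        return mean
    return mean + np.sqrt(betas[t]) * rng.standard_normal(x_t.shape)

def sample(model, shape, betas, alpha_bar, rng):
    """Synthesize a sample: start from pure noise and remove it step by step."""
    x = rng.standard_normal(shape)
    for t in reversed(range(len(betas))):
        x = p_sample_step(model, x, t, betas, alpha_bar, rng)
    return x

def untrained_model(x_t, t):
    """Hypothetical placeholder network that always predicts zero noise."""
    return np.zeros_like(x_t)

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    betas = linear_beta_schedule(1000)
    alpha_bar = np.cumprod(1.0 - betas)
    x0 = rng.standard_normal((4, 256))  # toy stand-in for one multichannel EEG window
    print(noise_prediction_loss(untrained_model, x0, alpha_bar, rng))
    # With an untrained placeholder the synthetic values are meaningless; only the shape matters here.
    print(sample(untrained_model, x0.shape, betas, alpha_bar, rng).shape)
```

In a real augmentation setting, the placeholder would be replaced by a trained network, and the synthetic windows produced by `sample` would be added to the minority (preictal) class before training the classifier.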

Transient motion classification through turbid volumes via parallelized single-photon detection and deep contrastive embedding

Apr 04, 2022

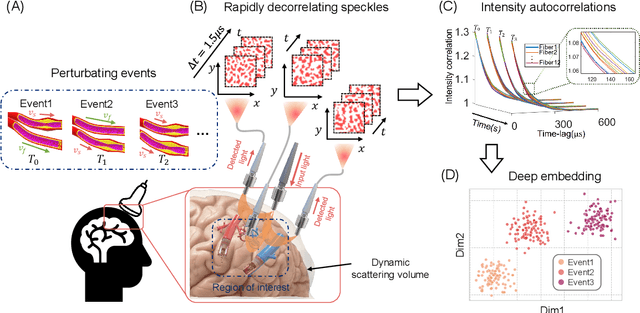

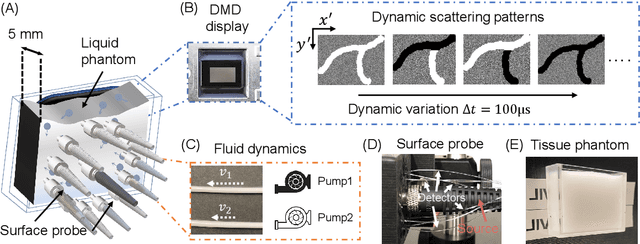

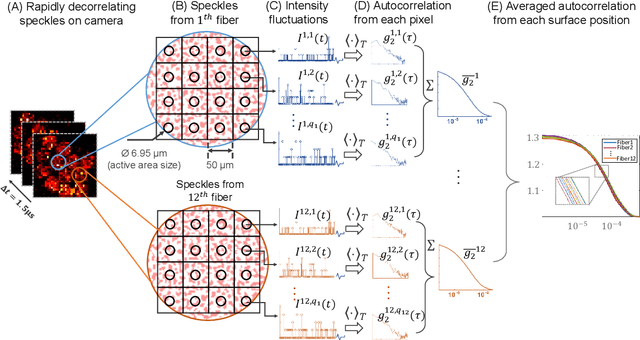

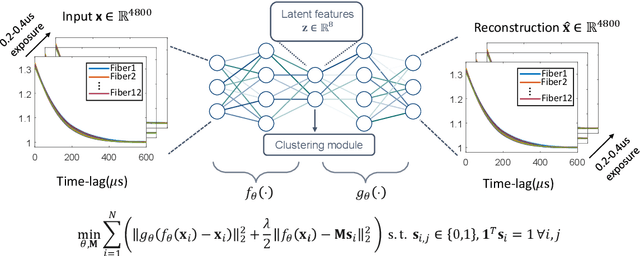

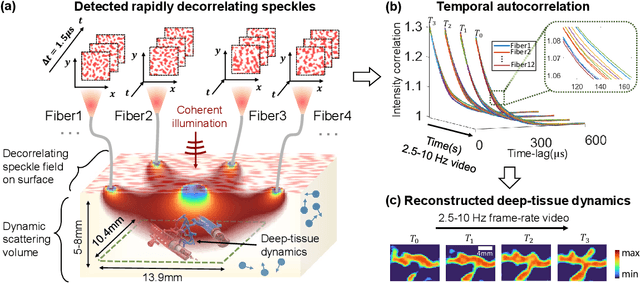

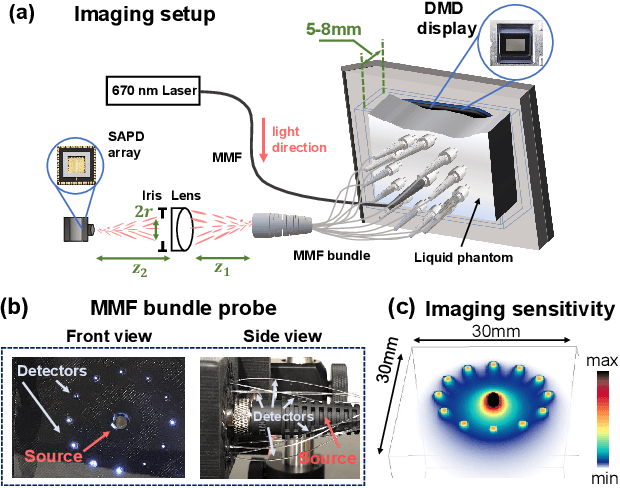

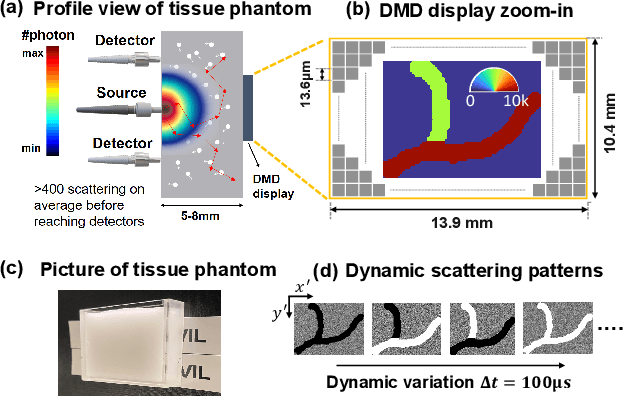

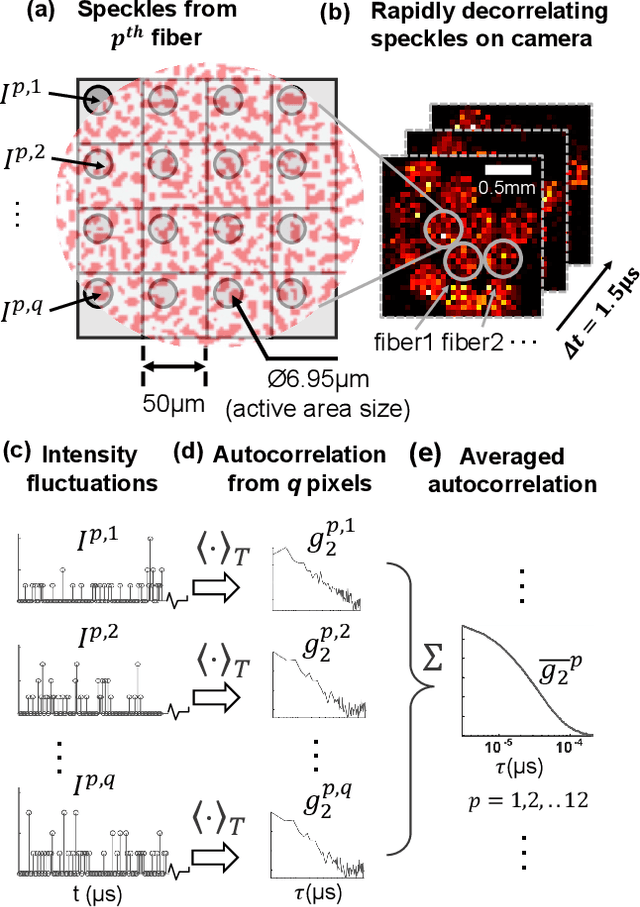

Abstract: Fast noninvasive probing of spatially varying decorrelating events, such as cerebral blood flow beneath the human skull, is an essential task in various scientific and clinical settings. One of the primary optical techniques used is diffuse correlation spectroscopy (DCS), whose classical implementation uses a single or a few single-photon detectors, resulting in poor spatial localization accuracy and relatively low temporal resolution. Here, we propose a technique termed Classifying Rapid decorrelation Events via Parallelized single photon dEtection (CREPE), a new form of DCS that can probe and classify different decorrelating movements hidden beneath turbid volumes with high sensitivity, using parallelized speckle detection from a $32\times32$ pixel SPAD array. We evaluate our setup by classifying different spatiotemporally decorrelating patterns hidden beneath a 5 mm tissue-like phantom made with rapidly decorrelating dynamic scattering media. Twelve multi-mode fibers collect scattered light from different positions on the surface of the tissue phantom. To validate our setup, we generate perturbed decorrelation patterns using both a digital micromirror device (DMD) modulated at multi-kilohertz rates and a vessel phantom containing flowing fluid. Using a deep contrastive learning algorithm that outperforms classic unsupervised learning methods, we demonstrate that our approach can accurately detect and classify different transient decorrelation events (occurring within 0.1-0.4 s) beneath turbid scattering media without any data labeling. This approach could potentially be applied to noninvasively monitor deep-tissue motion patterns, for example identifying normal or abnormal cerebral blood flow events, at multi-hertz rates within a compact and static detection probe.
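One way to see why parallelized detection helps is the standard DCS estimator: each SPAD pixel yields a photon-count time series from which the normalized intensity autocorrelation $g_2(\tau) = \langle n(t)\,n(t+\tau)\rangle / \langle n\rangle^2$ is computed, and averaging $g_2$ over many independent speckle pixels reduces estimator noise. The sketch below assumes the counts are already binned into equal time bins and that every pixel has a nonzero mean count; the function names are hypothetical, and this is a generic multi-pixel DCS estimate, not the CREPE processing pipeline or its contrastive-learning classifier.

```python
import numpy as np

def g2_per_pixel(counts, max_lag):
    """Normalized intensity autocorrelation g2(tau) for each detector pixel.

    counts: array of shape (num_pixels, num_bins) with photon counts per time bin.
    Returns shape (num_pixels, max_lag + 1); column k corresponds to a lag of k bins.
    """
    counts = np.asarray(counts, dtype=float)
    num_bins = counts.shape[1]
    mean = counts.mean(axis=1)
    g2 = np.empty((counts.shape[0], max_lag + 1))
    for lag in range(max_lag + 1):
        # <n(t) n(t + lag)> averaged over time, normalized by <n>^2
        prod = counts[:, : num_bins - lag] * counts[:, lag:]
        g2[:, lag] = prod.mean(axis=1) / mean**2
    return g2

def g2_array_average(counts, max_lag):
    """Average g2 over all pixels of the array to reduce estimator noise."""
    return g2_per_pixel(counts, max_lag).mean(axis=0)

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    # Synthetic, temporally uncorrelated photon counts for a 32 x 32 array.
    counts = rng.poisson(5.0, size=(32 * 32, 2000))
    print(g2_array_average(counts, max_lag=5))
```

For uncorrelated Poisson counts with mean $\mu$, the zero-lag value is about $1 + 1/\mu$ because of shot noise and the other lags are about 1; for a decorrelating speckle signal, $g_2(\tau)$ instead decays from a value above 1 toward 1 as $\tau$ grows, which is why DCS analyses usually focus on lags $\tau > 0$.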

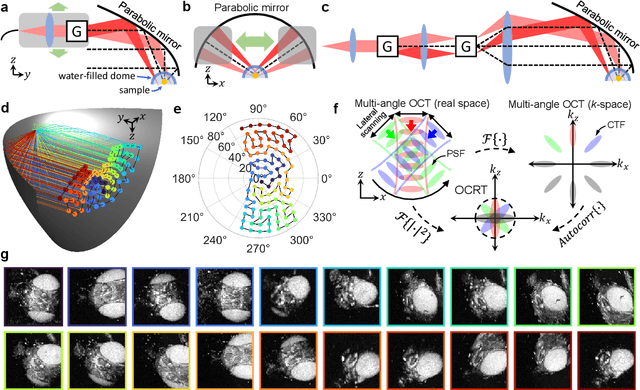

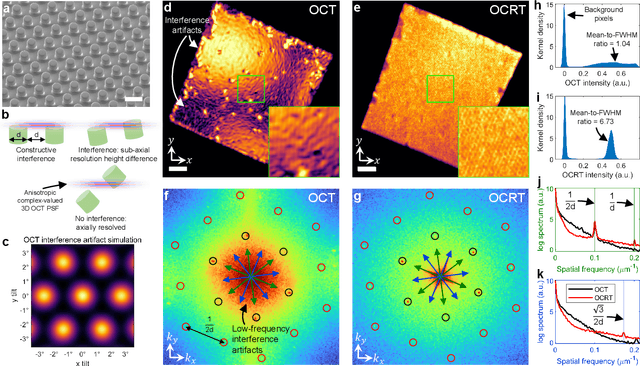

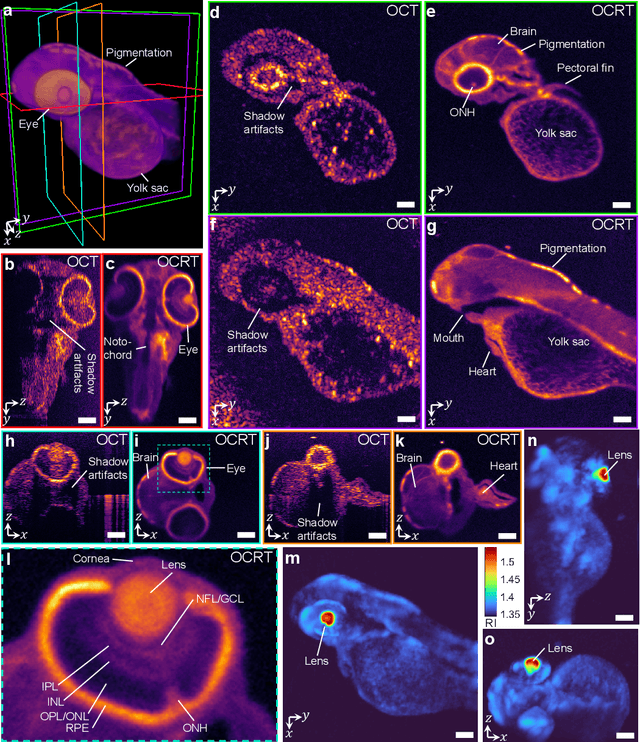

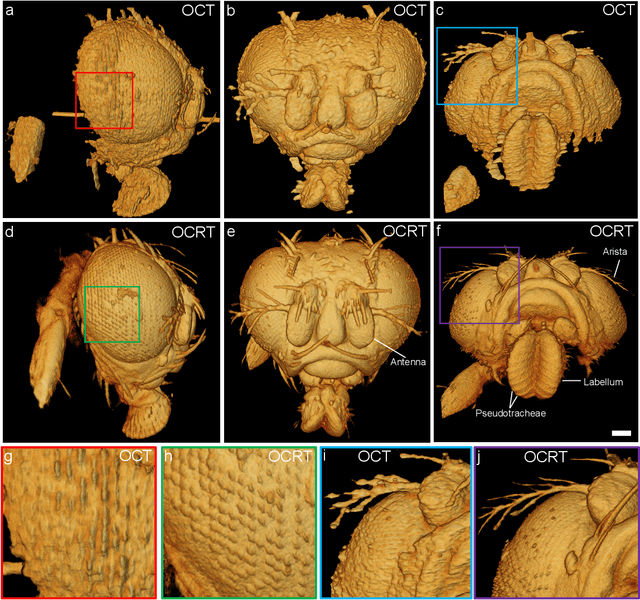

Computational 3D microscopy with optical coherence refraction tomography

Feb 24, 2022

Abstract: Optical coherence tomography (OCT) has seen widespread success as an in vivo clinical diagnostic 3D imaging modality, impacting areas including ophthalmology, cardiology, and gastroenterology. Despite its many advantages, such as high sensitivity, speed, and depth penetration, OCT suffers from several shortcomings that ultimately limit its utility as a 3D microscopy tool, such as pervasive coherent speckle noise and the poor lateral resolution required to maintain millimeter-scale imaging depths. Here, we present 3D optical coherence refraction tomography (OCRT), a computational extension of OCT that synthesizes an incoherent contrast mechanism by combining multiple OCT volumes, acquired across two rotation axes, to form a resolution-enhanced, speckle-reduced, refraction-corrected 3D reconstruction. Our label-free computational 3D microscope features a novel optical design incorporating a parabolic mirror to enable the capture of 5D plenoptic datasets, consisting of millimetric 3D fields of view over angles of up to $\pm75^\circ$, without moving the sample. We demonstrate that 3D OCRT reveals 3D features unobserved by conventional OCT in fruit fly, zebrafish, and mouse samples.
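The speckle reduction obtained by incoherently combining multiple OCT volumes can be motivated by a standard statistical fact: averaging the intensities of $N$ statistically independent, fully developed speckle patterns lowers the speckle contrast roughly as $1/\sqrt{N}$. The simulation below illustrates only that fact. It assumes each view contributes an independent speckle realization and ignores the registration, refraction correction, and resolution enhancement that 3D OCRT performs; all names are illustrative.

```python
import numpy as np

def speckle_intensity(shape, rng):
    """Fully developed speckle: intensity of a circular complex Gaussian field."""
    field = rng.standard_normal(shape) + 1j * rng.standard_normal(shape)
    return np.abs(field) ** 2

def speckle_contrast(intensity):
    """Speckle contrast C = std / mean (C = 1 for fully developed speckle)."""
    return float(intensity.std() / intensity.mean())

def incoherent_compound(num_views, shape, rng):
    """Average intensities (not complex fields) of independent speckle realizations."""
    return np.mean([speckle_intensity(shape, rng) for _ in range(num_views)], axis=0)

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    for n in (1, 4, 16, 64):
        c = speckle_contrast(incoherent_compound(n, (256, 256), rng))
        print(f"{n:3d} views: contrast {c:.3f} (theory {1 / np.sqrt(n):.3f})")
```

Averaging intensities rather than complex fields is the key design choice: summing the complex fields coherently would simply produce another fully developed speckle pattern with the same contrast.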

Toward Open-World Electroencephalogram Decoding Via Deep Learning: A Comprehensive Survey

Dec 17, 2021

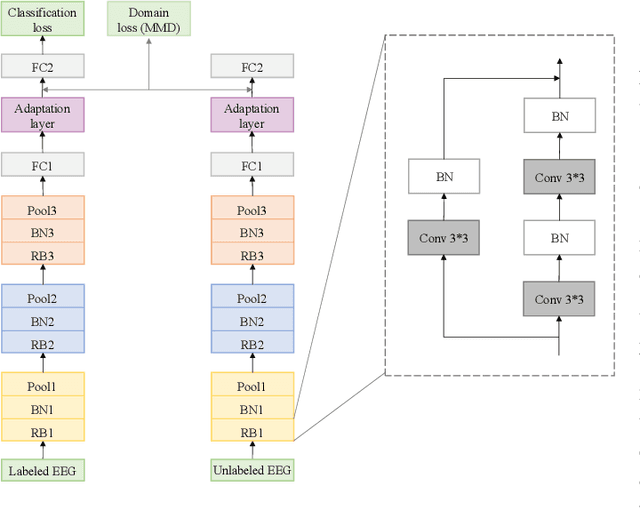

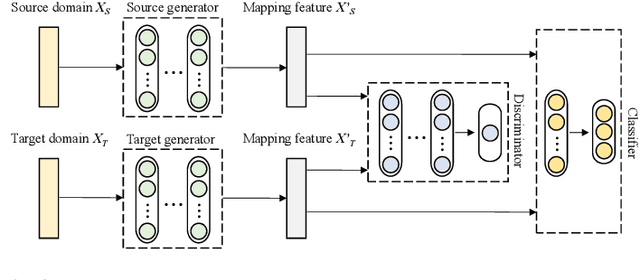

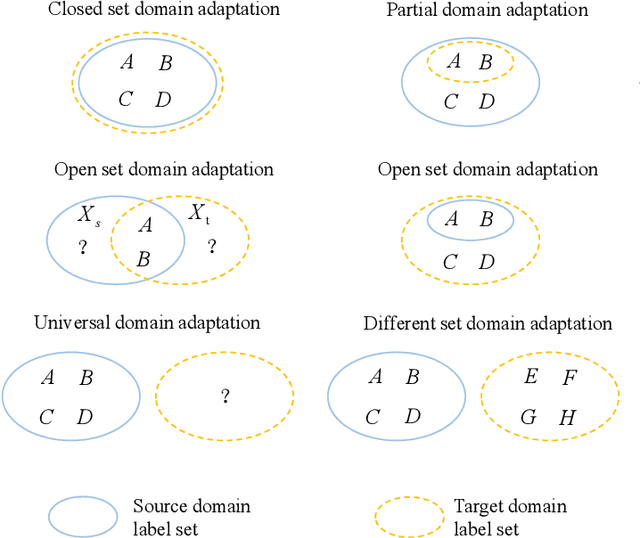

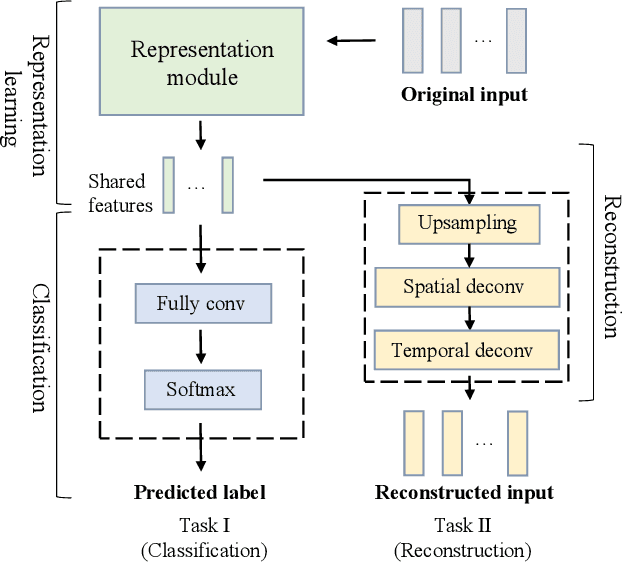

Abstract: Electroencephalogram (EEG) decoding aims to identify the perceptual, semantic, and cognitive content of neural processing based on non-invasively measured brain activity. Traditional EEG decoding methods have achieved moderate success when applied to data acquired in static, well-controlled lab environments. However, an open-world environment is a more realistic setting, where situations affecting EEG recordings can emerge unexpectedly, significantly weakening the robustness of existing methods. In recent years, deep learning (DL) has emerged as a potential solution to such problems due to its superior feature-extraction capacity. It overcomes the limitations of defining "handcrafted" features or features extracted using shallow architectures, but typically requires large amounts of costly, expertly labelled data, which are not always obtainable. Combining DL with domain-specific knowledge may allow the development of robust approaches to decode brain activity even with small-sample data. Although various DL methods have been proposed to tackle some of the challenges in EEG decoding, a systematic tutorial overview, particularly for open-world applications, is currently lacking. This article therefore provides a comprehensive survey of DL methods for open-world EEG decoding and identifies promising research directions to inspire future studies of EEG decoding in real-world applications.

Imaging dynamics beneath turbid media via parallelized single-photon detection

Jul 22, 2021

Abstract: Noninvasive optical imaging through dynamic scattering media has numerous important biomedical applications but remains a challenging task. While standard methods aim to form images based on optical absorption or fluorescent emission, it is also well established that the temporal correlation of scattered coherent light diffuses through tissue much like optical intensity. To date, however, few works have aimed to experimentally measure and process such data to demonstrate deep-tissue imaging of decorrelation dynamics. In this work, we take advantage of a single-photon avalanche diode (SPAD) array camera, with over one thousand detectors, to simultaneously detect speckle fluctuations at the single-photon level from 12 different phantom tissue surface locations delivered via a customized fiber bundle array. We then apply a deep neural network to convert the acquired single-photon measurements into video of scattering dynamics beneath rapidly decorrelating liquid tissue phantoms. We demonstrate the ability to record video of dynamic events occurring 5-8 mm beneath a decorrelating tissue phantom with mm-scale resolution and at a 2.5-10 Hz frame rate.

Mesoscopic photogrammetry with an unstabilized phone camera

Dec 11, 2020

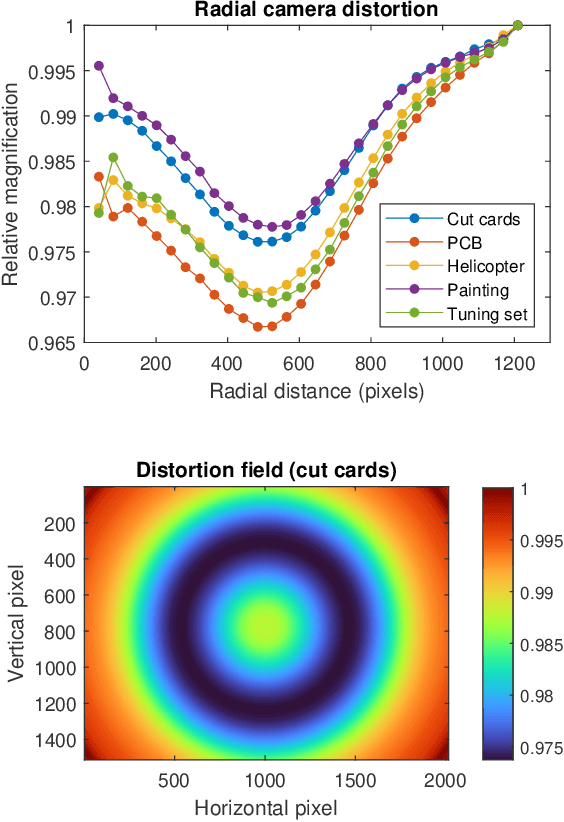

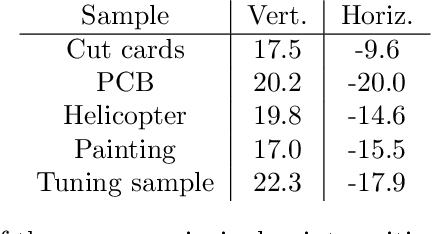

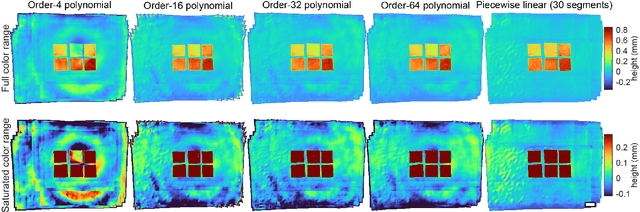

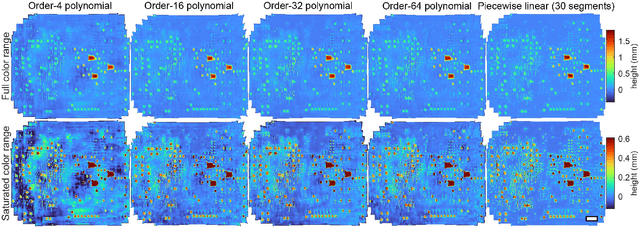

Abstract: We present a feature-free photogrammetric technique that enables quantitative 3D mesoscopic (mm-scale height variation) imaging with tens-of-micron accuracy from sequences of images acquired by a smartphone at close range (several cm) under freehand motion without additional hardware. Our end-to-end, pixel-intensity-based approach jointly registers and stitches all the images by estimating a coaligned height map, which acts as a pixel-wise radial deformation field that orthorectifies each camera image to allow homographic registration. The height maps themselves are reparameterized as the output of an untrained encoder-decoder convolutional neural network (CNN) with the raw camera images as the input, which effectively removes many reconstruction artifacts. Our method also jointly estimates both the camera's dynamic 6D pose and its distortion using a nonparametric model, the latter of which is especially important in mesoscopic applications when using cameras not designed for imaging at short working distances, such as smartphone cameras. We also propose strategies for reducing computation time and memory, applicable to other multi-frame registration problems. Finally, we demonstrate our method using sequences of multi-megapixel images captured by an unstabilized smartphone on a variety of samples (e.g., painting brushstrokes, circuit board, seeds).
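The role of the height map as a radial deformation field can be illustrated with a simplified pinhole model: for a camera looking straight down from height $H$ above a reference plane, a surface point at height $h$ appears farther from the nadir point than a reference-plane point with the same lateral position, by a factor $H/(H-h)$. Scaling its offset from the nadir by $1 - h/H$ therefore maps it back onto the reference plane, after which images can be related by a $3\times3$ homography. The sketch assumes known heights, a known nadir pixel and camera height, and no lens distortion; the names are hypothetical, and it does not reproduce the paper's CNN-reparameterized, jointly optimized reconstruction.

```python
import numpy as np

def orthorectify(pts, heights, nadir, cam_height):
    """Pull each pixel radially toward the nadir by the factor (1 - h / H).

    pts: (N, 2) pixel coordinates; heights: (N,) surface heights h;
    nadir: (2,) pixel where the optical axis meets the reference plane;
    cam_height: camera height H above the reference plane (same units as h).
    """
    pts = np.asarray(pts, dtype=float)
    nadir = np.asarray(nadir, dtype=float)
    scale = 1.0 - np.asarray(heights, dtype=float) / cam_height
    return nadir + (pts - nadir) * scale[:, None]

def apply_homography(hmat, pts):
    """Map (N, 2) points through a 3x3 homography using homogeneous coordinates."""
    pts = np.asarray(pts, dtype=float)
    homog = np.hstack([pts, np.ones((len(pts), 1))])
    mapped = homog @ np.asarray(hmat, dtype=float).T
    return mapped[:, :2] / mapped[:, 2:3]  # divide out the homogeneous scale

if __name__ == "__main__":
    pts = np.array([[110.0, 100.0], [100.0, 130.0]])
    heights = np.array([0.5, 1.0])  # hypothetical heights, same units as cam_height
    rectified = orthorectify(pts, heights, nadir=[100.0, 100.0], cam_height=50.0)
    shift = np.array([[1.0, 0.0, 3.0], [0.0, 1.0, -2.0], [0.0, 0.0, 1.0]])
    print(apply_homography(shift, rectified))
```

Because the homography is only defined up to scale, dividing by the third homogeneous coordinate makes `hmat` and any nonzero multiple of it produce the same mapped points.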

Toward Autonomous Robotic Micro-Suturing using Optical Coherence Tomography Calibration and Path Planning

Mar 04, 2020

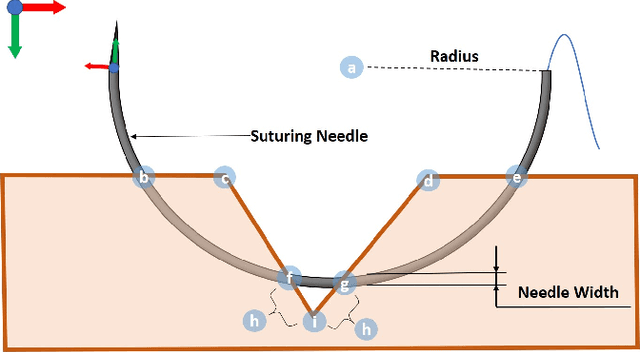

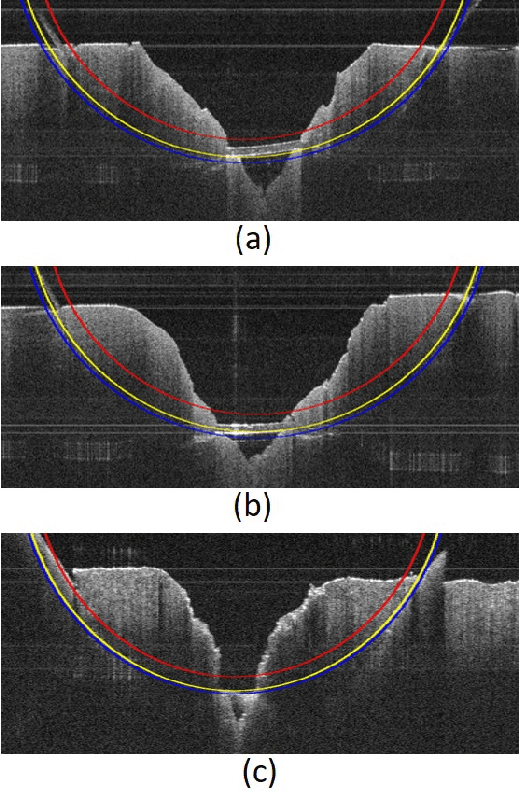

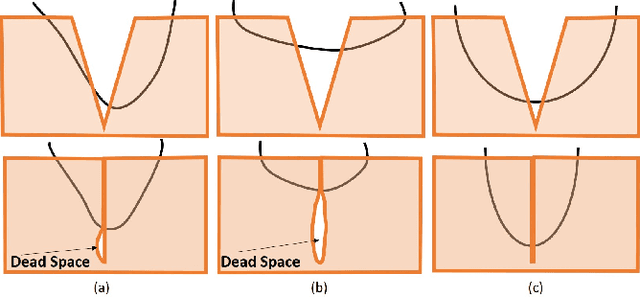

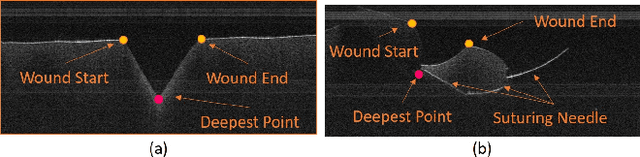

Abstract: Robotic automation has the potential to assist human surgeons in performing suturing tasks in microsurgery, and to do so, a robot must be able to guide a needle with sub-millimeter precision through soft tissue. This paper presents a robotic suturing system that uses a 3D optical coherence tomography (OCT) system for imaging feedback. Calibration of the robot-OCT and robot-needle transforms, wound detection, keypoint identification, and path planning are all performed automatically. The calibration method uses a variant of iterative closest points (ICP) to handle uncertainty in the needle's pose when it is grasped. The path planner uses the identified wound shape to calculate needle entry and exit points that yield an evenly matched wound shape after closure. Experiments on tissue phantoms and animal tissue demonstrate that the system can pass a suture needle through wounds with 0.27 mm overall accuracy in achieving the planned entry and exit points.
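The calibration step relies on a variant of iterative closest point (ICP). For reference, the sketch below shows only the basic point-to-point ICP loop: match each source point to its nearest target point, solve for the least-squares rigid transform with the SVD-based Kabsch method, apply it, and repeat until the mean matching error stops changing. Brute-force nearest-neighbour search and the function names are illustrative assumptions; the paper's variant and its handling of needle-grasp uncertainty are not reproduced here.

```python
import numpy as np

def best_fit_transform(src, dst):
    """Least-squares rigid transform (R, t) with R @ src_i + t ~ dst_i (Kabsch/SVD)."""
    src_c, dst_c = src.mean(axis=0), dst.mean(axis=0)
    cov = (src - src_c).T @ (dst - dst_c)
    u, _, vt = np.linalg.svd(cov)
    rot = vt.T @ u.T
    if np.linalg.det(rot) < 0:  # reflection: flip the singular vector with the smallest singular value
        vt[-1, :] *= -1
        rot = vt.T @ u.T
    return rot, dst_c - rot @ src_c

def icp(src, dst, max_iters=50, tol=1e-10):
    """Basic point-to-point ICP; returns the accumulated (R, t) mapping src onto dst."""
    rot_total, t_total = np.eye(3), np.zeros(3)
    current = np.asarray(src, dtype=float).copy()
    dst = np.asarray(dst, dtype=float)
    prev_err = np.inf
    for _ in range(max_iters):
        # Brute-force nearest neighbours: squared distances, shape (N_src, N_dst).
        d2 = ((current[:, None, :] - dst[None, :, :]) ** 2).sum(axis=2)
        idx = d2.argmin(axis=1)
        err = np.sqrt(d2[np.arange(len(current)), idx]).mean()
        if abs(prev_err - err) < tol:
            break
        prev_err = err
        rot, t = best_fit_transform(current, dst[idx])
        current = current @ rot.T + t
        # Compose the new step with the transform accumulated so far.
        rot_total, t_total = rot @ rot_total, rot @ t_total + t
    return rot_total, t_total
```

Plain ICP converges only to the nearest local minimum, so in practice it needs a reasonable initial alignment; robust variants typically add outlier rejection or weighting on top of this loop.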
