Rebecca Willett

Accelerating PDE Surrogates via RL-Guided Mesh Optimization

Mar 02, 2026

Abstract: Deep surrogate models for parametric partial differential equations (PDEs) can deliver high-fidelity approximations but remain prohibitively data-hungry: training often requires thousands of fine-grid simulations, each incurring substantial computational cost. To address this challenge, we introduce RLMesh, an end-to-end framework for efficient surrogate training under a limited simulation budget. The key idea is to use reinforcement learning (RL) to adaptively allocate mesh grid points non-uniformly within each simulation domain, concentrating numerical resolution in the regions most critical for accurate PDE solutions. A lightweight proxy model further accelerates RL training by providing efficient reward estimates without full surrogate retraining. Experiments on PDE benchmarks demonstrate that RLMesh achieves accuracy competitive with baselines while requiring substantially fewer simulation queries. These results show that solver-level spatial adaptivity can dramatically improve the efficiency of surrogate training pipelines, enabling practical deployment of learning-based PDE surrogates across a wide range of problems.
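The abstract does not say how RLMesh parameterizes a non-uniform grid. For orientation only, the classical non-learned way to concentrate points where resolution matters is equidistribution: place grid points so that every cell carries an equal share of an importance density. The 1D sketch below is that classical heuristic, not RLMesh's RL policy; `density` is a hypothetical stand-in for whatever importance signal a policy or error indicator would supply.

```python
import math


def equidistributed_grid(density, a, b, n_points, n_quad=10_000):
    """Place n_points (>= 2) in [a, b] so each cell holds equal mass of `density`.

    `density` is a hypothetical positive importance function (assumption, not
    part of RLMesh). The grid is found by inverting its cumulative integral.
    """
    # Cumulative integral of the density on a fine uniform grid (trapezoid rule).
    xs = [a + (b - a) * i / n_quad for i in range(n_quad + 1)]
    cdf = [0.0]
    for x0, x1 in zip(xs, xs[1:]):
        cdf.append(cdf[-1] + 0.5 * (density(x0) + density(x1)) * (x1 - x0))
    total = cdf[-1]

    # Invert the CDF at equally spaced mass levels with linear interpolation.
    grid, j = [], 0
    for k in range(n_points):
        target = total * k / (n_points - 1)
        while j < n_quad - 1 and cdf[j + 1] < target:
            j += 1
        seg = cdf[j + 1] - cdf[j]
        t = 0.0 if seg == 0 else (target - cdf[j]) / seg
        grid.append(xs[j] + t * (xs[j + 1] - xs[j]))
    return grid
```

For a density with a sharp peak at x = 0.5, e.g. `1 + 50 * math.exp(-((x - 0.5) / 0.05) ** 2)` on [0, 1], most of 41 points land in [0.4, 0.6], whereas a uniform grid would put only about a fifth of them there.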

A Model-Guided Neural Network Method for the Inverse Scattering Problem

Dec 10, 2025

Abstract: Inverse medium scattering is an ill-posed, nonlinear wave-based imaging problem arising in medical imaging, remote sensing, and non-destructive testing. Relative to classical optimization-based approaches, machine learning (ML) methods offer faster inference and more flexibility in capturing prior knowledge of imaging targets; however, they perform poorly in regimes where the scattering behavior is highly nonlinear. A key limitation is that ML methods struggle to incorporate the physics governing the scattering process, which is typically inferred implicitly from the training data or only loosely enforced through architectural design. In this paper, we present a method that endows a machine learning framework with explicit knowledge of the problem physics, in the form of a differentiable solver representing the forward model. The proposed method progressively refines reconstructions of the scattering potential using measurements at increasing wave frequencies, following a classical strategy for stabilizing recovery. Empirically, we find that our method provides high-quality reconstructions at a fraction of the computational or sampling costs of competing approaches.

Sketch-Augmented Features Improve Learning Long-Range Dependencies in Graph Neural Networks

Nov 05, 2025

Abstract: Graph Neural Networks learn on graph-structured data by iteratively aggregating local neighborhood information. While this local message-passing paradigm imparts a powerful inductive bias and exploits graph sparsity, it also poses three key challenges: (i) oversquashing of long-range information, (ii) oversmoothing of node representations, and (iii) limited expressive power. In this work, we inject randomized global embeddings of node features, which we term Sketched Random Features, into standard GNNs, enabling them to efficiently capture long-range dependencies. The embeddings are unique, distance-sensitive, and topology-agnostic, properties that we show analytically and empirically alleviate the aforementioned limitations when injected into GNNs. Experimental results on real-world graph learning tasks confirm that this strategy consistently improves performance over baseline GNNs, offering both a standalone solution and a complementary enhancement to existing techniques such as graph positional encodings. Our source code is available at https://github.com/ryienh/sketched-random-features.

Hierarchical Implicit Neural Emulators

Jun 05, 2025

Abstract: Neural PDE solvers offer a powerful tool for modeling complex dynamical systems, but they often struggle with error accumulation over long time horizons and with maintaining stability and physical consistency. We introduce a multiscale implicit neural emulator that enhances long-term prediction accuracy by conditioning on a hierarchy of lower-dimensional future state representations. Drawing inspiration from the stability properties of implicit numerical time-stepping methods, our approach leverages predictions several steps ahead in time, at increasing compression rates, to refine the next-timestep prediction. By actively adjusting the temporal downsampling ratios, our design enables the model to capture dynamics across multiple granularities and enforce long-range temporal coherence. Experiments on turbulent fluid dynamics show that our method achieves high short-term accuracy and produces long-term stable forecasts, significantly outperforming autoregressive baselines while adding minimal computational overhead.

Quality Measures for Dynamic Graph Generative Models

Mar 03, 2025

Abstract: Deep generative models have recently achieved significant success in modeling graph data, including dynamic graphs, where topology and features evolve over time. However, unlike in vision and natural language domains, evaluating generative models for dynamic graphs is challenging because their output is difficult to visualize, which makes quantitative metrics essential. In this work, we develop a new quality metric for evaluating generative models of dynamic graphs. Current metrics for dynamic graphs typically discretize the continuous evolution of graphs into static snapshots and then apply conventional graph similarity measures. This approach has several limitations: (a) it models temporally related events as i.i.d. samples, failing to capture the non-uniform evolution of dynamic graphs; (b) it lacks a unified measure that is sensitive to both features and topology; (c) it fails to provide a scalar metric, requiring multiple metrics, none of which is clearly superior; and (d) it requires explicitly instantiating each static snapshot, leading to impractical runtime demands that hinder evaluation at scale. We propose a novel metric based on the Johnson-Lindenstrauss lemma, applying random projections directly to dynamic graph data. This results in an expressive, scalar, and application-agnostic measure of dynamic graph similarity that overcomes the limitations of traditional methods. We also provide a comprehensive empirical evaluation of metrics for continuous-time dynamic graphs, demonstrating the effectiveness of our approach compared to existing methods. Our implementation is available at https://github.com/ryienh/jl-metric.
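The metric rests on the Johnson-Lindenstrauss lemma: a random linear map into k dimensions approximately preserves pairwise Euclidean distances, with fluctuations shrinking roughly like 1/sqrt(k). The generic check below illustrates that property on synthetic vectors. It is not the proposed metric, and how dynamic graphs are vectorized before projection is not covered by the abstract; the dimensions used are arbitrary.

```python
import numpy as np


def jl_project(X, k, seed=0):
    """Map each row of X (n x d) to k dims with a Gaussian matrix scaled by 1/sqrt(k).

    The 1/sqrt(k) scaling makes the squared norm of a projected vector an
    unbiased estimate of the original squared norm.
    """
    rng = np.random.default_rng(seed)
    R = rng.standard_normal((X.shape[1], k)) / np.sqrt(k)
    return X @ R


def pairwise_dists(Z):
    """Euclidean distance matrix between the rows of Z."""
    sq = np.sum(Z ** 2, axis=1)
    d2 = sq[:, None] + sq[None, :] - 2.0 * Z @ Z.T
    return np.sqrt(np.maximum(d2, 0.0))  # clip tiny negatives caused by round-off
```

Comparing `pairwise_dists(X)` with `pairwise_dists(jl_project(X, k))` for 50 random points in 2000 dimensions and k = 800 gives distance ratios close to 1 for every pair, even though the projection never looks at the data.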

Can a calibration metric be both testable and actionable?

Feb 27, 2025

Abstract: Forecast probabilities often serve as critical inputs for binary decision making. In such settings, calibration (ensuring that forecasted probabilities match empirical frequencies) is essential. Although the common notion of Expected Calibration Error (ECE) provides actionable insights for decision making, it is not testable: it cannot be empirically estimated in many practical cases. Conversely, the recently proposed Distance from Calibration (dCE) is testable but not actionable, since it lacks the decision-theoretic guarantees needed for high-stakes applications. We introduce Cutoff Calibration Error, a calibration measure that bridges this gap by assessing calibration over intervals of forecasted probabilities. We show that Cutoff Calibration Error is both testable and actionable, and we examine its implications for popular post-hoc calibration methods such as isotonic regression and Platt scaling.
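For reference, the conventional plug-in estimate of ECE for binary forecasts groups forecasts into equal-width probability bins and averages the gap between the mean forecast and the observed frequency in each bin, weighted by bin size. The sketch below is that standard binned estimator, shown for comparison; it is not Cutoff Calibration Error, whose precise definition is not given in the abstract. Note that its value depends on the choice of `n_bins`.

```python
def binned_ece(probs, labels, n_bins=10):
    """Equal-width binned estimate of Expected Calibration Error.

    probs: forecasted probabilities in [0, 1]; labels: binary outcomes (0 or 1).
    """
    n = len(probs)
    bins = [[] for _ in range(n_bins)]
    for p, y in zip(probs, labels):
        idx = min(int(p * n_bins), n_bins - 1)  # p == 1.0 falls into the last bin
        bins[idx].append((p, y))

    ece = 0.0
    for b in bins:
        if b:
            mean_p = sum(p for p, _ in b) / len(b)  # average forecast in the bin
            freq = sum(y for _, y in b) / len(b)    # empirical frequency of y = 1
            ece += len(b) / n * abs(freq - mean_p)
    return ece
```

For example, forecasts `[0.1, 0.1, 0.9, 0.9]` with outcomes `[0, 0, 1, 1]` give an estimate of about 0.1, since each occupied bin is off by 0.1 and holds half the data.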

Solving Inverse Problems with Deep Linear Neural Networks: Global Convergence Guarantees for Gradient Descent with Weight Decay

Feb 21, 2025

Abstract: Machine learning methods are commonly used to solve inverse problems, in which an unknown signal must be estimated from a small number of measurements generated by a known acquisition procedure. In particular, neural networks perform well empirically but have limited theoretical guarantees. In this work, we study an underdetermined linear inverse problem that admits multiple possible solution mappings. A standard remedy for establishing uniqueness of the solution mapping (e.g., in compressed sensing) is to assume knowledge of latent low-dimensional structure in the source signal. We ask the following question: do deep neural networks adapt to this low-dimensional structure when trained by gradient descent with weight decay regularization? We prove that mildly overparameterized deep linear networks trained in this manner converge to an approximate solution that accurately solves the inverse problem while implicitly encoding latent subspace structure. To our knowledge, this is the first result to rigorously show that deep linear networks trained with weight decay automatically adapt to latent subspace structure in the data under practical stepsize and weight initialization schemes. Our work highlights that regularization and overparameterization improve generalization, while overparameterization also accelerates convergence during training.

Faster Adaptive Optimization via Expected Gradient Outer Product Reparameterization

Feb 03, 2025

Abstract: Adaptive optimization algorithms, such as Adagrad, Adam, and their variants, have found widespread use in machine learning, signal processing, and many other settings. Several methods in this family are not rotationally equivariant, meaning that simple reparameterizations (i.e., changes of basis) can drastically affect their convergence. However, their sensitivity to the choice of parameterization has not been systematically studied; it is not clear how to identify a "favorable" change of basis in which these methods perform best. In this paper, we propose a reparameterization method and demonstrate, both theoretically and empirically, its potential to improve their convergence behavior. Our method is an orthonormal transformation based on the expected gradient outer product (EGOP) matrix, which can be approximated using either full-batch or stochastic gradient oracles. We show that for a broad class of functions, the sensitivity of adaptive algorithms to the choice of basis is influenced by the decay of the EGOP matrix spectrum. We illustrate the potential impact of EGOP reparameterization by presenting empirical evidence and theoretical arguments that common machine learning tasks with "natural" data exhibit EGOP spectral decay.
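The abstract describes the reparameterization as an orthonormal change of basis built from the EGOP matrix, E[∇f(x) ∇f(x)^T], estimated from gradient samples. A minimal Monte Carlo sketch of that construction follows, assuming a gradient oracle `grad_fn` and a sampler `sample_fn` (both hypothetical callables). The abstract does not specify the sampling distribution or sample count, so `n_samples` is arbitrary, and how the paper refreshes the basis during optimization is not shown.

```python
import numpy as np


def egop_basis(grad_fn, sample_fn, n_samples=1000, seed=0):
    """Monte Carlo estimate of EGOP = E[grad f(x) grad f(x)^T] and its eigenbasis.

    grad_fn(x) returns the gradient of f at x; sample_fn(rng) draws x from an
    assumed sampling distribution. Both are hypothetical user-supplied callables.
    """
    rng = np.random.default_rng(seed)
    G = np.stack([grad_fn(sample_fn(rng)) for _ in range(n_samples)])  # (n_samples, d)
    egop = G.T @ G / n_samples
    eigvals, eigvecs = np.linalg.eigh(egop)  # symmetric PSD; eigh returns ascending order
    order = np.argsort(eigvals)[::-1]        # put the leading direction first
    return eigvals[order], eigvecs[:, order]


def reparameterize(grad_fn, V):
    """Gradient oracle for g(y) = f(V y); by the chain rule it is V^T grad f(V y)."""
    return lambda y: V.T @ grad_fn(V @ y)
```

An adaptive method such as Adagrad would then be run on y using the oracle returned by `reparameterize`, with iterates mapped back to the original coordinates through x = V y. For a quadratic f(x) = x^T H x / 2 with x drawn from a standard normal, the EGOP is H^2, so the estimated basis approximately recovers the eigenvectors of H.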

Nested Diffusion Models Using Hierarchical Latent Priors

Dec 08, 2024

Abstract: We introduce nested diffusion models, an efficient and powerful hierarchical generative framework that substantially enhances the generation quality of diffusion models, particularly for images of complex scenes. Our approach employs a series of diffusion models to progressively generate latent variables at different semantic levels. Each model in this series is conditioned on the output of the preceding higher-level models, culminating in image generation. Hierarchical latent variables guide the generation process along predefined semantic pathways, allowing our approach to capture intricate structural details while significantly improving image quality. To construct these latent variables, we leverage a pre-trained visual encoder, which learns strong semantic visual representations, and modulate its capacity via dimensionality reduction and noise injection. Across multiple datasets, our system demonstrates significant enhancements in image quality for both unconditional and class/text conditional generation. Moreover, our unconditional generation system substantially outperforms the baseline conditional system. These advancements incur minimal computational overhead as the more abstract levels of our hierarchy work with lower-dimensional representations.

Stabilizing black-box model selection with the inflated argmax

Oct 23, 2024

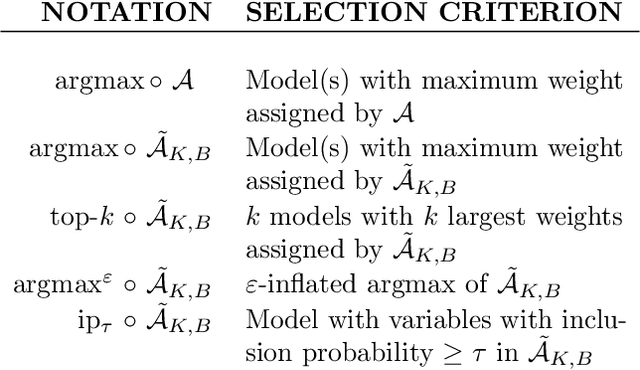

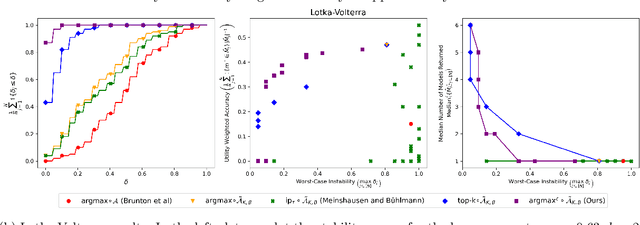

Abstract: Model selection is the process of choosing from a class of candidate models given data. For instance, methods such as the LASSO and sparse identification of nonlinear dynamics (SINDy) formulate model selection as finding a sparse solution to a linear system of equations determined by training data. However, absent strong assumptions, such methods are highly unstable: if a single data point is removed from the training set, a different model may be selected. This paper presents a new approach to stabilizing model selection that leverages a combination of bagging and an "inflated" argmax operation. Our method selects a small collection of models that all fit the data, and it is stable in that, with high probability, the removal of any training point will result in a collection of selected models that overlaps with the original collection. In addition to developing theoretical guarantees, we illustrate this method in (a) a simulation in which strongly correlated covariates make standard LASSO model selection highly unstable and (b) a Lotka-Volterra model selection problem focused on identifying how competition in an ecosystem influences species' abundances. In both settings, the proposed method yields stable and compact collections of selected models, outperforming a variety of benchmarks.
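The two ingredients named in the abstract, bagging and an inflated argmax, can be sketched as follows. This is a minimal sketch under stated assumptions: `select_model` is a hypothetical base selector that maps a dataset to a candidate-model index; bagging uses bootstrap resampling here, which is an assumption; and `inflated_argmax` keeps every model whose "is the top vote-getter" region lies within `eps` of the bagged vote shares. That plain distance-to-region rule is a simplified stand-in and may differ from the paper's exact definition and tolerance scaling.

```python
import math
import random


def bagged_frequencies(data, select_model, n_models, n_bags=100, seed=0):
    """Fraction of bootstrap resamples on which each candidate model is selected.

    `select_model` is a hypothetical base selector returning an index in
    range(n_models), e.g. a LASSO support looked up in a list of candidates.
    """
    rng = random.Random(seed)
    counts = [0] * n_models
    for _ in range(n_bags):
        resample = [rng.choice(data) for _ in data]  # bootstrap: sample with replacement
        counts[select_model(resample)] += 1
    return [c / n_bags for c in counts]


def dist_to_argmax_region(w, j):
    """Euclidean distance from w to the set {v : v[j] >= v[k] for every k}."""
    others = sorted((w[k] for k in range(len(w)) if k != j), reverse=True)
    total, m = w[j], 0
    # Pool w[j] with the largest competitors until their common value c
    # (the pooled mean) is at least every competitor left outside the pool.
    while m < len(others) and others[m] > total / (m + 1):
        total += others[m]
        m += 1
    c = total / (m + 1)
    return math.sqrt((w[j] - c) ** 2 + sum((x - c) ** 2 for x in others[:m]))


def inflated_argmax(w, eps):
    """Indices whose argmax region lies within eps of w (simplified variant)."""
    return [j for j in range(len(w)) if dist_to_argmax_region(w, j) < eps]
```

With vote shares `w = [0.5, 0.3, 0.2]`, `inflated_argmax(w, 0.15)` returns `[0, 1]`. Model 1 is within about 0.14 of becoming the winner, so it is kept alongside model 0, while model 2 (about 0.21 away) is not. A plain argmax would return only model 0 and could flip if one data point changed the votes.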
