Nuwan Bandara

Inference-Time Gaze Refinement for Micro-Expression Recognition: Enhancing Event-Based Eye Tracking with Motion-Aware Post-Processing

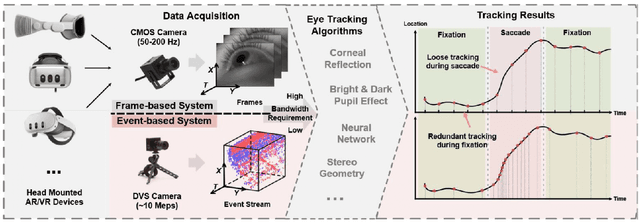

Jun 14, 2025

Abstract:Event-based eye tracking holds significant promise for fine-grained cognitive state inference, offering high temporal resolution and robustness to motion artifacts, critical features for decoding subtle mental states such as attention, confusion, or fatigue. In this work, we introduce a model-agnostic, inference-time refinement framework designed to enhance the output of existing event-based gaze estimation models without modifying their architecture or requiring retraining. Our method comprises two key post-processing modules: (i) Motion-Aware Median Filtering, which suppresses blink-induced spikes while preserving natural gaze dynamics, and (ii) Optical Flow-Based Local Refinement, which aligns gaze predictions with cumulative event motion to reduce spatial jitter and temporal discontinuities. To complement traditional spatial accuracy metrics, we propose a novel Jitter Metric that captures the temporal smoothness of predicted gaze trajectories based on velocity regularity and local signal complexity. Together, these contributions significantly improve the consistency of event-based gaze signals, making them better suited for downstream tasks such as micro-expression analysis and mind-state decoding. Our results demonstrate consistent improvements across multiple baseline models on controlled datasets, laying the groundwork for future integration with multimodal affect recognition systems in real-world environments.
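The abstract does not spell out how Motion-Aware Median Filtering works, so the following is only a minimal sketch of the general idea under stated assumptions: a sample is treated as a blink-like spike when it jumps away from both neighbours by more than a threshold, and only those samples are replaced by a local median, so ordinary gaze motion is left untouched. The function name `motion_aware_median`, the `window` size, and the `spike_threshold` value are hypothetical and are not taken from the paper.

```python
from statistics import median


def motion_aware_median(xs, window=5, spike_threshold=20.0):
    """Replace isolated spikes in a 1-D gaze coordinate trace by a local median.

    Assumption (not from the paper): a spike is a sample whose jump from the
    previous sample AND jump to the next sample both exceed spike_threshold.
    """
    half = window // 2
    out = list(xs)
    for i in range(1, len(xs) - 1):
        jump_in = abs(xs[i] - xs[i - 1])
        jump_out = abs(xs[i + 1] - xs[i])
        if jump_in > spike_threshold and jump_out > spike_threshold:
            lo = max(0, i - half)
            hi = min(len(xs), i + half + 1)
            out[i] = median(xs[lo:hi])  # median over the original samples
    return out


# Hypothetical x-coordinate trace (pixels) with one blink-like spike at index 3.
trace = [100.0, 101.0, 102.0, 160.0, 104.0, 105.0, 106.0]
filtered = motion_aware_median(trace)
```

In this sketch the x and y gaze coordinates would be filtered independently, and samples that are not flagged as spikes pass through unchanged.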

Event-Based Eye Tracking. 2025 Event-based Vision Workshop

Apr 25, 2025

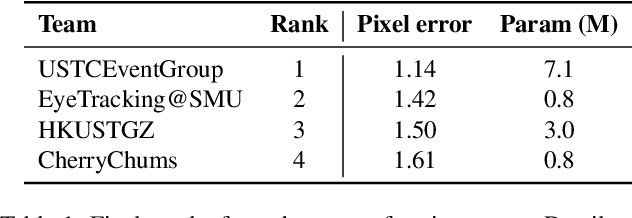

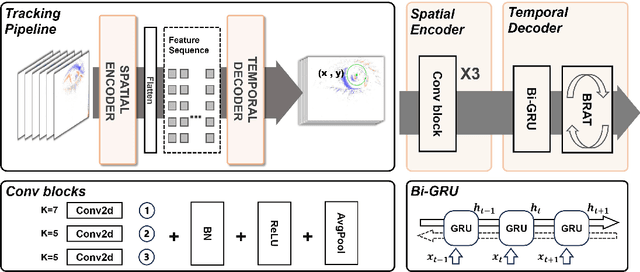

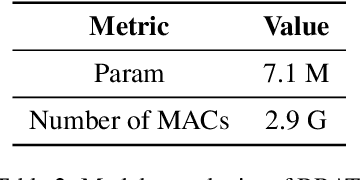

Abstract:This survey reviews the 2025 Event-Based Eye Tracking Challenge, organized as part of the 2025 CVPR Event-based Vision Workshop. The challenge focuses on predicting the pupil center from eye movements recorded by an event camera. We review and summarize the innovative methods of the top-ranked teams in the challenge to advance future event-based eye tracking research. For each method, accuracy, model size, and number of operations are reported. We also discuss event-based eye tracking from the perspective of hardware design.

NTIRE 2025 Challenge on Event-Based Image Deblurring: Methods and Results

Apr 16, 2025

Abstract:This paper presents an overview of the NTIRE 2025 First Challenge on Event-Based Image Deblurring, detailing the proposed methodologies and corresponding results. The primary goal of the challenge is to design an event-based method that achieves high-quality image deblurring, with performance quantitatively assessed using Peak Signal-to-Noise Ratio (PSNR). Notably, there are no restrictions on computational complexity or model size. The task focuses on leveraging both events and images as inputs for single-image deblurring. A total of 199 participants registered, among whom 15 teams successfully submitted valid results, offering valuable insights into the current state of event-based image deblurring. We anticipate that this challenge will drive further advancements in event-based vision research.
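For readers unfamiliar with the metric named above, PSNR is conventionally defined as 10 log10(MAX^2 / MSE), where MSE is the mean squared error between the reference and restored images and MAX is the largest possible pixel value. The sketch below computes it over flat lists of pixel values. The function name `psnr` and the default `max_value=255.0` are assumptions for illustration; the abstract does not state the challenge's exact evaluation protocol (value range, colour space, or cropping).

```python
import math


def psnr(reference, restored, max_value=255.0):
    """Peak Signal-to-Noise Ratio in dB between two equal-length pixel sequences."""
    if len(reference) != len(restored) or not reference:
        raise ValueError("inputs must be non-empty and of equal length")
    mse = sum((a - b) ** 2 for a, b in zip(reference, restored)) / len(reference)
    if mse == 0:
        return math.inf  # identical images: PSNR is unbounded
    return 10.0 * math.log10(max_value ** 2 / mse)


# Hypothetical 4-pixel example: every pixel is off by the full 8-bit range.
worst_case = psnr([0, 0, 0, 0], [255, 255, 255, 255])
identical = psnr([10, 20, 30], [10, 20, 30])
```

Higher values indicate a restored image closer to the reference, which is why the metric suits a ranking with no constraint on model size.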

EyeTrAES: Fine-grained, Low-Latency Eye Tracking via Adaptive Event Slicing

Sep 27, 2024

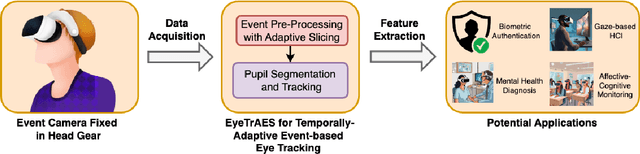

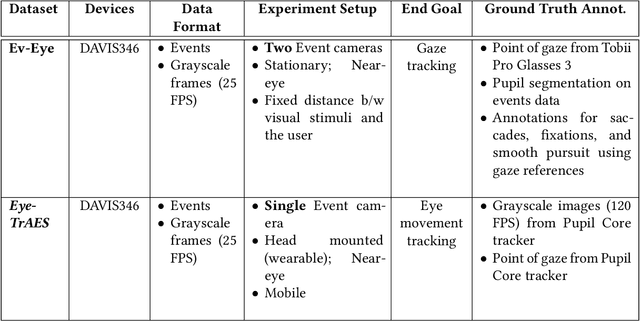

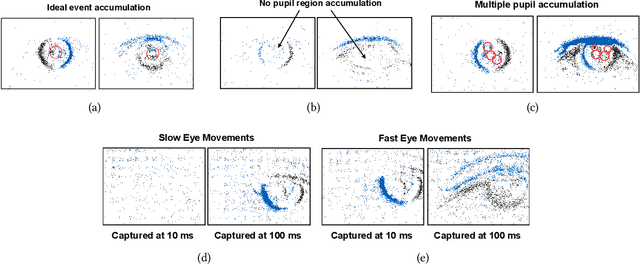

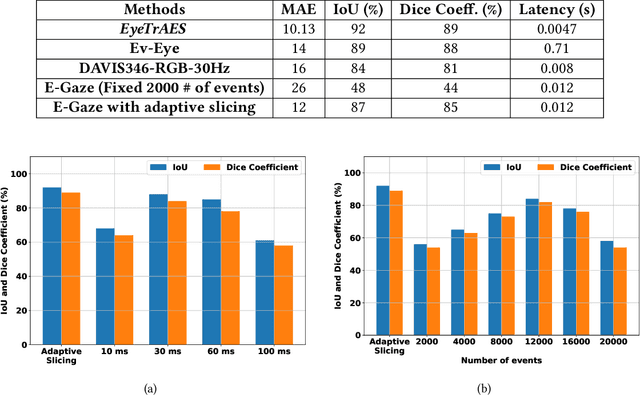

Abstract:Eye-tracking technology has gained significant attention in recent years due to its wide range of applications in human-computer interaction, virtual and augmented reality, and wearable health. Traditional RGB camera-based eye-tracking systems often struggle with poor temporal resolution and computational constraints, limiting their effectiveness in capturing rapid eye movements. To address these limitations, we propose EyeTrAES, a novel approach using neuromorphic event cameras for high-fidelity tracking of natural pupillary movement that shows significant kinematic variance. One of EyeTrAES's highlights is the use of a novel adaptive windowing/slicing algorithm that ensures just the right amount of descriptive asynchronous event data accumulation within an event frame, across a wide range of eye movement patterns. EyeTrAES then applies lightweight image processing functions over accumulated event frames from just a single eye to perform pupil segmentation and tracking. We show that these methods boost pupil tracking fidelity by 6+%, achieving IoU ≈ 92%, while incurring at least 3x lower latency than competing pure event-based eye tracking alternatives [38]. We additionally demonstrate that the microscopic pupillary motion captured by EyeTrAES exhibits distinctive variations across individuals and can thus serve as a biometric fingerprint. For robust user authentication, we train a lightweight per-user Random Forest classifier using a novel feature vector of short-term pupillary kinematics, comprising a sliding window of pupil (location, velocity, acceleration) triples. Experimental studies with two different datasets demonstrate that the EyeTrAES-based authentication technique can simultaneously achieve high authentication accuracy (≈0.82) and low processing latency (≈12 ms), and significantly outperform multiple state-of-the-art competitive baselines.
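The feature vector described above, a sliding window of pupil (location, velocity, acceleration) triples, can be illustrated with simple finite differences. This is a rough sketch only: the abstract does not say how velocity and acceleration are estimated, how long the window is, or how the triples are laid out, so the function names `kinematic_triples` and `windowed_features`, the backward-difference scheme, and the fixed time step `dt` are all assumptions.

```python
def kinematic_triples(points, dt):
    """Build (location, velocity, acceleration) triples from 2-D pupil centres.

    Assumption: samples are evenly spaced by dt and derivatives are estimated
    with backward finite differences, so the first two samples yield no triple.
    """
    triples = []
    for i in range(2, len(points)):
        (x0, y0), (x1, y1), (x2, y2) = points[i - 2], points[i - 1], points[i]
        vx, vy = (x2 - x1) / dt, (y2 - y1) / dt      # current velocity
        pvx, pvy = (x1 - x0) / dt, (y1 - y0) / dt    # previous velocity
        ax, ay = (vx - pvx) / dt, (vy - pvy) / dt    # acceleration
        triples.append(((x2, y2), (vx, vy), (ax, ay)))
    return triples


def windowed_features(triples, window):
    """Flatten each run of `window` consecutive triples into one feature vector."""
    features = []
    for start in range(len(triples) - window + 1):
        flat = []
        for loc, vel, acc in triples[start:start + window]:
            flat.extend(loc + vel + acc)
        features.append(flat)
    return features


# Hypothetical pupil centres (pixels) sampled once per time unit.
points = [(0, 0), (1, 0), (3, 0), (6, 0)]
triples = kinematic_triples(points, dt=1.0)
features = windowed_features(triples, window=2)
```

Each flattened vector holds six numbers per triple and could be passed to a per-user classifier such as the Random Forest mentioned in the abstract.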

AirSPEC: An IoT-empowered Air Quality Monitoring System integrated with a Machine Learning Framework to Detect and Predict defined Air Quality parameters

Nov 28, 2021

Abstract:The air that surrounds us is the cardinal source of respiration for all life-forms. It is therefore vital to highlight that balanced air quality is of utmost importance to the respiratory health of all living beings, environmental homeostasis, and even economic equilibrium. Nevertheless, a gradual deterioration of air quality has been observed over the last few decades, due to the continuous increase in polluting emissions from automobiles and industries into the atmosphere. Even though many people have scarcely acknowledged the depth of the problem, the persistent efforts of determined parties, including the World Health Organization, have consistently pushed the boundaries for qualitatively better global air homeostasis by facilitating technology-driven initiatives to detect and predict air quality in a timely manner at regional and global scales. However, the existing frameworks for air quality monitoring lack real-time responsiveness and flexible semantic distribution. In this paper, a novel Internet of Things framework is proposed which is easily implementable, semantically distributive, and empowered by a machine learning model. The proposed system is equipped with a NodeRED dashboard which processes, visualizes, and stores the primary sensor data acquired through a public air quality sensor network; the dashboard is further integrated with a machine-learning model to obtain temporal and geo-spatial air quality predictions. An ESP8266 NodeMCU is incorporated as a subscriber to the NodeRED dashboard via a message queuing telemetry transport broker to communicate quantitative air quality data or alarming emails to the end-users through the developed web and mobile applications. The proposed system could therefore become highly beneficial in empowering public engagement in air quality through an unoppressive, data-driven, and semantic framework.
